2023 MCM/ICM Outstanding Winner Paper - C - 2322645 (decrypted).pdf


Problem Chosen: C

2023 MCM/ICM Summary Sheet

Team Control Number: 2322645

With the rising popularity of Wordle, people have eagerly taken to Twitter to report their results daily by the tens of thousands. Three very natural questions arise regarding this data: (1) Can we use this data to predict the difficulty of a given target word in Wordle? (2) Can we use this data to predict future Wordle player reporting trends? (3) How does the difficulty of a given target word affect player reporting and results? In our paper, we develop a comprehensive Bayesian model consisting of three submodels, which predict the distribution of the number of guesses, the number of reported results on Twitter, and the number of reporting players playing in hard mode.

Initially, we decompose words into quantifiable traits associated with relevant difficulty characteristics. Most notably, we formulate a novel Wordle-specific entropy measure, which we call Subset Entropy, that quantifies the average amount of information revealed to typical players after their initial guesses. We also develop a method to represent the distribution of player attempts, and hence the observed difficulty of a word, using just two values α, β corresponding to the cumulative distribution function of the Beta distribution. We use a preliminary Lasso regression to isolate the most relevant predictors of word difficulty, which we then use in our Bayesian model.

Our Bayesian model predicts, for a given date and word, the reported difficulty of the word, the number of player reports, and the number of players reporting that they played in hard mode. To accomplish these three tasks, it is made up of three submodels which are conditionally independent given the data, making it efficient to sample from its posterior using Markov Chain Monte Carlo (MCMC).

We find that a higher number of unique letters, a higher usage frequency in English, a higher average number of revealed yellow squares over all guesses, and a higher Subset Entropy all make a word easier for players to guess. We also find that higher word difficulty decreases the number of player reports. Under the assumption that the Times chooses words randomly, this can be interpreted as a causal effect.

Our model is able to predict outcomes for new data and retrodict for old data. It gives a 95% prediction interval of between 20238 and 27876 players reporting results for “eerie” on March 1, 2023, and predicts that the word will be in the 50th percentile of difficulty. Most notably, our model does not just provide such simple point estimates and prediction intervals, but full posterior distributions.

Keywords: Entropy, Lasso regression, MCMC, Bayesian methods, Causal inference

How many Wordle words will Wordle guessers guess if Wordle’s Wednesday Wordle word is “Eerie”?

Contents

1 Introduction

2 Data

2.1 Data cleaning

2.2 Wordbank

3 Word & Difficulty Representation

3.1 Vowels

3.2 Usage

3.3 Green & Yellow Tiles

3.4 Unique Letters

3.5 Entropies

3.6 Representation of word difficulty

4 Modeling Methodology

4.1 Lasso regression

4.2 Bayesian models for prediction

5 Model Results

5.1 Interpretation of parameter posteriors

5.2 Retrodiction

5.3 Prediction

5.4 Difficulty representation

6 Model Evaluation

6.1 Limitations

6.2 Strengths

7 Conclusion

Team # 2322645 Page 3 of 22

1 Introduction

Wordle is a language-based game currently owned by the New York Times that became a viral sensation in early 2022. The goal of the game is simple to understand: at the start of each day there is a 5-letter target word that players have to guess. Players have six tries to do so, and attempt to get the word in as few attempts as possible, each time using a valid English word.

There are 11,881,376 possible 5-letter words if we count every possible sequence of five letters. Even restricting to words found in English dictionaries, or to those in common usage today, only reduces this to around 12,000 and 4,000 words respectively [2]. For a person to guess a target word at random within six tries would be statistically almost impossible. This, however, is where tile color feedback comes into play. For each letter of a guess word, Wordle returns a green tile if the letter is in the target word and in the right location, a yellow tile if the letter is in the target word but in the wrong location, and a gray tile if neither of these is true. With this information, most players are able to guess the word from thousands of possibilities within six tries.
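The feedback rule above can be sketched in a few lines of Python. This is a minimal illustration, not code from the paper: the function name `feedback` and the `G`/`Y`/`-` encoding are our own, and the treatment of repeated letters (each target letter can color at most one guess letter, with greens assigned first) is an assumption based on how the game is commonly understood to behave. The first line also checks the count of five-letter sequences quoted above.

```python
from collections import Counter

# Number of possible sequences of five letters: 26^5.
print(26 ** 5)  # 11881376


def feedback(guess: str, target: str) -> str:
    """Return tile colors as a string: 'G' green, 'Y' yellow, '-' gray."""
    guess, target = guess.lower(), target.lower()
    result = ["-"] * len(guess)

    # Target letters not matched exactly remain available for yellow tiles.
    remaining = Counter(t for g, t in zip(guess, target) if g != t)

    # First pass: exact positional matches are green.
    for i, (g, t) in enumerate(zip(guess, target)):
        if g == t:
            result[i] = "G"

    # Second pass: a non-green letter is yellow while unmatched copies remain.
    for i, g in enumerate(guess):
        if result[i] == "-" and remaining[g] > 0:
            result[i] = "Y"
            remaining[g] -= 1

    return "".join(result)


print(feedback("crane", "eerie"))
```

Here the guess "crane" against the target "eerie" yields one yellow tile (the "r") and one green tile (the final "e").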

The game has captured the attention of millions, with people taking to social media to share their guess results and comment on the difficulty of certain words. One Twitter account that has popped up as a result of this trend is “@WordleStats”, a bot that tallies all posted Wordle attempts and the distribution of attempts each day. Via this data, we can discover a wealth of information about Wordle player behavior. In particular, in this paper we develop a model which uses both the trends in Twitter reporting and the inferred difficulty of target words gleaned from this data to predict future Wordle statistics.

2 Data

2.1 Data cleaning

Data errors. We fixed several errors that appear in the provided data by referencing the Twitter posts of the @WordleStats Twitter bot. These are logged below for full transparency.

• Day 239: hardmode 3249 → 9249

• Day 314: tash → trash

• Day 500: hardmode 3667 → 2667

• Day 525: clen → clean

• Day 529: Reported players 2569 → 25569

• Day 540: naïve → naive (ï is not a letter in Wordle)

• Day 545: rprobe → probe

Percentages to counts. Because the percentages of reports in the different categories are rounded and do not necessarily sum to 100, we divided the percentages in each row by their sum to obtain proportions. As our Bayesian model predicts the number of players in each category, we converted these proportions into counts by applying the following method for each row:

1. Multiply the proportions by the number of reports on that day to obtain “counts” with decimal values.

2. Round the counts down.

3. Add 1 back to the counts, in order from the count which was rounded down most to that which was rounded down least, until the total again matches the number of reports on that day.

This method gives counts which correspond to the given percentages, are integers, and whose sum is the number of reports on that day.
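The three steps above amount to a largest-remainder rounding scheme. The following sketch shows one way to implement it; the function name `percentages_to_counts` and the example row are hypothetical and are not taken from the contest data.

```python
import math


def percentages_to_counts(percentages, total):
    """Convert rounded percentages into integer counts that sum to `total`."""
    # Normalize, since rounded percentages need not sum to 100.
    s = sum(percentages)
    raw = [p / s * total for p in percentages]

    # Step 2: round every fractional count down.
    counts = [math.floor(x) for x in raw]

    # Step 3: hand the missing units to the entries that lost the most.
    shortfall = total - sum(counts)
    by_loss = sorted(range(len(raw)), key=lambda i: raw[i] - counts[i], reverse=True)
    for i in by_loss[:shortfall]:
        counts[i] += 1
    return counts


# Made-up row: percentages for 1 to 6 tries and failures (sums to 101).
example = percentages_to_counts([1, 5, 20, 35, 25, 12, 3], total=1000)
print(example, sum(example))
```

Because each entry loses less than one unit when rounded down, the shortfall is always smaller than the number of categories, so no entry receives more than one extra unit.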

2.2 Wordbank

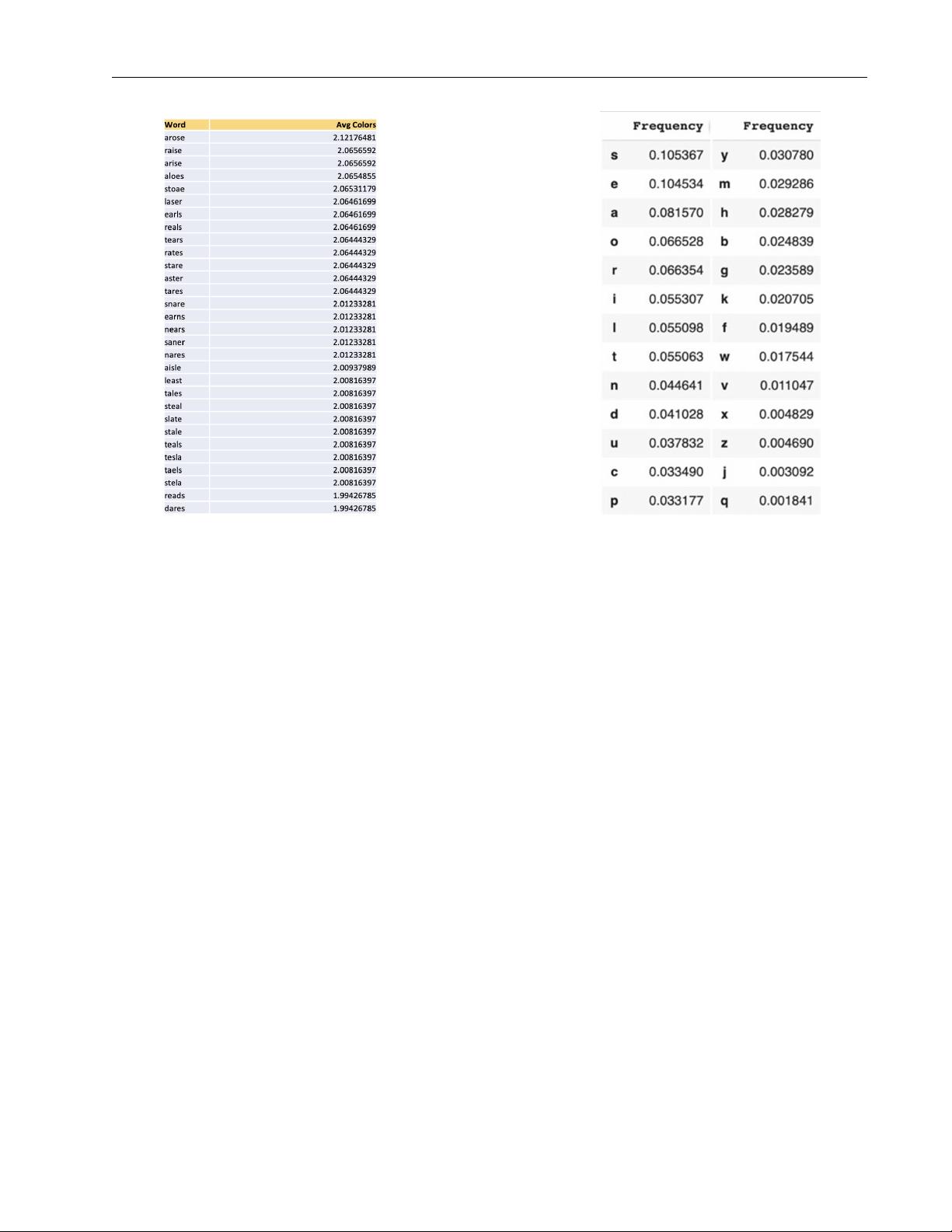

To model 5-letter words and their properties, we rely on the Stanford GraphBase (SGB) wordbank of 5757 5-letter words created by Donald Knuth [6], which provides a good approximation of the set of words that a player could guess and expect as a target. This wordbank is used in a few different ways. First, it is used to build the Order Frequency table and the Letter Frequency table. The Letter Frequency table tells us how often each letter appears in 5-letter words, and follows what we would expect: S and E are the most common letters, followed by A and O. The Order Frequency table shows, given that a certain letter is in the word, what proportion of the time that letter is in each position (e.g. given A is in a word, it is the 4th letter 24% of the time).
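As a rough illustration of the two tables, the sketch below computes them for a small toy list standing in for the SGB wordbank. The function names are our own, and we assume that letter frequency means a letter's share of all letter occurrences and that order frequency is the distribution of a letter's occurrences over the five positions; the paper may define either slightly differently.

```python
from collections import Counter, defaultdict


def letter_frequency(words):
    """Share of all letter occurrences accounted for by each letter."""
    counts = Counter(ch for w in words for ch in w)
    total = sum(counts.values())
    return {ch: n / total for ch, n in counts.items()}


def order_frequency(words, length=5):
    """For each letter, the proportion of its occurrences in each position."""
    pos_counts = defaultdict(lambda: [0] * length)
    for w in words:
        for i, ch in enumerate(w):
            pos_counts[ch][i] += 1
    return {ch: [c / sum(cs) for c in cs] for ch, cs in pos_counts.items()}


# Toy stand-in for the 5757-word SGB wordbank (assumption for illustration).
toy_words = ["eerie", "crane", "slate", "onion"]
print(letter_frequency(toy_words)["e"])
print(order_frequency(toy_words)["e"])
```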

As will be detailed later on, we also use the wordbank in numerous word-specific calculations, namely computing, for a given word t, the average number of green, yellow and colored tiles that are returned for a guess chosen uniformly at random from the SGB wordbank when t is the actual target word. Additionally, for any word g we compute the average number of colored tiles returned when a target word t is chosen uniformly at random from the SGB wordbank and g is guessed, which gives a somewhat naive but reasonable metric for evaluating the guess words players would use. Using this guess word metric, we compile a list of the 30 words with the highest corresponding average, which we take to be a set of common guess words (see Figure 1). This list will be used in the calculation of Subset Entropy later on.
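The guess word metric can be sketched as follows. The names `colored_tiles` and `top_guess_words` are hypothetical, the toy list stands in for the SGB wordbank, and we assume that the number of colored tiles equals the size of the multiset intersection of the two words' letters, which holds when each target letter can color at most one guess letter.

```python
from collections import Counter


def colored_tiles(guess, target):
    """Number of non-gray tiles: letters shared by guess and target, with multiplicity."""
    return sum((Counter(guess) & Counter(target)).values())


def top_guess_words(wordbank, k=30):
    """Rank guesses by average colored tiles over uniformly random targets."""
    n = len(wordbank)
    scores = {g: sum(colored_tiles(g, t) for t in wordbank) / n for g in wordbank}
    return sorted(scores, key=scores.get, reverse=True)[:k]


# Toy stand-in for the SGB wordbank (assumption for illustration).
toy_words = ["crane", "slate", "eerie", "onion"]
print(top_guess_words(toy_words, k=2))
```

The double loop is quadratic in the size of the wordbank, so on the full list of 5757 words it makes roughly 33 million comparisons; that is slow in pure Python but feasible as a one-off computation.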

3 Word & Difficulty Representation

Many factors contribute to the difficulty associated with a given word. For example, “zingy” intuitively seems to be difficult for a variety of reasons: it has uncommon letters (“z” and “y”), only one “canonical” vowel, and is generally an infrequently used word in English. A word like “onion”, on the other hand, would also seem to be difficult, despite all of its letters being fairly common and its usage in everyday spoken language being much higher. The reason it is perceived as difficult is the repetition of the letters “o” and “n”, which people may be less likely to guess again once they have already discovered one position of each. Thus, given a word, our first task was to list and quantify such characteristics, so that when evaluating word difficulty later on we could instead simply consider the vector of values corresponding to these relevant characteristics.

Figure 1: Left: 30 Most common words based on overlap. Right: Frequency of Letters in the SGB wordbank

3.1 Vowels

In all letter-guessing games (beyond Wordle, think of hangman), a typical strategy is to exploit the higher frequency of vowels in words. In Wordle, the presence of a particular vowel should tend to be discovered faster than that of a non-vowel, leading to the reasonable assumption that words with more vowels will on average be easier to guess. Thus, one characteristic computed and considered for each word was the number of vowels it contained (excluding “y”, as this is a traditionally uncommon letter and hence does not align with the reasoning given above for why we consider vowels in the first place).
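A one-line sketch of this feature is given below. The name `vowel_count` is our own, and we assume that repeated vowels are counted each time they occur; the paper does not say whether it counts occurrences or distinct vowels.

```python
VOWELS = set("aeiou")  # "y" is deliberately excluded, as described above


def vowel_count(word: str) -> int:
    """Number of vowel occurrences in the word, counting repeats."""
    return sum(ch in VOWELS for ch in word.lower())


print(vowel_count("zingy"), vowel_count("onion"), vowel_count("eerie"))
```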

3.2 Usage

It makes sense that words which are used more commonly will be more familiar to people, and hence easier to guess from given clues. Using the wordfreq library [5] in Python, we obtained this usage frequency for each word under consideration.

3.3 Green & Yellow Tiles

Another desirable feature of a target word is a high likelihood that, after any given guess, Wordle will return a large number of green and yellow squares. When this occurs, players get very direct hints that they can immediately put into action. Thus, for any given word, we computed the average number of green, yellow, and colored (i.e. non-gray) tiles returned, considering the given word as the target and assuming guesses were drawn uniformly at random from the SGB wordbank.
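A minimal sketch of this computation follows. The names `tile_counts` and `average_tiles` are hypothetical and the toy list stands in for the SGB wordbank. We assume that green tiles are exact positional matches, that the total number of colored tiles is the multiset intersection of the letters of guess and target, and that yellow tiles are the difference; we also assume the target itself is included among the possible guesses.

```python
from collections import Counter


def tile_counts(guess, target):
    """Return (green, yellow, colored) tile counts for one guess."""
    greens = sum(g == t for g, t in zip(guess, target))
    colored = sum((Counter(guess) & Counter(target)).values())
    return greens, colored - greens, colored


def average_tiles(target, wordbank):
    """Average (green, yellow, colored) tiles over guesses drawn uniformly from wordbank."""
    totals = [0, 0, 0]
    for guess in wordbank:
        for k, value in enumerate(tile_counts(guess, target)):
            totals[k] += value
    n = len(wordbank)
    return tuple(t / n for t in totals)


# Toy stand-in for the SGB wordbank (assumption for illustration).
print(average_tiles("eerie", ["crane", "slate", "eerie"]))
```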
