2023 MCM/ICM Outstanding Winner paper, Problem C, Team 2311035

Problem Chosen: C

2023 MCM/ICM Summary Sheet

Team Control Number: 2311035

Winners in Wordle

Summary

Wordle is a phenomenally popular online word game whose release attracted intense public attention. Although the game looks simple, the information hidden behind it is rich and meaningful. Capturing and understanding this information will help the New York Times better design and operate Wordle.

We built three models to complete the tasks. Model I uses an LSTM to forecast the number of reported scores in the future. Model II uses seven XGBoost regressors to predict the percentage distribution for a given word. Model III classifies words by difficulty using an SVM with an RBF kernel. Based on these three models, we offer some advice to help improve Wordle. The specific details are given below:

Model I: LSTM is a recurrent neural network variant designed to capture the long-range dependencies that standard recurrent networks struggle with. We trained the model on preprocessed data of the number of reported scores and used an iterative method to predict the number up to March 1st, 2023. After 150 independent training runs, the prediction interval is [20745.72, 22914.74]. Additionally, a linear regression of the hard-mode proportion on word attributes shows no correlation between the hard-mode ratio and the target word.
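
The iterative prediction and the interval over repeated runs can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: `make_toy_model` is a hypothetical stand-in for one trained LSTM, the history values are synthetic, and taking empirical quantiles over the runs is only one plausible way to form an interval; the summary does not state how the reported interval was computed.

```python
import random
from typing import Callable, List, Sequence, Tuple


def recursive_forecast(history: Sequence[float],
                       predict_next: Callable[[Sequence[float]], float],
                       window: int, steps: int) -> List[float]:
    """Forecast `steps` values ahead, feeding each prediction back in as input."""
    values = list(history)
    for _ in range(steps):
        values.append(predict_next(values[-window:]))
    return values[len(history):]


def empirical_interval(samples: Sequence[float],
                       lower: float = 0.025,
                       upper: float = 0.975) -> Tuple[float, float]:
    """Linearly interpolated empirical quantiles of the per-run forecasts."""
    ordered = sorted(samples)

    def quantile(p: float) -> float:
        pos = p * (len(ordered) - 1)
        lo = int(pos)
        hi = min(lo + 1, len(ordered) - 1)
        return ordered[lo] + (pos - lo) * (ordered[hi] - ordered[lo])

    return quantile(lower), quantile(upper)


def make_toy_model(rng: random.Random) -> Callable[[Sequence[float]], float]:
    """Hypothetical stand-in for one trained LSTM: a moving average scaled
    by a randomly drawn decay factor (NOT the paper's model)."""
    decay = rng.uniform(0.97, 0.99)
    return lambda window: decay * sum(window) / len(window)


# Synthetic, made-up history (NOT the paper's data), used only to run the sketch.
history = [30000.0, 29500.0, 29000.0, 28800.0, 28500.0]

finals = []
for run in range(150):  # the summary reports 150 independent training runs
    model = make_toy_model(random.Random(run))
    finals.append(recursive_forecast(history, model, window=3, steps=10)[-1])

low, high = empirical_interval(finals)
print(f"toy interval: [{low:.2f}, {high:.2f}]")
```

Because each run feeds its own predictions back in, differences between runs compound over the horizon, which is why the spread across independently trained models is a natural source for an interval.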

Model II: To obtain the percentage distribution for a given day associated with a specific word, we trained seven separate XGBoost models. The R² of our model is 0.68, and testing indicates that its predictions are reasonably accurate with low uncertainty. Applying the model to "EERIE" yields a predicted percentage distribution which suggests that EERIE should be considered a problematic word.
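
For readers unfamiliar with the metric, the coefficient of determination is R² = 1 − SS_res / SS_tot. The sketch below is a plain-Python illustration of that formula only; how the paper aggregates the score across its seven regressors is not stated in this summary, so no aggregation is shown.

```python
from typing import Sequence


def r_squared(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_y = sum(y_true) / len(y_true)
    # Residual sum of squares: error left over after the model's predictions.
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    # Total sum of squares: error of always predicting the mean.
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when y_true is constant")
    return 1.0 - ss_res / ss_tot
```

A value of 1 means perfect prediction, and 0 means the model does no better than predicting the mean of the observed values.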

Model III: We quantified the difficulty of words by an unequally weighted average of the percentage distribution and divided them into three levels: easy, medium, and hard. We then used the labeled data to fit an SVM model with an RBF kernel, obtaining an accuracy score of 0.6556 and an F1 score of 0.6634. The classification result for EERIE is hard, consistent with the result of Model II.
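
The labeling step can be sketched as follows. The weights and thresholds are hypothetical placeholders, since the summary says only that the weights are unequal and that there are three levels; the seven outcome buckets (1 to 6 tries, plus X for a failed puzzle) are likewise an assumption about the data layout.

```python
from typing import Sequence

# Hypothetical weights for the outcomes (1, 2, 3, 4, 5, 6 tries, X = failed).
# The summary only says the weights are unequal; these values are assumptions.
HYPOTHETICAL_WEIGHTS = (1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 8.0)


def difficulty_score(distribution: Sequence[float],
                     weights: Sequence[float] = HYPOTHETICAL_WEIGHTS) -> float:
    """Weighted average of the outcome percentages for one word."""
    if len(distribution) != len(weights):
        raise ValueError("distribution and weights must have the same length")
    total = sum(distribution)
    if total <= 0:
        raise ValueError("distribution must have a positive sum")
    return sum(w * p for w, p in zip(weights, distribution)) / total


def difficulty_level(score: float, easy_max: float, medium_max: float) -> str:
    """Map a score to one of three levels using caller-supplied thresholds."""
    if score <= easy_max:
        return "easy"
    if score <= medium_max:
        return "medium"
    return "hard"


# Made-up distribution for illustration (percent of players per outcome).
example = [0, 5, 20, 35, 25, 12, 3]
score = difficulty_score(example)
print(score, difficulty_level(score, easy_max=4.0, medium_max=4.5))
```

Labels produced this way would then serve as the targets for the classifier, with word features as the inputs.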

Beyond these three models, we also made some interesting observations from the dataset, one of which concerns the differences between human thinking and machine learning.

Finally, we wrote a letter to the New York Times Wordle editor presenting our models, results, and advice. We hope this letter will become a valuable reference for the further development of Wordle.

Keywords: Wordle; LSTM; Recursive Regression; XGBoost; Feature Engineering

Team # 2311035 Page 1 of 22

Contents

1 Introduction
1.1 Background
1.2 Restatement of Problem
1.3 Literature Review
1.4 Our Work
2 Assumptions
3 Notations
4 Task 1: Fundamental Model for Predicting Results
4.1 Data Cleaning
4.2 Formulating the Model
4.2.1 Long-term and Short-term Memory
4.2.2 Data Normalization
4.2.3 Implementation of LSTM
4.2.4 Prediction Interval from the Result
4.3 Correlation between Hard-mode Percentage and Words
5 Task 2: Model for Predicting Percentage Distribution
5.1 Features Used to Measure the Performance of Reported Results
5.2 Feature Engineering
5.3 XGBoost Model Training
5.4 Uncertainties Involved in the Model
5.5 Predicted Distribution of EERIE
6 Task 3: Classification of Words
6.1 Definition of Word Difficulty
6.2 Feature Engineering
6.3 Model Construction and Prediction

6.4 Model Evaluation
7 Task 4: Other Interesting Findings
7.1 Fundamental Difference in Wordle Playing Strategy between Human Brain and Machine Learning
7.2 Other Interesting Features
8 Strengths and Weaknesses
8.1 Strengths
8.2 Weaknesses
9 Letter
References

1 Introduction

1.1 Background

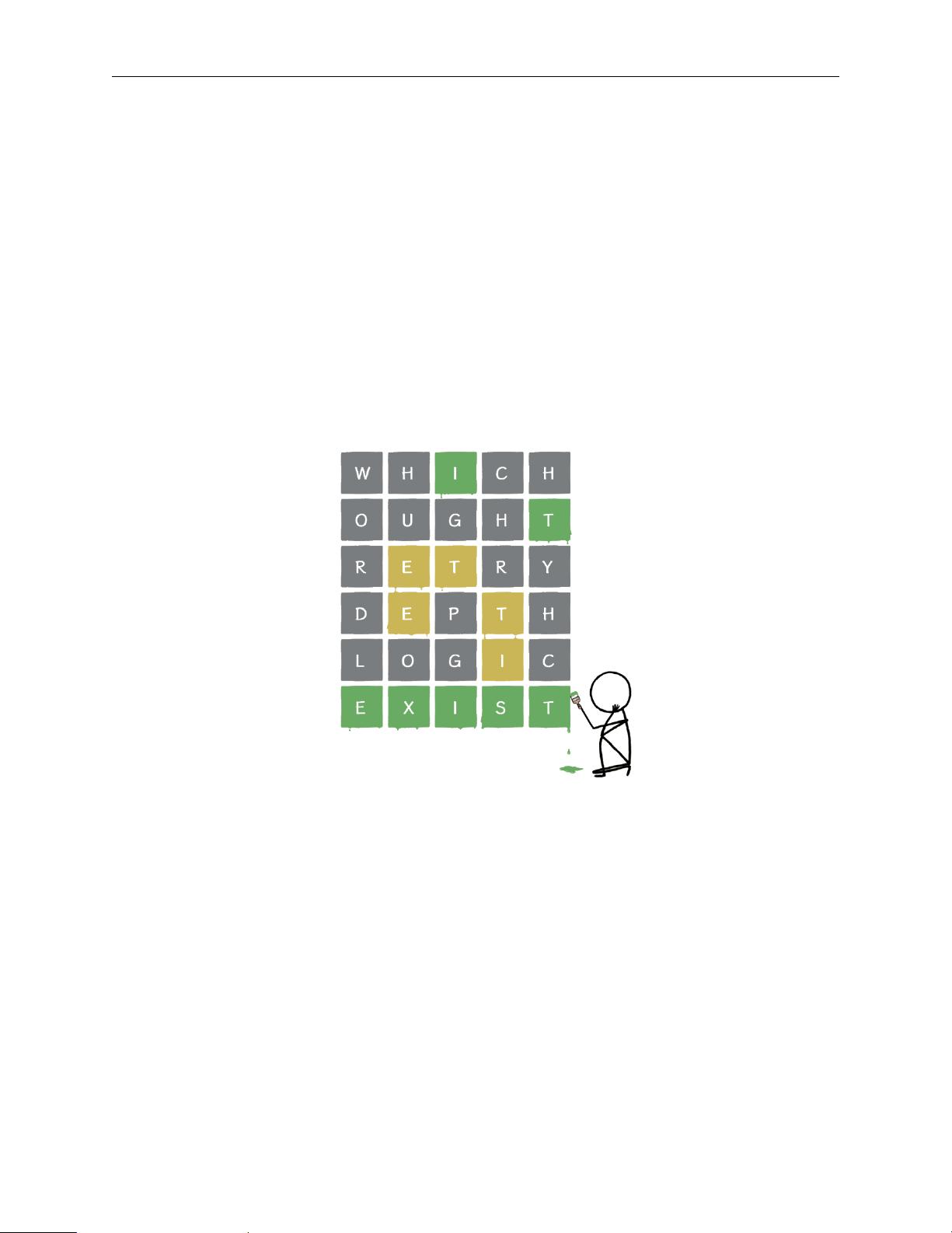

A puzzle game called "Wordle" has recently taken the world by storm with its light-hearted approach and its puzzling but attractive yellow, green, and grey squares.

In the game, players must guess the correct word in six attempts or fewer. Only officially recognized words may be guessed. After each guess, players receive a hint in the form of yellow, green, and grey squares. A green square indicates a correct letter in the correct location; a yellow square indicates a correct letter in the wrong location; and a grey square means that the letter is not in the word. Wordle's Hard Mode makes the game more challenging by requiring players to reuse, in subsequent guesses, the correct letters they have already found. An example of "Wordle" is shown in Figure 1.

Figure 1: Example of Wordle Puzzle
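
The hint rules described above can be sketched in a few lines of Python. This is an illustrative sketch, not code from the paper: the function names are hypothetical, and the handling of repeated letters (a letter earns at most as many green or yellow marks as it has occurrences in the target) is an assumption that goes slightly beyond the description in the text.

```python
from collections import Counter


def wordle_feedback(guess: str, target: str) -> str:
    """Return one mark per letter: 'G' green, 'Y' yellow, '-' grey."""
    guess, target = guess.upper(), target.upper()
    if len(guess) != len(target):
        raise ValueError("guess and target must have the same length")
    result = ["-"] * len(guess)
    # Target letters that were not matched exactly remain available for yellows.
    remaining = Counter(t for g, t in zip(guess, target) if g != t)
    for i, (g, t) in enumerate(zip(guess, target)):
        if g == t:
            result[i] = "G"
    for i, g in enumerate(guess):
        if result[i] == "-" and remaining[g] > 0:
            result[i] = "Y"
            remaining[g] -= 1
    return "".join(result)


def allowed_in_hard_mode(next_guess: str, prev_guess: str, prev_feedback: str) -> bool:
    """Check that a guess reuses every letter revealed by the previous hint."""
    next_guess, prev_guess = next_guess.upper(), prev_guess.upper()
    # Green letters must stay in the same position.
    for i, (ch, mark) in enumerate(zip(prev_guess, prev_feedback)):
        if mark == "G" and next_guess[i] != ch:
            return False
    # Every green or yellow letter must appear at least as often as revealed.
    needed = Counter(ch for ch, mark in zip(prev_guess, prev_feedback) if mark in "GY")
    have = Counter(next_guess)
    return all(have[ch] >= n for ch, n in needed.items())


hint = wordle_feedback("CRANE", "EERIE")
print(hint, allowed_in_hard_mode("STONE", "CRANE", hint))
```

Greens are marked in a first pass so that an exact match is never consumed by an earlier yellow for the same letter.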

To help the New York Times better understand the difficulty and audience of "Wordle", we aim to construct a prediction model using the daily performance data that players posted on Twitter in 2022. In other words, to help "Wordle" develop further, we use the prediction model to obtain reasonable estimates of the number of future players, the distribution of reported results, and a measure of word difficulty.

1.2 Restatement of Problem

• Construct a prediction model that explains the variation in the number of reported results and gives a prediction interval for the number of reported results on March 1st, 2023.

• Verify whether the attributes of a word affect the percentage of players who post their hard-mode results on Twitter.
