
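CurricuLLM is a method that uses large language models (LLMs) to automatically generate curricula for complex robot control tasks. It facilitates learning the target task by progressively increasing task difficulty. CurricuLLM consists of generating natural language descriptions of a sequence of subtasks, translating the subtask descriptions into executable task code, and evaluating the trained policies based on trajectory rollouts and the subtask descriptions. CurricuLLM was evaluated in a variety of robot simulation environments, and the humanoid locomotion policy learned with CurricuLLM was validated in the real world.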


CurricuLLM: Automatic Task Curricula Design for Learning Complex Robot Skills using Large Language Models

Kanghyun Ryu¹, Qiayuan Liao¹, Zhongyu Li¹, Koushil Sreenath¹, Negar Mehr¹

Abstract— Curriculum learning is a training mechanism in reinforcement learning (RL) that facilitates the achievement of complex policies by progressively increasing the task difficulty during training. However, designing effective curricula for a specific task often requires extensive domain knowledge and human intervention, which limits its applicability across various domains. Our core idea is that large language models (LLMs), with their extensive training on diverse language data and ability to encapsulate world knowledge, present significant potential for efficiently breaking down tasks and decomposing skills across various robotics environments. Additionally, the demonstrated success of LLMs in translating natural language into executable code for RL agents strengthens their role in generating task curricula. In this work, we propose CurricuLLM, which leverages the high-level planning and programming capabilities of LLMs for curriculum design, thereby making the learning of complex target tasks more efficient. CurricuLLM consists of: (Step 1) generating, in natural language form, a sequence of subtasks that aid target task learning; (Step 2) translating the natural language descriptions of the subtasks into executable task code, including the reward code and goal distribution code; and (Step 3) evaluating trained policies based on trajectory rollouts and the subtask descriptions. We evaluate CurricuLLM in various robotics simulation environments, spanning manipulation, navigation, and locomotion, to show that CurricuLLM can aid learning complex robot control tasks. In addition, we validate the humanoid locomotion policy learned through CurricuLLM in the real world. The code is provided at https://github.com/labicon/CurricuLLM

I. INTRODUCTION

Deep reinforcement learning (RL) has achieved notable success across various robotics tasks, including manipulation [1], navigation [2], and locomotion [3]. However, RL requires informative samples for learning, and obtaining these from a random policy is highly sample-inefficient, especially for complex tasks. In contrast, human learning strategies differ significantly from random action trials; humans typically start with simpler tasks and progressively increase the difficulty. Curriculum learning, inspired by this structured approach to learning, aims to train models in a meaningful sequence [4], gradually increasing the complexity of the training data [5] or of the tasks themselves [6]. Particularly in RL, curriculum learning improves training efficiency by focusing on simpler tasks that provide informative experience for reaching a more complex target task, instead of learning the target task from scratch [6].

Although effective, designing a good curriculum is challenging. Manual curriculum design often necessitates the costly intervention of human experts [7], [8], [9] and is typically restricted to a limited set of predefined tasks [10]. Consequently, several works have focused on automatic curriculum learning (ACL). To generate task curricula, ACL must be able to determine subtasks aligned with the target task, rank the difficulty of each subtask, and organize the subtasks in ascending order of difficulty [11]. However, autonomously evaluating the relevance and difficulty of these subtasks remains an open problem. As a result, ACL has been limited to initial state curricula [12], [13], goal state curricula [14], or environment curricula [10], [15], rather than task-level curricula.

*This work is supported by the National Science Foundation, under grants ECCS-2438314 CAREER Award, CNS-2423130, and CCF-2423131.

¹Mechanical Engineering, University of California Berkeley, {kanghyun.ryu, qiayuanl, zhongyu li, koushils, negar}@berkeley.edu

[Figure 1: Overview of CurricuLLM for the request "Design a curriculum for humanoid locomotion". (Step 1) Curriculum Design: Basic Stability Learning → Learn to Walk → Increase Speed → Target Task. (Step 2) Task Code Sampling, e.g., compute_rewards with balance and velocity reward terms. (Step 3) Optimal Policy Selection from rollout statistics such as base_lin_vel and base_ang_vel. The selected policy is fine-tuned on the next subtask, yielding sequentially trained policies.]

Fig. 1: CurricuLLM takes natural language descriptions of the environment, the robot, and the target task that we wish the robot to learn, and then generates a sequence of subtasks. For each subtask, it samples different task codes and evaluates the resulting trained policies to find the policy that is best aligned with the current subtask. These iterations are repeated throughout the curriculum subtasks to sequentially train a policy that reaches the complex target task.

Meanwhile, in recent years, large language models (LLMs) trained on extensive collections of language data [16], [17], [18] have been recognized as repositories of world knowledge expressed in linguistic form [19]. Leveraging this world knowledge, LLMs have demonstrated their capabilities in task planning [20] and skill decomposition for complex robotic tasks [21], [22]. Furthermore, the programming skills of LLMs have enabled smooth integration between high-level language descriptions and robotics through API call composition [23], [24], simulation environment generation [25], [26], and reward design [27], [28].

In this paper, we introduce CurricuLLM, which leverages the reasoning and coding capabilities of LLMs to design curricula for complex robotic control tasks. Our goal is to autonomously generate a series of subtasks that facilitate the learning of complex target tasks without the need for extensive human intervention. Using the LLM's task decomposition and coding capabilities, CurricuLLM autonomously generates sequences of subtasks along with appropriate reward functions and goal distributions for each subtask, enhancing the efficiency of training complex robotic policies.

arXiv:2409.18382v1 [cs.RO] 27 Sep 2024

Our contributions are threefold. First, we propose CurricuLLM, a task-level curriculum designer that uses the high-level planning and code-writing capabilities of LLMs. Second, we evaluate CurricuLLM in diverse robotics simulation environments spanning manipulation, navigation, and locomotion, demonstrating its efficacy in learning complex control tasks. Finally, we validate the policy trained with CurricuLLM on the Berkeley Humanoid [29], illustrating that the policy learned through CurricuLLM can be transferred to the real world.

II. RELATED WORKS

A. Curriculum Learning

In RL, curriculum learning is recognized for enhancing sample efficiency [30], addressing previously infeasible challenging tasks [31], and facilitating multitask policy learning [32]. Key elements of curriculum learning include the difficulty measure, which ranks the difficulty of each subtask, and training scheduling, which arranges subtasks at an appropriate pace [11]. The teacher-student framework, for example, has a teacher agent that monitors the progress of the student agent, recommending suitable tasks or demonstrations accordingly [33], [34]. However, this method requires a predefined set of tasks provided by human experts or a teacher who has superior knowledge of the environment. Although self-play has been proposed as a means to escalate opponent difficulty [35], [36], it is limited to competitive multi-agent settings and may converge to a local minimum.

An appropriate difficulty measure is also crucial in curriculum learning. In goal-conditioned environments, it is often suggested to regulate difficulty by starting training from a goal distribution close to the initial state [14], [37] or from an initial state distribution in proximity to the goal state [12], [13]. However, these methods are limited to goal-conditioned environments where the task difficulty is correlated with how “far” the goal is from the start location. In this work, we use LLMs to provide a more general way to measure task difficulty and design curricula.
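For intuition, a distance-based goal curriculum of the kind cited above can be written in a few lines: goals are sampled within a radius of the start state, and the radius grows once the success rate passes a threshold. This is a generic Python sketch, not the specific algorithm of [14] or [37]; the function names, threshold, and growth factor are arbitrary assumptions. It also makes the stated limitation concrete: the only difficulty knob is the goal's distance from the start.

```python
import math
import random
from typing import Tuple


def sample_goal_near_start(
    rng: random.Random, start: Tuple[float, float], radius: float
) -> Tuple[float, float]:
    """Sample a 2-D goal uniformly from the disc of `radius` around `start`."""
    # Taking sqrt of a uniform variate gives a uniform density over the disc area.
    dist = radius * math.sqrt(rng.random())
    angle = rng.uniform(0.0, 2.0 * math.pi)
    return (start[0] + dist * math.cos(angle), start[1] + dist * math.sin(angle))


def update_radius(
    radius: float,
    success_rate: float,
    max_radius: float,
    threshold: float = 0.8,  # assumed promotion threshold
    growth: float = 1.5,     # assumed multiplicative growth factor
) -> float:
    """Widen the goal distribution once the agent reaches goals often enough."""
    if success_rate >= threshold:
        return min(radius * growth, max_radius)
    return radius


if __name__ == "__main__":
    rng = random.Random(0)
    radius = 0.5
    for success_rate in [0.3, 0.85, 0.9, 0.95]:  # hypothetical evaluation results
        radius = update_radius(radius, success_rate, max_radius=2.0)
        goal = sample_goal_near_start(rng, (0.0, 0.0), radius)
        print(f"radius={radius:.3f} goal=({goal[0]:.2f}, {goal[1]:.2f})")
```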

The works most closely related to CurricuLLM are DrEureka [38] and Eurekaverse [26], which use LLMs to generate domain randomization parameters, such as gravity or mass, or environment curricula, such as terrain height. In particular, Eurekaverse employs a co-evolution mechanism that gradually increases the complexity of the environment by using the LLM for environment code generation. Whereas Eurekaverse focuses on generalization across different environments, our method focuses on the task curriculum, i.e., breaking tasks down so that complex robotic tasks can be learned.

B. Large Language Model for Robotics

Task Planning. The robotics community has recently been exploring the use of LLMs for high-level task planning [39], [24]. However, these methods are limited to task planning within predefined, finite skill sets and suffer when the LLM's plan is not executable with the given skill set or in the given environment [20], [24], [40]. In contrast, Voyager [21] introduces automatic skill discovery, attempting to learn new skills that are not currently available but are required for open-ended exploration. Nonetheless, skills in Voyager are limited to compositions of discrete actions and are inapplicable to continuous control problems. In this work, we propose the automatic generation of a task curriculum consisting of a sequence of subtasks that facilitates the efficient learning of robotic control tasks. To handle the control of robots with many degrees of freedom, we utilize the coding capabilities of LLMs to generate a reward function for each subtask and train on the subtasks sequentially in the given order.

Reward Design. Continuing from works that use natural language as a reward [41], [42], several works have proposed using LLMs as a tool to translate language into rewards. For example, [43] uses LLMs to translate motion descriptions into cost parameters, which are optimized using model predictive control (MPC). However, this approach is limited to changing the parameters of cost functions that are hand-coded by human experts. On the other hand, some works have proposed directly using an LLM [44] or a vision-language model (VLM) [45] as a reward function, which observes agent behavior and outputs a reward signal. However, these approaches require expensive LLM or VLM queries during training. The approaches most similar to ours are [27], [46], which leverage LLMs to generate reward functions and use an evolutionary search to identify the most effective reward function. However, these methods require an evaluation metric in their feedback loops, and their reward search tends to optimize specifically for this metric. Therefore, for tasks described only in natural language, such as the subtasks in our curriculum, finding a reward function without such an evaluation metric can be challenging. Additionally, their evolutionary search is highly sample-inefficient, contradicting the efficiency objective of curriculum learning. In contrast, our work divides a single complex task into a series of subtasks and then employs a reasoning approach analogous to chain-of-thought prompting [19] to generate reward functions for complex target tasks.

III. PROBLEM FORMULATION

In this work, we consider task curriculum generation for learning control policies for complex robot tasks. First, we model a (sub)task as a goal-conditioned Markov Decision Process (MDP), formally represented by a tuple $m = (\mathcal{S}, \mathcal{G}, \mathcal{A}, p, r, \rho_g)$. Here, $\mathcal{S}$ is the set of states, $\mathcal{A}$ is the set of actions, $p(s' \mid s, a)$ is the transition probability function, $r(s, a, s', g)$ is the reward function, $\mathcal{G}$ is the goal space, and $\rho_g$ is the goal distribution. We use the subscript $n$ to denote the $n$-th subtask in our curriculum. Then, following [6], we formally define a task curriculum as follows:

Definition 1 (Task-level Sequence Curriculum [6]): A task-level sequence curriculum can be represented as an ordered list of tasks $\mathcal{C} = [m_1, m_2, \ldots, m_N]$ where, if $i \le j$ for some $m_i, m_j \in \mathcal{C}$, then task $m_i$ should be learned before task $m_j$.

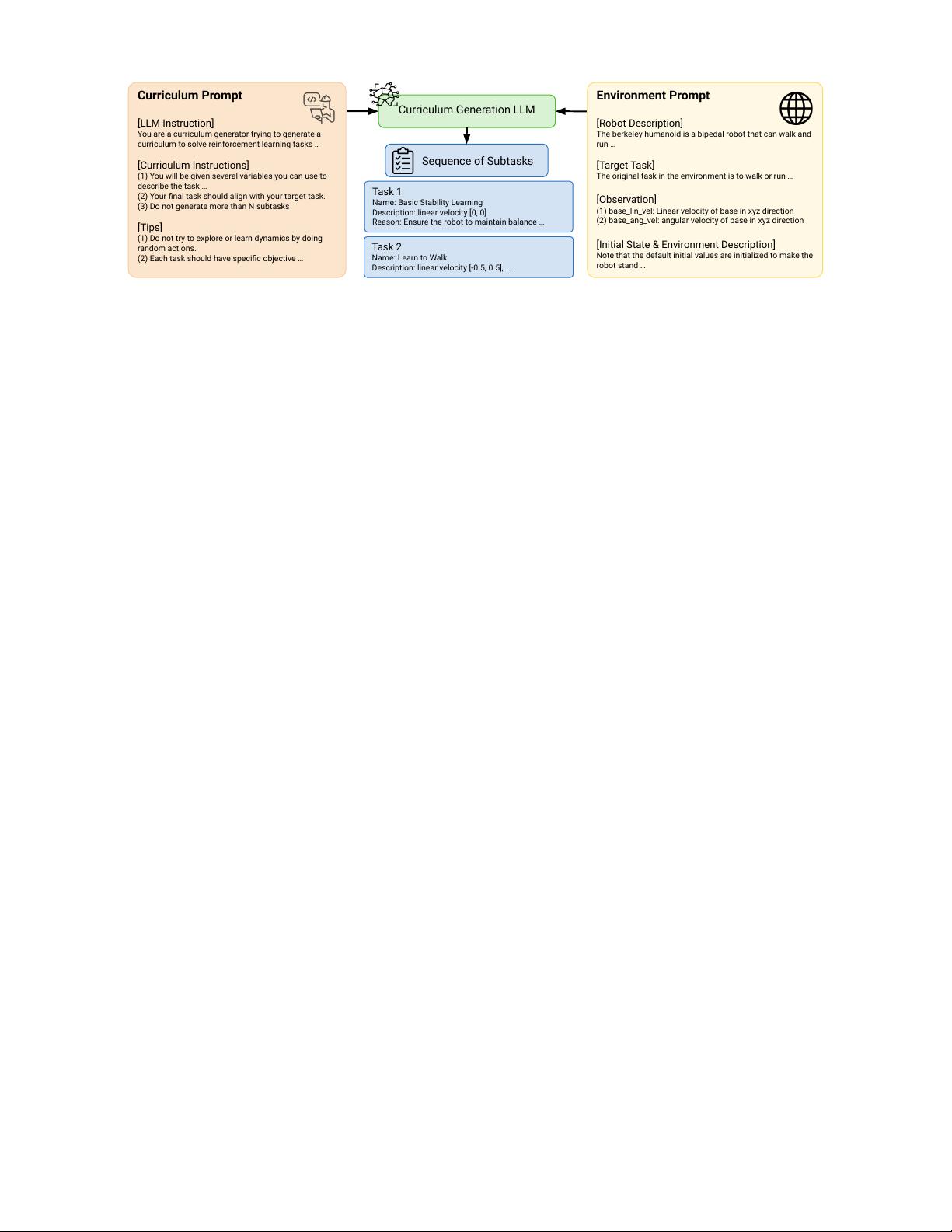

Environment Prompt

[Robot Description]

The berkeley humanoid is a bipedal robot that can walk and

run …

[Target Task]

The original task in the environment is to walk or run …

[Observation]

(1) base_lin_vel: Linear velocity of base in xyz direction

(2) base_ang_vel: angular velocity of base in xyz direction

[Initial State & Environment Description]

Note that the default initial values are initialized to make the

robot stand …

Curriculum Prompt

[LLM Instruction]

You are a curriculum generator trying to generate a

curriculum to solve reinforcement learning tasks …

[Curriculum Instructions]

(1) You will be given several variables you can use to

describe the task …

(2) Your final task should align with your target task.

(3) Do not generate more than N subtasks

[Tips]

(1) Do not try to explore or learn dynamics by doing

random actions.

(2) Each task should have specific objective …

Curriculum Generation LLM

Sequence of Subtasks

Task 1

Name: Basic Stability Learning

Description: linear velocity [0, 0]

Reason: Ensure the robot to maintain balance …

Task 2

Name: Learn to Walk

Description: linear velocity [-0.5, 0.5], …

Fig. 2: The curriculum generation LLM receives a natural language curriculum prompt together with the environment description and generates a sequence of subtasks. Our prompt includes instructions for the curriculum designer, rules for describing the subtasks, and other tips on describing the curriculum. The environment description consists of the robot and its state variables, the target task, and the initial state description.

In our work, we aim to generate a task curriculum $\mathcal{C} = [m_1, m_2, \ldots, m_N]$ that helps learn a policy $\pi$ maximizing the cumulative reward $V_{m_T}(\pi) = \mathbb{E}_{\pi}\left[\sum_{t=0}^{H} \gamma^t r(s_t, a_t, s_{t+1}, g)\right]$ associated with the target task $m_T$.
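As a concrete reading of this objective, $V_{m_T}(\pi)$ can be estimated by averaging the discounted sum of rewards over sampled rollouts. The Python sketch below is a minimal Monte Carlo estimator; representing a rollout as a list of per-step rewards and the function names are assumptions for illustration, not part of CurricuLLM.

```python
from typing import Sequence


def discounted_return(rewards: Sequence[float], gamma: float) -> float:
    """Compute sum_{t=0}^{H} gamma^t * r_t for one rollout of length H + 1."""
    total = 0.0
    # Accumulate backwards using G_t = r_t + gamma * G_{t+1}.
    for r in reversed(rewards):
        total = r + gamma * total
    return total


def estimate_value(rollouts: Sequence[Sequence[float]], gamma: float) -> float:
    """Monte Carlo estimate of V_{m_T}(pi): mean discounted return over rollouts."""
    if not rollouts:
        raise ValueError("need at least one rollout")
    return sum(discounted_return(rs, gamma) for rs in rollouts) / len(rollouts)


if __name__ == "__main__":
    # Two hypothetical rollouts of per-step rewards r(s_t, a_t, s_{t+1}, g).
    rollouts = [[1.0, 1.0, 1.0], [0.0, 2.0]]
    print(estimate_value(rollouts, gamma=0.5))  # prints 1.375
```

Comparing two curricula under Problem 1 then amounts to comparing such estimates for the policies they produce, evaluated with the target reward.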

Problem 1: For the target task $m_T = (\mathcal{S}, \mathcal{G}, \mathcal{A}, p, r_T, \rho_{g,T})$ and a task curriculum $\mathcal{C} = [m_1, m_2, \ldots, m_N]$, we denote the policy trained with curriculum $\mathcal{C}$ as $\pi_{\mathcal{C}}$. Our objective for curriculum design is to find a curriculum $\mathcal{C}$ that maximizes the cumulative reward on the target task, i.e., $\arg\max_{\mathcal{C}} V_{m_T}(\pi_{\mathcal{C}})$.

We assume the state space $\mathcal{S}$, the action space $\mathcal{A}$, the transition probability function $p$, and the goal space $\mathcal{G}$ are fixed, i.e., they do not change between subtasks. Therefore, we can specify the task curriculum with the sequence of reward functions and goal distributions that are associated with the subtasks, $\mathcal{C}_{(r,\rho_g)} = [(r_1, \rho_{g,1}), (r_2, \rho_{g,2}), \ldots, (r_N, \rho_{g,N})]$.

Here, we express the reward function and goal distribution tuple $(r, \rho_g)$ as program code for the simulation environment and call it a task code. Therefore, CurricuLLM's objective reduces to generating a sequence of task codes $\mathcal{C}_{(r,\rho_g)}$ that maximizes target task performance.
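To make the notion of a task code concrete, the snippet below sketches what a task code for a velocity-following subtask might look like: a reward function combining a balance term and a velocity-tracking term (loosely mirroring the balance and velocity rewards outlined in Fig. 1) and a goal sampler playing the role of $\rho_g$. The state fields, nominal height, weights, and exponential tracking kernel are all hypothetical choices for illustration, not the reward used in the paper.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Tuple

# Illustrative constants (assumptions); a generated task code would pick its own.
TARGET_BASE_HEIGHT = 0.5  # assumed nominal standing height of the base
W_BALANCE = 0.5           # weight on the balance term
W_VELOCITY = 1.0          # weight on the velocity-tracking term
TRACKING_SIGMA = 0.25     # width of the exponential tracking kernel


@dataclass
class RobotState:
    """Hypothetical subset of simulator state visible to the task code."""
    base_height: float
    base_lin_vel: Tuple[float, float, float]  # (v_x, v_y, v_z)
    joint_pos: List[float]
    default_joint_pos: List[float]


def compute_reward(next_state: RobotState, command: Tuple[float, float]) -> float:
    """Reward r(s, a, s', g) evaluated on s' and the velocity command g = (v_x, v_y)."""
    # Balance reward: penalize base-height and joint deviation from the nominal stance.
    height_err = (next_state.base_height - TARGET_BASE_HEIGHT) ** 2
    joint_err = sum(
        (q - q0) ** 2 for q, q0 in zip(next_state.joint_pos, next_state.default_joint_pos)
    )
    balance = -(height_err + joint_err)
    # Velocity reward: equals 1 for perfect tracking and decays with squared error.
    vx, vy, _ = next_state.base_lin_vel
    vel_err = (vx - command[0]) ** 2 + (vy - command[1]) ** 2
    velocity = math.exp(-vel_err / TRACKING_SIGMA)
    return W_BALANCE * balance + W_VELOCITY * velocity


def sample_goal(rng: random.Random, max_speed: float) -> Tuple[float, float]:
    """Goal distribution rho_g: uniform (v_x, v_y) commands in [-max_speed, max_speed]^2."""
    return (rng.uniform(-max_speed, max_speed), rng.uniform(-max_speed, max_speed))
```

Because only these two functions change between subtasks, swapping in a different task code leaves the simulator, observation space, and action space untouched, which is exactly the assumption stated above.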

IV. METHOD

Even for LLMs that encapsulate world knowledge, directly generating the sequence of task codes $\mathcal{C}_{(r,\rho_g)}$ can be challenging. Since LLMs are known to reason better when following step-by-step instructions [19], we divide our curriculum generation into three main modules (see Figure 1):

• Curriculum Design (Step 1): A curriculum generation LLM receives natural language descriptions of the robot, environment, and target task and generates a sequence of language descriptions $\mathcal{C}_l = [l_1, l_2, \ldots, l_N]$ of the task-level sequence curriculum $\mathcal{C}$.

• Task Code Sampling (Step 2): A task code generation LLM generates $K$ task code candidates $(r_n^k, \rho_{g,n}^k)$, $k \in \{1, 2, \ldots, K\}$, for the given subtask description $l_n$. These are in the form of executable code and are used to fine-tune the policy trained on the previous subtask.

• Optimal Policy Selection (Step 3): An evaluation LLM evaluates the policies $\pi_n^k$, $k \in \{1, 2, \ldots, K\}$, trained with the different task code candidates $(r_n^k, \rho_{g,n}^k)$, to identify the policy that best aligns with the current subtask. The selected policy $\pi_n^*$ is used as the pretrained policy for the next subtask.
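The control flow of these three modules can be summarized in a short Python sketch. The LLM queries, the RL training routine, and the evaluation LLM are passed in as callables because the paper's prompts and trainer are not reproduced here; every function and type name below is hypothetical. The sketch only captures the structure described above: $K$ candidates per subtask, each fine-tuned from the previously selected policy, with a single survivor carried forward.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence, TypeVar

P = TypeVar("P")  # policy type (e.g., network weights); left abstract here


@dataclass(frozen=True)
class TaskCode:
    """One executable candidate (r_n^k, rho_{g,n}^k) emitted by the task code LLM."""
    source: str


def run_curriculum(
    design_curriculum: Callable[[], List[str]],
    sample_task_codes: Callable[[str, int], List[TaskCode]],
    train: Callable[[Optional[P], TaskCode], P],
    select_best: Callable[[str, Sequence[P]], int],
    num_candidates: int,
    initial_policy: Optional[P] = None,
) -> Optional[P]:
    """Sequentially train through the curriculum, keeping one policy per subtask."""
    policy = initial_policy
    # Step 1: language descriptions C_l = [l_1, ..., l_N]; l_N is the target task.
    for description in design_curriculum():
        # Step 2: K task code candidates for subtask l_n.
        candidates = sample_task_codes(description, num_candidates)
        # Each candidate fine-tunes the policy selected for the previous subtask.
        trained = [train(policy, code) for code in candidates]
        # Step 3: evaluation picks the candidate best aligned with l_n (pi_n^*).
        policy = trained[select_best(description, trained)]
    return policy


if __name__ == "__main__":
    # Toy stand-ins: a "policy" is just the list of task codes it was trained on.
    final = run_curriculum(
        design_curriculum=lambda: ["stand", "walk", "run"],
        sample_task_codes=lambda desc, k: [TaskCode(f"{desc}-v{i}") for i in range(k)],
        train=lambda prev, code: (prev or []) + [code.source],
        select_best=lambda desc, policies: 0,
        num_candidates=2,
    )
    print(final)  # prints ['stand-v0', 'walk-v0', 'run-v0']
```

Note that training cost grows linearly in $K$ per subtask, so the number of candidates trades off search breadth against compute.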

A. Generating Sequence of Language Description

Leveraging the high-level task planning capabilities of LLMs, we first ask an LLM to generate a series of language descriptions $\mathcal{C}_l = [l_1, l_2, \ldots, l_N]$ for a task-level sequence curriculum $\mathcal{C} = [m_1, m_2, \ldots, m_N]$ that facilitates learning a target task $m_T$. The LLM is provided with a language description of the target task $l_T$ and a language description of the environment $l_E$ so that it generates an environment-specific curriculum. When generating the curriculum, we instruct the LLM to use the target task $m_T$ as the final task of the curriculum, $m_N$. Moreover, we require the LLM to describe each subtask using the available state variables. This grounds the generated curricula in the environment information and ensures that a reliable reward function can be generated later (discussed in Section IV-B).
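Step 1 amounts to assembling the environment prompt ($l_E$, $l_T$) and the curriculum prompt, then reading back a structured list of subtasks. The Python sketch below assumes the bracketed section titles shown in Fig. 2 and a plain-text reply made of `Task k` headers followed by `Name:`, `Description:`, and `Reason:` lines; the exact prompt text and output format used by CurricuLLM may differ, and the helper names are hypothetical. Enforcing the subtask limit in code is a design choice of this sketch that mirrors the "Do not generate more than N subtasks" instruction.

```python
import re
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class SubtaskDescription:
    """One element l_n of the language curriculum C_l."""
    name: str
    description: str
    reason: str


def build_curriculum_prompt(env_sections: Dict[str, str], curriculum_sections: Dict[str, str]) -> str:
    """Join bracketed sections (e.g. '[Target Task]') of both prompts into one query."""
    sections = list(env_sections.items()) + list(curriculum_sections.items())
    return "\n\n".join(f"[{title}]\n{body.strip()}" for title, body in sections)


# A header line such as "Task 3" starts a new subtask block.
_TASK_HEADER = re.compile(r"^Task\s+\d+\s*$", re.MULTILINE)


def parse_curriculum(response: str, max_subtasks: int) -> List[SubtaskDescription]:
    """Parse 'Task k' blocks with 'Name:', 'Description:', 'Reason:' fields."""
    subtasks = []
    for block in _TASK_HEADER.split(response):
        fields = {}
        for line in block.strip().splitlines():
            key, sep, value = line.partition(":")
            if sep:
                fields[key.strip().lower()] = value.strip()
        if "name" in fields:  # skip any preamble text before the first header
            subtasks.append(
                SubtaskDescription(
                    name=fields["name"],
                    description=fields.get("description", ""),
                    reason=fields.get("reason", ""),
                )
            )
    if len(subtasks) > max_subtasks:
        raise ValueError(f"got {len(subtasks)} subtasks, limit is {max_subtasks}")
    return subtasks


if __name__ == "__main__":
    prompt = build_curriculum_prompt(
        {"Target Task": "The original task in the environment is to walk or run ..."},
        {"Curriculum Instructions": "(3) Do not generate more than N subtasks"},
    )
    reply = (
        "Task 1\nName: Basic Stability Learning\nDescription: linear velocity [0, 0]\n"
        "Reason: Ensure the robot maintains balance\n"
        "Task 2\nName: Learn to Walk\nDescription: linear velocity [-0.5, 0.5]\n"
        "Reason: Learn slow walking first\n"
    )
    # max_subtasks=5 is an arbitrary stand-in for N.
    for sub in parse_curriculum(reply, max_subtasks=5):
        print(sub.name, "->", sub.description)
```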

For example, to generate a curriculum for a humanoid to learn running, we query the LLM for a curriculum for following a velocity and heading angle command $\{(v_x, v_y, \theta) : -2 \le v_x, v_y \le 2,\ -\pi \le \theta \le \pi\}$, while providing state variables such as base linear velocity, base angular velocity, or joint angles. The LLM then generates a sequence of subtask descriptions such as (1) Basic Stability Learning: maintain stability by minimizing the joint deviation and height deviation; (2) Learn to Walk: follow low-speed commands of the form $\{(v_x, v_y, \theta) : -1 \le v_x, v_y \le 1,\ -\pi/2 \le \theta \le \pi/2\}$; and others.
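In this running example, each subtask's goal distribution is specified as a box of commands. The Python sketch below assumes commands are drawn uniformly from such boxes (the sampling scheme is not stated in this section) and encodes the stability stage as a zero-command box, following the "linear velocity [0, 0]" entry in Fig. 2 with a zero heading as an added assumption; `CommandBox` and `ranges_widen` are hypothetical helpers. It also checks that the command ranges widen monotonically toward the target range.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class CommandBox:
    """Command set {(v_x, v_y, theta): |v_x|, |v_y| <= v_max, |theta| <= theta_max}."""
    v_max: float
    theta_max: float

    def sample(self, rng: random.Random) -> Tuple[float, float, float]:
        """Draw one command uniformly from the box (an assumed form of rho_g)."""
        return (
            rng.uniform(-self.v_max, self.v_max),
            rng.uniform(-self.v_max, self.v_max),
            rng.uniform(-self.theta_max, self.theta_max),
        )

    def contains(self, other: "CommandBox") -> bool:
        """True if every command in `other` also lies in this box."""
        return other.v_max <= self.v_max and other.theta_max <= self.theta_max


def ranges_widen(stages: List[CommandBox]) -> bool:
    """Check that each stage's command set contains the previous stage's set."""
    return all(later.contains(earlier) for earlier, later in zip(stages, stages[1:]))


# Ranges from the running example; the zero-command stability stage is an assumption.
STABILITY = CommandBox(v_max=0.0, theta_max=0.0)
WALK = CommandBox(v_max=1.0, theta_max=math.pi / 2)
TARGET = CommandBox(v_max=2.0, theta_max=math.pi)

if __name__ == "__main__":
    print(ranges_widen([STABILITY, WALK, TARGET]))  # prints True
    print(WALK.sample(random.Random(0)))
```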

B. Task Code Generation

After generating a curriculum as a series of language descriptions $\mathcal{C}_l = [l_1, l_2, \ldots, l_N]$, we translate these language descriptions into a sequence of task codes $\mathcal{C}_{(r,\rho_g)} = [(r_1, \rho_{g,1}), (r_2, \rho_{g,2}), \ldots, (r_N, \rho_{g,N})]$. These task codes are written as executable code so that the RL policy can be trained on these subtasks. In the $n$-th subtask which we
