RetroMAE: Pre-Training Retrieval-oriented Language Models Via Masked Auto-Encoder

Shitao Xiao¹†, Zheng Liu²†, Yingxia Shao¹, Zhao Cao²

1: Beijing University of Posts and Telecommunications, Beijing, China

2: Huawei Technologies Ltd. Co., Shenzhen, China

{stxiao,shaoyx}@bupt.edu.cn, {liuzheng107,caozhao1}@huawei.com

Abstract

Despite pre-training's progress in many important NLP tasks, effective pre-training strategies for dense retrieval remain to be explored. In this paper, we propose RetroMAE, a new retrieval-oriented pre-training paradigm based on Masked Auto-Encoder (MAE). RetroMAE is highlighted by three critical designs. 1) A novel MAE workflow, where the input sentence is polluted for the encoder and the decoder with different masks. The sentence embedding is generated from the encoder's masked input; then, the original sentence is recovered based on the sentence embedding and the decoder's masked input via masked language modeling. 2) Asymmetric model structure, with a full-scale BERT-like transformer as encoder, and a one-layer transformer as decoder. 3) Asymmetric masking ratios, with a moderate ratio for the encoder: 15∼30%, and an aggressive ratio for the decoder: 50∼70%. Our framework is simple to realize and empirically competitive: the pre-trained models dramatically improve the SOTA performances on a wide range of dense retrieval benchmarks, like BEIR and MS MARCO. The source code and pre-trained models are made publicly available at https://github.com/staoxiao/RetroMAE so as to inspire more interesting research.

1 Introduction

Dense retrieval is important to many web applications. By representing semantically correlated queries and documents as spatially close embeddings, dense retrieval can be efficiently conducted via approximate nearest neighbour search, such as PQ (Jegou et al., 2010; Xiao et al., 2021, 2022a) and HNSW (Malkov and Yashunin, 2018). Recently, large-scale language models have been widely used as the encoding networks for dense retrieval (Karpukhin et al., 2020; Xiong et al., 2020; Luan et al., 2021). The mainstream models, e.g., BERT (Devlin et al., 2019), RoBERTa (Liu et al., 2019), T5 (Raffel et al., 2019), are usually pre-trained by token-level tasks, like MLM and Seq2Seq. However, the sentence-level representation capability is not fully developed in these tasks, which restricts their potential for dense retrieval.

† The two researchers make equal contributions to this work and are designated as co-first authors.

Given the above defect, there has been increasing interest in developing retrieval-oriented pre-trained models. One popular strategy is to leverage self-contrastive learning (Chang et al., 2020; Guu et al., 2020), where the model is trained to discriminate positive samples from data augmentation. However, self-contrastive learning can be severely limited by the data augmentation's quality; besides, it usually calls for massive amounts of negative samples (He et al., 2020a; Chen et al., 2020). Another strategy relies on auto-encoding (Gao and Callan, 2021; Lu et al., 2021; Wang et al., 2021), which is free from the restrictions on data augmentation and negative sampling. The current works are differentiated in how the encoding-decoding workflow is designed, and it remains an open problem to explore more effective auto-encoding frameworks for retrieval-oriented pre-training.

We argue that two factors are critical for the auto-encoding based pre-training: 1) the reconstruction task must be demanding enough on encoding quality, 2) the pre-training data needs to be fully utilized. We propose RetroMAE (Figure 1), which optimizes both aspects with the following designs.

•A novel MAE workflow

. The pre-training fol-

lows a novel masked auto-encoding workflow. The

input sentence is polluted twice with two differ-

ent masks. One masked input is used by encoder,

where the sentence embedding is generated. The

other one is used by decoder: joined with the sen-

tence embedding, the original sentence is recovered

via masked language modeling (MLM).

• Asymmetric structure

. RetroMAE adopts

an asymmetric model structure. The encoder is a

arXiv:2205.12035v2 [cs.CL] 17 Oct 2022

Norwegian forest cat is a breed of dom-estic cat originating in

northern Europe

[M] forest cat is a breed of [M]

cat originating in [M] Europe

[M] [M] cat is [M] [M] of dom-

estic [M] [M] in northern [M]

Encoder

Decoder

Mask (EN) Mask (DE)

Norwegian forest cat is a breed

of domestic cat originating in

northern Europe

Sentence

embedding

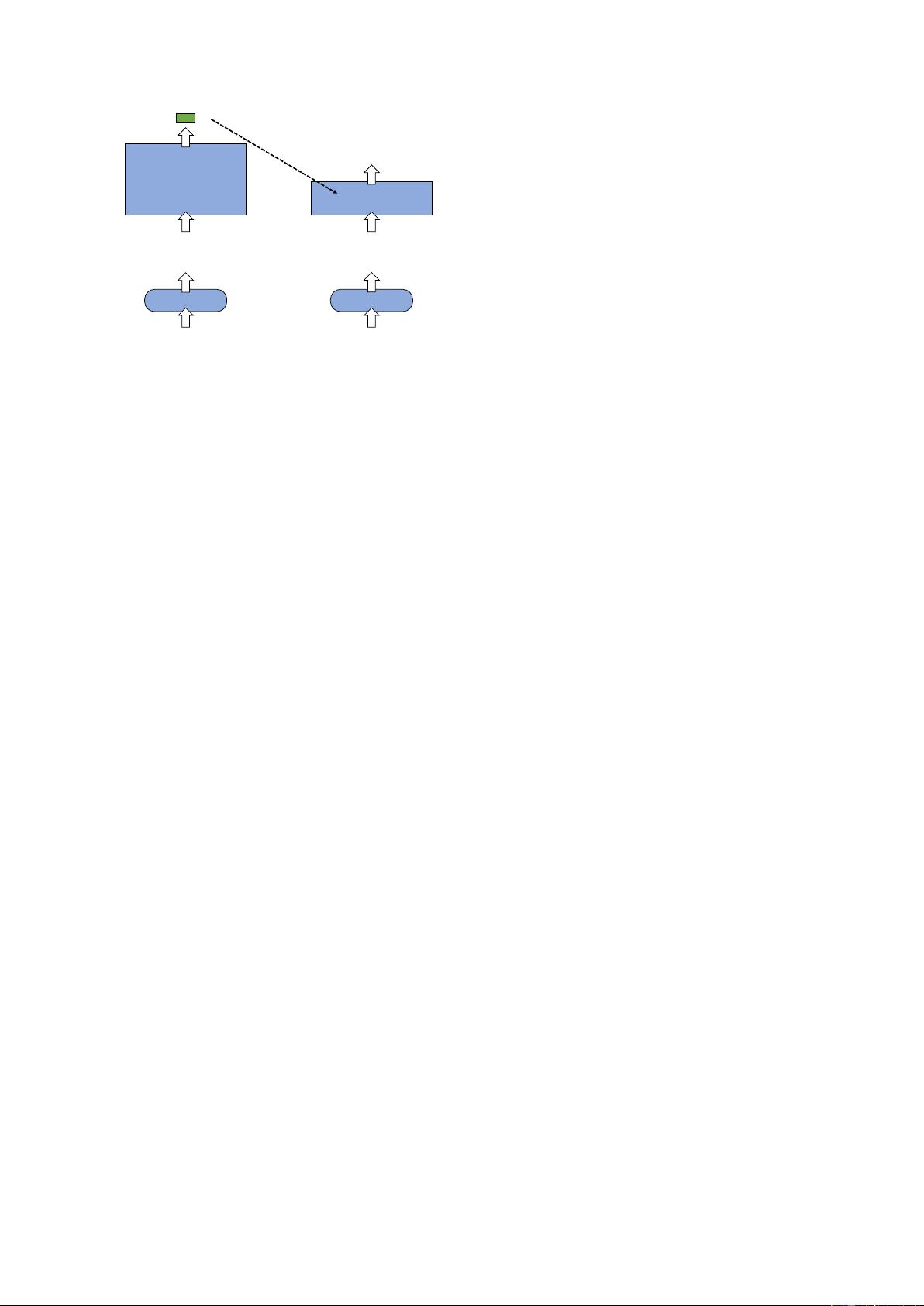

Figure 1: RetroMAE. The encoder utilizes a full-scale

BERT, whose input is moderately masked. The decoder

is a one-layer transformer, whose input is aggressively

masked. The original input is recovered based on the

sentence embedding and the decoder’s input via MLM.

full-scale BERT, which is able to generate discrim-

inative embedding for the input sentence. In con-

trast, the decoder follows an extremely simplified

structure, i.e., a single-layer transformer, which is

learned to reconstruct the input sentence.

• Asymmetric masking ratios

. The encoder’s

input is masked at a moderate ratio: 15

∼

30%,

which is slightly above its traditional value in

MLM. However, the decoder’s input is masked

at a much more aggressive ratio: 50∼70%.

The above designs of RetroMAE are favorable to the pre-training effectiveness for the following reasons. Firstly, the auto-encoding is made more demanding on encoding quality. The conventional auto-regression may attend to the prefix during the decoding process; and the conventional MLM only masks a small portion (15%) of the input tokens. By comparison, RetroMAE aggressively masks most of the input for decoding. As such, the decoder's input alone is not enough for the reconstruction, which must instead depend heavily on the sentence embedding. Thus, it forces the encoder to capture the in-depth semantics of the input. Secondly, it ensures that training signals are fully generated from the input sentence. For conventional MLM-style methods, the training signals may only be generated from 15% of the input tokens. Whereas for RetroMAE, the training signals can be derived from the majority of the input. Besides, knowing that the decoder only contains a single layer, we further propose the enhanced decoding on top of two-stream attention (Yang et al., 2019) and position-specific attention mask (Dong et al., 2019). As such, 100% of the tokens can be used for reconstruction, and each token may sample a unique context for its reconstruction.

RetroMAE is simple to realize and empirically competitive. We merely use a moderate amount of data (Wikipedia, BookCorpus, MS MARCO corpus) for pre-training, where a BERT-base-scale encoder is learned. For the zero-shot setting, it produces an average score of 45.2 on BEIR (Thakur et al., 2021); and for the supervised setting, it may easily reach an MRR@10 of 41.6 on MS MARCO passage retrieval (Nguyen et al., 2016) following standard knowledge distillation procedures. Both values are unprecedented for dense retrievers with the same model size and pre-training conditions. We also carefully evaluate the impact of each component, whose results may bring interesting insights to future research.

2 Related works

Dense retrieval is widely applied to web applications, like search engines (Karpukhin et al., 2020), advertising (Lu et al., 2020; Zhang et al., 2022) and recommender systems (Xiao et al., 2022b). It encodes the query and the document within the same latent space, where documents relevant to the query can be efficiently retrieved via ANN search. The encoding model is critical for the retrieval quality. Thanks to the recent development of large-scale language models, e.g., BERT (Devlin et al., 2019), RoBERTa (Liu et al., 2019), and T5 (Raffel et al., 2019), there has been a major leap forward in dense retrieval's performance (Karpukhin et al., 2020; Luan et al., 2021; Lin et al., 2021).
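To make the retrieval step concrete, the following is a minimal sketch of exact (brute-force) nearest-neighbour search over embeddings in a shared latent space. The toy vectors and the `dot` and `search` helpers are illustrative assumptions rather than anything from the paper; a production system would replace the linear scan with an ANN index such as PQ or HNSW.

```python
from typing import List, Tuple

def dot(u: List[float], v: List[float]) -> float:
    """Inner product, used here as the relevance score."""
    return sum(a * b for a, b in zip(u, v))

def search(query: List[float], docs: List[List[float]], k: int) -> List[Tuple[int, float]]:
    """Return (doc_index, score) for the k documents closest to the query."""
    scored = [(i, dot(query, d)) for i, d in enumerate(docs)]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Toy 3-dimensional embeddings (hypothetical values, not model outputs).
doc_embeddings = [
    [0.9, 0.1, 0.0],
    [0.0, 1.0, 0.0],
    [0.7, 0.6, 0.1],
]
query_embedding = [1.0, 0.2, 0.0]

top2 = search(query_embedding, doc_embeddings, k=2)
top2_ids = [doc_id for doc_id, _ in top2]
```

The quality of the ranking depends entirely on how well the encoder places relevant query-document pairs close together, which is the property the pre-training aims to improve.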

The large-scale language models are highly differentiated in terms of pre-training tasks. One common task is masked language modeling (MLM), as adopted by BERT (Devlin et al., 2019) and RoBERTa (Liu et al., 2019), in which the masked tokens are predicted based on their context. The basic MLM is extended in many ways. For example, tasks like entity masking, phrase masking and span masking (Sun et al., 2019; Joshi et al., 2020) may help the pre-trained models to better support sequence labeling applications, such as entity resolution and question answering. Besides, tasks like auto-regression (Radford et al., 2018; Yang et al., 2019) and Seq2Seq (Raffel et al., 2019; Lewis et al., 2019) are also utilized, where the pre-trained models are enabled to serve NLG-related scenarios. However, most of the generic pre-trained models are based on token-level tasks, where the sentence representation capability is not effectively developed (Chang et al., 2020). Thus, it may call for a great deal of labeled data (Nguyen et al., 2016; Kwiatkowski et al., 2019) and sophisticated fine-tuning methods (Xiong et al., 2020; Qu et al., 2020) to ensure the pre-trained models' performance for dense retrieval.

To mitigate the above problem, recent works propose retrieval-oriented pre-trained models. The existing methods can be divided into the ones based on self-contrastive learning (SCL) and the ones based on auto-encoding (AE). The SCL-based methods (Chang et al., 2020; Guu et al., 2020; Xu et al., 2022) rely on data augmentation, e.g., inverse cloze task (ICT), where positive samples are generated for each anchor sentence. Then, the language model is learned to discriminate the positive samples from the negative ones via contrastive learning. However, self-contrastive learning usually calls for huge amounts of negative samples, which is computationally expensive. Besides, the pre-training effect can be severely restricted by the quality of data augmentation. The AE-based methods are free from these restrictions, where the language models are learned to reconstruct the input sentence based on the sentence embedding. The existing methods utilize various reconstruction tasks, such as MLM (Gao and Callan, 2021) and auto-regression (Lu et al., 2021; Wang et al., 2021; Li et al., 2020), which are highly differentiated in terms of how the original sentence is recovered and how the training loss is formulated. For example, auto-regression relies on the sentence embedding and the prefix for reconstruction, while MLM utilizes the sentence embedding and the masked context. Auto-regression derives its training loss from all of the input tokens; however, the conventional MLM only learns from the masked positions, which account for 15% of the input tokens. Ideally, we expect the decoding operation to be demanding enough, as it will force the encoder to fully capture the semantics of the input so as to ensure the reconstruction quality. Besides, we also look forward to high data efficiency, which means the input data can be fully utilized for the pre-training task.

3 Methodology

We develop a novel masked auto-encoder for retrieval-oriented pre-training. The model contains two modules: a BERT-like encoder $\Phi_{enc}(\cdot)$ to generate the sentence embedding, and a one-layer transformer based decoder $\Phi_{dec}(\cdot)$ for sentence reconstruction. The original sentence $X$ is masked as $\tilde{X}_{enc}$ and encoded as the sentence embedding $\mathbf{h}_{\tilde{X}}$. The sentence is masked again (with a different mask) as $\tilde{X}_{dec}$; together with $\mathbf{h}_{\tilde{X}}$, the original sentence $X$ is reconstructed. Detailed elaborations about RetroMAE are made as follows.
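The double-masking step can be illustrated with a minimal sketch. It assumes whitespace tokenization and uniform random masking; the `mask_tokens` helper, the fixed seed, and the specific ratios (0.3 and 0.6, picked from the ranges stated in the paper) are illustrative choices and not the authors' implementation.

```python
import random
from typing import List

MASK = "[M]"

def mask_tokens(tokens: List[str], ratio: float, rng: random.Random) -> List[str]:
    """Replace round(ratio * len(tokens)) randomly chosen tokens with [M]."""
    n_mask = round(ratio * len(tokens))
    masked_positions = set(rng.sample(range(len(tokens)), n_mask))
    return [MASK if i in masked_positions else tok for i, tok in enumerate(tokens)]

sentence = ("Norwegian forest cat is a breed of domestic cat "
            "originating in northern Europe")
tokens = sentence.split()  # whitespace split stands in for a real tokenizer

rng = random.Random(0)  # fixed seed so the sketch is reproducible
# Two independent masks are drawn over the same sentence.
encoder_input = mask_tokens(tokens, ratio=0.3, rng=rng)  # moderate: 15-30%
decoder_input = mask_tokens(tokens, ratio=0.6, rng=rng)  # aggressive: 50-70%

print(" ".join(encoder_input))
print(" ".join(decoder_input))
```

Because the two masks are sampled independently, a token hidden from the decoder may still be visible to the encoder, so its information can only reach the decoder through the sentence embedding.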

3.1 Encoding

The input sentence $X$ is polluted as $\tilde{X}_{enc}$ for the encoding stage, where a small fraction of its tokens are randomly replaced by the special token [M] (Figure 2.A). We apply a moderate masking ratio (15∼30%), which means the majority of information about the input will be preserved. Then, the encoder $\Phi_{enc}(\cdot)$ is used to transform the polluted input into the sentence embedding $\mathbf{h}_{\tilde{X}}$:

$$\mathbf{h}_{\tilde{X}} \leftarrow \Phi_{enc}(\tilde{X}_{enc}). \tag{1}$$

We apply a BERT-like encoder with 12 layers and 768 hidden dimensions, which helps to capture the in-depth semantics of the sentence. Following the common practice, we select the [CLS] token's final hidden state as the sentence embedding.

3.2 Decoding

The input sentence $X$ is polluted as $\tilde{X}_{dec}$ for the decoding stage (Figure 2.B). The masking ratio is more aggressive than the one used by the encoder, where 50∼70% of the input tokens will be masked. The masked input is joined with the sentence embedding, based on which the original sentence is reconstructed by the decoder. Particularly, the sentence embedding and the masked input are combined into the following sequence:

$$\mathbf{H}_{\tilde{X}_{dec}} \leftarrow [\mathbf{h}_{\tilde{X}}, \mathbf{e}_{x_1} + \mathbf{p}_1, ..., \mathbf{e}_{x_N} + \mathbf{p}_N]. \tag{2}$$

In the above equation, $\mathbf{e}_{x_i}$ denotes the embedding of $x_i$, to which an extra position embedding $\mathbf{p}_i$ is added. Finally, the decoder $\Phi_{dec}$ is learned to reconstruct the original sentence $X$ by optimizing the following objective:

$$\mathcal{L}_{dec} = \sum_{x_i \in \text{masked}} \mathrm{CE}(x_i \mid \Phi_{dec}(\mathbf{H}_{\tilde{X}_{dec}})), \tag{3}$$

where CE is the cross-entropy loss. As mentioned, we use a one-layer transformer based decoder. Given the aggressively masked input and the extremely simplified network, the decoding becomes challenging, which forces the generation of a high-quality sentence embedding so that the original input can be recovered with good fidelity.
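The decoder input sequence and the reconstruction objective can be sketched in plain Python under stated assumptions: embeddings are lists of floats, the one-layer transformer decoder itself is omitted, and the per-position logits passed to `decoder_loss` are hypothetical stand-ins for its output. All helper names and toy values are illustrative.

```python
import math
from typing import List, Sequence

Vector = List[float]

def build_decoder_input(h: Vector, token_embs: Sequence[Vector],
                        pos_embs: Sequence[Vector]) -> List[Vector]:
    """Decoder input sequence: [h, e_x1 + p_1, ..., e_xN + p_N]."""
    summed = [[e + p for e, p in zip(e_x, p_i)]
              for e_x, p_i in zip(token_embs, pos_embs)]
    return [h] + summed

def cross_entropy(logits: Vector, target: int) -> float:
    """-log softmax(logits)[target], computed stably via log-sum-exp."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_z - logits[target]

def decoder_loss(logits: Sequence[Vector], targets: Sequence[int],
                 masked_positions: Sequence[int]) -> float:
    """Sum of cross-entropy over the masked positions only."""
    return sum(cross_entropy(logits[i], targets[i]) for i in masked_positions)

# Toy setup: N = 3 tokens, 2-dimensional embeddings, vocabulary of size 3.
h = [0.5, -0.5]                                   # sentence embedding
token_embs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
pos_embs = [[0.1, 0.1], [0.2, 0.2], [0.3, 0.3]]
H = build_decoder_input(h, token_embs, pos_embs)  # N + 1 rows

# Hypothetical decoder outputs: uniform logits at every token position.
logits = [[0.0, 0.0, 0.0] for _ in range(3)]
targets = [2, 0, 1]        # original token ids
masked_positions = [0, 2]  # positions masked in the decoder input
loss = decoder_loss(logits, targets, masked_positions)
```

With uniform logits over three classes, each masked position contributes ln 3, so the toy loss equals 2 ln 3. Unmasked positions contribute nothing, which is why a higher decoder masking ratio yields more training signal per sentence.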
