没有合适的资源?快使用搜索试试~ 我知道了~

2019-2019-D-VAE, A Variational Autoencoder for Directed Acyclic

需积分: 0 0 下载量 72 浏览量

2022-08-04

13:16:17

上传

评论

收藏 2.34MB PDF 举报

温馨提示

试读

20页

D-VAE: A Variational Autoencoder for DirectedMuhan Zhang, Shali Jiang, Zhicheng

资源详情

资源评论

资源推荐

D-VAE: A Variational Autoencoder for Directed

Acyclic Graphs

Muhan Zhang, Shali Jiang, Zhicheng Cui, Roman Garnett, Yixin Chen

Department of Computer Science and Engineering

Washington University in St. Louis

{muhan, jiang.s, z.cui, garnett}@wustl.edu, chen@cse.wustl.edu

Abstract

Graph structured data are abundant in the real world. Among different graph types,

directed acyclic graphs (DAGs) are of particular interest to machine learning re-

searchers, as many machine learning models are realized as computations on DAGs,

including neural networks and Bayesian networks. In this paper, we study deep

generative models for DAGs, and propose a novel DAG variational autoencoder

(D-VAE). To encode DAGs into the latent space, we leverage graph neural networks.

We propose an asynchronous message passing scheme that allows encoding the

computations defined by DAGs, rather than using existing simultaneous message

passing schemes to encode local graph structures. We demonstrate the effectiveness

of our proposed D-VAE through two tasks: neural architecture search and Bayesian

network structure learning. Experiments show that our model not only generates

novel and valid DAGs, but also produces a smooth latent space that facilitates

searching for DAGs with better performance through Bayesian optimization.

1 Introduction

Many real-world problems can be posed as optimizing of a directed acyclic graph (DAG) representing

some computational task. In machine learning, deep neural networks are DAGs. Although they have

achieved remarkable performance on a wide range of learning tasks, tremendous efforts need to be

devoted to designing their architectures, which is essentially a DAG optimization task. Similarly,

optimizing the connection structures of Bayesian networks is also a critical problem in learning

graphical models [

1

]. DAG optimization is pervasive in other fields as well. For example, in

electronic circuit design, engineers need to optimize directed network structures not only to realize

target functions, but also to meet specifications such as power usage and operating temperature.

DAGs, as well as other discrete structures, cannot be optimized directly with traditional gradient-

based techniques, as gradients are not available. Bayesian optimization, a state-of-the-art black-box

optimization technique, requires a kernel to measure the similarity between discrete structures as

well as a method to explore the design space and extrapolate to new points. Principled solutions to

these problems are still lacking. Recently, there has been increased interest in training generative

models for discrete data types such as molecules [

2

,

3

], arithmetic expressions [

4

], source code [

5

],

general graphs [

6

], etc. In particular, Kusner et al.

[3]

developed a grammar variational autoencoder

(GVAE) for molecules, which is able to encode and decode molecules into and from a

continuous

latent space

, allowing one to optimize molecule properties by searching in this well-behaved space

instead of a discrete space. Inspired by this work, we propose to train variational autoencoders for

DAGs, and optimize DAG structures in the latent space based on Bayesian optimization.

Existing graph generative models can be classified into three categories: token-based, adjacency-

matrix-based, and graph-based approaches. Token-based graph generative models [

2

,

3

,

7

] represent a

graph as a sequence of tokens (e.g., characters, grammar production rules) and model these sequences

Preprint. Work in progress.

arXiv:1904.11088v2 [cs.LG] 9 May 2019

based on established RNN modules such as gated recurrent unit (GRU) [

8

]. Adjacency-matrix-based

models [

9

,

10

,

11

,

12

,

13

] generate columns/entries of the adjacency matrix of a graph sequentially

or generate the entire adjacency matrix in one shot. Graph-based models [

6

,

14

,

15

,

16

] iteratively

add new nodes or new edges to a graph based on the the existing graph state and node hidden states.

Among the three types, token-based models require task-specific graph grammars such as SMILES

for molecules [

17

], and thus is less general. Adjacency-matrix-based models leverage proxy matrix

representations of graphs, and generate graphs through multi-layer perceptrons (MLPs) or RNNs

which could be less expressive and less suitable for graphs. On the other hand, graph-based models

seem to be more general and natural, since they operate directly on graph structures instead of proxy

representations. In addition, the iterative process is driven by the current graph and nodes’ states as

represented by

graph neural networks (GNNs)

, which have already shown their powerful graph

feature learning ability on various tasks [18, 19, 20, 21, 22, 23, 24].

A GNN extracts local features around nodes by passing neighboring nodes’ messages to the center.

Traditionally, such message passing happens at all nodes

simultaneously

to extract local substructure

features for all nodes [

25

]. Then, these feature vectors are summed to be the graph embedding.

Although such symmetric message passing works for undirected graphs, it fails to capture the

computation dependencies defined by DAGs – nodes within a DAG naturally have some ordering

based on its dependency structures, which is ignored by existing GNNs but crucial for performing

the computation on the DAG. If we encode a DAG using existing GNNs, the embedding may only

capture its local structures but fail to encode the computation.

To encode the computation on a DAG, we propose an

asynchronous

message passing scheme, which

no longer passes messages for all nodes simultaneously, but respects the computation dependencies

among the nodes. For example, suppose node A has two parent nodes, B and C, in a DAG. Our

scheme does not perform feature learning for A until the feature learning on B and C are both finished.

Then, the aggregated message from B and C is passed to A to trigger A’s feature learning.

We incorporate this feature learning scheme in both our encoder and decoder, and propose the DAG

variational autoencoder (D-VAE). D-VAE has an excellent theoretical property for modeling DAGs –

we prove that D-VAE can

injectively

encode computations on DAGs. This means, we can build a

mapping from the discrete space to the continuous latent space so that every DAG computation has

its unique embedding in the latent space, which justifies performing optimization in the latent space

instead of the original design space.

We validate the proposed D-VAE on neural networks and Bayesian networks. We show that our model

not only generates novel, realistic, and valid DAGs, but also produces smooth latent spaces effective

for searching better neural architectures and Bayesian networks through Bayesian optimization.

Our contributions in this paper are: 1) We propose D-VAE, a variational autoencoder for DAGs

with a novel asynchronous message passing scheme. 2) We for the first time apply graph generative

models to optimizing architectures of learning algorithms. 3) We theoretically prove D-VAE encodes

computations injectively, thus justifying optimizing discrete architectures in a continuous latent space.

2 Related Work

2.1 Variational Autoencoder

Variational autoencoder (VAE) [

26

,

27

] provides a framework to learn both a probabilistic generative

model

p

θ

(x|z)

(the decoder) as well as an approximated posterior distribution

q

φ

(z|x)

(the encoder).

VAE is trained through maximizing the evidence lower bound (ELBO)

L(φ, θ; x) = E

z∼q

φ

(z|x)

[log p

θ

(x|z)] − KL[q

φ

(z|x)kp(z)]. (1)

The prior distribution

p(z)

is typically taken to be

N(0, I)

. The posterior approximation

q

φ

(z|x)

is

usually a multivariate Gaussian distributions whose mean and covariance matrix are parameterized

by the encoder network and the covariance matrix is often constrained to be diagonal. The generative

model

p

θ

(x|z)

can in principle take arbitrary parametric forms whose parameters are output by

the decoder network. After learning

p

θ

(x|z)

, we can generate new data by decoding latent vectors

z

sampled from the prior distribution

p(z)

. For generating discrete data types,

p

θ

(x|z)

is often

decomposed into a series of decision steps.

2

1

x

sin

sin

(")

$

(")

$

+

x sin

(")

$

(")

$

+

1

2

3

5

4

6

1 2

4

3

5

2

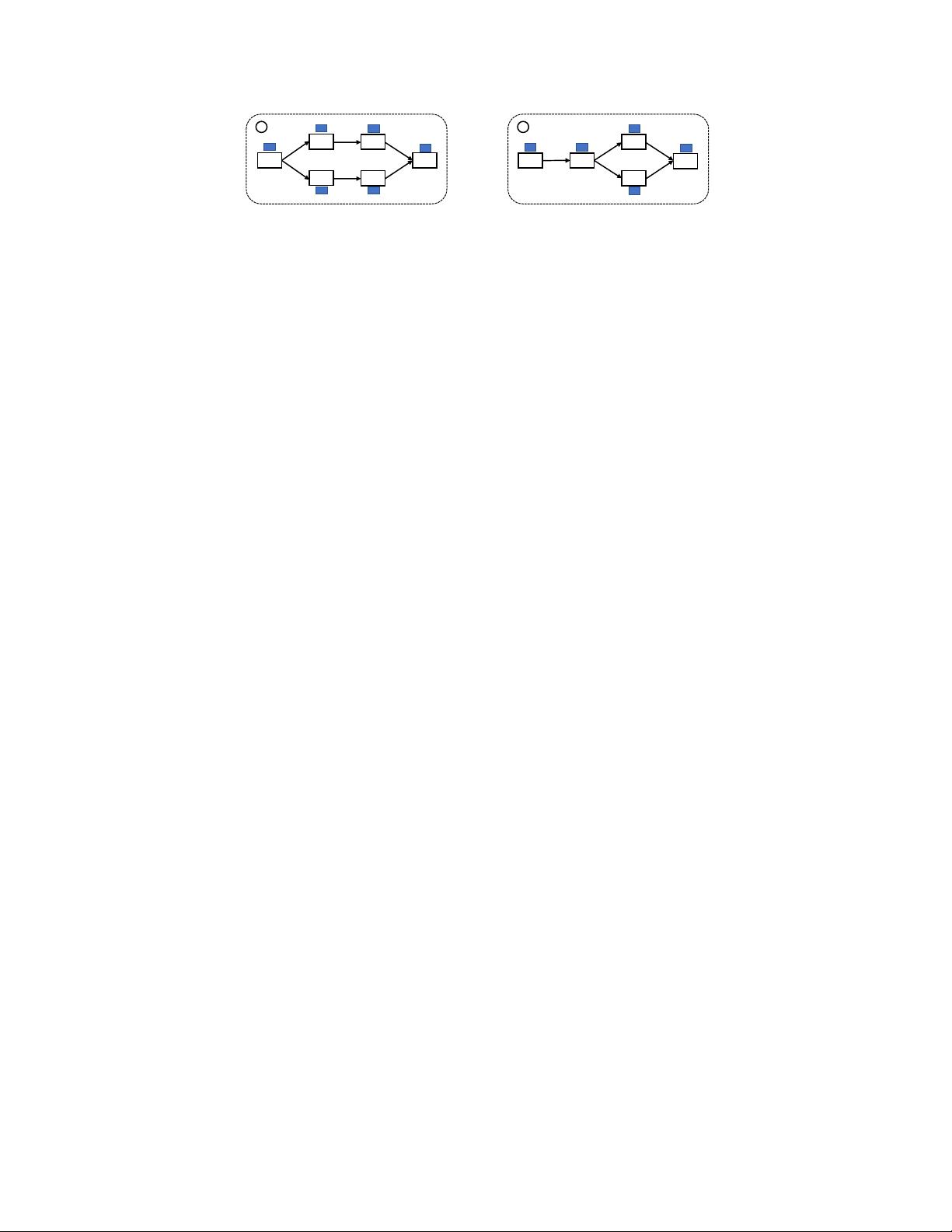

Figure 1: Examples of computations on DAGs.

2.2 Neural Architecture Search

Neural architecture search (NAS) aims at automating the design of neural network architectures by

searching candidate architectures in a search space automatically. It has seen major advances in

recent years [

28

,

29

,

30

,

31

,

32

,

33

]. See Hutter et al.

[34]

for an overview. Methods for NAS can be

categorized according to their search space, search strategy, and performance estimation strategy etc.,

which all greatly affect the performance of the final architecture. Based on search strategy, there are

1) reinforcement learning based methods [

28

,

31

,

33

] which train controllers to generate architectures

with high rewards in terms of validation accuracy, 2) Bayesian optimization based methods [

35

] which

define kernels to measure architecture similarities and extrapolate the architecture space heuristically,

3) evolutionary approaches [

29

,

36

,

37

] which use evolutionary algorithms for optimizing the neural

architectures, and 4) differentiable methods [

32

,

38

] which use continuous relaxation/embedding of

the search space to enable gradient-based optimization. In Appendix A we include more discussion

on several most related works. In this paper, we introduce a new NAS method different from existing

approaches. Based on the proposed D-VAE, we learn a compact encoding space and apply principled

Bayesian optimization methods over this continuous latent space to select latent points that are most

promising to decode to better neural architectures.

2.3 Bayesian Network Structure Learning

Bayesian network (BN) is an important type of graphical model with wide applications [

1

]. Bayesian

network structure learning (BNSL) is to learn the structure of the underlying Bayesian network from

observed data. BNSL dates at least back to Chow and Liu

[39]

where the Chow-Liu tree algorithm

was developed for learning tree structured models. Much effort has been devoted to this area in recent

decades (see Chapter 18 of Koller and Friedman

[1]

for a detailed discussion), and there is still a

great deal of research on this topic [

40

,

41

,

42

]. One of the main approaches for BNSL is score-based

search. That is, we define some “goodness-of-fit” score for a given network structure, and search

for one with the optimal score. Commonly used scores include BIC and BDeu etc., mostly based

on marginal likelihood [

1

]. Which score to use is by itself an ongoing research topic [

43

]. It is well

known that finding an optimally scored BN with indegree at most

d

is NP-hard for

d ≥ 2

[

44

], so

exact algorithms such as dynamic programming [

45

] or shortest path approaches [

46

,

47

] can only

solve small-scale problems. We have to resort to heuristic methods such as local search, simulated

annealing etc. [

48

]. All these approaches optimize the network structure in the discrete space. In

this paper, we propose to embed the discrete space into a continuous Euclidean space, and show that

model scores such as BIC can be modeled smoothly in the embedded space, and hence approximate

the NP-hard combinatorial search problem to a continuous optimization problem.

3 DAG Variational Autoencoder (D-VAE)

In this section, we describe our proposed DAG variational autoencoder (D-VAE). D-VAE uses an

asynchronous message passing scheme to encode and decode DAGs respecting the computational

dependencies. In contrast to the simultaneous message passing in traditional GNNs, D-VAE allows

encoding computations rather than encoding local structures. We first define computation below.

Definition 1.

Given a set of elementary operations

O

, a computation

C

is the composition of a finite

number of operations

o ∈ O

applied to an input signal

x

, with the output of each operation being the

input to its following operations.

The set of elementary operations

O

depends on specific applications. For example, when we are

interested in computations given by a calculator, the elementary operation set will be all the operations

defined on the functional buttons of the calculator, such as

+

,

−

,

×

,

÷

etc. When modeling neural

3

conv3

max3

conv5

conv5

max3

conv3

output

conv5

conv5

max3

conv3

conv5

max3

conv5 input

input

max3

conv5

max3

conv3

input

conv3 conv3

max3

conv5

input

conv5

conv3

max3

input

conv5

conv3

max3

conv5

max3

input

conv3

inputinput

1 2 3 4 5 6 7

Aggregate messages

from predecessors

Update the hidden

state of this node

output

conv5

max3

conv5

max3

conv3

output

conv5

conv5

max3

conv3

output

conv5

max3

conv3

output

max3

conv3

output

conv3

output

conv5

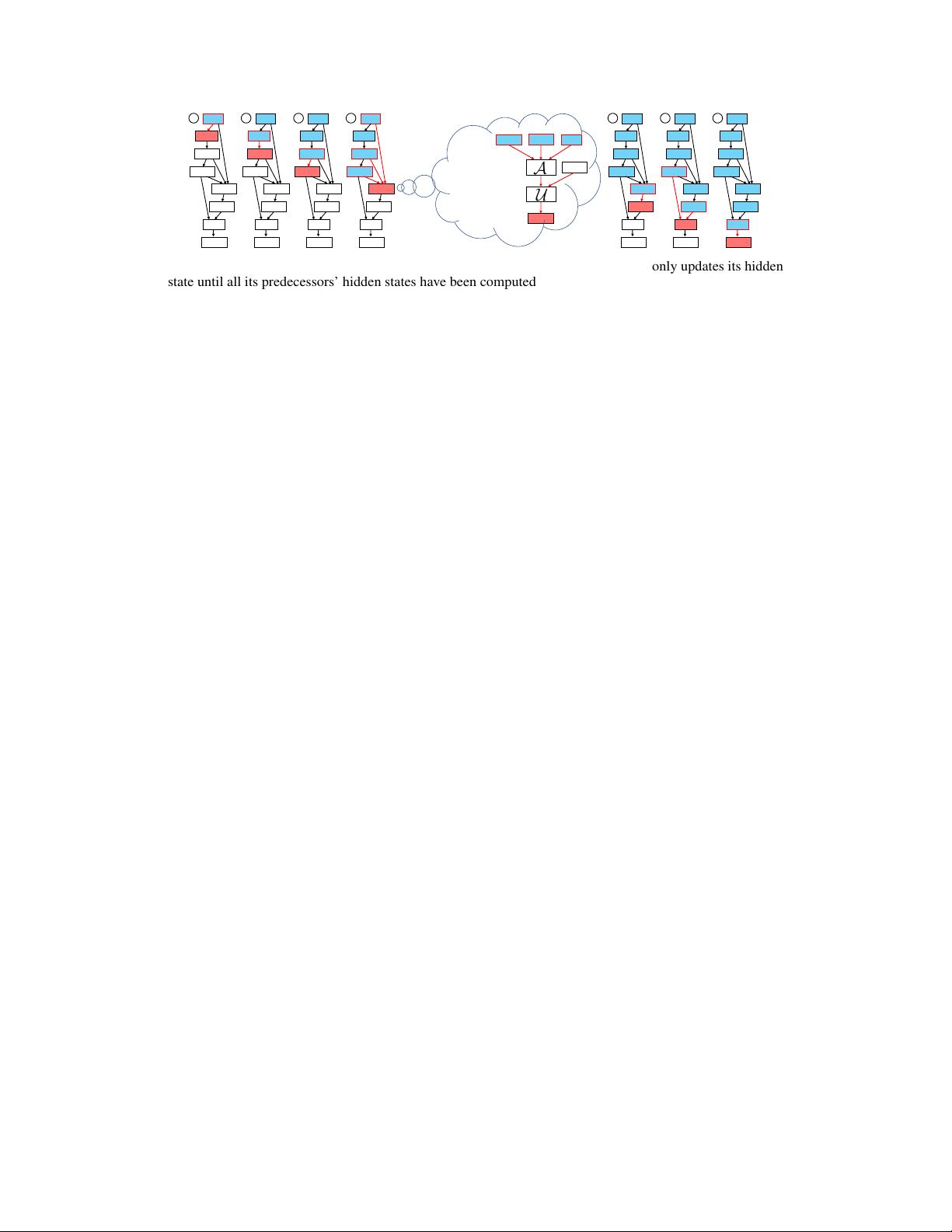

Figure 2: An illustration of the encoding procedure for neural architectures. A node only updates its hidden

state until all its predecessors’ hidden states have been computed. Nodes in blue boxes have already updated

their hidden states, and nodes in red boxes are the ones being updated.

networks, the set of elementary operations can be a predefined set of basic layers, such as 3

×

3

convolution, 5

×

5 convolution, 7

×

7 convolution, 2

×

2 max pooling etc. Note that a computation is not

restricted to be a line of operations with the last operation’s output being the current operation’s input.

It allows an operation to take arbitrary previous operations’ outputs as input, with these operations

forming a directed acyclic graph (DAG). The graph must be acyclic, since otherwise it will represent

an infinite number of operations so that the computation never stops. Figure 1 shows two examples.

Having defined computation, we are ready to introduce how D-VAE encodes and decodes DAGs.

3.1 Encoding

We first describe how the D-VAE encoder works. The D-VAE encoder can be seen as a graph neural

network (GNN) using an asynchronous message passing scheme. Given a DAG, we assume there is

a single starting node which does not have any predecessors (if there are multiple such nodes, we

add a virtual starting node connecting to all of them). In a neural architecture, this starting node

corresponds to the input layer. We use a function

U

to update the hidden state of each node based

on its neighbors’ hidden states. The update function

U

takes two input variables: 1)

x

v

, the one-hot

encoding of the current vertex

v

’s type, and 2)

h

in

v

, the aggregated message from

v

’s predecessors,

given by:

h

in

v

= A(

h

u

: u → v

), (2)

where

u → v

denotes there is a directed edge from

u

to

v

,

A

is an aggregation function on the

(ordered) multiset of

v

’s predecessors’ hidden states. For the starting node without predecessors,

A

outputs an all-zero initialization vector. Having x

v

and h

in

v

, the hidden state of v is updated by

h

v

= U(x

v

, h

in

v

). (3)

Compared to the traditional simultaneous message passing, the message passing for a node in D-VAE

must wait until all of its predecessors’ hidden states have already been computed. To make sure all

the predecessors’ hidden states are available when a new node comes, we can feed in nodes following

a topological ordering of the DAG.

Finally, after all nodes’ hidden states are computed, we use

h

v

n

, the hidden state of

v

n

(the ending

node without any successors) as the output of the encoder. In Figure 2, we use a real neural architecture

to illustrate the encoding process. Then we feed

h

v

n

to two multi-layer perceptrons (MLPs) to get

the mean and variance parameters of

q

φ

in (1). If there are multiple nodes without successors, we

again add a virtual ending node connecting from all these “loose ends”.

Note that although topological orderings are usually not unique for a DAG, we can take either one of

them as the input node order while ensuring the encoding result is always the same, formalized by

the following theorem. We include all theorem proofs in the appendix.

Theorem 1.

If the aggregation function

A

is invariant to the order of its inputs, then the D-VAE

encoder is permutation-invariant.

Theorem 1 means that we can get the same encoding result for isomorphic DAGs, no matter what

node ordering/indexing is used. This property is much desirable since real world DAGs do not often

have a canonical indexing – we do not want a DAG to be encoded into a different latent vector after

permuting its nodes. The next theorem shows another property of D-VAE that is crucial for its success

in modeling computation graphs, i.e., it is able to injectively encode computations on DAGs.

4

剩余19页未读,继续阅读

稚气筱筱

- 粉丝: 14

- 资源: 320

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功

评论0