
Variational quantum generators: Generative adversarial quantum machine learning for continuous distributions.

Jonathan Romero (1, 2, ∗) and Alán Aspuru-Guzik (2, 3, 4, 5, †)

(1) Department of Chemistry and Chemical Biology, Harvard University, 12 Oxford Street, Cambridge, MA 02138, USA

(2) Zapata Computing Inc., 501 Massachusetts Avenue, Cambridge, MA 02139, USA

(3) Department of Chemistry and Department of Computer Science, University of Toronto, 80 St. George Street, Toronto, Ontario M5S 3H6, Canada

(4) Vector Institute, 661 University Ave., Suite 710, Toronto, ON M5G 1M1, Canada

(5) Canadian Institute for Advanced Research (CIFAR) Senior Fellow, 661 University Ave., Suite 505, Toronto, ON M5G 1M1, Canada

We propose a hybrid quantum-classical approach to model continuous classical probability distri-

butions using a variational quantum circuit. The architecture of the variational circuit consists of

two parts: a quantum circuit employed to encode a classical random variable into a quantum state,

called the quantum encoder, and a variational circuit whose parameters are optimized to mimic a

target probability distribution. Samples are generated by measuring the expectation values of a set

of operators chosen at the beginning of the calculation. Our quantum generator can be comple-

mented with a classical function, such as a neural network, as part of the classical post-processing.

We demonstrate the application of the quantum variational generator using a generative adversarial

learning approach, where the quantum generator is trained via its interaction with a discriminator

model that compares the generated samples with those coming from the real data distribution. We

show that our quantum generator is able to learn target probability distributions using either a

classical neural network or a variational quantum circuit as the discriminator. Our implementation

takes advantage of automatic differentiation tools to perform the optimization of the variational

circuits employed. The framework presented here for the design and implementation of variational

quantum generators can serve as a blueprint for designing hybrid quantum-classical architectures

for other machine learning tasks on near-term quantum devices.

I. INTRODUCTION

Quantum computing, a technology that relies on the

properties of quantum systems to process information, is

rapidly reaching maturity. Important problems that are

hard to solve on classical computers based on transis-

tors, such as factoring and simulating quantum systems,

can be solved more efficiently using quantum computers

[1, 2]. These devices are nearing the noisy intermediate-

scale quantum (NISQ) era [3], corresponding to machines

with 50 to 100 qubits and capable of executing circuits

with depths on the order of thousands of elementary two-

qubit operations [3, 4]. While NISQ devices will not be

able to implement error-correction, as opposed to fault-

tolerant quantum computers (FTQC), they are expected

to provide computational advantages over classical supercomputers

for certain problems, including sampling

from hard-to-simulate probability distributions [3, 5, 6].

The limitations in the number of qubits and coherence

times of NISQ devices have encouraged the adoption of

the hybrid quantum-classical (HQC) framework as the

de facto strategy to design practical algorithms in the

near term. The basic idea behind the HQC framework

is that a computational problem can be divided into several

subtasks, some of which can be executed more efficiently

using a quantum computer while the rest can be

deployed to a classical computer. A subset of HQC algorithms,

called adaptive hybrid quantum-classical (AHQC)

algorithms, uses classical resources to optimize

the algorithm parameters. In this case, the quantum

subtask generally refers to the process of preparing

a parameterized quantum state, followed by the measure-

ment of the expectation values of a polynomial number

of observables that encode information relevant to the

problem-of-interest. The parameterized quantum state

is obtained using a variational quantum circuit, which

consists of a set of tunable quantum gates whose pa-

rameters are subject to optimization. Examples of HQC

algorithms include the variational quantum eigensolver

(VQE) [7, 8], the quantum approximate optimization

algorithm (QAOA) [9], the variational quantum error-

correction scheme (QVECTOR) [10], among others.
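The adaptive hybrid loop described above can be sketched with a one-qubit toy problem. This is only an illustration under stated assumptions, not the implementation used in this work: the circuit R_y(θ)|0⟩, the observable Z, the parameter-shift gradient rule, and every function name are choices made for the example, and the quantum subtask is simulated exactly in plain Python.

```python
import math

def expectation_z(theta):
    """'Quantum' subtask: prepare R_y(theta)|0> and return <Z>.

    For this state <Z> = cos(theta); on hardware the value would be
    estimated from repeated measurements instead of computed exactly.
    """
    amp0 = math.cos(theta / 2.0)  # amplitude of |0>
    amp1 = math.sin(theta / 2.0)  # amplitude of |1>
    return amp0 ** 2 - amp1 ** 2

def parameter_shift_gradient(f, theta):
    """Gradient from two shifted evaluations (assumed rule, valid for
    gates generated by a Pauli operator such as R_y)."""
    return 0.5 * (f(theta + math.pi / 2.0) - f(theta - math.pi / 2.0))

def minimize(f, theta=0.3, learning_rate=0.4, steps=100):
    """Classical subtask: plain gradient descent on the circuit parameter."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(f, theta)
    return theta

theta_opt = minimize(expectation_z)
energy = expectation_z(theta_opt)  # approaches the minimum value of <Z>, -1
```

The classical optimizer only ever sees expectation values, which is the division of labor that the HQC framework relies on.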

arXiv:1901.00848v1 [quant-ph] 3 Jan 2019

The HQC framework has also been adopted as the basis for designing quantum machine learning algorithms for NISQ devices. One of the first algorithms of this type is the quantum autoencoder (QAE) [11, 12], where a variational quantum circuit is optimized to compress a set of quantum states. This is analogous to a classical autoencoder, where an artificial neural network (ANN) is trained to compress classical datasets. The connection between neural networks and variational circuits has been investigated further, and it was shown that the HQC framework can approximate nonlinear functions just as classical neural networks can [13, 14]. Furthermore, variational circuits have provided a new strategy for encoding classical information into quantum states, which is a fundamental step for machine learning applications. In contrast with amplitude encoding, in which the input vector is normalized and transformed directly into a quantum state, variational circuits can encode classical data by using the input vector as the set of variational circuit parameters [14–16].
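The difference between the two encoding strategies can be made concrete with a short sketch. The specific parameter encoding shown here (one R_y rotation per feature, each on its own qubit) and the function names are assumptions made for illustration; the text above does not fix a particular circuit.

```python
import math

def amplitude_encoding(x):
    """Normalize x so that its entries can serve as state amplitudes."""
    norm = math.sqrt(sum(v * v for v in x))
    if norm == 0.0:
        raise ValueError("cannot encode the zero vector")
    return [v / norm for v in x]

def angle_encoding(x):
    """Use each entry of x as the angle of an R_y rotation on its own qubit.

    Returns the amplitudes of the resulting product state, ordered so that
    the first feature corresponds to the most significant bit.
    """
    state = [1.0]
    for angle in x:
        qubit = [math.cos(angle / 2.0), math.sin(angle / 2.0)]
        state = [a * b for a in state for b in qubit]  # tensor product
    return state

x = [3.0, 4.0]
amp_state = amplitude_encoding(x)  # 2 amplitudes, i.e. a single qubit
ang_state = angle_encoding(x)      # 2 features on 2 qubits, 4 amplitudes
```

In the first case the data become the amplitudes themselves; in the second they enter only as gate parameters, so no state-preparation routine for arbitrary amplitudes is needed.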

In recent months, the combination of the strategies described

above for encoding classical data and designing

HQC algorithms has led to rapid growth in publications

on quantum machine learning algorithms for performing both

discriminative [14–21] and generative tasks [22–25] on classical

data using NISQ devices. In machine learning,

discriminative models are trained to learn the conditional

probability distribution of a target variable y with respect

to a set of observations x, or p(y|x). In contrast, genera-

tive models are trained to learn the joint probability dis-

tribution p(x, y), or alternatively, the conditional prob-

ability of the observed data with respect to the target

variable, p(x|y). Most HQC algorithms for discriminative

modeling use a variational quantum classifier [14, 16–19, 21],

where a variational circuit is optimized to directly

model $p(y|x)$ using training data $\{x_i, y_i\}$. Another strategy

is to use a variational circuit as a quantum feature

map for unsupervised classification with a support vec-

tor machine [15, 16]. Meanwhile, HQC approaches to

generative modeling have focused on modeling discrete

probability distributions by using a variational circuit

as a Born machine [25–28]. Born machines generate

samples via projective measurement on the qubits, for

example, by measuring the qubits in the computational

basis. While this approach can learn probability distri-

butions for small datasets used for benchmarking, such

as Bars-and-Stripes [25, 28], as well as quantum circuits

for preparation of certain quantum states [25], the ap-

plication of this model to general problems in generative

modeling may be difficult due to the exponential scaling

of the number of measurements required for sampling the

distribution [25].
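Sampling from a Born machine can be sketched as follows. The two-qubit state is hard-coded here as a stand-in for the output of a variational circuit, and the function names are hypothetical; the only physics used is the Born rule, under which each bitstring is observed with probability equal to the squared magnitude of its amplitude.

```python
import random

def born_probabilities(amplitudes):
    """Born rule: the probability of each basis state is |amplitude|^2."""
    probs = [abs(a) ** 2 for a in amplitudes]
    total = sum(probs)
    return [p / total for p in probs]  # renormalize against rounding drift

def sample_bitstrings(amplitudes, shots, rng):
    """Simulate projective measurements in the computational basis."""
    probs = born_probabilities(amplitudes)
    n_qubits = len(amplitudes).bit_length() - 1  # length is a power of two
    outcomes = rng.choices(range(len(amplitudes)), weights=probs, k=shots)
    return [format(o, "0{}b".format(n_qubits)) for o in outcomes]

# Hypothetical 2-qubit state (|00> + |11>)/sqrt(2), as if prepared by a circuit.
bell = [2 ** -0.5, 0.0, 0.0, 2 ** -0.5]
samples = sample_bitstrings(bell, shots=1000, rng=random.Random(0))
counts = {b: samples.count(b) for b in sorted(set(samples))}
```

Each sample is a discrete bitstring, and an n-qubit register has 2^n possible outcomes, which is why estimating the full distribution from measurements becomes costly as n grows.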

So far, HQC approaches for generative modeling of

continuous probability distributions have not been developed.

Most industrial applications, such as image and

sound generation, involve continuous distributions. In this paper

we present a variational circuit architecture designed to

generate continuous probability distributions. This vari-

ational quantum generator (VQG) comprises two quan-

tum circuit components: the first one consists of a pa-

rameterized quantum circuit used to encode a classical

random variable to a quantum state. The second circuit

corresponds to a variational circuit whose parameters are

optimized to mimic the target classical probability distri-

bution. The output distribution is obtained by measuring

the expectation values of a set of predefined operators,

whose values can be post-processed using a classical function.

This construction provides considerable flexibility

in the design of the variational circuit, making it easy to

incorporate the VQG into classical neural network architectures.

Furthermore, we show that our VQG architecture

can be trained using an adversarial learning approach

[29, 30] leveraging automatic differentiation [31–33] to

perform gradient-based optimization. That is, our VQG

architecture learns to generate samples from the data dis-

tribution based on feedback obtained from a discrimi-

nator model, which simultaneously learns to distinguish

between the samples coming from the real data distribu-

tion and those produced by the generator. We show that

VQG can be trained using a classical neural network as

well as a variational quantum classifier as discriminators.
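A one-qubit sketch may help fix the idea of the generator before the full architecture is described. Everything specific in it is an assumption made for illustration: the encoder R_y(z), the single trainable gate R_x(θ), the choice of Pauli Z as the measured operator, the uniform latent distribution, and the affine post-processing are not taken from the architecture presented in this paper.

```python
import math
import random

def encoder(z):
    """Quantum encoder (assumed form): R_y(z)|0>, with z the latent variable."""
    return [complex(math.cos(z / 2.0)), complex(math.sin(z / 2.0))]

def variational_layer(state, theta):
    """Variational circuit (assumed form): one trainable R_x(theta) gate."""
    c, s = math.cos(theta / 2.0), math.sin(theta / 2.0)
    a0, a1 = state
    return [c * a0 - 1j * s * a1, -1j * s * a0 + c * a1]

def expectation_z(state):
    """Expectation value of Pauli Z, the operator chosen for readout."""
    return abs(state[0]) ** 2 - abs(state[1]) ** 2

def generate(z, theta, scale=1.0, shift=0.0):
    """One continuous sample: encode z, apply the circuit, post-process <Z>."""
    value = expectation_z(variational_layer(encoder(z), theta))
    return scale * value + shift  # classical post-processing (assumed affine)

rng = random.Random(1)
theta = 0.7  # would be tuned during training; fixed here
samples = [generate(rng.uniform(-1.0, 1.0), theta) for _ in range(5)]
```

For this toy circuit the output is cos(θ)cos(z), so each latent value z maps to a real number in a continuous range rather than to a bitstring.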

Our paper is organized as follows: Section II briefly

describes generative learning using generative adversarial

networks and summarizes some proposals for generative

learning on quantum computers. Section III describes the

VQG architecture, its implementation, cost analysis, and

training process using adversarial learning. In Section

IV we provide a proof-of-principle implementation and

numerical simulation of a VQG example and describe the

main challenges for its implementation on NISQ devices.

Section V offers some concluding remarks.

II. BACKGROUND

A. Classical and quantum generative adversarial learning (GANs)

The machine learning literature provides a variety of

generative models. Most of them are trained using the

principle of maximum likelihood, which consists of taking

several samples from the data-generating distribution to

form a training set and changing the parameters of the

model to maximize the likelihood that the observed data

were generated by the model. Generative models in

machine learning can be classified as explicit or implicit,

depending on whether or not an explicit form of the probability

density function is used [34]. Very few tractable

explicit models are known, and most of them rely on

approximations to the density function. On the other

hand, most of the implicit models consist of approxima-

tions that can mimic the process of sampling from the

generating distribution. Implicit models are further clas-

sified into models that require several steps to generate

a single sample, such as Markov chains, and models that

can generate a sample in a single step. Generative ad-

versarial networks (GANs) belong to the latter category.
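As a small illustration of the maximum-likelihood principle for an explicit model, the sketch below fits a one-dimensional Gaussian to samples. The Gaussian family, the synthetic data, and the function names are assumptions chosen because the maximizer is available in closed form.

```python
import math
import random

def gaussian_log_likelihood(data, mu, sigma):
    """Log-likelihood of i.i.d. data under a Gaussian with mean mu, std sigma."""
    n = len(data)
    squared_error = sum((x - mu) ** 2 for x in data)
    return (-0.5 * n * math.log(2.0 * math.pi * sigma ** 2)
            - squared_error / (2.0 * sigma ** 2))

def fit_gaussian_mle(data):
    """Closed-form maximizer: sample mean and (biased) sample std."""
    n = len(data)
    mu = sum(data) / n
    sigma = math.sqrt(sum((x - mu) ** 2 for x in data) / n)
    return mu, sigma

rng = random.Random(0)
data = [rng.gauss(2.0, 0.5) for _ in range(5000)]  # synthetic training set
mu_hat, sigma_hat = fit_gaussian_mle(data)
```

Here the density is written down explicitly and maximized directly. An implicit model such as a GAN never evaluates a density and instead learns only a sampling procedure.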

Generally, GANs consist of two neural networks, the

discriminator and the generator, competing against each

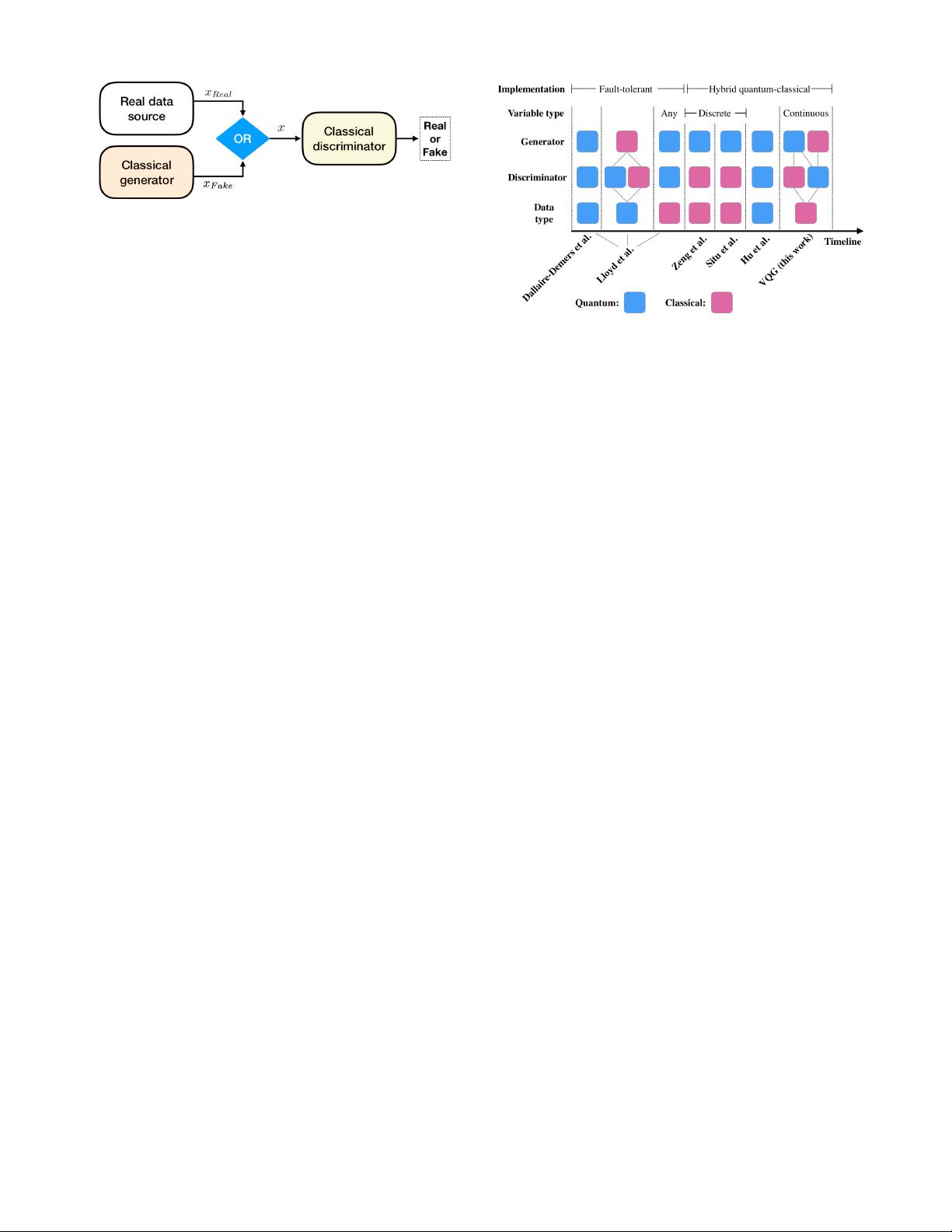

other in a zero-sum game. Figure 1 illustrates the gen-

eral framework of GANs. Given a prior distribution over

the noise parameters p

z

(z), the generator consists of a

neural network F

G

(z; Θ

g

) over the parameters Θ

g

that

generates the distribution p

g

. On the other hand, the

discriminator is another neural network F

D

(x; Θ

d

) that

outputs a single scalar corresponding to the probability

of x coming from the real data distribution. Accordingly,

F

D

is trained to maximize the probability of assigning the

3

FIG. 1. Depiction of the classical generative adversarial net-

works (GANs) scheme: the generator, equipped with ran-

dom samples from a prior distribution (noise source), pro-

duces samples that attempt to mimic the real data samples.

The discriminator outputs the probability that a given sam-

ple came from the real distribution rather than the synthetic

one.

correct label to both the training examples and examples

coming from F

G

. Simultaneously, F

G

is trained to min-

imize log(1 − F

D

(F

G

(z))), related to the probability of

fooling the discriminator. In summary, F

D

and F

G

play

the following adversarial game:

$$\min_{G} \max_{D} \Big( \mathbb{E}_{x \sim p_{\mathrm{data}}(x)}\big[\log F_D(x)\big] + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - F_D(F_G(z))\big)\big] \Big). \tag{1}$$
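The objective in Eq. (1) can be estimated from samples, as the following sketch shows. The models are deliberately tiny and are assumptions made for illustration: a logistic discriminator, an affine generator, Gaussian data, and a Gaussian prior. None of them is the architecture used in this work.

```python
import math
import random

def discriminator(x, w, b):
    """F_D: logistic model returning the probability that x is real."""
    return 1.0 / (1.0 + math.exp(-(w * x + b)))

def generator(z, a, c):
    """F_G: affine map from a latent sample z to a data-space sample."""
    return a * z + c

def value_function(real, latent, d_params, g_params):
    """Monte Carlo estimate of the objective in Eq. (1)."""
    w, b = d_params
    a, c = g_params
    real_term = sum(math.log(discriminator(x, w, b)) for x in real) / len(real)
    fake_term = sum(
        math.log(1.0 - discriminator(generator(z, a, c), w, b)) for z in latent
    ) / len(latent)
    return real_term + fake_term

rng = random.Random(0)
real = [rng.gauss(1.0, 1.0) for _ in range(2000)]    # samples from p_data
latent = [rng.gauss(0.0, 1.0) for _ in range(2000)]  # samples from p_z

# A discriminator with w = b = 0 outputs 1/2 everywhere, so the value is
# log(1/2) + log(1/2) = -log 4 whatever the generator does.
v_blind = value_function(real, latent, (0.0, 0.0), (1.0, 1.0))
# Against a poor generator (samples centered at -3), a discriminator with a
# decision boundary at x = -1 separates the two sets and scores higher.
v_sharp = value_function(real, latent, (2.0, 2.0), (1.0, -3.0))
```

The discriminator ascends this quantity while the generator descends it. The value −log 4 obtained for a discriminator that always outputs 1/2 is the value associated with the equilibrium discussed next.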

Assuming that the discriminator and the generator

have infinite capacity, meaning that they can represent

any probability distribution, it is possible to show that

the final stage of the game reaches a Nash equilibrium

where the generator produces data that corresponds to

the observed probability distribution, and the discrim-

inator has 1/2 probability of discriminating correctly.

Therefore, the final result of the GAN is a generator

model that produces samples from the observed distribution

by sampling the prior distribution $p_z(z)$. The space

of $z$ is usually called the latent space, and $F_G$ is said to

map samples from the latent space to the output space $x$.

The adversarial framework has proven very successful at

training the generator to model a variety of probability

distributions, leading to practical applications in many

fields, including image synthesis, semantic image editing, and

molecular discovery, among others [35–37]. Nowadays,

the application of GANs constitutes an exciting and fast-growing

research field that promises to impact many industries

such as self-driving cars, finance, and drug and

materials discovery [38–42].

Recently, different quantum adaptations of the GAN

scheme have been proposed [26, 27, 30, 43, 44]. These

methodologies can be characterized according to whether

the data source and the models used as discriminator and

generator are classical or quantum. The different scenar-

ios considered so far are summarized in Figure 2. In

particular, Ref. [30] offers a theoretical perspective on

three possible adversarial learning scenarios. The first

of these settings corresponds to a purely quantum ver-

sion of GANs, where the data distribution is a quantum

source and the models correspond to quantum circuits.

FIG. 2. Timeline of the development of quantum generative adversarial network models (Dallaire-Demers et al. [43], Lloyd et al. [30], Zeng et al. [26], Situ et al. [27], Hu et al. [44], and this work (VQG)). We describe each proposal in terms of the nature of the data, the discriminator, and the generator, each of which can be either classical or quantum. Lines indicate possible combinations of models and data type. For those models where the type of the data generated is classical, we describe whether the type of variable is discrete or continuous. We also describe the type of implementation proposed, whether it is based on a fault-tolerant model or a hybrid quantum-classical one.

This proposal is further developed in [43], and experi-

mentally demonstrated for a proof-of-principle quantum

computation with a superconducting qubit architecture

[44]. The second scenario considers a classical generator

that is trying to produce quantum data at an exponential

cost. The third scenario corresponds to classical data

encoded in the amplitudes of a quantum state, such that

quantum generators and discriminators can be employed.

As described in [30], these proposals are designed for

error-corrected quantum computers. More recently, some

groups have proposed hybrid quantum-classical adversarial

learning schemes that could be implemented on NISQ

devices. These approaches utilize a classical data source

and a classical discriminator combined with a variational

circuit sampled as a Born machine as generator [26, 27].

As noted earlier, the Born machine approach consists of

generating a discrete distribution via projective measure-

ment on the qubits.

III. THE VARIATIONAL QUANTUM GENERATOR ARCHITECTURE

A. Architecture

Existing quantum models for generative learning col-

lect data by measuring the system as a Born machine,

which is convenient for discrete distributions but cannot

be easily adapted for continuous cases. We propose a

scheme to generate continuous distributions that builds
