
Tsinghua & Nankai: latest survey paper on visual attention mechanisms


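Attention mechanisms are an important topic in deep learning. The computer graphics team at Tsinghua University, together with Prof. Ming-Ming Cheng's team at Nankai University and Prof. Ralph R. Martin of Cardiff University, has published a survey of attention mechanisms in computer vision on arXiv [1]. The survey systematically reviews work on attention mechanisms in computer vision and provides an accompanying repository.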


JOURNAL OF LaTeX CLASS FILES, VOL. 14, NO. 8, AUGUST 2015

Attention Mechanisms in Computer Vision:

A Survey

Meng-Hao Guo, Tian-Xing Xu, Jiang-Jiang Liu, Zheng-Ning Liu, Peng-Tao Jiang, Tai-Jiang Mu, Song-Hai Zhang, Ralph R. Martin, Ming-Ming Cheng, Senior Member, IEEE, Shi-Min Hu, Senior Member, IEEE

Abstract—Humans can naturally and effectively find salient regions in complex scenes. Motivated by this observation, attention

mechanisms were introduced into computer vision with the aim of imitating this aspect of the human visual system. Such an attention

mechanism can be regarded as a dynamic weight adjustment process based on features of the input image. Attention mechanisms have

achieved great success in many visual tasks, including image classification, object detection, semantic segmentation, video

understanding, image generation, 3D vision, multi-modal tasks and self-supervised learning. In this survey, we provide a comprehensive

review of various attention mechanisms in computer vision and categorize them according to approach, such as channel attention, spatial

attention, temporal attention and branch attention; a related repository https://github.com/MenghaoGuo/Awesome-Vision-Attentions is

dedicated to collecting related work. We also suggest future directions for attention mechanism research.

Index Terms—Attention, Transformer, Survey, Computer Vision, Deep Learning, Salience.


1 INTRODUCTION

Methods for diverting attention to the most important regions of an image and disregarding irrelevant parts are called attention mechanisms; the human visual system uses one [1], [2], [3], [4] to assist in analyzing and understanding complex scenes efficiently and effectively. This in turn has inspired researchers to introduce attention mechanisms into computer vision systems to improve their performance. In a vision system, an attention mechanism can be treated as a dynamic selection process that is realized by adaptively weighting features according to the importance of the input. Attention mechanisms have provided benefits in many visual tasks, e.g. image classification [5], [6], object detection [7], [8], semantic segmentation [9], [10], face recognition [11], [12], person re-identification [13], [14], action recognition [15], [16], few-shot learning [17], [18], medical image processing [19], [20], image generation [21], [22], pose estimation [23], super resolution [24], [25], 3D vision [26], [27], and multi-modal tasks [28], [29].

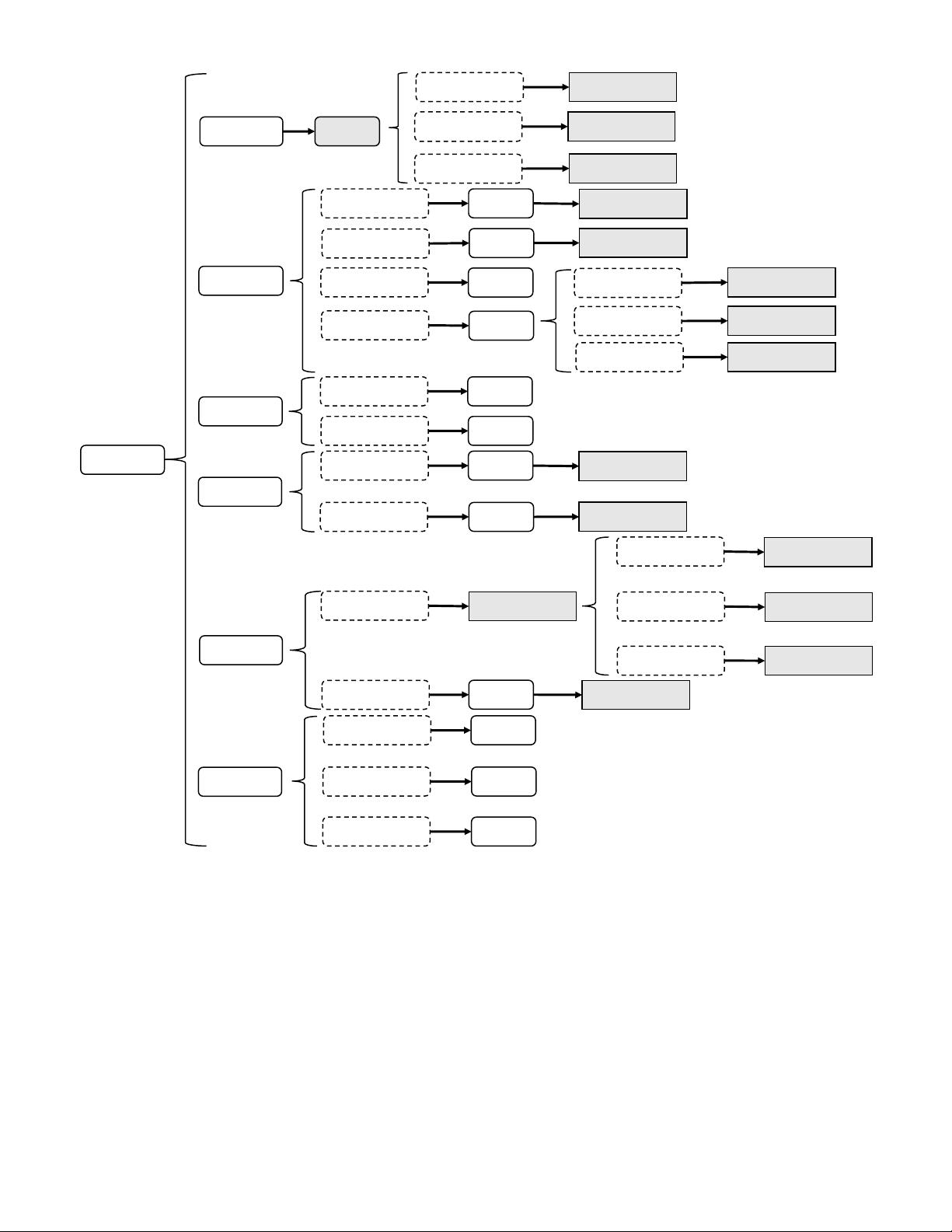

In the past decade, the attention mechanism has played an increasingly important role in computer vision; Fig. 3 briefly summarizes the history of attention-based models in computer vision in the deep learning era. Progress can be coarsely divided into four phases. The first phase begins with RAM [31], pioneering work that combined deep neural networks with attention mechanisms. It recurrently predicts the important region and updates the whole network in an end-to-end manner through a policy gradient. Later, various works [21], [35] adopted a similar strategy for attention in

• M.H. Guo, T.X. Xu, Z.N. Liu, T.J. Mu, S.H. Zhang and S.M. Hu are with the BNRist, Department of Computer Science and Technology, Tsinghua University, Beijing 100084, China.

• J.J. Liu, P.T. Jiang and M.M. Cheng are with TKLNDST, College of Computer Science, Nankai University, Tianjin 300350, China.

• R.R. Martin was with the School of Computer Science and Informatics, Cardiff University, UK.

• S.M. Hu is the corresponding author. E-mail: shimin@tsinghua.edu.cn.

Channel Attention

e.g.,SENet

What to attend

Spatial Attention

e.g.,Self-attention

Where to attend

Channel & Spatial

Attention

e.g.,CBAM

Temporal Attention

e.g., GLTR

When to attend

∅

Spatial&Temporal

Attention

e.g., STA-LSTM

∅

Branch Attention

e.g., SKNet

Which to attend

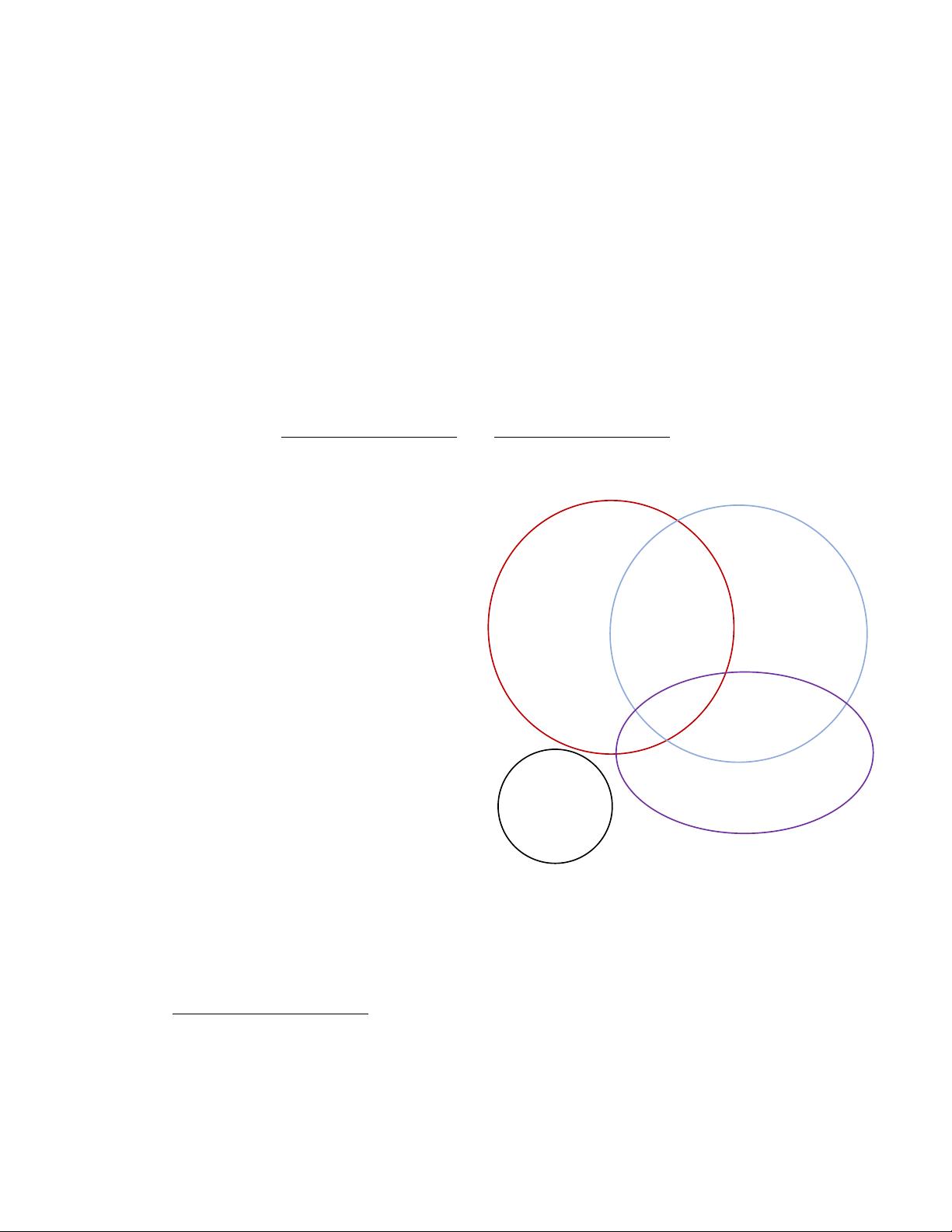

Fig. 1. Attention mechanisms can be categorised according to data

domain. These include four fundamental categories of channel attention,

spatial attention, temporal attention and branch attention, and two hybrid

categories, combining channel & spatial attention and spatial & temporal

attention. ∅ means such combinations do not (yet) exist.

vision. In this phase, recurrent neural networks (RNNs) were necessary tools for an attention mechanism. At the start of the second phase, Jaderberg et al. [32] proposed the STN, which introduces a sub-network to predict an affine transformation used to select important regions in the input. Explicitly predicting discriminatory input features is the major characteristic of the second phase; DCNs [7], [36] are representative works. The third phase began with SENet [5]

arXiv:2111.07624v1 [cs.CV] 15 Nov 2021


[Figure 2 diagram: panels for channel attention, temporal attention, spatial attention, channel & spatial attention, spatial & temporal attention and branch attention (branches 1 to n), each drawn over the axes C, (H, W) and T, with a marker showing where attention is used.]

Fig. 2. Channel, spatial and temporal attention can be regarded as operating on different domains. $C$ represents the channel domain, $H$ and $W$ represent spatial domains, and $T$ means the temporal domain. Branch attention is complementary to these. Figure following [30].

that presented a novel channel-attention network which implicitly and adaptively predicts the potential key features. CBAM [6] and ECANet [37] are representative works of this phase. The last phase is the self-attention era. Self-attention was first proposed in [33] and rapidly provided great advances in the field of natural language processing [33], [38], [39]. Wang et al. [15] took the lead in introducing self-attention to computer vision and presented a novel non-local network with great success in video understanding and object detection. It was followed by a series of works such as EMANet [40], CCNet [41], HamNet [42] and the Stand-Alone Network [43], which improved speed, quality of results, and generalization capability. Recently, various pure deep self-attention networks (visual transformers) [27], [34], [44], [45], [46], [47], [48], [49] have appeared, showing the huge potential of attention-based models. It is clear that attention-based models have the potential to replace convolutional neural networks and become a more powerful and general architecture in computer vision.

The goal of this paper is to summarize and classify

current attention methods in computer vision. Our approach

TABLE 1
Key notation in this paper. Other minor notation is explained where used.

| Symbol | Description |
|---|---|
| $X$ | input feature map, $X \in \mathbb{R}^{C \times H \times W}$ |
| $Y$ | output feature map |
| $W$ | learnable kernel weight |
| FC | fully-connected layer |
| Conv | convolution |
| GAP | global average pooling |
| GMP | global max pooling |
| [ ] | concatenation |
| $\delta$ | ReLU activation [51] |
| $\sigma$ | sigmoid activation |
| tanh | tanh activation |
| Softmax | softmax activation |
| BN | batch normalization [52] |
| Expand | expand input by repetition |

is shown in Fig. 1 and further explained in Fig. 2: it is based around data domain. Some methods consider the question of when the important data occurs, others where it occurs, etc., and accordingly try to find key times or locations in the data. We divide existing attention methods into six categories, which include four basic categories: channel attention (what to pay attention to [50]), spatial attention (where to pay attention), temporal attention (when to pay attention) and branch attention (which to pay attention to), along with two hybrid categories: channel & spatial attention and spatial & temporal attention. These ideas are briefly summarized together with related works in Tab. 2.

The main contributions of this paper are:

• a systematic review of visual attention methods, covering the unified description of attention mechanisms, the development of visual attention mechanisms as well as current research,

• a categorisation grouping attention methods according to their data domain, allowing us to link visual attention methods independently of their particular application, and

• suggestions for future research in visual attention.

Sec. 2 considers related surveys, then Sec. 3 is the main

body of our survey. Suggestions for future research are given

in Sec. 4 and finally, we give conclusions in Sec. 5.

2 OTHER SURVEYS

In this section, we briefly compare this paper to various

existing surveys which have reviewed attention methods

and visual transformers. Chaudhari et al. [140] provide

a survey of attention models in deep neural networks

which concentrates on their application to natural language

processing, while our work focuses on computer vision.

Three more specific surveys [141], [142], [143] summarize the

development of visual transformers while our paper reviews

attention mechanisms in vision more generally, not just self-attention mechanisms. Wang et al. [144] present a survey of

attention models in computer vision, but it only considers

RNN-based attention models, which form just a part of our

survey. In addition, unlike previous surveys, we provide

a classification which groups various attention methods

according to their data domain, rather than according to

their field of application. Doing so allows us to concentrate


[Figure 3 timeline, in chronological order:]

• 2014.06.24, RAM: adopts an RNN and reinforcement learning to achieve spatial attention.

• 2015.06.05, STN: proposes to select important regions by learning an affine transformation.

• 2015.07.22, Highway Network: proposes to combine different branches by using an attention method.

• 2017.09.05, SENet: proposes to adaptively recalibrate channels by using attention weights.

• 2017.11.21, Non-Local Network: first successfully uses self-attention to model non-local relationships in computer vision.

• 2018.07.17, CBAM: proposes to combine channel attention with spatial attention.

• 2018-2020: some popular attention-related networks such as SAGAN, OCNet, DANet, EMANet, OCRNet and HamNet.

• 2020.10.22, ViT: the first pure transformer structure to achieve great results in computer vision.

• 2020-now: various transformer variants such as PCT, Deformable DETR, DeiT, T2T-ViT, IPT, PVT and Swin-Transformer.

Fig. 3. Brief summary of key developments in attention in computer vision, which have loosely occurred in four phases. Phase 1 adopted RNNs to construct attention, a representative method being RAM [31]. Phase 2 explicitly predicted important regions, a representative method being STN [32]. Phase 3 implicitly completed the attention process, a representative method being SENet [5]. Phase 4 used self-attention methods [15], [33], [34].

TABLE 2
Brief summary of attention categories and key related works.

| Attention category | Description | Related work |
|---|---|---|
| Channel attention | Generate attention mask across the channel domain and use it to select important channels. | [5], [37], [53], [54], [55], [56], [57], [58], [25], [59], [60] |
| Spatial attention | Generate attention mask across spatial domains and use it to select important spatial regions (e.g. [15], [61]) or predict the most relevant spatial position directly (e.g. [7], [31]). | [8], [9], [15], [21], [31], [32], [34], [35], [22], [26], [62], [63], [64], [65], [66], [67], [41], [68], [69], [70], [71], [72], [73], [74], [8], [34], [42], [43], [75], [76], [77], [78], [27], [44], [45], [46], [79], [80], [81], [82], [61], [83], [84], [85], [86], [87], [88], [89], [47], [90], [91], [92], [93], [94], [95], [96], [97], [98], [99], [100], [101], [102], [103], [104], [20], [105], [106], [107], [108], [109] |
| Temporal attention | Generate attention mask in time and use it to select key frames. | [110], [111], [112] |
| Branch attention | Generate attention mask across the different branches and use it to select important branches. | [113], [114], [115], [116] |
| Channel & spatial attention | Predict channel and spatial attention masks separately (e.g. [6], [117]) or generate a joint 3-D channel, height, width attention mask directly (e.g. [118], [119]) and use it to select important features. | [6], [50], [117], [119], [120], [121], [122], [10], [101], [118], [123], [124], [125], [126], [13], [14], [127], [128], [129] |
| Spatial & temporal attention | Compute temporal and spatial attention masks separately (e.g. [16], [130]), or produce a joint spatiotemporal attention mask (e.g. [131]), to focus on informative regions. | [130], [132], [133], [134], [135], [136], [137], [138], [139] |

on the attention methods in their own right, rather than

treating them as supplementary to other tasks.

3 ATTENTION METHODS IN COMPUTER VISION

In this section, we first summarize a general form for the attention mechanism based on the recognition process of the human visual system in Sec. 3.1. Then we review the categories of attention models given in Fig. 1, with a subsection dedicated to each category. In each, we tabularize representative works for that category. We also introduce that category of attention strategy in more depth, considering its development in terms of motivation, formulation and function.

3.1 General form

When seeing a scene in daily life, we focus on the discriminative regions and process these regions quickly. This process can be formulated as:

$$\mathrm{Attention} = f(g(x), x) \tag{1}$$

Here $g(x)$ represents generating attention, which corresponds to the process of attending to the discriminative regions. $f(g(x), x)$ means processing the input $x$ based on the attention $g(x)$, which corresponds to processing the critical regions and extracting information from them.

With the above definition, we find that almost all existing attention mechanisms can be written in the above formulation. Here we take self-attention [15] and squeeze-and-


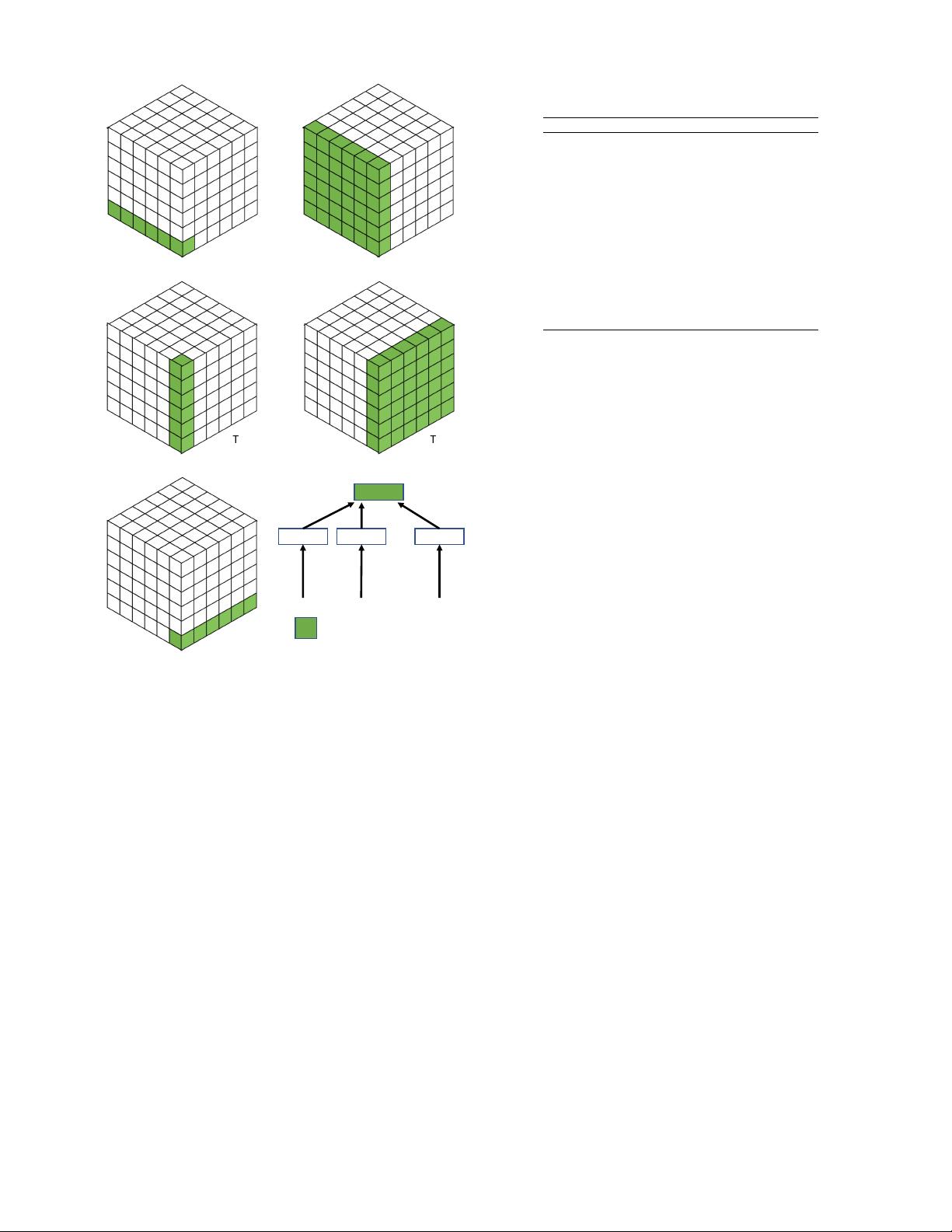

Channel

attention

SENet

Improve squeeze

module

Improve excitation

module

Improve both squeeze

and excitation module

GSoP-Net, FcaNet, etc.

ECANet

SRM, GCT, etc.

DRAW, Glimpse Net, etc.

DCN, DCN V2, etc.

Spatial attention

RNN-based attention

Predict the relevant

region explicitly

Self-attention based

Predict a soft mask

implicitly

RAM

Non-local

GENet

STN

Visual Transformers

Local self-attention

Efficient self-attention

DETR, ViT, etc.

SASA, SAN, etc.

CCNet, EMANet, etc.

Branch attention

SKNet, ReNeSt, etc.

Dynamic Conv.

Combining features of

different branches

Combine different

convolution kernels

Highway

Network

CondConv

Channel &

Spatial attention

CBAM, BAM, scSE et al.

Split channel attention

and spatial attention

SimAM, Strip Pooling,

SCNet

Directly Estimate 3D

attention map

Residual

attention

Triplet Attention

Cross-dimension

interaction

Coordinate Attention,

DANet

Long-range

dependencies

RGA

Relation-aware

attention

Attention

Mechanisms

Temporal

attention

Combining local

attention and global

attention

Self-attention based

TAM

GLTR

Temporal &

Spatial attention

Split temporal

attention and spatial

attention

Jointly produce spatial

and temporal attention

RSTAN

STA

Pairwise relation-

based

STGCN

Fig. 4. Developmental context of visual attention.

excitation (SE) attention [5] as examples. For self-attention, $g(x)$ and $f(g(x), x)$ can be written as

$$Q, K, V = \mathrm{Linear}(x) \tag{2}$$

$$g(x) = \mathrm{Softmax}(QK^{\top}) \tag{3}$$

$$f(g(x), x) = g(x)V \tag{4}$$

For SE, $g(x)$ and $f(g(x), x)$ can be written as

$$g(x) = \mathrm{Sigmoid}(\mathrm{MLP}(\mathrm{GAP}(x))) \tag{6}$$

$$f(g(x), x) = g(x)\,x \tag{7}$$

In the following, we introduce various attention mechanisms and express them in terms of the above formulation.
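As an illustration of the general form in Eq. 1, the following minimal sketch instantiates $g$ and $f$ for self-attention (Eqs. 2 to 4) in plain Python. It is not the authors' implementation: the helper names (`matmul`, `softmax_rows`), the separate projection matrices `w_q`, `w_k`, `w_v` and the toy input are assumptions made for the example, $K$ is transposed so that the product is defined for token-by-feature matrices, and no scaling factor is applied because none appears in Eq. 3.

```python
import math

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(a):
    return [list(col) for col in zip(*a)]

def softmax_rows(a):
    """Numerically stable softmax applied to every row."""
    out = []
    for row in a:
        m = max(row)
        exps = [math.exp(v - m) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out

def g(x, w_q, w_k):
    """Generate attention: Softmax(Q K^T), with Q and K linear in x."""
    q = matmul(x, w_q)
    k = matmul(x, w_k)
    return softmax_rows(matmul(q, transpose(k)))

def f(attn, x, w_v):
    """Process the input with the attention: g(x) V, with V linear in x."""
    return matmul(attn, matmul(x, w_v))

# Toy input (hypothetical): 3 tokens with 2 features each.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]  # identity projections keep the example small

attn = g(x, identity, identity)  # 3 x 3 attention map, rows sum to 1
y = f(attn, x, identity)         # 3 x 2 output, same shape as x
```

Each row of `attn` is a probability distribution over the input tokens, so every output row is a weighted average of the value vectors, which is the "dynamic weighting" reading of Eq. 1.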

3.2 Channel Attention

In deep neural networks, different channels in different

feature maps usually represent different objects [50]. Channel

attention adaptively recalibrates the weight of each channel,

and can be viewed as an object selection process, thus

determining what to pay attention to. Hu et al. [5] first

proposed the concept of channel attention and presented

SENet for this purpose. As Fig. 4 shows, and we discuss


shortly, three streams of work continue to improve channel

attention in different ways.

In this section, we first summarize representative channel attention works and specify the processes $g(x)$ and $f(g(x), x)$ of Eq. 1 for each of them in Tab. 3 and Fig. 5. Then we discuss the various channel attention methods along with their development.

3.2.1 SENet

SENet [5] pioneered channel attention. The core of SENet

is a squeeze-and-excitation (SE) block which is used to collect

global information, capture channel-wise relationships and

improve representation ability.

SE blocks are divided into two parts, a squeeze module

and an excitation module. Global spatial information is

collected in the squeeze module by global average pooling.

The excitation module captures channel-wise relationships

and outputs an attention vector by using fully-connected

layers and non-linear layers (ReLU and sigmoid). Then, each

channel of the input feature is scaled by multiplying the

corresponding element in the attention vector. Overall, a squeeze-and-excitation block $F_{se}$ (with parameter $\theta$) which takes $X$ as input and outputs $Y$ can be formulated as:

$$s = F_{se}(X, \theta) = \sigma(W_2\,\delta(W_1\,\mathrm{GAP}(X))) \tag{8}$$

$$Y = sX \tag{9}$$

SE blocks play the role of emphasizing important channels

while suppressing noise. An SE block can be added after each residual unit [145] because of its low computational cost. However, SE blocks have shortcomings. In

the squeeze module, global average pooling is too simple

to capture complex global information. In the excitation

module, fully-connected layers increase the complexity of the

model. As Fig. 4 indicates, later works attempt to improve the

outputs of the squeeze module (e.g. GSoP-Net [54]), reduce

the complexity of the model by improving the excitation

module (e.g. ECANet [37]), or improve both the squeeze

module and the excitation module (e.g. SRM [55]).
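The SE block of Eqs. 8 and 9 can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions rather than the SENet reference code: the feature map is a nested list of shape C × H × W, bias terms are omitted, and the weight shapes (`w1` mapping 2 channels to 1 hidden unit, `w2` mapping back to 2) are hypothetical toy values.

```python
import math

def gap(x):
    """Squeeze: global average pooling over H and W, one value per channel."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in x]

def linear(w, v):
    """Fully-connected layer without bias: w has one row per output unit."""
    return [sum(wi * vi for wi, vi in zip(row, v)) for row in w]

def relu(v):
    return [max(0.0, a) for a in v]

def sigmoid(v):
    return [1.0 / (1.0 + math.exp(-a)) for a in v]

def se_block(x, w1, w2):
    """s = sigma(W2 delta(W1 GAP(X))), Y = s X (channel-wise scaling)."""
    s = sigmoid(linear(w2, relu(linear(w1, gap(x)))))
    y = [[[s_c * val for val in row] for row in ch] for s_c, ch in zip(s, x)]
    return s, y

# Toy feature map (hypothetical): C = 2, H = W = 2.
x = [[[1.0, 2.0], [3.0, 4.0]],   # channel 0
     [[0.0, 0.0], [0.0, 0.0]]]   # channel 1
w1 = [[1.0, 1.0]]                # C = 2 -> 1 hidden unit
w2 = [[1.0], [-1.0]]             # 1 hidden unit -> C = 2

s, y = se_block(x, w1, w2)       # s holds one weight in (0, 1) per channel
```

The attention vector `s` has exactly one entry per channel, so the block changes how strongly each channel contributes but leaves the spatial layout of every channel untouched.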

3.2.2 GSoP-Net

An SE block captures global information by only using global

average pooling (i.e. first-order statistics), which limits its

modeling capability, in particular the ability to capture high-

order statistics.

To address this issue, Gao et al. [54] proposed to improve

the squeeze module by using a global second-order pooling

(GSoP) block to model high-order statistics while gathering

global information.

Like an SE block, a GSoP block also has a squeeze module and an excitation module. In the squeeze module, a GSoP block first reduces the number of channels from $c$ to $c'$ ($c' < c$) using a $1 \times 1$ convolution, then computes a $c' \times c'$ covariance matrix for the different channels to obtain their correlation. Next, row-wise normalization is performed on the covariance matrix. Each entry $(i, j)$ in the normalized covariance matrix explicitly relates channel $i$ to channel $j$.

In the excitation module, a GSoP block performs row-wise convolution to maintain structural information and output a vector. Then a fully-connected layer and a sigmoid function are applied to get a $c$-dimensional attention vector. Finally, it multiplies the input features by the attention vector, as in an SE block. A GSoP block can be formulated as:

$$s = F_{gsop}(X, \theta) = \sigma(W\,\mathrm{RC}(\mathrm{Cov}(\mathrm{Conv}(X)))) \tag{10}$$

$$Y = sX \tag{11}$$

Here, $\mathrm{Conv}(\cdot)$ reduces the number of channels, $\mathrm{Cov}(\cdot)$ computes the covariance matrix and $\mathrm{RC}(\cdot)$ means row-wise convolution.

By using second-order pooling, GSoP blocks collect global information more effectively than SE blocks. However, this comes at the cost of additional computation. Thus, a single GSoP block is typically added after several residual blocks.
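The data flow of Eqs. 10 and 11 can be sketched as follows. This is a simplified illustration, not the GSoP-Net implementation: the spatial dimensions are flattened to $N = H \times W$ positions, the covariance is normalized by $N$, the row-wise normalization step is left out because its exact form is not given here, the row-wise convolution is modelled as one learnable kernel per covariance row, and all weights are hypothetical toy values.

```python
import math

def conv1x1(x, w):
    """1x1 convolution: mix channels at every position. x is C x N, w is C' x C."""
    n = len(x[0])
    return [[sum(w_row[c] * x[c][p] for c in range(len(x))) for p in range(n)]
            for w_row in w]

def covariance(z):
    """C' x C' covariance between channels, computed over the N positions."""
    n = len(z[0])
    means = [sum(ch) / n for ch in z]
    centered = [[v - m for v in ch] for ch, m in zip(z, means)]
    return [[sum(a * b for a, b in zip(ci, cj)) / n for cj in centered]
            for ci in centered]

def row_conv(cov, kernels):
    """Row-wise convolution: each covariance row is reduced by its own kernel."""
    return [sum(k * v for k, v in zip(k_row, c_row))
            for k_row, c_row in zip(kernels, cov)]

def gsop_block(x, w_reduce, kernels, w_fc):
    """s = sigma(W RC(Cov(Conv(X)))), Y = s X."""
    cov = covariance(conv1x1(x, w_reduce))
    v = row_conv(cov, kernels)
    s = [1.0 / (1.0 + math.exp(-sum(w * vi for w, vi in zip(row, v))))
         for row in w_fc]
    y = [[s_c * val for val in ch] for s_c, ch in zip(s, x)]
    return cov, s, y

# Toy input (hypothetical): c = 3 channels, N = 4 positions, reduced to c' = 2.
x = [[1.0, 2.0, 3.0, 4.0], [4.0, 3.0, 2.0, 1.0], [1.0, 1.0, 1.0, 1.0]]
w_reduce = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]   # keeps the first two channels
kernels = [[1.0, 0.0], [0.0, 1.0]]              # one kernel per covariance row
w_fc = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]     # c' = 2 -> c = 3

cov, s, y = gsop_block(x, w_reduce, kernels, w_fc)
```

In this toy input the first two channels are perfectly anti-correlated, which shows up as a negative off-diagonal entry in `cov`; first-order pooling alone, as in an SE block, would not expose that relationship.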

3.2.3 SRM

Motivated by successes in style transfer, Lee et al. [55] proposed the lightweight style-based recalibration module (SRM).

SRM combines style transfer with an attention mechanism. Its

main contribution is style pooling which utilizes both mean

and standard deviation of the input features to improve

its capability to capture global information. It also adopts

a lightweight channel-wise fully-connected (CFC) layer, in

place of the original fully-connected layer, to reduce the

computational requirements.

Given an input feature map $X \in \mathbb{R}^{C \times H \times W}$, SRM first collects global information by using style pooling ($\mathrm{SP}(\cdot)$), which combines global average pooling and global standard deviation pooling. Then a channel-wise fully connected ($\mathrm{CFC}(\cdot)$) layer (i.e. fully connected per channel), batch normalization $\mathrm{BN}$ and sigmoid function $\sigma$ are used to provide the attention vector. Finally, as in an SE block, the input features are multiplied by the attention vector. Overall, an SRM can be written as:

$$s = F_{srm}(X, \theta) = \sigma(\mathrm{BN}(\mathrm{CFC}(\mathrm{SP}(X)))) \tag{12}$$

$$Y = sX \tag{13}$$

The SRM block improves both squeeze and excitation modules, yet can be added after each residual unit like an SE block.
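A rough sketch of Eqs. 12 and 13 in plain Python is given below. It is an illustration under assumptions, not the SRM reference code: spatial dimensions are flattened to $N = H \times W$ positions, the standard deviation is the population form (dividing by $N$), batch normalization is applied in inference mode with fixed running statistics, and the CFC weights and BN parameters are hypothetical toy values.

```python
import math

def style_pool(x):
    """SP: per-channel (mean, standard deviation) over the N positions."""
    stats = []
    for ch in x:
        n = len(ch)
        mean = sum(ch) / n
        std = math.sqrt(sum((v - mean) ** 2 for v in ch) / n)
        stats.append((mean, std))
    return stats

def cfc(stats, w):
    """CFC: each channel combines its own two style statistics with its own weights."""
    return [w_c[0] * mean + w_c[1] * std for (mean, std), w_c in zip(stats, w)]

def bn_eval(z, run_mean, run_var, gamma, beta, eps=1e-5):
    """Batch normalization in inference mode, using fixed running statistics."""
    return [g * (v - m) / math.sqrt(var + eps) + b
            for v, m, var, g, b in zip(z, run_mean, run_var, gamma, beta)]

def sigmoid(v):
    return [1.0 / (1.0 + math.exp(-a)) for a in v]

def srm(x, w, run_mean, run_var, gamma, beta):
    """s = sigma(BN(CFC(SP(X)))), Y = s X."""
    stats = style_pool(x)
    s = sigmoid(bn_eval(cfc(stats, w), run_mean, run_var, gamma, beta))
    y = [[s_c * v for v in ch] for s_c, ch in zip(s, x)]
    return stats, s, y

# Toy input (hypothetical): C = 2 channels, N = 4 positions.
x = [[1.0, 2.0, 3.0, 4.0], [2.0, 2.0, 2.0, 2.0]]
w = [[1.0, 1.0], [1.0, 1.0]]   # one (mean, std) weight pair per channel
stats, s, y = srm(x, w, [0.0, 0.0], [1.0, 1.0], [1.0, 1.0], [0.0, 0.0])
```

The CFC layer needs only two weights per channel, whereas a fully-connected layer over all channels needs a weight for every pair of input and output channels, which is why the module stays lightweight.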

3.2.4 GCT

Due to the computational demand and number of parameters

of the fully connected layer in the excitation module, it

is impractical to use an SE block after each convolution

layer. Furthermore, using fully connected layers to model

channel relationships is an implicit procedure. To overcome

the above problems, Yang et al. [56] propose the gated channel

transformation (GCT) to efficiently collect information while

explicitly modeling channel-wise relationships.

Unlike previous methods, GCT first collects global information by computing the $\ell_2$-norm of each channel. Next, a learnable vector $\alpha$ is applied to scale the feature. Then a competition mechanism is adopted by channel normalization to interact between channels. Like other common normalization methods, a learnable scale parameter $\gamma$ and bias $\beta$ are applied to rescale the normalization. However, unlike previous methods, GCT adopts tanh activation to control the attention vector. Finally, it not only multiplies the input
