Cross entropy From Wikipedia

Uploaded 2021-11-25 11:22:10

评论

收藏 435KB PDF 举报

温馨提示

Wiki上的叉乘介绍

资源推荐

资源详情

资源评论

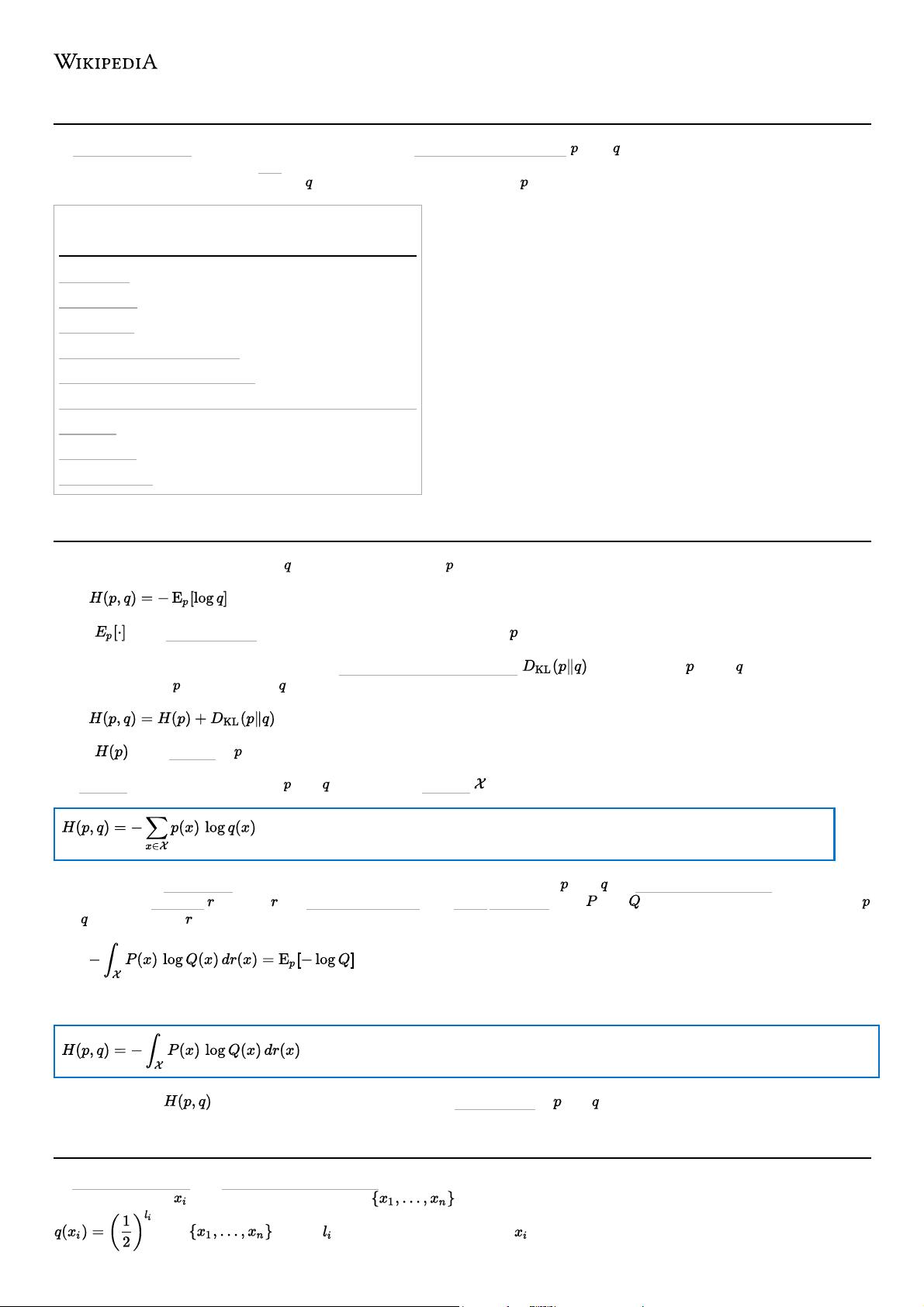

Cross entropy

In information theory, the cross-entropy between two probability distributions $p$ and $q$ over the same underlying set of events measures the average number of bits needed to identify an event drawn from the set if a coding scheme used for the set is optimized for an estimated probability distribution $q$, rather than the true distribution $p$.

Contents

- Definition
- Motivation
- Estimation
- Relation to log-likelihood
- Cross-entropy minimization
- Cross-entropy loss function and logistic regression
- See also
- References
- External links

Definition

The cross-entropy of the distribution $q$ relative to a distribution $p$ over a given set is defined as follows:

$$H(p, q) = -\operatorname{E}_p[\log q],$$

where $\operatorname{E}_p[\cdot]$ is the expected value operator with respect to the distribution $p$.

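The definition may be formulated using the Kullback–Leibler divergence $D_{\mathrm{KL}}(p \parallel q)$, the divergence of $p$ from $q$ (also known as the relative entropy of $p$ with respect to $q$):

$$H(p, q) = H(p) + D_{\mathrm{KL}}(p \parallel q),$$

where $H(p)$ is the entropy of $p$.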
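The decomposition into entropy plus Kullback–Leibler divergence can be checked term by term. The following is a short derivation sketch for the discrete case only, assuming both distributions share a common support (written here as $\mathcal{X}$, a symbol chosen for illustration) and using the standard definitions of entropy and Kullback–Leibler divergence:

$$
\begin{aligned}
H(p) + D_{\mathrm{KL}}(p \parallel q)
&= -\sum_{x \in \mathcal{X}} p(x) \log p(x) + \sum_{x \in \mathcal{X}} p(x) \log \frac{p(x)}{q(x)} \\
&= -\sum_{x \in \mathcal{X}} p(x) \log p(x) + \sum_{x \in \mathcal{X}} p(x) \log p(x) - \sum_{x \in \mathcal{X}} p(x) \log q(x) \\
&= -\sum_{x \in \mathcal{X}} p(x) \log q(x) = -\operatorname{E}_p[\log q] = H(p, q).
\end{aligned}
$$

Since $D_{\mathrm{KL}}(p \parallel q) \ge 0$ with equality when $q = p$, the cross-entropy is never smaller than the entropy $H(p)$.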

For discrete probability distributions $p$ and $q$ with the same support $\mathcal{X}$ this means

$$H(p, q) = -\sum_{x \in \mathcal{X}} p(x) \log q(x). \qquad \text{(Eq.1)}$$
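As an illustration of the discrete formula, here is a minimal Python sketch that evaluates the sum directly and checks it numerically against entropy plus Kullback–Leibler divergence. The function names and the example distributions are assumptions made for this example, not part of the article. Natural logarithms are used, so results are in nats.

```python
import math

def entropy(p):
    """Shannon entropy H(p) in nats; outcomes with p(x) = 0 contribute 0."""
    return -sum(px * math.log(px) for px in p if px > 0)

def cross_entropy(p, q):
    """Discrete cross-entropy H(p, q) = -sum_x p(x) log q(x), in nats."""
    return -sum(px * math.log(qx) for px, qx in zip(p, q) if px > 0)

def kl_divergence(p, q):
    """Kullback-Leibler divergence D_KL(p || q) in nats."""
    return sum(px * math.log(px / qx) for px, qx in zip(p, q) if px > 0)

# Hypothetical example: p is the true distribution, q the estimate.
p = [0.5, 0.25, 0.25]
q = [0.25, 0.25, 0.5]

h_pq = cross_entropy(p, q)
decomposed = entropy(p) + kl_divergence(p, q)
print(h_pq, decomposed)
```

The two printed values should agree up to floating-point rounding, and `cross_entropy(p, p)` reduces to `entropy(p)`.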

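The situation for continuous distributions is analogous. We have to assume that $p$ and $q$ are absolutely continuous with respect to some reference measure $r$ (usually $r$ is a Lebesgue measure on a Borel σ-algebra). Let $P$ and $Q$ be probability density functions of $p$ and $q$ with respect to $r$. Then

$$-\int_{\mathcal{X}} P(x) \log Q(x) \, dr(x) = \operatorname{E}_p[-\log Q]$$

and therefore

$$H(p, q) = -\int_{\mathcal{X}} P(x) \log Q(x) \, dr(x). \qquad \text{(Eq.2)}$$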
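To make the continuous case concrete, the sketch below approximates the integral for two univariate Gaussian densities with a midpoint rule and compares it to the known closed form for Gaussians. The choice of Gaussians, the integration bounds, the step count, and all function names are assumptions for illustration. The reference measure is taken to be Lebesgue measure on the real line, and results are in nats.

```python
import math

def gaussian_pdf(x, mu, sigma):
    """Density of a normal distribution with mean mu and std dev sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))

def gaussian_log_pdf(x, mu, sigma):
    """Log-density, computed analytically to avoid log(0) in the tails."""
    z = (x - mu) / sigma
    return -0.5 * z * z - math.log(sigma * math.sqrt(2 * math.pi))

def cross_entropy_numeric(mu_p, sigma_p, mu_q, sigma_q,
                          lo=-20.0, hi=20.0, n=100_000):
    """Midpoint-rule approximation of -integral P(x) log Q(x) dx."""
    dx = (hi - lo) / n
    total = 0.0
    for i in range(n):
        x = lo + (i + 0.5) * dx
        total += gaussian_pdf(x, mu_p, sigma_p) * gaussian_log_pdf(x, mu_q, sigma_q)
    return -total * dx

def cross_entropy_closed_form(mu_p, sigma_p, mu_q, sigma_q):
    """Closed form for Gaussians: 0.5*log(2*pi*sq^2) + (sp^2 + (mp-mq)^2) / (2*sq^2)."""
    return (0.5 * math.log(2 * math.pi * sigma_q ** 2)
            + (sigma_p ** 2 + (mu_p - mu_q) ** 2) / (2 * sigma_q ** 2))

# Hypothetical parameters: true density N(0, 1), estimated density N(1, 2^2).
numeric = cross_entropy_numeric(0.0, 1.0, 1.0, 2.0)
exact = cross_entropy_closed_form(0.0, 1.0, 1.0, 2.0)
print(numeric, exact)
```

The integration range is wide enough that the truncated tails are negligible for these parameters; other parameter choices may need different bounds.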

NB: The notation $H(p, q)$ is also used for a different concept, the joint entropy of $p$ and $q$.

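Motivation

In information theory, the Kraft–McMillan theorem establishes that any directly decodable coding scheme for coding a message to identify one value $x_i$ out of a set of possibilities $\{x_1, \ldots, x_n\}$ can be seen as representing an implicit probability distribution $q(x_i) = \left(\frac{1}{2}\right)^{\ell_i}$ over $\{x_1, \ldots, x_n\}$, where $\ell_i$ is the length of the code for $x_i$ in bits. Therefore, cross-entropy can be interpreted as the expected message length per datum when a wrong distribution $q$ is assumed while the data actually follow a distribution $p$.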
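The coding interpretation can be sketched numerically: a set of codeword lengths induces an implicit distribution, and the average code length under the true distribution equals the cross-entropy measured in bits. The codeword lengths, the distribution, and the function names below are hypothetical choices for this example.

```python
import math

def implicit_distribution(code_lengths):
    """Implicit distribution q(x_i) = (1/2)**l_i for code lengths l_i in bits."""
    return [0.5 ** length for length in code_lengths]

def expected_code_length(p, code_lengths):
    """Average number of bits per symbol when symbols are drawn from p."""
    return sum(px * length for px, length in zip(p, code_lengths))

def cross_entropy_bits(p, q):
    """Cross-entropy H(p, q) in bits (base-2 logarithm)."""
    return -sum(px * math.log2(qx) for px, qx in zip(p, q) if px > 0)

# True distribution over three symbols.
p = [0.5, 0.25, 0.25]

# A mismatched prefix code, e.g. codewords "00", "01", "1".
mismatched_lengths = [2, 2, 1]
q = implicit_distribution(mismatched_lengths)

# A code matched to p, e.g. codewords "0", "10", "11".
matched_lengths = [1, 2, 2]

avg_mismatched = expected_code_length(p, mismatched_lengths)
avg_matched = expected_code_length(p, matched_lengths)
h_pq_bits = cross_entropy_bits(p, q)
h_p_bits = cross_entropy_bits(p, p)
print(avg_mismatched, h_pq_bits, avg_matched, h_p_bits)
```

In this example the mismatched code costs 1.75 bits per symbol on average, which matches the cross-entropy, while the matched code costs 1.5 bits, which matches the entropy of the true distribution.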

