MonoSLAM: Real-Time Single Camera SLAM

Andrew J. Davison, Ian D. Reid, Member, IEEE, Nicholas D. Molton, and

Olivier Stasse, Member, IEEE

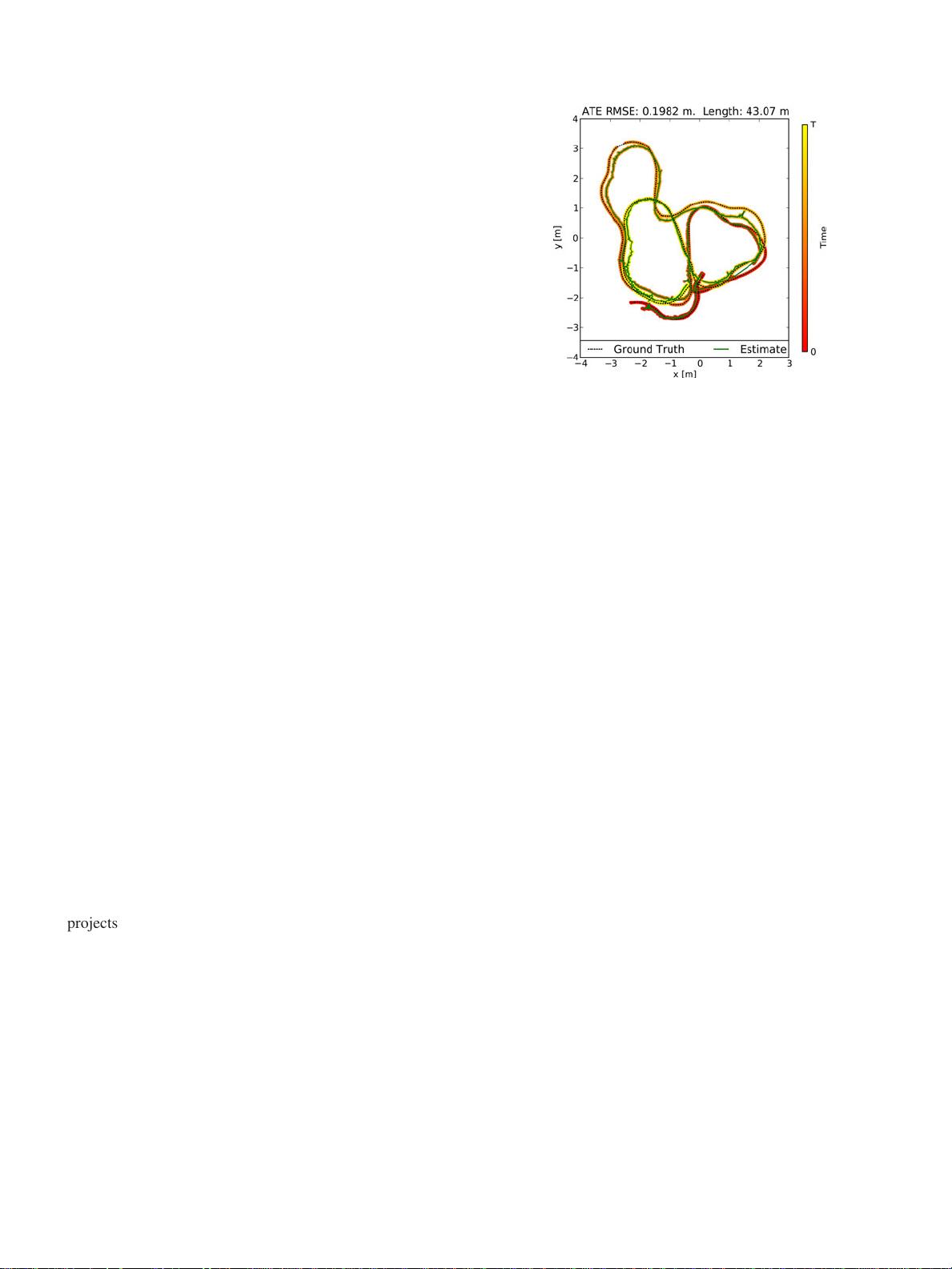

Abstract—We present a real-time algorithm which can recover the 3D trajectory of a monocular camera, moving rapidly through a

previously unknown scene. Our system, which we dub MonoSLAM, is the first successful application of the SLAM methodology from

mobile robotics to the “pure vision” domain of a single uncontrolled camera, achieving real time but drift-free performance inaccessible

to Structure from Motion approaches. The core of the approach is the online creation of a sparse but persistent map of natural

landmarks within a probabilistic framework. Our key novel contributions include an active approach to mapping and measurement, the

use of a general motion model for smooth camera movement, and solutions for monocular feature initialization and feature orientation

estimation. Together, these add up to an extremely efficient and robust algorithm which runs at 30 Hz with standard PC and camera

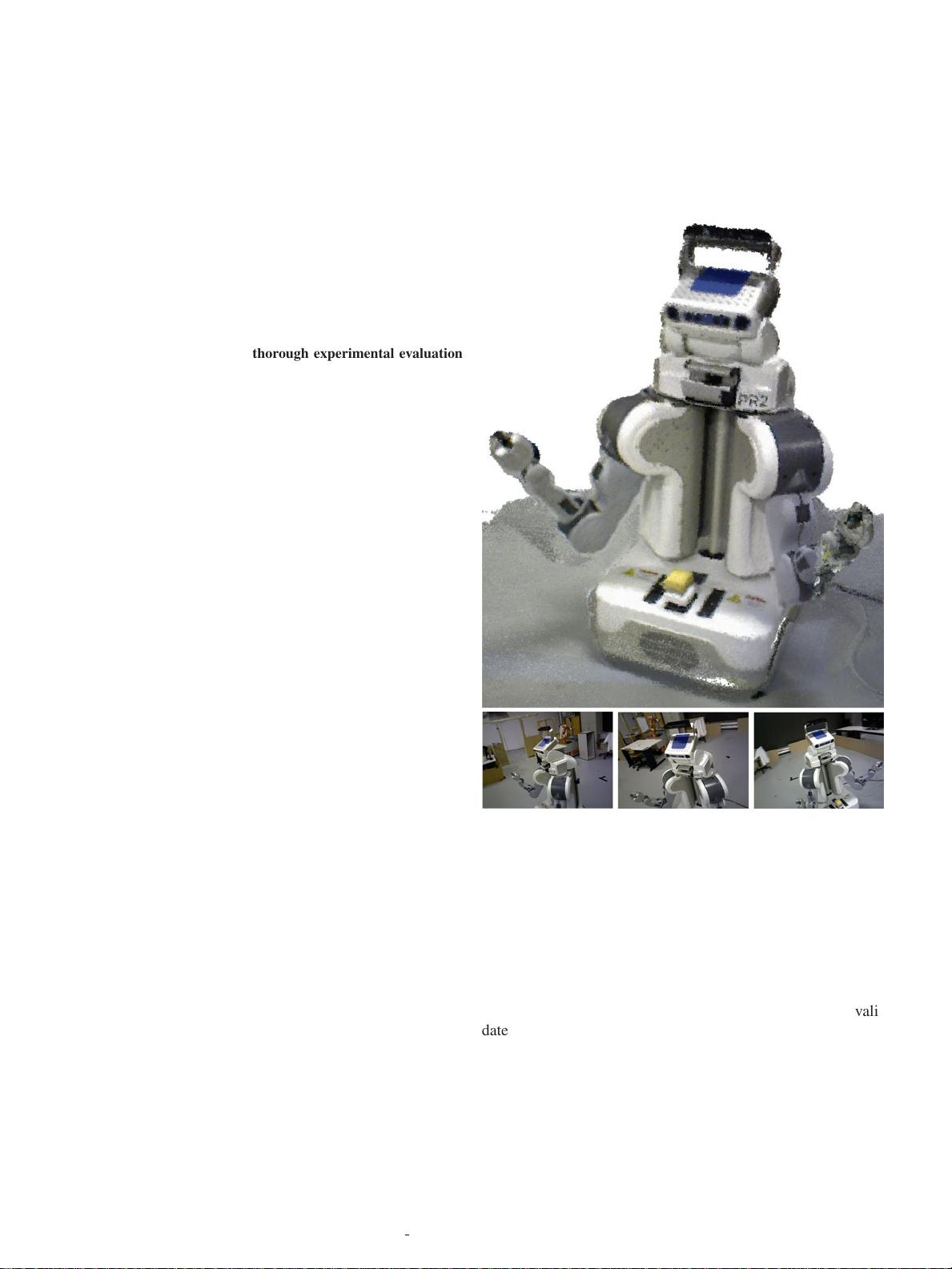

hardware. This work extends the range of robotic systems in which SLAM can be usefully applied, but also opens up new areas. We

present applications of MonoSLAM to real-time 3D localization and mapping for a high-performance full-size humanoid robot and live

augmented reality with a hand-held camera.

Index Terms—Autonomous vehicles, 3D/stereo scene analysis, tracking.

1 INTRODUCTION

THE last 10 years have seen significant progress in

autonomous robot navigation and, specifically, Simultaneous

Localization and Mapping (SLAM) has become well-defined

in the robotics community as the question of a moving

sensor platform constructing a representation of its environment

on the fly while concurrently estimating its ego-motion.

SLAM is today routinely achieved in experimental robot

systems using modern methods of sequential Bayesian

inference and SLAM algorithms are now starting to cross

over into practical systems. Interestingly, however, and

despite the large computer vision research community, until

very recently the use of cameras has not been at the center of

progress in robot SLAM, with much more attention given to

other sensors such as laser range-finders and sonar.

This may seem surprising since for many reasons vision is

an attractive choice of SLAM sensor: cameras are compact,

accurate, noninvasive, and well-understood, and today

cheap and ubiquitous. Vision, of course, also has great

intuitive appeal as the sense humans and animals primarily

use to navigate. However, cameras capture the world’s

geometry only indirectly through photometric effects and it

was thought too difficult to turn the sparse sets of features

popping out of an image into reliable long-term maps

generated in real-time, particularly since the data rates

coming from a camera are so much higher than those from

other sensors.

Instead, vision researchers concentrated on reconstruction

problems from small image sets, developing the field

known as Structure from Motion (SFM). SFM algorithms

have been extended to work on longer image sequences

(e.g., [1], [2], [3]), but these systems are fundamentally

offline in nature, analyzing a complete image sequence to

produce a reconstruction of the camera trajectory and scene

structure observed. To obtain globally consistent estimates

over a sequence, local motion estimates from frame-to-

frame feature matching are refined in a global optimization

moving backward and forward through the whole sequence

(called bundle adjustment). These methods are perfectly

suited to the automatic analysis of short image sequences

obtained from arbitrary sources—movie shots, consumer

video, or even decades-old archive footage—but do not scale

to consistent localization over arbitrarily long sequences in

real time.

Our work is highly focused on high frame-rate real-time

performance (typically 30 Hz) as a requirement. In applications,

real-time algorithms are necessary only if they are to

be used as part of a loop involving other components in the

dynamic world—a robot that must control its next motion

step, a human who needs visual feedback on their actions, or

another computational process which is waiting for input.

In these cases, the most immediately useful information to

be obtained from a moving camera in real time is where it is,

rather than a fully detailed “final result” map of a scene

ready for display. Although localization and mapping are

intricately coupled problems and it has been proven in

SLAM research that solving either requires solving both, in

this work we focus on localization as the main output of

interest. A map is certainly built, but it is a sparse map of

landmarks optimized toward enabling localization.

Further, real-time camera tracking scenarios will often

involve extended and looping motions within a restricted

environment (as a humanoid performs a task, a domestic

robot cleans a home, or a room is viewed from different angles

IEEE TRANSACTIONS ON PATTERN ANALYSIS AND MACHINE INTELLIGENCE, VOL. 29, NO. 6, JUNE 2007 1

• A.J. Davison is with the Department of Computing, Imperial College, 180 Queen’s Gate, SW7 2AZ, London, UK. E-mail: ajd@doc.ic.ac.uk.

• I.D. Reid is with the Robotics Research Group, Department of Engineering Science, University of Oxford, OX1 3PJ, UK. E-mail: ian@robots.ox.ac.uk.

• N.D. Molton is with Imagineer Systems Ltd., The Surrey Technology Centre, 40 Occam Road, The Surrey Research Park, Guildford GU2 7YG, UK. E-mail: ndm@imagineersystems.com.

• O. Stasse is with the Joint Japanese-French Robotics Laboratory (JRL), CNRS/AIST, AIST Central 2, 1-1-1 Umezono, Tsukuba, Ibaraki, 305-8568, Japan. E-mail: olivier.stasse@aist.go.jp.

Manuscript received 13 Dec. 2005; revised 29 June 2006; accepted 6 Sept.

2006; published online 18 Jan. 2007.

Recommended for acceptance by C. Taylor.

For information on obtaining reprints of this article, please send e-mail to:

tpami@computer.org, and reference IEEECS Log Number TPAMI-0705-1205.

Digital Object Identifier no. 10.1109/TPAMI.2007.1049.

0162-8828/07/$25.00 © 2007 IEEE Published by the IEEE Computer Society