
CS229 Lecture notes

Andrew Ng

Supervised learning

Let’s start by talking about a few examples of supervised learning problems.

Suppose we have a dataset giving the living areas and prices of 47 houses from Portland, Oregon:

| Living area (feet²) | Price (1000$s) |
|---|---|
| 2104 | 400 |
| 1600 | 330 |
| 2400 | 369 |
| 1416 | 232 |
| 3000 | 540 |
| ⋮ | ⋮ |

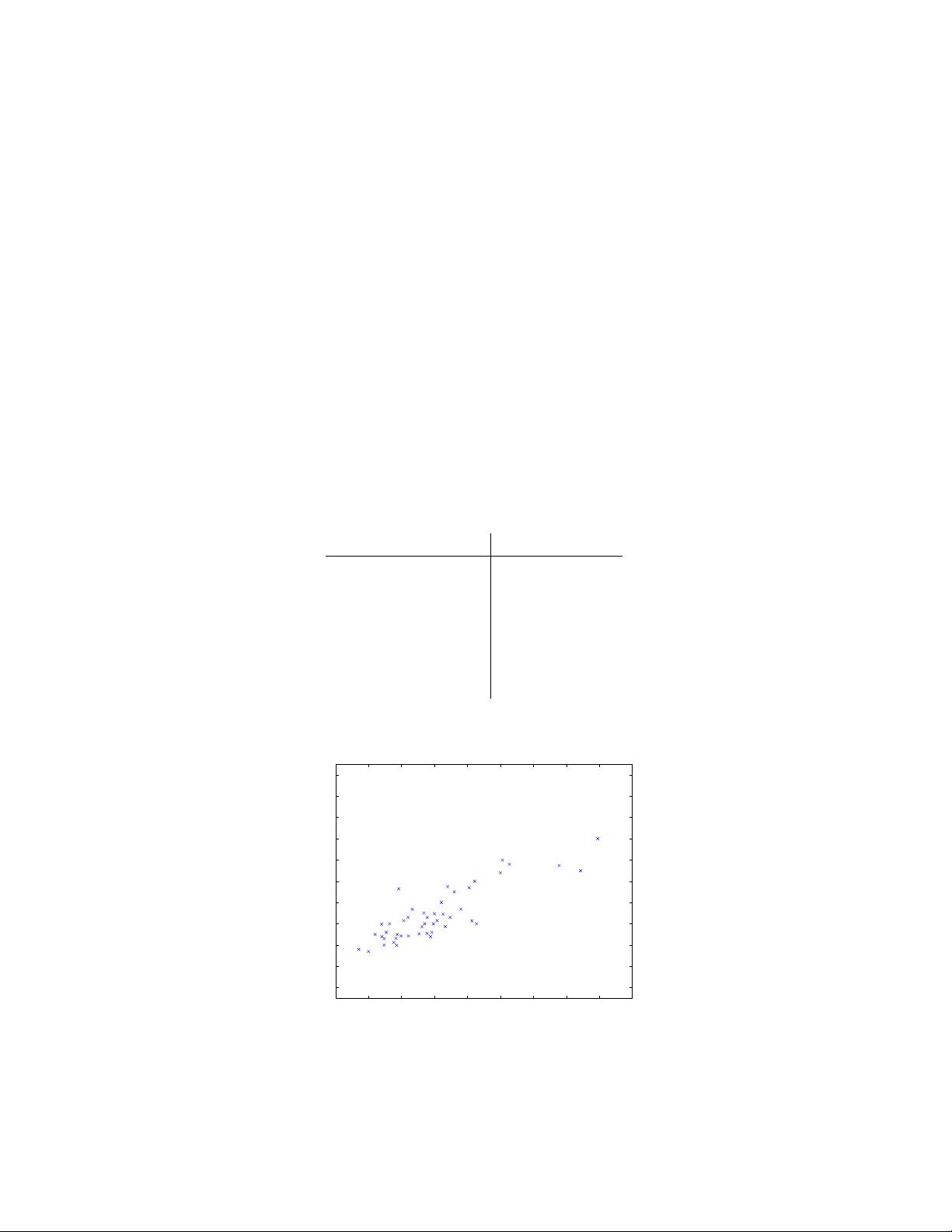

We can plot this data:

[Figure: "housing prices", price (in $1000) plotted against living area (square feet)]

Given data like this, how can we learn to predict the prices of other houses in Portland, as a function of the size of their living areas?


CS229 Winter 2003

To establish notation for future use, we’ll use $x^{(i)}$ to denote the “input” variables (living area in this example), also called input features, and $y^{(i)}$ to denote the “output” or target variable that we are trying to predict (price). A pair $(x^{(i)}, y^{(i)})$ is called a training example, and the dataset that we’ll be using to learn, a list of $m$ training examples $\{(x^{(i)}, y^{(i)}); i = 1, \ldots, m\}$, is called a training set. Note that the superscript “(i)” in the notation is simply an index into the training set, and has nothing to do with exponentiation. We will also use $\mathcal{X}$ to denote the space of input values, and $\mathcal{Y}$ the space of output values. In this example, $\mathcal{X} = \mathcal{Y} = \mathbb{R}$.
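Here is a minimal Python sketch of this notation. It stores a training set as a list of $(x^{(i)}, y^{(i)})$ pairs and uses only the five rows shown in the table above, because the remaining houses of the 47 are elided in the notes. The helper `example` is a hypothetical name. It translates the 1-based superscript $(i)$ into Python's 0-based indexing.

```python
# The five rows of the housing table shown above, as (x^(i), y^(i)) pairs:
# x = living area in square feet, y = price in $1000s.
training_set = [
    (2104, 400),
    (1600, 330),
    (2400, 369),
    (1416, 232),
    (3000, 540),
]

m = len(training_set)  # number of training examples in this (truncated) set


def example(i):
    """Return the i-th training example (x^(i), y^(i)) for 1-based i (hypothetical helper).

    The superscript (i) is just an index into the training set, not an exponent.
    """
    if not 1 <= i <= m:
        raise IndexError(f"i must be in 1..{m}")
    return training_set[i - 1]


x, y = example(1)
print(m, x, y)  # → 5 2104 400
```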

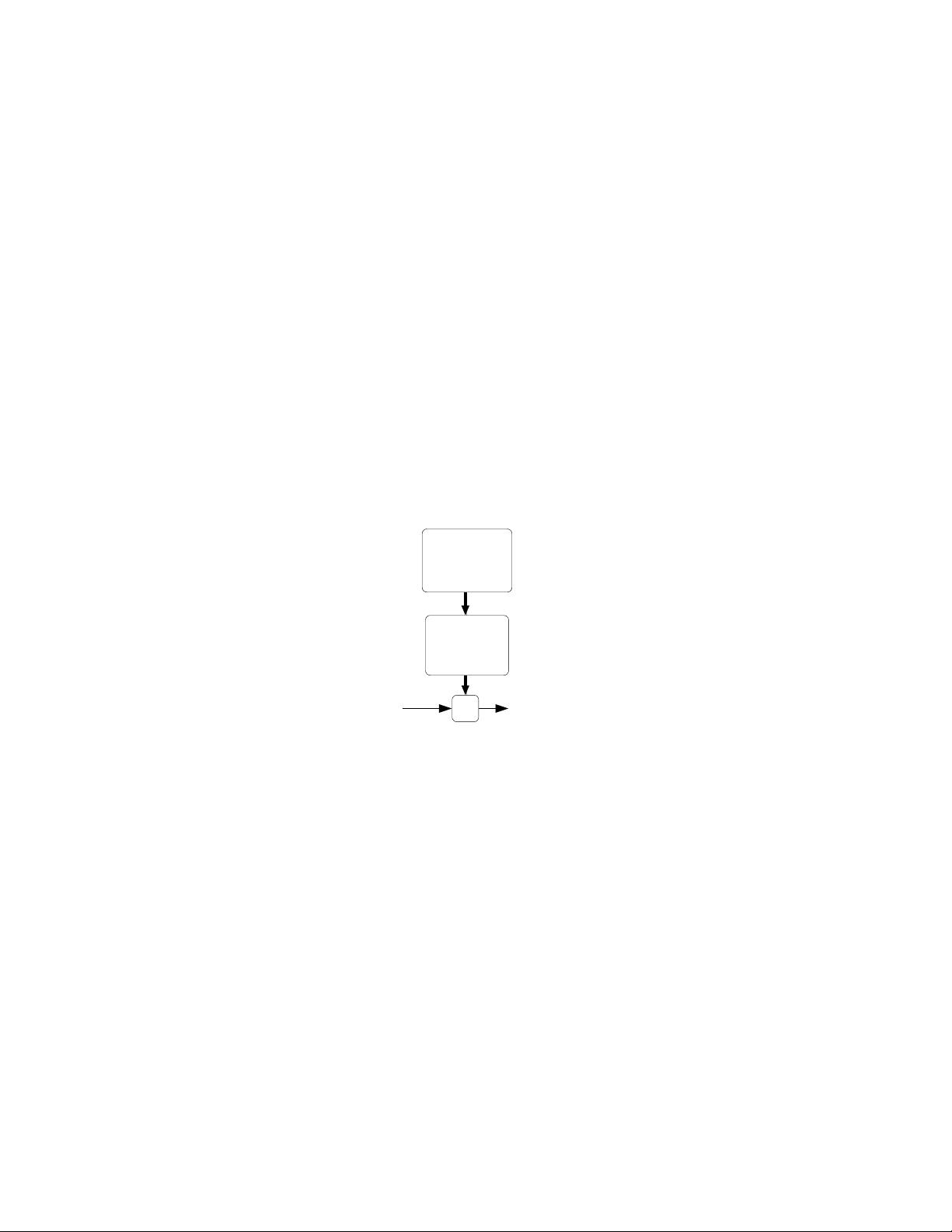

To describe the supervised learning problem slightly more formally, our goal is, given a training set, to learn a function $h : \mathcal{X} \mapsto \mathcal{Y}$ so that $h(x)$ is a “good” predictor for the corresponding value of $y$. For historical reasons, this function $h$ is called a hypothesis. Seen pictorially, the process is therefore like this:

[Figure: training set → learning algorithm → h; x (living area of house) → h → predicted y (predicted price of house)]
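You can read the diagram as a function signature. A learning algorithm takes a training set and returns a hypothesis $h$, and $h$ is itself a function from $x$ to a predicted $y$. The Python sketch below shows that shape. Its learner is a deliberately naive placeholder that multiplies living area by the average price per square foot. That rule is an illustrative assumption and is not a method from these notes, and `naive_learning_algorithm` is a hypothetical name.

```python
from typing import Callable, List, Tuple

TrainingSet = List[Tuple[float, float]]
Hypothesis = Callable[[float], float]


def naive_learning_algorithm(training_set: TrainingSet) -> Hypothesis:
    """Placeholder learner for illustration only (not from the notes).

    It sets h(x) = (average price per square foot over the training set) * x.
    """
    rate = sum(y / x for x, y in training_set) / len(training_set)

    def h(x: float) -> float:
        # The returned hypothesis maps a living area x to a predicted price y.
        return rate * x

    return h


# Living area (ft^2) and price ($1000s) for the five houses listed in the table.
training_set = [(2104, 400), (1600, 330), (2400, 369), (1416, 232), (3000, 540)]
h = naive_learning_algorithm(training_set)
print(h(2000))  # predicted price (in $1000s) for a 2000 ft^2 house
```

The point is only the interface: the learning algorithm's output is a function, and that function is what gets applied to new inputs.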

When the target variable that we’re trying to predict is continuous, such as in our housing example, we call the learning problem a regression problem. When $y$ can take on only a small number of discrete values (such as if, given the living area, we wanted to predict if a dwelling is a house or an apartment, say), we call it a classification problem.


Part I

Linear Regression

To make our housing example more interesting, let’s consider a slightly richer dataset in which we also know the number of bedrooms in each house:

| Living area (feet²) | #bedrooms | Price (1000$s) |
|---|---|---|
| 2104 | 3 | 400 |
| 1600 | 3 | 330 |
| 2400 | 3 | 369 |
| 1416 | 2 | 232 |
| 3000 | 4 | 540 |
| ⋮ | ⋮ | ⋮ |

Here, the $x$’s are two-dimensional vectors in $\mathbb{R}^2$. For instance, $x^{(i)}_1$ is the living area of the $i$-th house in the training set, and $x^{(i)}_2$ is its number of bedrooms. (In general, when designing a learning problem, it will be up to you to decide what features to choose, so if you are out in Portland gathering housing data, you might also decide to include other features such as whether each house has a fireplace, the number of bathrooms, and so on. We’ll say more about feature selection later, but for now let’s take the features as given.)
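The Python sketch below shows the two-dimensional inputs, using only the rows shown in the table above. Each $x^{(i)}$ becomes a pair $(x^{(i)}_1, x^{(i)}_2)$ and each $y^{(i)}$ is a price. The accessor `feature(i, j)` is a hypothetical helper. It keeps the notes' 1-based indices for both the example $i$ and the feature $j$.

```python
# Rows shown in the table above: (living area in ft^2, #bedrooms, price in $1000s).
rows = [
    (2104, 3, 400),
    (1600, 3, 330),
    (2400, 3, 369),
    (1416, 2, 232),
    (3000, 4, 540),
]

# Each input x^(i) is a 2-dimensional vector (x_1^(i), x_2^(i));
# each target y^(i) is the price.
X = [(area, bedrooms) for area, bedrooms, _ in rows]
y = [price for _, _, price in rows]


def feature(i, j):
    """Return x_j^(i): feature j (1 = living area, 2 = #bedrooms) of example i, both 1-based."""
    return X[i - 1][j - 1]


print(feature(4, 1), feature(4, 2))  # → 1416 2
```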

To perform supervised learning, we must decide how we’re going to represent functions/hypotheses $h$ in a computer. As an initial choice, let’s say we decide to approximate $y$ as a linear function of $x$:

$$h_\theta(x) = \theta_0 + \theta_1 x_1 + \theta_2 x_2$$
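Evaluating this hypothesis takes a single line. The Python sketch below assumes $x = (x_1, x_2)$ = (living area, #bedrooms). The values in `theta` are made up for illustration and are not fitted to the data. They were chosen so that the floating-point arithmetic is exact.

```python
def h(theta, x):
    """Linear hypothesis h_theta(x) = theta_0 + theta_1 * x_1 + theta_2 * x_2.

    theta = (theta_0, theta_1, theta_2); x = (x_1, x_2) = (living area, #bedrooms).
    """
    theta_0, theta_1, theta_2 = theta
    x_1, x_2 = x
    return theta_0 + theta_1 * x_1 + theta_2 * x_2


theta = (50.0, 0.125, 20.0)  # hypothetical parameters, not learned from data
print(h(theta, (2104, 3)))  # 50 + 0.125*2104 + 20*3 → 373.0
```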

Here, the $\theta_i$’s are the parameters (also called weights) parameterizing the space of linear functions mapping from $\mathcal{X}$ to $\mathcal{Y}$. When there is no risk of confusion, we will drop the $\theta$ subscript in $h_\theta(x)$, and write it more simply as $h(x)$. To simplify our notation, we also introduce the convention of letting $x_0 = 1$ (this is the intercept term), so that

$$h(x) = \sum_{i=0}^{n} \theta_i x_i = \theta^T x,$$

where on the right-hand side above we are viewing $\theta$ and $x$ both as vectors, and here $n$ is the number of input variables (not counting $x_0$).
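The intercept convention lets the explicit three-term formula collapse into a single sum, or inner product. The Python sketch below assumes vectors are plain lists. It prepends $x_0 = 1$ and computes $\sum_{i=0}^{n} \theta_i x_i$. When $n = 2$ the result agrees with the explicit form $\theta_0 + \theta_1 x_1 + \theta_2 x_2$. The name `h_vec` and the sample `theta` are illustrative assumptions.

```python
def h_vec(theta, x):
    """h(x) = sum_{i=0}^{n} theta_i * x_i = theta^T x, using the convention x_0 = 1.

    theta has n + 1 entries (theta_0, ..., theta_n); x holds the n input
    features (x_1, ..., x_n), and x_0 = 1 is prepended here.
    """
    x_full = [1.0] + list(x)  # x_0 = 1 is the intercept term
    if len(theta) != len(x_full):
        raise ValueError("theta needs exactly one more entry than x")
    return sum(theta_i * x_i for theta_i, x_i in zip(theta, x_full))


theta = (50.0, 0.125, 20.0)  # hypothetical parameters
x = (2104, 3)                # (living area, #bedrooms), so n = 2
explicit = theta[0] + theta[1] * x[0] + theta[2] * x[1]
print(h_vec(theta, x), explicit)  # → 373.0 373.0
```

Because $\theta_0$ now multiplies $x_0 = 1$, the intercept needs no special case. The same function works for any number of features $n$.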

Now, given a training set, how do we pick, or learn, the parameters $\theta$? One reasonable method seems to be to make $h(x)$ close to $y$, at least for the training examples we have. To formalize this, we will define a function that measures, for each value of the $\theta$’s, how close the $h(x^{(i)})$’s are to the corresponding $y^{(i)}$’s. We define the cost function:

$$J(\theta) = \frac{1}{2} \sum_{i=1}^{m} \left(h_\theta(x^{(i)}) - y^{(i)}\right)^2.$$
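The cost function translates directly into code. The Python sketch below assumes each $x^{(i)}$ already includes $x_0 = 1$. It uses the five table rows shown earlier as the training set and evaluates $J$ at an arbitrary, hand-picked `theta`, not a fitted one. The factor $\frac{1}{2}$ scales $J$ but does not change which $\theta$ minimizes it.

```python
def h(theta, x):
    """h_theta(x) = theta^T x, where x already includes x_0 = 1."""
    return sum(t * x_i for t, x_i in zip(theta, x))


def J(theta, X, y):
    """Least-squares cost J(theta) = 1/2 * sum_i (h_theta(x^(i)) - y^(i))^2."""
    return 0.5 * sum((h(theta, x_i) - y_i) ** 2 for x_i, y_i in zip(X, y))


# Housing rows from the table, with x_0 = 1 prepended: (1, living area, #bedrooms).
X = [(1, 2104, 3), (1, 1600, 3), (1, 2400, 3), (1, 1416, 2), (1, 3000, 4)]
y = [400, 330, 369, 232, 540]

theta = (50.0, 0.125, 20.0)  # hypothetical parameters, not fitted
print(J(theta, X, y))  # residuals -27, -20, 41, 35, -35 → 2630.0
```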

If you’ve seen linear regression before, you may recognize this as the familiar least-squares cost function that gives rise to the ordinary least squares regression model. Whether or not you have seen it previously, let’s keep going, and we’ll eventually show this to be a special case of a much broader family of algorithms.

1 LMS algorithm

We want to choose $\theta$ so as to minimize $J(\theta)$. To do so, let’s use a search algorithm that starts with some “initial guess” for $\theta$, and that repeatedly changes $\theta$ to make $J(\theta)$ smaller, until hopefully we converge to a value of $\theta$ that minimizes $J(\theta)$. Specifically, let’s consider the gradient descent algorithm, which starts with some initial $\theta$, and repeatedly performs the update:

$$\theta_j := \theta_j - \alpha \frac{\partial}{\partial \theta_j} J(\theta).$$

(This update is simultaneously performed for all values of $j = 0, \ldots, n$.)

Here, $\alpha$ is called the learning rate. This is a very natural algorithm that repeatedly takes a step in the direction of steepest decrease of $J$.
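Here is a generic Python sketch of this update loop. It assumes the caller supplies a function `grad` that returns all partial derivatives $\partial J / \partial \theta_j$; the derivative for the least-squares $J$ is worked out next. To keep the example self-contained, it runs on a toy cost $J(\theta) = (\theta_0 - 3)^2 + (\theta_1 + 1)^2$. That cost is a stand-in chosen for illustration, not the housing cost. The update is simultaneous because the code computes the whole gradient before changing any $\theta_j$.

```python
def gradient_descent(grad, theta, alpha, num_iters):
    """Repeat theta_j := theta_j - alpha * dJ/dtheta_j, for all j simultaneously.

    grad(theta) must return the list of partial derivatives dJ/dtheta_j.
    """
    theta = list(theta)
    for _ in range(num_iters):
        g = grad(theta)  # evaluate every partial derivative at the current theta first
        theta = [t_j - alpha * g_j for t_j, g_j in zip(theta, g)]  # then update all j
    return theta


def toy_grad(theta):
    """Gradient of the toy cost (theta_0 - 3)^2 + (theta_1 + 1)^2, minimized at (3, -1)."""
    return [2 * (theta[0] - 3), 2 * (theta[1] + 1)]


result = gradient_descent(toy_grad, theta=[0.0, 0.0], alpha=0.1, num_iters=200)
print(result)
```

For this toy cost, each step with $\alpha = 0.1$ shrinks the distance from each $\theta_j$ to its minimizing value by a factor of $0.8$. After 200 iterations, the result is therefore numerically indistinguishable from $(3, -1)$.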

In order to implement this algorithm, we have to work out the partial derivative term on the right-hand side. Let’s first work it out for the case where we have only one training example $(x, y)$, so that we can neglect the sum in the definition of $J$. We have:

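$$
\begin{aligned}
\frac{\partial}{\partial \theta_j} J(\theta) &= \frac{\partial}{\partial \theta_j} \frac{1}{2} \left(h_\theta(x) - y\right)^2 \\
&= 2 \cdot \frac{1}{2} \left(h_\theta(x) - y\right) \cdot \frac{\partial}{\partial \theta_j} \left(h_\theta(x) - y\right) \\
&= \left(h_\theta(x) - y\right) \cdot \frac{\partial}{\partial \theta_j} \left(\sum_{i=0}^{n} \theta_i x_i - y\right) \\
&= \left(h_\theta(x) - y\right) x_j
\end{aligned}
$$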
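The Python sketch below runs two quick checks on this result. First, it compares the analytic partial derivatives $(h_\theta(x) - y)\,x_j$ with a central finite-difference approximation of $J$, a standard numerical check that is not part of the notes. Second, it substitutes the result into the gradient descent update, which gives $\theta_j := \theta_j - \alpha\,(h_\theta(x) - y)\,x_j$ for a single training example. The values of $x$, $y$, $\theta$ and $\alpha$ are arbitrary, and the function names are hypothetical.

```python
def h(theta, x):
    """h_theta(x) = sum_i theta_i * x_i, where x[0] = 1 is the intercept term."""
    return sum(t * x_i for t, x_i in zip(theta, x))


def J_single(theta, x, y):
    """Cost for one training example: J(theta) = 1/2 * (h_theta(x) - y)^2."""
    return 0.5 * (h(theta, x) - y) ** 2


def grad_single(theta, x, y):
    """Partial derivatives from the derivation: dJ/dtheta_j = (h_theta(x) - y) * x_j."""
    err = h(theta, x) - y
    return [err * x_j for x_j in x]


def numerical_grad(f, theta, eps=1e-6):
    """Central-difference estimate of each partial derivative of f at theta."""
    grads = []
    for j in range(len(theta)):
        plus = list(theta)
        minus = list(theta)
        plus[j] += eps
        minus[j] -= eps
        grads.append((f(plus) - f(minus)) / (2 * eps))
    return grads


def lms_step(theta, x, y, alpha):
    """One update on a single example: theta_j := theta_j - alpha * (h_theta(x) - y) * x_j."""
    err = h(theta, x) - y
    return [t_j - alpha * err * x_j for t_j, x_j in zip(theta, x)]


# Arbitrary single example with x_0 = 1, and arbitrary starting parameters.
x, y = (1.0, 2.0, 3.0), 10.0
theta = [0.5, -1.0, 2.0]

analytic = grad_single(theta, x, y)
numeric = numerical_grad(lambda t: J_single(t, x, y), theta)
print(analytic)  # h = 4.5, so err = -5.5 → [-5.5, -11.0, -16.5]
print(max(abs(a - b) for a, b in zip(analytic, numeric)))  # tiny: J is quadratic in theta

new_theta = lms_step(theta, x, y, alpha=0.01)
print(J_single(theta, x, y), J_single(new_theta, x, y))  # cost drops after one step
```

The update moves each $\theta_j$ against the sign of its partial derivative. With a small enough $\alpha$, a single step reduces the cost on that example.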
