http://bradhedlund.com/?p=3108

This article is Part 1 in a series that will take a closer look at the architecture and

methods of a Hadoop cluster, and how it relates to the network and server

infrastructure. The content presented here is largely based on academic work

and conversations I've had with customers running real production clusters. If you

run production Hadoop clusters in your data center, I'm hoping you'll provide your

valuable insight in the comments below. Subsequent articles to this will cover the

server and network architecture options in closer detail. Before we do that though,

let's start by learning some of the basics about how a Hadoop cluster works. OK, let's

get started!


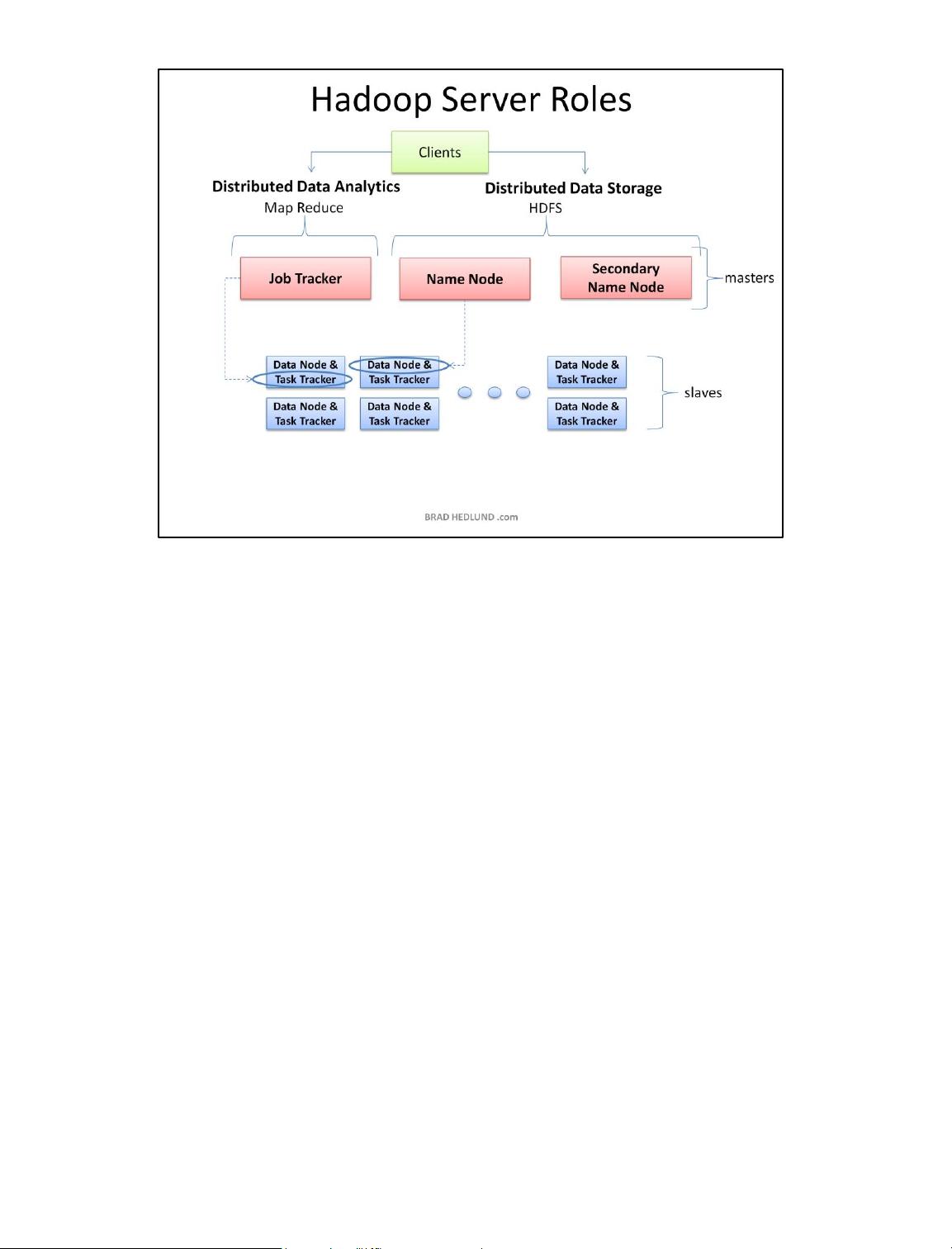

The three major categories of machine roles in a Hadoop deployment are Client machines,

Master nodes, and Slave nodes. The Master nodes oversee the two key functional pieces

that make up Hadoop: storing lots of data (HDFS), and running parallel computations on all

that data (Map Reduce). The Name Node oversees and coordinates the data storage

function (HDFS), while the Job Tracker oversees and coordinates the parallel processing of

data using Map Reduce. Slave Nodes make up the vast majority of machines and do all the

dirty work of storing the data and running the computations. Each slave runs both a Data

Node and Task Tracker daemon that communicate with and receive instructions from their

master nodes. The Task Tracker daemon is a slave to the Job Tracker, the Data Node

daemon a slave to the Name Node.

Client machines have Hadoop installed with all the cluster settings, but are neither a

Master nor a Slave. Instead, the role of the Client machine is to load data into the cluster,

submit Map Reduce jobs describing how that data should be processed, and then retrieve

or view the results of the job when it's finished. In smaller clusters (~40 nodes) you may

have a single physical server playing multiple roles, such as both Job Tracker and Name

Node. With medium to large clusters you will often have each role operating on its own

server machine.

In real production clusters there is no server virtualization, no hypervisor layer. That would

only amount to unnecessary overhead impeding performance. Hadoop runs best on Linux

machines, working directly with the underlying hardware. That said, Hadoop does work in

a virtual machine. That's a great way to learn and get Hadoop up and running fast and

cheap. I have a 6-node cluster up and running in VMware Workstation on my Windows 7

laptop.


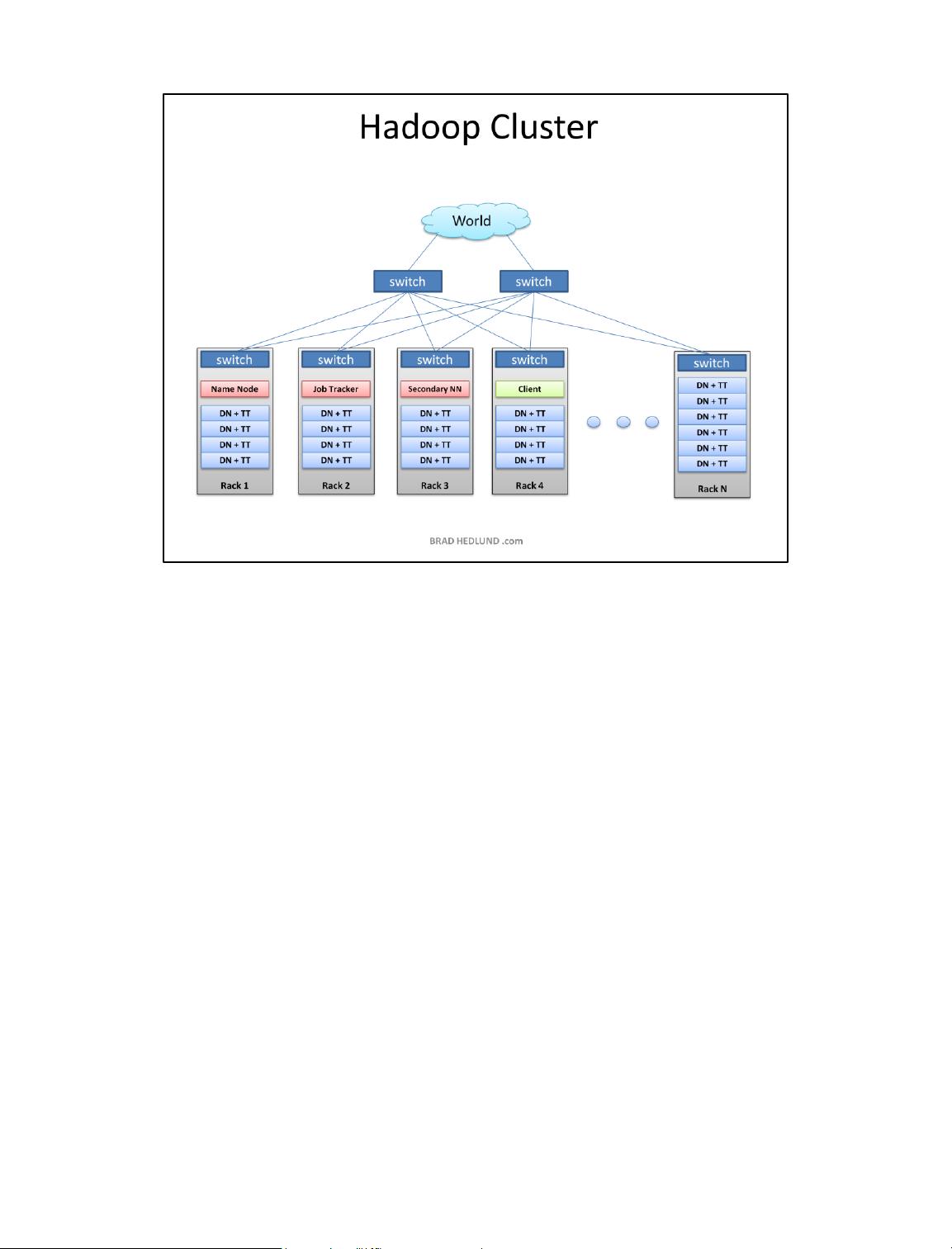

This is the typical architecture of a Hadoop cluster. You will have rack servers (not blades)

populated in racks connected to a top of rack switch usually with 1 or 2 GE bonded

links. 10GE nodes are uncommon but gaining interest as machines continue to get more

dense with CPU cores and disk drives. The rack switch has uplinks connected to another

tier of switches connecting all the other racks with uniform bandwidth, forming the

cluster. The majority of the servers will be Slave nodes with lots of local disk storage and

moderate amounts of CPU and DRAM. Some of the machines will be Master nodes that

might have a slightly different configuration favoring more DRAM and CPU, less local

storage.

In this post, we are not going to discuss various detailed network design options. Let's save

that for another discussion (stay tuned). First, let's understand how this application works...


Why did Hadoop come to exist? What problem does it solve? Simply put, businesses and

governments have a tremendous amount of data that needs to be analyzed and processed

very quickly. If I can chop that huge chunk of data into small chunks and spread it out over

many machines, and have all those machines process their portion of the data in parallel

-- I can get answers extremely fast -- and that, in a nutshell, is what Hadoop does.

In our simple example, we'll have a huge data file containing emails sent to the customer

service department. I want a quick snapshot to see how many times the word "Refund"

was typed by my customers. This might help me to anticipate the demand on our returns

and exchanges department, and staff it appropriately. It's a simple word count

exercise. The Client will load the data into the cluster (File.txt), submit a job describing

how to analyze that data (word count), the cluster will store the results in a new file

(Results.txt), and the Client will read the results file.
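
The word count idea can be sketched in a few lines of plain Python. This is only an illustration of the map and reduce steps, not Hadoop's actual API: the function names `map_count` and `reduce_counts` and the three sample emails are made up for this example, and each list entry stands in for one chunk of File.txt.

```python
import string


def map_count(chunk: str, word: str) -> int:
    """Map step: count case-insensitive occurrences of `word` in one chunk."""
    target = word.lower()
    # Strip surrounding punctuation so "Refund!" and "refund." both match.
    tokens = (t.strip(string.punctuation).lower() for t in chunk.split())
    return sum(1 for t in tokens if t == target)


def reduce_counts(partial_counts: list[int]) -> int:
    """Reduce step: combine the per-chunk counts into one total."""
    return sum(partial_counts)


# Hypothetical sample data: each string stands in for one chunk of File.txt.
chunks = [
    "I would like a refund for my order.",
    "Refund please! The item arrived broken.",
    "Thanks for the quick shipping.",
]

# In a real cluster each map call would run on a different machine in parallel.
partial_counts = [map_count(chunk, "Refund") for chunk in chunks]
total = reduce_counts(partial_counts)
print(partial_counts, total)
```

Here the map calls run one after another in a single process. The point of the cluster is that each one can run on the machine that already holds its chunk, with only the small per-chunk counts being combined at the end.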


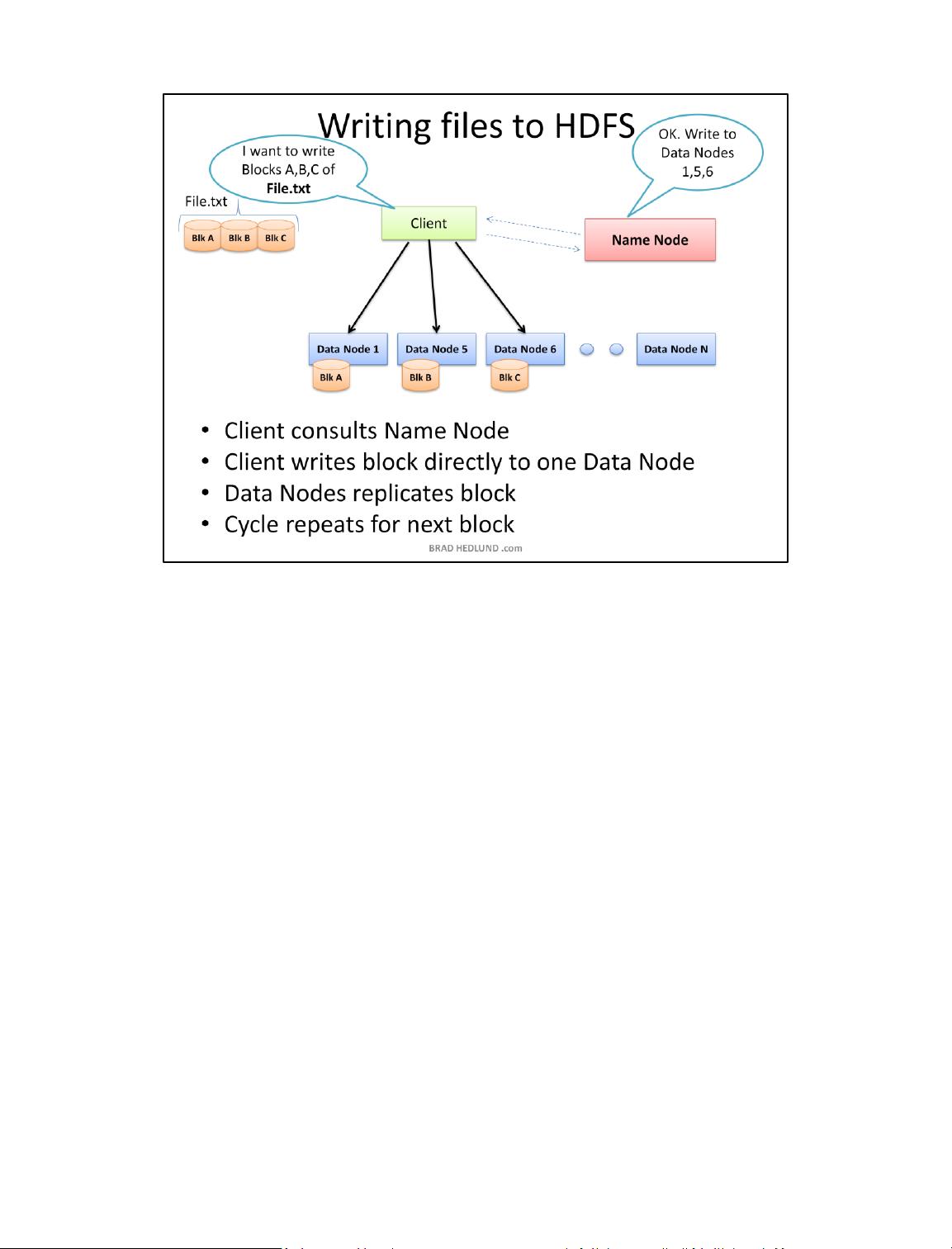

Your Hadoop cluster is useless until it has data, so we'll begin by loading our huge File.txt

into the cluster for processing. The goal here is fast parallel processing of lots of data. To

accomplish that I need as many machines as possible working on this data all at once. To

that end, the Client is going to break the data file into smaller "Blocks", and place those

blocks on different machines throughout the cluster. The more blocks I have, the more

machines will be able to work on this data in parallel. At the same time, these

machines may be prone to failure, so I want to ensure that every block of data is on multiple

machines at once to avoid data loss. So each block will be replicated in the cluster as it's

loaded. The standard setting for Hadoop is to have (3) copies of each block in the

cluster. This can be configured with the dfs.replication parameter in the file hdfs-site.xml.
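
To make the arithmetic concrete, here is a rough Python sketch of how a file turns into blocks and replicas. The replication factor of 3 comes from the text above; the 64 MB block size and the 150 MB file size are assumptions chosen only so that the file splits into 3 blocks, and `split_into_blocks` is a hypothetical helper, not a Hadoop function.

```python
MB = 1024 * 1024

REPLICATION = 3       # mirrors the dfs.replication setting described above
BLOCK_SIZE = 64 * MB  # assumption for illustration; not stated in this article
FILE_SIZE = 150 * MB  # assumption: a size that happens to yield 3 blocks


def split_into_blocks(file_size: int, block_size: int) -> list[int]:
    """Return the size in bytes of each block a file is cut into."""
    if file_size < 0 or block_size <= 0:
        raise ValueError("file_size must be >= 0 and block_size must be > 0")
    full_blocks, remainder = divmod(file_size, block_size)
    # The last block only holds whatever is left over.
    return [block_size] * full_blocks + ([remainder] if remainder else [])


block_sizes = split_into_blocks(FILE_SIZE, BLOCK_SIZE)
replica_count = len(block_sizes) * REPLICATION
raw_storage = sum(block_sizes) * REPLICATION

print(f"{len(block_sizes)} blocks, {replica_count} replicas, "
      f"{raw_storage // MB} MB of raw disk for a {FILE_SIZE // MB} MB file")
```

The takeaway is that raw disk consumption grows with the replication factor: under these assumptions every byte loaded into the cluster occupies three bytes of disk spread across different machines.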

The Client breaks File.txt into (3) Blocks. For each block, the Client consults the Name

Node (usually TCP 9000) and receives a list of (3) Data Nodes that should have a copy of

this block. The Client then writes the block directly to the Data Node (usually TCP

50010). The receiving Data Node replicates the block to other Data Nodes, and the cycle

repeats for the remaining blocks. The Name Node is not in the data path. The Name Node

only provides the map of where data is and where data should go in the cluster (file system

metadata).
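
The write flow described above can be modelled with a small Python simulation. Everything here is a simplified sketch: the `NameNode` and `DataNode` classes, the round-robin placement, and the block names are hypothetical and stand in for the real daemons, whose placement policy and network protocol are not covered in this section. What the sketch does preserve is the division of labour: the Name Node only hands out a list of targets, while the block data travels from the Client to the first Data Node and is then forwarded from Data Node to Data Node.

```python
class DataNode:
    """Stores block data and forwards it to the remaining targets."""

    def __init__(self, name: str):
        self.name = name
        self.blocks: dict[str, bytes] = {}

    def receive(self, block_id: str, data: bytes, remaining: list["DataNode"]) -> None:
        self.blocks[block_id] = data
        if remaining:
            # Replicate onward; the Client is no longer involved at this point.
            remaining[0].receive(block_id, data, remaining[1:])


class NameNode:
    """Holds metadata only: which Data Nodes should store which block."""

    def __init__(self, data_nodes: list[DataNode], replication: int = 3):
        if replication > len(data_nodes):
            raise ValueError("not enough Data Nodes for the replication factor")
        self.data_nodes = data_nodes
        self.replication = replication
        self.block_map: dict[str, list[DataNode]] = {}
        self._cursor = 0

    def allocate(self, block_id: str) -> list[DataNode]:
        # Hypothetical round-robin placement, used here only for illustration.
        n = len(self.data_nodes)
        targets = [self.data_nodes[(self._cursor + i) % n]
                   for i in range(self.replication)]
        self._cursor = (self._cursor + 1) % n
        self.block_map[block_id] = targets
        return targets


def client_write(name_node: NameNode, blocks: dict[str, bytes]) -> None:
    """Client side: ask for targets per block, then write to the first target."""
    for block_id, data in blocks.items():
        targets = name_node.allocate(block_id)         # metadata request only
        targets[0].receive(block_id, data, targets[1:])  # data bypasses the Name Node


nodes = [DataNode(f"dn{i}") for i in range(5)]
name_node = NameNode(nodes, replication=3)
file_blocks = {"blk_A": b"first part", "blk_B": b"second part", "blk_C": b"third part"}
client_write(name_node, file_blocks)

for block_id, targets in name_node.block_map.items():
    print(block_id, "->", [dn.name for dn in targets])
```

Note that `NameNode` never touches the `data` bytes. It only records the block-to-node mapping, which is the sense in which the Name Node is not in the data path.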
