
Sparse Subspace Clustering: Algorithm, Theory, and Applications

Ehsan Elhamifar, Student Member, IEEE, and René Vidal, Senior Member, IEEE

Abstract—Many real-world problems deal with collections of high-dimensional data, such as images, videos, text and web documents, DNA

microarray data, and more. Often, such high-dimensional data lie close to low-dimensional structures corresponding to several classes or

categories to which the data belong. In this paper, we propose and study an algorithm, called Sparse Subspace Clustering (SSC), to cluster

data points that lie in a union of low-dimensional subspaces. The key idea is that, among the infinitely many possible representations of a data

point in terms of other points, a sparse representation corresponds to selecting a few points from the same subspace. This motivates solving a

sparse optimization program whose solution is used in a spectral clustering framework to infer the clustering of the data into subspaces. Since

solving the sparse optimization program is in general NP-hard, we consider a convex relaxation and show that, under appropriate conditions

on the arrangement of the subspaces and the distribution of the data, the proposed minimization program succeeds in recovering the desired

sparse representations. The proposed algorithm is efficient and can handle data points near the intersections of subspaces. Another key

advantage of the proposed algorithm with respect to the state of the art is that it can deal directly with data nuisances, such as noise,

sparse outlying entries, and missing entries, by incorporating the model of the data into the sparse optimization program. We demonstrate the

effectiveness of the proposed algorithm through experiments on synthetic data as well as the two real-world problems of motion segmentation

and face clustering.

Index Terms—High-dimensional data, intrinsic low-dimensionality, subspaces, clustering, sparse representation, $\ell_1$-minimization, convex

programming, spectral clustering, principal angles, motion segmentation, face clustering.


1 INTRODUCTION

High-dimensional data are ubiquitous in many areas of

machine learning, signal and image processing, computer

vision, pattern recognition, bioinformatics, etc. For instance,

images consist of billions of pixels, videos can have millions of

frames, text and web documents are associated with hundreds of

thousands of features, etc. The high-dimensionality of the data not

only increases the computational time and memory requirements

of algorithms, but also adversely affects their performance due to

the effects of noise and an insufficient number of samples with respect

to the ambient space dimension, commonly referred to as the

“curse of dimensionality” [1]. However, high-dimensional data

often lie in low-dimensional structures instead of being uniformly

distributed across the ambient space. Recovering low-dimensional

structures in the data helps to not only reduce the computational

cost and memory requirements of algorithms, but also reduce

the effect of high-dimensional noise in the data and improve the

performance of inference, learning, and recognition tasks.

In fact, in many problems, data in a class or category can

be well represented by a low-dimensional subspace of the high-dimensional

ambient space. For example, feature trajectories of

a rigidly moving object in a video [2], face images of a subject

under varying illumination [3], and multiple instances of a handwritten

digit with different rotations, translations, and thicknesses

[4] lie in a low-dimensional subspace of the ambient space. As a

result, the collection of data from multiple classes or categories

lie in a union of low-dimensional subspaces. Subspace clustering

• E. Elhamifar is with the Department of Electrical Engineering and

Computer Science, University of California, Berkeley, USA. E-mail:

ehsan@eecs.berkeley.edu.

• R. Vidal is with the Center for Imaging Science and the Department of

Biomedical Engineering, The Johns Hopkins University, USA. E-mail: rvi-

dal@cis.jhu.edu.

(see [5] and references therein) refers to the problem of separating

data according to their underlying subspaces and finds numerous

applications in image processing (e.g., image representation and

compression [6]) and computer vision (e.g., image segmentation

[7], motion segmentation [8], [9], and temporal video segmen-

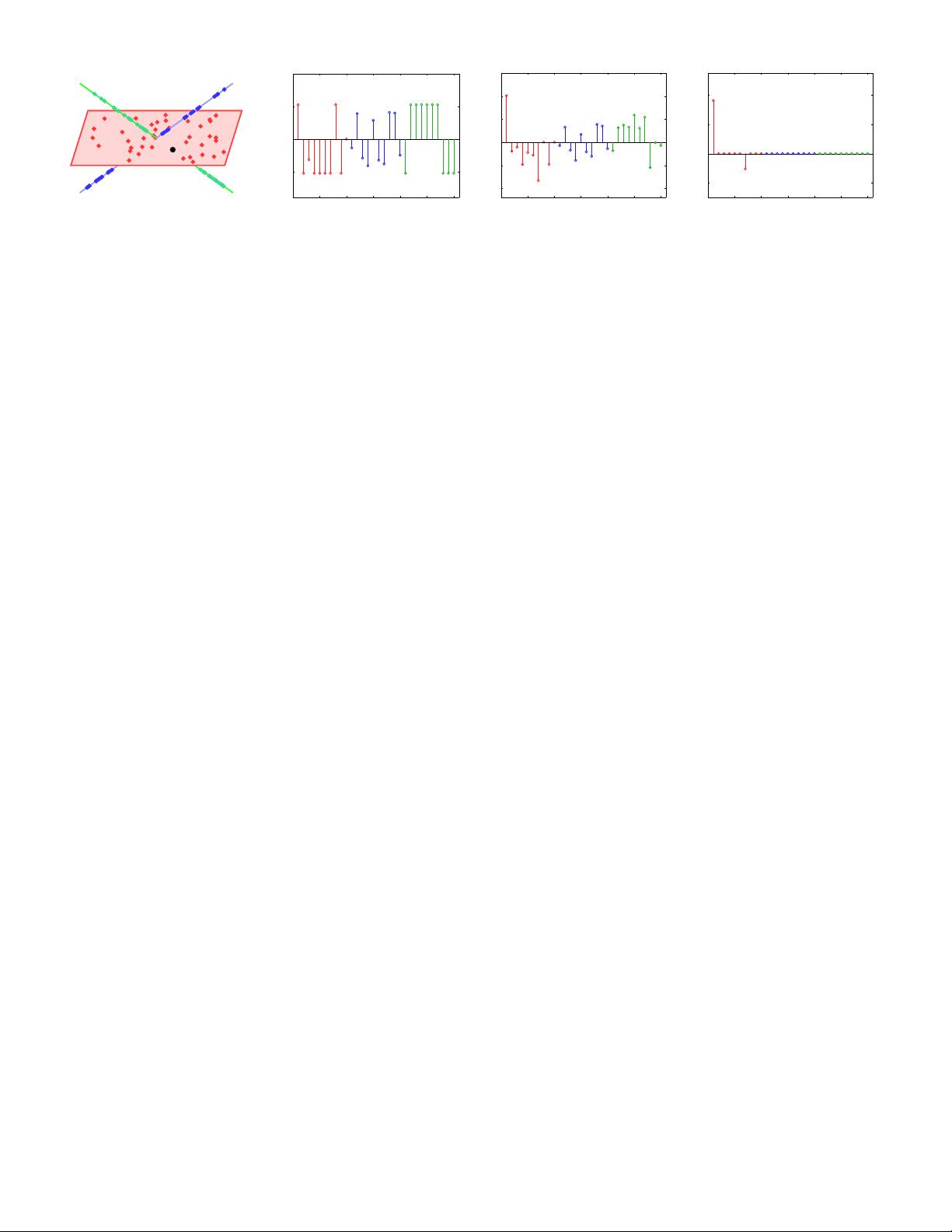

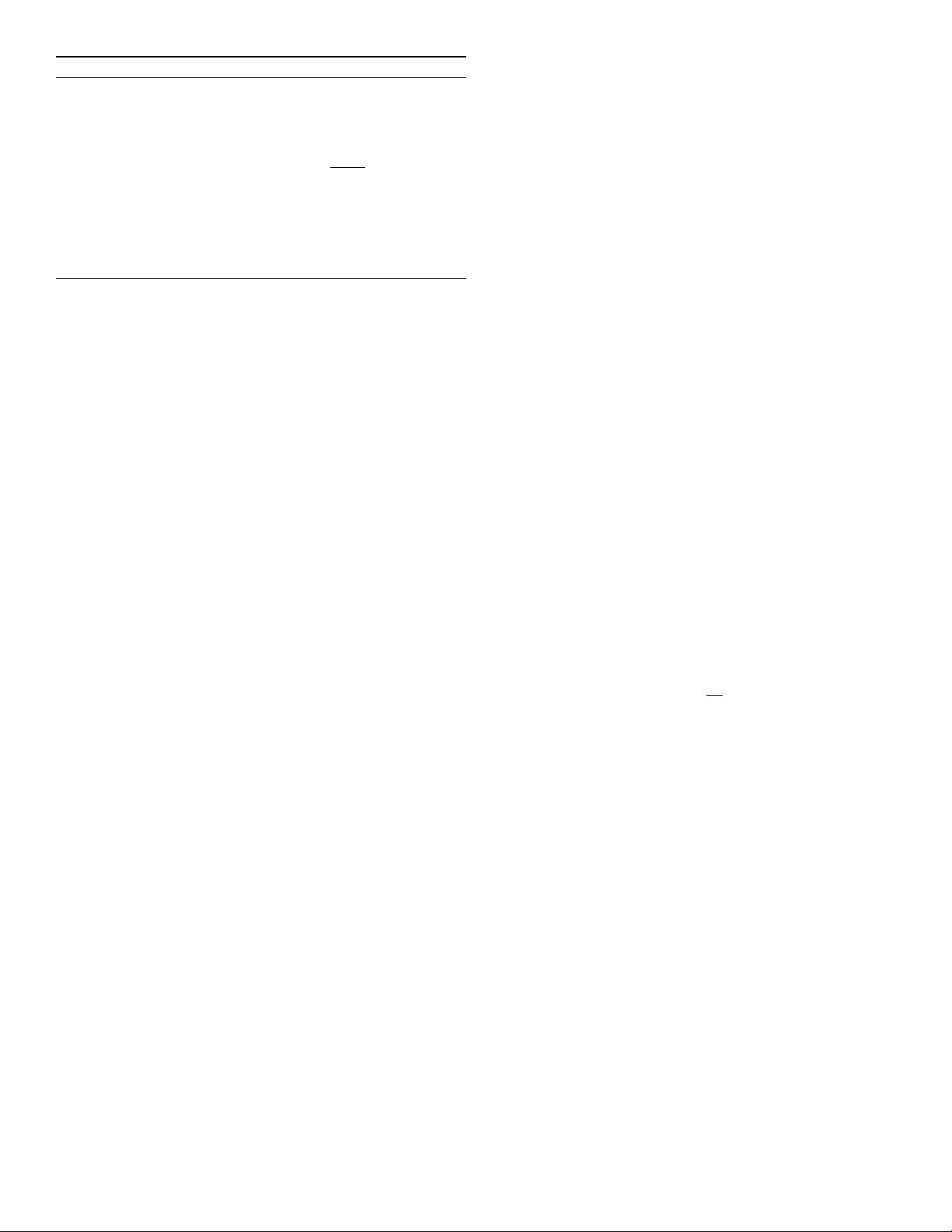

tation [10]), as illustrated in Figures 1 and 2. Since data in

a subspace are often distributed arbitrarily and not around a

centroid, standard clustering methods [11] that take advantage

of the spatial proximity of the data in each cluster are not in

general applicable to subspace clustering. Therefore, there is a

need for clustering algorithms that take into account the

multi-subspace structure of the data.
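
As a minimal illustration of this point (a sketch under assumed settings, not an experiment from the paper), the Python snippet below samples points from two hypothetical one-dimensional subspaces of the plane. The directions `d1` and `d2`, the sample size, and the Gaussian coefficients are all assumptions made for illustration. Both class centroids end up close to the origin, so assigning points to the nearest centroid carries little information, whereas assigning each point to the subspace with the smallest residual separates the two groups.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 500  # points per subspace (assumed value)

# Two hypothetical lines through the origin in R^2, 60 degrees apart.
d1 = np.array([1.0, 0.0])
d2 = np.array([np.cos(np.pi / 3), np.sin(np.pi / 3)])

# Points are random multiples of each direction, so they spread along
# the line instead of concentrating around a centroid.
X1 = np.outer(rng.standard_normal(n), d1)
X2 = np.outer(rng.standard_normal(n), d2)
X = np.vstack([X1, X2])
truth = np.repeat([0, 1], n)

# Centroid-based view: even the true class centroids nearly coincide.
centroids = np.stack([X1.mean(axis=0), X2.mean(axis=0)])
centroid_gap = np.linalg.norm(centroids[0] - centroids[1])
dist_c = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
acc_centroid = np.mean(dist_c.argmin(axis=1) == truth)


def dist_to_line(points, direction):
    """Distance from each row of `points` to the line spanned by `direction`."""
    projection = np.outer(points @ direction, direction)
    return np.linalg.norm(points - projection, axis=1)


# Subspace-based view: assign each point to the line with the smallest residual.
dist_s = np.stack([dist_to_line(X, d1), dist_to_line(X, d2)], axis=1)
acc_subspace = np.mean(dist_s.argmin(axis=1) == truth)

print(f"gap between centroids:       {centroid_gap:.3f}")
print(f"nearest-centroid accuracy:   {acc_centroid:.3f}")
print(f"nearest-subspace accuracy:   {acc_subspace:.3f}")
```

The subspace-based assignment here uses the true directions, which a clustering algorithm would not know in advance. The comparison only indicates which notion of distance matches the structure of the data.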

1.1 Prior Work on Subspace Clustering

Existing algorithms can be divided into four main categories: iterative,

algebraic, statistical, and spectral clustering-based methods.

Iterative methods. Iterative approaches, such as K-subspaces

[12], [13] and median K-flats [14], alternate between assigning

points to subspaces and fitting a subspace to each cluster. The

main drawbacks of such approaches are that they generally require

knowledge of the number and dimensions of the subspaces, and that

they are sensitive to initialization.
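
The alternation described above can be sketched in Python as follows. This is a simplified illustration rather than the exact procedure of [12], [13], or [14]: it assumes linear subspaces through the origin with a common, known dimension, uses a random initial labeling, and fits each subspace with a truncated SVD. The function name `k_subspaces` and its parameters are hypothetical.

```python
import numpy as np


def k_subspaces(X, n_subspaces, dim, n_iter=50, seed=0):
    """Simplified K-subspaces sketch (illustrative, not a reference implementation).

    X           : (D, N) array whose columns are data points.
    n_subspaces : assumed number of subspaces (must be supplied by the user).
    dim         : assumed common subspace dimension, with dim <= D.
    Returns the labels (N,) and a list of (D, dim) orthonormal bases.
    """
    rng = np.random.default_rng(seed)
    D, N = X.shape
    labels = rng.integers(n_subspaces, size=N)  # random initialization
    bases = [None] * n_subspaces

    for _ in range(n_iter):
        # Fitting step: best dim-dimensional subspace for each cluster.
        for k in range(n_subspaces):
            Xk = X[:, labels == k]
            if Xk.shape[1] < dim:
                # Too few points to fit; fall back to a random basis.
                Q, _ = np.linalg.qr(rng.standard_normal((D, dim)))
                bases[k] = Q
            else:
                U, _, _ = np.linalg.svd(Xk, full_matrices=False)
                bases[k] = U[:, :dim]

        # Assignment step: each point goes to the subspace with the
        # smallest projection residual.
        residuals = np.stack(
            [np.linalg.norm(X - B @ (B.T @ X), axis=0) for B in bases]
        )
        new_labels = residuals.argmin(axis=0)
        if np.array_equal(new_labels, labels):
            break  # labeling no longer changes
        labels = new_labels

    return labels, bases
```

Both drawbacks mentioned above are visible in the signature: `n_subspaces` and `dim` must be given, and the outcome depends on `seed`. One possible mitigation is to run the procedure from several seeds and keep the labeling with the smallest total residual.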

Algebraic approaches. Factorization-based algebraic approaches

such as [8], [9], [15] find an initial segmentation by thresholding

the entries of a similarity matrix built from the factorization

of the data matrix. These methods are provably correct when

the subspaces are independent, but fail when this assumption

is violated. In addition, they are sensitive to noise and outliers

in the data. Algebraic-geometric approaches such as Generalized

Principal Component Analysis (GPCA) [10], [16] fit the data

with a polynomial whose gradient at a point gives the normal

vector to the subspace containing that point. While GPCA can

arXiv:1203.1005v3 [cs.CV] 5 Feb 2013