Fine-tuning Graph Neural Networks by Preserving Graph Generative Patterns

Yifei Sun¹, Qi Zhu², Yang Yang¹*, Chunping Wang³, Tianyu Fan¹, Jiajun Zhu¹, Lei Chen³

¹ Zhejiang University, Hangzhou, China

² University of Illinois Urbana-Champaign, USA

³ FinVolution Group, Shanghai, China

{yifeisun, yangya, fantianyu, junnian}@zju.edu.cn, qiz3@illinois.edu, {wangchunping02, chenlei04}@xinye.com

Abstract

Recently, the paradigm of pre-training and fine-tuning graph neural networks has been intensively studied and applied in a wide range of graph mining tasks. Its success is generally attributed to the structural consistency between pre-training and downstream datasets, which, however, does not hold in many real-world scenarios. Existing works have shown that the structural divergence between pre-training and downstream graphs significantly limits the transferability when using the vanilla fine-tuning strategy. This divergence leads to model overfitting on pre-training graphs and causes difficulties in capturing the structural properties of the downstream graphs. In this paper, we identify the fundamental cause of structural divergence as the discrepancy of generative patterns between the pre-training and downstream graphs. Furthermore, we propose G-TUNING to preserve the generative patterns of downstream graphs. Given a downstream graph $G$, the core idea is to tune the pre-trained GNN so that it can reconstruct the generative patterns of $G$, the graphon $W$. However, the exact reconstruction of a graphon is known to be computationally expensive. To overcome this challenge, we provide a theoretical analysis that establishes the existence of a set of alternative graphons called graphon bases for any given graphon. By utilizing a linear combination of these graphon bases, we can efficiently approximate $W$. This theoretical finding forms the basis of our proposed model, as it enables effective learning of the graphon bases and their associated coefficients. Compared with existing algorithms, G-TUNING demonstrates an average improvement of 0.5% and 2.6% on in-domain and out-of-domain transfer learning experiments, respectively.

1 Introduction

The development of graph neural networks (GNNs) has revolutionized many tasks in various domains in recent years. However, labeled data is extremely scarce due to the time-consuming and laborious labeling process. To address this obstacle, the “pre-train and fine-tune” paradigm has made substantial progress (Xia et al. 2022; Li, Zhao, and Zeng 2022; Jiao et al. 2023) and attracted considerable research interest. Specifically, this paradigm involves pre-training a model on a large-scale graph dataset, followed by fine-tuning its parameters on downstream graphs for specific tasks.

*

Corresponding author.

Copyright © 2024, Association for the Advancement of Artificial

The success of “pre-train and fine-tune” paradigm is gen-

erally attributed to the structural consistency between pre-

training and downstream graphs (Hu et al. 2020b,a; Qiu et al.

2020; Sun et al. 2021; Jiarong et al. 2023). However, in real-

world scenarios, structural patterns vary dramatically across

different graphs, and patterns in the downstream graphs may

not be readily available in the pre-training graph. In the con-

text of molecular graphs, a well-known out-of-distribution

problem arises when the training and testing graphs originate

from different environments, characterized by variations in

size or scaffold. As a consequence, the downstream molecular

graph data often encompasses numerous novel substructures

that has not been encountered during training. Hence, struc-

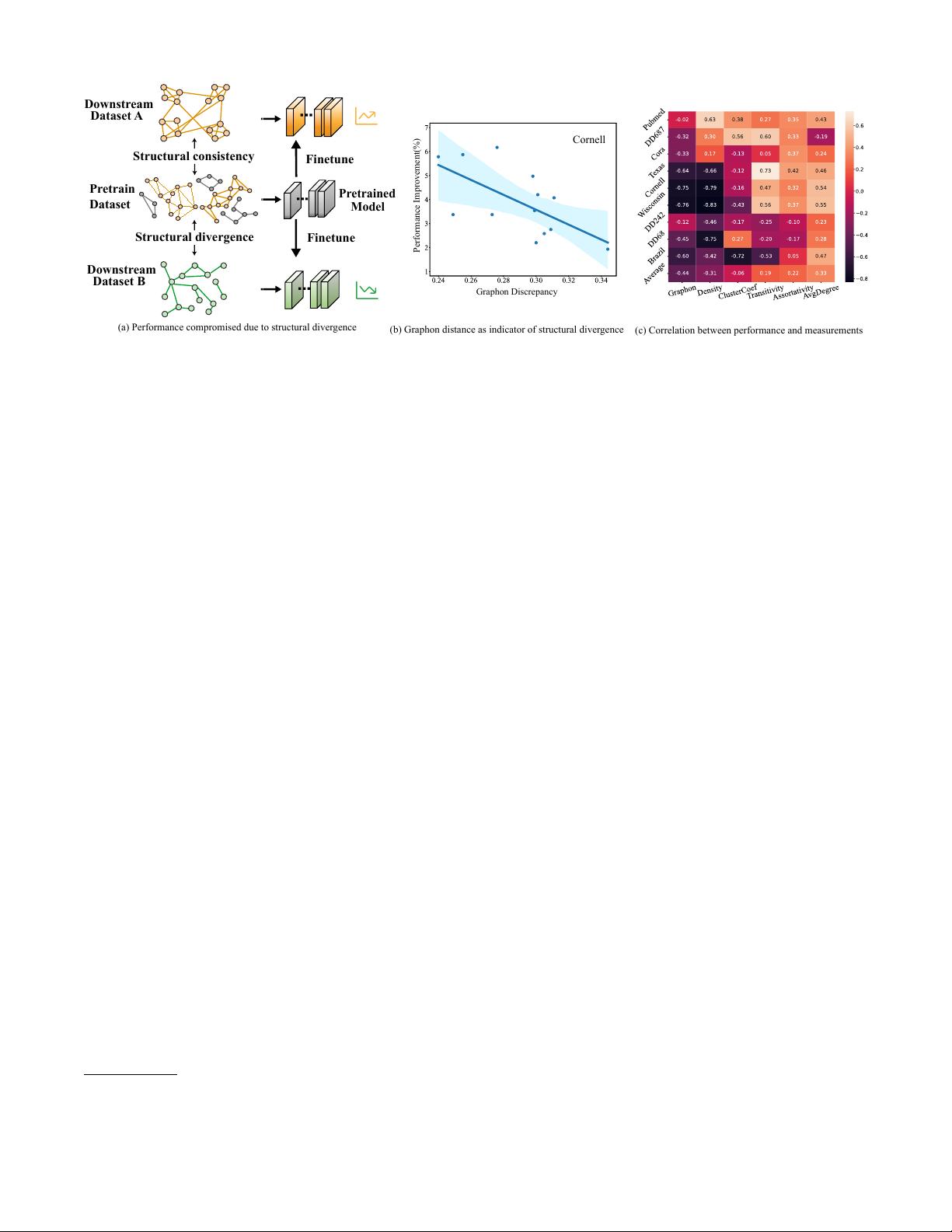

tural consistency does not always hold. Fig 1(a) shows that

while structural consistency (shown in orange) between the

pre-training and downstream dataset A ensures the promo-

tion of performance, the structural divergence (shown in

green) causes a degradation of performance when fine-tuned

on downstream dataset B. In some cases, it can even lead to

worse results than those obtained without pre-training.

In light of this, we are intrigued by the relationship be-

tween structural divergence and the extent of performance im-

provements on downstream graphs. Specifically, graphon is a

well-known non-parametric function on graph that has been

proved to effectively describe the generative mechanism of

graphs (i.e., generative patterns) (Lovász 2012). In Fig 1(b),

we calculate the Gromov-Wasserstain (GW) discrepancy (a

distance metric between geometry objects) of graphons be-

tween different pre-training and one test graph and report

the corresponding performance on the same downstream

graph. Interestingly, as the difference between graphons of

pre-training dataset and that of downstream dataset increases,

the performance improvement diminishes. To further validate

this, we compute the Pearson CC (Correlation Coefficient)

between other representative graph measurements (e.g., den-

sity, transitivity, etc.) and the performance improvement. As

Fig 1(c) suggests, most of them cannot reflect the degree

of performance improvement, and only the graphon discrep-

ancy is consistently negatively correlated with the degree

of performance promotion. Hence, we attribute the subpar

performance of fine-tuning to the disparity in the genera-

tive patterns between the pre-training and fine-tuning graphs.

Intelligence (www.aaai.org). All rights reserved.

arXiv:2312.13583v1 [cs.LG] 21 Dec 2023

[Figure 1 panels: (a) Performance compromised due to structural divergence; (b) Graphon distance as indicator of structural divergence (axis labels: Graphon Discrepancy, Performance Improvement (%); dataset label: Cornell); (c) Correlation between performance and measurements.]

Figure 1: The sketch and the observations of the structural divergence. (a) The sketch shows the performance under different scenarios. (b) The case study shows that the larger the graphon Gromov-Wasserstein (GW) discrepancy between different pre-train datasets (shown in App § A.4) and the downstream dataset Cornell (Rozemberczki, Allen, and Sarkar 2021) is, the less the performance (%) is promoted. (c) The Pearson correlation between: (Horizontal) the discrepancy of different graph measurements and of the graphon between pre-training and downstream datasets, (Vertical) the performance promotion for different downstream datasets when using different pre-train datasets.

Nevertheless, fine-tuning with respect to generative patterns poses significant challenges: (1) the structural information of the pre-training graphs may not be accessible during fine-tuning, and (2) effectively representing intricate semantics within these generative patterns, such as graphons, requires careful design considerations.

In this paper, we aim to address these challenges by proposing a fine-tuning strategy, G-TUNING, which is agnostic to pre-training data and algorithms. Specifically, it performs graphon reconstruction of the downstream graphs during fine-tuning. In order to enable efficient reconstruction, we provide a theoretical result (Theorem 1) that given a graphon $W$, it is possible to find a set of other graphons, called graphon bases, whose linear combination can closely approximate $W$. Then, we develop a graphon decoder that transforms the embeddings from the pre-trained model into a set of coefficients. These coefficients are combined with the structure-aware learnable bases to form the reconstructed graphon. To ensure the fidelity of the reconstructed graphon, we introduce a GW-discrepancy based loss, which minimizes the distance between the approximated graphon and an oracle graphon (Xu et al. 2021). Furthermore, by optimizing our proposed G-TUNING, we obtain provable results regarding the discriminative subgraphs relevant to the task (Theorem 2).

The main contributions of our work are as follows:¹

• We identify the generative patterns of downstream graphs as a crucial step in bridging the gap between pre-training and fine-tuning.

• Building upon our theoretical results, we design the model architecture, G-TUNING, to efficiently reconstruct graphons as generative patterns with rigorous generalization results.

• Empirically, our method shows an average of 0.47% and 2.62% improvement on 8 in-domain and 7 out-of-domain transfer learning datasets over the best baseline.

¹ Supplement materials: https://github.com/zjunet/G-Tuning

2 Preliminaries

Notations. Let $G = (V, A, X)$ denote a graph, where $V$ is the node set, $A \in \{0, 1\}^{|V| \times |V|}$ is the adjacency matrix and $X \in \mathbb{R}^{|V| \times d}$ is the node feature matrix, where $d$ is the feature dimension. $G_s$ and $G_t$ denote a pre-training graph and a downstream graph, respectively. The classic and commonly used pre-training paradigm is to first pre-train the backbone $\Phi$ on abundant unlabeled graphs by a self-supervised task with the loss $\mathcal{L}_{\text{SSL}}$. Then the pre-trained $\Phi$ is fine-tuned on labeled downstream graphs. The embedding $H \in \mathbb{R}^{|V| \times d}$ with hidden dimension $d$ encoded by $\Phi$ is further input to a randomly initialized task-specific shallow model $f_\phi$. The goal of fine-tuning is to adapt both $\Phi$ and $f_\phi$ for the downstream task with the loss $\mathcal{L}_{\text{task}}$ and the label $Y$. The pre-training setup has two variations: one is the in-domain setting, where $G_s$ and $G_t$ come from the same domain, and the other is the out-of-domain setting, where $G_s$ and $G_t$ originate from different domains. In the latter case, the structural divergence between $G_s$ and $G_t$ is greater, posing greater challenges for fine-tuning.

Definition 1 (Graph generative patterns). Graph generative patterns are data distributions parameterized by $\Theta$ from which the observed graphs $\{G_1, \ldots, G_n\}$ are sampled, $G_i \sim P(G; \Theta)$.

According to our definition, $\Theta$ can be a traditional graph generative model such as Erdős-Rényi (Erdős and Rényi 1959), the stochastic block model (Airoldi et al. 2008), the forest-fire graph (Leskovec, Kleinberg, and Faloutsos 2007), etc. Besides, $\Theta$ can also be any deep generative model like GraphRNN (You et al. 2018). In this paper, we propose a theoretically sound fine-tuning framework by preserving the graph generative pattern of the downstream graphs.

Graphon. A graphon (Airoldi, Costa, and Chan 2013), short for “graph function”, can be interpreted as a generalization of a graph with an uncountable number of nodes, as a graph generative model or, more importantly for this work, as a mathematical object representing $\Theta$ from the graph generative patterns $P(G; \Theta)$. Formally, a graphon is a continuous and symmetric function $W : [0, 1]^2 \to [0, 1]$. Given two points $u_i, u_j \in [0, 1]$ as “nodes”, $W(u_i, u_j) \in [0, 1]$ indicates the probability of them forming an edge. The main idea of a graphon is that when we extract subgraphs from the observed graph, the structure of these subgraphs becomes increasingly similar to that of the observed one as we increase the size of the subgraphs. The structure then converges in some sense to a limit object, the graphon. The convergence is defined via the convergence of homomorphism densities. The homomorphism density $t(F, G)$ measures the relative frequency with which homomorphisms of graph $F$ appear in graph $G$: $t(F, G) = \frac{|\hom(F, G)|}{|V_G|^{|V_F|}}$, which can be seen as the probability that a random mapping of vertices from $F$ to $G$ is a homomorphism. Thus, the convergence can be formalized as $\lim_{n \to \infty} t(F, G_n) = t(F, W)$. When used as the graph generative pattern, the adjacency matrix $A$ of a graph $G$ with $N$ nodes is sampled from $P(G; W)$ as follows,

$$v \sim \mathcal{U}(0, 1),\ v \in V; \quad A_{ij} \sim \mathrm{Ber}(W(v_i, v_j)),\ \forall i, j \in [N]. \tag{1}$$

where $\mathrm{Ber}(\cdot)$ is the Bernoulli distribution. Since there is no available closed-form expression of a graphon, existing works mainly employ a two-dimensional step function, which can be seen as a matrix, to represent a graphon (Xu et al. 2021; Han et al. 2022). In fact, the weak regularity lemma of graphons (Lovász 2012) indicates that an arbitrary graphon can be approximated well by a two-dimensional step function. Hence, we follow the above-mentioned works and employ a step function $W \in [0, 1]^{D \times D}$ to represent a graphon, where $D$ is a hyper-parameter.
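As an illustration of the sampling procedure in Eq 1 with a step-function graphon, the following is a minimal sketch in Python with NumPy. The function name `sample_graph_from_graphon`, the uniform partition of $[0, 1]$ into $D$ equal blocks, and the choice to sample an undirected graph without self-loops are assumptions made for this example rather than details given in the text.

```python
import numpy as np

def sample_graph_from_graphon(W, num_nodes, rng):
    """Sample an adjacency matrix from a D x D step-function graphon W (Eq 1).

    Assumptions of this sketch: [0, 1] is split into D equal blocks, and the
    graph is undirected with no self-loops.
    """
    D = W.shape[0]
    # v_i ~ U(0, 1): latent position of each node.
    v = rng.uniform(0.0, 1.0, size=num_nodes)
    # Map each latent position to the block of the step function it falls in.
    blocks = np.minimum((v * D).astype(int), D - 1)
    # Edge probabilities W(v_i, v_j) for every node pair.
    probs = W[np.ix_(blocks, blocks)]
    # A_ij ~ Ber(W(v_i, v_j)), drawn once per unordered pair i < j.
    upper = np.triu(rng.random((num_nodes, num_nodes)) < probs, k=1)
    return (upper | upper.T).astype(int)

rng = np.random.default_rng(0)
# A hypothetical 2-block graphon: dense within blocks, sparse across them.
W_example = np.array([[0.8, 0.1],
                      [0.1, 0.6]])
A_example = sample_graph_from_graphon(W_example, num_nodes=20, rng=rng)
```

A larger `num_nodes` gives graphs whose block densities concentrate around the entries of `W_example`, which is the sense in which the graphon acts as a generative pattern.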

3 Related Work

Fine-tuning strategies. The design of fine-tuning strategies first attracted attention in computer vision (CV), where the strategies can be categorized into model parameter regularization and feature regularization. L2_SP (Xuhong, Grandvalet, and Davoine 2018) uses the $L^2$ distance to constrain the parameters around the pre-trained ones. StochNorm (Kou et al. 2020) replaces the BN (batch normalization) layers in the pre-trained model with StochNorm layers. DELTA (Li et al. 2018) constrains features selected by channel-wise attention. BSS (Chen et al. 2019) penalizes small eigenvalues of features to prevent negative transfer. However, there is only one work that focuses on improving downstream task performance during the fine-tuning phase specifically for graph-structured data. GTOT-Tuning (Zhang et al. 2022) presents an optimal transport-based feature regularization, which achieves node-level transport through the graph structure. Moreover, the gap between pre-training and fine-tuning is also noted in L2P (Lu et al. 2021) and AUX-TS (Han et al. 2021). Specifically, L2P leverages meta-learning to adjust tasks during the pre-training stage. AUX-TS adaptively selects and combines auxiliary tasks with the target task in the fine-tuning stage, which implies that a single pre-training task is not enough. However, both require using the same dataset for pre-training and fine-tuning and inserting auxiliary tasks in the pre-training phase, which indicates they are not generally applicable. Unlike them, we focus on the fine-tuning phase and propose that preserving the generative patterns of downstream graphs during fine-tuning is the key to mining knowledge from pre-trained GNNs without altering the pre-training process.

Graphon. Over the past decade, the graphon has been studied intensively as a mathematical object (Borgs et al. 2008, 2012; Lovász and Szegedy 2006; Lovász 2012) and has been applied broadly, for example in graph signal processing (Ruiz, Chamon, and Ribeiro 2020b, 2021), game theory (Parise and Ozdaglar 2019), and network science (Avella-Medina et al. 2018; Vizuete, Garin, and Frasca 2021). Moreover, G-mixup (Han et al. 2022) is proposed to conduct data augmentation for graph classification, since a graphon can serve as a graph generator. From the other perspective, that of the graphon as a graph limit, (Ruiz, Chamon, and Ribeiro 2020a) leverage graphons to analyze the transferability of GNNs. The graphon, as the limit of graphs, forms a natural method to describe graphs and encapsulates the generative patterns (Borgs and Chayes 2017). Thus, we incorporate the graphon into the fine-tuning stage to preserve the generative patterns of downstream graphs. In App § A.1, we provide more detailed related work about graph pre-training and graphons.

4 G-TUNING

4.1 Framework Overview

Similar to our observations in Fig 1, recent research (Zhu et al. 2021) has started to analyze the transfer performance of a pre-trained GNN w.r.t. the discrepancy between pre-training and fine-tuning graphs. However, the pre-training graphs are typically not accessible during the fine-tuning phase. Thus, G-TUNING aims to adapt the pre-trained GNN to the fine-tuning graphs by preserving the generative patterns. During fine-tuning, a pre-trained GNN $\Phi$ obtains latent representations $H$ for downstream graphs $G_t = \{G_1, \ldots, G_n\}$ and feeds them into task-specific layers $f_\phi$ to train with the fine-tuning labels $Y$. For a specific graph $G_i(A, X)$, the pre-trained node embeddings $H$ are obtained by the pre-trained model $\Phi$:

$$\mathcal{L}_{\text{task}} = \mathcal{L}_{\text{CE}}(f_\phi(H), Y), \quad H = \Phi(A, X), \tag{2}$$

where $\Phi$ can be any pre-training backbone model, $\mathcal{L}_{\text{CE}}$ is the cross-entropy classification loss and $f_\phi$ is a shallow neural network $f_\phi : H \to \hat{Y}$.

[Figure 2 labels: Downstream Graph, Pre-trained Model, Vanilla Fine-tuning, Graphon Encoder, Coefficients, Learnable Bases, Structural-aware Initialization, Predicted Graphon, Estimation, Oracle Graphon.]

Figure 2: Overall workflow of G-TUNING. Each basis $B_i$ and predicted graphon $\hat{W}$ is produced during actual training.

However, the vanilla strategy may fail to improve fine-tuning performance due to the large discrepancy between pre-training and fine-tuning graphs, namely negative transfer. To alleviate this, we propose to enable the pre-trained GNN $\Phi$ to preserve the generative patterns of the downstream graphs $G_t$ by reconstructing their graphons $W$ (overall workflow in Fig 2). However, the pre-trained model is inherently biased towards the generative patterns of the pre-training graphs. Consequently, at the beginning of fine-tuning, the embeddings $H$ also contain the bias from the pre-training data. Thus, we need both $H$ and the graph structure $A$ of the downstream graph to reconstruct the graphon. Specifically, we design a graphon reconstruction module $\Omega$ to reconstruct the graphon $\hat{W}$ of each downstream graph $G_i(A, X) \in G_t$:

$$\hat{W} = \Omega(A, H). \tag{3}$$

However, the computation of the oracle graphons $W$ of real-world graphs in continuous function form is itself intractable (Han et al. 2022). Thus, the graphon reconstruction module $\Omega$ approximates an estimated oracle graphon (i.e., $W \in [0, 1]^{D \times D}$) for each downstream graph through $\mathcal{L}_{\text{aux}}$, where $D$ is the size of the oracle graphon. Finally, in the framework of G-TUNING (Fig 2), we leverage both the downstream task loss and our reconstruction loss to optimize the parameters of the pre-trained GNN encoder $\Phi$, the layer $f_\phi$ and the graphon reconstruction module $\Omega$ as follows,

$$\mathcal{L} = \mathcal{L}_{\text{task}} + \lambda \mathcal{L}_{\text{G-TUNING}}(W, \hat{W}), \tag{4}$$

where $\lambda$ is a hyper-parameter of G-TUNING.
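To make the arithmetic of Eq 4 concrete, here is a small NumPy sketch of how the two terms are combined. The helper names `cross_entropy` and `total_loss` are hypothetical, the reconstruction loss is passed in as an already computed scalar, and a real fine-tuning loop would use an automatic differentiation framework rather than plain NumPy.

```python
import numpy as np

def cross_entropy(logits, labels):
    """Mean cross-entropy of integer class labels given unnormalized logits."""
    # Subtract the row-wise maximum for numerical stability of the softmax.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_probs[np.arange(len(labels)), labels].mean())

def total_loss(logits, labels, reconstruction_loss, lam):
    """Eq 4: task loss plus lambda times the graphon reconstruction loss."""
    return cross_entropy(logits, labels) + lam * reconstruction_loss

# Hypothetical values: 4 samples, 2 classes, uninformative logits.
logits = np.zeros((4, 2))
labels = np.array([0, 1, 0, 1])
loss = total_loss(logits, labels, reconstruction_loss=0.5, lam=0.1)
```

Setting `lam` to zero recovers the vanilla fine-tuning objective of Eq 2, so $\lambda$ directly controls how strongly the graphon reconstruction term shapes the update.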

4.2 Approximating Oracle Graphon

In this section, we first discuss the calculation of the underlying graphon, denoted as $W$, given downstream graphs $G_t$. Then we introduce our proposed algorithm to reconstruct this graphon as an auxiliary loss during the fine-tuning process.

Extensive research has been conducted on methods for estimating graphons (Airoldi, Costa, and Chan 2013; Chatterjee 2015; Pensky 2019; Ruiz, Chamon, and Ribeiro 2020a) from observed graphs. In this paper, the oracle graphon $W$ used in Eq 4 can be estimated via structured Gromov-Wasserstein barycenters (SGB) (Xu et al. 2021). Suppose there are $N$ observed graphs $\{G_n\}_{n=1}^{N}$ and their adjacency matrices $\{A_n\}_{n=1}^{N}$. At each step $t$,

$$\min_{T_n \in \Pi(\mu, \mu_W)} d^2_{gw,2}(W_t, A_n), \tag{5}$$

$$W_{t+1} = \frac{1}{\mu_W \mu_W^\top} \sum_{n=1}^{N} T_n^\top A_n T_n, \tag{6}$$

where $W_{t+1} \in [0, 1]^{D \times D}$ is the barycenter calculated with the optimal transportation plans $\{T_n\}_{n=1}^{N}$ of the 2-order Gromov-Wasserstein distance $d^2_{gw,2}$. The probability measure $\mu_W$ is estimated by merging and sorting the normalized node degrees in $\{A_n\}_{n=1}^{N}$. Further details regarding the calculation of $d^2_{gw,2}$ and the iterative procedure for estimating the oracle graphon can be found in App § A.3.

After obtaining the oracle graphon $W \in [0, 1]^{D \times D}$ from the downstream graphs and the reconstructed graphon $\hat{W} \in [0, 1]^{M \times M}$ from the reconstruction module $\Omega$, we also adopt the Gromov-Wasserstein distance to measure the distance between the two unaligned graphons. Formally, our $p$-order GW distance is calculated as:

$$\mathcal{L}_{\text{G-TUNING}}(W, \hat{W}) = \min_{T \in \Gamma} \sum_{i,j,k,l} (W_{i,k} - \hat{W}_{j,l})^p \, T_{i,j} T_{k,l}, \tag{7}$$

where $\Gamma$ is the set of transportation plans that satisfy $\Gamma = \{T \in \mathbb{R}_+^{D \times M} \mid T \mathbf{1}_M = \mathbf{1}_D,\ T^\top \mathbf{1}_D = \mathbf{1}_M\}$. In each epoch, an optimal $T$ minimizes the transportation cost between the two graphons $(W, \hat{W})$. Fixing $T$, the graphon reconstruction module $\Omega$ is optimized to minimize the GW discrepancy. We will introduce the model design of $\Psi$ in the next section for scalable training and inference.
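The quantity inside the minimization of Eq 7 can be evaluated directly once a transportation plan is fixed. The sketch below does only that: it computes the cost for a given $T$ and does not search for the optimal plan. The function name `gw_cost`, the toy graphon and the permutation are hypothetical and chosen so that both plans satisfy the constraints of $\Gamma$ with $D = M$.

```python
import numpy as np

def gw_cost(W, W_hat, T, p=2):
    """Value of the sum in Eq 7 for one fixed plan T (no minimization over T)."""
    # diff[i, j, k, l] = W[i, k] - W_hat[j, l]
    diff = W[:, None, :, None] - W_hat[None, :, None, :]
    # Weight every squared (p-th power) difference by T[i, j] * T[k, l].
    return float(np.einsum("ijkl,ij,kl->", diff ** p, T, T))

# A toy 3 x 3 step-function graphon.
W = np.array([[0.9, 0.1, 0.2],
              [0.1, 0.8, 0.3],
              [0.2, 0.3, 0.7]])
perm = np.array([2, 0, 1])
P = np.eye(3)[perm]        # P[i, j] = 1 iff j == perm[i]
W_hat = P.T @ W @ P        # the same graphon with its blocks relabelled

aligned = gw_cost(W, W_hat, P)               # plan that undoes the relabelling
misaligned = gw_cost(W, W_hat, np.eye(3))    # plan that ignores the relabelling
```

Here `aligned` is zero while `misaligned` is positive, which illustrates why a GW-type loss suits unaligned graphons: the plan absorbs the arbitrary ordering of the blocks, so only genuine structural differences are penalized.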

4.3 Efficient Optimization of $\mathcal{L}_{\text{G-TUNING}}$

A straightforward way to approximate the graphon is to learn a mapping function from the graph structure $A$ and node embeddings $H$ to the target $W$. For example, we can simply define the graphon reconstruction module $\Omega$ in Eq 3 as a GNN or a shallow MLP. Suppose we have $M$ graphs and each graph has at most $|V|$ nodes. Brute-force graphon reconstruction from the pre-trained node embeddings $H \in \mathbb{R}^{|V| \times d}$ then requires a large number of parameters, e.g., $\Psi : \mathbb{R}^{|V| \times d} \to \mathbb{R}^{M \times M}$. Additionally, properties such as the permutation-invariance of graphons are not guaranteed without a carefully designed architecture.

To this end, we first establish a theorem for graphon decomposition and utilize it for efficient graphon approximation. Specifically, we propose that any graphon can be reconstructed by a linear combination of graphon bases $B_k \in \mathcal{B}$.

Theorem 1. $\forall\, W(x, y) \in \mathcal{W}^{C+1}$, there exist $C$ graphon bases $B_k(x, y)$ that satisfy $W(x, y) = \sum_{k=1}^{C} \alpha_k B_k(x, y) + R_{C+1}(x, y)$, where $\alpha_k \in \mathbb{R}$ and $R_{C+1}(x, y)$ is the remainder of order $C + 1$.

$$W(x, y) = \sum_{k=1}^{C} \alpha_k B_k(x, y) + R_{C+1}(x, y). \tag{8}$$
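The decomposition in Eq 8 can be illustrated numerically for step-function graphons. The sketch below assumes the bases are already given and recovers the coefficients $\alpha_k$ by ordinary least squares. This solver is an illustrative substitute chosen for the example: in G-TUNING the bases are learnable and the coefficients come from the graphon decoder. The name `fit_basis_coefficients` and the toy bases are hypothetical.

```python
import numpy as np

def fit_basis_coefficients(W, bases):
    """Least-squares coefficients alpha_k for W ~ sum_k alpha_k * bases[k].

    W has shape (D, D) and bases has shape (C, D, D). Returns the
    coefficients, the reconstruction, and the remainder W - reconstruction.
    """
    C = bases.shape[0]
    # Each basis becomes one column of the design matrix, shape (D * D, C).
    design = bases.reshape(C, -1).T
    alpha, *_ = np.linalg.lstsq(design, W.ravel(), rcond=None)
    # Linear combination of the bases with the fitted coefficients.
    W_rec = np.tensordot(alpha, bases, axes=1)
    return alpha, W_rec, W - W_rec

# Three toy symmetric 2 x 2 bases (hypothetical, for illustration only).
bases = np.array([[[1.0, 0.0], [0.0, 0.0]],
                  [[0.0, 0.0], [0.0, 1.0]],
                  [[0.0, 1.0], [1.0, 0.0]]])
target = np.array([[0.5, 0.2],
                   [0.2, 0.3]])
alpha, W_rec, remainder = fit_basis_coefficients(target, bases)
```

Because the toy target lies in the span of the three bases, the remainder vanishes up to floating-point error. With fewer or less expressive bases, `remainder` plays the role of $R_{C+1}$ in Eq 8.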
