
Fine-Tuning Language Models from Human Preferences

Daniel M. Ziegler*, Nisan Stiennon*, Jeffrey Wu, Tom B. Brown, Alec Radford, Dario Amodei, Paul Christiano, Geoffrey Irving

OpenAI

{dmz,nisan,jeffwu,tom,alec,damodei,paul,irving}@openai.com

Abstract

Reward learning enables the application of reinforcement learning (RL) to tasks where reward is defined by human judgment, building a model of reward by asking humans questions. Most work on reward learning has used simulated environments, but complex information about values is often expressed in natural language, and we believe reward learning for language is a key to making RL practical and safe for real-world tasks. In this paper, we build on advances in generative pretraining of language models to apply reward learning to four natural language tasks: continuing text with positive sentiment or physically descriptive language, and summarization tasks on the TL;DR and CNN/Daily Mail datasets. For stylistic continuation we achieve good results with only 5,000 comparisons evaluated by humans. For summarization, models trained with 60,000 comparisons copy whole sentences from the input but skip irrelevant preamble; this leads to reasonable ROUGE scores and very good performance according to our human labelers, but may be exploiting the fact that labelers rely on simple heuristics.

1. Introduction

We would like to apply reinforcement learning to complex tasks defined only by human judgment, where we can only tell whether a result is good or bad by asking humans. To do this, we can first use human labels to train a model of reward, and then optimize that model. While there is a long history of work learning such models from humans through interaction, this work has only recently been applied to modern deep learning, and even then has only been applied to relatively simple simulated environments (Christiano et al., 2017; Ibarz et al., 2018; Bahdanau et al., 2018). By contrast, real-world settings in which humans need to specify complex goals to AI agents are likely to both involve and require natural language, which is a rich medium for expressing value-laden concepts. Natural language is particularly important when an agent must communicate back to a human to help provide a more accurate supervisory signal (Irving et al., 2018; Christiano et al., 2018; Leike et al., 2018).

* Equal contribution. Correspondence to paul@openai.com.

Natural language processing has seen substantial recent advances. One successful method has been to pretrain a large generative language model on a corpus of unsupervised data, then fine-tune the model for supervised NLP tasks (Dai and Le, 2015; Peters et al., 2018; Radford et al., 2018; Khandelwal et al., 2019). This method often substantially outperforms training on the supervised datasets from scratch, and a single pretrained language model often can be fine-tuned for state-of-the-art performance on many different supervised datasets (Howard and Ruder, 2018). In some cases, fine-tuning is not required: Radford et al. (2019) find that generatively trained models show reasonable performance on NLP tasks with no additional training (zero-shot).

There is a long literature applying reinforcement learning to natural language tasks. Much of this work uses algorithmically defined reward functions such as BLEU for translation (Ranzato et al., 2015; Wu et al., 2016), ROUGE for summarization (Ranzato et al., 2015; Paulus et al., 2017; Wu and Hu, 2018; Gao et al., 2019b), music theory-based rewards (Jaques et al., 2017), or event detectors for story generation (Tambwekar et al., 2018). Nguyen et al. (2017) used RL on BLEU but applied several error models to approximate human behavior. Wu and Hu (2018) and Cho et al. (2019) learned models of coherence from existing text and used them as RL rewards for summarization and long-form generation, respectively. Gao et al. (2019a) built an interactive summarization tool by applying reward learning to one article at a time. Experiments using human evaluations as rewards include Kreutzer et al. (2018) which used off-policy reward learning for translation, and Jaques et al. (2019) which applied the modified Q-learning methods of Jaques et al. (2017) to implicit human preferences in dialog. Yi et al. (2019) learned rewards from humans to fine-tune dialog models, but smoothed the rewards to allow supervised learning. We refer to Luketina et al. (2019) for a survey of RL tasks involving language as a component, and for RL results using transfer learning from language. RL is not the only way to incorporate ongoing human feedback: Hancock et al. (2019) ask humans what a dialogue system should have said instead, then continue supervised training.

arXiv:1909.08593v2 [cs.CL] 8 Jan 2020

In this paper, we combine the pretraining advances in natural language processing with human preference learning. We fine-tune pretrained language models with reinforcement learning rather than supervised learning, using a reward model trained from human preferences on text continuations. Following Jaques et al. (2017; 2019), we use a KL constraint to prevent the fine-tuned model from drifting too far from the pretrained model. We apply our method to two types of tasks: continuing text in a way that matches a target style, either positive sentiment or vividly descriptive, and summarizing text from the CNN/Daily Mail or TL;DR datasets (Hermann et al., 2015; Völske et al., 2017). Our motivation is NLP tasks where supervised data sets are unavailable or insufficient, and where programmatic reward functions are poor proxies for our true goals.

For stylistic continuation, 5,000 human comparisons (each choosing the best of 4 continuations) result in the fine-tuned model being preferred by humans 86% of the time vs. zero-shot and 77% vs. fine-tuning to a supervised sentiment network. For summarization, we use 60,000 human samples to train models that can roughly be described as “smart copiers”: they typically copy whole sentences from the input, but vary what they copy to skip irrelevant initial text. This copying behavior emerged naturally from the data collection and training process; we did not use any explicit architectural mechanism for copying as in See et al. (2017) or Gehrmann et al. (2018). One explanation is that copying is an easy way to be accurate, given that we did not instruct labelers to penalize copying but did instruct them to penalize inaccuracy. It may also reflect the fact that some labelers check for copying as a fast heuristic to ensure a summary is accurate. Indeed, human labelers significantly prefer our models to supervised fine-tuning baselines and even to human-written reference summaries, but not to a lead-3 baseline which copies the first three sentences.

For summarization, we continue to collect additional data and retrain our reward model as the policy improves (online data collection). We also test offline data collection where we train the reward model using data from the original language model only; offline data collection significantly reduces the complexity of the training process. For the TL;DR dataset, human labelers preferred the policy trained with online data collection 71% of the time, and in qualitative evaluations the offline model often provides inaccurate summaries. In contrast, for stylistic continuation we found that offline data collection worked similarly well. This may be related to the style tasks requiring very little data; Radford et al. (2017) show that generatively trained models can learn to classify sentiment from very few labeled examples.

[Figure 1 diagram. Panel "Reward model training": policy, reward model, human labeler; context, continuation (×4), reward (×4), label, loss. Panel "Policy training": policy, reward model; context, continuation, reward, loss.]

Figure 1: Our training processes for reward model and policy. In the online case, the processes are interleaved.

In concurrent work, Böhm et al. (2019) also use human evaluations to learn a reward function for summarization, and optimize that reward function with RL. Their work provides a more detailed investigation of the learned policy and reward function on the CNN/Daily Mail dataset, while we are interested in exploring learning from human feedback more generally and at larger computational scale. We therefore consider several additional tasks, explore the effects of on-policy reward model training and more data, and fine-tune large language models for both reward modeling and RL.

2. Methods

We begin with a vocabulary $\Sigma$ and a language model $\rho$ which defines a probability distribution over sequences of tokens $\Sigma^n$ via

$$\rho(x_0 \cdots x_{n-1}) = \prod_{0 \le k < n} \rho(x_k \mid x_0 \cdots x_{k-1})$$

We will apply this model to a task with input space $X = \Sigma^{\le m}$, data distribution $D$ over $X$, and output space $Y = \Sigma^n$. For example, $x \in X$ could be an article of up to 1000 words and $y \in Y$ could be a 100-word summary. $\rho$ defines a probabilistic policy for this task via $\rho(y \mid x) = \rho(xy)/\rho(x)$: fixing the beginning of the sample to $x$ and generating subsequent tokens using $\rho$.
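As a minimal sketch of the factorization and of the identity $\rho(y \mid x) = \rho(xy)/\rho(x)$, the following uses a hypothetical two-token toy model in place of a real language model. The function names and the probabilities are assumptions made purely for illustration.

```python
import math

# Hypothetical toy next-token model over the vocabulary {"a", "b"}.
# It stands in for rho(x_k | x_0 ... x_{k-1}); a real model would be a neural network.
def next_token_probs(prefix):
    if not prefix:
        return {"a": 0.5, "b": 0.5}
    last = prefix[-1]
    other = "b" if last == "a" else "a"
    # Repeating the previous token is more likely than switching.
    return {last: 0.8, other: 0.2}

def sequence_log_prob(tokens, prefix=()):
    """log rho(tokens | prefix), accumulated token by token via the chain rule."""
    context = list(prefix)
    total = 0.0
    for tok in tokens:
        total += math.log(next_token_probs(context)[tok])
        context.append(tok)
    return total

x = ["a", "a"]  # prompt
y = ["b", "b"]  # continuation
log_rho_x = sequence_log_prob(x)
log_rho_xy = sequence_log_prob(x + y)
log_rho_y_given_x = sequence_log_prob(y, prefix=x)

# rho(y|x) = rho(xy) / rho(x) becomes a difference in log space.
print(abs(log_rho_y_given_x - (log_rho_xy - log_rho_x)) < 1e-9)
```

Working in log space avoids underflow for long sequences, which is why the sketch sums log-probabilities rather than multiplying probabilities.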

We initialize a policy $\pi = \rho$, and then fine-tune $\pi$ to perform the task well using RL. If the task was defined by a reward function $r : X \times Y \to \mathbb{R}$, then we could use RL to directly optimize the expected reward:

$$\mathbb{E}_\pi[r] = \mathbb{E}_{x \sim D,\, y \sim \pi(\cdot \mid x)}[r(x, y)]$$

However, we want to perform tasks defined by human judgments, where we can only learn about the reward by asking humans. To do this, we will first use human labels to train a reward model, and then optimize that reward model.


Following Christiano et al. (2017), we ask human labelers to pick which of several values of $y_i$ is the best response to a given input $x$.¹ We ask humans to choose between four options $(y_0, y_1, y_2, y_3)$; considering more options allows a human to amortize the cost of reading and understanding the prompt $x$. Let $b \in \{0, 1, 2, 3\}$ be the option they select. Having collected a dataset $S$ of $(x, y_0, y_1, y_2, y_3, b)$ tuples, we fit a reward model $r : X \times Y \to \mathbb{R}$ using the loss

$$\text{loss}(r) = \mathbb{E}_{(x, \{y_i\}_i, b) \sim S}\left[\log \frac{e^{r(x, y_b)}}{\sum_i e^{r(x, y_i)}}\right] \tag{1}$$
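The term inside the expectation in (1) is the log-probability that a softmax over the four rewards assigns to the option the human chose. The sketch below computes it for a small batch. The reward values and function names are hypothetical, and it is an assumption here that a gradient-based trainer would minimize the negative of this quantity, since (1) as written is a log-likelihood.

```python
import math

def comparison_log_likelihood(rewards, chosen):
    """log( exp(r_b) / sum_i exp(r_i) ) for one comparison, computed stably."""
    m = max(rewards)  # subtract the max before exponentiating to avoid overflow
    log_norm = m + math.log(sum(math.exp(r - m) for r in rewards))
    return rewards[chosen] - log_norm

def reward_model_objective(batch):
    """Average of the term inside the expectation in (1) over (rewards, b) pairs."""
    return sum(comparison_log_likelihood(r, b) for r, b in batch) / len(batch)

# Hypothetical reward-model outputs r(x, y_i) for two prompts, with the human choice b.
batch = [
    ([0.0, 0.0, 0.0, 0.0], 2),  # uninformative model: each option gets probability 1/4
    ([2.0, 0.0, 0.0, 0.0], 0),  # model agrees with the human choice
]
print(reward_model_objective(batch))
```

The value is always at most zero and approaches zero as the model puts more probability on the human-selected option.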

Since the reward model needs to understand language, we initialize it as a random linear function of the final embedding output of the language model policy $\rho$ following Radford et al. (2018) (see section 4.2 for why we initialize from $\rho$ rather than $\pi$). To keep the scale of the reward model consistent across training, we normalize it so that it has mean 0 and variance 1 for $x \sim D$, $y \sim \rho(\cdot \mid x)$.
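One way to picture this normalization is as an affine rescaling of the raw reward-model output, fitted on samples from $\rho$. The sketch below is an assumption about how such a rescaling could be computed, not a description of the released code. The gain and bias names and the sample values are hypothetical.

```python
import statistics

def fit_normalizer(raw_rewards):
    """Return (gain, bias) so that gain * r + bias has mean 0 and variance 1 on the samples."""
    mean = statistics.fmean(raw_rewards)
    std = statistics.pstdev(raw_rewards)  # population standard deviation of the samples
    gain = 1.0 / std
    bias = -mean * gain
    return gain, bias

# Hypothetical raw reward-model outputs on samples x ~ D, y ~ rho(.|x).
raw = [0.3, 1.9, -0.4, 2.2, 1.0]
gain, bias = fit_normalizer(raw)
normalized = [gain * r + bias for r in raw]
print(statistics.fmean(normalized), statistics.pvariance(normalized))
```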

Now we fine-tune $\pi$ to optimize the reward model $r$. To keep $\pi$ from moving too far from $\rho$, we add a penalty with expectation $\beta\,\text{KL}(\pi, \rho)$ (see table 10 for what happens without this). That is, we perform RL on the modified reward

$$R(x, y) = r(x, y) - \beta \log \frac{\pi(y \mid x)}{\rho(y \mid x)}. \tag{2}$$

We either choose a constant $\beta$ or vary it dynamically to achieve a particular value of $\text{KL}(\pi, \rho)$; see section 2.2. This term has several purposes: it plays the role of an entropy bonus, it prevents the policy from moving too far from the range where $r$ is valid, and in the case of our style continuation tasks it also is an important part of the task definition: we ask humans to evaluate style, but rely on the KL term to encourage coherence and topicality.
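A minimal sketch of the modified reward (2), working from sequence log-probabilities. The numeric values are hypothetical. The second helper relies on the fact that the average log-ratio over samples $y \sim \pi(\cdot \mid x)$ is a Monte Carlo estimate of $\text{KL}(\pi, \rho)$, which is why the penalty has expectation $\beta\,\text{KL}(\pi, \rho)$.

```python
def penalized_reward(r, logp_pi, logp_rho, beta):
    """R(x, y) = r(x, y) - beta * log(pi(y|x) / rho(y|x)), using log-probabilities."""
    return r - beta * (logp_pi - logp_rho)

def estimate_kl(log_ratios):
    """Sample mean of log(pi/rho) over samples drawn from pi."""
    return sum(log_ratios) / len(log_ratios)

# Hypothetical values: a reward-model score and the log-probabilities of one
# sampled continuation under the fine-tuned policy and the pretrained model.
print(penalized_reward(r=1.5, logp_pi=-20.0, logp_rho=-24.0, beta=0.1))

# Hypothetical per-sample log-ratios, in nats.
print(estimate_kl([4.0, 6.0, 5.0]))
```

A continuation that the policy finds much more likely than the pretrained model does has a large positive log-ratio, so its reward is reduced.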

Our overall training process is:

1. Gather samples $(x, y_0, y_1, y_2, y_3)$ via $x \sim D$, $y_i \sim \rho(\cdot \mid x)$. Ask humans to pick the best $y_i$ from each.

2. Initialize $r$ to $\rho$, using random initialization for the final linear layer of $r$. Train $r$ on the human samples using loss (1).

3. Train $\pi$ via Proximal Policy Optimization (PPO, Schulman et al. (2017)) with reward $R$ from (2) on $x \sim D$.

4. In the online data collection case, continue to collect additional samples, and periodically retrain the reward model $r$. This is described in section 2.3.

¹ In early experiments we found that it was hard for humans to provide consistent fine-grained quantitative distinctions when asked for an absolute number, and experiments on synthetic tasks confirmed that comparisons were almost as useful.

2.1. Pretraining details

We use a 774M parameter version of the GPT-2 language model in Radford et al. (2019) trained on their WebText dataset and their 50,257 token invertible byte pair encoding to preserve capitalization and punctuation (Sennrich et al., 2015). The model is a Transformer with 36 layers, 20 heads, and embedding size 1280 (Vaswani et al., 2017).

For stylistic continuation tasks we perform supervised fine-tuning of the language model to the BookCorpus dataset of Zhu et al. (2015) prior to RL fine-tuning; we train from scratch on WebText, supervised fine-tune on BookCorpus, then RL fine-tune to our final task. To improve sample quality, we use a temperature of $T < 1$ for all experiments; we modify the initial language model by dividing logits by $T$, so that future sampling and RL with $T = 1$ corresponds to a lower temperature for the unmodified pretrained model.
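The effect of dividing logits by $T < 1$ can be seen on a small example. The logits below are hypothetical; $T = 0.7$ is used only as an illustrative value below 1.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def apply_temperature(logits, T):
    """Fold the temperature into the model by dividing its logits by T."""
    return [z / T for z in logits]

logits = [2.0, 1.0, 0.0]  # hypothetical next-token logits
base = softmax(logits)
sharpened = softmax(apply_temperature(logits, T=0.7))

# With T < 1 the most likely token gains probability mass.
print(base[0], sharpened[0])
```

Because the division is baked into the initial model, later sampling at $T = 1$ from the modified model draws from the sharpened distribution.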

2.2. Fine-tuning details

Starting with the pretrained language model, the reward model is trained using the Adam optimizer (Kingma and Ba, 2014) with loss (1). The batch size is 8 for style tasks and 32 for summarization, and the learning rate is $1.77 \times 10^{-5}$ for both. We use a single epoch to avoid overfitting to the small amount of human data, and turn off dropout.

For training the policy $\pi$, we use the PPO2 version of Proximal Policy Optimization from Dhariwal et al. (2017). We use 2M episodes ($x, y$ pairs), $\gamma = 1$, four PPO epochs per batch with one minibatch each, and default values for the other parameters. We use batch size 1024 for style tasks and 512 for summarization. We do not use dropout for policy training. The learning rate was $1.41 \times 10^{-5}$ for style tasks and $7.07 \times 10^{-6}$ for summarization.

Models trained with different seeds and the same KL penalty $\beta$ sometimes end up with quite different values of $\text{KL}(\pi, \rho)$, making them hard to compare. To fix this, for some experiments we dynamically vary $\beta$ to target a particular value of $\text{KL}(\pi, \rho)$ using the log-space proportional controller

$$e_t = \text{clip}\left(\frac{\text{KL}(\pi_t, \rho) - \text{KL}_{\text{target}}}{\text{KL}_{\text{target}}},\ -0.2,\ 0.2\right)$$

$$\beta_{t+1} = \beta_t (1 + K_\beta e_t)$$

We used $K_\beta = 0.1$.
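The controller update can be sketched in a few lines. The starting $\beta$ and the measured KL values below are hypothetical; the clipping range and $K_\beta = 0.1$ are the ones stated in the text.

```python
def clip(value, low, high):
    """Clamp value to the closed interval [low, high]."""
    return max(low, min(high, value))

def update_beta(beta, kl, kl_target, k_beta=0.1):
    """One controller step: beta_{t+1} = beta_t * (1 + K_beta * e_t)."""
    error = clip((kl - kl_target) / kl_target, -0.2, 0.2)
    return beta * (1.0 + k_beta * error)

beta = 0.1                           # hypothetical starting coefficient
for measured_kl in [16.0, 12.0, 9.0, 8.0, 6.0]:  # hypothetical KL estimates in nats
    beta = update_beta(beta, measured_kl, kl_target=8.0)
    print(round(beta, 6))
```

With the error clipped to $\pm 0.2$ and $K_\beta = 0.1$, a single update changes $\beta$ by at most 2%, so the coefficient drifts toward the target rather than jumping.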

For supervised fine-tuning baselines, we fine-tune for 1 epoch on the CNN/Daily Mail and TL;DR training sets (for TL;DR we removed 30K examples to serve as a validation set). We decayed the learning rate to 0 with a cosine schedule; for the initial value, we swept over 8 log-linearly spaced options between $10^{-4}$ and $3 \times 10^{-4}$. We also experimented with different dropout rates, and found a rate of 0.1 to work best. We then chose the model with the best validation loss.
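The sweep and schedule can be illustrated as follows. This sketch assumes that both endpoints are included in the 8 options and that the schedule is a plain half-cosine with no warmup; neither detail is stated in the text, and the step count is hypothetical.

```python
import math

def log_spaced(low, high, count):
    """count values from low to high (inclusive), evenly spaced in log space."""
    step = (math.log(high) - math.log(low)) / (count - 1)
    return [math.exp(math.log(low) + i * step) for i in range(count)]

def cosine_decay(initial_lr, step, total_steps):
    """Learning rate that falls from initial_lr at step 0 to 0 at total_steps."""
    return 0.5 * initial_lr * (1.0 + math.cos(math.pi * step / total_steps))

initial_rates = log_spaced(1e-4, 3e-4, 8)
print([f"{lr:.3e}" for lr in initial_rates])

total_steps = 1000  # hypothetical number of optimizer steps in the single epoch
print(cosine_decay(initial_rates[0], 500, total_steps))
```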


2.3. Online data collection

If the trained policy $\pi$ is very different from the zero-shot policy $\rho$, the reward model will suffer a large distributional shift from training on samples from $\rho$ to evaluation on samples from $\pi$. To prevent this, we can collect human data throughout RL fine-tuning, continuously gathering new data by sampling from $\pi$ and retraining the reward model. As section 3 shows, online data collection was important for summarization but not for the simpler style tasks.

In the online case, we will choose a function $l(n)$ describing how many labels we want before beginning the $n$th PPO episode. Let $N_\pi = 2 \times 10^6$ be the total number of PPO episodes, $N_r^0 = l(0)$ be an initial number of human labels, and $N_r$ be the total number of human labels. We take

$$l(n) = N_r^0 + (N_r - N_r^0)\left(1 - (1 - n/N_\pi)^2\right)$$

We pause before the $n$th PPO episode if we have fewer than $l(n)$ labels. We send another batch of requests to the labelers if the total requests so far is less than $l(n) + 1000$, to ensure they have at least 1000 outstanding queries at any time. We train the reward model before the first PPO episode, and then retrain it 19 more times at evenly spaced values of $l(n)$. Each time we retrain we reinitialize $r$ to a random linear layer on top of $\rho$ and do a single epoch through the labels collected so far. The offline case is the limit $N_r = N_r^0$.
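The label schedule $l(n)$ front-loads labeling: the required count grows fastest early in training and flattens toward the end. The sketch below evaluates it for hypothetical values of $N_r^0$ and $N_r$; only $N_\pi = 2 \times 10^6$ is taken from the text.

```python
def labels_required(n, n_r0, n_r, n_pi=2_000_000):
    """l(n) = N_r^0 + (N_r - N_r^0) * (1 - (1 - n / N_pi)^2)."""
    frac = 1.0 - (1.0 - n / n_pi) ** 2
    return n_r0 + (n_r - n_r0) * frac

N_R0 = 1_000   # hypothetical initial number of labels
N_R = 60_000   # hypothetical total number of labels

for n in (0, 500_000, 1_000_000, 2_000_000):
    print(n, labels_required(n, N_R0, N_R))

# Offline case: N_r = N_r^0, so the requirement never grows.
print(labels_required(1_000_000, N_R0, N_R0))
```

Halfway through the PPO episodes the schedule already asks for three quarters of the additional labels, since $1 - (1 - 0.5)^2 = 0.75$.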

To estimate overall progress, we gather validation samples consisting of $x \sim D$; $y_0, y_1 \sim \rho(\cdot \mid x)$; $y_2, y_3 \sim \pi(\cdot \mid x)$ at a constant rate; human labels on these give how often $\pi$ beats $\rho$. Since validation samples are only used to evaluate the current $\pi$, we can add them to the training set for $r$. In order to estimate inter-labeler agreement, 5% of queries are answered 5 times by different labelers. Label counts in section 3 include validation samples and repeated labels.

2.4. Human labeling

We use Scale AI to collect labels. The Scale API accepts requests of the form $(x, y_0, y_1, y_2, y_3)$ and returns selections $b \in \{0, 1, 2, 3\}$. We describe the task to Scale through a combination of instructions (appendix A) and a dataset of about 100 example comparisons from the authors.

Unlike many tasks in ML, our queries do not have unambiguous ground truth, especially for pairs of similar outputs (which play a large role in our training process, since we train $r$ on pairs of labels sampled from a single policy $\pi$). This means that there is significant disagreement even between labelers who have a similar understanding of the task and are trying to rate consistently. On 4-way comparisons for sentiment and TL;DR summarization, authors of this paper agree about 60% of the time (vs. 25% for random guessing). This low rate of agreement complicates the quality control process for Scale; the authors agree with Scale labelers 38% of the time on sentiment and 46% of the time on TL;DR summarization. We give further details of the human data collection and quality evaluation in appendix B.

Figure 2: Learning curves for a 124M-parameter model with mock sentiment reward, targeting a KL of 8 nats. Lines and shaded areas show mean and range for 5 seeds. Early on the reward model sometimes speeds up training, a phenomenon also observed by Christiano et al. (2017).

For final evaluation of two models $A$ and $B$, we generate either 2-way comparisons between pairs $(a \sim A, b \sim B)$ or 4-way comparisons with quadruples $(a_0, a_1 \sim A, b_0, b_1 \sim B)$, randomize the order in which samples are presented, and present these comparisons to Scale. Evaluating the quality of a model trained by Scale using the same set of humans from Scale is perilous: it demonstrates that $r$ and $\pi$ have succeeded in fitting to the human reward, but does not show that those human evaluations capture what we really care about, and our models are incentivized to exploit idiosyncrasies of the labeling process. We include samples from our models so that readers can judge for themselves.

3. Experiments

In section 3.1.1, we test our approach to RL fine-tuning of language models by using a mock labeler (a sentiment model trained on a review classification problem) as a stand-in for human labels. We show that RL fine-tuning is effective at optimizing this complex but somewhat artificial reward. In section 3.1.2, we show that we can optimize language models from human preferences on stylistic continuation tasks (sentiment and physical descriptiveness) with very little data, and that in the sentiment case the results are preferred to optimizing the review sentiment model. In section 3.2 we apply RL fine-tuning to summarization on the CNN/Daily Mail and TL;DR datasets, show that the resulting models are essentially “smart copiers”, and discuss these results in the context of other summarization work.


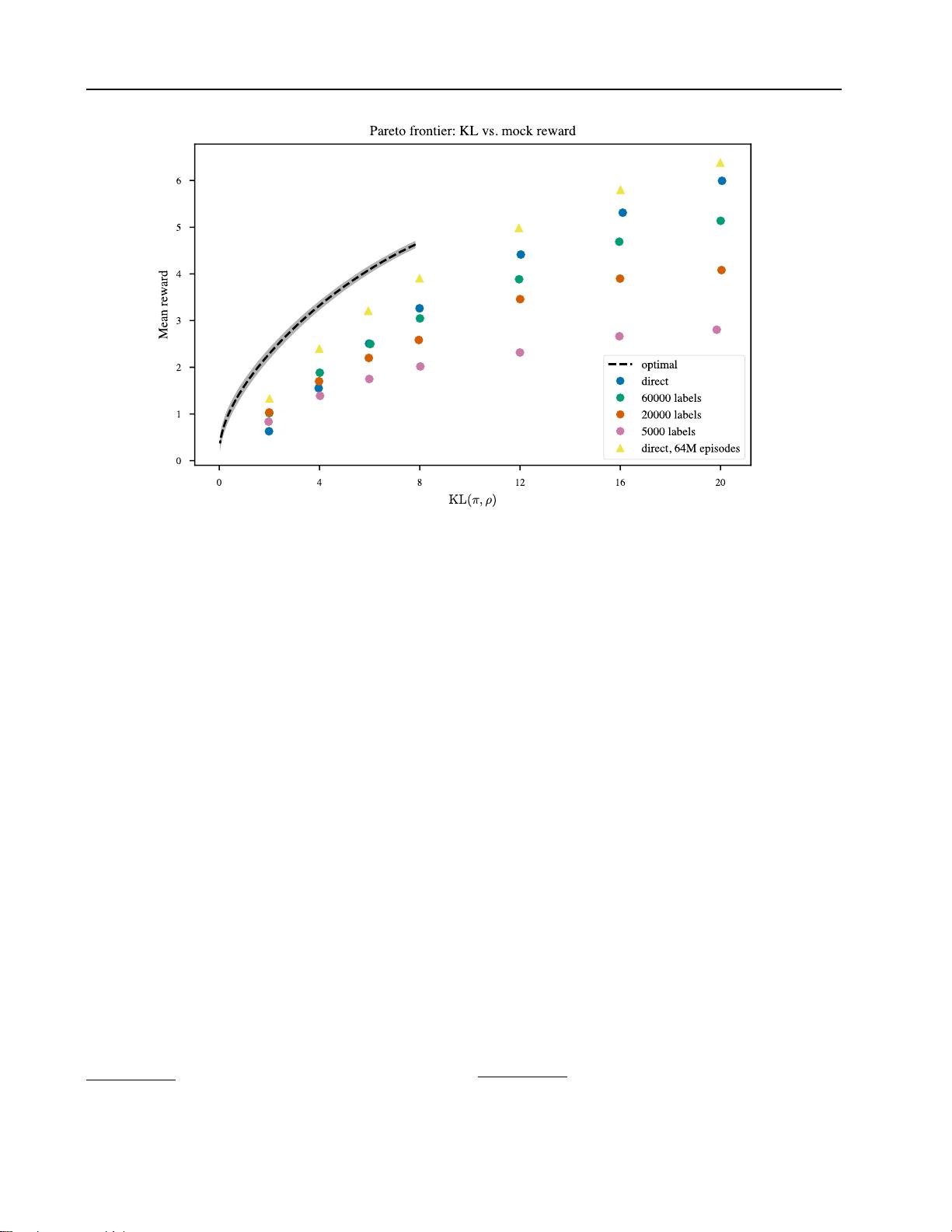

Figure 3: Allowing the policy $\pi$ to move further from the initial policy $\rho$ as measured by $\text{KL}(\pi, \rho)$ achieves higher reward at the cost of less natural samples. Here we show the optimal KL vs. reward for 124M-parameter mock sentiment (as estimated by sampling), together with results using PPO. Runs used 2M episodes, except for the top series.

We release code² for reward modeling and fine-tuning in the offline data case. Our public version of the code only works with a smaller 124M parameter model with 12 layers, 12 heads, and embedding size 768. We include fine-tuned versions of this smaller model, as well as some of the human labels we collected for our main experiments (note that these labels were collected from runs using the larger model).

3.1. Stylistic continuation tasks

We first apply our method to stylistic text continuation tasks, where the policy is presented with an excerpt from the BookCorpus dataset (Zhu et al., 2015) and generates a continuation of the text. The reward function evaluates the style of the concatenated text, either automatically or based on human judgments. We sample excerpts with lengths of 32 to 64 tokens, and the policy generates 24 additional tokens. We set the temperature of the pretrained model to $T = 0.7$ as described in section 2.1.

3.1.1. MOCK SENTIMENT TASK

To study our method in a controlled setting, we first apply it to optimize a known reward function $r_s$ designed to reflect some of the complexity of human judgments. We construct $r_s$ by training a classifier³ on a binarized, balanced subsample of the Amazon review dataset of McAuley et al. (2015). The classifier predicts whether a review is positive or negative, and we define $r_s(x, y)$ as the classifier’s log odds that a review is positive (the input to the final sigmoid layer). Optimizing $r_s$ without constraints would lead the policy to produce incoherent continuations, but as described in section 2.2 we include a KL constraint that forces it to stay close to a language model $\rho$ trained on BookCorpus.

² Code at https://github.com/openai/lm-human-preferences.

The goal of our method is to optimize a reward function using only a small number of queries to a human. In this mock sentiment experiment, we simulate human judgments by assuming that the “human” always selects the continuation with the higher reward according to $r_s$, and ask how many queries we need to optimize $r_s$.
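The simulated labeler described above reduces to an argmax over the rewards of the candidate continuations. The sketch below also shows the log-odds form used to define $r_s$; the classifier probabilities are hypothetical stand-ins for the output of the trained sentiment model.

```python
import math

def log_odds(p_positive):
    """Log odds of the positive class, i.e. the input to a final sigmoid layer."""
    return math.log(p_positive / (1.0 - p_positive))

def mock_label(rewards):
    """Index b of the continuation with the highest reward under r_s."""
    return max(range(len(rewards)), key=lambda i: rewards[i])

# Hypothetical classifier probabilities that each of four continuations is positive.
probabilities = [0.55, 0.10, 0.97, 0.70]
rewards = [log_odds(p) for p in probabilities]
print(mock_label(rewards))
```

Because log odds is a monotone function of the probability, the simulated labeler would make the same choice from the probabilities directly; the log-odds scale matters for the RL reward, not for the comparison.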

Figure 2 shows how $r_s$ evolves during training, using either direct RL access to $r_s$ or a limited number of queries to train a reward model. 20k to 60k queries allow us to optimize $r_s$ nearly as well as using RL to directly optimize $r_s$.

Because we know the reward function, we can also analytically compute the optimal policy and compare it to our learned policies. With a constraint on the KL divergence

³ The model is a Transformer with 6 layers, 8 attention heads, and embedding size 512.
