
Unconditional Quantile Regressions

Author(s): Sergio Firpo, Nicole M. Fortin and Thomas Lemieux

Source: Econometrica, Vol. 77, No. 3 (May, 2009), pp. 953-973

Published by: The Econometric Society

Stable URL: http://www.jstor.org/stable/40263848


Econometrica, Vol. 77, No. 3 (May, 2009), 953-973

UNCONDITIONAL QUANTILE REGRESSIONS

By Sergio Firpo, Nicole M. Fortin, and Thomas Lemieux¹

We propose a new regression method to evaluate the impact of changes in the distribution of the explanatory variables on quantiles of the unconditional (marginal) distribution of an outcome variable. The proposed method consists of running a regression of the (recentered) influence function (RIF) of the unconditional quantile on the explanatory variables. The influence function, a widely used tool in robust estimation, is easily computed for quantiles, as well as for other distributional statistics. Our approach, thus, can be readily generalized to other distributional statistics.

Keywords: Influence functions, unconditional quantile, RIF regressions, quantile regressions.

1. INTRODUCTION

In this paper, we propose a new computationally simple regression method to estimate the impact of changing the distribution of explanatory variables, $X$, on the marginal quantiles of the outcome variable, $Y$, or other functionals of the marginal distribution of $Y$. The method consists of running a regression of a transformation of the outcome variable, namely the (recentered) influence function defined below, on the explanatory variables. To distinguish our approach from commonly used conditional quantile regressions (Koenker and Bassett (1978), Koenker (2005)), we call our regression method an unconditional quantile regression.²

¹ We thank the co-editor and three referees for helpful suggestions. We are also indebted to Joe Altonji, Richard Blundell, David Card, Vinicius Carrasco, Marcelo Fernandes, Chuan Goh, Jinyong Hahn, Joel Horowitz, Guido Imbens, Shakeeb Khan, Roger Koenker, Thierry Magnac, Ulrich Müller, Geert Ridder, Jean-Marc Robin, Hal White, and seminar participants at CESG2005, UCL, CAEN-UFC, UFMG, Econometrics in Rio 2006, PUC-Rio, IPEA-RJ, SBE Meetings 2006, Tilburg University, Tinbergen Institute, KU Leuven, ESTE-2007, Harvard-MIT Econometrics Seminar, Yale, Princeton, Vanderbilt, and Boston University for useful comments on earlier versions of the manuscript. Fortin and Lemieux thank SSHRC for financial support. Firpo thanks CNPq for financial support. Usual disclaimers apply.

² The "unconditional quantiles" are the quantiles of the marginal distribution of the outcome variable $Y$. Using "marginal" instead of "unconditional" would be confusing, however, since we also use the word "marginal" to refer to the impact of small changes in covariates (marginal effects).

© 2009 The Econometric Society. DOI: 10.3982/ECTA6822

Empirical researchers are often interested in changes in the quantiles, denoted $q_\tau$, of the marginal (unconditional) distribution, $F_Y(y)$. For example, we may want to estimate the direct effect $dq_\tau(p)/dp$ of increasing the proportion of unionized workers, $p = \Pr[X = 1]$, on the $\tau$th quantile of the distribution of wages, where $X = 1$ if the worker is unionized and $X = 0$ otherwise. In the case of the mean $\mu$, the coefficient $\beta$ of a standard regression of $Y$ on $X$ is a measure of the impact of increasing the proportion of unionized workers on the mean wage, $d\mu(p)/dp$. As is well known, the same coefficient $\beta$ can also be interpreted as an impact on the conditional mean.³ Unfortunately, the coefficient $\beta_\tau$ from a single conditional quantile regression, $\beta_\tau = F_Y^{-1}(\tau \mid X = 1) - F_Y^{-1}(\tau \mid X = 0)$, is generally different from

$$\frac{dq_\tau(p)}{dp} = \frac{\Pr[Y > q_\tau \mid X = 1] - \Pr[Y > q_\tau \mid X = 0]}{f_Y(q_\tau)},$$

the effect of increasing the proportion of unionized workers on the $\tau$th quantile of the unconditional distribution of $Y$.⁴ A new approach is therefore needed to provide practitioners with an easy way to compute $dq_\tau(p)/dp$, especially when $X$ is not univariate and binary as in the above example.
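The gap between $\beta_\tau$ and $dq_\tau(p)/dp$ can be checked numerically. The sketch below is only an illustration under assumed numbers: the two normal wage distributions, their parameters, and all function names are hypothetical and do not come from the paper. It solves for the unconditional quantile of the two-group mixture, evaluates the closed-form expression for $dq_\tau(p)/dp$, confirms it against a finite difference in $p$, and compares it with the conditional quantile difference $\beta_\tau$.

```python
import math

# Hypothetical wage distributions (illustrative values, not from the paper):
# Y | X=0 ~ N(MU0, SD0^2) for non-union, Y | X=1 ~ N(MU1, SD1^2) for union.
MU0, SD0 = 0.0, 1.0
MU1, SD1 = 0.5, 0.5


def norm_cdf(y, mu, sd):
    return 0.5 * (1.0 + math.erf((y - mu) / (sd * math.sqrt(2.0))))


def norm_pdf(y, mu, sd):
    z = (y - mu) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2.0 * math.pi))


def mixture_cdf(y, p):
    """Unconditional CDF F_Y(y) when a share p of workers is unionized."""
    return p * norm_cdf(y, MU1, SD1) + (1.0 - p) * norm_cdf(y, MU0, SD0)


def solve_quantile(cdf, tau, lo=-20.0, hi=20.0):
    """Bisection for the root of cdf(q) = tau (cdf must be increasing)."""
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if cdf(mid) < tau:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def unconditional_effect(tau, p):
    """Closed form: (Pr[Y>q|X=1] - Pr[Y>q|X=0]) / f_Y(q) at q = q_tau(p)."""
    q = solve_quantile(lambda y: mixture_cdf(y, p), tau)
    f_y = p * norm_pdf(q, MU1, SD1) + (1.0 - p) * norm_pdf(q, MU0, SD0)
    surv1 = 1.0 - norm_cdf(q, MU1, SD1)
    surv0 = 1.0 - norm_cdf(q, MU0, SD0)
    return (surv1 - surv0) / f_y


def finite_difference_effect(tau, p, h=1e-5):
    """Central difference of q_tau(p) in p, as a check on the closed form."""
    q_hi = solve_quantile(lambda y: mixture_cdf(y, p + h), tau)
    q_lo = solve_quantile(lambda y: mixture_cdf(y, p - h), tau)
    return (q_hi - q_lo) / (2.0 * h)


def conditional_quantile_gap(tau):
    """beta_tau: difference between the two conditional tau-quantiles."""
    q1 = solve_quantile(lambda y: norm_cdf(y, MU1, SD1), tau)
    q0 = solve_quantile(lambda y: norm_cdf(y, MU0, SD0), tau)
    return q1 - q0


if __name__ == "__main__":
    tau, p = 0.1, 0.3
    print("closed form      :", unconditional_effect(tau, p))
    print("finite difference:", finite_difference_effect(tau, p))
    print("beta_tau         :", conditional_quantile_gap(tau))
```

Under these assumed parameters the first two numbers agree closely while the third differs, which is the point made in the text: a conditional quantile coefficient does not generally recover the effect on the unconditional quantile.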

Our approach builds upon the concept of the influence function (IF), a widely used tool in the robust estimation of statistical or econometric models. As its name suggests, the influence function $\mathrm{IF}(Y; \nu, F_Y)$ of a distributional statistic $\nu(F_Y)$ represents the influence of an individual observation on that distributional statistic. Adding back the statistic $\nu(F_Y)$ to the influence function yields what we call the recentered influence function (RIF). One convenient feature of the RIF is that its expectation is equal to $\nu(F_Y)$.⁵ Because influence functions can be computed for most distributional statistics, our method easily extends to other choices of $\nu$ beyond quantiles, such as the variance, the Gini coefficient, and other commonly used inequality measures.⁶
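The recentering property can be illustrated with two statistics whose influence functions are standard results (they are not derived in this excerpt): for the mean, $\mathrm{IF}(y) = y - \mu$, and for the variance, $\mathrm{IF}(y) = (y - \mu)^2 - \sigma^2$. The following minimal sketch, with hypothetical function names and sample analogues plugged in for $\mu$, checks that the sample average of each RIF returns the statistic itself.

```python
import numpy as np


def rif_mean(y):
    """RIF for the mean: mu + (y - mu) = y, so the RIF is the outcome itself."""
    return np.asarray(y, dtype=float).copy()


def rif_variance(y):
    """RIF for the variance: sigma^2 + ((y - mu)^2 - sigma^2) = (y - mu)^2.

    The sample mean is plugged in for mu (an assumption of this sketch).
    """
    y = np.asarray(y, dtype=float)
    return (y - y.mean()) ** 2


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    y = rng.exponential(size=1_000)
    # Averaging the RIF recovers the statistic (variance with ddof=0).
    print(rif_variance(y).mean(), np.var(y))
    print(rif_mean(y).mean(), y.mean())
```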

For the $\tau$th quantile, the influence function $\mathrm{IF}(Y; q_\tau, F_Y)$ is known to be equal to $(\tau - 1\{Y \le q_\tau\})/f_Y(q_\tau)$. As a result, $\mathrm{RIF}(Y; q_\tau, F_Y)$ is simply equal to $q_\tau + \mathrm{IF}(Y; q_\tau, F_Y)$. We call the conditional expectation of the $\mathrm{RIF}(Y; \nu, F_Y)$ modeled as a function of the explanatory variables, $E[\mathrm{RIF}(Y; \nu, F_Y) \mid X] = m_\nu(X)$, the RIF regression model.⁷ In the case of quantiles, $E[\mathrm{RIF}(Y; q_\tau, F_Y) \mid X] = m_\tau(X)$ can be viewed as an unconditional quantile regression. We show that the average derivative of the unconditional quantile regression, $E[m'_\tau(X)]$, corresponds to the marginal effect on the unconditional quantile of a small location shift in the distribution of covariates, holding everything else constant.

³ The conditional mean interpretation is the wage change that a worker would expect when her union status changes from non-unionized to unionized, or $\beta = E(Y \mid X = 1) - E(Y \mid X = 0)$. Since the unconditional mean is $\mu(p) = pE(Y \mid X = 1) + (1 - p)E(Y \mid X = 0)$, it follows that $d\mu(p)/dp = E(Y \mid X = 1) - E(Y \mid X = 0) = \beta$.

⁴ The expression for $dq_\tau(p)/dp$ is obtained by implicit differentiation applied to $F_Y(q_\tau) = p \cdot (\Pr[Y \le q_\tau \mid X = 1] - \Pr[Y \le q_\tau \mid X = 0]) + \Pr[Y \le q_\tau \mid X = 0]$.

⁵ Such a property is important in some situations, although for the marginal effects in which we are interested in this paper the recentering is not fundamental. In Firpo, Fortin, and Lemieux (2007b), the recentering is useful because it allows us to identify the intercept and perform Oaxaca-type decompositions at various quantiles.

⁶ See Firpo, Fortin, and Lemieux (2007b) for such regressions on the variance and Gini.

⁷ In the case of the mean, since the RIF is simply the outcome variable $Y$, a regression of $\mathrm{RIF}(Y; \mu)$ on $X$ is the same as an OLS regression of $Y$ on $X$.

Our proposed approach can be easily implemented as an ordinary least squares (OLS) regression. In the case of quantiles, the dependent variable in the regression is $\mathrm{RIF}(Y; q_\tau, F_Y) = q_\tau + (\tau - 1\{Y \le q_\tau\})/f_Y(q_\tau)$. It is easily computed by estimating the sample quantile $\hat{q}_\tau$, estimating the density $f_Y(\hat{q}_\tau)$ at that point $\hat{q}_\tau$ using kernel (or other) methods, and forming a dummy variable $1\{Y \le \hat{q}_\tau\}$, indicating whether the value of the outcome variable is below $\hat{q}_\tau$. Then we can simply run an OLS regression of this new dependent variable on the covariates, although we suggest more sophisticated estimation methods in Section 3.
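The three steps just described (sample quantile, density estimate at that quantile, indicator variable) followed by OLS can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function names are hypothetical, the Gaussian kernel and the normal-reference bandwidth are assumptions (the text only says "kernel (or other) methods"), and no standard errors are computed.

```python
import numpy as np


def kernel_density_at(y, point, bandwidth=None):
    """Gaussian kernel density estimate of f_Y at a single point.

    The default bandwidth is the normal-reference rule of thumb
    1.06 * sd * n^(-1/5), an assumption of this sketch.
    """
    y = np.asarray(y, dtype=float)
    n = y.size
    if bandwidth is None:
        bandwidth = 1.06 * y.std(ddof=1) * n ** (-0.2)
    z = (point - y) / bandwidth
    return np.exp(-0.5 * z ** 2).sum() / (n * bandwidth * np.sqrt(2.0 * np.pi))


def rif_quantile(y, tau, bandwidth=None):
    """RIF(y; q_tau) = q_tau + (tau - 1{y <= q_tau}) / f_Y(q_tau)."""
    y = np.asarray(y, dtype=float)
    q_hat = np.quantile(y, tau)                      # step 1: sample quantile
    f_hat = kernel_density_at(y, q_hat, bandwidth)   # step 2: density at q_hat
    below = (y <= q_hat).astype(float)               # step 3: indicator
    return q_hat + (tau - below) / f_hat


def rif_ols(y, X, tau):
    """OLS of the quantile RIF on a constant and X; returns [intercept, slopes...]."""
    rif = rif_quantile(y, tau)
    X = np.asarray(X, dtype=float)
    if X.ndim == 1:
        X = X[:, None]
    design = np.column_stack([np.ones(rif.size), X])
    coef, *_ = np.linalg.lstsq(design, rif, rcond=None)
    return coef


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.normal(size=20_000)
    y = 2.0 * x + rng.normal(size=20_000)   # simulated data, purely illustrative
    print(rif_ols(y, x, tau=0.5))
```

In this simulated linear model with a normal covariate, a location shift in $X$ moves every quantile of $Y$ by the slope, so the estimated coefficient should be close to 2; with other designs the linear RIF regression is only an approximation to $m_\tau(X)$.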

We view our approach as an important complement to the literature concerned with the estimation of quantile functions. However, unlike Imbens and Newey (2009), Chesher (2003), and Florens, Heckman, Meghir, and Vytlacil (2008), who considered the identification of structural functions defined from conditional quantile restrictions in the presence of endogenous regressors, our approach is concerned solely with parameters that capture changes in unconditional quantiles in the presence of exogenous regressors.

The structure of the paper is as follows. In the next section, we define the key object of interest, the "unconditional quantile partial effect" (UQPE), and show how RIF regressions for the quantile can be used to estimate the UQPE. We also link this parameter to the structural parameters of a general model and the conditional quantile partial effects (CQPE). The estimation issues are addressed in Section 3. Section 4 presents an empirical application of our method that illustrates well the difference between our method and conditional quantile regressions. We conclude in Section 5.

2. UNCONDITIONAL PARTIAL EFFECTS

2.1. General Concepts

We assume that $Y$ is observed in the presence of covariates $X$, so that $Y$ and $X$ have a joint distribution, $F_{Y,X}(\cdot, \cdot): \mathbb{R} \times \mathcal{X} \to [0, 1]$, and $\mathcal{X} \subset \mathbb{R}^k$ is the support of $X$. By analogy with a standard regression coefficient, our object of interest is the effect of a small increase in the location of the distribution of the explanatory variable $X$ on the $\tau$th quantile of the unconditional distribution of $Y$. We represent this small location shift in the distribution of $X$ in terms of the counterfactual distribution $G_X(x)$. By definition, the unconditional (marginal) distribution function of $Y$ can be written as

$$F_Y(y) = \int F_{Y|X}(y \mid X = x) \cdot dF_X(x). \tag{1}$$

Under the assumption that the conditional distribution $F_{Y|X}(\cdot)$ is unaffected by this small manipulation of the distribution of $X$, a counterfactual distribution of $Y$, $G_Y^*$, can be obtained by replacing $F_X(x)$ with $G_X(x)$:⁸

$$G_Y^*(y) = \int F_{Y|X}(y \mid X = x) \cdot dG_X(x). \tag{2}$$
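Equations (1) and (2) have a simple sample analogue when $X$ takes a few discrete values: average the within-group empirical CDFs of $Y$ using either the observed group shares (for $F_Y$) or counterfactual shares (for $G_Y^*$). The sketch below assumes a discrete covariate in which every listed value is observed at least once; the function name and arguments are hypothetical.

```python
import numpy as np


def counterfactual_cdf(y, x, new_probs, y_grid):
    """Sample analogue of equation (2) for a discrete covariate.

    y, x      : outcome and discrete covariate, same length
    new_probs : dict mapping each value of x to its counterfactual
                probability under G_X (should sum to one)
    y_grid    : points at which the CDF is evaluated

    The conditional CDFs F_{Y|X}(y | x) are held fixed at their empirical
    within-group values; only the weights on the groups change.
    """
    y = np.asarray(y, dtype=float)
    x = np.asarray(x)
    y_grid = np.asarray(y_grid, dtype=float)
    cdf = np.zeros(y_grid.size)
    for value, prob in new_probs.items():
        y_cell = y[x == value]  # assumes this group is non-empty
        cond_cdf = (y_cell[:, None] <= y_grid[None, :]).mean(axis=0)
        cdf += prob * cond_cdf
    return cdf


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.integers(0, 2, size=500)
    y = rng.normal(size=500) + 0.5 * x   # simulated data, purely illustrative
    grid = np.linspace(-3.0, 4.0, 8)
    observed = {0: np.mean(x == 0), 1: np.mean(x == 1)}
    print(counterfactual_cdf(y, x, observed, grid))            # equation (1)
    print(counterfactual_cdf(y, x, {0: 0.4, 1: 0.6}, grid))    # equation (2)
```

Passing the observed shares reproduces the ordinary empirical CDF of $Y$, which is equation (1); any other set of shares gives the counterfactual distribution of equation (2).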

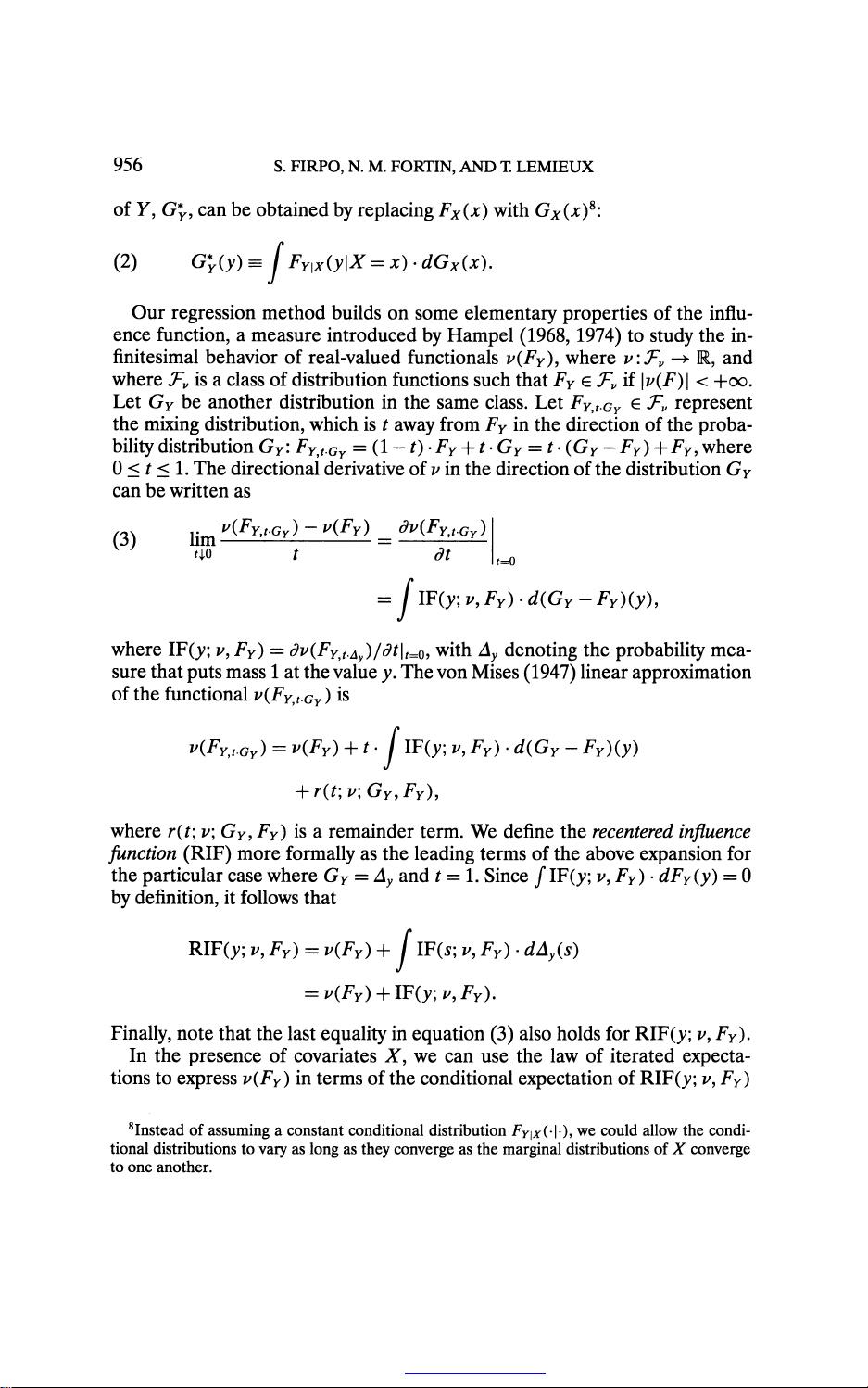

Our regression method builds on some elementary properties of the influence function, a measure introduced by Hampel (1968, 1974) to study the infinitesimal behavior of real-valued functionals $\nu(F_Y)$, where $\nu: \mathcal{F}_\nu \to \mathbb{R}$, and where $\mathcal{F}_\nu$ is a class of distribution functions such that $F_Y \in \mathcal{F}_\nu$ if $|\nu(F)| < +\infty$. Let $G_Y$ be another distribution in the same class. Let $F_{Y,t\cdot G_Y} \in \mathcal{F}_\nu$ represent the mixing distribution, which is $t$ away from $F_Y$ in the direction of the probability distribution $G_Y$: $F_{Y,t\cdot G_Y} = (1 - t) \cdot F_Y + t \cdot G_Y = t \cdot (G_Y - F_Y) + F_Y$, where $0 \le t \le 1$. The directional derivative of $\nu$ in the direction of the distribution $G_Y$ can be written as

$$\lim_{t \downarrow 0} \frac{\nu(F_{Y,t\cdot G_Y}) - \nu(F_Y)}{t} = \left.\frac{\partial \nu(F_{Y,t\cdot G_Y})}{\partial t}\right|_{t=0} = \int \mathrm{IF}(y; \nu, F_Y) \cdot d(G_Y - F_Y)(y), \tag{3}$$

where $\mathrm{IF}(y; \nu, F_Y) = \partial \nu(F_{Y,t\cdot \Delta_y})/\partial t\,|_{t=0}$, with $\Delta_y$ denoting the probability measure that puts mass 1 at the value $y$.

The von Mises (1947) linear approximation of the functional $\nu(F_{Y,t\cdot G_Y})$ is

$$\nu(F_{Y,t\cdot G_Y}) = \nu(F_Y) + t \cdot \int \mathrm{IF}(y; \nu, F_Y) \cdot d(G_Y - F_Y)(y) + r(t; \nu; G_Y, F_Y),$$

where $r(t; \nu; G_Y, F_Y)$ is a remainder term. We define the recentered influence function (RIF) more formally as the leading terms of the above expansion for the particular case where $G_Y = \Delta_y$ and $t = 1$. Since $\int \mathrm{IF}(y; \nu, F_Y) \cdot dF_Y(y) = 0$ by definition, it follows that

$$\mathrm{RIF}(y; \nu, F_Y) = \nu(F_Y) + \int \mathrm{IF}(s; \nu, F_Y) \cdot d\Delta_y(s) = \nu(F_Y) + \mathrm{IF}(y; \nu, F_Y).$$

Finally, note that the last equality in equation (3) also holds for $\mathrm{RIF}(y; \nu, F_Y)$.
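As a worked check of these definitions, consider the simplest functional, the mean $\nu(F) = \int s \, dF(s)$. The derivation below applies the definition of the influence function with the point mass $\Delta_y$; it is an illustration using standard algebra and is not reproduced from the paper.

```latex
\begin{align*}
\nu(F_{Y,t\cdot\Delta_y})
  &= \int s \, d\bigl[(1-t)\,F_Y + t\,\Delta_y\bigr](s)
   = (1-t)\,\mu + t\,y, \\
\mathrm{IF}(y;\mu,F_Y)
  &= \left.\frac{\partial \nu(F_{Y,t\cdot\Delta_y})}{\partial t}\right|_{t=0}
   = y - \mu, \\
\int \mathrm{IF}(y;\mu,F_Y)\, dF_Y(y)
  &= \int (y-\mu)\, dF_Y(y) = 0, \\
\mathrm{RIF}(y;\mu,F_Y)
  &= \mu + (y - \mu) = y .
\end{align*}
```

For the mean the expansion is exact (the remainder term is zero), and the RIF is the outcome itself, so a RIF regression for the mean reduces to an ordinary regression of $Y$ on $X$.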

In the presence of covariates $X$, we can use the law of iterated expectations to express $\nu(F_Y)$ in terms of the conditional expectation of $\mathrm{RIF}(y; \nu, F_Y)$

⁸ Instead of assuming a constant conditional distribution $F_{Y|X}(\cdot \mid \cdot)$, we could allow the conditional distributions to vary as long as they converge as the marginal distributions of $X$ converge to one another.
