TensorFlow: Large-Scale Machine Learning on Heterogeneous Distributed Systems

(Preliminary White Paper, November 9, 2015)

Martín Abadi, Ashish Agarwal, Paul Barham, Eugene Brevdo, Zhifeng Chen, Craig Citro, Greg S. Corrado, Andy Davis, Jeffrey Dean, Matthieu Devin, Sanjay Ghemawat, Ian Goodfellow, Andrew Harp, Geoffrey Irving, Michael Isard, Yangqing Jia, Rafal Jozefowicz, Lukasz Kaiser, Manjunath Kudlur, Josh Levenberg, Dan Mané, Rajat Monga, Sherry Moore, Derek Murray, Chris Olah, Mike Schuster, Jonathon Shlens, Benoit Steiner, Ilya Sutskever, Kunal Talwar, Paul Tucker, Vincent Vanhoucke, Vijay Vasudevan, Fernanda Viégas, Oriol Vinyals, Pete Warden, Martin Wattenberg, Martin Wicke, Yuan Yu, and Xiaoqiang Zheng

Google Research∗

Abstract

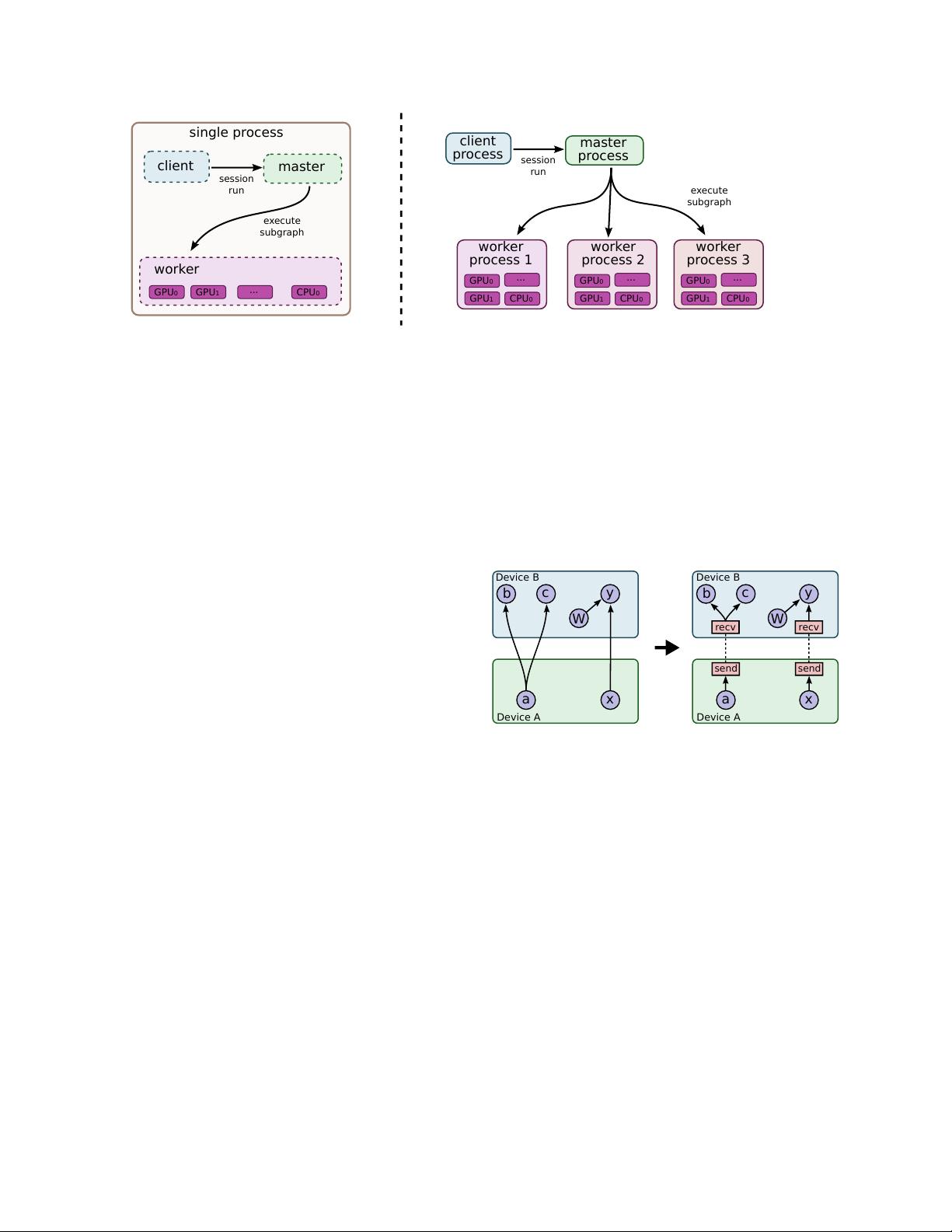

TensorFlow [1] is an interface for expressing machine learning algorithms, and an implementation for executing such algorithms. A computation expressed using TensorFlow can be executed with little or no change on a wide variety of heterogeneous systems, ranging from mobile devices such as phones and tablets up to large-scale distributed systems of hundreds of machines and thousands of computational devices such as GPU cards. The system is flexible and can be used to express a wide variety of algorithms, including training and inference algorithms for deep neural network models, and it has been used for conducting research and for deploying machine learning systems into production across more than a dozen areas of computer science and other fields, including speech recognition, computer vision, robotics, information retrieval, natural language processing, geographic information extraction, and computational drug discovery. This paper describes the TensorFlow interface and an implementation of that interface that we have built at Google. The TensorFlow API and a reference implementation were released as an open-source package under the Apache 2.0 license in November 2015 and are available at www.tensorflow.org.

1 Introduction

The Google Brain project started in 2011 to explore the use of very-large-scale deep neural networks, both for research and for use in Google’s products. As part of the early work in this project, we built DistBelief, our first-generation scalable distributed training and inference system [14], and this system has served us well. We and others at Google have performed a wide variety of research using DistBelief, including work on unsupervised learning [31], language representation [35, 52], models for image classification and object detection [16, 48], video classification [27], speech recognition [56, 21, 20], sequence prediction [47], move selection for Go [34], pedestrian detection [2], reinforcement learning [38], and other areas [17, 5]. In addition, often in close collaboration with the Google Brain team, more than 50 teams at Google and other Alphabet companies have deployed deep neural networks using DistBelief in a wide variety of products, including Google Search [11], our advertising products, our speech recognition systems [50, 6, 46], Google Photos [43], Google Maps and StreetView [19], Google Translate [18], YouTube, and many others.

∗ Corresponding authors: Jeffrey Dean and Rajat Monga: {jeff,rajatmonga}@google.com

Based on our experience with DistBelief and a more complete understanding of the desirable system properties and requirements for training and using neural networks, we have built TensorFlow, our second-generation system for the implementation and deployment of large-scale machine learning models. TensorFlow takes computations described using a dataflow-like model and maps them onto a wide variety of different hardware platforms, ranging from running inference on mobile device platforms such as Android and iOS, to modest-sized training and inference systems using single machines containing one or many GPU cards, to large-scale training systems running on hundreds of specialized machines with thousands of GPUs. Having a single system that can span such a broad range of platforms significantly simplifies the real-world use of machine learning systems, as we have found that having separate systems for large-scale training and small-scale deployment leads to significant maintenance burdens and leaky abstractions. TensorFlow computations are expressed as stateful dataflow graphs (described in more detail in Section 2), and we have focused on making the system both flexible enough for quickly experimenting with new models for research purposes and sufficiently high performance and robust for production training and deployment of machine learning models. For scaling neural network training to larger deployments, TensorFlow allows clients to easily express various kinds of parallelism through replication and parallel execution of a core model dataflow
