Distributed Adversarial Training to Robustify Deep Neural Networks at Scale

Gaoyuan Zhang (1,*), Songtao Lu (1,*), Yihua Zhang (2), Xiangyi Chen (3), Pin-Yu Chen (1), Quanfu Fan (1), Lee Martie (1), Lior Horesh (1), Mingyi Hong (3), Sijia Liu (1,2)

(1) IBM Research, Yorktown Heights, NY 10598

(2) Michigan State University, East Lansing, MI 48824

(3) University of Minnesota, Minneapolis, MN 55455

(*) Equal Contribution

Abstract

Current deep neural networks (DNNs) are vulnerable to adversarial attacks, where adversarial perturbations to the inputs can change or manipulate classification. To defend against such attacks, an effective and popular approach, known as adversarial training (AT), has been shown to mitigate the negative impact of adversarial attacks by virtue of a min-max robust training method. While AT is effective, it remains unclear whether it can be successfully adapted to the distributed learning context. The power of distributed optimization over multiple machines enables us to scale up robust training over large models and datasets. Spurred by that, we propose distributed adversarial training (DAT), a large-batch adversarial training framework implemented over multiple machines. We show that DAT is general: it supports training over labeled and unlabeled data, multiple types of attack generation methods, and gradient compression operations favored for distributed optimization. Theoretically, we provide, under standard conditions in optimization theory, the convergence rate of DAT to first-order stationary points in general non-convex settings. Empirically, we demonstrate that DAT either matches or outperforms state-of-the-art robust accuracies and achieves a graceful training speedup (e.g., on ResNet-50 under ImageNet). Code is available at https://github.com/dat-2022/dat.

1 INTRODUCTION

The rapid increase of research in DNNs and their adoption in practice is, in part, owed to the significant breakthroughs made with DNNs in computer vision [Alom et al., 2018]. Yet, despite the apparent power of DNNs, there remains a serious weakness: a lack of robustness. That is, DNNs can easily be manipulated by an adversary to output drastically different classifications, and this can be done in a controlled and directed way. This process is known as an adversarial attack and is considered one of the major hurdles to using DNNs in security-critical and real-world applications [Goodfellow et al., 2015, Szegedy et al., 2013, Carlini and Wagner, 2017, Papernot et al., 2016, Kurakin et al., 2016, Eykholt et al., 2018, Xu et al., 2019b].

Methods for training DNNs to be robust against adversarial attacks are now a major focus of research [Xu et al., 2019a]. But most of them are far from satisfactory [Athalye et al., 2018], with the exception of the adversarial training (AT) approach [Madry et al., 2017]. AT is a min-max robust training method that minimizes the worst-case training loss at adversarially perturbed examples. AT has inspired a wide range of state-of-the-art defenses [Zhang et al., 2019b, Sinha et al., 2018, Boopathy et al., 2020, Carmon et al., 2019, Shafahi et al., 2019, Zhang et al., 2019a], which ultimately resort to min-max optimization. However, compared with standard training, AT is more computationally intensive and is difficult to scale.
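For concreteness, the min-max training objective behind AT is commonly written in the following form. This is a sketch in assumed notation, since this section does not fix symbols: $\theta$ denotes the model parameters, $\mathcal{D}$ the data distribution, $\ell$ the training loss, $\delta$ the input perturbation, and $\epsilon$ the perturbation budget. The $\ell_\infty$ constraint is one common choice and is likewise an assumption here.

```latex
\min_{\theta} \;
\mathbb{E}_{(x, y) \sim \mathcal{D}}
\left[
  \max_{\|\delta\|_{\infty} \le \epsilon} \;
  \ell(\theta;\, x + \delta,\, y)
\right]
```

The inner maximization searches for the worst-case perturbation of each example, and the outer minimization fits the model to those perturbed examples. Solving the inner problem at every training step is what makes AT more expensive than standard training.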

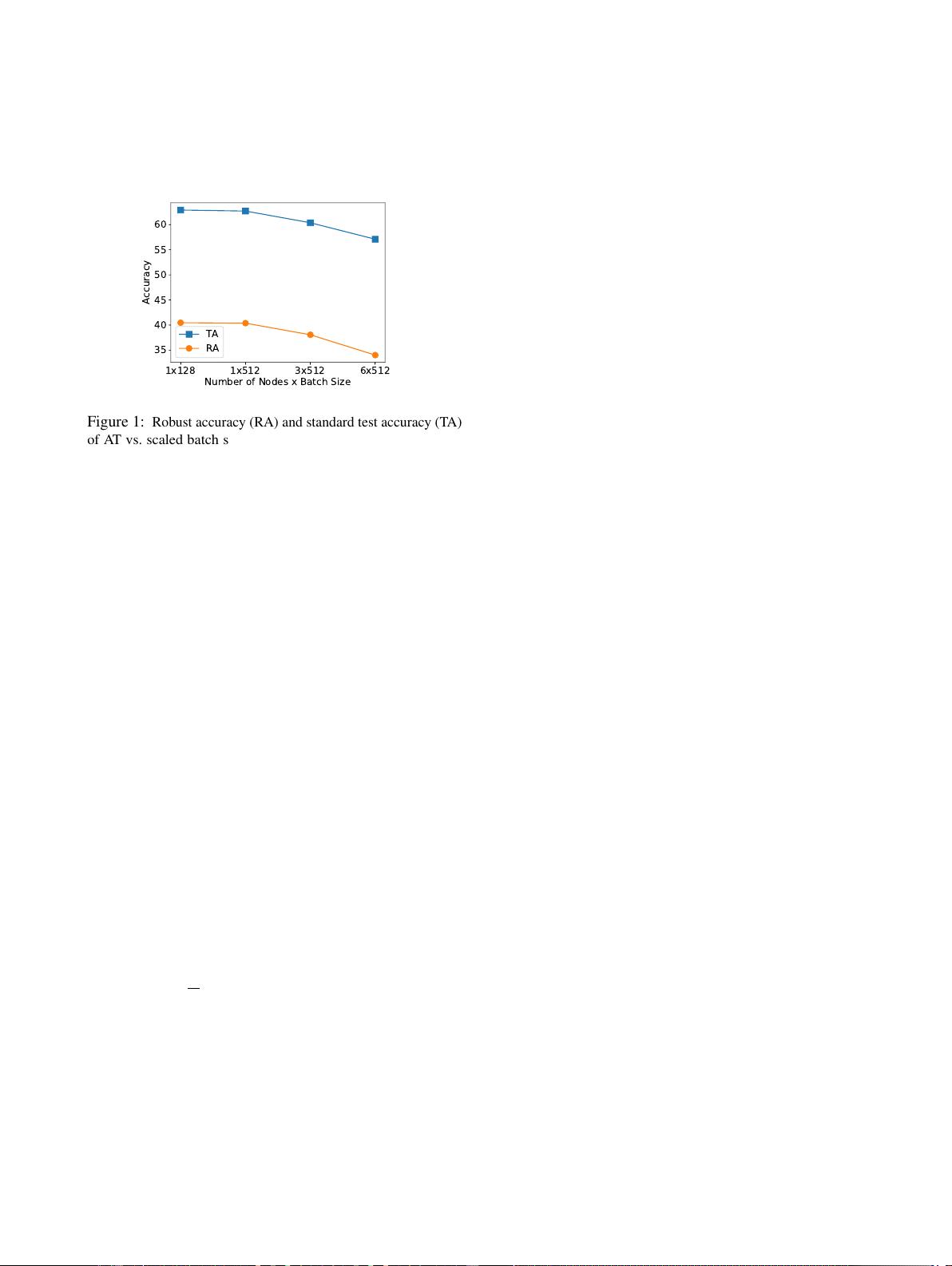

Motivation and challenges. First, although a 'fast' version of AT (which we call Fast AT) was developed in [Wong et al., 2020], where the iterative inner maximization solver is replaced by a simplified (single-step) solution, it may suffer from several problems compared to AT: unstable robust learning performance [Li et al., 2020], over-sensitivity to the learning rate schedule [Rice et al., 2020], and catastrophic forgetting of robustness against strong attacks [Andriushchenko and Flammarion, 2020]. As a result, AT is still the dominant robust training protocol across applications. Spurred by that, we propose DAT, a new approach to speed up AT by allowing the batch size to scale with distributed machines. Second, existing AT-type methods are generally built on centralized optimization. The need for AT in a distributed setting arises when centralized robust training becomes infeasible or ineffective. For example, training data are distributed as they

Accepted for the 38th Conference on Uncertainty in Artificial Intelligence (UAI 2022).

arXiv:2206.06257v1 [cs.LG] 13 Jun 2022