


PaLM 2 Technical Report

Google*

Abstract

We introduce PaLM 2, a new state-of-the-art language model that has better multilingual and reasoning capabilities and is more compute-efficient than its predecessor PaLM (Chowdhery et al., 2022). PaLM 2 is a Transformer-based model trained using a mixture of objectives similar to UL2 (Tay et al., 2023). Through extensive evaluations on English and multilingual language, and reasoning tasks, we demonstrate that PaLM 2 has significantly improved quality on downstream tasks across different model sizes, while simultaneously exhibiting faster and more efficient inference compared to PaLM. This improved efficiency enables broader deployment while also allowing the model to respond faster, for a more natural pace of interaction. PaLM 2 demonstrates robust reasoning capabilities exemplified by large improvements over PaLM on BIG-Bench and other reasoning tasks. PaLM 2 exhibits stable performance on a suite of responsible AI evaluations, and enables inference-time control over toxicity without additional overhead or impact on other capabilities. Overall, PaLM 2 achieves state-of-the-art performance across a diverse set of tasks and capabilities.

* See authorship section for a list of authors.


Contents

1 Introduction
2 Scaling law experiments
    2.1 Scaling laws
    2.2 Downstream metric evaluations
3 Training dataset
4 Evaluation
    4.1 Language proficiency exams
    4.2 Classification and question answering
    4.3 Reasoning
    4.4 Coding
    4.5 Translation
    4.6 Natural language generation
    4.7 Memorization
5 Responsible usage
    5.1 Inference-time control
    5.2 Recommendations for developers
6 Conclusion
A Detailed results
    A.1 Scaling laws
    A.2 Instruction tuning
    A.3 Multilingual commonsense reasoning
    A.4 Coding
    A.5 Natural language generation
B Examples of model capabilities
    B.1 Multilinguality
    B.2 Creative generation
    B.3 Coding
C Language proficiency exams
D Dataset language composition
E Responsible AI
    E.1 Dataset analysis
    E.2 Evaluation approach
    E.3 Dialog uses
    E.4 Classification uses
    E.5 Translation uses
    E.6 Question answering uses
    E.7 Language modeling
    E.8 Measurement quality rubrics
    E.9 CrowdWorksheets
    E.10 Model Card

1 Introduction

Language modeling has long been an important research area since Shannon (1951) estimated the information in language with next word prediction. Modeling began with n-gram based approaches (Kneser & Ney, 1995) but rapidly advanced with LSTMs (Hochreiter & Schmidhuber, 1997; Graves, 2014). Later work showed that language modeling also led to language understanding (Dai & Le, 2015). With increased scale and the Transformer architecture (Vaswani et al., 2017), large language models (LLMs) have shown strong performance in language understanding and generation capabilities over the last few years, leading to breakthrough performance in reasoning, math, science, and language tasks (Howard & Ruder, 2018; Brown et al., 2020; Du et al., 2022; Chowdhery et al., 2022; Rae et al., 2021; Lewkowycz et al., 2022; Tay et al., 2023; OpenAI, 2023b). Key factors in these advances have been scaling up model size (Brown et al., 2020; Rae et al., 2021) and the amount of data (Hoffmann et al., 2022). To date, most LLMs follow a standard recipe of mostly monolingual corpora with a language modeling objective.

We introduce PaLM 2, the successor to PaLM (Chowdhery et al., 2022), a language model unifying modeling advances, data improvements, and scaling insights. PaLM 2 incorporates the following diverse set of research advances:

• Compute-optimal scaling: Recently, compute-optimal scaling (Hoffmann et al., 2022) showed that data size is at least as important as model size. We validate this study for larger amounts of compute and similarly find that data and model size should be scaled roughly 1:1 to achieve the best performance for a given amount of training compute (as opposed to past trends, which scaled the model 3× faster than the dataset).

• Improved dataset mixtures: Previous large pre-trained language models typically used a dataset dominated by English text (e.g., ∼78% of non-code in Chowdhery et al. (2022)). We designed a more multilingual and diverse pre-training mixture, which extends across hundreds of languages and domains (e.g., programming languages, mathematics, and parallel multilingual documents). We show that larger models can handle more disparate non-English datasets without causing a drop in English language understanding performance, and apply deduplication to reduce memorization (Lee et al., 2021).

• Architectural and objective improvements: Our model architecture is based on the Transformer. Past LLMs have almost exclusively used a single causal or masked language modeling objective. Given the strong results of UL2 (Tay et al., 2023), we use a tuned mixture of different pre-training objectives in this model to train the model to understand different aspects of language.
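The 1:1 scaling rule in the first item above can be made concrete with a back-of-the-envelope sketch. It assumes the commonly used approximation that training compute is about 6·N·D FLOPs for N parameters and D training tokens, which this section does not state. The function names and the tokens-per-parameter ratio are hypothetical, chosen only for illustration.

```python
import math
from typing import Tuple

# Assumed heuristic (not from this section): C ≈ 6 * N * D FLOPs.
FLOPS_PER_PARAM_TOKEN = 6


def growth_under_equal_scaling(compute_multiplier: float) -> Tuple[float, float]:
    """Return (param_growth, token_growth) when N and D grow at the same rate.

    If C is proportional to N * D and both factors are scaled 1:1,
    each one grows as the square root of the compute multiplier.
    """
    growth = math.sqrt(compute_multiplier)
    return growth, growth


def split_budget(flops: float, tokens_per_param: float) -> Tuple[float, float]:
    """Split a FLOP budget into (params, tokens) at a fixed D/N ratio.

    tokens_per_param is a hypothetical input; no value is given in the text.
    """
    params = math.sqrt(flops / (FLOPS_PER_PARAM_TOKEN * tokens_per_param))
    tokens = tokens_per_param * params
    return params, tokens
```

Under these assumptions, a 100× larger compute budget implies roughly 10× more parameters and 10× more training tokens, rather than spending most of the extra compute on model size.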

The largest model in the PaLM 2 family, PaLM 2-L, is significantly smaller than the largest PaLM model but uses more training compute. Our evaluation results show that PaLM 2 models significantly outperform PaLM on a variety of tasks, including natural language generation, translation, and reasoning. These results suggest that model scaling is not the only way to improve performance. Instead, performance can be unlocked by meticulous data selection and efficient architecture/objectives. Moreover, a smaller but higher quality model significantly improves inference efficiency, reduces serving cost, and makes the model practical for more downstream applications and users.

PaLM 2 demonstrates significant multilingual language, code generation and reasoning abilities, which we illustrate in

Figures 2 and 3. More examples can be found in Appendix B.

1

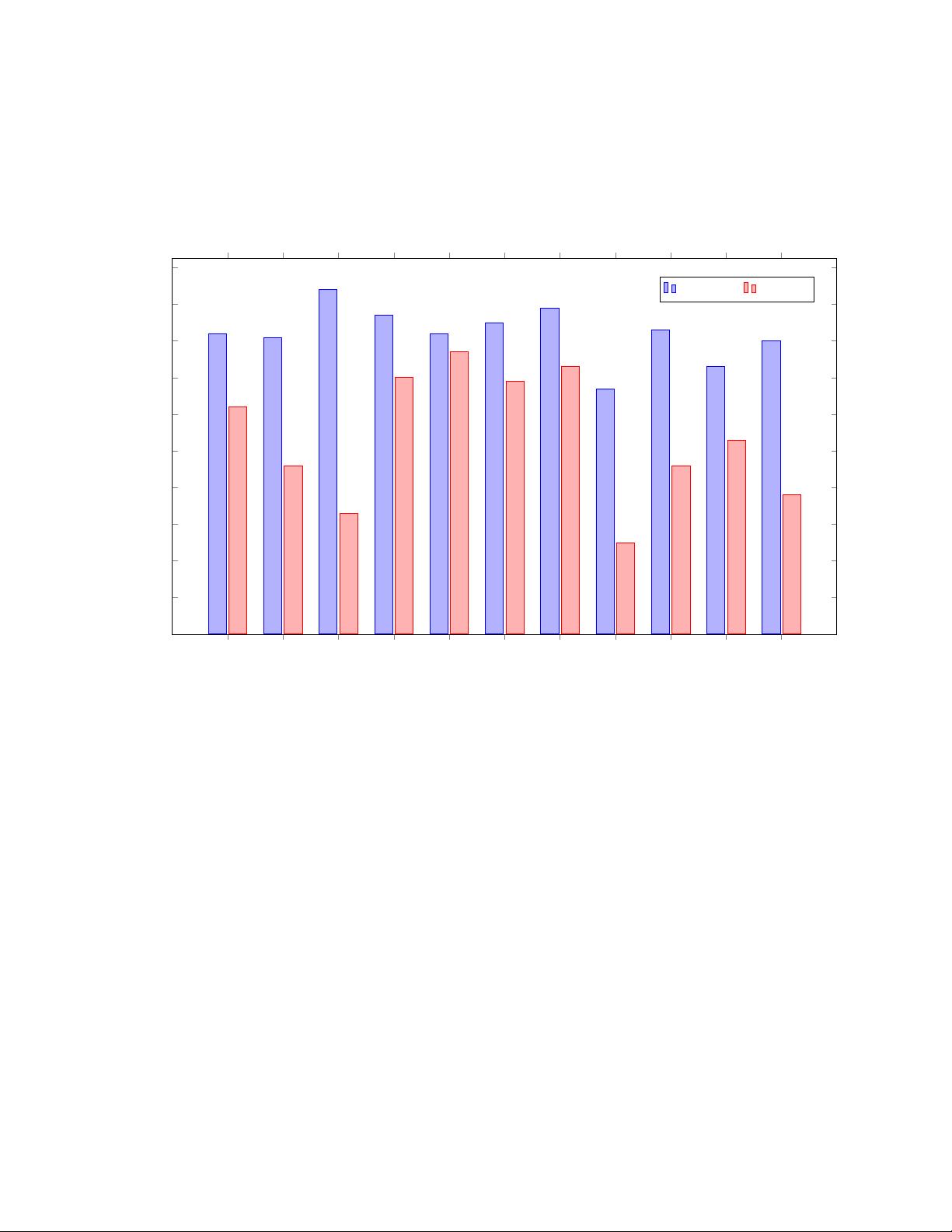

PaLM 2 performs significantly better than PaLM on

real-world advanced language proficiency exams and passes exams in all evaluated languages (see Figure 1). For some

exams, this is a level of language proficiency sufficient to teach that language. In this report, generated samples and

measured metrics are from the model itself without any external augmentations such as Google Search or Translate.

PaLM 2 includes control tokens to enable inference-time control over toxicity, modifying only a fraction of pre-training as compared to prior work (Korbak et al., 2023). Special ‘canary’ token sequences were injected into PaLM 2 pre-training data to enable improved measures of memorization across languages (Carlini et al., 2019, 2021). We find that PaLM 2 has lower average rates of verbatim memorization than PaLM, and for tail languages we observe that memorization rates increase above English only when data is repeated several times across documents. We show that PaLM 2 has improved multilingual toxicity classification capabilities, and evaluate potential harms and biases across a range of potential downstream uses. We also include an analysis of the representation of people in pre-training data. These sections help downstream developers assess potential harms in their specific application contexts (Shelby et al., 2023), so that they can prioritize additional procedural and technical safeguards earlier in development. The rest of this report focuses on describing the considerations that went into designing PaLM 2 and evaluating its capabilities.
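The verbatim-memorization comparison mentioned above can be sketched as a prompt-and-compare loop. This is an illustration under stated assumptions, not the report's evaluation code: `generate` is a hypothetical callable standing in for deterministic decoding from a model, documents are assumed to be lists of token ids, and the prompt and target lengths are placeholder defaults.

```python
from typing import Callable, List, Sequence


def verbatim_memorization_rate(
    documents: Sequence[Sequence[int]],
    generate: Callable[[Sequence[int], int], List[int]],
    prompt_len: int = 50,   # placeholder default, not taken from this section
    target_len: int = 50,   # placeholder default, not taken from this section
) -> float:
    """Fraction of eligible documents whose continuation is reproduced exactly."""
    eligible = 0
    memorized = 0
    for tokens in documents:
        if len(tokens) < prompt_len + target_len:
            continue  # too short to form a prompt plus a full target
        eligible += 1
        prompt = tokens[:prompt_len]
        target = list(tokens[prompt_len:prompt_len + target_len])
        # Count the document only if every target token matches.
        if list(generate(prompt, target_len)) == target:
            memorized += 1
    return memorized / eligible if eligible else 0.0
```

Running the same loop over documents grouped by language, or by how often a sequence is repeated in the training data, would give the kind of per-language and per-repetition comparison the paragraph describes.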

¹ Note that not all capabilities of PaLM 2 are currently exposed via PaLM 2 APIs.


[Chart: exam score in % (axis 0 to 100) for PaLM 2 and PaLM, each result marked Pass or Fail, covering HSK 7-9 Writing and Overall (Chinese), J-Test A-C Overall (Japanese), PLIDA C2 Writing and Overall (Italian), TCF Writing and Overall (French), DELE C2 Writing and Overall (Spanish), and Goethe-Zertifikat C2 Writing and Overall (German).]

Figure 1: Performance of PaLM 2 and PaLM on the latest available professional language proficiency exams. We used exams for each language to test a C2 (mastery or advanced professional) level proficiency following the CEFR definition. We used the transcripts of the listening portion of the exams and treated them as additional reading questions. We used the most recent year’s exam where available; otherwise we used practice exams representative of the full exam. Each writing exam was scored by three professional native speakers. The writing portion and the non-written portion were given equal weight in the final score. These were done under simulated conditions and are not official results. For more details, see Section 4.1 of this report. * PaLM 2 reached level A/special A on Japanese, whereas PaLM reached level F. PaLM 2 reached level C1 on French.


Fixing a bug with comments in Korean

can you fix this code with a bug and add line by line comments in Korean.

    visited = set()

    def dfs(visited, graph, node):
        if node not in visited:
            print(node)
            for neighbour in graph[node]:
                dfs(visited, graph, neighbour)

The bug in the code is that the visited set is not being updated. The code should be updated to add the current node to the visited set before calling the dfs function recursively. The updated code with Korean comments is as follows:

    # 방문한 노드의 집합을 만듭니다.
    visited = set()

    # 깊이 우선 탐색을 수행합니다.
    def dfs(visited, graph, node):
        # 현재 노드가 방문한 노드가 아니라면
        if node not in visited:
            # 현재 노드를 방문한 노드로 표시합니다.
            visited.add(node)
            # 현재 노드를 출력합니다.
            print(node)
            # 현재 노드의 인접 노드에 대해 깊이 우선 탐색을 수행합니다.
            for neighbour in graph[node]:
                dfs(visited, graph, neighbour)

Figure 2: Example of fixing a bug with line-by-line comments in Korean.
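The fix shown in Figure 2 matters most on graphs that contain cycles: if the current node is never added to the visited set, the recursion revisits the same nodes indefinitely. The following is a minimal sketch of that point, not part of the model output. It uses a hypothetical three-node cyclic graph, and it adds an `order` list parameter (an assumption made here) so that the visit order can be inspected instead of printed.

```python
def dfs(visited, graph, node, order):
    """Depth-first traversal that records each node once, in visit order."""
    if node not in visited:
        # Mark the node before recursing so that cycles terminate.
        visited.add(node)
        order.append(node)
        for neighbour in graph[node]:
            dfs(visited, graph, neighbour, order)


# Hypothetical cyclic graph: A -> B -> C -> A.
graph = {"A": ["B"], "B": ["C"], "C": ["A"]}
order = []
dfs(set(), graph, "A", order)
```

With the `visited.add(node)` line removed, the same call would recurse A, B, C, A, ... until Python raises a `RecursionError`.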
