Enhancing Underwater Images and Videos by Fusion

Cosmin Ancuti, Codruta Orniana Ancuti, Tom Haber and Philippe Bekaert

Hasselt University - tUL - IBBT, EDM, Belgium

Abstract

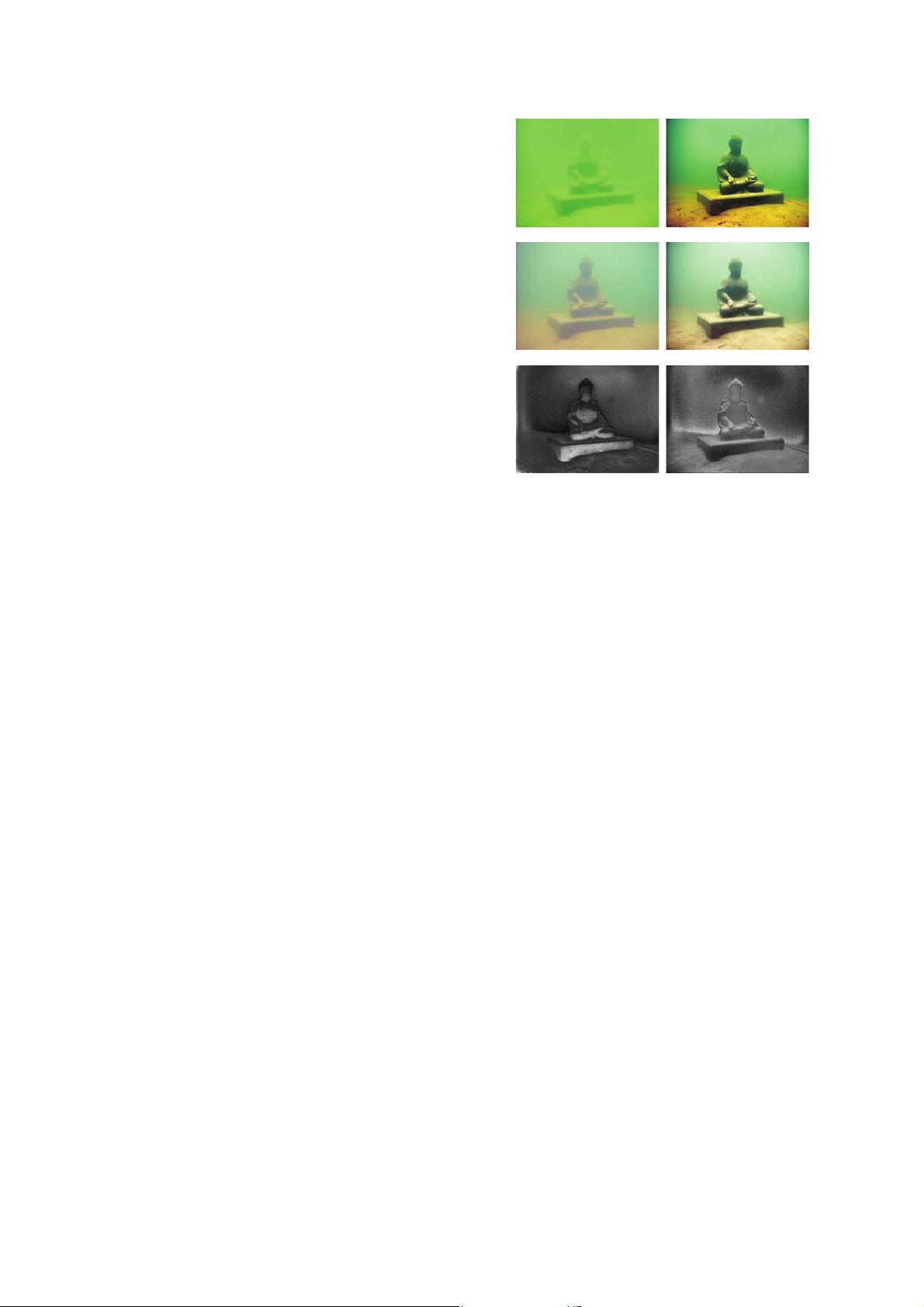

This paper describes a novel strategy to enhance underwater videos and images. Built on the fusion principles, our strategy derives the inputs and the weight measures only from the degraded version of the image. In order to overcome the limitations of the underwater medium we define two inputs that represent color corrected and contrast enhanced versions of the original underwater image/frame, but also four weight maps that aim to increase the visibility of the distant objects degraded due to the medium scattering and absorption. Our strategy is a single image approach that does not require specialized hardware or knowledge about the underwater conditions or scene structure. Our fusion framework also supports temporal coherence between adjacent frames by performing an effective edge preserving noise reduction strategy. The enhanced images and videos are characterized by reduced noise level, better exposedness of the dark regions, improved global contrast while the finest details and edges are enhanced significantly. In addition, the utility of our enhancing technique is proved for several challenging applications.

1. Introduction

Underwater imaging is challenging due to the physical properties existing in such environments. Different from common images, underwater images suffer from poor visibility due to the attenuation of the propagated light. The light is attenuated exponentially with the distance and depth mainly due to absorption and scattering effects. The absorption substantially reduces the light energy while the scattering causes changes in the light direction. The random attenuation of the light is the main cause of the foggy appearance while the fraction of the light scattered back from the medium along the line of sight considerably degrades the scene contrast. These properties of the underwater medium yield scenes characterized by poor contrast where distant objects appear misty. Practically, in common sea water, the objects at a distance of more than 10 meters are almost indistinguishable while the colors are faded since their characteristic wavelengths are cut according to the water depth.
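As a rough illustration of the exponential attenuation described above, the sketch below applies a simple exponential decay to each color channel. The per-channel coefficients are made-up placeholders, chosen only so that red fades fastest; they are not values reported in this paper, and the function names are hypothetical.

```python
import math

# Hypothetical attenuation coefficients (1/m); illustrative values only.
ATTENUATION_PER_METER = {"red": 0.40, "green": 0.07, "blue": 0.05}


def transmitted_fraction(coefficient, distance_m):
    """Fraction of light left after travelling distance_m (exponential decay)."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    return math.exp(-coefficient * distance_m)


def attenuate_color(rgb, distance_m):
    """Scale an (r, g, b) triple in [0, 1] by each channel's transmitted fraction."""
    channels = ("red", "green", "blue")
    return tuple(
        value * transmitted_fraction(ATTENUATION_PER_METER[name], distance_m)
        for name, value in zip(channels, rgb)
    )


if __name__ == "__main__":
    # Print how a white surface would fade with distance under these assumptions.
    for d in (0, 1, 5, 10):
        r, g, b = attenuate_color((1.0, 1.0, 1.0), d)
        print(f"{d:>2} m: r={r:.3f} g={g:.3f} b={b:.3f}")
```

Under these assumed coefficients the red channel vanishes long before green and blue, which is one way to picture why distant underwater objects look faded and color-shifted.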

There have been several attempts to restore and enhance the visibility of such degraded images. Mainly, the problem can be tackled by using multiple images [21], specialized hardware [15] and by exploiting polarization filters [25]. Despite their effectiveness in restoring underwater images, these strategies have several important issues that reduce their practical applicability. First, the hardware solutions (e.g. laser range-gated technology and synchronous scanning) are relatively expensive and complex. The multiple-image solutions require several images of the same scene taken in different environment conditions. Similarly, polarization methods process several images that have different degrees of polarization. While this is relatively feasible for outdoor hazy and foggy images, for the underwater case, the setup of the camera might be troublesome. In addition, these methods (except the hardware solutions) are not able to deal with dynamic scenes, thus being impractical for videos.

In this paper, we introduce a novel approach that is able to enhance underwater images based on a single image, as well as videos of dynamic scenes. Our approach is built on the fusion principle that has shown utility in several applications such as image compositing [14], multispectral video enhancement [6], defogging [2] and HDR imaging [20]. In contrast to these methods, our fusion-based approach does not require multiple images, deriving the inputs and the weights only from the original degraded image. We aim for a straightforward and computationally inexpensive approach that is able to perform relatively fast on common hardware.
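The single-image fusion idea can be pictured as a per-pixel weighted average of inputs that are all derived from the same degraded image. The snippet below is a minimal sketch under stated assumptions, not the pipeline of this paper: the helper `fuse` is hypothetical, images are tiny grayscale grids stored as nested lists, and the weight maps are taken as given rather than computed from the image.

```python
def fuse(inputs, weight_maps, eps=1e-6):
    """Blend same-sized grayscale images using per-pixel normalized weights.

    inputs and weight_maps are lists of 2-D nested lists, one weight map per input.
    eps keeps the normalization defined where all weights are zero.
    """
    if not inputs or len(inputs) != len(weight_maps):
        raise ValueError("need at least one input and one weight map per input")
    rows, cols = len(inputs[0]), len(inputs[0][0])
    fused = [[0.0] * cols for _ in range(rows)]
    for y in range(rows):
        for x in range(cols):
            # Normalize so the weights at this pixel sum to one.
            total = sum(w[y][x] for w in weight_maps) + eps * len(weight_maps)
            fused[y][x] = sum(
                (w[y][x] + eps) / total * img[y][x]
                for img, w in zip(inputs, weight_maps)
            )
    return fused


# Made-up 2x2 inputs standing in for two versions of the same degraded frame.
color_corrected = [[0.2, 0.4], [0.6, 0.8]]
contrast_enhanced = [[0.0, 1.0], [1.0, 0.0]]
# Made-up weight maps; larger values mean "trust this input more at this pixel".
w_color = [[1.0, 1.0], [0.0, 3.0]]
w_contrast = [[1.0, 0.0], [1.0, 1.0]]

result = fuse([color_corrected, contrast_enhanced], [w_color, w_contrast])
```

Normalizing the weights at each pixel keeps the fused intensity within the range spanned by the inputs, so no input can push the result out of scale.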

Since the degradation process of underwater scenes is both multiplicative and additive [26], traditional enhancing techniques like white balance, color correction and histogram equalization have shown strong limitations for such a task. Instead of directly filtering the input image, we developed a fusion-based scheme driven by the intrinsic properties of the original image (these properties are represented by the weight maps). The success of the fusion techniques is highly dependent on the choice of the inputs and the weights and therefore we investigate a set of operators in order to overcome limitations specific to underwater environments. As a result, in our framework the degraded image is firstly white balanced in order to remove the color

978-1-4673-1228-8/12/$31.00 ©2012 IEEE 81
