📚 This guide explains how to use **Weights & Biases** (W&B) with YOLOv5 🚀. UPDATED 29 September 2021.

* [About Weights & Biases](#about-weights--biases)

* [First-Time Setup](#first-time-setup)

* [Viewing Runs](#viewing-runs)

* [Disabling wandb](#disabling-wandb)

* [Advanced Usage: Dataset Versioning and Evaluation](#advanced-usage)

* [Reports: Share your work with the world!](#reports)

## About Weights & Biases

Think of [W&B](https://wandb.ai/site?utm_campaign=repo_yolo_wandbtutorial) like GitHub for machine learning models. With a few lines of code, save everything you need to debug, compare and reproduce your models: architecture, hyperparameters, git commits, model weights, GPU usage, and even datasets and predictions.

Used by top researchers including teams at OpenAI, Lyft, GitHub, and MILA, W&B is part of the new standard of best practices for machine learning. Here is how W&B can help you optimize your machine learning workflows:

* [Debug](https://wandb.ai/wandb/getting-started/reports/Visualize-Debug-Machine-Learning-Models--VmlldzoyNzY5MDk#Free-2) model performance in real time

* [GPU usage](https://wandb.ai/wandb/getting-started/reports/Visualize-Debug-Machine-Learning-Models--VmlldzoyNzY5MDk#System-4) visualized automatically

* [Custom charts](https://wandb.ai/wandb/customizable-charts/reports/Powerful-Custom-Charts-To-Debug-Model-Peformance--VmlldzoyNzY4ODI) for powerful, extensible visualization

* [Share insights](https://wandb.ai/wandb/getting-started/reports/Visualize-Debug-Machine-Learning-Models--VmlldzoyNzY5MDk#Share-8) interactively with collaborators

* [Optimize hyperparameters](https://docs.wandb.com/sweeps) efficiently

* [Track](https://docs.wandb.com/artifacts) datasets, pipelines, and production models

## First-Time Setup

<details open>

<summary> Toggle Details </summary>

When you first train, W&B will prompt you to create a new account and will generate an **API key** for you. If you are an existing user you can retrieve your key from https://wandb.ai/authorize. This key is used to tell W&B where to log your data. You only need to supply your key once, and then it is remembered on the same device.

W&B will create a cloud **project** (default is 'YOLOv5') for your training runs, and each new training run will be provided a unique run **name** within that project as project/name. You can also manually set your project and run name as:

```shell

$ python train.py --project ... --name ...

```
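If you launch training from a script rather than a terminal, the same command can be assembled programmatically. The following is a minimal sketch, assuming `train.py` sits in the current directory; `build_train_command` is a hypothetical helper written for this guide, not part of YOLOv5 or W&B.

```python
import shlex
import sys


def build_train_command(project, name, script="train.py"):
    """Return the argv list for a run with an explicit W&B project and run name.

    Hypothetical helper for illustration; only --project and --name come from
    the command shown above.
    """
    return [sys.executable, script, "--project", project, "--name", name]


cmd = build_train_command("YOLOv5", "exp-baseline")
# Print a copy-pasteable shell line. To actually train, pass `cmd` to
# subprocess.run(cmd, check=True) from inside the YOLOv5 directory.
print(shlex.join(cmd))
```

Building the command as a list, rather than a single string, avoids quoting problems when a project or run name contains spaces.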

YOLOv5 notebook example: <a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"></a> <a href="https://www.kaggle.com/ultralytics/yolov5"><img src="https://kaggle.com/static/images/open-in-kaggle.svg" alt="Open In Kaggle"></a>

<img width="960" alt="Screen Shot 2021-09-29 at 10 23 13 PM" src="https://user-images.githubusercontent.com/26833433/135392431-1ab7920a-c49d-450a-b0b0-0c86ec86100e.png">

</details>

## Viewing Runs

<details open>

<summary> Toggle Details </summary>

Run information streams from your environment to the W&B cloud console as you train. This allows you to monitor and even cancel runs in <b>real time</b>. All important information is logged:

* Training & Validation losses

* Metrics: Precision, Recall, mAP@0.5, mAP@0.5:0.95

* Learning Rate over time

* A bounding box debugging panel, showing the training progress over time

* GPU: Type, **GPU Utilization**, power, temperature, **CUDA memory usage**

* System: Disk I/O, CPU utilization, RAM usage

* Your trained model as a W&B Artifact

* Environment: OS and Python types, Git repository and state, **training command**

<p align="center"><img width="900" alt="Weights & Biases dashboard" src="https://user-images.githubusercontent.com/26833433/135390767-c28b050f-8455-4004-adb0-3b730386e2b2.png"></p>

</details>

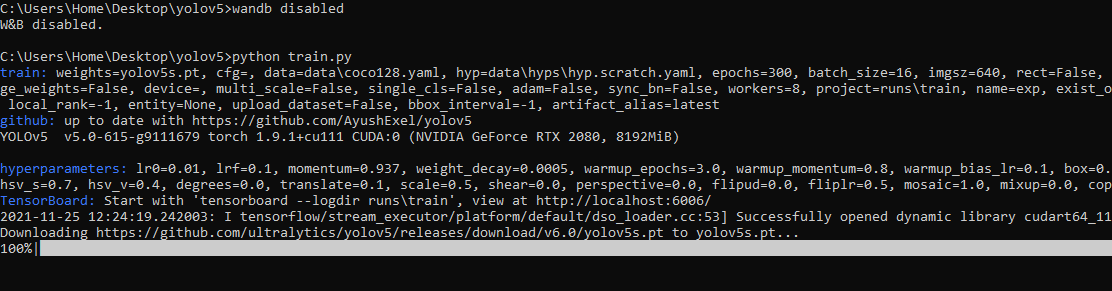

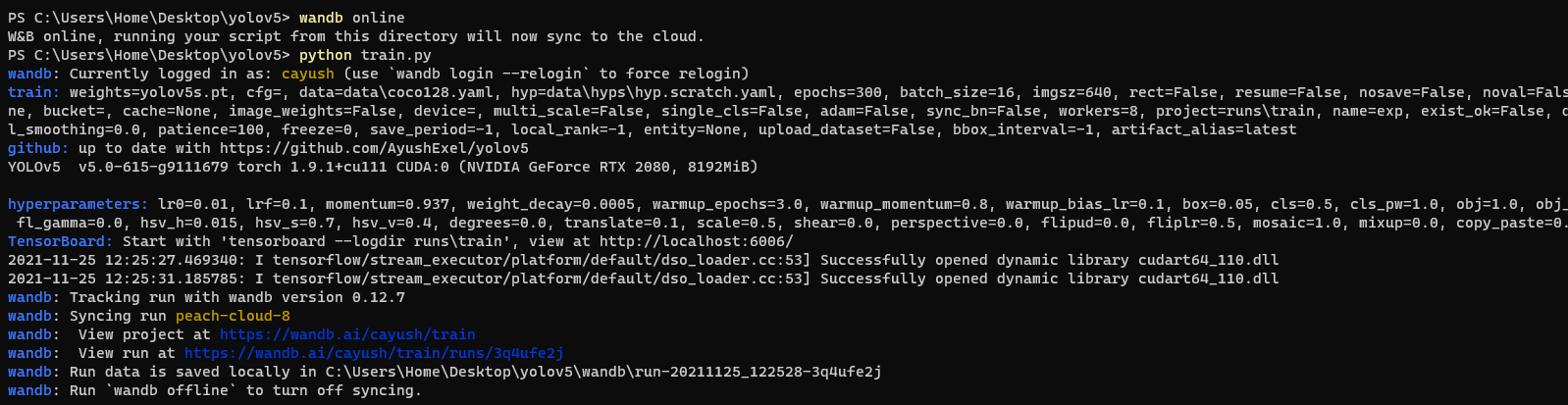

## Disabling wandb

* To disable wandb, run `wandb disabled` inside the directory you train from. Training runs started from that directory afterwards create no wandb run.

* To enable wandb again, run `wandb online`
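The wandb library also reads the `WANDB_MODE` environment variable (`online`, `offline` or `disabled`), which can be handy when the CLI is not available, for example in a job launcher. The sketch below prepares an environment for a child process; `env_with_wandb_mode` is a hypothetical helper, and it assumes the YOLOv5 logger defers to the wandb library for this setting.

```python
import os


def env_with_wandb_mode(mode, base_env=None):
    """Return a copy of the environment with WANDB_MODE set to `mode`.

    Hypothetical helper for illustration. The current process environment is
    copied unless `base_env` is given; nothing is modified in place.
    """
    allowed = {"online", "offline", "disabled"}
    if mode not in allowed:
        raise ValueError(f"mode must be one of {sorted(allowed)}, got {mode!r}")
    env = dict(os.environ if base_env is None else base_env)
    env["WANDB_MODE"] = mode
    return env


# Environment for a child process that should not create a W&B run.
child_env = env_with_wandb_mode("disabled", base_env={"PATH": "/usr/bin"})
print(child_env["WANDB_MODE"])
```

Pass the returned dictionary as `env=` to `subprocess.run` when starting training, so the setting applies to that run only.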

## Advanced Usage

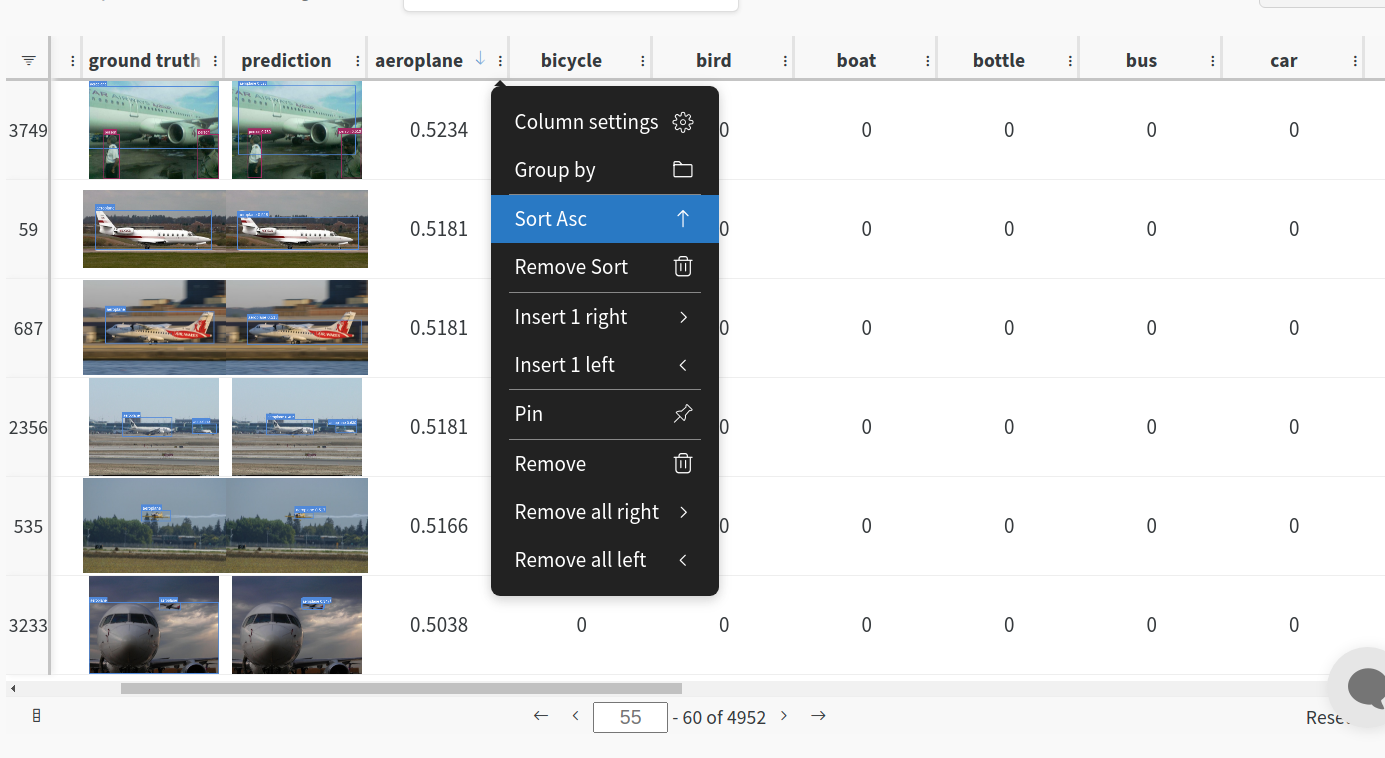

You can leverage W&B artifacts and Tables integration to easily visualize and manage your datasets, models and training evaluations. Here are some quick examples to get you started.

<details open>

<h3> 1: Train and Log Evaluation Simultaneously </h3>

This uploads the dataset and then starts training. <b> It also logs an evaluation Table. </b>

The evaluation Table compares your predictions and ground truths across the validation set for each epoch. It uses references to the already uploaded datasets, so no image is uploaded from your system more than once.

<details open>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --upload_dataset val</code>

</details>

<h3> 2: Visualize and Version Datasets </h3>

Log, visualize, dynamically query, and understand your data with <a href='https://docs.wandb.ai/guides/data-vis/tables'>W&B Tables</a>. You can use the following command to log your dataset as a W&B Table. This generates a <code>{dataset}_wandb.yaml</code> file, which can be used to train from the dataset artifact.

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python utils/loggers/wandb/log_dataset.py --project ... --name ... --data .. </code>

</details>
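The `{dataset}_wandb.yaml` naming convention can be sketched in a few lines. This is an illustration only: `wandb_config_name` is a hypothetical helper, and it assumes the generated file is placed next to the original config, which the text above does not state.

```python
from pathlib import Path


def wandb_config_name(data_yaml):
    """Map a dataset config path to its `_wandb` counterpart.

    Hypothetical helper: inserts `_wandb` before the file extension and keeps
    the directory unchanged (an assumption made for this sketch).
    """
    path = Path(data_yaml)
    return path.with_name(f"{path.stem}_wandb{path.suffix}").as_posix()


print(wandb_config_name("data/coco128.yaml"))
```

The returned path is the value you would pass to `--data` when training from the dataset artifact.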

<h3> 3: Train using dataset artifact </h3>

When you upload a dataset as described in section 2, you get a new config file with `_wandb` appended to its name. This file contains the information needed to train a model directly from the dataset artifact. <b> This also logs evaluation. </b>

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --data {data}_wandb.yaml </code>

</details>

<h3> 4: Save model checkpoints as artifacts </h3>

To enable saving and versioning checkpoints of your experiment, pass `--save_period n` with the base command, where `n` is the checkpoint interval.

You can also log both the dataset and model checkpoints simultaneously. If `--save_period` is not passed, only the final model is logged.

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --save_period 1 </code>

</details>

</details>

<h3> 5: Resume runs from checkpoint artifacts. </h3>

Any run can be resumed using artifacts if the <code>--resume</code> argument starts with the <code>wandb-artifact://</code> prefix followed by the run path, i.e., <code>wandb-artifact://username/project/runid</code>. This doesn't require the model checkpoint to be present on the local system.

<details>

<summary> <b>Usage</b> </summary>

<b>Code</b> <code> $ python train.py --resume wandb-artifact://{run_path} </code>

</details>
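The run path format described above is easy to get wrong by hand, so it can help to build and check it in code. A minimal sketch follows; `make_resume_uri` and `parse_resume_uri` are hypothetical helpers, and the three-part `username/project/runid` layout is taken from the example in this section.

```python
PREFIX = "wandb-artifact://"


def make_resume_uri(username, project, run_id):
    """Build the value passed to --resume from its three parts."""
    return f"{PREFIX}{username}/{project}/{run_id}"


def parse_resume_uri(uri):
    """Split a resume URI into (username, project, run_id) or raise ValueError."""
    if not uri.startswith(PREFIX):
        raise ValueError(f"expected a URI starting with {PREFIX!r}")
    parts = uri[len(PREFIX):].split("/")
    # Exactly three non-empty components are expected.
    if len(parts) != 3 or not all(parts):
        raise ValueError("expected username/project/runid after the prefix")
    return tuple(parts)


# "alice" and "1a2b3c" are placeholder values, not real account or run names.
uri = make_resume_uri("alice", "YOLOv5", "1a2b3c")
print(uri)
print(parse_resume_uri(uri))
```

Validating the URI before launching a job catches a missing prefix or run ID early, before any artifact download is attempted.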

<h3> 6: Resume runs from dataset artifact & checkpoint artifacts. </h3>

<b> Local dataset or model checkpoints are not required. This can be used to resume runs directly on a different device. </b>

The syntax is the same as in the previous section, but you'll need to log both the dataset and model checkpoints as artifacts, i.e., set both <code>--upload_dataset</code>
