
Introduction to Intel® Advanced Vector Extensions

By Chris Lomont

Intel® Advanced Vector Extensions (Intel® AVX) is a set of instructions for doing Single

Instruction Multiple Data (SIMD) operations on Intel® architecture CPUs. These instructions

extend previous SIMD offerings (MMX™ instructions and Intel® Streaming SIMD Extensions

(Intel® SSE)) by adding the following new features:

- The 128-bit SIMD registers have been expanded to 256 bits. Intel® AVX is designed to support 512 or 1024 bits in the future.

- Three-operand, nondestructive operations have been added. Previous two-operand instructions performed operations such as A = A + B, which overwrites a source operand; the new instructions can perform operations like A = B + C, leaving the original source operands unchanged.

- A few instructions take four-register operands, allowing smaller and faster code by removing unnecessary instructions.

- Memory alignment requirements for operands are relaxed.

- A new extension coding scheme (VEX) has been designed to make future additions easier as well as making coding of instructions smaller and faster to execute.

Closely related to these advances are the new Fused Multiply-Add (FMA) instructions, which allow faster and more accurate specialized operations such as the single instruction A = A * B + C. FMA is a separate extension with its own CPUID feature flag and is not part of the first Intel AVX implementation in the second-generation Intel® Core™ CPU; on Intel processors it first shipped with the fourth-generation Intel® Core™ (Haswell) microarchitecture. Other features include new instructions for dealing with Advanced Encryption Standard (AES) encryption and decryption, a packed carry-less multiplication operation (PCLMULQDQ) useful for certain encryption primitives, and some reserved slots for future instructions, such as a hardware random number generator.

Instruction Set Overview

The new instructions are encoded using what Intel calls a VEX prefix, which is a two- or three-byte prefix designed to clean up the complexity of current and future x86/x64 instruction encoding. The two new VEX prefixes are formed from two obsolete 32-bit instructions: Load Pointer Using ES (LES, opcode 0xC4, used for the three-byte form) and Load Pointer Using DS (LDS, opcode 0xC5, used for the two-byte form), which load the ES and DS segment registers in 32-bit mode. In 64-bit mode, the LES and LDS opcodes generate an invalid-opcode exception, so Intel® AVX repurposes them as instruction prefixes. In 32-bit protected mode the prefixes remain usable, because a VEX prefix is told apart from a real LES or LDS by the top two bits of the byte that follows, although only eight of the SIMD registers are then addressable; VEX-encoded instructions are not available in real mode or virtual-8086 mode. The prefixes allow encoding more registers than previous x86 instructions and are required for accessing the new 256-bit SIMD registers or using the three- and four-operand syntax. As a user, you do not need to worry about this (unless you’re writing assemblers or disassemblers).
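For readers who do write disassemblers, the following is a minimal Python sketch of how the two escape bytes 0xC4 and 0xC5 can be recognized and how the payload byte of the two-byte form can be split into fields. The field layout (inverted R, inverted vvvv, L, pp) follows Intel's published VEX description rather than this article, the function names are hypothetical, and the sketch assumes 64-bit mode, where these bytes cannot be legacy instructions.

```python
def vex_prefix_length(first_byte):
    """Return the VEX prefix length in bytes, or 0 if the byte is not a VEX escape."""
    if first_byte == 0xC4:
        return 3
    if first_byte == 0xC5:
        return 2
    return 0


def decode_vex2(prefix):
    """Split a two-byte VEX prefix, given as bytes([0xC5, payload]), into its fields."""
    if len(prefix) != 2 or prefix[0] != 0xC5:
        raise ValueError("not a two-byte VEX prefix")
    payload = prefix[1]
    return {
        # R and vvvv are stored inverted (one's complement) in the prefix.
        "R": ((payload >> 7) & 1) ^ 1,
        "vvvv": ((payload >> 3) & 0xF) ^ 0xF,  # number of the extra source register
        "vector_bits": 256 if (payload >> 2) & 1 else 128,  # L bit selects the width
        "pp": payload & 0b11,  # implied legacy prefix: 0 none, 1 0x66, 2 0xF3, 3 0xF2
    }


# C5 F4 58 C2 is the byte sequence assemblers emit for VADDPS ymm0, ymm1, ymm2;
# only the first two bytes form the VEX prefix.
fields = decode_vex2(bytes([0xC5, 0xF4]))
print(fields)
```

The three-byte form carries additional fields and is left out to keep the sketch short.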

Intel® Advanced Vector Extensions

23 May 2011

Note: The rest of this article assumes operation in 64-bit mode.

SIMD instructions allow processing of multiple pieces of data in a single step, speeding up

throughput for many tasks, from video encoding and decoding to image processing to data

analysis to physics simulations. Intel® AVX instructions work on Institute of Electrical and

Electronics Engineers (IEEE)-754 floating-point values in 32-bit length (called single precision)

and in 64-bit length (called double precision). IEEE-754 is the standard defining reproducible,

robust floating-point operation and is the standard for most mainstream numerical computations.

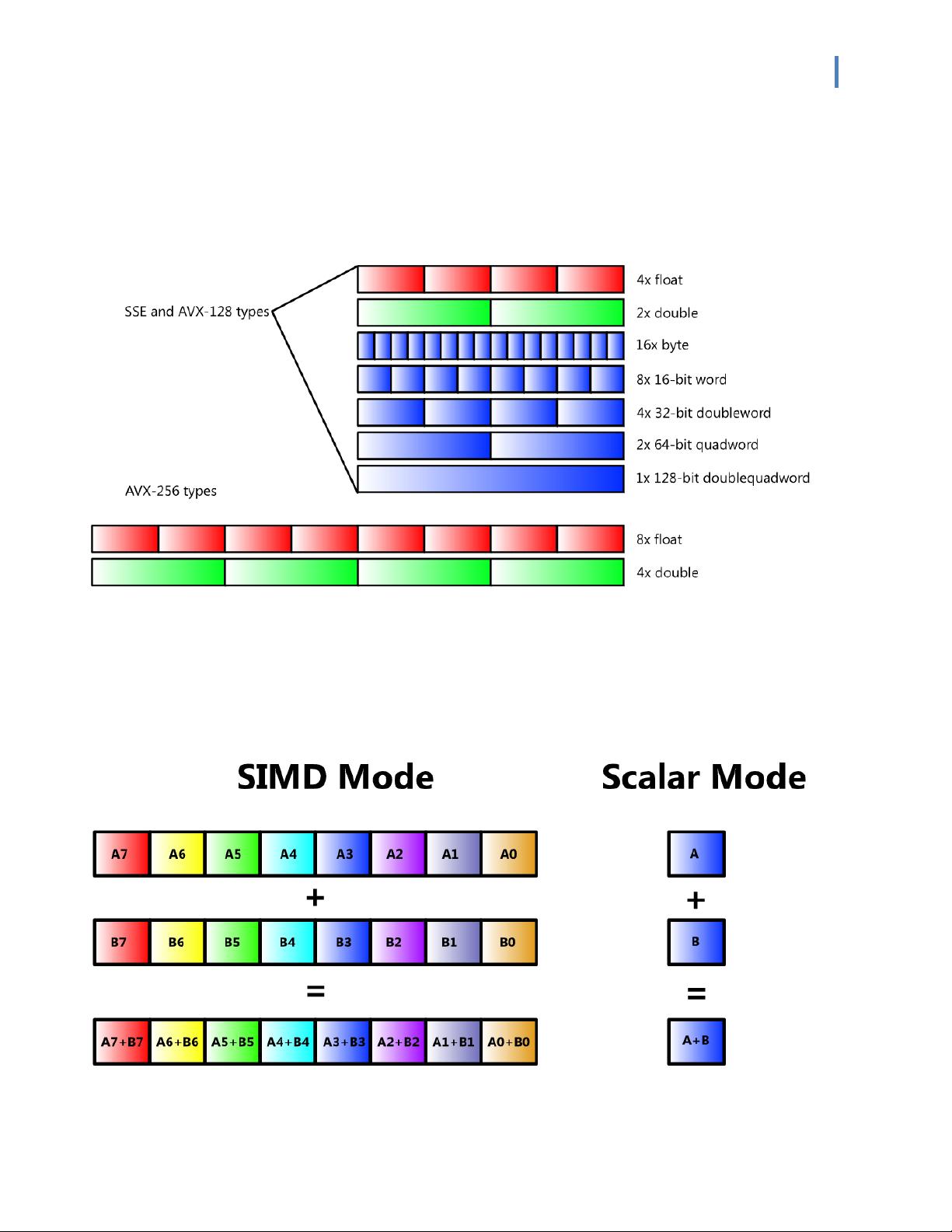

The older, related Intel® SSE instructions also support various signed and unsigned integer sizes,

including signed and unsigned byte (B, 8-bit), word (W, 16-bit), doubleword (DW, 32-bit),

quadword (QW, 64-bit), and double quadword (DQ, 128-bit) lengths. Not all instructions are

available in all size combinations; for details, see the links provided in “For More Information.” See

Figure 2 later in this article for a graphical representation of the data types.

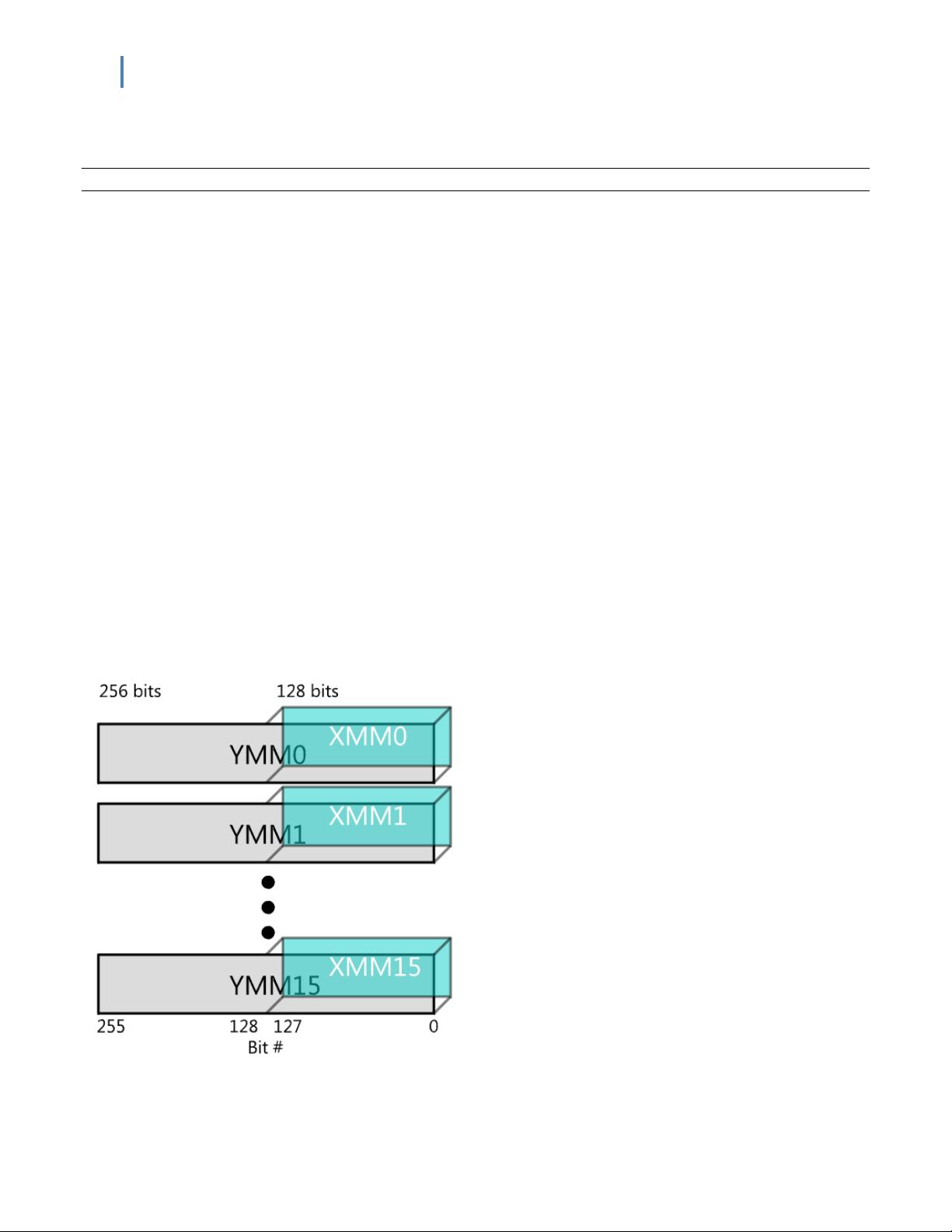

The hardware supporting Intel® AVX (and FMA) consists of sixteen 256-bit YMM registers (YMM0 through YMM15) and a 32-bit control/status register called MXCSR. The YMM registers are aliased over the older 128-bit XMM registers used for Intel SSE, treating the XMM registers as the lower half of the corresponding YMM register, as shown in Figure 1.
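The aliasing can be pictured by treating a YMM register as a 256-bit integer whose low 128 bits are the XMM view. The Python sketch below is only a model of that overlay, with hypothetical helper names; it does not touch real registers.

```python
XMM_MASK = (1 << 128) - 1  # selects bits 0..127


def xmm_view(ymm_value):
    """Return the low 128 bits, which is what the aliased XMM register holds."""
    return ymm_value & XMM_MASK


def upper_half(ymm_value):
    """Return bits 128..255, which are reachable only through the YMM name."""
    return ymm_value >> 128


# A made-up register value: upper half 0xAAAA, lower half 0x1234.
ymm0 = (0xAAAA << 128) | 0x1234
print(hex(xmm_view(ymm0)), hex(upper_half(ymm0)))
```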

Bits 0–5 of MXCSR indicate SIMD floating-point exceptions with “sticky” bits: after being set, they remain set until cleared using LDMXCSR or FXRSTOR. Bits 7–12 mask the individual exceptions when set; all six mask bits are set at power-up or reset. Bits 0–5 represent invalid operation, denormal, divide by zero, overflow, underflow, and precision, respectively, and bits 7–12 mask the same exceptions in the same order. For details, see the links in “For More Information.”
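The bit layout just described can be turned into a small decoder. This Python sketch is illustrative only: the function name is hypothetical, and the power-up value 0x1F80 (all six mask bits set, no flags) comes from Intel's documentation rather than from this article.

```python
EXCEPTIONS = [
    "invalid operation",
    "denormal",
    "divide by zero",
    "overflow",
    "underflow",
    "precision",
]

POWER_UP_MXCSR = 0x1F80  # assumed default: bits 7..12 set, everything else clear


def decode_mxcsr(value):
    """Return (raised_flags, masked_exceptions) as two lists of names."""
    raised = [name for i, name in enumerate(EXCEPTIONS) if value & (1 << i)]
    masked = [name for i, name in enumerate(EXCEPTIONS) if value & (1 << (7 + i))]
    return raised, masked


print(decode_mxcsr(POWER_UP_MXCSR))
# A value with the sticky divide-by-zero flag (bit 2) set in addition:
print(decode_mxcsr(POWER_UP_MXCSR | (1 << 2)))
```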

Figure 1. XMM registers overlay the YMM registers.

Figure 2 illustrates the data types used in the Intel® SSE and Intel® AVX instructions. Roughly, for Intel AVX, any packing of 32-bit or 64-bit floating-point values that fills 128 or 256 bits is allowed, as is any packing of a single integer type that fills 128 bits.

Figure 2. Intel® AVX and Intel® SSE data types
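The rule of thumb for data types reduces to simple arithmetic: the number of elements is the register width divided by the element width. The Python sketch below enumerates the packings that rule allows; the function names and tuple layout are illustrative choices, not anything defined by Intel.

```python
FLOAT_BITS = (32, 64)             # single and double precision
INT_BITS = (8, 16, 32, 64, 128)   # byte, word, doubleword, quadword, double quadword


def lane_count(register_bits, element_bits):
    """Number of elements of the given width that fill the register exactly."""
    if register_bits % element_bits:
        raise ValueError("element width does not divide the register width")
    return register_bits // element_bits


def avx_packings():
    """List (kind, element_bits, register_bits, lanes) following the stated rule."""
    packings = []
    for element_bits in FLOAT_BITS:
        for register_bits in (128, 256):
            lanes = lane_count(register_bits, element_bits)
            packings.append(("float", element_bits, register_bits, lanes))
    for element_bits in INT_BITS:
        # Integer types are limited to 128 bits in this generation of Intel AVX.
        packings.append(("int", element_bits, 128, lane_count(128, element_bits)))
    return packings


for packing in avx_packings():
    print(packing)
```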

Instructions often come in scalar and vector versions, as illustrated in Figure 3. Vector versions

operate by treating data in the registers in parallel “SIMD” mode; the scalar version only operates

on one entry in each register. This distinction allows less data movement for some algorithms,

providing better overall throughput.

Figure 3. SIMD versus scalar operations
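The difference between the two versions can be modeled with plain lists standing in for registers. In this Python sketch, which is a model and not real SIMD code, the scalar version follows the behavior of the three-operand scalar forms such as VADDSS, where the untouched upper elements are copied from the first source; that detail is taken from Intel's instruction reference rather than from this article.

```python
def packed_add(a, b):
    """Vector version: every element pair is added, as VADDPS does."""
    return [x + y for x, y in zip(a, b)]


def scalar_add(a, b):
    """Scalar version: only element 0 is added; the rest are copied from a."""
    return [a[0] + b[0]] + list(a[1:])


a = [1.0, 2.0, 3.0, 4.0]
b = [10.0, 20.0, 30.0, 40.0]
print(packed_add(a, b))
print(scalar_add(a, b))
```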

Data is memory aligned when data that is operated on as an n-byte chunk is stored at an address that is a multiple of n bytes. For example, when loading 256-bit data into a YMM register, the data is aligned if its address is a multiple of 32 bytes (256 bits).

For Intel® SSE operations, memory alignment was required unless explicitly stated. For example,

under Intel SSE, there were specific instructions for memory-aligned and memory-unaligned

operations, such as the MOVAPD (move-aligned packed double) and MOVUPD (move-unaligned

packed double) instructions. Instructions not split in two like this required aligned accesses.

Intel® AVX has relaxed some memory alignment requirements, so Intel AVX now allows unaligned access by default. Unaligned access may incur a performance penalty, however, so the old rule of designing your data to be memory aligned is still good practice (16-byte aligned for 128-bit access and 32-byte aligned for 256-bit access). The main exceptions are the VEX-extended versions of the SSE instructions that explicitly required memory-aligned data: these instructions still require aligned data. Other specific instructions requiring aligned access are listed in Table 2.4 of the Intel® Advanced Vector Extensions Programming Reference (see “For More Information” for a link).
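The alignment rule is a divisibility test on the address. A minimal Python sketch, using made-up addresses and hypothetical function names:

```python
def required_alignment(access_bits):
    """Recommended alignment in bytes for a 128-bit or 256-bit access."""
    if access_bits not in (128, 256):
        raise ValueError("only 128-bit and 256-bit accesses are modeled")
    return access_bits // 8


def is_aligned(address, access_bits):
    """True when the address is a multiple of the access size in bytes."""
    return address % required_alignment(access_bits) == 0


# 0x1010 is a multiple of 16 but not of 32.
print(is_aligned(0x1010, 128), is_aligned(0x1010, 256))
```

A check like this is useful in debug builds before calling code that uses the aligned move instructions.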

Another performance concern besides unaligned data issues is that mixing legacy XMM-only

instructions and newer Intel AVX instructions causes delays, so minimize transitions between

VEX-encoded instructions and legacy Intel SSE code. Said another way, do not mix VEX-prefixed

instructions and non–VEX-prefixed instructions for optimal throughput. If you must do so,

minimize transitions between the two by grouping instructions of the same VEX/non-VEX class.

Alternatively, there is no transition penalty if the upper YMM bits are set to zero via VZEROUPPER or

VZEROALL, which compilers should automatically insert. This insertion requires an extra

instruction, so profiling is recommended.

Intel® AVX Instruction Classes

As mentioned, Intel® AVX adds support for many new instructions and extends current Intel SSE

instructions to the new 256-bit registers, with most old Intel SSE instructions having a V-prefixed

Intel AVX version for accessing new register sizes and three-operand forms. Depending on how

instructions are counted, there are up to a few hundred new Intel AVX instructions.

For example, the old two-operand Intel SSE instruction ADDPS xmm1, xmm2/m128 can now be expressed in three-operand syntax as VADDPS xmm1, xmm2, xmm3/m128, or in 256-bit form as VADDPS ymm1, ymm2, ymm3/m256. A few instructions allow four operands, such as VBLENDVPS ymm1, ymm2, ymm3/m256, ymm4, which conditionally copies single-precision floating-point values from ymm2 or ymm3/m256 to ymm1 based on masks in ymm4. This is an improvement on the previous Intel SSE form, where xmm0 was implicitly used as the mask, requiring compilers to free up xmm0. Now, with all registers explicit, there is more freedom for register allocation. Here, m128 is a 128-bit memory location, xmm1 is a 128-bit register, and so on.
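The four-operand blend can be modeled element by element. In the Python sketch below, lists stand in for registers and the selection rule (take the second source where the sign bit of the mask element is set, otherwise the first source) follows Intel's instruction reference; the helper names are hypothetical.

```python
import struct


def float_bits(x):
    """Return the 32-bit pattern of x when stored as a single-precision float."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def blendv_ps(src1, src2, mask):
    """Model of VBLENDVPS: pick src2[i] where bit 31 of mask[i] is set, else src1[i]."""
    return [
        s2 if (float_bits(m) >> 31) & 1 else s1
        for s1, s2, m in zip(src1, src2, mask)
    ]


src1 = [1.0, 2.0, 3.0, 4.0]
src2 = [10.0, 20.0, 30.0, 40.0]
mask = [-1.0, 0.0, -0.0, 5.0]  # the sign bit is set in elements 0 and 2
print(blendv_ps(src1, src2, mask))
```

Both sources are left unchanged, which mirrors the nondestructive three- and four-operand forms.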

Some new instructions are VEX only (not Intel SSE extensions), including many ways to move data

into and out of the YMM registers. Examples are the useful VBROADCASTS[S/D], which loads a

single value into all elements of an XMM or YMM register, and ways to shuffle data around in a

register using VPERMILP[S/D]. (The bracket notation is explained in Appendix A.)
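Both operations are easy to model with lists. The Python sketch below is an approximation for illustration: the permute follows the immediate form of VPERMILPS as documented by Intel, in which each 2-bit field of the immediate selects an element within the same 128-bit lane and the same control is applied to every lane. The function names are hypothetical.

```python
def broadcast(value, lanes):
    """Model of VBROADCASTSS/VBROADCASTSD: copy one value into every element."""
    return [value] * lanes


def permilps_imm(src, imm8):
    """Model of VPERMILPS with an 8-bit immediate on 4 or 8 single-precision elements."""
    if len(src) % 4:
        raise ValueError("expected a whole number of 4-element lanes")
    out = []
    for base in range(0, len(src), 4):  # one 128-bit lane at a time
        for i in range(4):
            selector = (imm8 >> (2 * i)) & 0b11
            out.append(src[base + selector])
    return out


print(broadcast(1.5, 8))
# 0b00011011 reverses the four elements inside each lane.
print(permilps_imm([0, 1, 2, 3, 4, 5, 6, 7], 0b00011011))
```

Note that the permute never moves data across the 128-bit lane boundary.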

Intel® AVX adds arithmetic instructions for variants of add, subtract, multiply, divide, square root,

compare, min, max, and round on single- and double-precision packed and scalar floating-point

data. Many new conditional predicates are also useful for 128-bit Intel SSE, giving 32 comparison

types. Intel® AVX also includes instructions promoted from previous SIMD covering logical, blend,

convert, test, pack, unpack, shuffle, load, and store.

The toolset adds new instructions, as well, including non-strided fetching (broadcast of single or

multiple data into a 256-bit destination, masked-move primitives for conditional load and store),

insert and extract multiple-SIMD data to and from 256-bit SIMD registers, permute primitives to

manipulate data within a register, branch handling, and packed testing instructions.

Future Additions

The Intel® AVX manual also lists some proposed future instructions, covered here for

completeness. This is not a guarantee that these instructions will materialize as written.

Two instructions (VCVTPH2PS and VCVTPS2PH) are reserved for converting between 16-bit floating-point values and single-precision floating-point values. The 16-bit format is called half precision and has a 10-bit mantissa (with an implied leading 1 for non-denormalized numbers, resulting in 11-bit precision), a 5-bit exponent (biased by 15), and a 1-bit sign.
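The half-precision layout can be checked by decoding it by hand. The Python sketch below splits a 16-bit pattern into sign, exponent, and mantissa as described above and, as a cross-check, compares the result with the standard library's own half-precision support (the `e` format of `struct`). It models the number format only, not the conversion instructions.

```python
import struct


def decode_half(bits):
    """Decode a 16-bit half-precision pattern into a Python float."""
    sign = -1.0 if (bits >> 15) & 1 else 1.0
    exponent = (bits >> 10) & 0x1F   # 5 bits, biased by 15
    mantissa = bits & 0x3FF          # 10 stored bits
    if exponent == 0:
        # Denormalized: no implied leading 1, fixed scale of 2**-14.
        return sign * mantissa * 2.0 ** -24
    if exponent == 0x1F:
        return sign * float("inf") if mantissa == 0 else float("nan")
    # Normalized: implied leading 1 gives 11 bits of precision.
    return sign * (1 + mantissa / 1024) * 2.0 ** (exponent - 15)


def decode_half_reference(bits):
    """Same decoding via the standard library, used here only as a cross-check."""
    return struct.unpack("<e", struct.pack("<H", bits))[0]


for pattern in (0x3C00, 0xC000, 0x7BFF, 0x0001):
    print(hex(pattern), decode_half(pattern), decode_half_reference(pattern))
```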

The proposed RDRAND instruction uses a cryptographically secure hardware digital random bit generator to generate random numbers for 16-, 32-, and 64-bit registers. On success, the carry flag is set to 1 (CF=1). If not enough entropy is available, the carry flag is cleared (CF=0).
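Because the instruction can fail, callers are expected to test the carry flag and retry. The Python sketch below shows that control flow only: `rdrand_step` is a hypothetical stand-in that returns a (carry flag, value) pair, since Python cannot issue the instruction directly, and the retry limit of 10 is an arbitrary assumption.

```python
def read_random(rdrand_step, max_retries=10):
    """Call rdrand_step until it reports CF=1, or give up after max_retries tries."""
    for _ in range(max_retries):
        carry_flag, value = rdrand_step()
        if carry_flag == 1:
            return value
    raise RuntimeError("no random value available after retries")


def make_fake_rdrand(results):
    """Build a stand-in source that replays a fixed list of (CF, value) pairs."""
    iterator = iter(results)
    return lambda: next(iterator)


# Two failures (CF=0) followed by a success (CF=1).
source = make_fake_rdrand([(0, 0), (0, 0), (1, 0xDEADBEEF)])
print(hex(read_random(source)))
```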

Finally, there are four instructions (RDFSBASE, RDGSBASE, WRFSBASE, and WRGSBASE) to read and write the FS and GS base registers at all privilege levels in 64-bit mode.

Another future addition is the FMA instructions, which perform operations similar to

A = + A * B + C, where either of the plus signs (+) on the right can be changed to a minus sign (−)

and the three operands on the right can be in any order. There are also forms for interleaved

addition and subtraction. Packed FMA instructions can perform eight single-precision FMA

operations or four double-precision FMA operations with 256-bit vectors.

FMA operations such as A = A * B + C are better than performing one step at a time, because the intermediate product is kept at full precision and rounding is performed only once, on the final result, which makes the computation more accurate. This single rounding is what the word “fused” refers to. FMA operations are also faster than performing the computation in steps.
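The effect of the single rounding can be shown without FMA hardware by computing the exact result with rational arithmetic and rounding once. The Python sketch below is a model under that assumption, with hypothetical function names; the inputs are chosen so that the separately rounded product loses exactly the bit that the fused result keeps.

```python
from fractions import Fraction


def unfused_multiply_add(a, b, c):
    """Two roundings: one after the multiply, one after the add."""
    return a * b + c


def fused_multiply_add(a, b, c):
    """One rounding: the exact value of a*b + c is rounded to a double once."""
    exact = Fraction(a) * Fraction(b) + Fraction(c)
    return float(exact)


# a * b is exactly 1 - 2**-54, which is not representable as a double.
a = 1.0 + 2.0 ** -27
b = 1.0 - 2.0 ** -27
c = -1.0
print(unfused_multiply_add(a, b, c))
print(fused_multiply_add(a, b, c))
```

With these inputs the stepwise version loses the entire result, because the product rounds to 1.0 before the addition, while the fused model returns the small nonzero difference.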

Each instruction comes in three forms for the ordering of the operands A, B, and C, with the

ordering corresponding to a three-digit extension: form 132 does A = AC + B, form 213 does

A = BA + C, and form 231 does A = BC + A. The ordering number is just the order of the operands

on the right side of the expression.
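The three-digit naming can be checked mechanically by numbering the operands A, B, and C as 1, 2, and 3 and reading the digits as "first factor, second factor, addend". A small Python sketch with a hypothetical function name:

```python
def fma_form(form, a, b, c):
    """Evaluate the FMA operand ordering named by form: '132', '213', or '231'."""
    if form not in ("132", "213", "231"):
        raise ValueError("unknown FMA form")
    operands = {"1": a, "2": b, "3": c}
    first, second, addend = (operands[digit] for digit in form)
    return first * second + addend


# With A=2, B=3, C=5 the three forms give different results.
for form in ("132", "213", "231"):
    print(form, fma_form(form, 2, 3, 5))
```

In every form the result is written back to A; only the roles of the three inputs change.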
