
remote sensing

Article

A Real-Time Apple Targets Detection Method for Picking Robot Based on Improved YOLOv5

Bin Yan 1,2, Pan Fan 1,2, Xiaoyan Lei 1,2, Zhijie Liu 1,3 and Fuzeng Yang 1,3,4,*

Citation: Yan, B.; Fan, P.; Lei, X.; Liu, Z.; Yang, F. A Real-Time Apple Targets Detection Method for Picking Robot Based on Improved YOLOv5. Remote Sens. 2021, 13, 1619. https://doi.org/10.3390/rs13091619

Academic Editor: Gemine Vivone

Received: 20 March 2021

Accepted: 19 April 2021

Published: 21 April 2021

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Copyright: © 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

1 College of Mechanical and Electronic Engineering, Northwest A&F University, Yangling 712100, China; [email protected] (Z.L.)

2 Shaanxi Key Laboratory of Apple, Yangling 712100, China

3 Apple Full Mechanized Scientific Research Base of Ministry of Agriculture and Rural Affairs, Yangling 712100, China

4 State Key Laboratory of Soil Erosion and Dryland Farming on Loess Plateau, Yangling 712100, China

* Correspondence: [email protected]

Abstract: The apple target recognition algorithm is one of the core technologies of the apple picking robot. However, most existing apple detection algorithms cannot distinguish apples that are occluded by tree branches from apples that are occluded by other apples. If such an algorithm is applied directly to a picking robot, the apples, the grasping end-effector and the mechanical picking arm of the robot are very likely to be damaged. To address this practical problem and automatically recognize the graspable and ungraspable apples in an apple tree image, a light-weight apple target detection method for picking robots was proposed, based on an improved YOLOv5s. Firstly, the BottleneckCSP module was redesigned as the BottleneckCSP-2 module, which replaced the BottleneckCSP module in the backbone architecture of the original YOLOv5s network. Secondly, the SE module, which belongs to the visual attention mechanism network, was inserted into the improved backbone network. Thirdly, the bonding fusion mode of the feature maps that are input to the medium-size target detection layer of the original YOLOv5s network was improved. Finally, the initial anchor box sizes of the original network were improved. The experimental results indicated that the graspable apples, which were unoccluded or only occluded by tree leaves, and the ungraspable apples, which were occluded by tree branches or by other fruits, could be identified effectively using the proposed improved network model. Specifically, the recognition recall, precision, mAP and F1 were 91.48%, 83.83%, 86.75% and 87.49%, respectively. The average recognition time was 0.015 s per image. Compared with the original YOLOv5s, YOLOv3, YOLOv4 and EfficientDet-D0 models, the mAP of the proposed improved YOLOv5s model increased by 5.05%, 14.95%, 4.74% and 6.75%, respectively, and the size of the model was compressed by 9.29%, 94.6%, 94.8% and 15.3%, respectively. The average recognition speed per image of the proposed improved YOLOv5s model was 2.53, 1.13 and 3.53 times that of the EfficientDet-D0, YOLOv4 and YOLOv3 models, respectively. The proposed method can provide technical support for the real-time, accurate detection of multiple fruit targets for the apple picking robot.
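As a quick consistency check on the figures reported in the abstract, F1 is the harmonic mean of precision and recall. The sketch below recomputes it from the reported precision and recall and converts the reported time per image into an approximate throughput. The function name `f1_score` is illustrative and not taken from the paper.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (both given in percent)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values reported in the abstract.
reported_precision = 83.83   # percent
reported_recall = 91.48      # percent
seconds_per_image = 0.015

f1 = f1_score(reported_precision, reported_recall)
images_per_second = 1 / seconds_per_image

print(f"F1 = {f1:.2f}%")
print(f"throughput = {images_per_second:.1f} images per second")
```

The recomputed F1 agrees with the reported 87.49% to two decimal places, and 0.015 s per image corresponds to roughly 67 images per second.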

Keywords: artificial intelligence; convolutional neural network; YOLOv5; object detection; apple picking robot; lightweight; real-time detection

1. Introduction

Manual apple picking is a labor-intensive and time-consuming task. Therefore, in order to realize the efficient and automatic picking of apples, to ensure timely harvest of mature fruits, and to improve the competitiveness of the apple market, further study of the key technologies of the apple picking robot is essential [1,2]. The intelligent perception and acquisition of apple information is one of the most critical technologies for the apple picking robot, and belongs to the information perception of the front-end part of the robot. Therefore, to improve the apple picking efficiency of the robot, it is necessary to realize the rapid and accurate identification of apple targets on the tree.

A schematic diagram of the practical harvesting situation that the picking robot confronts in an apple orchard is shown in Figure 1. The robot can pick apples that are unoccluded or only occluded by leaves. However, apples that are occluded by branches or by other fruits cannot be harvested by the picking robot: if it attempts to pick them directly without accurate recognition, the apples, the grasping end-effector and the mechanical picking arm are very likely to be damaged, and the picking operation fails. Therefore, it is essential for the picking robot to automatically recognize which apple targets are graspable and which are ungraspable.


Figure 1. Schematic diagram of practical harvesting situation that picking robot confronts.

However, the existing apple target recognition algorithms have mainly focused on identifying apples in the complex orchard environment (leaf occlusion, branch occlusion, fruit occlusion, mixed occlusion, etc.), and recognize apples in all of these conditions as a single class. Therefore, they are not suitable for application by picking robots. A recognition algorithm for judging whether an apple can be harvested by a picking robot has not yet been studied.

With the development of artificial intelligence, the artificial neural network has in recent years been widely applied in many research fields. For example, in the field of economy, stacking and deep neural network models were deployed separately on feature-engineered and bootstrapped samples for estimating trends in the prices of underlying stocks during pre- and post-COVID-19 periods [3]; grey relational analysis (GRA) and artificial neural network models were utilized for the prediction of consumer exchange-traded funds (ETFs) [4]. In the field of industry, a relatively simple fuzzy logic-based solution for networked control systems was proposed using related ideas of neural networks [5]; a model for predicting the maximum energy generated by photovoltaic modules, based on fuzzy logic principles and artificial neural networks, was developed [6]; the intelligence of the flexible manufacturing system (FMS) was improved by combining Petri Net [7] and the artificial neural network [8]. In the field of agriculture, a new deep learning architecture called VddNet (Vine Disease Detection Network) was proposed for the detection of vine disease [9]; conifer seedlings in drone imagery were automatically detected using a neural network [10]; early blight disease was identified in real time for potato production systems, using machine vision in combination with deep learning [11].


When given sufficient data, the deep learning algorithm can generate and extrapolate new features without having to be explicitly told which features should be utilized and how they can be extracted [12–14]. CNNs (convolutional neural networks) are another variety of algorithm belonging to deep learning technology, which can provide insights into image-related datasets that we have not yet understood, achieving identification accuracies that sometimes surpass human-level performance [15–17]. One of the most important characteristics of utilizing CNNs in object detection is that the CNN can obtain essential features by itself. Furthermore, it can build and use more abstract concepts [18].

To date, there have been many studies on apple target recognition using deep learning technology. Many convolutional neural networks, such as YOLOv2 [19], YOLOv3 [20], LedNet [21], R-FCN [22], Faster R-CNN [23–26], Mask R-CNN [27], DaSNet [28] and DaSNet-v2 [29], were successfully used in apple target recognition. The relevant study status is shown in Table 1.

Table 1. Research on apple target recognition, based on deep learning technology. A "-" marks a value that is not reported.

| Networks Model | Precision (%) | Recall (%) | mAP (%) | F1 (%) | Average Detection Speed (s/pic) | Reference |
| --- | --- | --- | --- | --- | --- | --- |
| Improved YOLOv2 | - | - | 90 | - | 0.333 | [19] |
| Improved YOLOv3 | 97 | 90 | 87.71 | - | 0.01669 | [20] |
| LedNet | 85.3 | 82.1 | 82.6 | 83.4 | 0.028 | [21] |
| Improved R-FCN | 95.1 | 85.7 | - | 90.2 | 0.187 | [22] |
| Improved Faster R-CNN | 89.7 | 89.9 | 94.8 | 89.8 | 4.412 | [25] |
| Mask R-CNN | 85.7 | 90.6 | - | 88.1 | - | [27] |
| DaSNet | - | - | 83.6 | 83.2 | 0.072 | [28] |
| DaSNet-v2 | 87.3 | 86.8 | 88 | 87.3 | 0.437 | [29] |
| Faster R-CNN (VGG16) | - | - | 89.3 | - | 0.181 | [23] |
| Faster R-CNN (VGG16) | - | - | 87.9 | - | 0.241 | [24] |
| Faster R-CNN (VGG19) | - | - | 82.4 | 86 | 0.45 | [26] |

However, no research work has been reported on a light-weight apple target recognition algorithm that classifies apples into two categories: graspable (not occluded or only occluded by leaves) and ungraspable (other conditions).

On the other hand, across the studies of apple target recognition based on deep learning, although the recognition accuracy of most existing apple detection models was high, the real-time performance of many of them was insufficient because of their high complexity, large number of parameters and large size. Therefore, it is essential to design a light-weight apple target detection algorithm that satisfies the picking robot's requirement for real-time recognition while ensuring the accuracy of fruit recognition.

In this study, the fruit of the apple tree was used as the research object. A light-weight, real-time apple target recognition algorithm for picking robots, based on an improved YOLOv5s, was proposed. It automatically recognizes which apples in an apple tree image can be grasped by the picking robot and which are ungraspable. The proposed method can provide technical support for the real-time, accurate detection of multiple fruit targets for the apple picking robot.

2. Materials and Methods

2.1. Apple Images Acquisition

2.1.1. Materials and Image Data Acquisition Methods

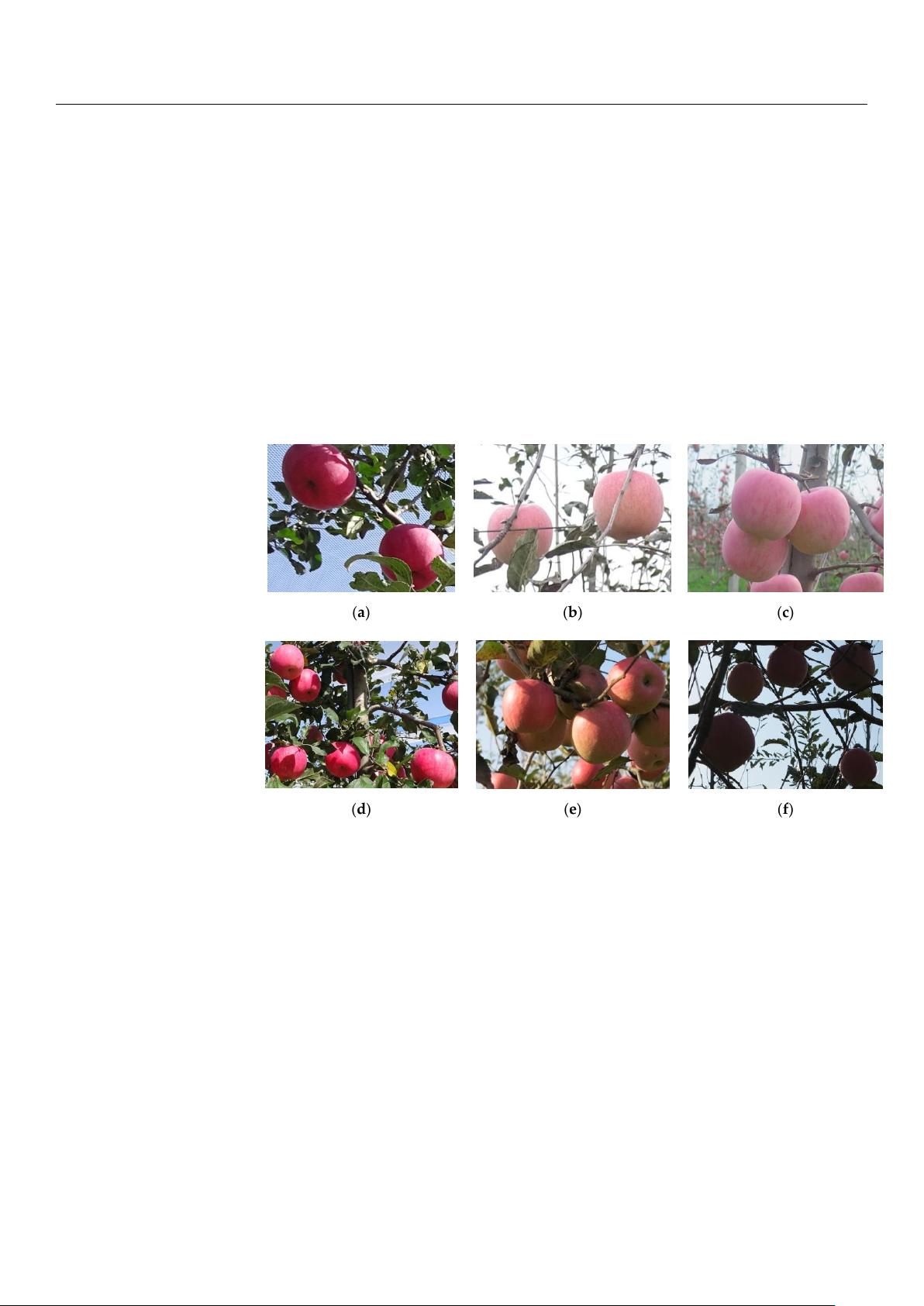

In this study, the fruits of Fuji apple trees grown in fusiform cultivation mode in modern standard orchards were used as the research object. The original images of apple trees were collected from the standardized modern orchard of the Agricultural Science and Technology Experimental Demonstration Base of Qian County in Shaanxi Province and from the Apple Experimental Station of Northwest A&F University in Baishui County of Shaanxi Province. In fusiform cultivation mode, the row spacing of apple trees is about 4 m, the plant spacing is about 1.2 m, and the tree height is about 3.5 m, which is suitable for an apple picking robot to operate in the orchard. The images of apple trees were obtained on sunny and cloudy days, in the morning, at noon and in the afternoon. The images were captured with a Canon PowerShot G16 camera from a variety of angles and at different shooting distances (0.5–1.5 m). In total, 1214 apple images were obtained, covering the following conditions: apples occluded by leaves, apples occluded by branches, mixed occlusion, overlap between apples, natural light angle, backlight angle and side light angle, etc. (Figure 2). Furthermore, environmental factors such as cloud cover, shadows, high light, low light and reflections were also considered during image capture. The resolution of the captured images is 4000 × 3000 pixels, and the format is JPEG.


Figure 2. Apple images in different conditions. (a) Apples occluded by leaves. (b) Apples occluded by branches. (c) Overlapped apples. (d) Frontlight angle. (e) Sidelight angle. (f) Backlight angle.

2.1.2. Preprocessing of Images

A target detection model based on deep learning is obtained by training on a large amount of image data. Therefore, augmentation of the 1214 collected apple images is necessary.

Firstly, 200 images (100 from sunny days and 100 from cloudy days) were randomly selected from the 1214 images as the test set, and the remaining 1014 images were used as the training set. The distribution of the image samples in the test set is shown in Table 2. Secondly, in order to improve the training efficiency of the apple target recognition model, the original 1014 images of the training set were compressed so that their length and width were reduced to 1/5 of the original. Thirdly, the image annotation software ‘LabelImg’ was used to draw the bounding rectangles of the apple targets in the compressed apple tree images, realizing the manual annotation of the fruits. Each apple was labeled with its smallest surrounding rectangle, so that the rectangle contains as little background as possible. Apples in the image that were unoccluded or only occluded by leaves were labeled as the ‘graspable’ class, and apples in other conditions were labeled as the ‘ungraspable’ class. The annotations were saved as XML files. Finally, in order to enrich the image data of the training set, data enhancement was applied to the data set, to better extract the features of apples belonging to the different labeled categories and to avoid over-fitting of the trained model.
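To illustrate the annotation step, the following sketch reads one annotation in the Pascal VOC XML layout that LabelImg can write and counts the boxes per class. The sample file name and box coordinates are invented for illustration; only the two class names and the 800 × 600 size (4000 × 3000 reduced to 1/5) follow from the text.

```python
import xml.etree.ElementTree as ET
from collections import Counter

# Hypothetical annotation in the Pascal VOC layout; the file name and
# coordinates are made up, the class names are those used in the study.
SAMPLE_XML = """
<annotation>
  <filename>apple_0001.jpg</filename>
  <size><width>800</width><height>600</height><depth>3</depth></size>
  <object>
    <name>graspable</name>
    <bndbox><xmin>120</xmin><ymin>80</ymin><xmax>210</xmax><ymax>175</ymax></bndbox>
  </object>
  <object>
    <name>ungraspable</name>
    <bndbox><xmin>400</xmin><ymin>300</ymin><xmax>480</xmax><ymax>390</ymax></bndbox>
  </object>
</annotation>
"""


def read_boxes(xml_text):
    """Return a list of (label, (xmin, ymin, xmax, ymax)) tuples."""
    root = ET.fromstring(xml_text.strip())
    boxes = []
    for obj in root.iter("object"):
        label = obj.findtext("name")
        bndbox = obj.find("bndbox")
        coords = tuple(
            int(bndbox.findtext(tag)) for tag in ("xmin", "ymin", "xmax", "ymax")
        )
        boxes.append((label, coords))
    return boxes


boxes = read_boxes(SAMPLE_XML)
class_counts = Counter(label for label, _ in boxes)
print(class_counts)
```

Counting classes this way over a whole annotation folder would give per-class totals of the kind listed for the test set in Table 2.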

Table 2. Detailed information of images in test set.

| Test Set | Sunny | Cloudy | Total |
| --- | --- | --- | --- |
| Number of images | 100 | 100 | 200 |
| Graspable apple | 482 | 525 | 1007 |
| Ungraspable apple | 766 | 563 | 1329 |

Because uncertain factors such as illumination angle and weather make the lighting conditions during image acquisition extremely complex, several image enhancement methods were applied to the 1014 images of the training set in order to improve the generalization ability of the apple target detection model. They were implemented with MATLAB (version 2016, The MathWorks Inc., Natick, MA, USA) and its image processing functions. The image enhancement methods include image brightness enhancement and reduction, horizontal mirroring, vertical mirroring and multi-angle rotation (90°, 180°, 270°). In addition, to account for the noise generated by the image acquisition equipment and for the blur caused by shaking of the equipment or of the branches, Gaussian noise with a variance of 0.02 was added to the images, and motion blur processing was carried out. The detailed procedures of the image enhancement methods are described in the following.

Image brightness enhancement and reduction: Firstly, the original image is converted to HSV space using the ‘rgb2hsv’ function; secondly, the V component (brightness component) of the image is multiplied by a coefficient; finally, the resulting HSV image is converted back to RGB space using the ‘hsv2rgb’ function, realizing the brightness enhancement or reduction of the image. In the study, three brightness intensities were generated using brightness enhancement, (H + S + 1.2 × V), (H + S + 1.4 × V) and (H + S + 1.6 × V), and two brightness intensities were generated using brightness reduction, (H + S + 0.6 × V) and (H + S + 0.8 × V).
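The brightness procedure can be sketched for a single pixel with Python's standard `colorsys` module, which stands in here for the MATLAB functions named in the text. The five coefficients come from the text; clipping V to 1.0 is an assumption of this sketch, since the paper does not say how over-range values were handled.

```python
import colorsys

# Coefficients applied to the V channel, as listed in the text.
FACTORS = (0.6, 0.8, 1.2, 1.4, 1.6)


def scale_brightness(rgb, factor):
    """Scale the V channel of one RGB pixel whose components lie in [0, 1].

    H and S are left unchanged. V is clipped to 1.0, which is an assumption
    of this sketch rather than something stated in the paper.
    """
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    return colorsys.hsv_to_rgb(h, s, min(1.0, v * factor))


pixel = (0.5, 0.25, 0.25)  # illustrative reddish pixel
variants = {factor: scale_brightness(pixel, factor) for factor in FACTORS}

for factor, rgb in variants.items():
    print(factor, tuple(round(c, 3) for c in rgb))
```

Applying the same function to every pixel of an image yields the five brightness variants per training image.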

Image mirroring (horizontal and vertical) was implemented using the MATLAB function ‘imwarp’. Horizontal mirroring swaps the left and right sides of the image about its vertical centerline. Vertical mirroring swaps the upper and lower sides of the image about its horizontal centerline.

For image rotation, the MATLAB function ‘imrotate’ was used to rotate the raw image, and rotations of 90°, 180° and 270° were achieved by changing the function parameter ‘angle’. The transformed images can improve the detection performance of the model by helping it correctly identify apples in different orientations.
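The mirroring and rotation operations described above can be sketched on a small grid of pixel values. This pure-Python version uses nested lists in place of an image array and is not the MATLAB implementation used in the study. Positive angles rotate counter-clockwise here, which is also the convention of ‘imrotate’.

```python
def mirror_horizontal(img):
    """Swap left and right by reversing every row."""
    return [row[::-1] for row in img]


def mirror_vertical(img):
    """Swap top and bottom by reversing the order of the rows."""
    return [row[:] for row in img[::-1]]


def rotate_ccw_90(img):
    """Rotate the image by 90 degrees counter-clockwise."""
    return [list(col) for col in zip(*img)][::-1]


def rotate(img, angle):
    """Rotate counter-clockwise by a multiple of 90 degrees."""
    if angle % 90 != 0:
        raise ValueError("this sketch only supports multiples of 90 degrees")
    for _ in range((angle // 90) % 4):
        img = rotate_ccw_90(img)
    return img


# A 2 x 3 "image" whose values make the transformations easy to follow.
img = [[1, 2, 3],
       [4, 5, 6]]

for angle in (90, 180, 270):
    print(angle, rotate(img, angle))
print("horizontal", mirror_horizontal(img))
print("vertical", mirror_vertical(img))
```

In a real pipeline the bounding-box annotations would have to be transformed consistently with the pixels.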

Four kinds of motion blur processing were employed so that the convolutional network model adapts well to blurred images. A predetermined two-dimensional filter was created using the MATLAB function ‘fspecial’. LEN (the length, in pixels, of the linear motion of the camera) and THETA (θ, the angle of the motion in degrees, measured counter-clockwise) of the motion filter were set to (6, 30), (6, −30), (7, 45) and (7, −45), respectively. Then, the MATLAB function ‘imfilter’ was used to blur the image with the generated filter.
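A motion-blur filter of this kind can be sketched as a normalized line-shaped kernel that is correlated with the image. The version below is a simplified nearest-pixel approximation and does not reproduce the exact weights that ‘fspecial’ produces. The function names, the sampling density and the zero-padded borders are assumptions of this sketch; only the four (LEN, THETA) pairs come from the text.

```python
import math

# (LEN, THETA) pairs listed in the text.
SETTINGS = [(6, 30), (6, -30), (7, 45), (7, -45)]


def motion_kernel(length, theta_deg, samples_per_pixel=8):
    """Build a square kernel whose weights lie along a line and sum to 1."""
    half = (length - 1) / 2.0
    theta = math.radians(theta_deg)
    # Image rows grow downward, so a counter-clockwise angle needs -sin.
    dx, dy = math.cos(theta), -math.sin(theta)
    radius = int(math.ceil(half))
    size = 2 * radius + 1
    kernel = [[0.0] * size for _ in range(size)]
    n = max(2, int(length * samples_per_pixel))
    for i in range(n):
        t = -half + 2 * half * i / (n - 1)  # position along the line
        col = radius + int(round(t * dx))
        row = radius + int(round(t * dy))
        kernel[row][col] += 1.0
    total = sum(map(sum, kernel))
    return [[w / total for w in row] for row in kernel]


def filter2d(img, kernel):
    """Correlate a grayscale image (list of rows) with the kernel.

    Pixels outside the image are treated as zero.
    """
    r = len(kernel) // 2
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for ky, krow in enumerate(kernel):
                for kx, weight in enumerate(krow):
                    yy, xx = y + ky - r, x + kx - r
                    if 0 <= yy < h and 0 <= xx < w:
                        acc += weight * img[yy][xx]
            out[y][x] = acc
    return out


flat = [[1.0] * 9 for _ in range(9)]  # uniform test image
kernels = [motion_kernel(length, theta) for length, theta in SETTINGS]
blurred = filter2d(flat, kernels[0])
print(round(blurred[4][4], 6))
```

Because the kernel weights sum to 1, the interior of a uniform image is left unchanged, and only the borders darken under zero padding.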

Furthermore, Gaussian noise with a variance of 0.02 was added to the raw images using the MATLAB function ‘imnoise’.
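The noise step can be sketched for a grayscale image with intensities in [0, 1]. The zero mean, the clipping of the result to [0, 1], the function name and the fixed seed are assumptions of this sketch; only the variance of 0.02 is taken from the text.

```python
import math
import random


def add_gaussian_noise(img, variance=0.02, mean=0.0, seed=None):
    """Add Gaussian noise to every pixel and clip the result to [0, 1]."""
    rng = random.Random(seed)
    sigma = math.sqrt(variance)  # standard deviation from the variance
    return [
        [min(1.0, max(0.0, p + rng.gauss(mean, sigma))) for p in row]
        for row in img
    ]


# Mid-grey test image, so that clipping rarely affects the statistics.
grey = [[0.5] * 100 for _ in range(100)]
noisy = add_gaussian_noise(grey, variance=0.02, seed=0)

values = [p for row in noisy for p in row]
sample_mean = sum(values) / len(values)
sample_var = sum((v - sample_mean) ** 2 for v in values) / len(values)
print(round(sample_mean, 3), round(sample_var, 4))
```

On the mid-grey test image the sample variance of the result stays close to the requested 0.02.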

The final training set used to train the apple target recognition model consists of 16,224 images, including 15,210 enhanced images and 1014 raw images. The detailed distribution of the training set data is shown in Figure 3. There was no overlap between the training set and the test set.
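The reported totals can be cross-checked against the enhancement methods listed earlier. The sketch below assumes that each raw training image yields exactly one output per listed setting. This is an inference from the counts rather than something the text states, but it reproduces the reported numbers.

```python
RAW_TRAIN_IMAGES = 1014

# Number of enhanced variants per raw image, one per setting named in the text.
VARIANTS_PER_IMAGE = {
    "brightness (0.6, 0.8, 1.2, 1.4 and 1.6 x V)": 5,
    "mirroring (horizontal, vertical)": 2,
    "rotation (90, 180, 270 degrees)": 3,
    "motion blur (four LEN/THETA settings)": 4,
    "Gaussian noise (variance 0.02)": 1,
}

per_image = sum(VARIANTS_PER_IMAGE.values())
enhanced_images = RAW_TRAIN_IMAGES * per_image
total_images = RAW_TRAIN_IMAGES + enhanced_images

print(per_image, enhanced_images, total_images)
```

Fifteen variants per image give 15,210 enhanced images, and adding the 1014 raw images gives the reported total of 16,224.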
