IEEE TRANSACTIONS ON AUTOMATIC CONTROL, VOL. 55, NO. 5, MAY 2010 1101

Solving Continuous-State POMDPs

via Density Projection

Enlu Zhou, Member, IEEE, Michael C. Fu, Fellow, IEEE, and Steven I. Marcus, Fellow, IEEE

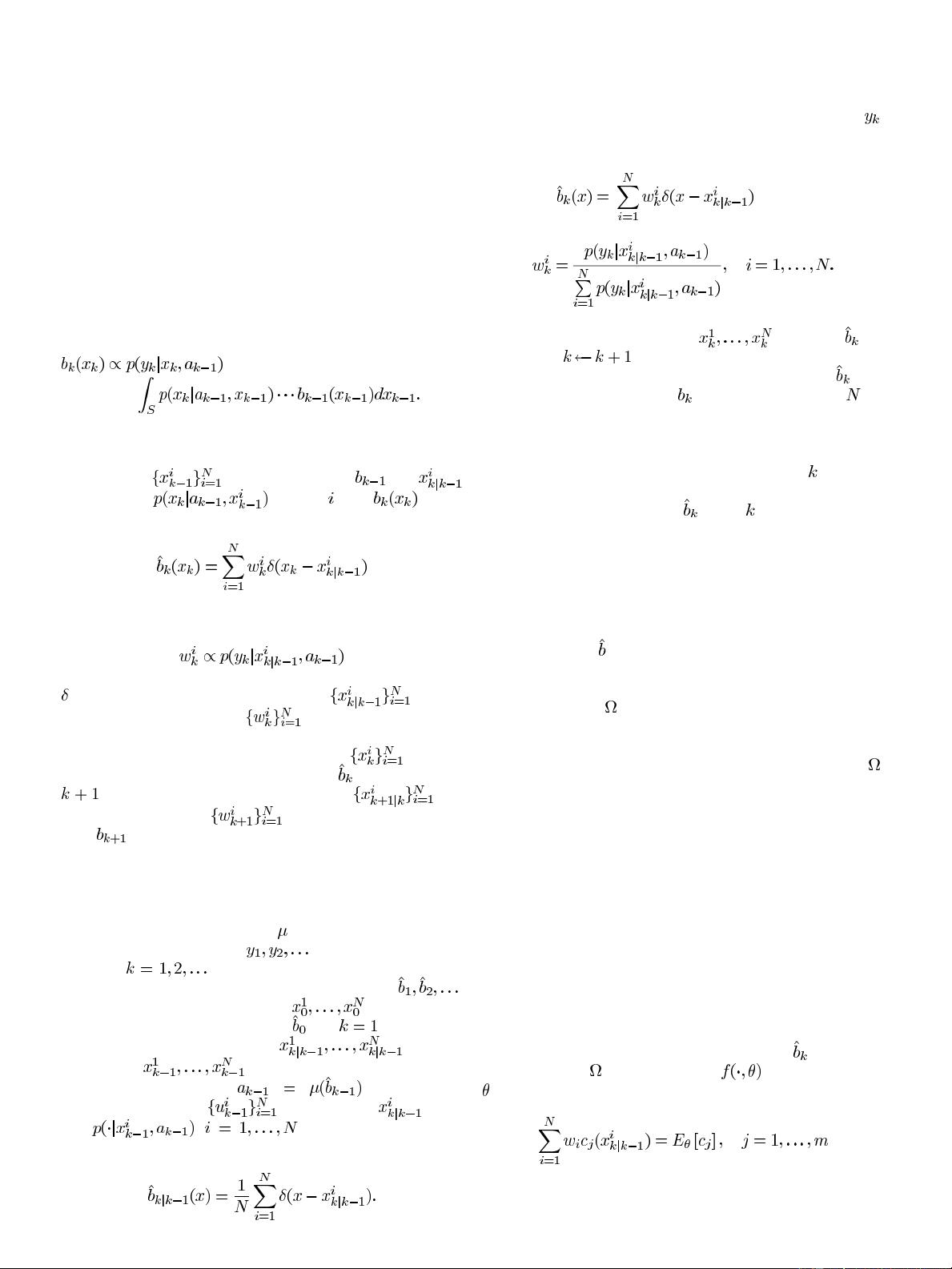

Abstract—Research on numerical solution methods for partially

observable Markov decision processes (POMDPs) has primarily

focused on finite-state models, and these algorithms do not gen-

erally extend to continuous-state POMDPs, due to the infinite

dimensionality of the belief space. In this paper, we develop a

computationally viable and theoretically sound method for solving

continuous-state POMDPs by effectively reducing the dimen-

sionality of the belief space via density projection. The density

projection technique is also incorporated into particle filtering

to provide a filtering scheme for online decision making. We

provide an error bound between the value function induced by

the policy obtained by our method and the true value function of

the POMDP, and also an error bound between projection particle

filtering and exact filtering. Finally, we illustrate the effectiveness

of our method through an inventory control problem.

Index Terms—Belief state, decision making, density projection,

partially observable Markov decision processes (POMDPs), par-

ticle filtering, value function.

I. INTRODUCTION

P

ARTIALLY observable Markov decision processes

(POMDPs) model sequential decision making under

uncertainty with partially observed state information. At each

stage or period, an action is taken based on a partial observation

of the current state along with the history of observations and

actions, and the state transitions probabilistically. The objective

is to minimize (or maximize) a cost (or reward) function, where

costs (or rewards) are accrued in each stage. Clearly, POMDPs

suffer from the same curse of dimensionality as fully observable

MDPs, so efficient numerical solution of problems with large

state spaces is a challenging research area.

A POMDP can be converted to a continuous-state Markov

decision process (MDP) by introducing the notion of the be-

lief state [6], which is the conditional distribution of the cur-

Manuscript received January 04, 2008; revised August 18, 2008. First

published February 02, 2010; current version published May 12, 2010. This

work was supported in part by the National Science Foundation under Grants

DMI-0540312 and DMI-0323220, and by the Air Force Office of Scientific

Research under Grant FA9550-07-1-0366. Recommended by Associate Editor

C.-H. Chen.

E. Zhou is with the Department of Industrial and Enterprise Systems Engi-

neering, University of Illinois at Urbana-Champaign, Urbana, IL 61801 USA

(e-mail: enluzhou@illinois.edu).

M.C. Fu is with the Robert H. Smith School of Business, and Institute for Sys-

tems Research, University of Maryland, College Park, MD 20742 USA (e-mail:

mfu@umd.edu).

S. I. Marcus is with the Department of Electrical and Computer Engineering,

and Institute for Systems Research, University of Maryland, College Park, MD

20742 USA (e-mail: marcus@umd.edu).

Color versions of one or more of the figures in this paper are available online

at http://ieeexplore.ieee.org.

Digital Object Identifier 10.1109/TAC.2010.2042005

rent state given the history of observations and actions. For a

finite-state POMDP, the belief space is finite dimensional (i.e.,

a probability simplex), whereas for a continuous-state POMDP,

the belief space is an infinite-dimensional space of continuous

probability distributions. This difference suggests that simple

generalizations of many of the finite-state algorithms to con-

tinuous-state models are not appropriate or applicable. For ex-

ample, discretization of the continuous-state space may result

in a finite-state POMDP of dimension either too large to solve

computationally or not sufficiently precise. Taking another ex-

ample, many algorithms for solving finite-state POMDPs (see

[17] for a survey) are based on discretization of the finite-di-

mensional probability simplex; however, it is usually not fea-

sible to discretize an infinite-dimensional probability distribu-

tion space. Throughout the paper, when we use the word “di-

mension” or “dimensional,” we refer to the dimension of the

belief space/state.

Despite the abundance of algorithms for finite-state

POMDPs, the aforementioned difficulty has motivated some

researchers to look for efficient algorithms for continuous-state

POMDPs [8], [9], [10], [11], [23], [24], [25], [28], [31]. As-

suming discrete observation and action spaces, Porta

et al.

[24] showed that the optimal finite-horizon value function is

defined by a finite set of “

-functions”, and model all functions

of interest by Gaussian mixtures. In a later work [25], they

extended their result and method to continuous observation and

action spaces using sampling strategies. However, the number

of Gaussian mixtures in representing belief states and

-func-

tions grows exponentially in value iteration as the number of

iterations increases. Thrun [31] addressed continuous-state

POMDPs using particle filtering to simulate the propagation of

belief states and represent the belief states by a finite number of

samples. The number of samples determines the dimension of

the belief space, and the dimension could be very high in order

to approximate the belief states closely. Brunskill et al. [10]

used weighted sums of Gaussians to approximate the belief

states and value functions in a class of switching state models.

Roy [28] and Brooks et al. [8] used sufficient statistics to re-

duce the dimension of the belief space, which is often referred

to as belief compression in the Artificial Intelligence literature.

Roy [28] proposed an augmented MDP (AMDP), characterizing

belief states using maximum likelihood state and entropy, which

are usually not sufficient statistics except for a linear Gaussian

model. As shown by Roy himself, the algorithm fails in a simple

robot navigation problem, since the two statistics are not suffi-

cient for distinguishing between a unimodal distribution and a

bimodal distribution. Brooks et al. [8] proposed a parametric

POMDP, representing the belief state as a Gaussian distribution

so as to convert the POMDP to a problem of computing the value

0018-9286/$26.00 © 2010 IEEE

Authorized licensed use limited to: National Univ of Defense Tech. Downloaded on May 29,2010 at 03:02:27 UTC from IEEE Xplore. Restrictions apply.