Learning Transferable Visual Models From Natural Language Supervision 4

balance the results by including up to 20,000 (image, text) pairs per query. The resulting dataset has a similar total word count to the WebText dataset used to train GPT-2. We refer to this dataset as WIT for WebImageText.

2.3. Selecting an Efficient Pre-Training Method

State-of-the-art computer vision systems use very large amounts of compute. Mahajan et al. (2018) required 19 GPU years to train their ResNeXt101-32x48d and Xie et al. (2020) required 33 TPUv3 core-years to train their Noisy Student EfficientNet-L2. When considering that both these systems were trained to predict only 1000 ImageNet classes, the task of learning an open set of visual concepts from natural language seems daunting. In the course of our efforts, we found training efficiency was key to successfully scaling natural language supervision and we selected our final pre-training method based on this metric.

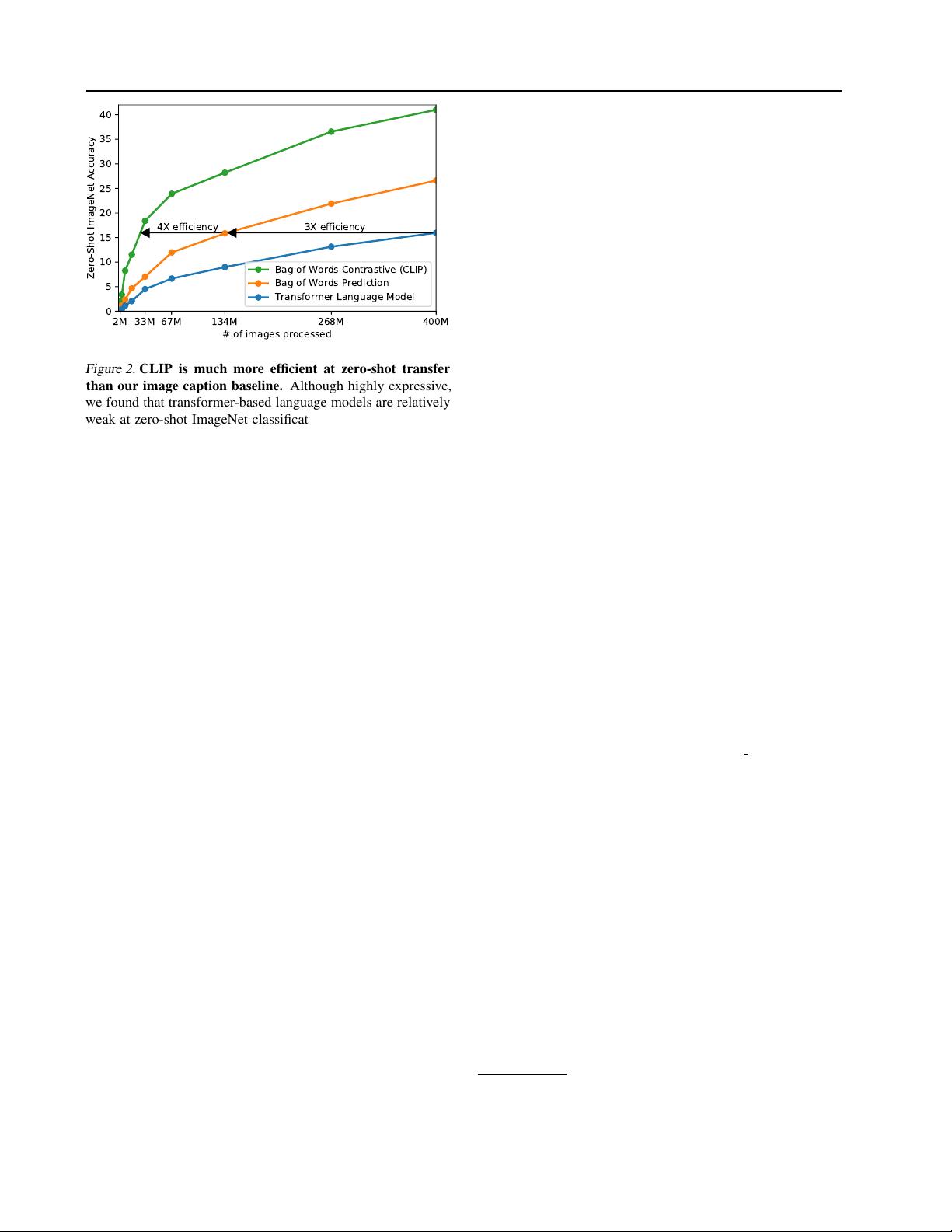

Our initial approach, similar to VirTex, jointly trained an image CNN and text transformer from scratch to predict the caption of an image. However, we encountered difficulties efficiently scaling this method. In Figure 2 we show that a 63 million parameter transformer language model, which already uses twice the compute of its ResNet-50 image encoder, learns to recognize ImageNet classes three times slower than a much simpler baseline that predicts a bag-of-words encoding of the same text.
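To make the baseline's prediction target concrete, the following is a minimal sketch of a bag-of-words encoding as a multi-hot vector. The text here does not specify the tokenization or the vocabulary, so the whitespace split, the toy `vocab`, and the function name `bag_of_words_target` are all assumptions made for illustration.

```python
import numpy as np

def bag_of_words_target(caption, vocab):
    """Return a multi-hot vector marking which vocabulary words occur in the caption."""
    target = np.zeros(len(vocab), dtype=np.float32)
    for word in caption.lower().split():  # assumed tokenization: lowercase + whitespace
        index = vocab.get(word)
        if index is not None:
            target[index] = 1.0
    return target

# Hypothetical toy vocabulary; a real one would be built from the training text.
vocab = {"a": 0, "photo": 1, "of": 2, "dog": 3, "cat": 4}
target = bag_of_words_target("A photo of a dog", vocab)
```

Word order and repetition are discarded in such a target, which is what makes it a simpler prediction problem than generating the caption token by token.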

Both these approaches share a key similarity. They try to predict the exact words of the text accompanying each image. This is a difficult task due to the wide variety of descriptions, comments, and related text that co-occur with images. Recent work in contrastive representation learning for images has found that contrastive objectives can learn better representations than their equivalent predictive objective (Tian et al., 2019). Other work has found that although generative models of images can learn high quality image representations, they require over an order of magnitude more compute than contrastive models with the same performance (Chen et al., 2020a). Noting these findings, we explored training a system to solve the potentially easier proxy task of predicting only which text as a whole is paired with which image and not the exact words of that text. Starting with the same bag-of-words encoding baseline, we swapped the predictive objective for a contrastive objective in Figure 2 and observed a further 4x efficiency improvement in the rate of zero-shot transfer to ImageNet.

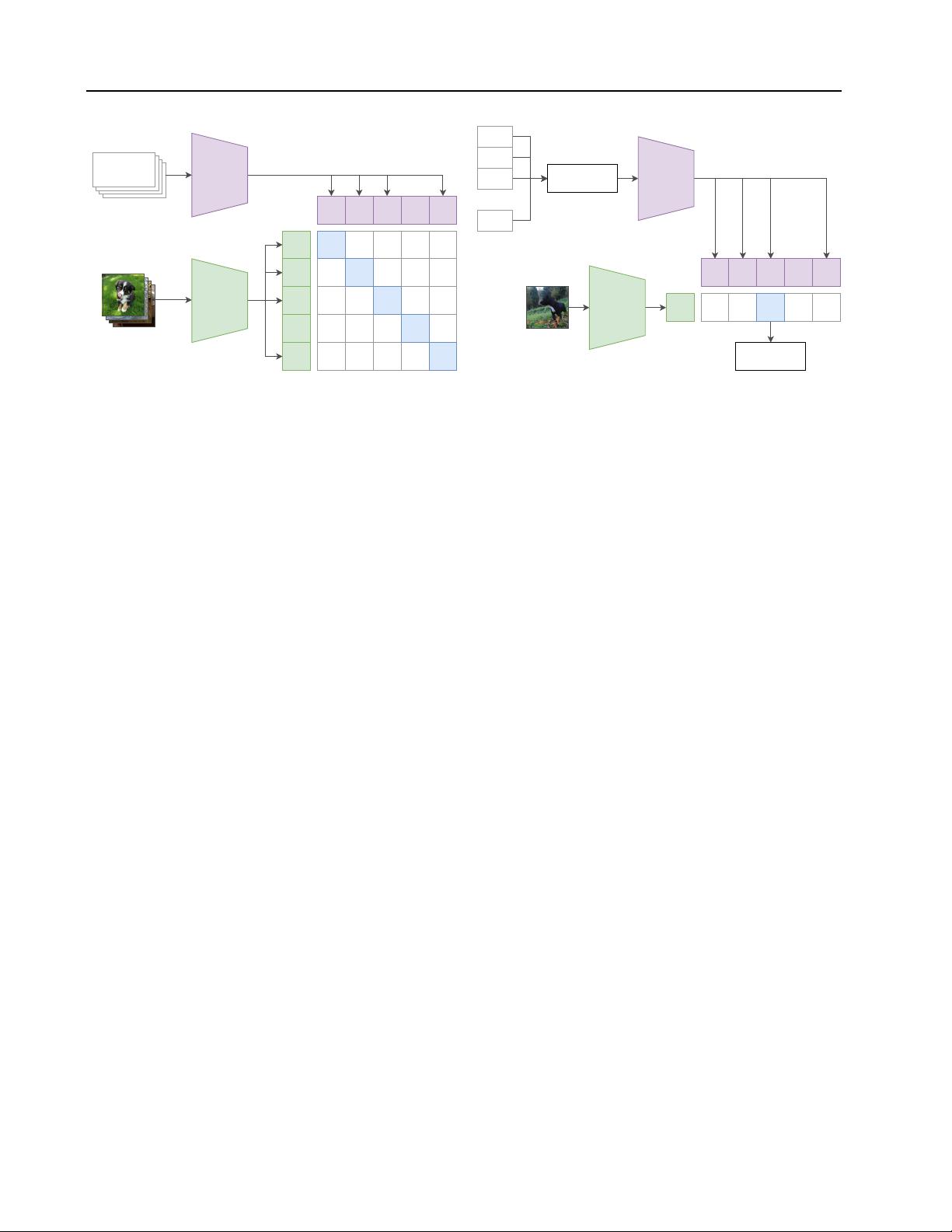

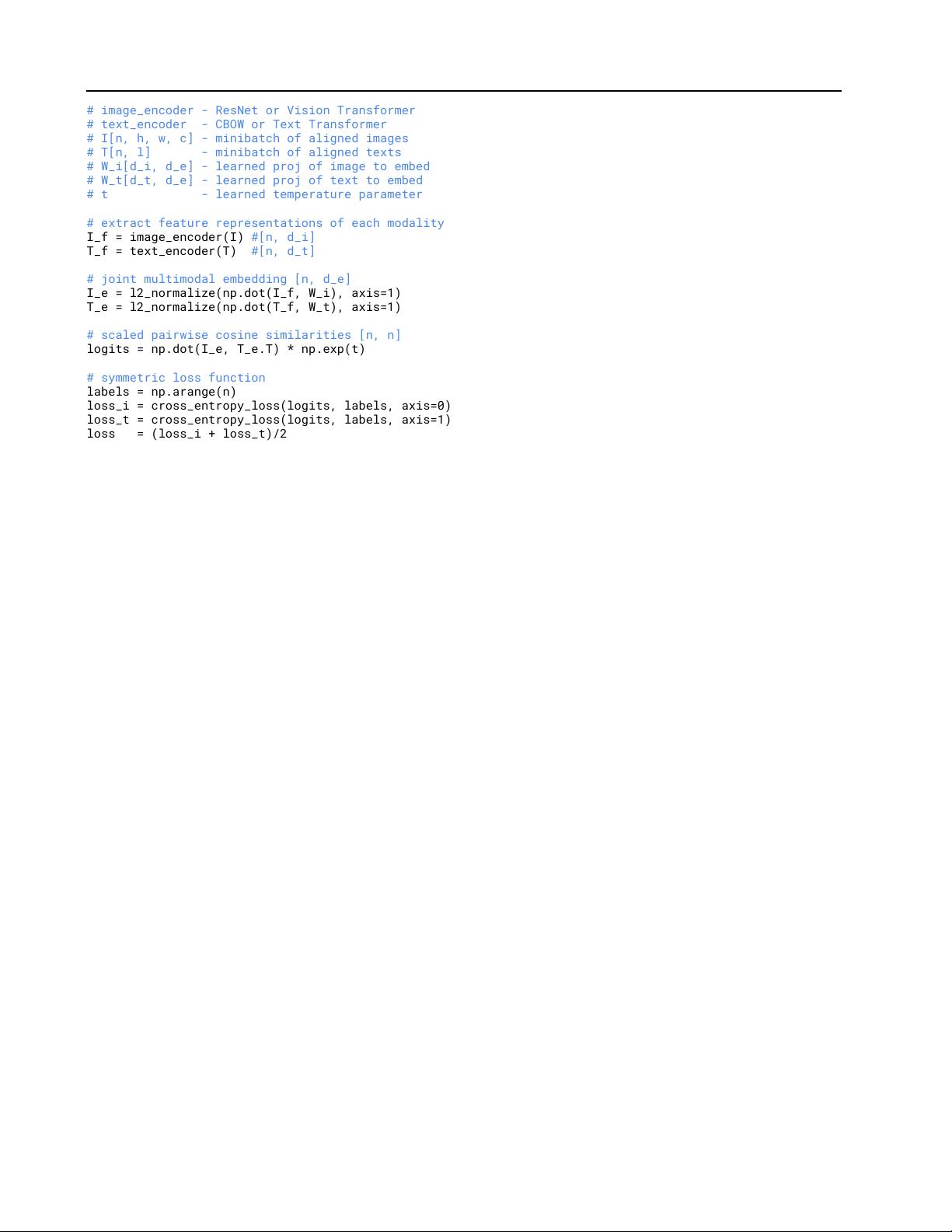

with high pointwise mutual information as well as the names of all Wikipedia articles above a certain search volume. Finally, all WordNet synsets not already in the query list are added.

Given a batch of $N$ (image, text) pairs, CLIP is trained to predict which of the $N \times N$ possible (image, text) pairings across a batch actually occurred. To do this, CLIP learns a multi-modal embedding space by jointly training an image encoder and text encoder to maximize the cosine similarity of the image and text embeddings of the $N$ real pairs in the batch while minimizing the cosine similarity of the embeddings of the $N^2 - N$ incorrect pairings. We optimize a symmetric cross entropy loss over these similarity scores. In Figure 3 we include pseudocode of the core of an implementation of CLIP. To our knowledge this batch construction technique and objective was first introduced in the area of deep metric learning as the multi-class N-pair loss (Sohn, 2016), was popularized for contrastive representation learning by Oord et al. (2018) as the InfoNCE loss, and was recently adapted for contrastive (text, image) representation learning in the domain of medical imaging by Zhang et al. (2020).
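The objective described in this paragraph can be sketched in NumPy as follows. This is an illustrative sketch under stated assumptions, not the pseudocode of Figure 3: the encoders are omitted and the embeddings are passed in directly, the function names are hypothetical, and the temperature of 0.07 is an arbitrary value chosen for the example.

```python
import numpy as np

def l2_normalize(x):
    """Scale each row to unit length so dot products become cosine similarities."""
    return x / np.linalg.norm(x, axis=1, keepdims=True)

def log_softmax(logits, axis):
    """Numerically stable log-softmax along the given axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def symmetric_contrastive_loss(image_emb, text_emb, temperature):
    """image_emb, text_emb: [N, d] arrays; row i of each forms a real pair."""
    image_emb = l2_normalize(image_emb)
    text_emb = l2_normalize(text_emb)
    # [N, N] matrix of cosine similarities for every possible pairing, scaled.
    logits = image_emb @ text_emb.T / temperature
    diag = np.arange(logits.shape[0])
    # Cross entropy with the real pair (the diagonal) as the correct class,
    # once over texts for each image and once over images for each text.
    loss_image_to_text = -log_softmax(logits, axis=1)[diag, diag].mean()
    loss_text_to_image = -log_softmax(logits, axis=0)[diag, diag].mean()
    return (loss_image_to_text + loss_text_to_image) / 2

# Toy check with random embeddings standing in for encoder outputs (assumption).
rng = np.random.default_rng(0)
emb = rng.normal(size=(4, 8))
aligned = symmetric_contrastive_loss(emb, emb, temperature=0.07)
shuffled = symmetric_contrastive_loss(emb, emb[::-1], temperature=0.07)
```

When the rows are correctly paired the diagonal dominates and the loss is small; reversing the text rows moves the high similarities off the diagonal, so `shuffled` is larger than `aligned`.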

Due to the large size of our pre-training dataset, over-fitting is not a major concern and the details of training CLIP are simplified compared to the implementation of Zhang et al. (2020). We train CLIP from scratch without initializing the image encoder with ImageNet weights or the text encoder with pre-trained weights. We do not use the non-linear projection between the representation and the contrastive embedding space, a change which was introduced by Bachman et al. (2019) and popularized by Chen et al. (2020b). We instead use only a linear projection to map from each encoder’s representation to the multi-modal embedding space. We did not notice a difference in training efficiency between the two versions and speculate that non-linear projections may be co-adapted with details of current image-only self-supervised representation learning methods. We also remove the text transformation function $t_u$ from Zhang et al. (2020), which uniformly samples a single sentence from the text, since many of the (image, text) pairs in CLIP’s pre-training dataset are only a single sentence. We also simplify the image transformation function $t_v$. A random square crop from resized images is the only data augmentation used during training. Finally, the temperature parameter which controls the range of the logits in the softmax, $\tau$, is directly optimized during training as a log-parameterized multiplicative scalar to avoid tuning it as a hyper-parameter.
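One way to read "log-parameterized multiplicative scalar" is that the trainable quantity is the logarithm of the multiplier applied to the similarities, so the multiplier itself stays positive whatever value the optimizer reaches. The sketch below illustrates only that parameterization; the name `log_scale`, the starting value, and the update step are assumptions for the example, not values taken from this section.

```python
import numpy as np

# Hypothetical starting value, chosen only for illustration.
log_scale = np.log(1.0 / 0.07)

def scaled_logits(similarities, log_scale):
    """Multiply cosine similarities by exp(log_scale), which is always positive."""
    return np.exp(log_scale) * similarities

similarities = np.array([[1.0, 0.2],
                         [0.1, 1.0]])
logits = scaled_logits(similarities, log_scale)

# Any real-valued update to log_scale still yields a positive multiplier,
# so no constraint or clipping is needed for positivity alone.
updated_log_scale = log_scale - 5.0  # stand-in for a large gradient step
updated_multiplier = np.exp(updated_log_scale)
```

Optimizing the scalar directly could push it through zero or negative values, which would flip or erase the ordering of the similarities; working in log space avoids that case by construction.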

2.4. Choosing and Scaling a Model

We consider two different architectures for the image encoder. For the first, we use ResNet-50 (He et al., 2016a) as the base architecture for the image encoder due to its widespread adoption and proven performance. We make several modifications to the original version using the ResNet-D improvements from He et al. (2019) and the antialiased rect-2 blur pooling from Zhang (2019). We also replace the global average pooling layer with an attention pooling mechanism. The attention pooling is implemented as a single layer of “transformer-style” multi-head QKV attention where the query is conditioned on the global average-pooled
