High-Resolution Image Synthesis with Latent Diffusion Models

Robin Rombach¹*, Andreas Blattmann¹*, Dominik Lorenz¹, Patrick Esser, Björn Ommer¹

¹Ludwig Maximilian University of Munich & IWR, Heidelberg University, Germany; Runway ML

https://github.com/CompVis/latent-diffusion

Abstract

By decomposing the image formation process into a sequential application of denoising autoencoders, diffusion models (DMs) achieve state-of-the-art synthesis results on image data and beyond. Additionally, their formulation allows for a guiding mechanism to control the image generation process without retraining. However, since these models typically operate directly in pixel space, optimization of powerful DMs often consumes hundreds of GPU days and inference is expensive due to sequential evaluations. To enable DM training on limited computational resources while retaining their quality and flexibility, we apply them in the latent space of powerful pretrained autoencoders. In contrast to previous work, training diffusion models on such a representation allows for the first time to reach a near-optimal point between complexity reduction and detail preservation, greatly boosting visual fidelity. By introducing cross-attention layers into the model architecture, we turn diffusion models into powerful and flexible generators for general conditioning inputs such as text or bounding boxes and high-resolution synthesis becomes possible in a convolutional manner. Our latent diffusion models (LDMs) achieve new state-of-the-art scores for image inpainting and class-conditional image synthesis and highly competitive performance on various tasks, including text-to-image synthesis, unconditional image generation and super-resolution, while significantly reducing computational requirements compared to pixel-based DMs.

1. Introduction

Image synthesis is one of the computer vision fields with the most spectacular recent development, but also among those with the greatest computational demands. Especially high-resolution synthesis of complex, natural scenes is presently dominated by scaling up likelihood-based models, potentially containing billions of parameters in autoregressive (AR) transformers [66, 67]. In contrast, the promising results of GANs [3, 27, 40] have been revealed to be mostly confined to data with comparably limited variability as their adversarial learning procedure does not easily scale to modeling complex, multi-modal distributions. Recently, diffusion models [82], which are built from a hierarchy of denoising autoencoders, have been shown to achieve impressive results in image synthesis [30, 85] and beyond [7, 45, 48, 57], and define the state-of-the-art in class-conditional image synthesis [15, 31] and super-resolution [72]. Moreover, even unconditional DMs can readily be applied to tasks such as inpainting and colorization [85] or stroke-based synthesis [53], in contrast to other types of generative models [19, 46, 69]. Being likelihood-based models, they do not exhibit mode-collapse and training instabilities as GANs do and, by heavily exploiting parameter sharing, they can model highly complex distributions of natural images without involving billions of parameters as in AR models [67].

*The first two authors contributed equally to this work.

Figure 1. Boosting the upper bound on achievable quality with less aggressive downsampling. Since diffusion models offer excellent inductive biases for spatial data, we do not need the heavy spatial downsampling of related generative models in latent space, but can still greatly reduce the dimensionality of the data via suitable autoencoding models, see Sec. 3. Images are from the DIV2K [1] validation set, evaluated at $512^2$ px. We denote the spatial downsampling factor by f. Reconstruction FIDs [29] and PSNR are calculated on ImageNet-val. [12]; see also Tab. 8. Panels: Input; ours (f = 4), PSNR: 27.4, R-FID: 0.58; DALL-E (f = 8), PSNR: 22.8, R-FID: 32.01; VQGAN (f = 16), PSNR: 19.9, R-FID: 4.98.

Democratizing High-Resolution Image Synthesis. DMs belong to the class of likelihood-based models, whose mode-covering behavior makes them prone to spend excessive amounts of capacity (and thus compute resources) on modeling imperceptible details of the data [16, 73]. Although the reweighted variational objective [30] aims to address this by undersampling the initial denoising steps, DMs are still computationally demanding, since training and evaluating such a model requires repeated function evaluations (and gradient computations) in the high-dimensional space of RGB images. As an example, training the most powerful DMs often takes hundreds of GPU days (e.g. 150 - 1000 V100 days in [15]) and repeated evaluations on a noisy version of the input space also render inference expensive, so that producing 50k samples takes approximately 5 days [15] on a single A100 GPU. This has two consequences for the research community and users in general: Firstly, training such a model requires massive computational resources only available to a small fraction of the field, and leaves a huge carbon footprint [65, 86]. Secondly, evaluating an already trained model is also expensive in time and memory, since the same model architecture must run sequentially for a large number of steps (e.g. 25 - 1000 steps in [15]).

arXiv:2112.10752v2 [cs.CV] 13 Apr 2022

To increase the accessibility of this powerful model class and at the same time reduce its significant resource consumption, a method is needed that reduces the computational complexity for both training and sampling. Reducing the computational demands of DMs without impairing their performance is, therefore, key to enhancing their accessibility.

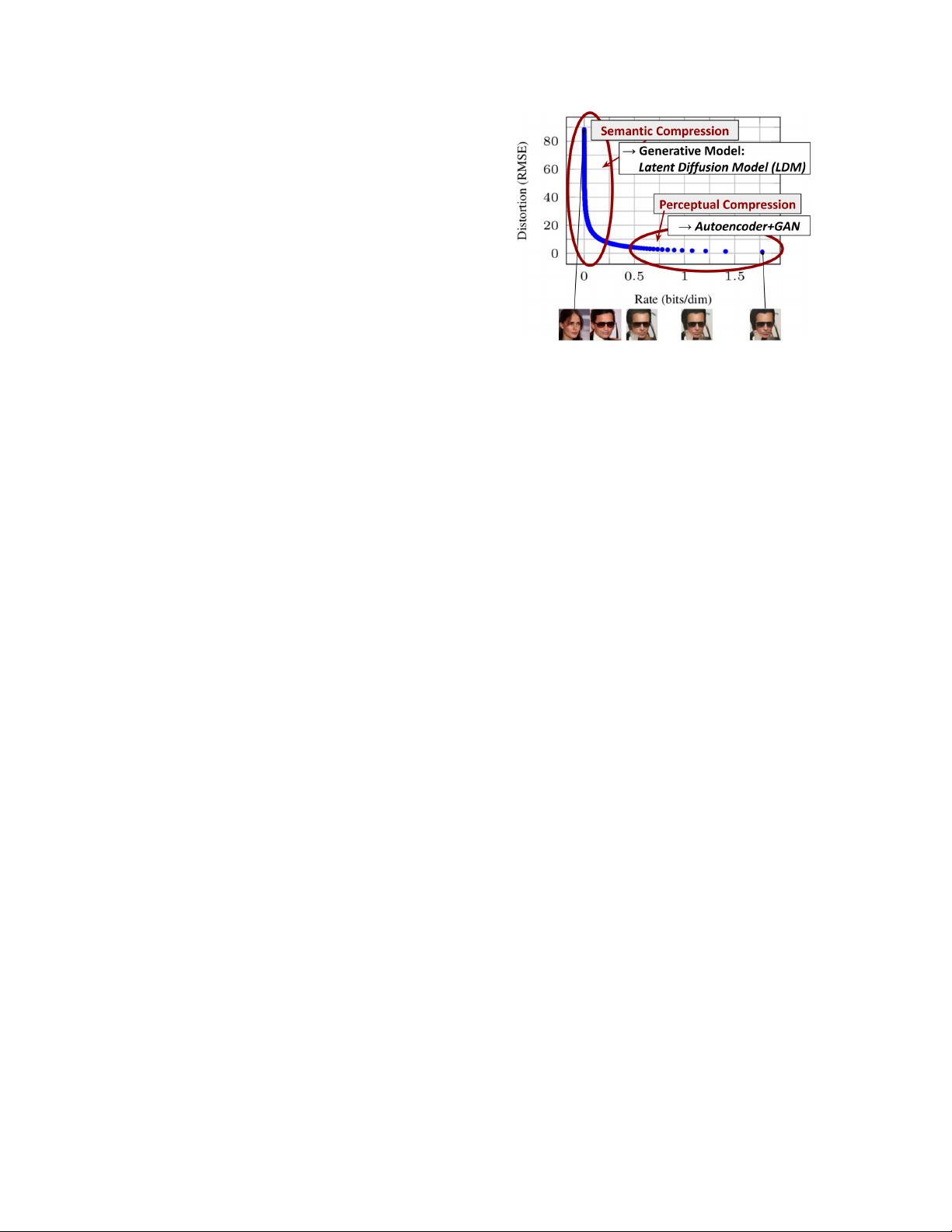

Departure to Latent Space. Our approach starts with the analysis of already trained diffusion models in pixel space: Fig. 2 shows the rate-distortion trade-off of a trained model. As with any likelihood-based model, learning can be roughly divided into two stages: First is a perceptual compression stage which removes high-frequency details but still learns little semantic variation. In the second stage, the actual generative model learns the semantic and conceptual composition of the data (semantic compression). We thus aim to first find a perceptually equivalent, but computationally more suitable space, in which we will train diffusion models for high-resolution image synthesis.

Following common practice [11, 23, 66, 67, 96], we separate training into two distinct phases: First, we train an autoencoder which provides a lower-dimensional (and thereby efficient) representational space which is perceptually equivalent to the data space. Importantly, and in contrast to previous work [23, 66], we do not need to rely on excessive spatial compression, as we train DMs in the learned latent space, which exhibits better scaling properties with respect to the spatial dimensionality. The reduced complexity also provides efficient image generation from the latent space with a single network pass. We dub the resulting model class Latent Diffusion Models (LDMs).

A notable advantage of this approach is that we need to train the universal autoencoding stage only once and can therefore reuse it for multiple DM trainings or to explore possibly completely different tasks [81]. This enables efficient exploration of a large number of diffusion models for various image-to-image and text-to-image tasks. For the latter, we design an architecture that connects transformers to the DM's UNet backbone [71] and enables arbitrary types of token-based conditioning mechanisms, see Sec. 3.3.

In sum, our work makes the following contributions:

(i) In contrast to purely transformer-based approaches [23, 66], our method scales more gracefully to higher-dimensional data and can thus (a) work on a compression level which provides more faithful and detailed reconstructions than previous work (see Fig. 1) and (b) be efficiently applied to high-resolution synthesis of megapixel images.

(ii) We achieve competitive performance on multiple tasks (unconditional image synthesis, inpainting, stochastic super-resolution) and datasets while significantly lowering computational costs. Compared to pixel-based diffusion approaches, we also significantly decrease inference costs.

(iii) We show that, in contrast to previous work [93] which learns both an encoder/decoder architecture and a score-based prior simultaneously, our approach does not require a delicate weighting of reconstruction and generative abilities. This ensures extremely faithful reconstructions and requires very little regularization of the latent space.

(iv) We find that for densely conditioned tasks such as super-resolution, inpainting and semantic synthesis, our model can be applied in a convolutional fashion and render large, consistent images of $\sim 1024^2$ px.

(v) Moreover, we design a general-purpose conditioning mechanism based on cross-attention, enabling multi-modal training. We use it to train class-conditional, text-to-image and layout-to-image models.

(vi) Finally, we release pretrained latent diffusion and autoencoding models at https://github.com/CompVis/latent-diffusion which might be reusable for various tasks besides training of DMs [81].

Figure 2. Illustrating perceptual and semantic compression: Most bits of a digital image correspond to imperceptible details. While DMs allow suppressing this semantically meaningless information by minimizing the responsible loss term, gradients (during training) and the neural network backbone (training and inference) still need to be evaluated on all pixels, leading to superfluous computations and unnecessarily expensive optimization and inference. We propose latent diffusion models (LDMs) as an effective generative model and a separate mild compression stage that only eliminates imperceptible details. Data and images from [30].

2. Related Work

Generative Models for Image Synthesis. The high-dimensional nature of images presents distinct challenges to generative modeling. Generative Adversarial Networks (GAN) [27] allow for efficient sampling of high-resolution images with good perceptual quality [3, 42], but are difficult to optimize [2, 28, 54] and struggle to capture the full data distribution [55]. In contrast, likelihood-based methods emphasize good density estimation which renders optimization more well-behaved. Variational autoencoders (VAE) [46] and flow-based models [18, 19] enable efficient synthesis of high-resolution images [9, 44, 92], but sample quality is not on par with GANs. While autoregressive models (ARM) [6, 10, 94, 95] achieve strong performance in density estimation, computationally demanding architectures [97] and a sequential sampling process limit them to low-resolution images. Because pixel-based representations of images contain barely perceptible, high-frequency details [16, 73], maximum-likelihood training spends a disproportionate amount of capacity on modeling them, resulting in long training times. To scale to higher resolutions, several two-stage approaches [23, 67, 101, 103] use ARMs to model a compressed latent image space instead of raw pixels.

Recently, Diffusion Probabilistic Models (DM) [82] have achieved state-of-the-art results in density estimation [45] as well as in sample quality [15]. The generative power of these models stems from a natural fit to the inductive biases of image-like data when their underlying neural backbone is implemented as a UNet [15, 30, 71, 85]. The best synthesis quality is usually achieved when a reweighted objective [30] is used for training. In this case, the DM corresponds to a lossy compressor and allows trading image quality for compression capabilities. Evaluating and optimizing these models in pixel space, however, has the downside of low inference speed and very high training costs. While the former can be partially addressed by advanced sampling strategies [47, 75, 84] and hierarchical approaches [31, 93], training on high-resolution image data always requires calculating expensive gradients. We address both drawbacks with our proposed LDMs, which work on a compressed latent space of lower dimensionality. This renders training computationally cheaper and speeds up inference with almost no reduction in synthesis quality (see Fig. 1).

Two-Stage Image Synthesis. To mitigate the shortcomings of individual generative approaches, a lot of research [11, 23, 67, 70, 101, 103] has gone into combining the strengths of different methods into more efficient and performant models via a two-stage approach. VQ-VAEs [67, 101] use autoregressive models to learn an expressive prior over a discretized latent space. [66] extend this approach to text-to-image generation by learning a joint distribution over discretized image and text representations. More generally, [70] uses conditionally invertible networks to provide a generic transfer between latent spaces of diverse domains. Different from VQ-VAEs, VQGANs [23, 103] employ a first stage with an adversarial and perceptual objective to scale autoregressive transformers to larger images. However, the high compression rates required for feasible ARM training, which introduces billions of trainable parameters [23, 66], limit the overall performance of such approaches and less compression comes at the price of high computational cost [23, 66]. Our work prevents such trade-offs, as our proposed LDMs scale more gently to higher-dimensional latent spaces due to their convolutional backbone. Thus, we are free to choose the level of compression which optimally mediates between learning a powerful first stage, without leaving too much perceptual compression up to the generative diffusion model while guaranteeing high-fidelity reconstructions (see Fig. 1).

While approaches to jointly [93] or separately [80] learn an encoding/decoding model together with a score-based prior exist, the former still require a difficult weighting between reconstruction and generative capabilities [11] and are outperformed by our approach (Sec. 4), and the latter focus on highly structured images such as human faces.

3. Method

To lower the computational demands of training diffusion models towards high-resolution image synthesis, we observe that although diffusion models allow ignoring perceptually irrelevant details by undersampling the corresponding loss terms [30], they still require costly function evaluations in pixel space, which causes huge demands in computation time and energy resources.

We propose to circumvent this drawback by introducing an explicit separation of the compressive from the generative learning phase (see Fig. 2). To achieve this, we utilize an autoencoding model which learns a space that is perceptually equivalent to the image space, but offers significantly reduced computational complexity.

Such an approach offers several advantages: (i) By leaving the high-dimensional image space, we obtain DMs which are computationally much more efficient because sampling is performed on a low-dimensional space. (ii) We exploit the inductive bias of DMs inherited from their UNet architecture [71], which makes them particularly effective for data with spatial structure and therefore alleviates the need for aggressive, quality-reducing compression levels as required by previous approaches [23, 66]. (iii) Finally, we obtain general-purpose compression models whose latent space can be used to train multiple generative models and which can also be utilized for other downstream applications such as single-image CLIP-guided synthesis [25].

3.1. Perceptual Image Compression

Our perceptual compression model is based on previous work [23] and consists of an autoencoder trained by a combination of a perceptual loss [106] and a patch-based [33] adversarial objective [20, 23, 103]. This ensures that the reconstructions are confined to the image manifold by enforcing local realism and avoids the blurriness introduced by relying solely on pixel-space losses such as $L_2$ or $L_1$ objectives.

More precisely, given an image $x \in \mathbb{R}^{H \times W \times 3}$ in RGB space, the encoder $\mathcal{E}$ encodes $x$ into a latent representation $z = \mathcal{E}(x)$, and the decoder $\mathcal{D}$ reconstructs the image from the latent, giving $\tilde{x} = \mathcal{D}(z) = \mathcal{D}(\mathcal{E}(x))$, where $z \in \mathbb{R}^{h \times w \times c}$. Importantly, the encoder downsamples the image by a factor $f = H/h = W/w$, and we investigate different downsampling factors $f = 2^m$, with $m \in \mathbb{N}$.

In order to avoid arbitrarily high-variance latent spaces, we experiment with two different kinds of regularizations. The first variant, KL-reg., imposes a slight KL-penalty towards a standard normal on the learned latent, similar to a VAE [46, 69], whereas VQ-reg. uses a vector quantization layer [96] within the decoder. This model can be interpreted as a VQGAN [23] but with the quantization layer absorbed by the decoder. Because our subsequent DM is designed to work with the two-dimensional structure of our learned latent space $z = \mathcal{E}(x)$, we can use relatively mild compression rates and achieve very good reconstructions. This is in contrast to previous works [23, 66], which relied on an arbitrary 1D ordering of the learned space $z$ to model its distribution autoregressively and thereby ignored much of the inherent structure of $z$. Hence, our compression model preserves details of $x$ better (see Tab. 8). The full objective and training details can be found in the supplement.
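To make the relation f = H/h = W/w concrete, the following minimal Python sketch computes the latent shape for a given exponent m. The helper names `latent_shape` and `compression_ratio` and the channel count c = 3 used in the example are illustrative assumptions, not values taken from the released implementation.

```python
from typing import Tuple


def latent_shape(height: int, width: int, m: int, channels: int) -> Tuple[int, int, int]:
    """Return (h, w, c) for an H x W x 3 image and downsampling factor f = 2**m."""
    f = 2 ** m
    if height % f or width % f:
        raise ValueError("H and W must be divisible by f = 2**m")
    return height // f, width // f, channels


def compression_ratio(height: int, width: int, m: int, channels: int) -> float:
    """Number of values in the RGB image divided by the number of values in the latent."""
    h, w, c = latent_shape(height, width, m, channels)
    return (height * width * 3) / (h * w * c)


# Example with f = 4 (m = 2) and a hypothetical 3-channel latent.
print(latent_shape(256, 256, m=2, channels=3))       # (64, 64, 3)
print(compression_ratio(256, 256, m=2, channels=3))  # 16.0
```

With m = 0 the function returns the input resolution, which corresponds to the pixel-space case.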

3.2. Latent Diffusion Models

Diffusion Models [82] are probabilistic models designed to learn a data distribution $p(x)$ by gradually denoising a normally distributed variable, which corresponds to learning the reverse process of a fixed Markov Chain of length $T$. For image synthesis, the most successful models [15, 30, 72] rely on a reweighted variant of the variational lower bound on $p(x)$, which mirrors denoising score-matching [85]. These models can be interpreted as an equally weighted sequence of denoising autoencoders $\epsilon_\theta(x_t, t)$; $t = 1 \dots T$, which are trained to predict a denoised variant of their input $x_t$, where $x_t$ is a noisy version of the input $x$. The corresponding objective can be simplified to (Sec. B)

$$L_{DM} = \mathbb{E}_{x, \epsilon \sim \mathcal{N}(0,1), t} \left[ \| \epsilon - \epsilon_\theta(x_t, t) \|_2^2 \right], \tag{1}$$

with $t$ uniformly sampled from $\{1, \dots, T\}$.
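As a rough illustration of Eq. 1, the NumPy sketch below draws one Monte Carlo sample of the objective. This section does not spell out the forward process, so the sketch assumes the common DDPM closed form $x_t = \sqrt{\bar\alpha_t}\,x + \sqrt{1 - \bar\alpha_t}\,\epsilon$ with a linear beta schedule; the schedule values and the names `make_alpha_bar`, `q_sample` and `dm_loss` are assumptions made for this example, and `eps_model` stands in for the trained network $\epsilon_\theta$.

```python
import numpy as np


def make_alpha_bar(T: int, beta_start: float = 1e-4, beta_end: float = 0.02) -> np.ndarray:
    """Cumulative products of (1 - beta_t) for an assumed linear beta schedule."""
    betas = np.linspace(beta_start, beta_end, T)
    return np.cumprod(1.0 - betas)


def q_sample(x: np.ndarray, t: int, eps: np.ndarray, alpha_bar: np.ndarray) -> np.ndarray:
    """Noisy input x_t for a timestep t in {1, ..., T} (assumed DDPM closed form)."""
    a = alpha_bar[t - 1]
    return np.sqrt(a) * x + np.sqrt(1.0 - a) * eps


def dm_loss(eps_model, x: np.ndarray, alpha_bar: np.ndarray, rng: np.random.Generator) -> float:
    """One-sample estimate of Eq. 1: squared L2 distance between true and predicted noise."""
    T = len(alpha_bar)
    t = int(rng.integers(1, T + 1))      # t uniform on {1, ..., T}
    eps = rng.standard_normal(x.shape)   # eps ~ N(0, I)
    x_t = q_sample(x, t, eps, alpha_bar)
    return float(np.sum((eps - eps_model(x_t, t)) ** 2))


# Usage with a placeholder "network" that always predicts zero noise.
rng = np.random.default_rng(0)
alpha_bar = make_alpha_bar(T=1000)
x = rng.uniform(-1.0, 1.0, size=(32, 32, 3))
loss = dm_loss(lambda x_t, t: np.zeros_like(x_t), x, alpha_bar, rng)
```

Practical implementations often average over elements and over a batch instead of summing; that only rescales the objective.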

Generative Modeling of Latent Representations. With our trained perceptual compression models consisting of $\mathcal{E}$ and $\mathcal{D}$, we now have access to an efficient, low-dimensional latent space in which high-frequency, imperceptible details are abstracted away. Compared to the high-dimensional pixel space, this space is more suitable for likelihood-based generative models, as they can now (i) focus on the important, semantic bits of the data and (ii) train in a lower dimensional, computationally much more efficient space.

Unlike previous work that relied on autoregressive,

attention-based transformer models in a highly compressed,

discrete latent space [23,66, 103], we can take advantage of

image-specific inductive biases that our model offers. This

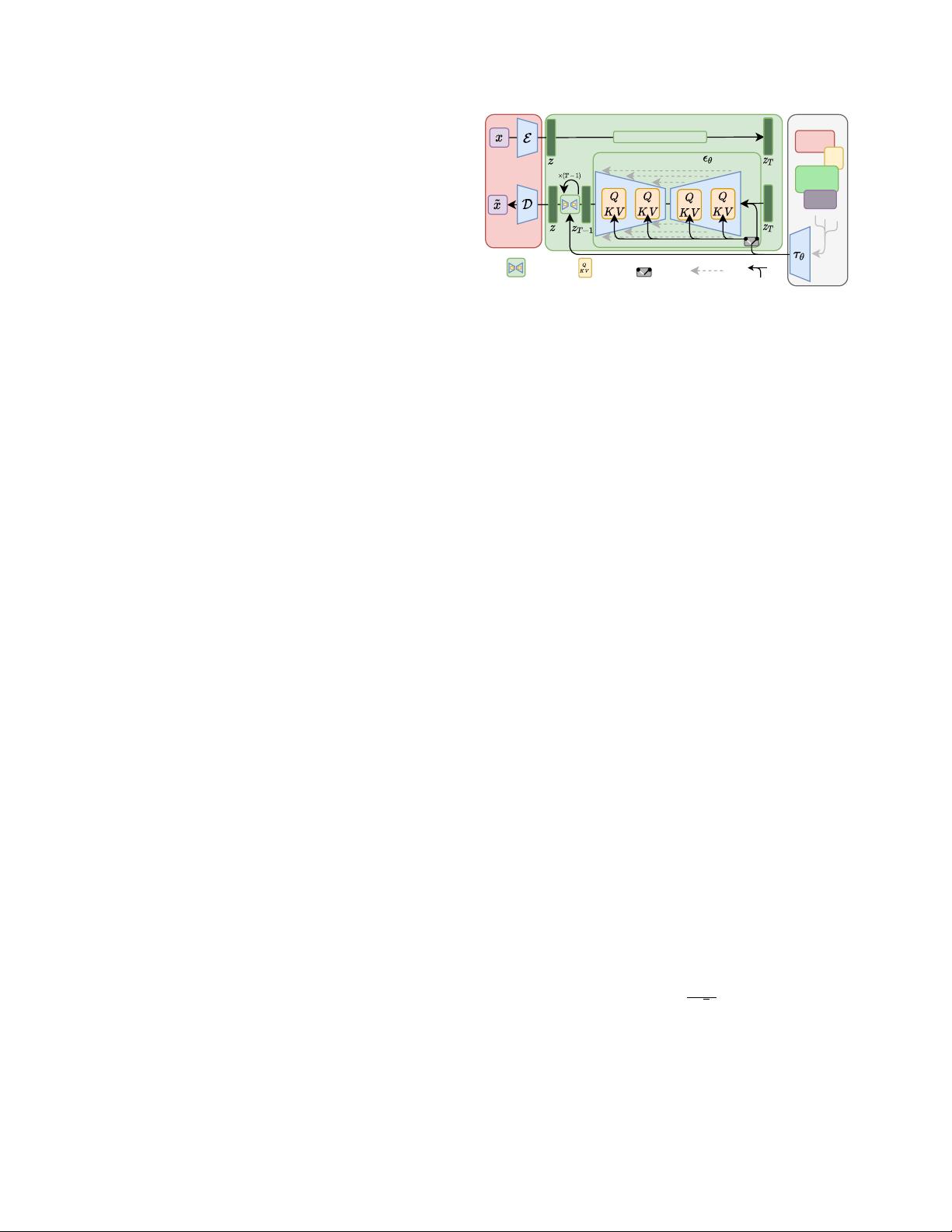

Semantic

Map

crossattention

Latent Space

Conditioning

Text

Diffusion Process

denoising step switch skip connection

Repres

entations

Pixel Space

Images

Denoising U-Net

concat

Figure 3. We condition LDMs either via concatenation or by a

more general cross-attention mechanism. See Sec. 3.3

includes the ability to build the underlying UNet primar-

ily from 2D convolutional layers, and further focusing the

objective on the perceptually most relevant bits using the

reweighted bound, which now reads

L

LDM

:= E

E(x),∼N(0,1),t

h

k −

θ

(z

t

, t)k

2

2

i

. (2)

The neural backbone

θ

(◦, t) of our model is realized as a

time-conditional UNet [71]. Since the forward process is

fixed, z

t

can be efficiently obtained from E during training,

and samples from p(z) can be decoded to image space with

a single pass through D.
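The sketch below illustrates the point of Eq. 2: the denoiser only ever sees inputs at latent resolution. It is a toy under stated assumptions: `toy_encoder` is plain average pooling used as a stand-in for the learned encoder $\mathcal{E}$, the noising step assumes the common DDPM closed form with a linear beta schedule, and all function names are hypothetical.

```python
import numpy as np


def toy_encoder(x: np.ndarray, f: int) -> np.ndarray:
    """Stand-in for the encoder: average-pool an (H, W, C) array by factor f."""
    H, W, C = x.shape
    return x.reshape(H // f, f, W // f, f, C).mean(axis=(1, 3))


def ldm_loss(eps_model, x, f, alpha_bar, rng) -> float:
    """One-sample estimate of Eq. 2, computed on z = toy_encoder(x) instead of x."""
    z = toy_encoder(x, f)
    t = int(rng.integers(1, len(alpha_bar) + 1))   # t uniform on {1, ..., T}
    eps = rng.standard_normal(z.shape)             # eps ~ N(0, I) in latent space
    a = alpha_bar[t - 1]
    z_t = np.sqrt(a) * z + np.sqrt(1.0 - a) * eps  # assumed DDPM forward process
    return float(np.sum((eps - eps_model(z_t, t)) ** 2))


rng = np.random.default_rng(0)
alpha_bar = np.cumprod(1.0 - np.linspace(1e-4, 0.02, 1000))  # assumed schedule
x = rng.uniform(-1.0, 1.0, size=(256, 256, 3))

seen_shapes = []

def zero_model(z_t, t):
    """Placeholder for eps_theta that records the shape it is evaluated on."""
    seen_shapes.append(z_t.shape)
    return np.zeros_like(z_t)

loss = ldm_loss(zero_model, x, f=4, alpha_bar=alpha_bar, rng=rng)
print(seen_shapes[0])  # (64, 64, 3)
```

For f = 4 the placeholder network is evaluated on 16 times fewer spatial positions than it would be in pixel space, which is where the savings in training and sampling cost come from.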

3.3. Conditioning Mechanisms

Similar to other types of generative models [56, 83], diffusion models are in principle capable of modeling conditional distributions of the form $p(z|y)$. This can be implemented with a conditional denoising autoencoder $\epsilon_\theta(z_t, t, y)$ and paves the way to controlling the synthesis process through inputs $y$ such as text [68], semantic maps [33, 61] or other image-to-image translation tasks [34].

In the context of image synthesis, however, combining the generative power of DMs with other types of conditionings beyond class-labels [15] or blurred variants of the input image [72] is so far an under-explored area of research.

We turn DMs into more flexible conditional image gener-

ators by augmenting their underlying UNet backbone with

the cross-attention mechanism [97], which is effective for

learning attention-based models of various input modali-

ties [35,36]. To pre-process y from various modalities (such

as language prompts) we introduce a domain specific en-

coder τ

θ

that projects y to an intermediate representation

τ

θ

(y) ∈ R

M×d

τ

, which is then mapped to the intermediate

layers of the UNet via a cross-attention layer implementing

Attention(Q, K, V ) = softmax

QK

T

√

d

· V , with

Q = W

(i)

Q

· ϕ

i

(z

t

), K = W

(i)

K

· τ

θ

(y), V = W

(i)

V

· τ

θ

(y).

Here, ϕ

i

(z

t

) ∈ R

N×d

i

denotes a (flattened) intermediate

representation of the UNet implementing

θ

and W

(i)

V

∈

4

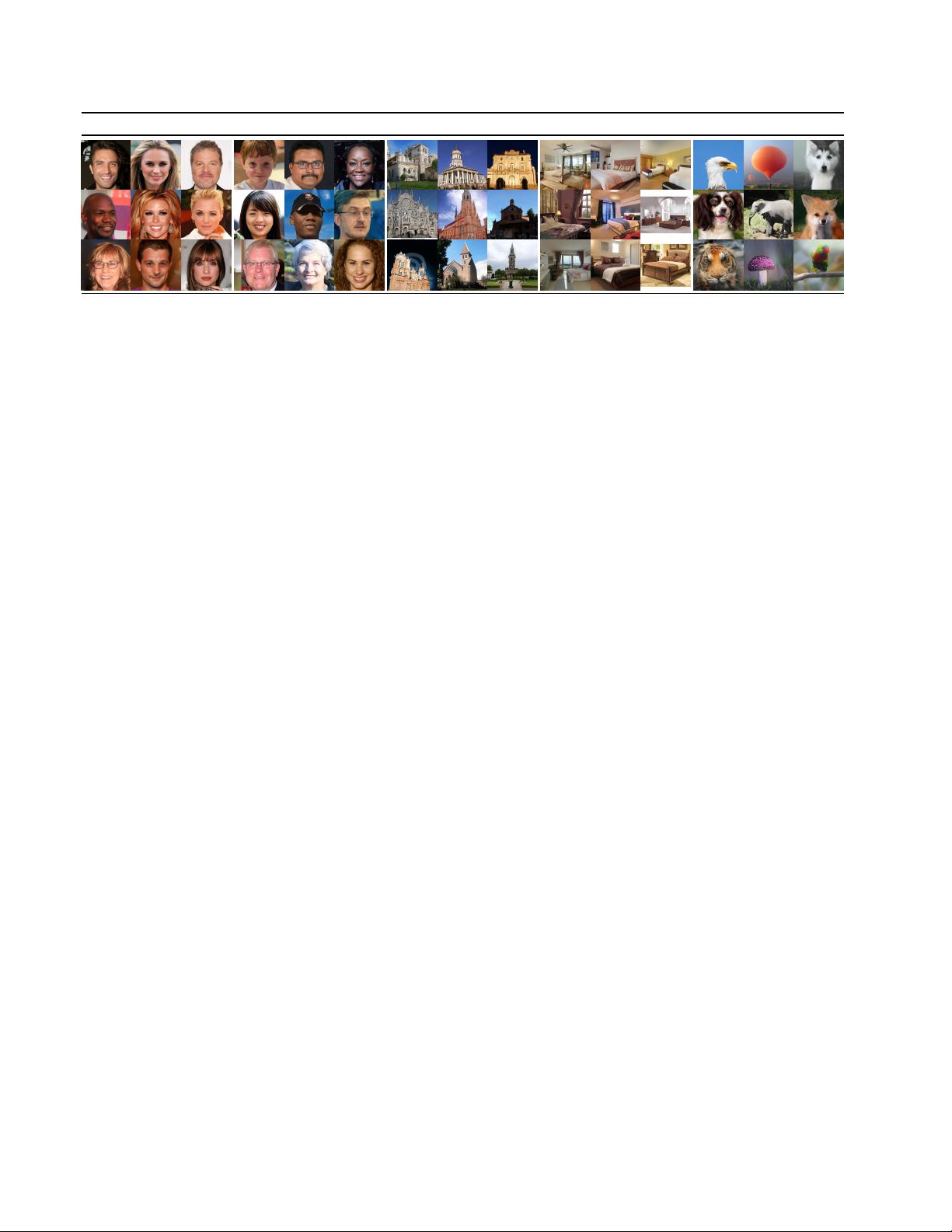

CelebAHQ FFHQ LSUN-Churches LSUN-Beds ImageNet

Figure 4. Samples from LDMs trained on CelebAHQ [39], FFHQ [41], LSUN-Churches [102], LSUN-Bedrooms [102] and class-

conditional ImageNet [12], each with a resolution of 256 × 256. Best viewed when zoomed in. For more samples cf . the supplement.

R

d×d

i

, W

(i)

Q

∈ R

d×d

τ

& W

(i)

K

∈ R

d×d

τ

are learnable pro-

jection matrices [36, 97]. See Fig. 3 for a visual depiction.
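The cross-attention layer described above can be sketched in a few lines of NumPy. This is a single-head, unbatched illustration with random weights, not the released implementation. The sketch stores features as rows and picks the projection shapes so that all three products are defined: `W_q` acts on the flattened UNet features and `W_k`, `W_v` act on the conditioning tokens. That shape convention and the names `d_phi`, `softmax` and `cross_attention` are assumptions of this example.

```python
import numpy as np


def softmax(a: np.ndarray, axis: int = -1) -> np.ndarray:
    """Numerically stable softmax along the given axis."""
    a = a - a.max(axis=axis, keepdims=True)
    e = np.exp(a)
    return e / e.sum(axis=axis, keepdims=True)


def cross_attention(phi, tau, W_q, W_k, W_v):
    """Single-head cross-attention.

    phi: (N, d_phi) flattened UNet features (the queries come from the image side)
    tau: (M, d_tau) conditioning tokens, e.g. an encoded text prompt
    W_q: (d, d_phi); W_k, W_v: (d, d_tau)
    Returns an (N, d) array: one conditioning-aware vector per spatial position.
    """
    Q = phi @ W_q.T                                 # (N, d)
    K = tau @ W_k.T                                 # (M, d)
    V = tau @ W_v.T                                 # (M, d)
    d = Q.shape[-1]
    weights = softmax(Q @ K.T / np.sqrt(d), axis=-1)  # (N, M), rows sum to 1
    return weights @ V


rng = np.random.default_rng(0)
N, M, d_phi, d_tau, d = 64, 7, 32, 16, 8
phi = rng.standard_normal((N, d_phi))
tau = rng.standard_normal((M, d_tau))
W_q = rng.standard_normal((d, d_phi))
W_k = rng.standard_normal((d, d_tau))
W_v = rng.standard_normal((d, d_tau))
out = cross_attention(phi, tau, W_q, W_k, W_v)
print(out.shape)  # (64, 8)
```

Because each row of the attention weights sums to one, every spatial position receives a convex combination of the value vectors derived from the conditioning tokens.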

Based on image-conditioning pairs, we then learn the conditional LDM via

$$L_{LDM} := \mathbb{E}_{\mathcal{E}(x), y, \epsilon \sim \mathcal{N}(0,1), t} \left[ \| \epsilon - \epsilon_\theta(z_t, t, \tau_\theta(y)) \|_2^2 \right], \tag{3}$$

where both $\tau_\theta$ and $\epsilon_\theta$ are jointly optimized via Eq. 3. This conditioning mechanism is flexible as $\tau_\theta$ can be parameterized with domain-specific experts, e.g. (unmasked) transformers [97] when $y$ are text prompts (see Sec. 4.3.1).

4. Experiments

LDMs provide a means for flexible and computationally tractable diffusion-based image synthesis of various image modalities, which we empirically show in the following. Firstly, however, we analyze the gains of our models compared to pixel-based diffusion models in both training and inference. Interestingly, we find that LDMs trained in VQ-regularized latent spaces sometimes achieve better sample quality, even though the reconstruction capabilities of VQ-regularized first stage models slightly fall behind those of their continuous counterparts, cf. Tab. 8. A visual comparison between the effects of first stage regularization schemes on LDM training and their generalization abilities to resolutions $> 256^2$ can be found in Appendix D.1. In E.2 we list details on architecture, implementation, training and evaluation for all results presented in this section.

4.1. On Perceptual Compression Tradeoffs

This section analyzes the behavior of our LDMs with different downsampling factors $f \in \{1, 2, 4, 8, 16, 32\}$ (abbreviated as LDM-f, where LDM-1 corresponds to pixel-based DMs). To obtain a comparable test-field, we fix the computational resources to a single NVIDIA A100 for all experiments in this section and train all models for the same number of steps and with the same number of parameters.

Tab. 8 shows hyperparameters and reconstruction performance of the first stage models used for the LDMs compared in this section. Fig. 6 shows sample quality as a function of training progress for 2M steps of class-conditional models on the ImageNet [12] dataset. We see that i) small downsampling factors for LDM-{1,2} result in slow training progress, whereas ii) overly large values of f cause stagnating fidelity after comparably few training steps. Revisiting the analysis above (Fig. 1 and 2) we attribute this to i) leaving most of perceptual compression to the diffusion model and ii) too strong first stage compression resulting in information loss and thus limiting the achievable quality. LDM-{4-16} strike a good balance between efficiency and perceptually faithful results, which manifests in a significant FID [29] gap of 38 between pixel-based diffusion (LDM-1) and LDM-8 after 2M training steps.

In Fig. 7, we compare models trained on CelebA-HQ [39] and ImageNet in terms of sampling speed for different numbers of denoising steps with the DDIM sampler [84] and plot it against FID-scores [29]. LDM-{4-8} outperform models with unsuitable ratios of perceptual and conceptual compression. Especially compared to pixel-based LDM-1, they achieve much lower FID scores while simultaneously significantly increasing sample throughput. Complex datasets such as ImageNet require reduced compression rates to avoid reducing quality. In summary, LDM-4 and -8 offer the best conditions for achieving high-quality synthesis results.

4.2. Image Generation with Latent Diffusion

We train unconditional models of $256^2$ images on CelebA-HQ [39], FFHQ [41], LSUN-Churches and -Bedrooms [102] and evaluate the i) sample quality and ii) their coverage of the data manifold using i) FID [29] and ii) Precision-and-Recall [50]. Tab. 1 summarizes our results. On CelebA-HQ, we report a new state-of-the-art FID of 5.11, outperforming previous likelihood-based models as well as GANs. We also outperform LSGM [93] where a latent diffusion model is trained jointly together with the first stage. In contrast, we train diffusion models in a fixed space
