See discussions, stats, and author profiles for this publication at: https://www.researchgate.net/publication/306093646

Neural Relation Extraction with Selective Attention over Instances

Conference Paper · January 2016

DOI: 10.18653/v1/P16-1200

CITATIONS

835

READS

2,354

5 authors, including:

Some of the authors of this publication are also working on these related projects:

Sememe View project

Incorporating Relation Paths in Neural Relation Extraction View project

Zhiyuan Liu

Tsinghua University

396 PUBLICATIONS12,482 CITATIONS

SEE PROFILE

All content following this page was uploaded by Zhiyuan Liu on 16 August 2017.

The user has requested enhancement of the downloaded file.

Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics, pages 2124–2133,

Berlin, Germany, August 7-12, 2016.

c

2016 Association for Computational Linguistics

Neural Relation Extraction with Selective Attention over Instances

Yankai Lin

1

, Shiqi Shen

1

, Zhiyuan Liu

1,2∗

, Huanbo Luan

1

, Maosong Sun

1,2

1

Department of Computer Science and Technology,

State Key Lab on Intelligent Technology and Systems,

National Lab for Information Science and Technology, Tsinghua University, Beijing, China

2

Jiangsu Collaborative Innovation Center for Language Competence, Jiangsu, China

Abstract

Distant supervised relation extraction has

been widely used to find novel relational

facts from text. However, distant su-

pervision inevitably accompanies with the

wrong labelling problem, and these noisy

data will substantially hurt the perfor-

mance of relation extraction. To allevi-

ate this issue, we propose a sentence-level

attention-based model for relation extrac-

tion. In this model, we employ convolu-

tional neural networks to embed the se-

mantics of sentences. Afterwards, we

build sentence-level attention over multi-

ple instances, which is expected to dy-

namically reduce the weights of those

noisy instances. Experimental results on

real-world datasets show that, our model

can make full use of all informative sen-

tences and effectively reduce the influence

of wrong labelled instances. Our model

achieves significant and consistent im-

provements on relation extraction as com-

pared with baselines. The source code of

this paper can be obtained from https:

//github.com/thunlp/NRE.

1 Introduction

In recent years, various large-scale knowledge

bases (KBs) such as Freebase (Bollacker et al.,

2008), DBpedia (Auer et al., 2007) and YAGO

(Suchanek et al., 2007) have been built and widely

used in many natural language processing (NLP)

tasks, including web search and question answer-

ing. These KBs mostly compose of relational facts

with triple format, e.g., (Microsoft, founder,

Bill Gates). Although existing KBs contain a

∗

Corresponding author: Zhiyuan Liu (li-

uzy@tsinghua.edu.cn).

massive amount of facts, they are still far from

complete compared to the infinite real-world facts.

To enrich KBs, many efforts have been invested

in automatically finding unknown relational facts.

Therefore, relation extraction (RE), the process of

generating relational data from plain text, is a cru-

cial task in NLP.

Most existing supervised RE systems require a

large amount of labelled relation-specific training

data, which is very time consuming and labor in-

tensive. (Mintz et al., 2009) proposes distant su-

pervision to automatically generate training data

via aligning KBs and texts. They assume that if

two entities have a relation in KBs, then all sen-

tences that contain these two entities will express

this relation. For example, (Microsoft, founder,

Bill Gates) is a relational fact in KB. Distant su-

pervision will regard all sentences that contain

these two entities as active instances for relation

founder. Although distant supervision is an

effective strategy to automatically label training

data, it always suffers from wrong labelling prob-

lem. For example, the sentence “Bill Gates ’s turn

to philanthropy was linked to the antitrust prob-

lems Microsoft had in the U.S. and the European

union.” does not express the relation founder

but will still be regarded as an active instance.

Hence, (Riedel et al., 2010; Hoffmann et al., 2011;

Surdeanu et al., 2012) adopt multi-instance learn-

ing to alleviate the wrong labelling problem. The

main weakness of these conventional methods is

that most features are explicitly derived from NLP

tools such as POS tagging and the errors generated

by NLP tools will propagate in these methods.

Some recent works (Socher et al., 2012; Zeng

et al., 2014; dos Santos et al., 2015) attempt to

use deep neural networks in relation classifica-

tion without handcrafted features. These meth-

ods build classifier based on sentence-level anno-

tated data, which cannot be applied in large-scale

2124

r

1

r

2

r

3

r

c

CNN CNN CNN CNN

m

1

m

2

m

3

m

c

r

α

1

α

2

α

3

α

c

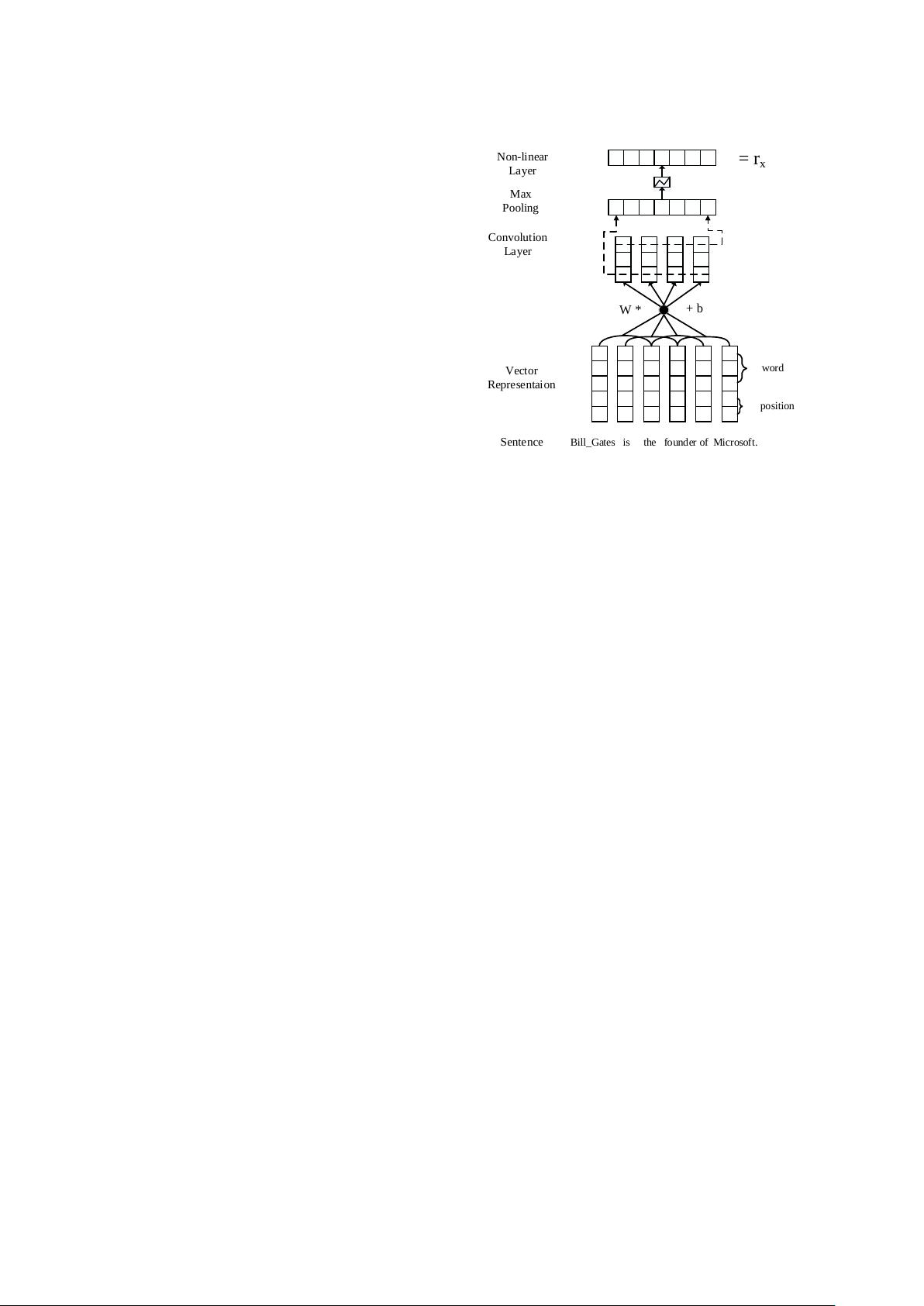

Figure 1: The architecture of sentence-level

attention-based CNN, where m

i

indicates the orig-

inal sentence for an entity pair, α

i

is the weight

given by sentence-level attention.

KBs due to the lack of human-annotated train-

ing data. Therefore, (Zeng et al., 2015) incor-

porates multi-instance learning with neural net-

work model, which can build relation extractor

based on distant supervision data. Although the

method achieves significant improvement in re-

lation extraction, it is still far from satisfactory.

The method assumes that at least one sentence that

mentions these two entities will express their rela-

tion, and only selects the most likely sentence for

each entity pair in training and prediction. It’s ap-

parent that the method will lose a large amount

of rich information containing in neglected sen-

tences.

In this paper, we propose a sentence-level

attention-based convolutional neural network

(CNN) for distant supervised relation extraction.

As illustrated in Fig. 1, we employ a CNN to

embed the semantics of sentences. Afterwards, to

utilize all informative sentences, we represent the

relation as semantic composition of sentence em-

beddings. To address the wrong labelling prob-

lem, we build sentence-level attention over mul-

tiple instances, which is expected to dynamically

reduce the weights of those noisy instances. Fi-

nally, we extract relation with the relation vector

weighted by sentence-level attention. We evaluate

our model on a real-world dataset in the task of

relation extraction. The experimental results show

that our model achieves significant and consistent

improvements in relation extraction as compared

with the state-of-the-art methods.

The contributions of this paper can be summa-

rized as follows:

• As compared to existing neural relation ex-

traction model, our model can make full use

of all informative sentences of each entity

pair.

• To address the wrong labelling problem in

distant supervision, we propose selective

attention to de-emphasize those noisy in-

stances.

• In the experiments, we show that selective

attention is beneficial to two kinds of CNN

models in the task of relation extraction.

2 Related Work

Relation extraction is one of the most impor-

tant tasks in NLP. Many efforts have been invested

in relation extraction, especially in supervised re-

lation extraction. Most of these methods need a

great deal of annotated data, which is time con-

suming and labor intensive. To address this issue,

(Mintz et al., 2009) aligns plain text with Free-

base by distant supervision. However, distant su-

pervision inevitably accompanies with the wrong

labelling problem. To alleviate the wrong la-

belling problem, (Riedel et al., 2010) models dis-

tant supervision for relation extraction as a multi-

instance single-label problem, and (Hoffmann et

al., 2011; Surdeanu et al., 2012) adopt multi-

instance multi-label learning in relation extraction.

Multi-instance learning was originally proposed to

address the issue of ambiguously-labelled training

data when predicting the activity of drugs (Diet-

terich et al., 1997). Multi-instance learning con-

siders the reliability of the labels for each instance.

(Bunescu and Mooney, 2007) connects weak su-

pervision with multi-instance learning and extends

it to relation extraction. But all the feature-based

methods depend strongly on the quality of the fea-

tures generated by NLP tools, which will suffer

from error propagation problem.

Recently, deep learning (Bengio, 2009) has

been widely used for various areas, including com-

puter vision, speech recognition and so on. It has

also been successfully applied to different NLP

tasks such as part-of-speech tagging (Collobert

et al., 2011), sentiment analysis (dos Santos and

Gatti, 2014), parsing (Socher et al., 2013), and

machine translation (Sutskever et al., 2014). Due

to the recent success in deep learning, many re-

searchers have investigated the possibility of us-

ing neural networks to automatically learn features

2125

for relation extraction. (Socher et al., 2012) uses

a recursive neural network in relation extraction.

They parse the sentences first and then represent

each node in the parsing tree as a vector. More-

over, (Zeng et al., 2014; dos Santos et al., 2015)

adopt an end-to-end convolutional neural network

for relation extraction. Besides, (Xie et al., 2016)

attempts to incorporate the text information of en-

tities for relation extraction.

Although these methods achieve great success,

they still extract relations on sentence-level and

suffer from a lack of sufficient training data. In

addition, the multi-instance learning strategy of

conventional methods cannot be easily applied in

neural network models. Therefore, (Zeng et al.,

2015) combines at-least-one multi-instance learn-

ing with neural network model to extract relations

on distant supervision data. However, they assume

that only one sentence is active for each entity pair.

Hence, it will lose a large amount of rich informa-

tion containing in those neglected sentences. Dif-

ferent from their methods, we propose sentence-

level attention over multiple instances, which can

utilize all informative sentences.

The attention-based models have attracted a lot

of interests of researchers recently. The selectiv-

ity of attention-based models allows them to learn

alignments between different modalities. It has

been applied to various areas such as image clas-

sification (Mnih et al., 2014), speech recognition

(Chorowski et al., 2014), image caption generation

(Xu et al., 2015) and machine translation (Bah-

danau et al., 2014). To the best of our knowl-

edge, this is the first effort to adopt attention-based

model in distant supervised relation extraction.

3 Methodology

Given a set of sentences {x

1

, x

2

, · · · , x

n

} and

two corresponding entities, our model measures

the probability of each relation r. In this section,

we will introduce our model in two main parts:

• Sentence Encoder. Given a sentence x and

two target entities, a convolutional neutral

network (CNN) is used to construct a dis-

tributed representation x of the sentence.

• Selective Attention over Instances. When

the distributed vector representations of all

sentences are learnt, we use sentence-level at-

tention to select the sentences which really

express the corresponding relation.

3.1 Sentence Encoder

Bill_Gates is the founder of Microsoft.

Sentence

Vector

Representaion

word

position

Convolution

Layer

Max

Pooling

= r

x

W *

+ b

Non-linear

Layer

Figure 2: The architecture of CNN/PCNN used for

sentence encoder.

As shown in Fig. 2, we transform the sentence

x into its distributed representation x by a CNN.

First, words in the sentence are transformed into

dense real-valued feature vectors. Next, convo-

lutional layer, max-pooling layer and non-linear

transformation layer are used to construct a dis-

tributed representation of the sentence, i.e., x.

3.1.1 Input Representation

The inputs of the CNN are raw words of the

sentence x. We first transform words into low-

dimensional vectors. Here, each input word is

transformed into a vector via word embedding ma-

trix. In addition, to specify the position of each en-

tity pair, we also use position embeddings for all

words in the sentence.

Word Embeddings. Word embeddings aim to

transform words into distributed representations

which capture syntactic and semantic meanings

of the words. Given a sentence x consisting of

m words x = {w

1

, w

2

, · · · , w

m

}, every word

w

i

is represented by a real-valued vector. Word

representations are encoded by column vectors in

an embedding matrix V ∈ R

d

a

×|V |

where V is a

fixed-sized vocabulary.

Position Embeddings. In the task of relation

extraction, the words close to the target entities are

usually informative to determine the relation be-

tween entities. Similar to (Zeng et al., 2014), we

use position embeddings specified by entity pairs.

It can help the CNN to keep track of how close

2126

评论0