MDETR - Modulated Detection for End-to-End Multi-Modal Understanding

Aishwarya Kamath

1

Mannat Singh

2

Yann LeCun

123

Gabriel Synnaeve

2

Ishan Misra

2

Nicolas Carion

3

1

NYU Center for Data Science

2

Facebook AI Research

3

NYU Courant Institute

{aish, yann.lecun, nc2794}@nyu.edu, {mannatsingh,imisra,gab}@fb.com

Abstract

Multi-modal reasoning systems rely on a pre-trained ob-

ject detector to extract regions of interest from the im-

age. However, this crucial module is typically used as a

black box, trained independently of the downstream task

and on a fixed vocabulary of objects and attributes. This

makes it challenging for such systems to capture the long

tail of visual concepts expressed in free form text. In this

paper we propose MDETR, an end-to-end modulated de-

tector that detects objects in an image conditioned on a

raw text query, like a caption or a question. We use a

transformer-based architecture to reason jointly over text

and image by fusing the two modalities at an early stage of

the model. We pre-train the network on 1.3M text-image

pairs, mined from pre-existing multi-modal datasets hav-

ing explicit alignment between phrases in text and objects

in the image. We then fine-tune on several downstream

tasks such as phrase grounding, referring expression com-

prehension and segmentation, achieving state-of-the-art re-

sults on popular benchmarks. We also investigate the util-

ity of our model as an object detector on a given label set

when fine-tuned in a few-shot setting. We show that our

pre-training approach provides a way to handle the long

tail of object categories which have very few labelled in-

stances. Our approach can be easily extended for visual

question answering, achieving competitive performance on

GQA and CLEVR. The code and models are available at

https://github.com/ashkamath/mdetr.

1. Introduction

Object detection forms an integral component of most

state-of-the-art multi-modal understanding systems [6, 28],

typically used as a black-box to detect a fixed vocabulary

of concepts in an image followed by multi-modal align-

ment. This “pipelined” approach limits co-training with

other modalities as context and restricts the downstream

model to only have access to the detected objects and not the

whole image. In addition, the detection system is usually

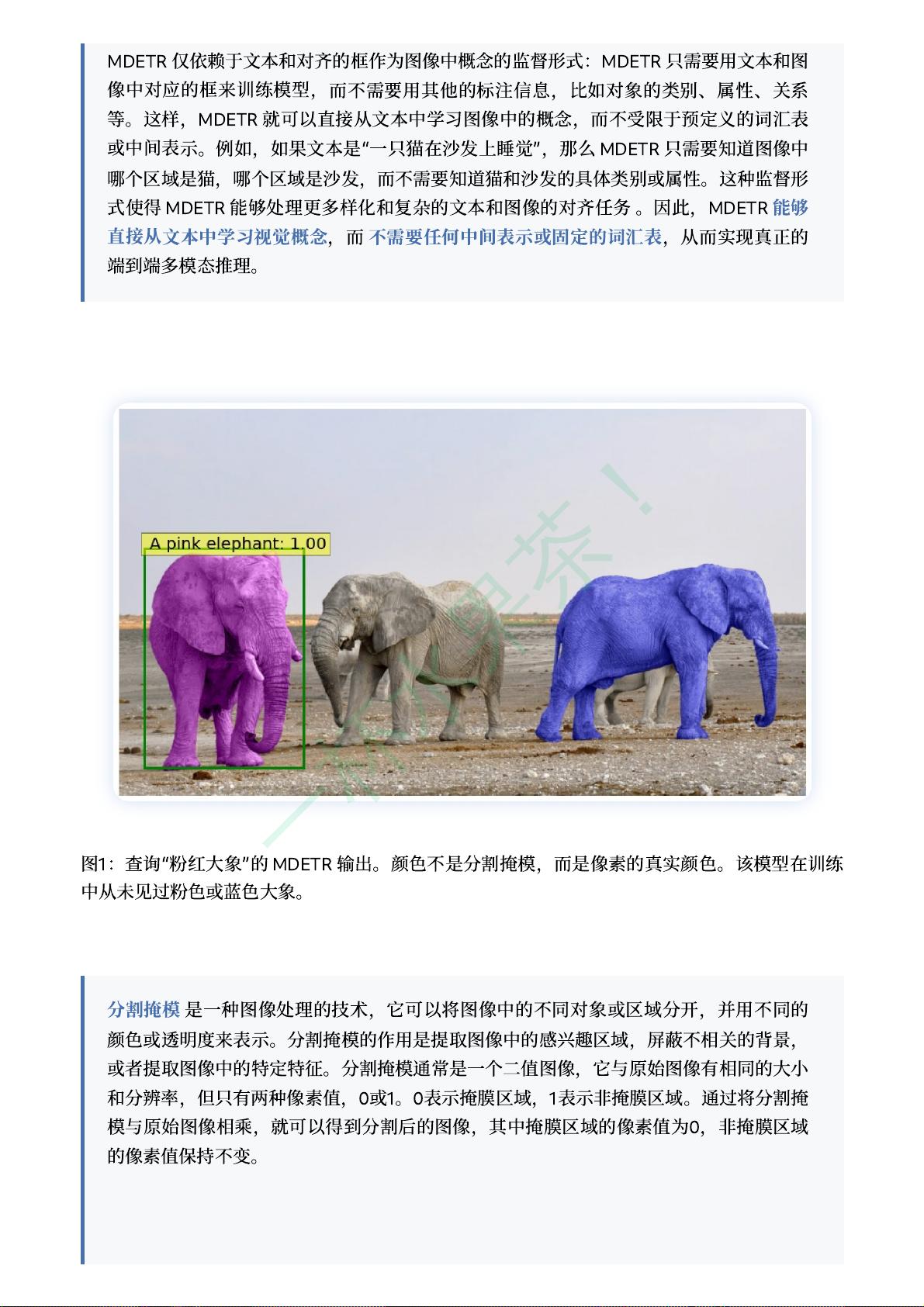

Figure 1: Output of MDETR for the query “A pink elephant”. The

colors are not segmentation masks but the real colors of the pixels.

The model has never seen a pink nor a blue elephant in training.

frozen, which prevents further refinement of the model’s

perceptive capability. In the vision-language setting, it im-

plies restricting the vocabulary of the resulting system to

the categories and attributes of the detector, and is often a

bottleneck for performance on these tasks [72]. As a re-

sult, such a system cannot recognize novel combinations of

concepts expressed in free-form text.

A recent line of work [66, 45, 13] considers the problem

of text-conditioned object detection. These methods extend

mainstream one-stage and two-stage detection architectures

to achieve this goal. However, to the best of our knowl-

edge, it has not been demonstrated that such detectors can

improve performance on downstream tasks that require rea-

soning over the detected objects, such as visual question an-

swering (VQA). We believe this is because these detectors

are not end-to-end differentiable and thus cannot be trained

in synergy with downstream tasks.

Our method, MDETR, is an end-to-end modulated de-

tector based on the recent DETR [2] detection framework,

and performs object detection in conjunction with natural

language understanding, enabling truly end-to-end multi-

modal reasoning. MDETR relies solely on text and aligned

boxes as a form of supervision for concepts in an image.

Thus, unlike current detection methods, MDETR detects

nuanced concepts from free-form text, and generalizes to

unseen combinations of categories and attributes. We show-

case such a combination as well as modulated detection in

1

arXiv:2104.12763v2 [cs.CV] 12 Oct 2021