
Introduction to Data Mining (English edition, 2nd edition of the original), Pang-Ning Tan (USA), 2019 edition (middle part)


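This book introduces the main principles and techniques used in data mining from an algorithmic perspective. Studying these principles and techniques is essential for understanding how data mining can be applied to various types of data. The topics covered include data preprocessing, predictive modeling, association analysis, cluster analysis, anomaly detection, and avoiding false discoveries. By presenting the basic concepts and algorithms for each topic, the book gives readers the background and methods needed to apply data mining to real problems. Authors: Pang-Ning Tan, Michael Steinbach, Anuj Karpatne, and Vipin Kumar. Pang-Ning Tan is a professor in the Department of Computer Science and Engineering at Michigan State University; his main research interests include data mining, database systems, cyber security, and network analysis.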


Random forests have been empirically found to provide significant improvements in generalization performance that are often comparable, if not superior, to the improvements provided by the AdaBoost algorithm. Random forests are also more robust to overfitting and run much faster than the AdaBoost algorithm.

4.10.7 Empirical Comparison among Ensemble Methods

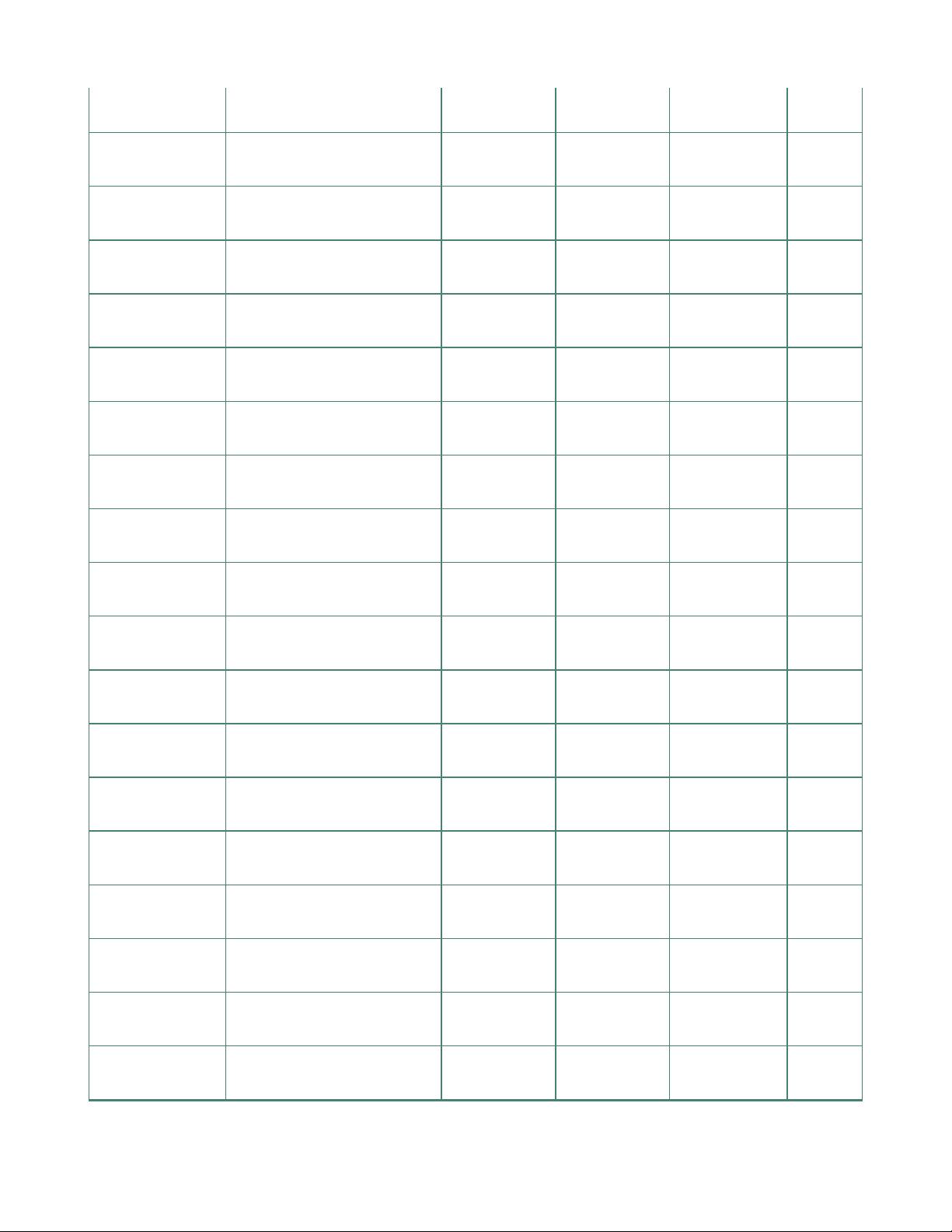

Table 4.5 shows the empirical results obtained when comparing the performance of a decision tree classifier against bagging, boosting, and random forest. The base classifiers used in each ensemble method consist of 50 decision trees. The classification accuracies reported in this table are obtained from tenfold cross-validation. Notice that the ensemble classifiers generally outperform a single decision tree classifier on many of the data sets.
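As a rough illustration of how a tenfold cross-validated accuracy can be computed, the sketch below splits the instance indices into ten disjoint folds and averages the accuracy over the held-out folds. The function names and the `train_and_score` callback are hypothetical, and averaging per-fold accuracies is only one possible convention; the text does not say how the figures in the table were aggregated.

```python
import random


def k_fold_indices(n, k=10, seed=0):
    """Split the indices 0..n-1 into k disjoint folds of near-equal size."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    # Taking every k-th index gives folds whose sizes differ by at most one.
    return [indices[i::k] for i in range(k)]


def cross_validated_accuracy(train_and_score, n, k=10, seed=0):
    """Average the accuracy returned by train_and_score over k held-out folds.

    train_and_score(train_idx, test_idx) is a hypothetical callback that fits
    a classifier on train_idx and returns its accuracy on test_idx.
    """
    folds = k_fold_indices(n, k, seed)
    scores = []
    for i, test_idx in enumerate(folds):
        # Every fold except the i-th one is used for training.
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        scores.append(train_and_score(train_idx, test_idx))
    return sum(scores) / k
```

Each instance appears in exactly one test fold, so every prediction that contributes to the reported accuracy is made by a model that did not see that instance during training.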

Table 4.5. Comparing the accuracy of a decision tree classifier against three ensemble methods.

| Data Set | Number of (Attributes, Classes, Instances) | Decision Tree (%) | Bagging (%) | Boosting (%) | RF (%) |
|---|---|---|---|---|---|
| Anneal | (39, 6, 898) | 92.09 | 94.43 | 95.43 | 95.43 |
| Australia | (15, 2, 690) | 85.51 | 87.10 | 85.22 | 85.80 |
| Auto | (26, 7, 205) | 81.95 | 85.37 | 85.37 | 84.39 |
| Breast | (11, 2, 699) | 95.14 | 96.42 | 97.28 | 96.14 |
| Cleve | (14, 2, 303) | 76.24 | 81.52 | 82.18 | 82.18 |
| Credit | (16, 2, 690) | 85.8 | 86.23 | 86.09 | 85.8 |
| Diabetes | (9, 2, 768) | 72.40 | 76.30 | 73.18 | 75.13 |
| German | (21, 2, 1000) | 70.90 | 73.40 | 73.00 | 74.5 |
| Glass | (10, 7, 214) | 67.29 | 76.17 | 77.57 | 78.04 |
| Heart | (14, 2, 270) | 80.00 | 81.48 | 80.74 | 83.33 |
| Hepatitis | (20, 2, 155) | 81.94 | 81.29 | 83.87 | 83.23 |
| Horse | (23, 2, 368) | 85.33 | 85.87 | 81.25 | 85.33 |
| Ionosphere | (35, 2, 351) | 89.17 | 92.02 | 93.73 | 93.45 |
| Iris | (5, 3, 150) | 94.67 | 94.67 | 94.00 | 93.33 |
| Labor | (17, 2, 57) | 78.95 | 84.21 | 89.47 | 84.21 |
| Led7 | (8, 10, 3200) | 73.34 | 73.66 | 73.34 | 73.06 |
| Lymphography | (19, 4, 148) | 77.03 | 79.05 | 85.14 | 82.43 |
| Pima | (9, 2, 768) | 74.35 | 76.69 | 73.44 | 77.60 |
| Sonar | (61, 2, 208) | 78.85 | 78.85 | 84.62 | 85.58 |
| Tic-tac-toe | (10, 2, 958) | 83.72 | 93.84 | 98.54 | 95.82 |
| Vehicle | (19, 4, 846) | 71.04 | 74.11 | 78.25 | 74.94 |
| Waveform | (22, 3, 5000) | 76.44 | 83.30 | 83.90 | 84.04 |
| Wine | (14, 3, 178) | 94.38 | 96.07 | 97.75 | 97.75 |
| Zoo | (17, 7, 101) | 93.07 | 93.07 | 95.05 | 97.03 |
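One way to make the claim that ensembles "generally outperform" a single tree concrete is to count, per ensemble method, the data sets on which its accuracy is strictly higher than that of the decision tree. The sketch below does this for the figures in Table 4.5; the names `TABLE_4_5` and `count_wins` are illustrative, and treating ties as non-wins is an assumption of this sketch.

```python
# Accuracies (%) transcribed from Table 4.5:
# data set -> (decision tree, bagging, boosting, random forest)
TABLE_4_5 = {
    "Anneal": (92.09, 94.43, 95.43, 95.43),
    "Australia": (85.51, 87.10, 85.22, 85.80),
    "Auto": (81.95, 85.37, 85.37, 84.39),
    "Breast": (95.14, 96.42, 97.28, 96.14),
    "Cleve": (76.24, 81.52, 82.18, 82.18),
    "Credit": (85.8, 86.23, 86.09, 85.8),
    "Diabetes": (72.40, 76.30, 73.18, 75.13),
    "German": (70.90, 73.40, 73.00, 74.5),
    "Glass": (67.29, 76.17, 77.57, 78.04),
    "Heart": (80.00, 81.48, 80.74, 83.33),
    "Hepatitis": (81.94, 81.29, 83.87, 83.23),
    "Horse": (85.33, 85.87, 81.25, 85.33),
    "Ionosphere": (89.17, 92.02, 93.73, 93.45),
    "Iris": (94.67, 94.67, 94.00, 93.33),
    "Labor": (78.95, 84.21, 89.47, 84.21),
    "Led7": (73.34, 73.66, 73.34, 73.06),
    "Lymphography": (77.03, 79.05, 85.14, 82.43),
    "Pima": (74.35, 76.69, 73.44, 77.60),
    "Sonar": (78.85, 78.85, 84.62, 85.58),
    "Tic-tac-toe": (83.72, 93.84, 98.54, 95.82),
    "Vehicle": (71.04, 74.11, 78.25, 74.94),
    "Waveform": (76.44, 83.30, 83.90, 84.04),
    "Wine": (94.38, 96.07, 97.75, 97.75),
    "Zoo": (93.07, 93.07, 95.05, 97.03),
}


def count_wins(table, method_index):
    """Count data sets where the ensemble strictly beats the single tree."""
    return sum(1 for row in table.values() if row[method_index] > row[0])


# Column 0 is the decision tree; columns 1..3 are the three ensembles.
wins = {
    name: count_wins(TABLE_4_5, i)
    for i, name in enumerate(["bagging", "boosting", "random forest"], start=1)
}
print(wins)
```

On these figures, bagging and random forest each come out strictly ahead of the single tree on 20 of the 24 data sets, and boosting on 19. A raw win count says nothing about whether the differences are statistically significant.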

4.11 Class Imbalance Problem

In many data sets there are a disproportionate number of instances that belong to different classes, a property known as skew or class imbalance. For example, consider a health-care application where diagnostic reports are used to decide whether a person has a rare disease. Because of the infrequent nature of the disease, we can expect to observe a smaller number of subjects who are positively diagnosed. Similarly, in credit card fraud detection, fraudulent transactions are greatly outnumbered by legitimate transactions.

The degree of imbalance between the classes varies across different applications and even across different data sets from the same application. For example, the risk for a rare disease may vary across different populations of subjects depending on their dietary and lifestyle choices. However, despite its infrequent occurrence, a correct classification of the rare class often has greater value than a correct classification of the majority class. For example, it may be more dangerous to ignore a patient suffering from a disease than to misdiagnose a healthy person.

More generally, class imbalance poses two challenges for classification. First, it can be difficult to find sufficiently many labeled samples of a rare class. Note that many of the classification methods discussed so far work well only when the training set has a balanced representation of both classes. Although some classifiers are more effective at handling imbalance in the training data than others, e.g., rule-based classifiers and k-NN, they are all impacted if the minority class is not well-represented in the training set. In general, a classifier trained over an imbalanced data set shows a bias toward improving its performance over the majority class, which is often not the desired behavior. As a result, many existing classification models, when trained on an imbalanced data set, may not effectively detect instances of the rare class.

Second, accuracy, which is the traditional measure for evaluating classification performance, is not well-suited for evaluating models in the presence of class imbalance in the test data. For example, if 1% of the credit card transactions are fraudulent, then a trivial model that predicts every transaction as legitimate will have an accuracy of 99% even though it fails to detect any of the fraudulent activities. Thus, there is a need to use alternative evaluation metrics that are sensitive to the skew and can capture criteria of performance other than accuracy.
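The arithmetic behind the 99% figure can be checked with a small sketch. It assumes a hypothetical test set of 1000 transactions, 10 of them fraudulent (label 1), and a trivial model that labels everything legitimate (label 0). The second function, the fraction of fraudulent transactions that are detected (commonly called recall), is used here only as one example of a skew-sensitive measure.

```python
def accuracy(y_true, y_pred):
    """Fraction of instances whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def detected_fraction(y_true, y_pred, positive=1):
    """Fraction of truly positive instances that are predicted positive."""
    preds_on_positives = [p for t, p in zip(y_true, y_pred) if t == positive]
    return sum(p == positive for p in preds_on_positives) / len(preds_on_positives)


# Hypothetical test set: 1% fraudulent (1), 99% legitimate (0).
y_true = [1] * 10 + [0] * 990
# Trivial model: every transaction is predicted as legitimate.
y_pred = [0] * 1000

trivial_accuracy = accuracy(y_true, y_pred)
trivial_detected = detected_fraction(y_true, y_pred)
print(trivial_accuracy, trivial_detected)
```

The accuracy is 0.99 while the detected fraction is 0.0, which is the mismatch the paragraph above describes.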

In this section, we first present some of the generic methods for building classifiers when there is class imbalance in the training set. We then discuss methods for evaluating classification performance and adapting classification decisions in the presence of a skewed test set. In the remainder of this section, we will consider binary classification problems for simplicity, where the minority class is referred to as the positive (+) class while the majority class is referred to as the negative (−) class.

4.11.1 Building Classifiers with Class Imbalance

There are two primary considerations for building classifiers in the presence of class imbalance in the training set. First, we need to ensure that the learning algorithm is trained over a data set that has adequate representation of both the majority and the minority classes. Some common approaches for ensuring this include oversampling and undersampling the training set. Second, having learned a classification model, we need a way to adapt its classification decisions (and thus create an appropriately tuned classifier) to best match the requirements of the imbalanced test set. This is typically done by converting the outputs of the classification model to real-valued scores, and then selecting a suitable threshold on the classification score to match the needs of a test set. Both of these considerations are discussed in detail in the following.
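The second consideration, thresholding a real-valued score, can be sketched as follows. The scores, labels, and function names are made up for illustration, and choosing the threshold so that a target fraction of the positive instances is detected is only one possible criterion; the text does not commit to a specific rule here.

```python
import math


def classify(scores, threshold):
    """Predict positive (1) when the score reaches the threshold, else 0."""
    return [1 if s >= threshold else 0 for s in scores]


def threshold_for_detection_rate(scores, labels, target):
    """Pick a threshold at which at least `target` of the positives are detected.

    The threshold is set to the score of the m-th highest-scoring positive,
    where m is the number of positives that must be detected.
    """
    pos_scores = sorted((s for s, y in zip(scores, labels) if y == 1), reverse=True)
    needed = max(1, math.ceil(target * len(pos_scores)))
    return pos_scores[needed - 1]


# Hypothetical classifier scores and true labels (1 = positive class).
scores = [0.9, 0.8, 0.35, 0.6, 0.2, 0.1, 0.4, 0.05]
labels = [1, 1, 1, 0, 0, 0, 1, 0]

default_predictions = classify(scores, 0.5)
tuned_threshold = threshold_for_detection_rate(scores, labels, target=0.75)
tuned_predictions = classify(scores, tuned_threshold)
```

Lowering the threshold from 0.5 to the tuned value lets the classifier detect a third positive instance without retraining the underlying model; in general, a lower threshold can also admit more negative instances as false positives.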

Oversampling and Undersampling

The first step in learning with imbalanced data is to transform the training set to a balanced training set, where both classes have nearly equal representation. The balanced training set can then be used with any of the existing classification techniques (without making any modifications in the learning algorithm) to learn a model that gives equal emphasis to both classes. In the following, we present some of the common techniques for transforming an imbalanced training set to a balanced one.

A basic approach for creating balanced training sets is to generate a sample of training instances where the rare class has adequate representation. There are two types of sampling methods that can be used to enhance the representation of the minority class: (a) undersampling, where the frequency of the majority class is reduced to match the frequency of the minority class, and (b) oversampling, where artificial examples of the minority class are created to make them equal in proportion to the number of negative instances.
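The two sampling strategies can be sketched in a few lines. The function names are hypothetical, and the oversampling variant shown simply replicates randomly chosen minority instances, which is an assumption of this sketch and only the simplest way of creating additional minority examples.

```python
import random


def undersample(majority, minority, rng):
    """Keep all minority instances plus an equally sized random subset of the majority."""
    return rng.sample(majority, len(minority)) + list(minority)


def oversample(majority, minority, rng):
    """Keep everything and add randomly replicated minority instances until balanced."""
    shortfall = len(majority) - len(minority)
    extra = [rng.choice(minority) for _ in range(shortfall)]
    return list(majority) + list(minority) + extra


# Toy training set: each instance is an (id, label) pair, "+" being the rare class.
rng = random.Random(0)
positives = [(i, "+") for i in range(100)]
negatives = [(i, "-") for i in range(1000)]

under = undersample(negatives, positives, rng)
over = oversample(negatives, positives, rng)
```

Undersampling yields a smaller balanced set and discards some majority instances, whereas oversampling by replication keeps every original instance at the cost of a larger training set that contains duplicates.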

To illustrate undersampling, consider a training set that contains 100 positive examples and 1000 negative examples. To overcome the skew among the classes, we can select a random sample of 100 examples from the negative class and use them with the 100 positive examples to create a balanced training set. A classifier built over the resultant balanced set will then be
