TRANSFORMER MODELS: AN INTRODUCTION AND CATALOG

Xavier Amatriain

Los Gatos, CA 95032

xavier@amatriain.net

February 17, 2023

ABSTRACT

In the past few years we have seen the meteoric appearance of dozens of models of the Transformer family, all of which have funny, but not self-explanatory, names. The goal of this paper is to offer a somewhat comprehensive but simple catalog and classification of the most popular Transformer models. The paper also includes an introduction to the most important aspects and innovations in Transformer models.

Contents

1 Introduction: What are Transformers

1.1 Encoder/Decoder architecture

1.2 Attention

1.3 What are Transformers used for and why are they so popular

1.4 RLHF

1.5 Diffusion

2 The Transformers catalog

2.1 Features of a Transformer

2.1.1 Pretraining Architecture

2.1.2 Pretraining Task

2.1.3 Application

2.2 Catalog table

2.3 Family Tree

2.4 Chronological timeline

2.5 Catalog List

2.5.1 ALBERT

2.5.2 AlphaFold

2.5.3 Anthropic Assistant

2.5.4 BART

2.5.5 BERT

2.5.6 Big Bird

arXiv:2302.07730v2 [cs.CL] 16 Feb 2023

A PREPRINT - FEBRUARY 17, 2023

2.5.7 BlenderBot3

2.5.8 BLOOM

2.5.9 ChatGPT

2.5.10 Chinchilla

2.5.11 CLIP

2.5.12 CM3

2.5.13 CTRL

2.5.14 DALL-E

2.5.15 DALL-E 2

2.5.16 Decision Transformers

2.5.17 DialoGPT

2.5.18 DistilBERT

2.5.19 DQ-BART

2.5.20 ELECTRA

2.5.21 ERNIE

2.5.22 Flamingo

2.5.23 Gato

2.5.24 GLaM

2.5.25 GLIDE

2.5.26 Global Context ViT

2.5.27 Gopher

2.5.28 GopherCite

2.5.29 GPT

2.5.30 GPT-2

2.5.31 GPT-3

2.5.32 GPT-3.5

2.5.33 InstructGPT

2.5.34 GPT-Neo

2.5.35 GPT-NeoX-20B

2.5.36 HTML

2.5.37 Imagen

2.5.38 Jurassic-1

2.5.39 LaMDA

2.5.40 mBART

2.5.41 Megatron

2.5.42 Minerva

2.5.43 MT-NLG (Megatron Turing NLG)

2.5.44 OPT

2.5.45 PaLM


2.5.46 Pegasus

2.5.47 RoBERTa

2.5.48 SeeKer

2.5.49 Sparrow

2.5.50 StableDiffusion

2.5.51 Swin Transformer

2.5.52 Switch

2.5.53 T5

2.5.54 Trajectory Transformers

2.5.55 Transformer XL

2.5.56 Turing-NLG

2.5.57 ViT

2.5.58 Wu Dao 2.0

2.5.59 XLM-RoBERTa

2.5.60 XLNet

3 Further reading

1 Introduction: What are Transformers

Transformers are a class of deep learning models that are defined by some architectural traits. They were first introduced in the now famous "Attention Is All You Need" paper by Google researchers in 2017 [1] (the paper has accumulated a whopping 38k citations in only 5 years) and the associated blog post¹.

The Transformer architecture is a specific instance of the encoder-decoder models [2]² that had become popular over the 2–3 years prior. Up until that point, however, attention was just one of the mechanisms used by these models, which were mostly based on LSTM (Long Short-Term Memory) [3] and other RNN (Recurrent Neural Network) [4] variations. The key insight of the Transformers paper was that, as the title implies, attention could be used as the only mechanism to derive dependencies between input and output.

It is beyond the scope of this paper to go into all the details of the Transformer architecture. For that, I will refer you to the original paper above or to the wonderful The Illustrated Transformer³ post. That being said, we will briefly describe the most important aspects, since we will be referring to them in the catalog below. Let’s start with the basic architectural diagram from the original paper and describe some of its components.

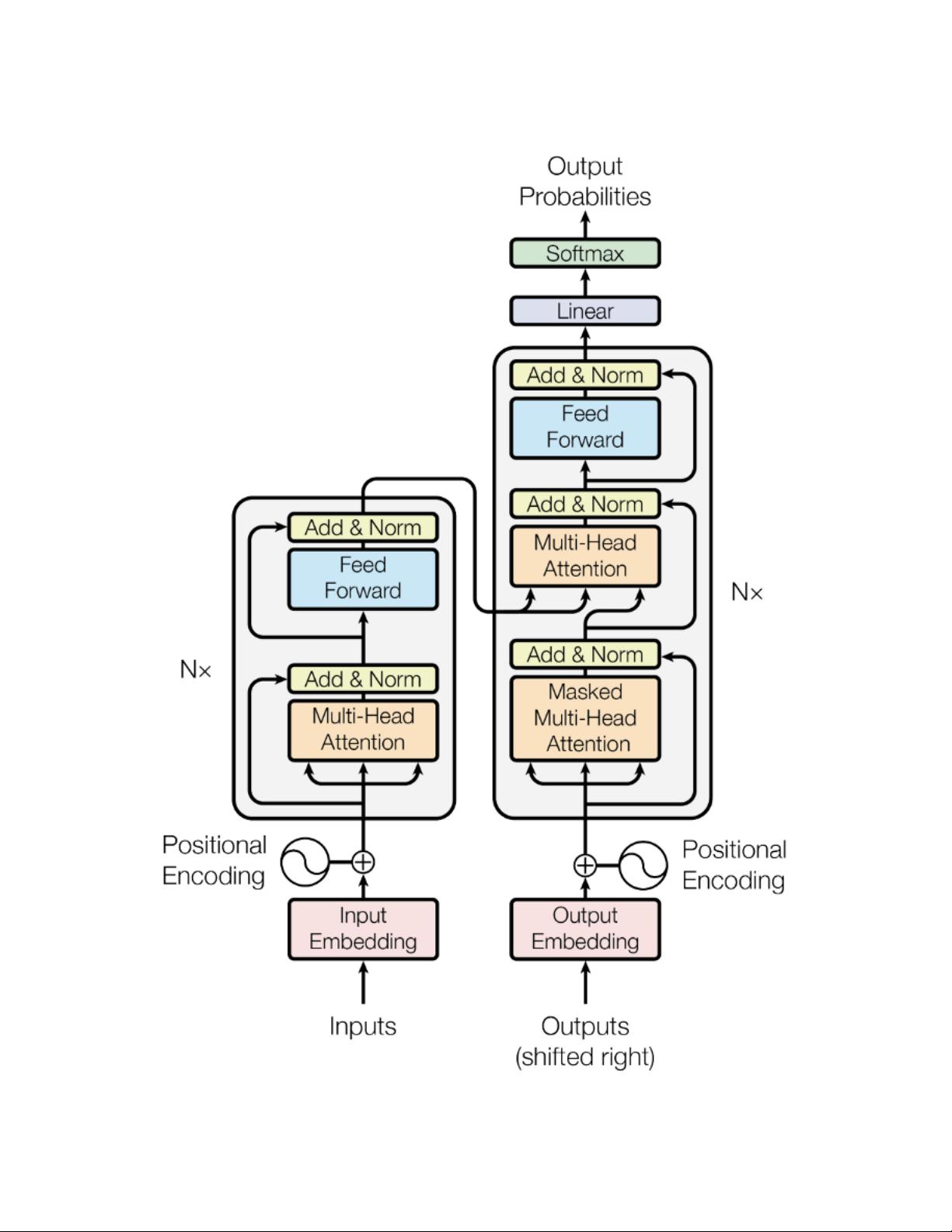

1.1 Encoder/Decoder architecture

A generic encoder/decoder architecture (see Figure 1) is made up of two models. The encoder takes the input and encodes it into a fixed-length vector. The decoder takes that vector and decodes it into the output sequence. The encoder and decoder are jointly trained to maximize the conditional log-likelihood of the output given the input. Once trained, the encoder/decoder can generate an output given an input sequence or can score a pair of input/output sequences.
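As a hedged aside, the training objective can be written out explicitly. The notation is an assumption made for illustration and does not come from the text: $\theta$ stands for the joint parameters of encoder and decoder, and $(x_n, y_n)$ for $n = 1, \dots, N$ are the input/output training pairs. Training maximizes the conditional log-likelihood (equivalently, it minimizes the negative of it):

```latex
\max_{\theta} \; \frac{1}{N} \sum_{n=1}^{N} \log p_{\theta}(y_n \mid x_n),
\qquad
\log p_{\theta}(y \mid x) = \sum_{t=1}^{T} \log p_{\theta}(y_t \mid y_{<t}, x)
```

The second identity is the usual autoregressive factorization over the $T$ output positions. It is also the quantity one would evaluate to score a given input/output pair.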

In the case of the original Transformer architecture, both the encoder and the decoder had 6 identical layers. In each of those 6 layers the encoder has two sublayers: a multi-head attention layer and a simple feed-forward network. Each sublayer has a residual connection and a layer normalization. The output size of the encoder is 512. The decoder adds a third sublayer, which is another multi-head attention layer over the output of the encoder. In addition, the other multi-head layer in the decoder is masked to prevent attention to subsequent positions.
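The layer structure described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the reference implementation: the helper names (`residual_sublayer`, `uniform_attention`, `make_feed_forward`) are hypothetical, layer normalization has no learned gain or bias, the weights are random, and `uniform_attention` is only a stand-in for the real multi-head attention sublayer. The inner feed-forward size of 2048 comes from the original Transformer paper rather than from the text above.

```python
import numpy as np

D_MODEL = 512   # sublayer output size stated in the text
N_LAYERS = 6    # number of identical layers stated in the text
D_FF = 2048     # inner feed-forward size (from the original Transformer paper)

def layer_norm(x, eps=1e-6):
    """Normalize each position to zero mean and unit variance (no learned gain/bias)."""
    mean = x.mean(axis=-1, keepdims=True)
    std = x.std(axis=-1, keepdims=True)
    return (x - mean) / (std + eps)

def residual_sublayer(x, sublayer):
    """Wrap a sublayer as LayerNorm(x + Sublayer(x))."""
    return layer_norm(x + sublayer(x))

def encoder_layer(x, attend, feed_forward):
    """One encoder layer: an attention sublayer, then a feed-forward sublayer."""
    x = residual_sublayer(x, attend)
    return residual_sublayer(x, feed_forward)

def causal_mask(n):
    """Boolean (n, n) mask: True where position i may attend to position j <= i."""
    return np.tril(np.ones((n, n), dtype=bool))

def uniform_attention(x):
    """Stand-in for multi-head attention: every position averages all positions."""
    return np.broadcast_to(x.mean(axis=0, keepdims=True), x.shape)

def make_feed_forward(rng):
    """Build a position-wise feed-forward network with random weights and a ReLU."""
    w1 = rng.normal(scale=0.02, size=(D_MODEL, D_FF))
    w2 = rng.normal(scale=0.02, size=(D_FF, D_MODEL))
    return lambda x: np.maximum(0.0, x @ w1) @ w2

rng = np.random.default_rng(0)
hidden = rng.normal(size=(10, D_MODEL))   # 10 positions, 512 features each
for _ in range(N_LAYERS):
    hidden = encoder_layer(hidden, uniform_attention, make_feed_forward(rng))

print(hidden.shape)                 # (10, 512)
print(causal_mask(3).astype(int))   # lower-triangular matrix of ones
```

A decoder layer would follow the same pattern with three calls to `residual_sublayer`: masked self-attention (using `causal_mask`), attention over the encoder output, and the feed-forward network.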

¹ https://ai.googleblog.com/2017/08/transformer-novel-neural-network.html

² https://machinelearningmastery.com/encoder-decoder-long-short-term-memory-networks/

³ https://jalammar.github.io/illustrated-transformer/


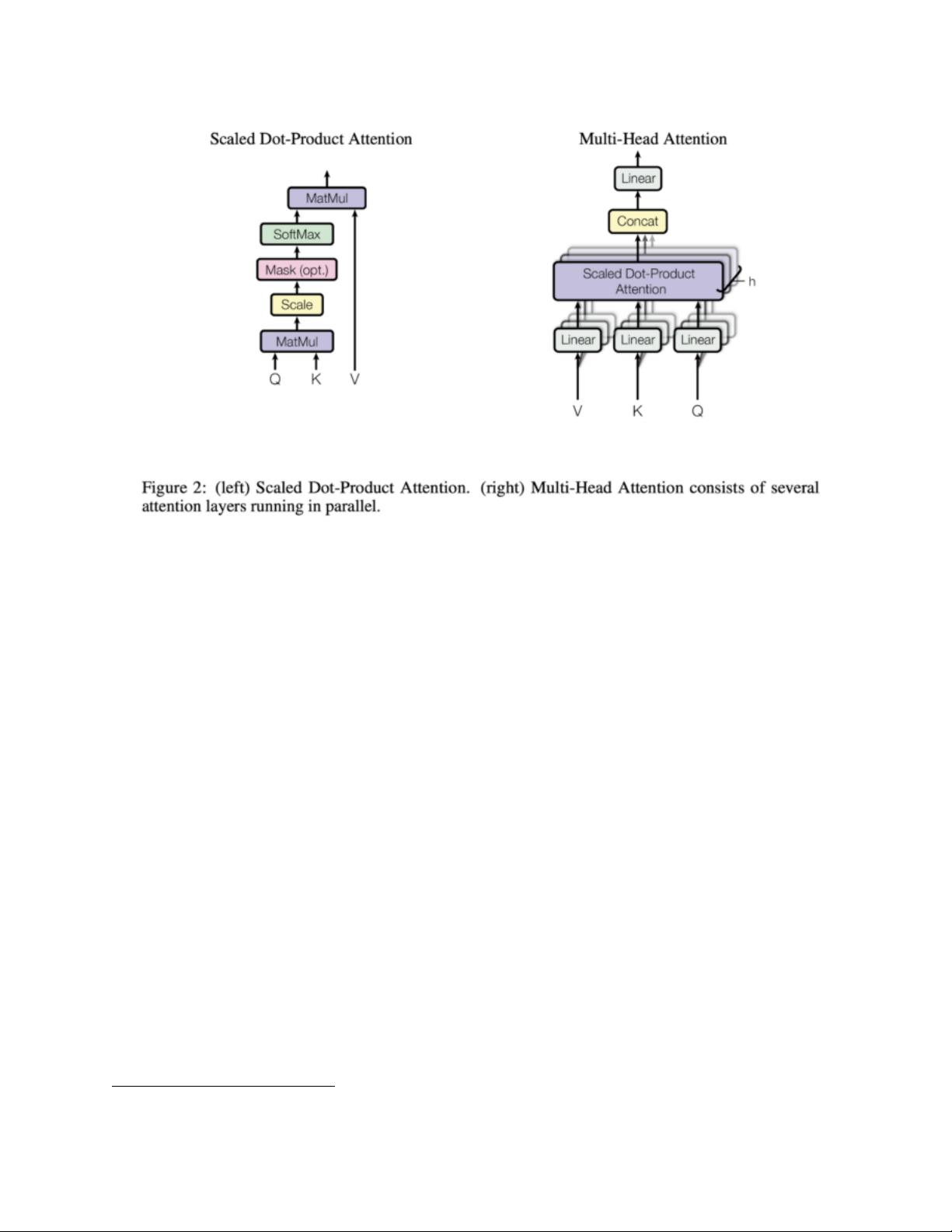

Figure 2: The Attention Mechanism

1.2 Attention

It is clear from the description above that the only “exotic” element of the model architecture is the multi-headed attention, but, as described above, that is where the whole power of the model lies! So, what is attention anyway? An attention function is a mapping from a query and a set of key-value pairs to an output. The output is computed as a weighted sum of the values, where the weight assigned to each value is computed by a compatibility function of the query with the corresponding key. Transformers use multi-headed attention, which is a parallel computation of a specific attention function called scaled dot-product attention. I will refer you again to The Illustrated Transformer⁴ post for many more details on how the attention mechanism works, but will reproduce the diagram from the original paper in Figure 2 so you get the main idea.
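To make the definition concrete, here is a minimal NumPy sketch of scaled dot-product attention, softmax(QKᵀ/√d_k)V, and of running several heads in parallel. It is an illustration under assumptions rather than the original implementation: the function names and weight layout are hypothetical, biases and dropout are omitted, the weights are random, and the choice of 8 heads follows the original Transformer paper rather than the text above.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(q, k, v, mask=None):
    """q: (n_q, d_k), k: (n_k, d_k), v: (n_k, d_v).

    Returns the outputs (n_q, d_v) and the attention weights (n_q, n_k).
    """
    scores = q @ k.T / np.sqrt(k.shape[-1])      # compatibility of each query with each key
    if mask is not None:
        scores = np.where(mask, scores, -1e9)    # masked-out pairs get a weight of ~0
    weights = softmax(scores)
    return weights @ v, weights                  # weighted sum of the values

def multi_head_attention(x_q, x_kv, w_q, w_k, w_v, w_o, mask=None):
    """w_q, w_k, w_v: (heads, d_model, d_head); w_o: (heads * d_head, d_model)."""
    heads = []
    for wq, wk, wv in zip(w_q, w_k, w_v):
        out, _ = scaled_dot_product_attention(x_q @ wq, x_kv @ wk, x_kv @ wv, mask)
        heads.append(out)
    return np.concatenate(heads, axis=-1) @ w_o  # concatenate heads, then project

rng = np.random.default_rng(0)
n, d_model, n_heads = 5, 512, 8
d_head = d_model // n_heads
x = rng.normal(size=(n, d_model))
w_q, w_k, w_v = (rng.normal(scale=0.02, size=(n_heads, d_model, d_head)) for _ in range(3))
w_o = rng.normal(scale=0.02, size=(n_heads * d_head, d_model))

out = multi_head_attention(x, x, w_q, w_k, w_v, w_o)   # self-attention: queries = keys = values
causal = np.tril(np.ones((n, n), dtype=bool))
_, weights = scaled_dot_product_attention(x, x, x, mask=causal)
print(out.shape)   # (5, 512)
```

Each row of `weights` sums to 1, and with the causal mask every entry above the diagonal is zero, which is how a masked layer is prevented from attending to subsequent positions.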

There are several advantages of attention layers over recurrent and convolutional networks, the two most important being their lower computational complexity per layer (when the sequence is shorter than the representation dimension, which is the common case) and their higher connectivity, which is especially useful for learning long-term dependencies in sequences.
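A rough way to see both advantages is to compare leading-order costs per layer. The sketch below uses the figures from the complexity comparison in the original Transformer paper: O(n²·d) operations for self-attention, O(n·d²) for a recurrent layer, and O(k·n·d²) for a convolutional layer, with n the sequence length, d the representation dimension, and k the kernel width. The function name and the example sizes are hypothetical, and constants are dropped, so only the relative magnitudes are meaningful.

```python
import math

def per_layer_costs(n, d, k=3):
    """Leading-order cost terms for one layer over n positions of dimension d.

    k is the convolution kernel width. Constants are dropped, so the numbers
    are only meaningful relative to each other.
    """
    return {
        "self-attention": {"ops": n * n * d, "sequential_steps": 1, "max_path": 1},
        "recurrent": {"ops": n * d * d, "sequential_steps": n, "max_path": n},
        # Path length assumes dilated convolutions, hence the log base k.
        "convolutional": {"ops": k * n * d * d, "sequential_steps": 1,
                          "max_path": math.ceil(math.log(n, k))},
    }

short_seq = per_layer_costs(n=50, d=512)     # sequence shorter than the dimension
long_seq = per_layer_costs(n=2000, d=512)    # sequence longer than the dimension
for name in short_seq:
    print(name, short_seq[name], long_seq[name])
```

With n = 50 and d = 512 self-attention needs fewer operations than a recurrent layer, while with n = 2000 the quadratic term dominates and the ordering flips. The maximum path length between any two positions stays at 1 for self-attention in both cases, which is the "higher connectivity" referred to above.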

1.3 What are Transformers used for and why are they so popular

The original Transformer was designed for language translation, particularly from English to German. But the original paper already showed that the architecture generalized well to other language tasks. This trend was quickly noticed by the research community. Over the next few months most of the leaderboards for any language-related ML task became completely dominated by some version of the Transformer architecture (see for example the well-known SQuAD leaderboard⁵ for question answering, where all models at the top are ensembles of Transformers).

One of the key reasons Transformers were able to take over most NLP leaderboards so quickly is their ability to adapt rapidly to other tasks, a.k.a. transfer learning. Pretrained Transformer models can adapt extremely easily and quickly to tasks they have not been trained on, and that has huge advantages. As an ML practitioner, you no longer need to train a large model on a huge dataset. All you need to do is re-use the pretrained model on your task, maybe just slightly

⁴ https://jalammar.github.io/illustrated-transformer/

⁵ https://rajpurkar.github.io/SQuAD-explorer/
