Least Squares Support Vector Machine Classifiers

J.A.K. Suykens and J. Vandewalle

Katholieke Universiteit Leuven

Department of Electrical Engineering, ESAT-SISTA

Kardinaal Mercierlaan 94, B-3001 Leuven (Heverlee), Belgium

Email: johan.suykens@esat.kuleuven.ac.be

August 1998

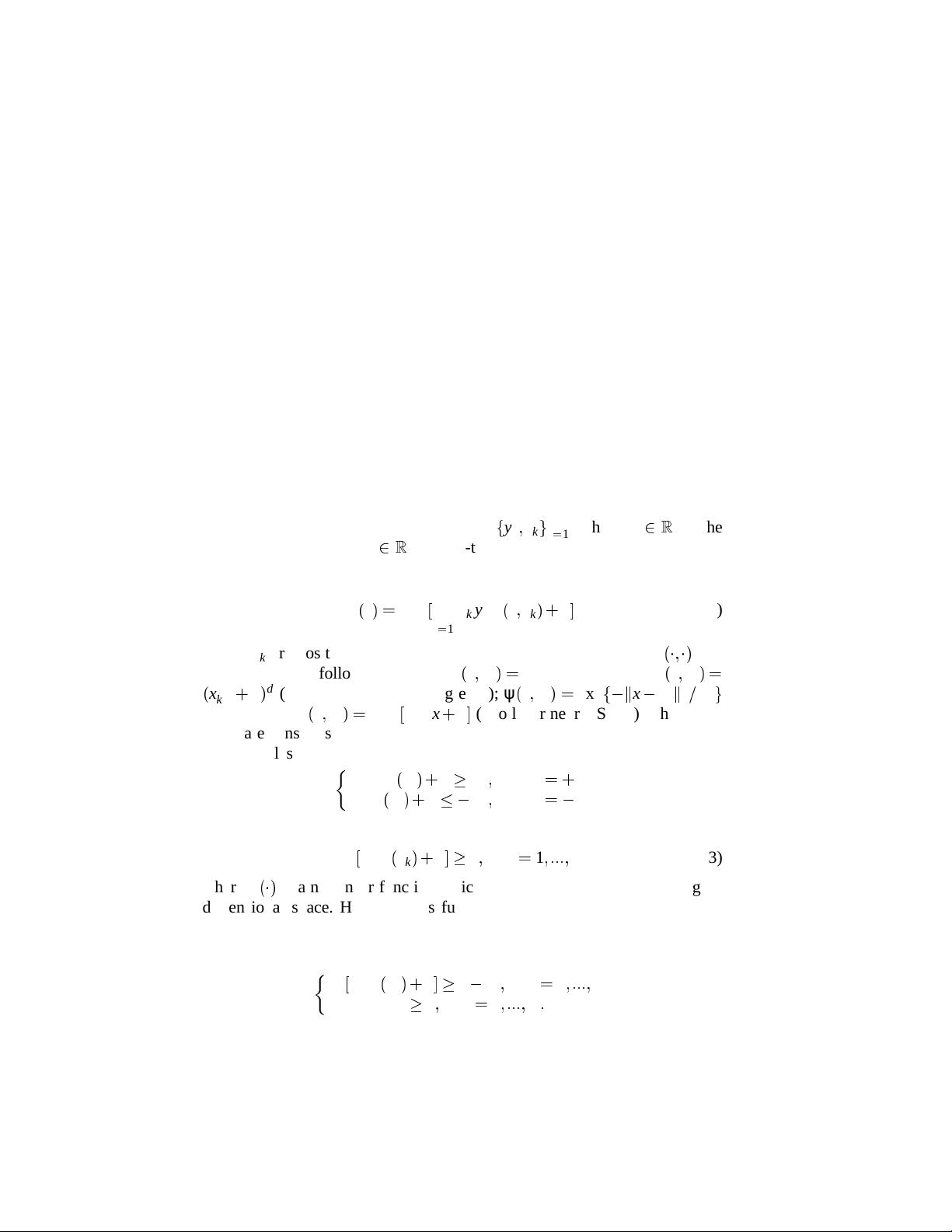

Abstract. In this letter we discuss a least squares version of support vector machine (SVM) classifiers. Due to equality type constraints in the formulation, the solution follows from solving a set of linear equations, instead of the quadratic programming required for classical SVMs. The approach is illustrated on a two-spiral benchmark classification problem.

Keywords: Classification, support vector machines, linear least squares, radial basis function kernel

Abbreviations: SVM – Support Vector Machines; VC – Vapnik-Chervonenkis; RBF – Radial Basis Function

1. Introduction

Recently, support vector machines (Vapnik, 1995; Vapnik, 1998a; Vapnik, 1998b) have been introduced for solving pattern recognition problems. In this method one maps the data into a higher dimensional feature space and constructs an optimal separating hyperplane in this space. This basically involves solving a quadratic programming problem, whereas gradient based training methods for neural network architectures suffer from the existence of many local minima (Bishop, 1995; Cherkassky & Mulier, 1998; Haykin, 1994; Zurada, 1992). Kernel functions and parameters are chosen such that a bound on the VC dimension is minimized. Later, the support vector method was extended to function estimation problems. For this purpose Vapnik’s epsilon insensitive loss function and Huber’s loss function have been employed. Besides the linear case, SVMs based on polynomials, splines, radial basis function networks and multilayer perceptrons have been successfully applied. Being based on the structural risk minimization principle and a capacity concept with purely combinatorial definitions, the quality and complexity of the SVM solution do not depend directly on the dimensionality of the input space (Vapnik, 1995; Vapnik, 1998a; Vapnik, 1998b).
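For readers unfamiliar with the two loss functions named above, the following is a minimal sketch of their standard textbook definitions, not code from this paper. The function names and the default values of `epsilon` and `delta` are illustrative assumptions.

```python
def epsilon_insensitive_loss(residual, epsilon=0.1):
    """Epsilon insensitive loss: zero inside the tube |r| <= epsilon, linear outside.

    The default epsilon is an arbitrary illustrative value.
    """
    return max(0.0, abs(residual) - epsilon)


def huber_loss(residual, delta=1.0):
    """Huber loss: quadratic for |r| <= delta, linear beyond it.

    The default delta is an arbitrary illustrative value.
    """
    r = abs(residual)
    if r <= delta:
        return 0.5 * r * r
    return delta * (r - 0.5 * delta)


if __name__ == "__main__":
    # Compare both losses on a small, a moderate and a large residual.
    for r in (0.05, 0.5, 2.0):
        print(r, epsilon_insensitive_loss(r), huber_loss(r))
```

Both losses grow only linearly for large residuals, which makes them less sensitive to outliers than a plain squared error.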

In this paper we formulate a least squares version of SVMs for classification problems with two classes. For the function estimation problem a support vector interpretation of ridge regression (Golub & Van Loan, 1989)

© 1999 Kluwer Academic Publishers. Printed in the Netherlands.
