19 October, 2010 Made By Utpal Ray 1

Parallel Programming Using MPI

An Overview of the Message Passing Interface


There are several approaches to parallel programming, including:

1. C* (for SIMD architectures)
2. Threads (for multiprocessor architectures)
3. MPI (for multicomputer architectures)
4. OpenMP (for multiprocessor architectures)

As the list shows, each style suits a particular class of parallel computer architecture: threads and OpenMP target shared-memory multiprocessors, while MPI targets distributed-memory multicomputers.


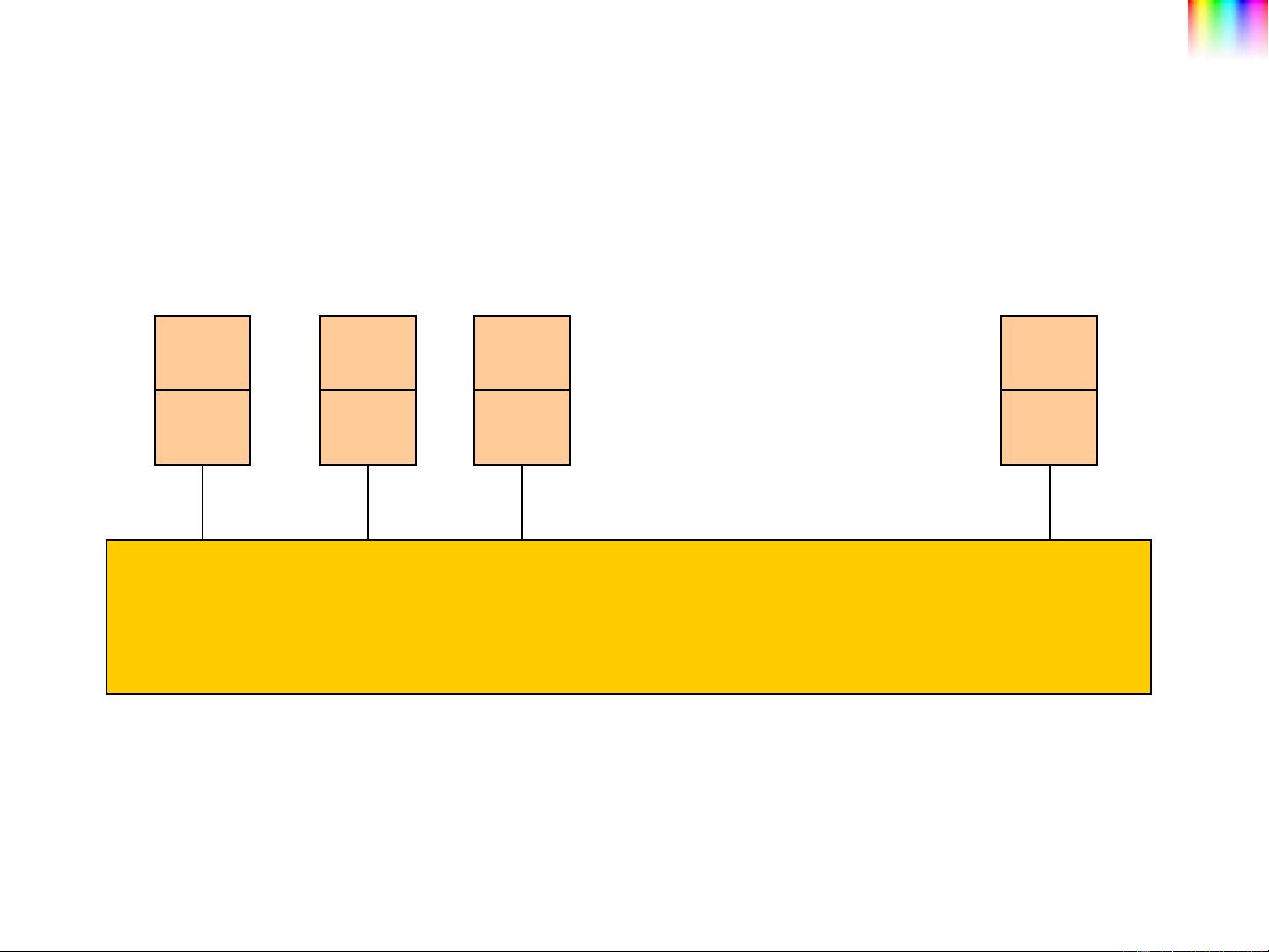

MPI (Message Passing Interface) Programming Model

[Figure: four processor (P) and memory (M) pairs, connected through an INTERCONNECTION MATRIX.]


Basic Features of Message Passing Programs

A message passing program consists of multiple instances of a serial program that communicate with one another through library calls. These calls fall roughly into four classes:

1. Calls that initialize, manage, and finally terminate communication.
2. Calls that communicate between pairs of processes (point-to-point communication).
3. Calls that perform communication operations among groups of processes (collective communication).
4. Calls that create arbitrary (derived) data types.


Concept of Communicators

A communicator is a handle representing a group of processes that can communicate with one another.

A communicator must be passed as an argument to every point-to-point and collective operation.

The communicators specified in matching send and receive calls must agree for communication to take place.

Processes can communicate only if they share a communicator.

There can be many communicators, and a given process can be a member of several different communicators.