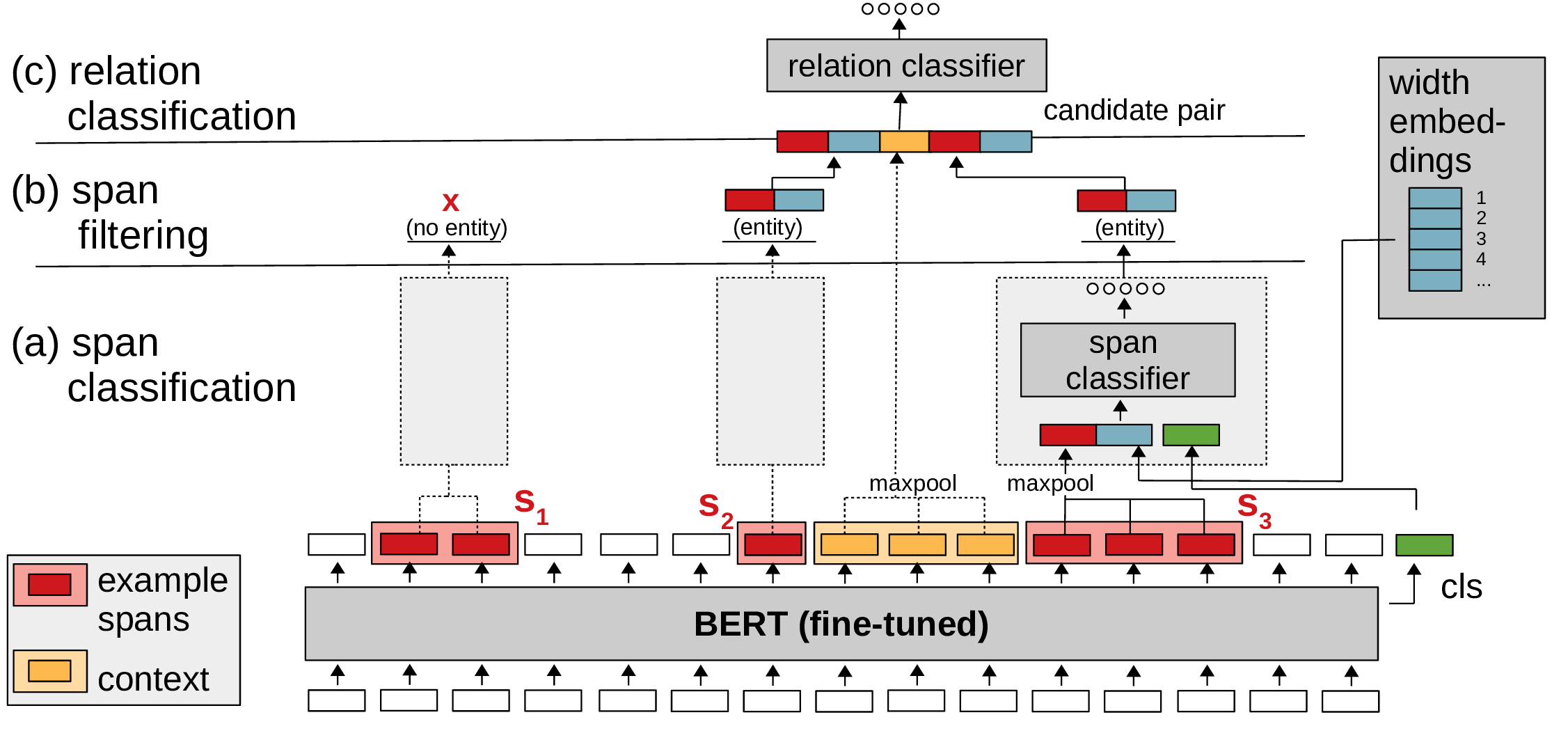

# SpERT: Span-based Entity and Relation Transformer

PyTorch code for SpERT: "Span-based Entity and Relation Transformer". For a description of the model and experiments, see our paper: https://arxiv.org/abs/1909.07755 (accepted at ECAI 2020).

## Setup

### Requirements

- Required

- Python 3.5+

- PyTorch (tested with version 1.4.0)

- transformers (+sentencepiece, e.g. with 'pip install transformers[sentencepiece]', tested with version 4.1.1)

- scikit-learn (tested with version 0.24.0)

- tqdm (tested with version 4.55.1)

- numpy (tested with version 1.17.4)

- Optional

- jinja2 (tested with version 2.10.3) - if installed, used to export relation extraction examples

- tensorboardX (tested with version 1.6) - if installed, used to save training process to tensorboard

- spacy (tested with version 3.0.1) - if installed, used to tokenize sentences for prediction

### Fetch data

Fetch converted (to specific JSON format) CoNLL04 \[1\] (we use the same split as \[4\]), SciERC \[2\] and ADE \[3\] datasets (see referenced papers for the original datasets):

```

bash ./scripts/fetch_datasets.sh

```

Fetch model checkpoints (best out of 5 runs for each dataset):

```

bash ./scripts/fetch_models.sh

```

The attached ADE model was trained on split "1" ("ade_split_1_train.json" / "ade_split_1_test.json") under "data/datasets/ade".

## Examples

(1) Train CoNLL04 on train dataset, evaluate on dev dataset:

```

python ./spert.py train --config configs/example_train.conf

```

(2) Evaluate the CoNLL04 model on test dataset:

```

python ./spert.py eval --config configs/example_eval.conf

```

(3) Use the CoNLL04 model for prediction. See the file 'data/datasets/conll04/conll04_prediction_example.json' for supported data formats. You have three options to specify the input sentences, choose the one that suits your needs. If the dataset contains raw sentences, 'spacy' must be installed for tokenization. Download a spacy model via 'python -m spacy download model_label' and set it as spacy_model in the configuration file (see 'configs/example_predict.conf').

```

python ./spert.py predict --config configs/example_predict.conf

```

## Notes

- To train SpERT with SciBERT \[5\] download SciBERT from https://github.com/allenai/scibert (under "PyTorch HuggingFace Models") and set "model_path" and "tokenizer_path" in the config file to point to the SciBERT directory.

- You can call "python ./spert.py train --help" / "python ./spert.py eval --help" "python ./spert.py predict --help" for a description of training/evaluation/prediction arguments.

- Please cite our paper when you use SpERT: <br/>

Markus Eberts, Adrian Ulges. Span-based Joint Entity and Relation Extraction with Transformer Pre-training. 24th European Conference on Artificial Intelligence, 2020.

## References

```

[1] Dan Roth and Wen-tau Yih, ‘A Linear Programming Formulation forGlobal Inference in Natural Language Tasks’, in Proc. of CoNLL 2004 at HLT-NAACL 2004, pp. 1–8, Boston, Massachusetts, USA, (May 6 -May 7 2004). ACL.

[2] Yi Luan, Luheng He, Mari Ostendorf, and Hannaneh Hajishirzi, ‘Multi-Task Identification of Entities, Relations, and Coreference for Scientific Knowledge Graph Construction’, in Proc. of EMNLP 2018, pp. 3219–3232, Brussels, Belgium, (October-November 2018). ACL.

[3] Harsha Gurulingappa, Abdul Mateen Rajput, Angus Roberts, JulianeFluck, Martin Hofmann-Apitius, and Luca Toldo, ‘Development of a Benchmark Corpus to Support the Automatic Extraction of Drug-related Adverse Effects from Medical Case Reports’, J. of BiomedicalInformatics,45(5), 885–892, (October 2012).

[4] Pankaj Gupta, Hinrich Schütze, and Bernt Andrassy, ‘Table Filling Multi-Task Recurrent Neural Network for Joint Entity and Relation Extraction’, in Proc. of COLING 2016, pp. 2537–2547, Osaka, Japan, (December 2016). The COLING 2016 Organizing Committee.

[5] Iz Beltagy, Kyle Lo, and Arman Cohan, ‘SciBERT: A Pretrained Language Model for Scientific Text’, in EMNLP, (2019).

```

没有合适的资源?快使用搜索试试~ 我知道了~

spert:SpERT的PyTorch代码

共30个文件

py:19个

conf:3个

html:2个

需积分: 49 9 下载量 120 浏览量

2021-05-13

06:58:37

上传

评论

收藏 41KB ZIP 举报

温馨提示

SpERT:基于跨度的实体和关系转换器 SpERT的PyTorch代码:“基于跨度的实体和关系转换器”。 有关模型和实验的说明,请参见我们的论文: : (ECAI 2020年接受)。 设置 要求 必需的 Python 3.5+ PyTorch(经过1.4.0版测试) 变压器(+哨片,例如,带有“ pip install变压器[哨片]”,已通过4.1.1版进行了测试) scikit-learn(经过0.24.0版测试) tqdm(经过4.55.1版测试) numpy(经过1.17.4版测试) 可选的 jinja2(经过2.10.3版测试)-如果已安装,则用于导出关系提取示例 tensorboardX(经过1.6版测试)-如果已安装,用于将训练过程保存到tensorboard spacy(经过3.0.1版测试)-如果已安装,则用于标记句子以进行预测 撷取资料 获取转换后的(转

资源详情

资源评论

资源推荐

收起资源包目录

spert-master.zip (30个子文件)

spert-master.zip (30个子文件)  spert-master

spert-master  .gitignore 1KB

.gitignore 1KB README.md 4KB

README.md 4KB spert.py 2KB

spert.py 2KB config_reader.py 2KB

config_reader.py 2KB configs

configs  example_predict.conf 427B

example_predict.conf 427B example_eval.conf 432B

example_eval.conf 432B example_train.conf 664B

example_train.conf 664B LICENSE 1KB

LICENSE 1KB spert

spert  spert_trainer.py 19KB

spert_trainer.py 19KB models.py 10KB

models.py 10KB input_reader.py 8KB

input_reader.py 8KB opt.py 218B

opt.py 218B util.py 6KB

util.py 6KB trainer.py 5KB

trainer.py 5KB prediction.py 8KB

prediction.py 8KB evaluator.py 15KB

evaluator.py 15KB __init__.py 0B

__init__.py 0B entities.py 11KB

entities.py 11KB loss.py 2KB

loss.py 2KB sampling.py 8KB

sampling.py 8KB templates

templates  relation_examples.html 8KB

relation_examples.html 8KB entity_examples.html 8KB

entity_examples.html 8KB __init__.py 0B

__init__.py 0B scripts

scripts  fetch_models.sh 539B

fetch_models.sh 539B conversion

conversion  convert_ade.py 6KB

convert_ade.py 6KB convert_conll04.py 3KB

convert_conll04.py 3KB convert_scierc.py 2KB

convert_scierc.py 2KB fetch_datasets.sh 553B

fetch_datasets.sh 553B requirements.txt 141B

requirements.txt 141B args.py 6KB

args.py 6KB共 30 条

- 1

步衫

- 粉丝: 29

- 资源: 4641

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功

评论0