
Swift/RAID: A Distributed RAID System

Darrell D. E. Long and Bruce R. Montague
University of California, Santa Cruz

Luis-Felipe Cabrera
IBM Almaden Research Center

ABSTRACT: The Swift I/O architecture is designed to provide high data rates in support of multimedia-type applications in general-purpose distributed environments through the use of distributed striping. Striping techniques place sections of a single logical data space onto multiple physical devices. The original Swift prototype was designed to validate the architecture, but did not provide fault tolerance. We have implemented a new prototype of the Swift architecture that provides fault tolerance in the distributed environment in the same manner as RAID levels 4 and 5. RAID (Redundant Arrays of Inexpensive Disks) techniques have recently been widely used to increase both performance and fault tolerance of disk storage systems.

The new Swift/RAID implementation manages all communication using a distributed transfer plan executor which isolates all communication code from the rest of Swift. The transfer plan executor is implemented as a distributed finite state machine that decodes and executes a set of reliable data-transfer operations. This approach enables us to easily investigate alternative architectures and communications protocols.

Supported in part by the National Science Foundation under Grant NSF CCR-9111220 and by the Office of Naval Research under Grant N00014-92-J-1807.

© 1994 The USENIX Association, Computing Systems, Vol. 7, No. 3, Summer 1994

Providing fault tolerance comes at a cost, since computing and administering parity data impacts Swift/RAID data rates. For a five-node system, in one typical performance benchmark, Swift/RAID level 5 obtained 87 percent of the original Swift read throughput and 53 percent of the write throughput. Swift/RAID level 4 obtained 92 percent of the original Swift read throughput and 34 percent of the write throughput.


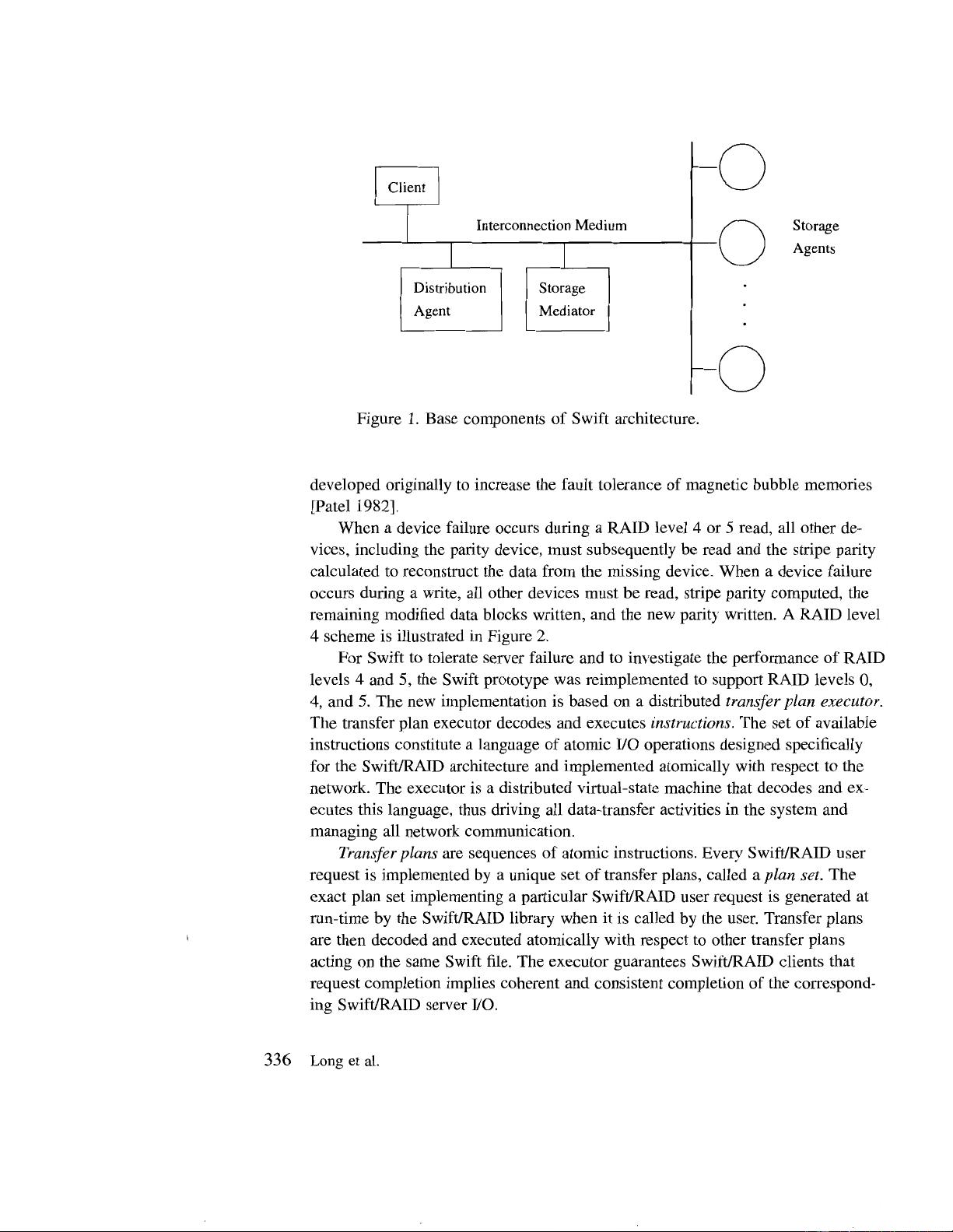

1. Introduction

The Swift system was designed to investigate the use of network disk striping to achieve the data rates required by multimedia in a general-purpose distributed system. The original Swift prototype was implemented during 1991, and its design and performance were described, investigated, and reported in Cabrera and Long [1991].

A high-level view of the Swift architecture is shown in Figure 1. Swift uses a high-speed interconnection medium to aggregate arbitrarily many (slow) storage devices into a faster logical storage service, making all applications unaware of this aggregation. Swift uses a modular client-server architecture made up of independently replaceable components.

Disk striping is a technique analogous to main memory interleaving that has been used for some time to enhance throughput and balance disk load in disk arrays [Kim 1986; Salem and Garcia-Molina 1986]. In such systems writes scatter data across devices (the members of the stripe), and reads "gather" data from the devices. The number of devices that participate in such an operation defines the degree of striping. Data within each data stripe is logically contiguous. Swift uses a network of workstations in a manner similar to a disk array. A Swift application on a client node issues I/O requests via library routines that evenly distribute the I/O across multiple server nodes. The original Swift prototype used striping solely to enhance performance.
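To make the scatter/gather idea concrete, the following minimal Python sketch maps a logical byte offset to a stripe member and splits a request into per-device pieces. It assumes fixed-size blocks placed round-robin across the devices; the function names `locate` and `split_request`, the block size, and the layout itself are assumptions made for illustration and are not taken from the Swift implementation.

```python
def locate(offset, block_size, degree):
    """Map a logical byte offset to (device index, byte offset on that device).

    Assumes fixed-size blocks placed round-robin across `degree` devices;
    this layout is illustrative, not taken from the Swift implementation.
    """
    if offset < 0 or block_size <= 0 or degree <= 0:
        raise ValueError("offset must be >= 0; block_size and degree must be > 0")
    block = offset // block_size          # logical block number
    stripe = block // degree              # stripe that contains the block
    device = block % degree               # stripe member holding the block
    return device, stripe * block_size + offset % block_size


def split_request(offset, length, block_size, degree):
    """Split a logical request into (device, device_offset, length) pieces."""
    pieces = []
    end = offset + length
    while offset < end:
        device, dev_off = locate(offset, block_size, degree)
        # A piece never crosses a block boundary.
        n = min(block_size - offset % block_size, end - offset)
        pieces.append((device, dev_off, n))
        offset += n
    return pieces
```

With a degree of striping of 3 and 4-byte blocks, an 8-byte request starting at logical offset 2 touches all three devices, which is the load-spreading effect the paragraph describes.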

Currently, RAID (Redundant Arrays of Inexpensive Disks) systems are being used to provide reliable high-performance disk arrays [Katz et al. 1989]. RAID systems achieve high performance by disk striping while providing high availability by techniques such as RAID levels 1, 4, and 5. RAID level 0 refers to simple disk striping as in the original Swift prototype. RAID level 1 implies traditional disk mirroring. RAID levels 4 and 5 keep one parity block for every data stripe. This parity block can then be used to reconstruct any unavailable data block in the stripe. RAID levels 4 and 5 differ in that level 4 uses a dedicated parity device whereas level 5 scatters parity data across all devices, thus achieving a more uniform load. The fault tolerance of levels 4 and 5 can be applied to any block-structured storage device; indeed, the level 4 technique appears to have been developed originally to increase the fault tolerance of magnetic bubble memories [Patel 1982].

Figure 1. Base components of the Swift architecture.

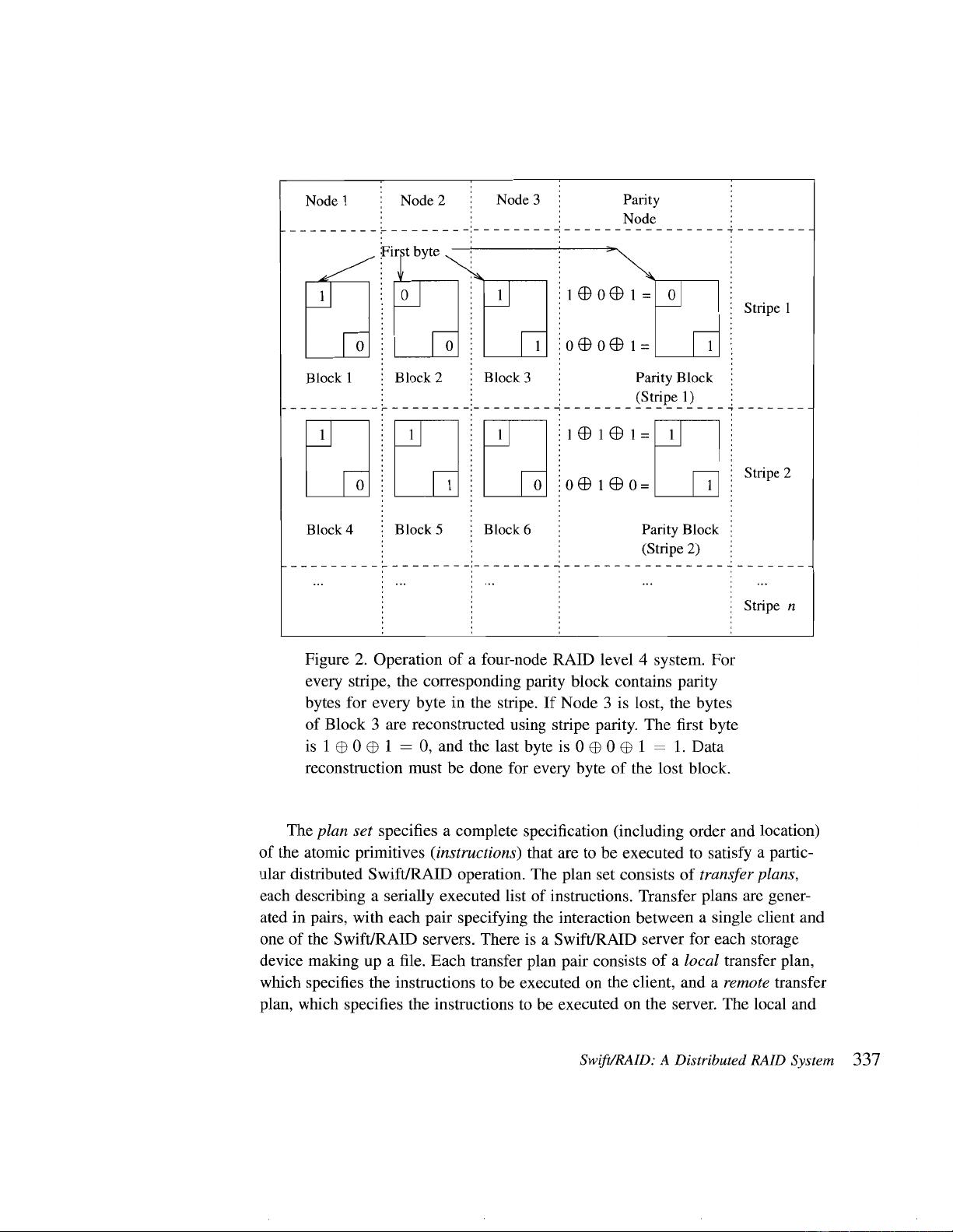
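The difference between RAID levels 4 and 5 comes down to which device holds the parity block of each stripe. The sketch below is a hedged illustration: placing the level 4 parity on the last device and rotating the level 5 parity by one device per stripe are assumptions of this example (several rotation schemes exist), and the names `parity_device` and `parity_load` are hypothetical.

```python
def parity_device(stripe, n_devices, level):
    """Return the index of the device holding the parity block for `stripe`.

    Level 4 uses one dedicated parity device (assumed here to be the last one).
    Level 5 rotates parity across all devices; the simple rotation below is
    one common choice and an assumption of this sketch.
    """
    if n_devices < 2:
        raise ValueError("need at least two devices")
    if level == 4:
        return n_devices - 1
    if level == 5:
        return (n_devices - 1 - stripe) % n_devices
    raise ValueError("only RAID levels 4 and 5 keep a parity block per stripe")


def parity_load(n_stripes, n_devices, level):
    """Count how many parity blocks each device holds over `n_stripes` stripes."""
    load = [0] * n_devices
    for s in range(n_stripes):
        load[parity_device(s, n_devices, level)] += 1
    return load
```

Over eight stripes on four devices, level 4 places all eight parity blocks on one device, while the rotated level 5 layout places two on each, which is the more uniform load mentioned above.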

When a device failure occurs during a RAID level 4 or 5 read, all other devices, including the parity device, must subsequently be read and the stripe parity calculated to reconstruct the data from the missing device. When a device failure occurs during a write, all other devices must be read, stripe parity computed, the remaining modified data blocks written, and the new parity written. A RAID level 4 scheme is illustrated in Figure 2.
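The read path under failure can be sketched in a few lines of Python. The example assumes the usual byte-wise XOR parity used by RAID levels 4 and 5; the helper names `xor_blocks` and `degraded_read` are hypothetical, and the sketch ignores networking and disk I/O entirely.

```python
from functools import reduce


def xor_blocks(blocks):
    """Byte-wise XOR of equal-length blocks."""
    if not blocks:
        raise ValueError("need at least one block")
    if len({len(b) for b in blocks}) != 1:
        raise ValueError("all blocks must have the same length")
    return bytes(reduce(lambda x, y: x ^ y, column) for column in zip(*blocks))


def degraded_read(stripe, parity, lost):
    """Rebuild the data block at index `lost` from the survivors and parity.

    `stripe` is the list of data blocks; the entry at `lost` is ignored,
    so it may be None to model the failed device.
    """
    survivors = [b for i, b in enumerate(stripe) if i != lost]
    return xor_blocks(survivors + [parity])
```

Because XOR is its own inverse, the parity of a stripe is `xor_blocks` over its data blocks, and XOR-ing the parity with every surviving block yields the missing one. This is why every other device, including the parity device, has to be read.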

For Swift to tolerate server failure and to investigate the performance of RAID levels 4 and 5, the Swift prototype was reimplemented to support RAID levels 0, 4, and 5.

The new implementation is based on a distributed transfer plan executor. The transfer plan executor decodes and executes instructions. The set of available instructions constitutes a language of atomic I/O operations designed specifically for the Swift/RAID architecture and implemented atomically with respect to the network. The executor is a distributed virtual-state machine that decodes and executes this language, thus driving all data-transfer activities in the system and managing all network communication.

Transfer plans are sequences of atomic instructions. Every Swift/RAID user request is implemented by a unique set of transfer plans, called a plan set. The exact plan set implementing a particular Swift/RAID user request is generated at run-time by the Swift/RAID library when it is called by the user. Transfer plans are then decoded and executed atomically with respect to other transfer plans acting on the same Swift file. The executor guarantees Swift/RAID clients that request completion implies coherent and consistent completion of the corresponding Swift/RAID server I/O.
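The relationship between instructions, transfer plans, and a plan set can be pictured with a toy, single-process interpreter. Everything in this sketch is an assumption made for illustration: the opcode names, the `Instruction` and `TransferPlan` classes, and the round-robin scheduling are hypothetical, and the real executor is distributed and manages network communication, which this example does not attempt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instruction:
    opcode: str          # hypothetical opcode name, e.g. "SEND"
    operand: int = 0     # hypothetical operand, e.g. a block number


@dataclass
class TransferPlan:
    instructions: list
    pc: int = 0          # index of the next instruction to execute

    def done(self):
        return self.pc >= len(self.instructions)


def run_plan_set(plan_set, handlers):
    """Execute every plan in `plan_set`, one instruction at a time, round-robin.

    `handlers` maps an opcode to a function taking the operand. Each
    instruction runs to completion before the next one is decoded, which is
    how this sketch models atomic instructions.
    """
    trace = []
    while any(not plan.done() for plan in plan_set):
        for index, plan in enumerate(plan_set):
            if plan.done():
                continue
            instr = plan.instructions[plan.pc]
            handlers[instr.opcode](instr.operand)   # decode and execute
            plan.pc += 1
            trace.append((index, instr.opcode))
    return trace
```

Keeping all instruction handling behind one dispatch table mirrors the stated design goal of isolating communication code from the rest of the system: swapping the handlers changes the protocol without touching the plans.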


Figure 2. Operation of a four-node RAID level 4 system. For every stripe, the corresponding parity block contains parity bytes for every byte in the stripe. If Node 3 is lost, the bytes of Block 3 are reconstructed using stripe parity. The first byte is 1 ⊕ 0 ⊕ 1 = 0, and the last byte is 0 ⊕ 0 ⊕ 1 = 1. Data reconstruction must be done for every byte of the lost block.

The plan set is a complete specification (including order and location) of the atomic primitives (instructions) that are to be executed to satisfy a particular distributed Swift/RAID operation. The plan set consists of transfer plans, each describing a serially executed list of instructions. Transfer plans are generated in pairs, with each pair specifying the interaction between a single client and one of the Swift/RAID servers. There is a Swift/RAID server for each storage device making up a file. Each transfer plan pair consists of a local transfer plan, which specifies the instructions to be executed on the client, and a remote transfer plan, which specifies the instructions to be executed on the server. The local and
