From "Ban It Till We Understand It" to "Resistance is Futile":

How University Programming Instructors Plan to Adapt as More

Students Use AI Code Generation and Explanation Tools such as

ChatGPT and GitHub Copilot

Sam Lau
UC San Diego
La Jolla, California, USA
lau@ucsd.edu

Philip J. Guo
UC San Diego
La Jolla, California, USA
pg@ucsd.edu

ABSTRACT

Over the past year (2022–2023), recently-released AI tools such

as ChatGPT and GitHub Copilot have gained significant attention

from computing educators. Both researchers and practitioners have

discovered that these tools can generate correct solutions to a va-

riety of introductory programming assignments and accurately

explain the contents of code. Given their current capabilities and

likely advances in the coming years, how do university instructors

plan to adapt their courses to ensure that students still learn well?

To gather a diverse sample of perspectives, we interviewed 20 in-

troductory programming instructors (9 women + 11 men) across

9 countries (Australia, Botswana, Canada, Chile, China, Rwanda,

Spain, Switzerland, United States) spanning all 6 populated conti-

nents. To our knowledge, this is the first empirical study to gather

instructor perspectives about how they plan to adapt to these AI

coding tools that more students will likely have access to in the

future. We found that, in the short-term, many planned to take

immediate measures to discourage AI-assisted cheating. Then opin-

ions diverged about how to work with AI coding tools longer-term,

with one side wanting to ban them and continue teaching program-

ming fundamentals, and the other side wanting to integrate them

into courses to prepare students for future jobs. Our study findings

capture a rare snapshot in time in early 2023 as computing in-

structors are just starting to form opinions about this fast-growing

phenomenon but have not yet converged to any consensus about

best practices. Using these findings as inspiration, we synthesized

a diverse set of open research questions regarding how to develop,

deploy, and evaluate AI coding tools for computing education.

CCS CONCEPTS

• Social and professional topics → Computing education.

KEYWORDS

AI coding tools, LLM, ChatGPT, Copilot, instructor perspectives

Permission to make digital or hard copies of part or all of this work for personal or

classroom use is granted without fee provided that copies are not made or distributed

for profit or commercial advantage and that copies bear this notice and the full citation

on the first page. Copyrights for third-party components of this work must be honored.

For all other uses, contact the owner/author(s).

ICER ’23 V1, August 7–11, 2023, Chicago, IL, USA

© 2023 Copyright held by the owner/author(s).

ACM ISBN 978-1-4503-9976-0/23/08.

https://doi.org/10.1145/3568813.3600138

ACM Reference Format:

Sam Lau and Philip J. Guo. 2023. From "Ban It Till We Understand It" to "Resistance is Futile": How University Programming Instructors Plan to Adapt as More Students Use AI Code Generation and Explanation Tools such as ChatGPT and GitHub Copilot. In Proceedings of the 2023 ACM Conference on International Computing Education Research V.1 (ICER ’23 V1), August 7–11, 2023, Chicago, IL, USA. ACM, New York, NY, USA, 16 pages. https://doi.org/10.1145/3568813.3600138

1 INTRODUCTION

Although research areas such as neural networks, program syn-

thesis [43], and natural language programming [75] have been

under development for decades, over the past year a series of com-

mercial product launches brought those ideas into wider public

consciousness. For instance, in June 2022 the GitHub Copilot AI

code generation tool [35] launched after a year in private beta. In

late November 2022, the ChatGPT AI chatbot launched [82]. Some

analysts estimated that it reached 100 million users in two months,

the fastest growth of any app on record [47]. Then, less than three

months later, both Microsoft and Google announced ChatGPT-like

conversational AI integration into their web search engines [74, 91].

This recent growth in popularity of AI tools has raised wide-

spread concerns about issues such as bias [21, 63], ethics [17], mis-

information [57], data licensing and privacy [22], energy usage and

climate impacts [100], and centralization of corporate power [114].

One specific concern at the forefront of many educators’ minds is the fact that these tools can effectively solve homework assignments and exam problems across a wide variety of school subjects [50].

Within computing education in particular, researchers have discovered that AI tools can be especially effective for programming, both because they are trained on billions of lines of open-source code [61] and because code has a more constrained logical structure than free-form natural language. These tools can generate

solutions to programming assignments and exam questions [32, 38,

39, 111] and explain the contents of code [33, 68, 97]. Given these

current realities and extrapolating to a future where AI capabilities

are likely to improve, how are computing educators planning to

adapt their courses in response to the growing proliferation

of AI code generation and explanation tools?

To gather a diverse set of perspectives on this question, we inter-

viewed 20 introductory programming course instructors (9 women

+ 11 men) in universities across 9 countries (Australia, Botswana,

Canada, Chile, China, Rwanda, Spain, Switzerland, United States)

spanning all 6 populated continents. Our participants ranged from

ICER

’23

V1,

August

7–11,

2023,

Chicago,

IL,

USA

Sam Lau and Philip J. Guo

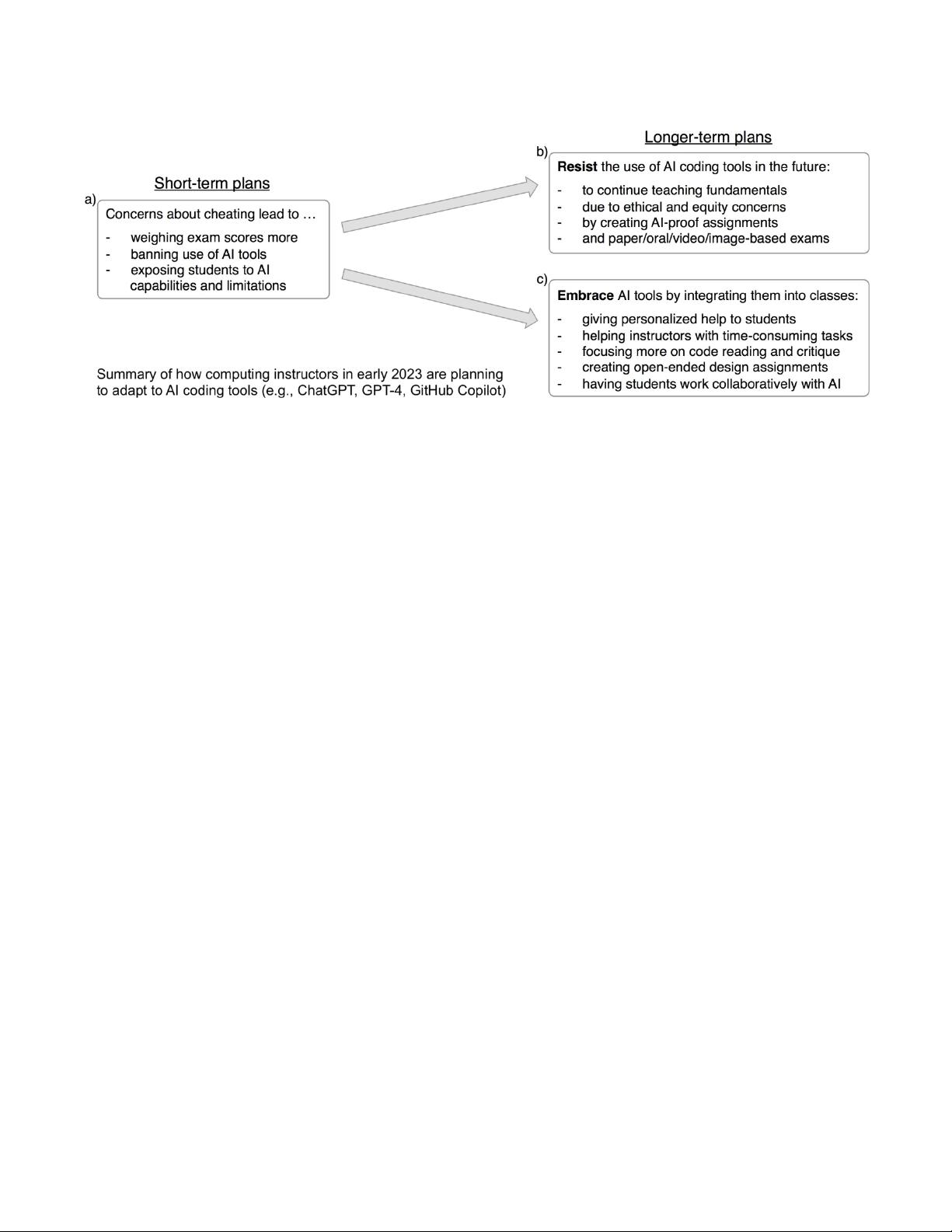

Figure 1: Summary of our study findings. We interviewed 20 introductory programming instructors and present both a) their

short-term plans, and their longer-term plans to either b) resist or c) embrace the use of AI coding tools in their classes.

having zero prior experience with AI coding tools to having used

them for personal programming projects.

Figure 1 summarizes our findings: a) In the short-term, all par-

ticipants were concerned about cheating, which led to immediate

reactions such as weighing exam scores more, banning the use

of AI, or showing students the capabilities and limitations of AI

tools. Longer-term, opinions diverged into two groups, with some

wanting to b) resist the use of AI tools and continue teaching pro-

gramming fundamentals, while others wanted to c) embrace AI

tools by integrating them into their classes to help both students

and instructors. Participants brainstormed a range of ideas for both

resisting and embracing AI in future classes, ranging from creating

‘AI-proof’ assignments that may deter AI tools to new kinds of

assignments where students must collaborate with AI.

Over the past academic year (2022–2023) computing educa-

tion researchers have been actively discussing these topics in blog

posts [19, 54, 55], a SIGCSE position paper [14], and workshops [65,

67]. Our paper complements these ongoing discussions by present-

ing the first empirical study of computing instructor perspectives on

AI code generation and explanation tools. The timing of our study is

unique since our interviews occurred in early 2023, which is the

first academic term where large numbers of students have access to

these tools due to ChatGPT’s release in late 2022. Thus, our findings

capture a rare snapshot in time when computing instructors are

recounting their early reactions to this fast-growing phenomenon

but have not worked out any best practices yet.

Since we are still very early in the adoption curve of AI coding

tools, we hope our study findings can spur conversations within

the computing education community about whether to resist or

embrace these tools in the coming years, and how to work with

these tools in ethical and equitable ways. We have a unique and

timely opportunity to develop both policies and social norms that

influence how these tools may impact future generations of students.

Thus, we conclude this paper with a set of open research questions

derived from our study findings (Section 7).

In sum, the contributions of this paper are:

• A comprehensive snapshot of the current state of AI coding tools and the range of human-centered research surrounding them as of early 2023, less than a year after the public release of ChatGPT and GitHub Copilot.

• The first study of computing instructors’ perceptions of AI coding tools, which found that they were most concerned about cheating in the short term but that longer-term their sentiments bifurcated into either wanting to resist these tools or to embrace them by integrating them into future classes.

• A set of open research questions for the computing education community to consider as AI coding tools potentially grow more widespread in the coming years.

2 BACKGROUND: THE CURRENT STATE OF AI CODING TOOLS IN EARLY 2023

Over the past year (2022–2023), AI code generation and explanation

tools have become more widespread with the release of products

like GitHub Copilot (currently free for students and instructors) and

ChatGPT (currently free for the public). These are built upon neural

network models trained on terabytes of textual data scraped from

the public internet (e.g., billions of webpages, billions of lines of

open-source code from GitHub, and the contents of open-licensed

books [24, 85]). Their large-scale architecture enables them to ‘learn’

patterns from data and generate text that a human might plausibly

write, which is why they are commonly called Large Language

Models (LLMs) [24]. And since code is a structured form of text,

these tools can also synthesize code; thus, some refer to AI code

generation as “Large Language Model (LLM)-driven program syn-

thesis” [11, 55]. For brevity, throughout this paper we use the terms

‘AI coding tools’ or simply ‘AI tools’ as shorthand to refer to these

tools. Users interact with these AI tools in three main ways:

1) Standalone: The simplest interface to LLMs is a web application that shows a text box, such as the OpenAI Playground [84] for GPT-series LLMs [20, 24, 85] (e.g., GPT-4) and TextSynth for various open-source LLMs [36]. The user can input some text (called a ‘prompt’ [112]) and the tool tries to generate text (or code) that plausibly continues from the user’s input. An example code-generation prompt might be “Write a Python function that adds two numbers.”

2) Conversational: Chatbots such as ChatGPT [82] improve upon a standalone interface by enabling users to hold a back-and-forth multi-turn conversation with the AI. This allows users to refer back to prior context instead of needing to re-enter their entire prompt every time. For instance, a user could say “Now rewrite this code using more descriptive variable names” and ChatGPT knows that the user is referring to code that was written earlier in the conversation.
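One way to picture why a chatbot can resolve a phrase like "this code" is that every new request carries the earlier turns along with it. The following is a minimal sketch of that idea, not any vendor's actual API: the `Conversation` class, the `role`/`content` field names, and the `render_prompt` helper are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Keeps every turn so later prompts can refer back to earlier ones."""

    messages: list = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        # role is "user" or "assistant" in this sketch (an assumed convention).
        self.messages.append({"role": role, "content": content})

    def render_prompt(self) -> str:
        # The whole history is flattened into the text the model would see,
        # which is what lets "this code" resolve to an earlier turn.
        return "\n".join(f"{m['role']}: {m['content']}" for m in self.messages)


chat = Conversation()
chat.add("user", "Write a Python function that adds two numbers.")
chat.add("assistant", "def add(a, b):\n    return a + b")
chat.add("user", "Now rewrite this code using more descriptive variable names.")
print(chat.render_prompt())
```

In a standalone interface, by contrast, the user would have to paste the earlier code back into each new prompt by hand.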

3) IDE-integrated: Tools such as GitHub Copilot [35], Replit Ghostwriter [93], Amazon CodeWhisperer [8], Codeium [2], and Tabnine [110] integrate into the user’s IDE (e.g., Visual Studio Code).

This lets them do autocomplete and generate suggestions within

the context of the user’s codebase as they are coding. Another ben-

efit of IDE integration is that these tools can pull in the user’s own

surrounding code (both before and after the cursor, plus in other

open project files [94]) as context, which enables them to generate

personalized code suggestions to fit the user’s current task. Also,

some IDE-integrated AI tools include an embedded chat interface.
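To make the idea of "surrounding code as context" concrete, here is a rough sketch of how an IDE plugin might assemble the text it sends with a completion request. It is an assumption-laden illustration: the function name, the `<CURSOR>` marker, the `# File:` labels, and the character budget are all hypothetical and do not describe how any specific tool works.

```python
def build_completion_context(before_cursor, after_cursor, open_files, max_chars=2000):
    """Assemble the text a hypothetical IDE plugin might send to the model."""
    parts = []
    # Snippets from other open project files come first, labeled by path.
    for path, source in open_files.items():
        parts.append(f"# File: {path}\n{source}")
    # Then the code on both sides of the cursor, with a made-up marker
    # showing where the completion should be inserted.
    parts.append(f"{before_cursor}<CURSOR>{after_cursor}")
    context = "\n\n".join(parts)
    # Keep only the tail so the code nearest the cursor survives truncation.
    return context[-max_chars:]


context = build_completion_context(
    before_cursor="def full_name(user):\n    return ",
    after_cursor="\n\nprint(full_name(current_user))",
    open_files={"models.py": "class User:\n    first: str\n    last: str"},
)
print(context)
```

Because the class definition from the other open file is part of the context, a suggestion can plausibly use `user.first` and `user.last` rather than guessing at attribute names.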

As an indicator of just how fast things are moving in this space,

many new LLMs and AI coding tools have been announced in the

2.5 months between when this paper was submitted (mid-March

2023) and when the final camera-ready publication was completed

(early June 2023). Examples include new coding-capable LLMs such

as LLaMA [103], GPT-4 [83], Cerebras-GPT [34], CodeGen2 [79],

DIDACT [70], replit-code [5], and StarCoder [61]; LLM-based chatbots such as Alpaca [102], Claude [1], Dolly [29], Koala [42], and

Vicuna [26]; and new IDE-integrated AI tools such as Sourcegraph

Cody [7], Google’s Codey LLM (similar name but unrelated tool!)

integrated into Colab and Android Studio IDE [90], and Meta’s

CodeCompose [78]. GitHub also announced new Copilot X [3]

enhancements, which include IDE-embedded AI chat interfaces.

2.1 Current Capabilities of AI Coding Tools

To provide context for the early-2023 era when our study’s inter-

views took place, we now summarize the current capabilities of AI

coding tools that are most relevant for educational use cases.

2.1.1 Code generation capabilities. Given natural language and/or code as input, these tools can generate relevant code:

Specification-to-code: Given a natural language description for

what a piece of code should do (e.g., a function or class specifica-

tion), these tools can generate code to meet that specification. For

example: “Create a function that takes a list of first names and a

list of last names then returns a new list with those names joined.”

Note that many CS1/CS2 programming assignments are phrased as

specifications that students can directly input into tools.
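For the example specification above, a tool might plausibly return something like the following. This is an illustrative sketch, not actual tool output; the function name `join_names`, the choice of a single space as separator, and the pairing-by-position reading of "joined" are assumptions, since the specification leaves them open.

```python
def join_names(first_names, last_names):
    """Return a new list of "First Last" strings, pairing names by position."""
    # zip() stops at the shorter list; the specification does not say what
    # should happen when the lengths differ, so this is one possible reading.
    return [f"{first} {last}" for first, last in zip(first_names, last_names)]


print(join_names(["Ada", "Alan"], ["Lovelace", "Turing"]))
# → ['Ada Lovelace', 'Alan Turing']
```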

Conversational specification-to-code: The main limitation of

‘specification-to-code’ is that novices are not good at writing precise

specifications, so the generated code may not be what they want.

To overcome this limitation, one can use the ‘flipped interaction

prompt pattern’ [112] to have a back-and-forth conversation with

ChatGPT before it generates the requested code. For instance, the

user could write: “ChatGPT, I want you to write a Python function to

join first and last names. Ask me clarifying questions one at a time

until you have enough information to write this code for me.”¹

Code completion: Tools that integrate into the IDE, such as Co-

pilot [35], Ghostwriter [93], CodeWhisperer [8], and Tabnine [110],

enable the user to start typing code and see a list of contextually-

relevant code completions, like an AI-enhanced autocomplete.

Code refactoring: Once the user has written some code, they can

ask the tool to rewrite it in order to improve readability, style, or

maintainability; e.g., “Refactor this function to use smaller helper

functions.” Again, holding a conversation with ChatGPT and an-

swering its follow-up questions can help it to generate better code.

Code simplification: One kind of refactoring that students may try

is to ask the tool to simplify a piece of code. For instance, “Rewrite

this code using only simple Python features that a student in an

introductory programming course would know about.” Students could use this technique to generate more ‘plausible-looking’ answers to

CS1/CS2 assignments, because otherwise it may look suspicious if

they turn in code that uses too many advanced language features.
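As a hypothetical illustration of what such a simplification request could change, the two functions below behave the same on a name-joining task. The first relies on `zip()`, a list comprehension, and f-strings; the second uses only a loop, indexing, and `append`. Both function names are made up for this sketch.

```python
def join_names_compact(first_names, last_names):
    """Compact version using zip(), a list comprehension, and f-strings."""
    return [f"{first} {last}" for first, last in zip(first_names, last_names)]


def join_names_simple(first_names, last_names):
    """Same result, written with only a loop, indexing, and append."""
    joined = []
    # Use the shorter length so an index never runs past either list.
    count = len(first_names)
    if len(last_names) < count:
        count = len(last_names)
    i = 0
    while i < count:
        joined.append(first_names[i] + " " + last_names[i])
        i = i + 1
    return joined


names = (["Ada", "Alan"], ["Lovelace", "Turing"])
print(join_names_compact(*names) == join_names_simple(*names))  # → True
```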

Language translation: These tools can also translate code written

in one programming language into another (albeit imperfectly).

They can also translate mentions of human languages within code.

Test generation: Users can also ask AI tools to generate test cases

(e.g., unit/regression tests). For instance, Copilot has a TestPilot

feature [72] that generates tests and interactively refines them based

on user feedback. These tools can often create tests for unusual

edge cases that novices may not think of on their own [113].
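The kind of test suite such a request might yield can be sketched as follows. This is a hand-written illustration rather than output from TestPilot or any other tool; the `average` function under test and the specific cases are assumptions chosen to show edge cases (a single element, negative numbers, floating-point values, an empty list) that a novice might overlook.

```python
import unittest


def average(numbers):
    """Function under test: the mean of a non-empty list of numbers."""
    if not numbers:
        raise ValueError("average() requires at least one number")
    return sum(numbers) / len(numbers)


class TestAverage(unittest.TestCase):
    def test_typical_values(self):
        self.assertEqual(average([2, 4, 6]), 4)

    def test_single_element(self):
        self.assertEqual(average([5]), 5)

    def test_negative_numbers(self):
        self.assertEqual(average([-1, 1]), 0)

    def test_floats(self):
        # Floating-point sums are inexact, so compare approximately.
        self.assertAlmostEqual(average([0.1, 0.2]), 0.15)

    def test_empty_list_raises(self):
        with self.assertRaises(ValueError):
            average([])


# Run the suite explicitly so the script does not exit the interpreter.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(TestAverage)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```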

Structured test data generation: AI tools can also generate struc-

tured data that users can pass into their software to manually test it.

For instance, one could ask a tool to generate 100 fake user profiles

(with fake names, ages, and locations) in some format, like JSON or

a Python dictionary, in order to test a prototype social media app.
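To show the shape of the data described above, here is a small sketch that builds 100 fake profiles and serializes them as JSON. A tool could emit the records directly or produce a short generator script along these lines; the field names, the name and city lists, and the age range are all made up for illustration.

```python
import json
import random

# Hypothetical value pools; any plausible-looking values would do.
FIRST_NAMES = ["Ana", "Bo", "Chen", "Dee", "Eli"]
CITIES = ["Gaborone", "Kigali", "Santiago", "Sydney", "Zurich"]


def make_fake_profiles(count, seed=0):
    """Build a list of fake user profiles as plain dictionaries."""
    rng = random.Random(seed)  # seeded so the test data is reproducible
    profiles = []
    for user_id in range(1, count + 1):
        profiles.append({
            "id": user_id,
            "name": rng.choice(FIRST_NAMES),
            "age": rng.randint(18, 90),  # randint includes both endpoints
            "location": rng.choice(CITIES),
        })
    return profiles


profiles = make_fake_profiles(100)
print(json.dumps(profiles[:2], indent=2))  # show the first two records
```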

2.1.2 Code explanation capabilities. Given a piece of code and

natural language instructions as input, these tools can explain what

that code does in a way that emulates how a human instructor

might explain it to a student:

Explanations at varying expertise levels: The most straightfor-

ward prompt is to ask the tool to explain what a piece of code does.

One can also use the ‘persona prompt pattern’ [112] to generate

explanations for a given expertise level. For instance, “ChatGPT, I

want you to take on the persona of a university CS1 instructor talking

to a student who has never taken a programming class before. Ex-

plain what this function does: [user’s code].” Users can also ask it to

automatically generate code comments or API documentation.
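Since the persona pattern amounts to a fill-in-the-blanks template, it can be sketched as a small helper that builds the prompt text. The function name and parameters are hypothetical, and, as with the other prompts in this paper, the wording would likely need iteration to work well in practice.

```python
def persona_prompt(persona, audience, code):
    """Fill in a persona-style prompt asking for an explanation of some code."""
    return (
        f"I want you to take on the persona of {persona} "
        f"talking to {audience}. "
        f"Explain what this function does:\n\n{code}"
    )


snippet = "def add(a, b):\n    return a + b"
prompt = persona_prompt(
    "a university CS1 instructor",
    "a student who has never taken a programming class before",
    snippet,
)
print(prompt)
```

Swapping in a different persona or audience (for example, a senior engineer addressing a colleague) changes the expertise level the explanation is pitched at without touching the rest of the prompt.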

Debugging help: Users can ask the tool to find possible bugs in

the given code and explain why those may be bugs. Note that

the tool does not run the code or perform rigorous static code

analysis. But in practice, ‘superficial’ bugs in student code (e.g., off-

by-one errors in a loop bound) can be found by the tool matching

against patterns learned from billions of lines of open-source code.

And with ChatGPT, one can ask follow-up clarifying questions

and engage in a back-and-forth debugging conversation, which

simulates some aspects of working with a human tutor [71].
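As a concrete instance of the ‘superficial’ bugs mentioned above, the sketch below shows an off-by-one error in a loop bound next to its fix. The function names are illustrative; the point is that the mistake follows a recognizable pattern that can be spotted without running the code.

```python
def sum_all_buggy(values):
    total = 0
    # Bug: the bound stops one short, so the last element is never added.
    for i in range(len(values) - 1):
        total += values[i]
    return total


def sum_all_fixed(values):
    total = 0
    # Fix: range(len(values)) visits every index from 0 to len(values) - 1.
    for i in range(len(values)):
        total += values[i]
    return total


print(sum_all_buggy([1, 2, 3]))  # → 3
print(sum_all_fixed([1, 2, 3]))  # → 6
```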

¹ The prompts in this paper are simplified illustrative examples that may not work optimally as-is. In practice, it likely takes a fair amount of iteration to craft prompts that work reliably and effectively. This process is known as prompt engineering [112].


Conversational bug finding: A more powerful way to do AI-

assisted bug finding is to start a back-and-forth debugging conver-

sation. For instance: “Here is my code and the output I see when I run

it. This output looks wrong because the last two array elements are

duplicated. What should I change in my code to help me find the bug

more easily?” The AI tool might suggest a code edit; then the user

can apply that edit, re-run their modified code, and paste the new

text output or error message into the next round of dialogue. This

conversational technique elegantly bypasses the AI tool’s limitation

that it cannot directly run the user’s code – instead, the user runs

the code on their computer and sends the output to the AI tool.

Code review and critique: AI tools can also serve as a code re-

viewer and give detailed critiques. Again, the ‘persona pattern’ [112]

can be useful here, e.g.: “I want you to take on the persona of a senior

software engineer at a top technology company. I have submitted this

code to you for a formal code review. Please critique it: [user’s code]”

Conceptual explanations with code examples: The user can

also ask the tool to explain a programming concept just like how

they would ask a human instructor. For instance: “What’s the differ-

ence between checked and unchecked exceptions in Java? Give code

examples for each.”

The tool’s ability to generate code examples can

enable some basic level of (albeit imperfect) fact-checking since the

user can run that code and see if it matches the given explanation.

2.2 Notable Limitations of AI Coding Tools

Here are some commonly-known limitations of these tools and

other reasons people have cited for not using them:

• Inaccuracies: The most notable limitation is that these tools can generate inaccurate outputs with no quality guarantees [81]. This may result in subtly-inaccurate code or incorrect explanations which appear believable to novices.

• Code quality: They may generate code that is stylistically non-ideal, that may not be robust to edge cases, that has security vulnerabilities [86], or that is not aligned with what students are learning in a particular class.

• Knowledge cutoff: These tools only ‘know’ what is in their training data, which is an older snapshot of the web. So they cannot help with, say, a JavaScript library released last week. That said, they do get periodically re-trained, and Microsoft and Google are augmenting them to search the web [74, 91]. Also, tools like Sourcegraph Cody [7] augment an LLM by retrieving code and text from a user’s own project repository in order to generate responses about facts that are not on the public web (a form of retrieval-augmented generation [60]).

• Learning curve: Novices may have a hard time producing high-quality results with simple prompts [115]. It takes some level of expertise to craft effective and reliable prompts [112].

• Nondeterminism: AI tools can produce different outputs even when given the same prompt. There are settings to reduce randomness of outputs, but whenever the underlying AI models get updated, results can still end up non-reproducible.

• Offensive content: AI tools can generate outputs that exhibit harmful biases [17, 63]. For instance, AI-generated code examples may contain offensive stereotypes embedded in variable names or strings [14, 18, 24].

• Ethical objections: Some people are opposed to using AI tools due to concerns about their creators disregarding software licenses when scraping code repositories for training data [22], the environmental impact of training and running LLMs [100], and companies using underpaid human workers to label and filter training data [89].
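One common example of the "settings to reduce randomness" mentioned under Nondeterminism above is a sampling temperature. The sketch below is a simplified illustration of temperature sampling over a handful of candidate tokens, not a description of how any particular tool is implemented; the `sample_token` function and the scores are made up.

```python
import math
import random


def sample_token(scores, temperature, rng):
    """Pick one token from a {token: score} dict using temperature sampling."""
    if temperature == 0:
        # Greedy choice: always the highest-scoring token, so no randomness.
        return max(scores, key=scores.get)
    # Softmax over scores divided by the temperature; lower temperatures
    # sharpen the distribution toward the top-scoring token.
    top = max(scores.values())
    weights = {t: math.exp((s - top) / temperature) for t, s in scores.items()}
    tokens = list(weights)
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]


scores = {"total": 2.0, "sum": 1.5, "result": 1.0}  # made-up model scores
rng = random.Random()
print([sample_token(scores, 1.0, rng) for _ in range(5)])  # varies run to run
print([sample_token(scores, 0, rng) for _ in range(5)])    # always "total"
```

Even with the temperature at zero, the output depends on the scores the model assigns, so an updated model can still produce different results for the same prompt.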

3 RELATED WORK

There are fast-growing lines of research on the technical architecture of LLMs (large language models) that power AI code generation tools², applications of LLMs to many specific domains (e.g., creative writing [77]), and broader societal implications of LLMs [17].

Instead of surveying the entire landscape of research on LLMs, we

focus our discussion on parts of the literature that are the most

relevant to our interview study. This encompasses a range of human-

centered research on how LLM-based code generation tools relate

to the fields of software engineering and computing education.

3.1 How Software Developers Use AI Code Generation Tools

Several groups of researchers have recently studied how software

developers use GitHub Copilot in practice, since it is marketed

as a tool to help developers be more productive [52]. Bird et al.

combined forum analysis, a think-aloud study, and a survey to

gather usage patterns such as Copilot enabling faster code-writing

but at the expense of less code understanding [18]. Sarkar et al.

analyzed blog and forum posts to give a similar overview [96].

Cheng et al. studied how developer communities might build trust

in AI tools [25]. Barke et al. found that developers used it in two

ways: to help them explore options and to accelerate their path

toward a known goal [13]. Peng et al. found in a 95-user between-

subjects study that using Copilot helped developers complete a

web development task 56% faster than the control group [87]. But

Vaithilingam et al. found in a 24-user within-subjects study that

although developers liked using Copilot as a starting point, it did

not always improve task completion time or accuracy [106].

More broadly, HCI researchers have done usability studies of AI

code generation tools and proposed improved interface designs. For

instance, Jayagopal/Lubin et al. analyzed the usability of 5 program

synthesis tools (Copilot was one of them) and found that those

which run in the background (without explicit user triggering) can

be more learnable for novices [49]. Sun et al. discovered users’ needs

for explainability in AI code generation tools [101]. Vaithilingam et

al. prototyped 19 user interface ideas for augmenting IDEs with AI

assistance [105]. Ross et al. augmented an IDE with a conversational

AI tool (similar to ChatGPT) called the Programmer’s Assistant [95].

McNutt et al. proposed a design space for how to integrate AI

assistance within computational notebooks, which have different

affordances than IDEs [73]. Liu et al. proposed a user experience

enhancement that translates the user’s prompts into code and then

back again to natural language in order to clarify what the tool

intends to do [64].

² Some examples of LLMs specialized for programming include CodeBERT [37], PyMT5 [28], Codex [24], AlphaCode [62], CodeGen [80], CodeGen2 [79], Parsel [116], InCoder [40], CodeT [23], StarCoder [61], CodeCompose [78], replit-code [5], and Codey [90]. Note that modern general-purpose LLMs such as GPT-4 [83] are trained on large amounts of code as well, so they can also do code generation and explanation.
