1001 Acquisition Viewpoints

Efficient and Versatile View-Dependent Modeling of Real-World Scenes

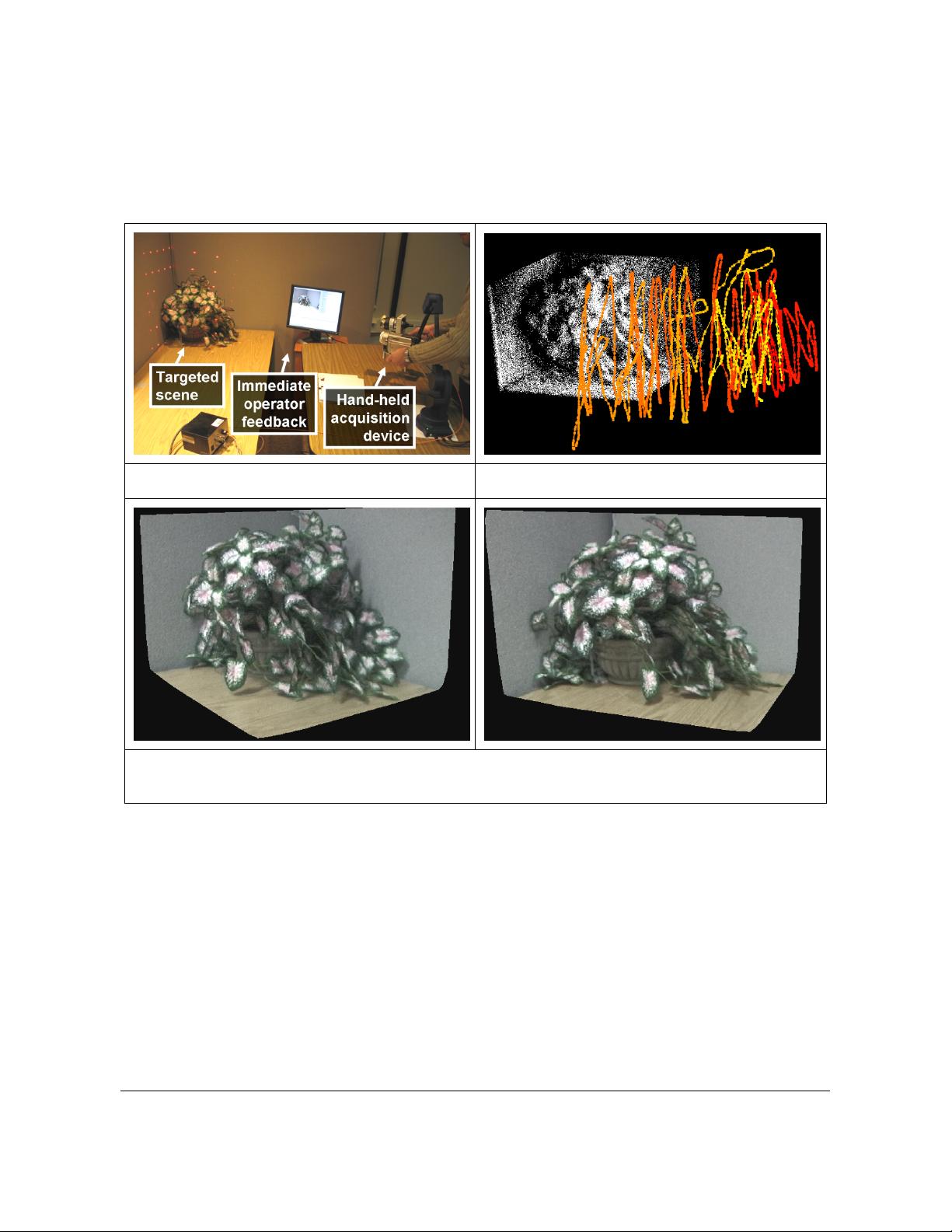

Figure 1: Acquisition system

Figure 2: Typical acquisition path with 3,684 viewpoints

Figure 3: Images rendered from plant model acquired in 10 minutes.

Abstract

Three-dimensional modeling is a severe bottleneck for computer graphics applications. Manual modeling is time-consuming and, even so, the resulting models fail to capture the true complexity of real-world scenes. Automated modeling based on acquiring color and depth data is a promising alternative. The conventional approach is to sample the scene densely from a sparse set of acquisition viewpoints. Unfortunately, even with careful view planning, a sparse set of acquisition viewpoints does not and cannot ensure adequate coverage for complex scenes. Moreover, the approach is inefficient due to the considerable data redundancy between neighboring acquisition viewpoints: acquisition from an additional viewpoint has the same cost while it contributes fewer and fewer new samples.

We propose an automated modeling approach based on sampling the scene sparsely from a dense set of acquisition viewpoints. We show that the sparse data quickly accumulates to generate models with good scene coverage. The sparse depth is acquired efficiently and robustly, which enables an interactive, operator-in-the-loop acquisition pipeline. We describe a modeling system that implements this approach. The system acquires scenes with complex geometry and complex reflective properties from thousands of viewpoints in minutes. The resulting model has a compact memory footprint and it supports photorealistic rendering at interactive rates. The system is robust, yet it does not require displacing scene objects or altering scene lighting conditions.

Categories and Subject Descriptors (ACM CCS): I.3.3. [Computer Graphics]—Three-Dimensional Graphics and Realism.

submitted to EUROGRAPHICS 2008.

1. Introduction

Constructing high-fidelity 3D models of real-world scenes is an important bottleneck for many computer graphics applications. The conventional approach of manual modeling using CAD or animation software requires artistic talent and a huge time investment. Automated modeling based on acquiring color and depth data is a promising alternative.

The typical automated modeling approach is to densely sample the color and geometry of the scene from several acquisition viewpoints, and then to merge the datasets to obtain the scene model. However, dense depth sampling of complex scenes remains a challenging process that can take tens of minutes per acquisition viewpoint (e.g. sequential scanning in laser rangefinding, robust off-line correspondence computation in depth from stereo, or sequential light pattern projection in depth from structured light). This limits acquisition to a few viewpoints. Unfortunately, even with optimal acquisition viewpoint planning, complex scenes cannot be adequately sampled from only a sparse set of viewpoints.

Instead of dense depth sampling from a sparse set of acquisition viewpoints, we propose an automated modeling approach based on sparse depth sampling from a dense set of acquisition viewpoints. We show that the sparse per-viewpoint depth data quickly accumulates to generate models with superior scene coverage (see Section 4.3). Moreover, sparse depth can be acquired robustly and efficiently, which enables an interactive, operator-in-the-loop acquisition pipeline. The operator detects and addresses acquisition problems right away, and aims the acquisition device so as to maximize the impact of the sparse data, which ensures that a quality model is obtained in a single scanning session.

The sparse-depth/dense-viewpoint (SDDV) approach enables adding detail at linear cost: a quick scan can be refined at will by revisiting scanned regions in order to increase model fidelity. This is in sharp contrast with the dense-depth/sparse-viewpoint (DDSV) approach where, due to data redundancy, acquisition from each additional viewpoint comes at the same cost yet contributes fewer and fewer new samples.
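The contrast between the two regimes can be illustrated with a toy coverage model. This sketch is not the paper's analysis: it assumes that the surface is divided into equal cells and that every depth sample lands on a uniformly random cell, ignoring occlusion and the spatial overlap between neighboring views. The cell count and the samples-per-view figures are hypothetical values chosen only for illustration.

```python
def coverage_curve(samples_per_view, surface_cells, num_views):
    """Expected fraction of surface cells covered after each view.

    Toy assumption (not from the paper): each sample hits a uniformly
    random cell, independently of all other samples.
    """
    # Probability that a given cell is missed by all samples of one view.
    miss = (1.0 - 1.0 / surface_cells) ** samples_per_view
    return [1.0 - miss ** k for k in range(1, num_views + 1)]


def new_coverage_per_view(curve):
    """Coverage gained by each successive view."""
    return [curve[0]] + [b - a for a, b in zip(curve, curve[1:])]


# Hypothetical numbers, for illustration only.
CELLS = 100_000
dense = coverage_curve(samples_per_view=50_000, surface_cells=CELLS, num_views=5)
sparse = coverage_curve(samples_per_view=50, surface_cells=CELLS, num_views=5)

dense_gain = new_coverage_per_view(dense)    # shrinks quickly from view to view
sparse_gain = new_coverage_per_view(sparse)  # stays nearly constant

for k, (d, s) in enumerate(zip(dense_gain, sparse_gain), start=1):
    print(f"view {k}: dense gain {d:.4f}, sparse gain {s:.6f}")
```

Under these assumptions each dense view contributes a smaller share of new cells than the one before it, whereas each sparse view contributes almost the same amount as long as the model is far from saturated, which is the sense in which detail is added at roughly linear cost.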

Another important advantage of the SDDV modeling approach is an increased robustness of the acquisition of reflective surfaces. Such surfaces are challenging for passive methods (e.g. stereo) because they complicate correspondence searching, and also for active methods (e.g. laser rangefinding, structured light) because they limit the amount of emitted energy reflected back to the sensor. Our approach of interactive acquisition from a dense set of viewpoints allows the operator to avoid grazing scanning angles.

We describe a modeling system that implements the SDDV modeling approach (Figure 1). The system acquires the scene from thousands of viewpoints in minutes (Figure 2). The color and depth data are combined into a view-dependent model, which produces quality novel views of the scene at interactive rates (Figure 3, Figure 10, and accompanying video). The system is versatile (it supports complex geometry and surfaces with complex reflective properties) and robust (all the models shown here were acquired in a single take in one afternoon). We currently target scenes that fit in a 0.5 m cube. However, the system has ample depth acquisition range, the resulting models are compact, and the system does not require altering scene lighting conditions or displacing scene objects, which are important preconditions for a future extension to SDDV modeling of large environments.

2. Prior work

We briefly discuss prior work, organized by the method used for depth acquisition.

No-depth methods

One automated modeling approach is to bypass depth acquisition altogether and to sample the scene color densely from a dense set of acquisition viewpoints. The resulting 4D ray database (light field [LH96] and lumigraph [GGS*96]) supports photorealistic rendering at interactive rates. Light fields and their extensions remain the only approach for acquiring extremely complex scenes (e.g. feathers, fur, translucent gauze [MPN*02]). However, the approach suffers from large model sizes. Panoramas [Che95] and their extensions are compact 2D ray databases, but the reduction in size comes at the price of restricting the desired viewpoint to the center of the panorama. All ray database methods preclude quantitative applications that require explicit geometry.

User-specified-depth methods

Another approach is to rely on the user to specify a coarse geometric model of the scene using an interactive software tool that leverages model and image space geometric constraints [DTM96, HH02, QTZ*06]. The approach takes advantage of the user's understanding of the scene: the user specifies the most relevant geometric entities. However, the method scales poorly with the geometric complexity of the scene, as manual geometry specification becomes prohibitively expensive.

Dense-depth methods

One of the oldest and most appealing ways of acquiring scene geometry is depth from stereo, where the disparity of corresponding points in overlapping photographs is translated into depth [PG02, DRR03, LHY*05]. A simple camera suffices to capture scenes at small or large scale, indoors or outdoors. However, finding correspondences is a challenging problem that relies on the presence of color texture.

In depth from structured light, one camera of the stereo pair is replaced with a light source that casts a special light pattern in the field of view of the remaining camera. The approach simplifies the search for correspondences and is robust to surfaces that lack color texture. Using complex patterns of light enables recovering dense depth maps from a few frames [RHL02, KGG03], but the approach relies on video projectors, which are bulky and require strict control of scene lighting.

The alternative is to use a simpler and brighter light pattern such as a plane of laser light. Such triangulation laser rangefinding produces high-resolution depth maps [Lev00, GFS05] but at a large time cost due to sequential scanning. Moreover, the laser stripe degrades quickly with the distance to the acquired surface, which requires either keeping the acquisition device close to the scanned surface or using laser source power levels that are not eye safe. Complex geometry is also a challenge for the laser stripe approach due to laser scattering and secondary reflections.

A third approach for dense depth acquisition is time-of-flight laser rangefinding [MNP99, STY03, GFS05]. As in the case of triangulation laser rangefinding, quality depth maps are acquired but only at a substantial time cost. However, the approach allows scanning from afar. Neither laser rangefinding approach acquires color directly.

One disadvantage common to all dense depth methods is the high redundancy between depth maps. Another disadvantage is slow acquisition, which limits the number of acquisition locations, which in turn leads to poor scene coverage.

Interactive methods

We are not the first to notice the advantages of an interactive automated modeling pipeline. An early type of interactive modeling system allows the operator to move a light pattern inside the field of view of the fixed camera [Bor*98, BP99]. This enables the operator to avoid grazing angles between the scene surfaces and the pattern of light, but is fundamentally limited to a single viewpoint by the fixed camera. The most the operator can do is to assign depth to all the pixels of the camera image, which is equivalent to one dense depth map.

The single-viewpoint limitation is overcome by systems that employ a laser stripe triangulation rangefinder whose position and orientation are provided by an electromagnetic [FFG*96] or mechanical [Hi00] tracker. However, the laser stripe limitations discussed above remain. A different approach for achieving interactive acquisition from many viewpoints is to move the targeted scene in the field of view of a fixed scanner using a rotating platter [TOF05], or by relying on the operator to rotate the object [RHL02]. Such systems are essentially object scanners and scale poorly with the size of the scene.

The ModelCamera system [PSB03, BPM06] employs a structured light pattern that consists of a matrix of laser beams, which ensures robust acquisition of sparse depth data at interactive rates. Our acquisition device is similar to the ModelCamera and we describe the similarities and differences in Section 4.1. The ModelCamera scans simple scenes (e.g. large pieces of furniture) in a hand-held mode, without reliance on an external tracker [PSB03]. Complex scenes (e.g. plants, rooms, buildings) are acquired by panning and tilting the ModelCamera about a fixed acquisition viewpoint [BPM06]. The single viewpoint constraint limits the fidelity of the acquired models.

3. System overview

We developed a system that implements the sparse-depth/dense-viewpoint automated modeling approach. We employ a hand-held acquisition device that acquires dense color and sparse depth at interactive rates (Section 4.1). The operator sweeps the scene with the device and monitors the acquisition process through immediate visual feedback provided on a nearby monitor (Figure 1). Data acquisition proceeds in two steps: depth acquisition (Section 4.2), which achieves adequate scene coverage (Section 6.1), followed by color acquisition (Section 4.3). The depth and color data are combined into a view-dependent model for rendering (Section 0).

4. Acquisition

To support the SDDV approach the system should:

- acquire sparse depth efficiently and robustly

- acquire high-quality color

- allow the operator to freely position the acquisition device

- provide real-time feedback during acquisition.

4.1. Acquisition device

We employ an acquisition device similar to the ModelCamera [BPM06]. Like the ModelCamera, our acquisition device consists of a video camera with an attached laser system that casts a matrix of laser beams into the field of view of the camera (Figure 4). Each beam creates a bright dot in the frame, which is detected and triangulated into a depth sample. By construction, the laser beams project to disjoint image-plane epipolar segments, which makes dot detection efficient and robust. Compared to a laser stripe, the dot pattern has the advantage of higher brightness and of fewer scattering and secondary reflection artifacts. The baseline of 20 cm provides a depth range of 50 to 400 cm.
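The detection and triangulation of a single dot can be sketched as follows. This is a minimal illustration under simplifying assumptions that are not stated in the paper: an ideal pinhole camera, a single beam parallel to the optical axis and offset horizontally by the baseline, and an epipolar segment that lies along one image row. The focal length, principal point, image width, and intensity threshold are hypothetical values, and all function names are introduced here for illustration; only the 20 cm baseline and the 50 to 400 cm range come from the text.

```python
def epipolar_segment(f_px, baseline_cm, z_min_cm, z_max_cm, cx):
    """Columns between which the dot of one beam can appear.

    Assumes a beam parallel to the optical axis, so a dot at depth z
    projects to column cx + f_px * baseline_cm / z.
    """
    u_far = cx + f_px * baseline_cm / z_max_cm
    u_near = cx + f_px * baseline_cm / z_min_cm
    return int(round(u_far)), int(round(u_near))


def detect_dot(row, u_start, u_end, min_intensity):
    """Column of the brightest pixel on the segment, or None if too dim."""
    best_u = max(range(u_start, u_end + 1), key=lambda u: row[u])
    return best_u if row[best_u] >= min_intensity else None


def triangulate_depth(u, f_px, baseline_cm, cx):
    """Depth in cm of a dot detected at column u (inverse of the projection)."""
    return f_px * baseline_cm / (u - cx)


# Hypothetical camera parameters; baseline and range are from the text.
F_PX, CX, WIDTH = 800.0, 100.0, 640
BASELINE_CM, Z_MIN_CM, Z_MAX_CM = 20.0, 50.0, 400.0

u_far, u_near = epipolar_segment(F_PX, BASELINE_CM, Z_MIN_CM, Z_MAX_CM, CX)

# Synthetic image row: dark background with one bright dot at 100 cm depth.
row = [10] * WIDTH
row[int(CX + F_PX * BASELINE_CM / 100.0)] = 255

u_dot = detect_dot(row, u_far, u_near, min_intensity=128)
depth_cm = triangulate_depth(u_dot, F_PX, BASELINE_CM, CX)
print(u_far, u_near, u_dot, depth_cm)
```

Because each beam has its own disjoint segment, the search is a short one-dimensional scan per beam and a detected dot needs no further disambiguation. A real device would substitute calibrated per-beam segments for the parallel-beam assumption used here.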

Whereas the ModelCamera was restricted to a single acquisition viewpoint using a parallax-free pan-tilt bracket, our acquisition device is attached to a mechanical tracking arm which allows 6-degree-of-freedom motion. The operator can position and orient the acquisition device freely within a sphere with a radius of 60 cm to acquire
