没有合适的资源?快使用搜索试试~ 我知道了~

温馨提示

试读

16页

Fast Sorting Algorithms using AVX-512 on Intel Knights LandingBerenger BramasMax Planck Computing and Data Facility (MPCDF)Email: Berenger.Bramas@mpcdf.mpg.deThis paper describes fast sorting techniques using the recent AVX-512 instruction set. Our implementations benefit from the latest possibilities offered by AVX-512 to vectorize a two-parts hybrid algorithm: we sort the small arrays using a branch- free Bitonic variant, and we provide a vectorized partitioning kernel which is the main compon

资源推荐

资源详情

资源评论

Fast Sorting Algorithms using

AVX-512 on Intel Knights Landing

Berenger Bramas

Max Planck Computing and Data Facility (MPCDF)

Email: Berenger.Bramas@mpcdf.mpg.de

This paper describes fast sorting techniques using the recent AVX-512 instruction

set. Our implementations benefit from the latest possibilities offered by AVX-512

to vectorize a two-parts hybrid algorithm: we sort the small arrays using a branch-

free Bitonic variant, and we provide a vectorized partitioning kernel which is the

main component of the well-known Quicksort. Our algorithm sorts in-place and

is straightforward to implement thanks to the new instructions. Meanwhile, we

also show how an algorithm can be adapted and implemented with AVX-512. We

report a performance study on the Intel KNL where our approach is faster than

the GNU C++ sort algorithm for any size in both integer and double floating-point

arithmetics by a factor of 4 in average.

Keywords: quicksort; sort; vectorization; AVX-512; KNL

1. INTRODUCTION

Sorting is a fundamental problem in computer science,

and efficient implementations are critical for various

applications such as database servers [1] or image

rendering engines [2]. Moreover, sorting libraries are

massively used in software development to reduce the

complexity of specific algorithms. As an example,

once an array is sorted, it is possible to use

binary search and find any item in logarithmic time.

Therefore, having efficient sorting implementations on

new architectures can improve performance of a wide

range of applications.

From one CPU generation to the next, improvements

and changes are made at various levels. Some

modifications are hidden from the programmer and

might improve existing codes without any update.

This includes the low-level modules (speculation, out-

of-order execution, etc.) but also the CPU’s clock

frequency. On the other hand, some new features and

improvements require to be explicitly used. In this

category, the two dominant improvements are the usage

of vectorization units and multi-core parallelization.

Thus, to achieve high-performance on modern CPUs,

it is indispensable to vectorize a code, that is to take

advantage of the single instruction on multiple data

(SIMD) capacity. In fact, while the difference between a

scalar code and its vectorized equivalent was ”only” of a

factor of 2 in the year 2000 (SSE technology and double

precision), the difference is now up to a factor 4 on

most CPUs (AVX) and up to 8 (AVX-512) on the KNL.

Some algorithms or computational intensive kernels are

straightforward to vectorize and could even be auto-

vectorize by the compilers. However, data-processing

algorithms are generally not in this category because

the SIMD operations are not always well designed for

this purpose.

The Intel Knights Landing (KNL) processor [3] did

not follow the same path of improvement as the previous

CPUs. In fact, the clock frequency has been reduced

but the size of SIMD-vector and the number of cores in

the chip have been increased impressively (in addition

to having a new memory hierarchy). The KNL supports

the AVX-512 instruction set [4]: it supports Intel AVX-

512 foundational instructions (AVX-512F), Intel AVX-

512 conflict detection instructions (AVX-512CD), Intel

AVX-512 exponential and reciprocal instructions (AVX-

512ER), and Intel AVX-512 prefetch instructions (AVX-

512PF). AVX-512 allows to work on SIMD-vectors of

double the size compared to previous AVX(2) set, and

it comes with various new operations. Moreover, the

next-generation of Intel CPUs will support AVX-512

too. Consequently, the development of new algorithms

targeting Intel KNL should be beneficial to the future

Intel Xeon SkyLake CPU as well.

In the current paper, we look at different strategies

to develop an efficient sorting algorithm on Intel KNL

using AVX-512. The contributions of this study are the

following:

• studying sorting algorithms on the Intel KNL

• proposing a new partitioning algorithm using AVX-

512

• defining a new Bitonic sort variant to sort tiny

arrays using AVX-512

• implementing a new Quicksort variant using AVX-

512.

All in all, we show how we can obtain a fast and

arXiv:1704.08579v1 [cs.MS] 24 Apr 2017

2 B. Bramas

vectorized sorting algorithm

1

.

The rest of the paper is organized as follows. Sec-

tion 2 provides the background related to vectorization

and sorting. Then, we describe our proposal to sort tiny

arrays in Section 3, and our partitioning and Quicksort

variant in Section 4. Finally, in Section 5, we provide

a performance study of our method against the GNU

C++ standard sort (STL) implementation.

2. BACKGROUND

In this section, we provide the pre-requisite to our sort

implementation. We first introduce the Quicksort and

the Bitonic sort. Then we describe the objectives of the

vectorization and we finish with an overview of some

related work.

2.1. Sorting Algorithms

2.1.1. Quicksort Overview

We briefly describe the well-known Quicksort (QS)

algorithm to make the document self-content, but

readers comfortable with it can skip this section. QS

has been originally proposed in [5], and uses a divide-

and-conquer strategy by recursively partitioning the

input array until it ends with partitions of one value.

The partitioning puts values lower than a pivot at

the beginning of the array and the values greater at

the end with a linear complexity. QS has a worst-

case complexity of O(n

2

) but an average complexity

of O(n log n) in practice. The complexity is tied to the

choice of the pivot when partitioning, it must be close

to the median to ensure a low complexity. However,

its simplicity in terms of implementation and its speed

in practice has turned it into a very popular sorting

algorithm. Figure 1 shows the sort of an array using

QS.

3 1 2 0 5

1 0 2

5 3

3 50 1

≤ 2

≤ 1

≤ 5

∅

∅

l=0

r=1 r=5

l=0

l=4

r=5

FIGURE 1: Quicksort example to sort [3, 1, 2, 0, 5] to

[0, 1, 2, 3, 5]. The pivot is equal to the value in the

middle: the first pivot is 2, then at second recursion

level it is 1 and 5.

We provide in Algorithm 2 a possible implementation

of a scalar QS (here the term scalar refers to as single

1

The functions described in the current study are available

at https://gitlab.mpcdf.mpg.de/bbramas/avx-512-sort. This

repository includes a clean header-only library (branch master)

and a test file that generates the performance study of the current

manuscript (branch paper). The code is under MIT license.

value at the opposite of a SIMD vector). In this

implementation, the choice of the pivot is done naively

by selecting the value in the middle before partitioning.

This type of selection can result in very unbalanced

partitions, hence why more advanced heuristics have

been proposed in the past. As an example, the pivot

can be selected by taking a median from several values.

Algorithm 1: Quicksort

Input: array: an array to sort. length: the size of array.

Output: array: the array sorted.

1 function QS(array, length)

2 QS core(array, 0, length-1)

3 function QS core(array, left, right)

4 if left < right then

5 // Naive method, select value in the middle

6 pivot idx = ((right-left)/2) + left

7 swap(array[pivot idx], array[right])

8 partition bound = partition(array, left, right, array[right])

9 swap(array[partition bound], array[right])

10 QS core(array, left, partition bound-1)

11 QS core(array, partition bound+1, right)

12 end

13 function partition(array, left, right, pivot value)

14 for idx read ← left to right do

15 if array[idx read] ¡ pivot value then

16 swap(array[idx read], array[left])

17 left += 1

18 end

19 end

20 return left;

2.1.2. GNU std::sort Implementation (STL)

The worst case complexity of QS makes it no longer

suitable to be used as the standard C++ sort. In

fact, a complexity of O(n log n) in average was required

until year 2003 [6], but it is now a worst case limit [7]

that a pure QS implementation cannot guarantee.

Consequently, the current implementation is a 3-part

hybrid sorting algorithm [8] i.e. it relies on 3 different

algorithms. First, the algorithm uses an introsort [9] to

a maximum depth of 2 × log

2

n (introsort is a hybrid of

quicksort and heap sort) to obtain small partitions that

are then sorted using an insertion sort.

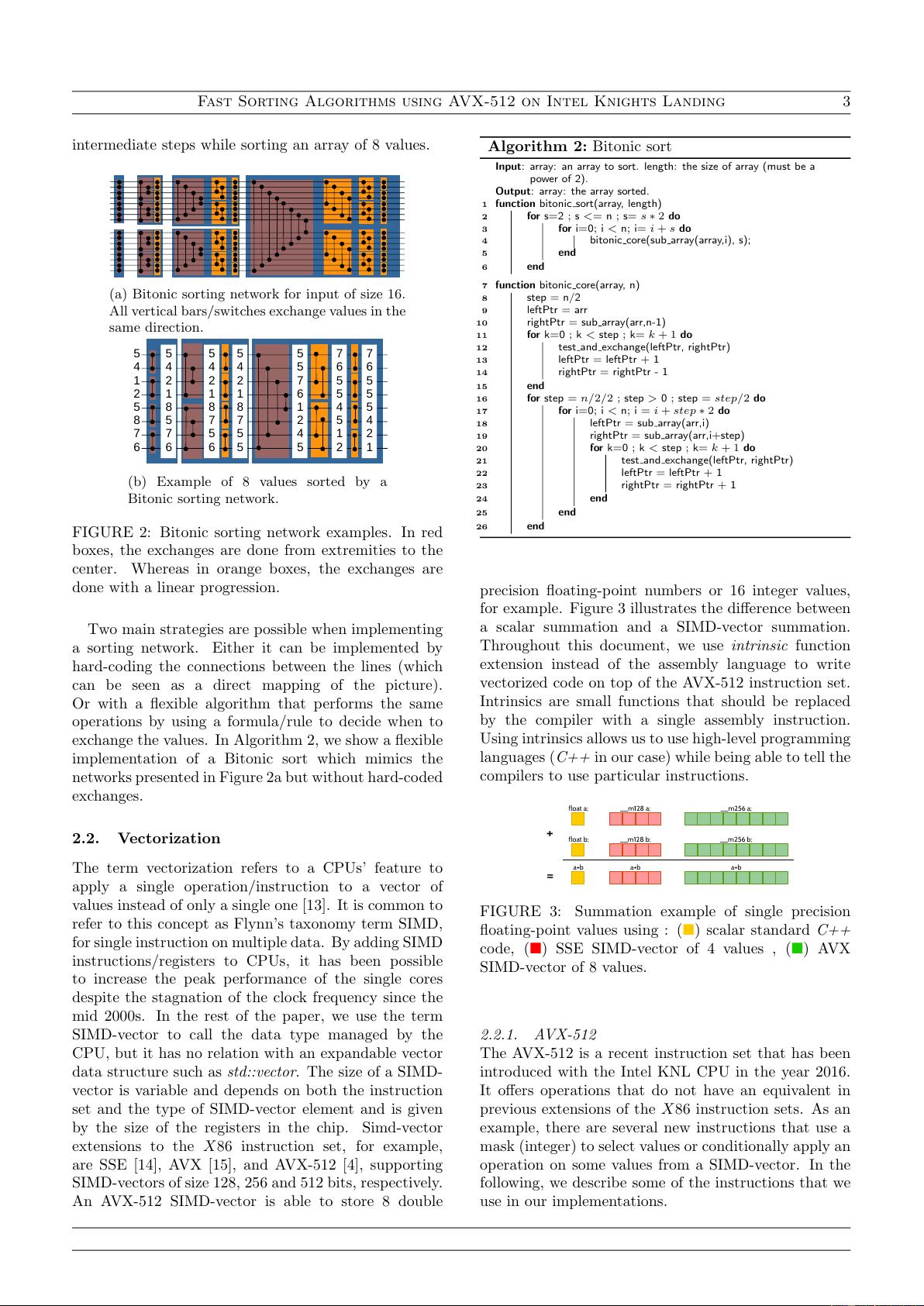

2.1.3. Bitonic Sorting Network

In computer science, a sorting network is an abstract

description of how to sort values from a fixed-size array;

how the values are compared and exchanged. A sorting

network can be represented graphically having each

input value as an horizontal lines and each compare and

exchange unit as a vertical connection between those

lines. It exists various sorting networks in the literature,

but we concentrate our description on the Bitonic sort

from [10]. This network is easy to implement and has an

algorithm complexity of O(n log(n)

2

). Moreover, it has

shown good performance on parallel computers [11] and

GPUs [12]. Figure 2a shows a Bitonic sorting network

to process 16 values. A sorting network can be seen as a

time-line where input values are transfered from left to

right and exchanged if needed at each vertical bar. We

show such execution in Figure 2b where we print the

Fast Sorting Algorithms using AVX-512 on Intel Knights Landing 3

intermediate steps while sorting an array of 8 values.

(a) Bitonic sorting network for input of size 16.

All vertical bars/switches exchange values in the

same direction.

5

4

1

2

5

8

7

6

5

4

2

1

6

6

0

5

5

4

2

1

6

6

5

0

5

4

2

1

8

5

7

6

5

4

2

1

8

7

5

6

5

4

2

1

8

7

5

5

5

5

7

6

1

2

4

5

7

6

5

5

4

5

1

2

7

6

5

5

5

4

2

1

(b) Example of 8 values sorted by a

Bitonic sorting network.

FIGURE 2: Bitonic sorting network examples. In red

boxes, the exchanges are done from extremities to the

center. Whereas in orange boxes, the exchanges are

done with a linear progression.

Two main strategies are possible when implementing

a sorting network. Either it can be implemented by

hard-coding the connections between the lines (which

can be seen as a direct mapping of the picture).

Or with a flexible algorithm that performs the same

operations by using a formula/rule to decide when to

exchange the values. In Algorithm 2, we show a flexible

implementation of a Bitonic sort which mimics the

networks presented in Figure 2a but without hard-coded

exchanges.

2.2. Vectorization

The term vectorization refers to a CPUs’ feature to

apply a single operation/instruction to a vector of

values instead of only a single one [13]. It is common to

refer to this concept as Flynn’s taxonomy term SIMD,

for single instruction on multiple data. By adding SIMD

instructions/registers to CPUs, it has been possible

to increase the peak performance of the single cores

despite the stagnation of the clock frequency since the

mid 2000s. In the rest of the paper, we use the term

SIMD-vector to call the data type managed by the

CPU, but it has no relation with an expandable vector

data structure such as std::vector. The size of a SIMD-

vector is variable and depends on both the instruction

set and the type of SIMD-vector element and is given

by the size of the registers in the chip. Simd-vector

extensions to the X86 instruction set, for example,

are SSE [14], AVX [15], and AVX-512 [4], supporting

SIMD-vectors of size 128, 256 and 512 bits, respectively.

An AVX-512 SIMD-vector is able to store 8 double

Algorithm 2: Bitonic sort

Input: array: an array to sort. length: the size of array (must be a

power of 2).

Output: array: the array sorted.

1 function bitonic sort(array, length)

2 for s=2 ; s <= n ; s= s ∗ 2 do

3 for i=0; i < n; i= i + s do

4 bitonic core(sub array(array,i), s);

5 end

6 end

7 function bitonic core(array, n)

8 step = n/2

9 leftPtr = arr

10 rightPtr = sub array(arr,n-1)

11 for k=0 ; k < step ; k= k + 1 do

12 test and exchange(leftPtr, rightPtr)

13 leftPtr = leftPtr + 1

14 rightPtr = rightPtr - 1

15 end

16 for step = n/2/2 ; step > 0 ; step = step/2 do

17 for i=0; i < n; i = i + step ∗ 2 do

18 leftPtr = sub array(arr,i)

19 rightPtr = sub array(arr,i+step)

20 for k=0 ; k < step ; k= k + 1 do

21 test and exchange(leftPtr, rightPtr)

22 leftPtr = leftPtr + 1

23 rightPtr = rightPtr + 1

24 end

25 end

26 end

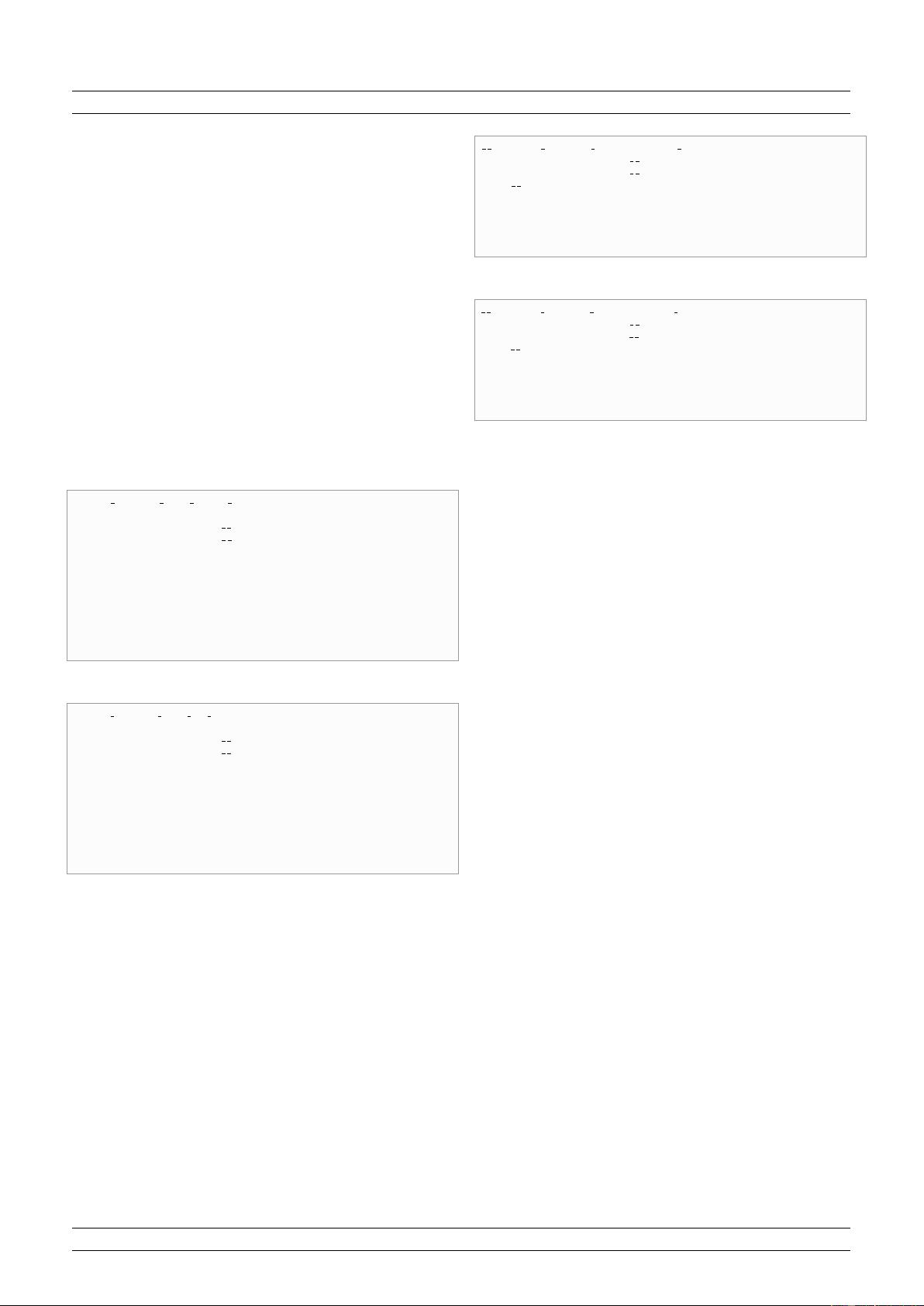

precision floating-point numbers or 16 integer values,

for example. Figure 3 illustrates the difference between

a scalar summation and a SIMD-vector summation.

Throughout this document, we use intrinsic function

extension instead of the assembly language to write

vectorized code on top of the AVX-512 instruction set.

Intrinsics are small functions that should be replaced

by the compiler with a single assembly instruction.

Using intrinsics allows us to use high-level programming

languages (C++ in our case) while being able to tell the

compilers to use particular instructions.

float a; __m128 a;

__m256 a;

float b; __m128 b; __m256 b;

a+b a+b a+b

+

=

FIGURE 3: Summation example of single precision

floating-point values using : () scalar standard C++

code, () SSE SIMD-vector of 4 values , () AVX

SIMD-vector of 8 values.

2.2.1. AVX-512

The AVX-512 is a recent instruction set that has been

introduced with the Intel KNL CPU in the year 2016.

It offers operations that do not have an equivalent in

previous extensions of the X86 instruction sets. As an

example, there are several new instructions that use a

mask (integer) to select values or conditionally apply an

operation on some values from a SIMD-vector. In the

following, we describe some of the instructions that we

use in our implementations.

4 B. Bramas

Load/set/store. As in previous instruction sets, AVX-

512 has instructions to load a contiguous block of values

from main memory and to transform it into a SIMD-

vector (load), fill a SIMD-vector with a given value (set)

and move back a SIMD-vector into memory (store).

Store some. A new operation allows to save only

some values from a SIMD-vector into memory

(vpcmpd/vcmppd). The values are saved contiguously.

This is a major improvement because without this

instruction, several operations are needed to obtain the

same result. For example, to save some values from a

SIMD-vector v at address p in memory, one possibility

is to load the current values from p into a SIMD-vector

v

0

, permute the values in v to move the values to store

at the beginning, merge v and v

0

, and finally save the

resulting vector. The pseudo-code in Figure 4 describes

the results obtained with this store-some instruction.

1 void mm512 cmp epi32 mask (

2 in t ∗ pt r ,

3 mas k16 msk ,

4 m5 1 2i v a l u e s ) {

5 o f f s e t = 0 ;

6 fo r ( id x from 0 t o 15) {

7 i f ( msk AND s h i f t ( 1 , i d x ) ) {

8 p tr [ o f f s e t ] = v a l u e s [ i dx ] ;

9 o f f s e t += 1 ;

10 }

11 }

12 }

13

Store some (integer)

1 void mm512 cmp pd mask (

2 double ∗ p t r ,

3 mask 8 msk ,

4 m512d v a l u e s ) {

5 o f f s e t = 0 ;

6 fo r ( id x from 0 t o 7) {

7 i f ( msk AND s h i f t ( 1 , i d x ) ) {

8 p tr [ o f f s e t ] = v a l u e s [ i dx ] ;

9 o f f s e t += 1 ;

10 }

11 }

12 }

13

Store some (double)

FIGURE 4: AVX-512 store-some behavior for an

integer and a double floating-point vectors.

Vector permutation. Permuting the values inside

a vector was possible since AVX/AVX2 using

permutevar8x32 (instruction vperm(d,ps)). This in-

struction allows to re-order the values inside a SIMD-

vector using a second integer array which contains the

permutation indexes. AVX-512 also has this instruction

on its own SIMD-vector, and we synthetize its behavior

in Figure 5.

Min/Max. The min/max operations (vpmaxsd/vp-

minsd/vmaxpd/vminpd ) return a SIMD-vector where

1 m5 1 2i mm5 12 p ermu tex var e pi3 2 (

2 m5 1 2i permIdxs ,

3 m5 1 2i v a l u e s ) {

4 m5 1 2i r e s ;

5 fo r ( id x from 0 t o 15)

6 r e s [ i d x ] = v a l u e s [ permIdxs [ i d x ] ] ;

7 return r e s ;

8 }

9

Permute (integer)

1 m512d mm512 permutexvar pd (

2 m5 1 2i permIdxs ,

3 m512d v a l u e s ) {

4 m512d r e s ;

5 fo r ( id x from 0 t o 7)

6 r e s [ i d x ] = v a l u e s [ permIdxs [ i d x ] ] ;

7 return r e s ;

8 }

9

Permute (double)

FIGURE 5: AVX-512 permute behavior for an integer

and a double floating-point vectors.

each value correspond to the minimum/maximum value

of the two input vectors at the same position (they do

not return a single scalar as the global minimum/max-

imum among all the values). Such instructions exist in

SSE/SSE2/AVX too.

Comparison. In AVX-512, the value returned by a

test/comparison (vpcmpd/vcmppd) is a mask (integer)

and not a SIMD-vector of integers as it was in

SSE/AVX. Therefore, it is easy to modify and work

directly on the mask with arithmetic and binary

operations for scalar integers. The behavior of the

comparison is shown in Figure 6, where the mask is

filled with bits from the comparison results. AVX-512

provides several instructions that use this type of mask

like the conditional selection for instance.

Conditional selection. Among the mask-based instruc-

tions, the mask move (vmovdqa32/vmovapd) allows to

select values between two vectors using a mask. The be-

havior is show in Figure 7, where a value is taken from

the second vector where the mask is false and from the

first vector otherwise. Achieving the same result was

possible in previous instruction set only using several

operations and not one dedicated instruction.

2.3. Vectorized Sorting Algorithms

The literature on sorting and vectorized sorting

implementations is immense. Therefore, we only

cite here some of the studies that we consider most

connected to our work.

The sorting technique from [16] tries to remove

branches and improves the prediction of a scalar sort,

and they show a speedup by a factor of 2 against the

STL (the implementation of the STL at that time was

剩余15页未读,继续阅读

资源评论

weixin_38682279

- 粉丝: 9

- 资源: 889

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜最新资源

- HITK0203MP-VB一款N-Channel沟道SOT23的MOSFET晶体管参数介绍与应用说明

- HITK0202MP-VB一款N-Channel沟道SOT23的MOSFET晶体管参数介绍与应用说

- 电子电气工程师使用的单位和符号

- HITK0201MP-VB一款N-Channel沟道SOT23的MOSFET晶体管参数介绍与应用说明

- MyBatis动态SQL:构建灵活查询的利器.md

- HITJ0303MP-VB一款P-Channel沟道SOT23的MOSFET晶体管参数介绍与应用说明

- tesseract安装包

- 1_32陀螺仪舵机.zip

- HITJ0302MP-VB一款P-Channel沟道SOT23的MOSFET晶体管参数介绍与应用说明

- XILINXFPGA源码PCIExpress标准概述

资源上传下载、课程学习等过程中有任何疑问或建议,欢迎提出宝贵意见哦~我们会及时处理!

点击此处反馈

安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功