没有合适的资源?快使用搜索试试~ 我知道了~

class variable. All of the data in Figure 1.1 constitutes “training data,” so th

资源详情

资源评论

资源推荐

Chapter 1

C4.5

Naren Ramakrishnan

Contents

1.1 Introduction ..............................................................1

1.2 Algorithm Description ....................................................3

1.3 C4.5 Features .............................................................7

1.3.1 Tree Pruning ......................................................7

1.3.2 Improved Use of Continuous Attributes ............................8

1.3.3 Handling Missing Values ..........................................9

1.3.4 Inducing Rulesets ................................................ 10

1.4 Discussion on Available Software Implementations ...................... 10

1.5 Two Illustrative Examples ............................................... 11

1.5.1 Golf Dataset ..................................................... 11

1.5.2 Soybean Dataset ................................................. 12

1.6 Advanced Topics ........................................................ 13

1.6.1 Mining from Secondary Storage .................................. 13

1.6.2 Oblique Decision Trees .......................................... 13

1.6.3 Feature Selection ................................................ 13

1.6.4 Ensemble Methods .............................................. 14

1.6.5 Classification Rules .............................................. 14

1.6.6 Redescriptions ................................................... 15

1.7 Exercises ............................................................... 15

References ................................................................... 17

1.1 Introduction

C4.5 [30] is a suite of algorithms for classification problems in machine learning and

data mining. It is targeted at supervised learning: Given an attribute-valued dataset

where instances are described by collections of attributes and belong to one of a set

of mutually exclusive classes, C4.5 learns a mapping from attribute values to classes

that can be applied to classify new, unseen instances. For instance, see Figure 1.1

where rows denote specific days, attributes denote weather conditions on the given

day, and the class denotes whether the conditions are conducive to playing golf.

Thus, each row denotes an instance, described by values for attributes such as Out-

look (a ternary-valued random variable) Temperature (continuous-valued), Humidity

1

© 2009 by Taylor & Francis Group, LLC

2 C4.5

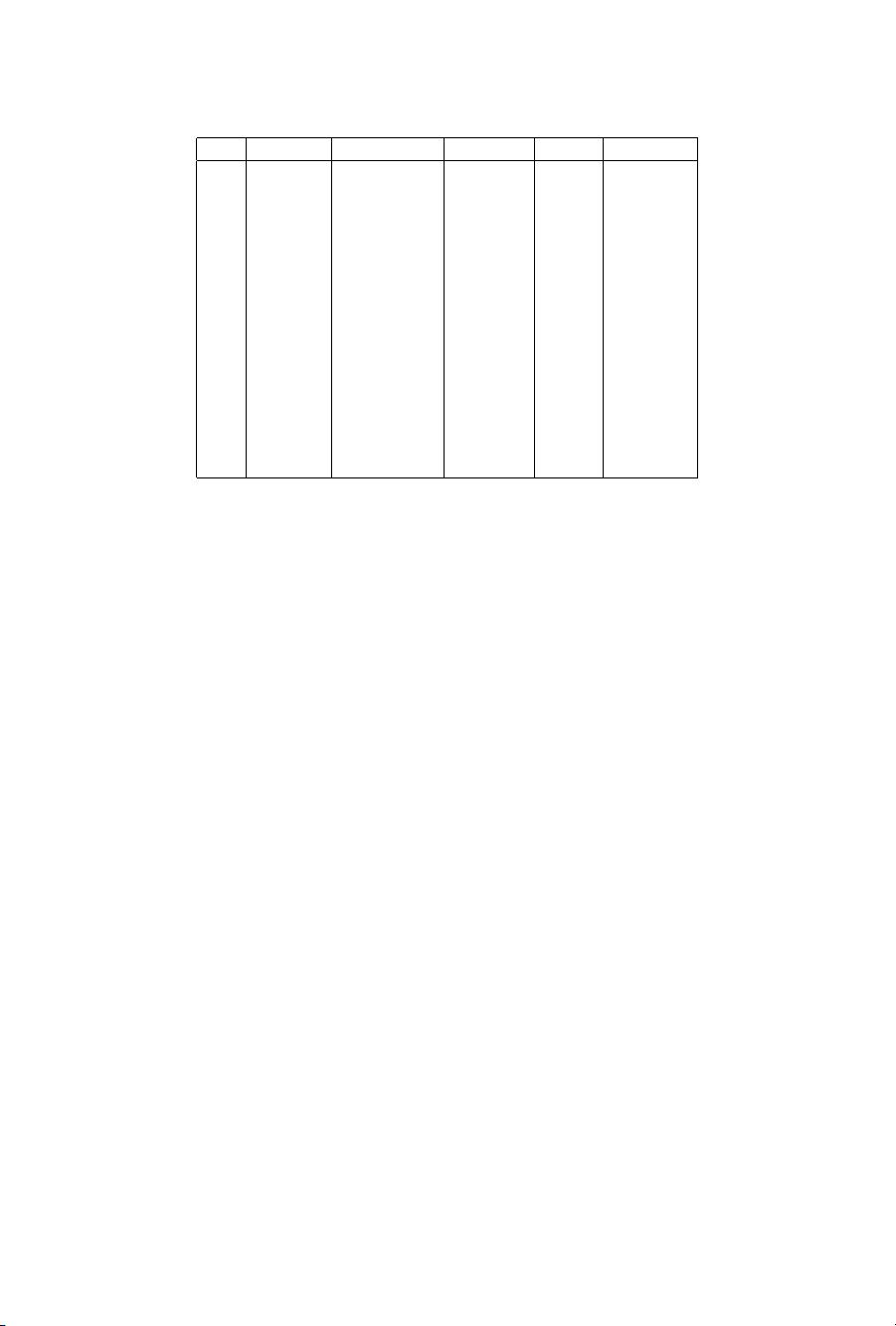

Day Outlook Temperature Humidity Windy Play Golf?

1 Sunny 85 85 False No

2 Sunny 80 90 True No

3 Overcast 83 78 False Yes

4 Rainy 70 96 False Yes

5 Rainy 68 80 False Yes

6 Rainy 65 70 True No

7 Overcast 64 65 True Yes

8 Sunny 72 95 False No

9 Sunny 69 70 False Yes

10 Rainy 75 80 False Yes

11 Sunny 75 70 True Yes

12 Overcast 72 90 True Yes

13 Overcast 81 75 False Yes

14 Rainy 71 80 True No

Figure 1.1 Example dataset input to C4.5.

(also continuous-valued), and Windy (binary), and the class is the Boolean PlayGolf?

class variable. All of the data in Figure 1.1 constitutes “training data,” so that the

intent is to learn a mapping using this dataset and apply it on other, new instances

that present values for only the attributes to predict the value for the class random

variable.

C4.5, designed by J. Ross Quinlan, is so named because it is a descendant of the

ID3 approach to inducing decision trees [25], which in turn is the third incarnation in

a series of “iterative dichotomizers.” A decision tree is a series of questions systemat-

ically arranged so that each question queries an attribute (e.g., Outlook) and branches

based on the value of the attribute. At the leaves of the tree are placed predictions of

the class variable (here, PlayGolf?). A decision tree is hence not unlike the series of

troubleshooting questions you might find in your car’s manual to help determine what

could be wrong with the vehicle. In addition to inducing trees, C4.5 can also restate its

trees in comprehensible rule form. Further, the rule postpruning operations supported

by C4.5 typically result in classifiers that cannot quite be restated as a decision tree.

The historical lineage of C4.5 offers an interesting study into how different sub-

communities converged on more or less like-minded solutions to classification. ID3

was developed independently of the original tree induction algorithm developed by

Friedman [13], which later evolved into CART [4] with the participation of Breiman,

Olshen, and Stone. But, from the numerous references to CART in [30], the design

decisions underlying C4.5 appear to have been influenced by (to improve upon) how

CART resolved similar issues, such as procedures for handling special types of at-

tributes. (For this reason, due to the overlap in scope, we will aim to minimize with

the material covered in the CART chapter, Chapter 10, and point out key differences

at appropriate junctures.) In [25] and [36], Quinlan also acknowledged the influence

of the CLS (Concept Learning System [16]) framework in the historical development

© 2009 by Taylor & Francis Group, LLC

1.2 Algorithm Description 3

of ID3 and C4.5. Today, C4.5 is superseded by the See5/C5.0 system, a commercial

product offered by Rulequest Research, Inc.

The fact that two of the top 10 algorithms are tree-based algorithms attests to

the widespread popularity of such methods in data mining. Original applications of

decision trees were in domains with nominal valued or categorical data but today

they span a multitude of domains with numeric, symbolic, and mixed-type attributes.

Examples include clinical decision making, manufacturing, document analysis, bio-

informatics, spatial data modeling (geographic information systems), and practically

any domain where decision boundaries between classes can be captured in terms of

tree-like decompositions or regions identified by rules.

1.2 Algorithm Description

C4.5 is not one algorithm but rather a suite of algorithms—C4.5, C4.5-no-pruning,

and C4.5-rules—with many features. We present the basic C4.5 algorithm first and

the special features later.

The generic description of how C4.5 works is shown in Algorithm 1.1. All tree

induction methods begin with a root node that represents the entire, given dataset and

recursively split the data into smaller subsets by testing for a given attribute at each

node. The subtrees denote the partitions of the original dataset that satisfy specified

attribute value tests. This process typically continues until the subsets are “pure,” that

is, all instances in the subset fall in the same class, at which time the tree growing is

terminated.

Algorithm 1.1 C4.5(D)

Input: an attribute-valued dataset D

1: Tree = {}

2:

if D is “pure” OR other stopping criteria met then

3: terminate

4: end if

5: for all attribute a ∈ D do

6: Compute information-theoretic criteria if we split on a

7: end for

8: a

best

= Best attribute according to above computed criteria

9: Tree = Create a decision node that tests a

best

in the root

10: D

v

= Induced sub-datasets from D based on a

best

11: for all D

v

do

12: Tree

v

= C4.5(D

v

)

13:

Attach Tree

v

to the corresponding branch of Tree

14: end for

15: return Tree

© 2009 by Taylor & Francis Group, LLC

4 C4.5

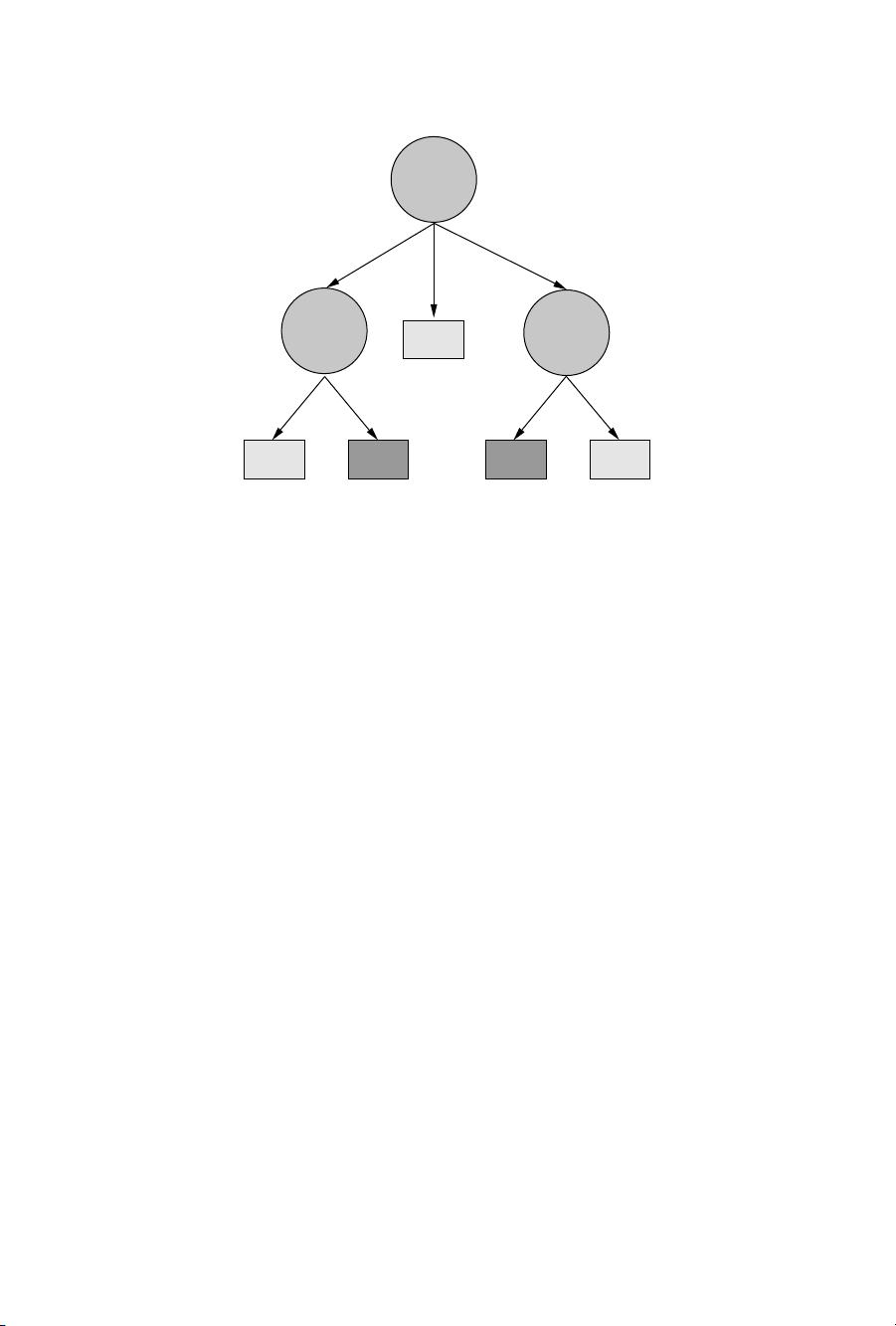

Yes

Yes

Yes

No No

Outlook

Humidity

Windy

Sunny Rainy

Overcast

>75<=75 FalseTrue

Figure 1.2 Decision tree induced by C4.5 for the dataset of Figure 1.1.

Figure 1.1 presents the classical “golf” dataset, which is bundled with the C4.5

installation. As stated earlier, the goal is to predict whether the weather conditions

on a particular day are conducive to playing golf. Recall that some of the features are

continuous-valued while others are categorical.

Figure 1.2 illustrates the tree induced by C4.5 using Figure 1.1 as training data

(and the default options). Let us look at the various choices involved in inducing such

trees from the data.

r

What types of tests are possible? As Figure 1.2 shows, C4.5 is not restricted

to considering binary tests, and allows tests with two or more outcomes. If the

attribute is Boolean, the test induces two branches. If the attribute is categorical,

the test is multivalued, but different values can be grouped into a smaller set of

options with one class predicted for each option. If the attribute is numerical,

then the tests are again binary-valued, and of the form {≤ θ?,> θ?}, where θ

is a suitably determined threshold for that attribute.

r

How are tests chosen? C4.5 uses information-theoretic criteria such as gain

(reduction in entropy of the class distribution due to applying a test) and

gain ratio (a way to correct for the tendency of gain to favor tests with many

outcomes). The default criterion is gain ratio. At each point in the tree-growing,

the test with the best criteria is greedily chosen.

r

How are test thresholds chosen? As stated earlier, for Boolean and categorical

attributes, the test values are simply the different possible instantiations of that

attribute. For numerical attributes, the threshold is obtained by sorting on that

attribute and choosing the split between successive values that maximize the

criteria above. Fayyad and Irani [10] showed that not all successive values need

to be considered. For two successive values v

i

and v

i+1

of a continuous-valued

© 2009 by Taylor & Francis Group, LLC

1.2 Algorithm Description 5

attribute, if all instances involving v

i

and all instances involving v

i+1

belong to

the same class, then splitting between them cannot possibly improve informa-

tion gain (or gain ratio).

r

How is tree-growing terminated? A branch from a node is declared to lead

to a leaf if all instances that are covered by that branch are pure. Another way

in which tree-growing is terminated is if the number of instances falls below a

specified threshold.

r

Howareclass labelsassignedto the leaves?Themajority class of the instances

assigned to the leaf is taken to be the class prediction of that subbranch of the

tree.

The above questions are faced by any classification approach modeled after trees and

similar, or other reasonable, decisions are made by most tree induction algorithms.

The practical utility of C4.5, however, comes from the next set of features that build

upon the basic tree induction algorithm above. But before we present these features,

it is instructive to instantiate Algorithm 1.1 for a simple dataset such as shown in

Figure 1.1.

We will work out in some detail how the tree of Figure 1.2 is induced from

Figure 1.1. Observe how the first attribute chosen for a decision test is the Outlook

attribute. To see why, let us first estimate the entropy of the class random variable

(PlayGolf?). This variable takes two values with probability 9/14 (for “Yes”) and

5/14 (for “No”). The entropy of a class random variable that takes on c values with

probabilities p

1

, p

2

,...,p

c

is given by:

c

i=1

−p

i

log

2

p

i

The entropy of PlayGolf? is thus

−(9/14) log

2

(9/14) − (5/14) log

2

(5/14)

or 0.940. This means that on average 0.940 bits must be transmitted to communicate

information about the PlayGolf? random variable. The goal of C4.5 tree induction is

to ask the right questions so that this entropy is reduced. We consider each attribute in

turn to assess the improvement in entropy that it affords. For a given random variable,

say Outlook, the improvement in entropy, represented as Gain(Outlook), is calculated

as:

Entropy(PlayGolf? in D) −

v

|D

v

|

|D|

Entropy(PlayGolf? in D

v

)

where v is the set of possible values (in this case, three values for Outlook), D denotes

the entire dataset, D

v

is the subset of the dataset for which attribute Outlook has that

value, and the notation |·|denotes the size of a dataset (in the number of instances).

This calculation will show that Gain(Outlook) is 0.940−0.694 = 0.246. Similarly,

we can calculate that Gain(Windy) is 0.940 −0.892 = 0.048. Working out the above

calculations for the other attributes systematically will reveal that Outlook is indeed

© 2009 by Taylor & Francis Group, LLC

剩余215页未读,继续阅读

普通网友

- 粉丝: 17

- 资源: 314

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功

评论0