Chinese Named Entity Recognition 2


Uploaded 2022-08-03 12:05:20 · 536 KB PDF · 11 pages


Chinese NER Using Lattice LSTM

Yue Zhang∗ and Jie Yang∗

Singapore University of Technology and Design

yue_zhang@sutd.edu.sg

jie_yang@mymail.sutd.edu.sg

Abstract

We investigate a lattice-structured LSTM model for Chinese NER, which encodes a sequence of input characters as well as all potential words that match a lexicon. Compared with character-based methods, our model explicitly leverages word and word sequence information. Compared with word-based methods, lattice LSTM does not suffer from segmentation errors. Gated recurrent cells allow our model to choose the most relevant characters and words from a sentence for better NER results. Experiments on various datasets show that lattice LSTM outperforms both word-based and character-based LSTM baselines, achieving the best results.

1 Introduction

As a fundamental task in information extraction, named entity recognition (NER) has received constant research attention over the recent years. The task has traditionally been solved as a sequence labeling problem, where entity boundary and category labels are jointly predicted. The current state-of-the-art for English NER has been achieved by using LSTM-CRF models (Lample et al., 2016; Ma and Hovy, 2016; Chiu and Nichols, 2016; Liu et al., 2018) with character information being integrated into word representations.

Chinese NER is correlated with word segmentation. In particular, named entity boundaries are also word boundaries. One intuitive way of performing Chinese NER is to perform word segmentation first, before applying word sequence labeling. The segmentation → NER pipeline, however, can suffer from error propagation, since NEs are an important source of out-of-vocabulary (OOV) words in segmentation, and incorrectly segmented entity boundaries lead to NER errors. This problem can be severe in the open domain since cross-domain word segmentation remains an unsolved problem (Liu and Zhang, 2012; Jiang et al., 2013; Liu et al., 2014; Qiu and Zhang, 2015; Chen et al., 2017; Huang et al., 2017). It has been shown that character-based methods outperform word-based methods for Chinese NER (He and Wang, 2008; Liu et al., 2010; Li et al., 2014).

Figure 1: Word character lattice. [Characters 南 (South), 京 (Capital), 市 (City), 长 (Long), 江 (River), 大 (Big), 桥 (Bridge); lexicon words 南京 (Nanjing), 南京市 (Nanjing City), 市长 (mayor), 长江 (Yangtze River), 大桥 (Bridge) and 长江大桥 (Yangtze River Bridge); one reading is marked “Person?”.]

∗ Equal contribution.

One drawback of character-based NER, however, is that explicit word and word sequence information, which can be potentially useful, is not fully exploited. To address this issue, we integrate latent word information into a character-based LSTM-CRF by representing lexicon words from the sentence using a lattice-structured LSTM.

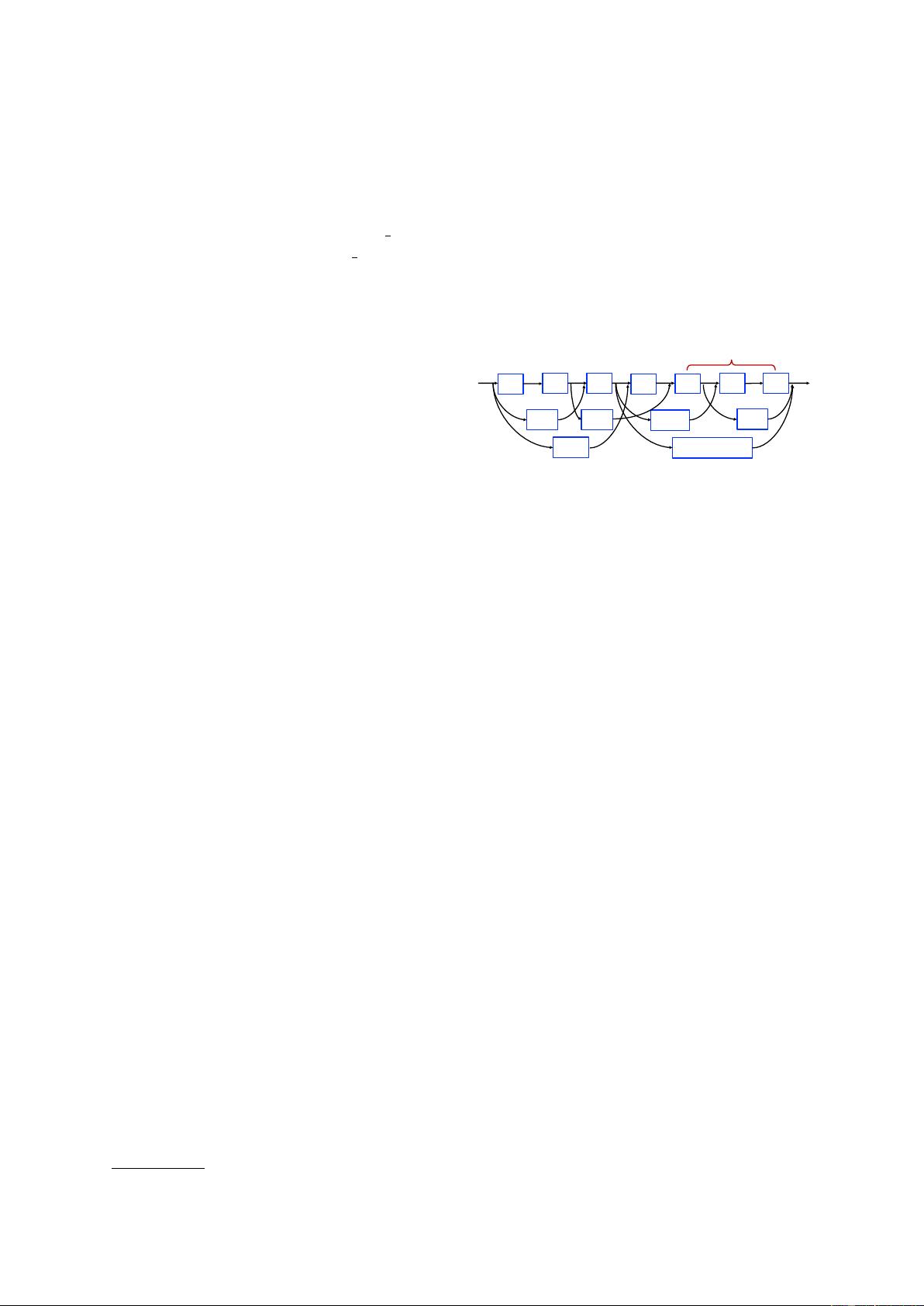

As shown in Figure 1, we construct a word-character lattice by matching a sentence with a large automatically obtained lexicon. As a result, word sequences such as “长江大桥 (Yangtze River Bridge)”, “长江 (Yangtze River)” and “大桥 (Bridge)” can be used to disambiguate potentially relevant named entities in a context, such as the person name “江大桥 (Daqiao Jiang)”.

Since there are an exponential number of word-

character paths in a lattice, we leverage a lattice

LSTM structure for automatically controlling in-

formation flow from the beginning of the sentence

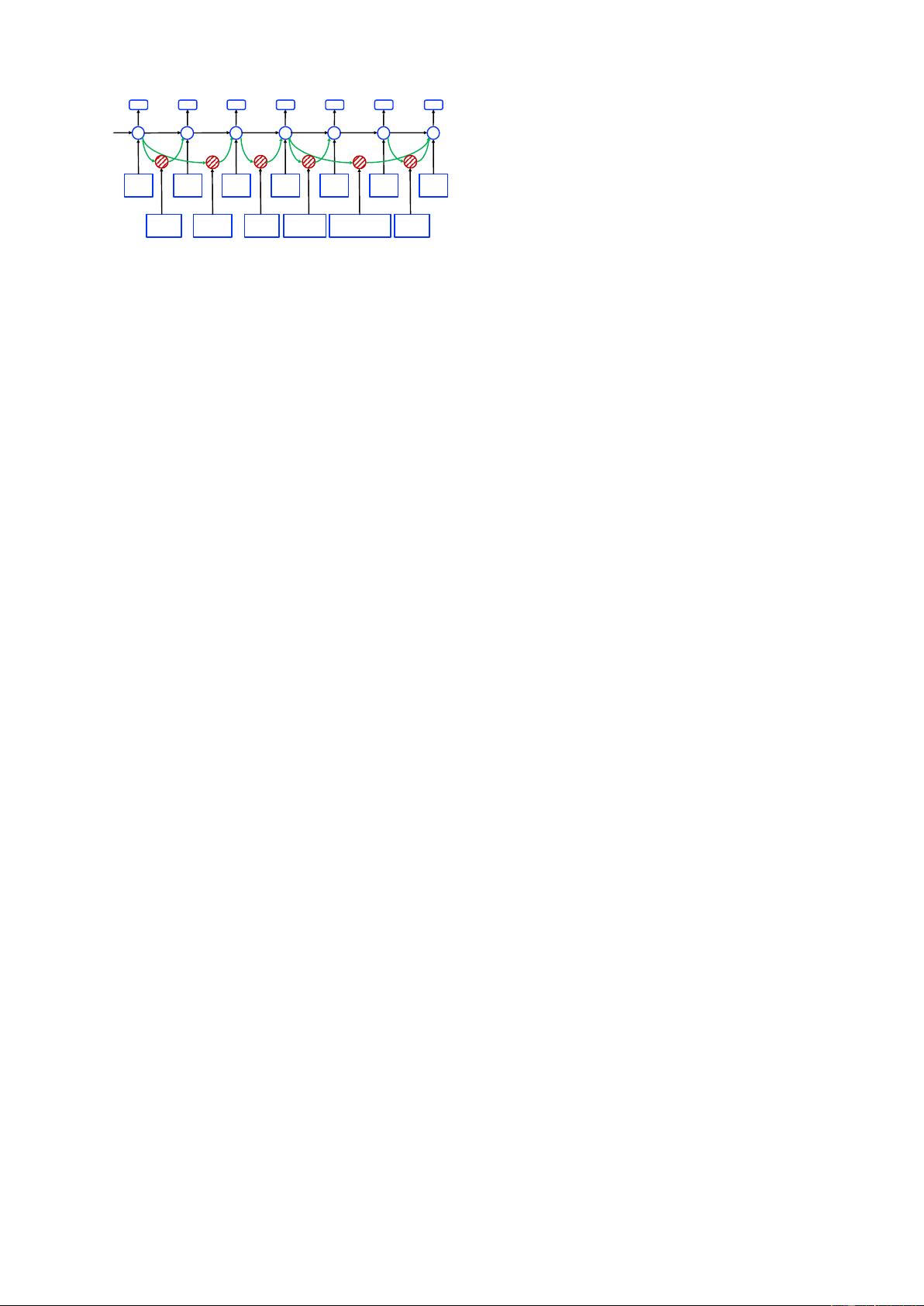

to the end. As shown in Figure 2, gated cells

are used to dynamically route information from

arXiv:1805.02023v4 [cs.CL] 5 Jul 2018

南

South

c

h

京

Capital

c

h

市

City

c

h

长

Long

c

h

江

River

c

h

大

Big

c

h

桥

Bridge

c

h

长江

Yangtze River

市长

mayor

南京

Nanji ng

长江大桥

Yangtze River Bridge

南京市

Nanji ng City

大桥

Bridge

Figure 2: Lattice LSTM structure.

different paths to each character. Trained over

NER data, the lattice LSTM can learn to find more

useful words from context automatically for bet-

ter NER performance. Compared with character-

based and word-based NER methods, our model

has the advantage of leveraging explicit word in-

formation over character sequence labeling with-

out suffering from segmentation error.
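To make the exponential number of word-character paths concrete, the sketch below counts them with a simple dynamic program. The helper `count_lattice_paths` is hypothetical and only illustrates the size of the search space; the model described above routes information through gated cells instead of enumerating paths. With the Figure 1 words, the seven-character sentence already has 19 paths, and if every substring were a lexicon word, an $m$-character sentence would have $2^{m-1}$.

```python
from typing import Set


def count_lattice_paths(sentence: str, lexicon: Set[str]) -> int:
    """Count the word-character paths through the lattice of `sentence`.

    A path goes from the start to the end of the sentence, consuming either a
    single character or a multi-character lexicon word at each step.
    ways[e] holds the number of paths that cover the first e characters.
    """
    m = len(sentence)
    ways = [0] * (m + 1)
    ways[0] = 1
    for e in range(1, m + 1):
        ways[e] = ways[e - 1]          # step over the single character c_e
        for b in range(e - 1):         # spans sentence[b:e] of length >= 2
            if sentence[b:e] in lexicon:
                ways[e] += ways[b]     # step over a whole lexicon word
    return ways[m]
```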

Results show that our model significantly outperforms both character sequence labeling models and word sequence labeling models using LSTM-CRF, giving the best results over a variety of Chinese NER datasets across different domains. Our code and data are released at https://github.com/jiesutd/LatticeLSTM.

2 Related Work

Our work is in line with existing methods using neural networks for NER. Hammerton (2003) attempted to solve the problem using a unidirectional LSTM, which was among the first neural models for NER. Collobert et al. (2011) used a CNN-CRF structure, obtaining results competitive with the best statistical models. dos Santos et al. (2015) used a character CNN to augment a CNN-CRF model. Most recent work leverages an LSTM-CRF architecture. Huang et al. (2015) use hand-crafted spelling features; Ma and Hovy (2016) and Chiu and Nichols (2016) use a character CNN to represent spelling characteristics; Lample et al. (2016) use a character LSTM instead. Our baseline word-based system adopts a structure similar to this line of work.

Character sequence labeling has been the dominant approach for Chinese NER (Chen et al., 2006b; Lu et al., 2016; Dong et al., 2016). There have been explicit discussions comparing statistical word-based and character-based methods for the task, showing that the latter is empirically a superior choice (He and Wang, 2008; Liu et al., 2010; Li et al., 2014). We find that with proper representation settings, the same conclusion holds for neural NER. In addition, lattice LSTM is a better choice than both word LSTM and character LSTM.

How to better leverage word information for Chinese NER has received continued research attention (Gao et al., 2005), where segmentation information has been used as soft features for NER (Zhao and Kit, 2008; Peng and Dredze, 2015; He and Sun, 2017a), and joint segmentation and NER has been investigated using dual decomposition (Xu et al., 2014), multi-task learning (Peng and Dredze, 2016), etc. Our work is in line with these efforts but focuses on neural representation learning. While the above methods can be affected by segmented training data and segmentation errors, our method does not require a word segmentor. The model is also conceptually simpler, since it does not consider multi-task settings.

External sources of information have been leveraged for NER. In particular, lexicon features have been widely used (Collobert et al., 2011; Passos et al., 2014; Huang et al., 2015; Luo et al., 2015). Rei (2017) uses a word-level language modeling objective to augment NER training, performing multi-task learning over large raw text. Peters et al. (2017) pretrain a character language model to enhance word representations. Yang et al. (2017b) exploit cross-domain and cross-lingual knowledge via multi-task learning. We leverage external data by pretraining a word embedding lexicon over large automatically segmented texts, while semi-supervised techniques such as language modeling are orthogonal to our lattice LSTM model and can also be used with it.

Lattice-structured RNNs can be viewed as a natural extension of tree-structured RNNs (Tai et al., 2015) to DAGs. They have been used to model motion dynamics (Sun et al., 2017), dependency-discourse DAGs (Peng et al., 2017), as well as speech tokenization lattices (Sperber et al., 2017) and multi-granularity segmentation outputs (Su et al., 2017) for NMT encoders. Compared with existing work, our lattice LSTM differs in both motivation and structure. For example, being designed for character-centric lattice-LSTM-CRF sequence labeling, it has recurrent cells but not hidden vectors for words. To our knowledge, we are the first to design a novel lattice LSTM representation for mixed characters and lexicon words, and the first to use a word-character lattice for segmentation-free Chinese NER.

3 Model

We follow the best-performing English NER models (Huang et al., 2015; Ma and Hovy, 2016; Lample et al., 2016), using LSTM-CRF as the main network structure. Formally, denote an input sentence as $s = c_1, c_2, \ldots, c_m$, where $c_j$ denotes the $j$th character. $s$ can further be seen as a word sequence $s = w_1, w_2, \ldots, w_n$, where $w_i$ denotes the $i$th word in the sentence, obtained using a Chinese segmentor. We use $t(i, k)$ to denote the index $j$ for the $k$th character in the $i$th word in the sentence. Take the sentence in Figure 1 for example. If the segmentation is “南京市 长江大桥” and indices start from 1, then $t(2, 1) = 4$ (长) and $t(1, 3) = 3$ (市). We use the BIOES tagging scheme (Ratinov and Roth, 2009) for both word-based and character-based NER tagging.
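The index function $t(i, k)$ and the BIOES scheme can be illustrated with a short sketch. The helper names `char_index` and `bioes_tags` are hypothetical, and labeling both 南京市 and 长江大桥 as LOC is an illustrative assumption (Figure 3(a) tags 南京市 as B-LOC, I-LOC, E-LOC).

```python
from typing import List, Tuple


def char_index(words: List[str], i: int, k: int) -> int:
    """t(i, k): 1-based sentence index j of the k-th character of the i-th word."""
    return sum(len(w) for w in words[: i - 1]) + k


def bioes_tags(m: int, entities: List[Tuple[int, int, str]]) -> List[str]:
    """Turn entity spans (begin, end, type), 1-based and inclusive, into BIOES tags.

    B/I/E mark the beginning, inside and end of a multi-character entity,
    S marks a single-character entity, and O marks characters outside any entity.
    """
    tags = ["O"] * m
    for b, e, label in entities:
        if b == e:
            tags[b - 1] = f"S-{label}"
        else:
            tags[b - 1] = f"B-{label}"
            for j in range(b + 1, e):
                tags[j - 1] = f"I-{label}"
            tags[e - 1] = f"E-{label}"
    return tags
```

With `words = ["南京市", "长江大桥"]`, `char_index(words, 2, 1)` returns 4 and `char_index(words, 1, 3)` returns 3, matching the example in the text.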

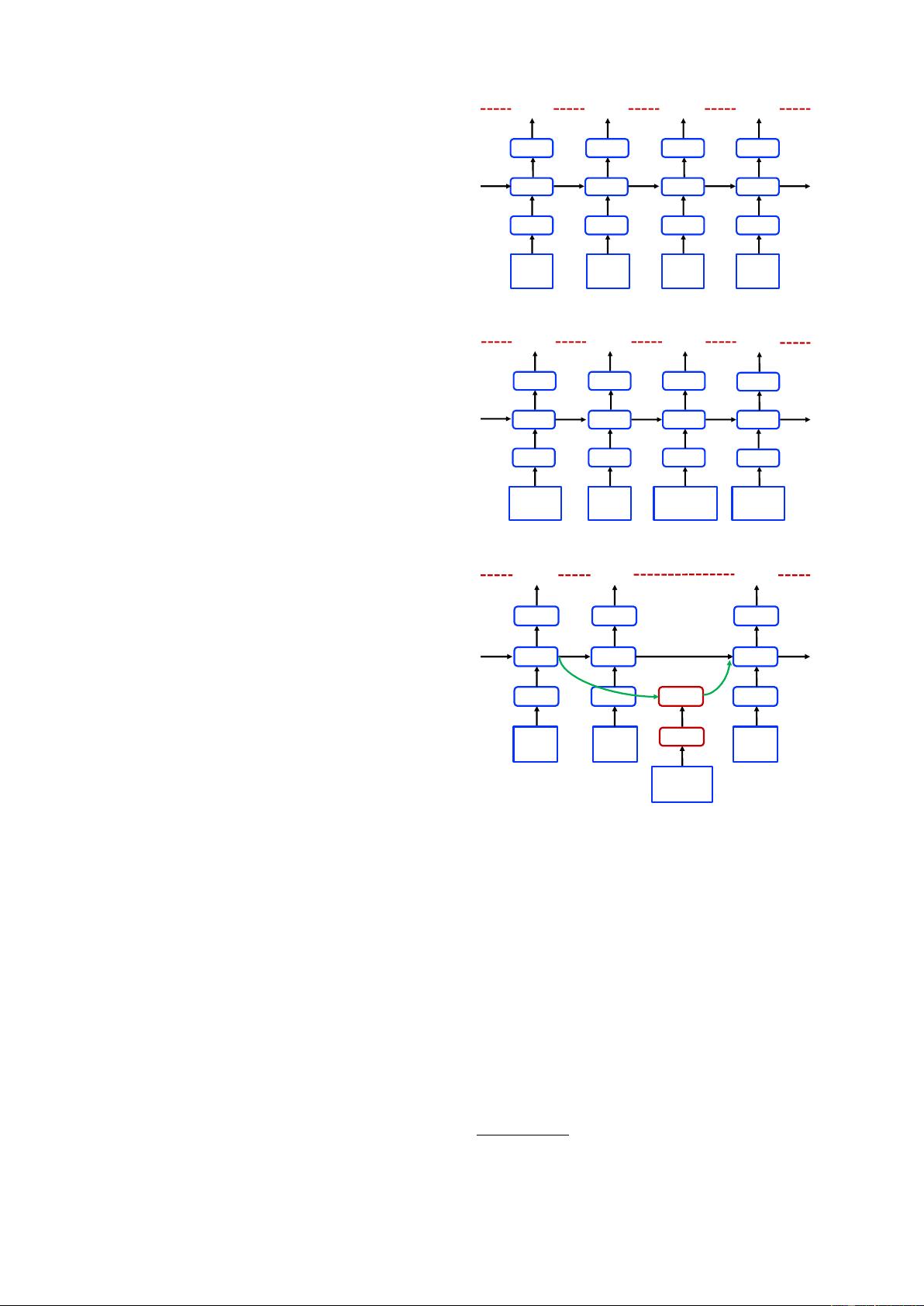

3.1 Character-Based Model

The character-based model is shown in Figure 3(a). It uses an LSTM-CRF model on the character sequence $c_1, c_2, \ldots, c_m$. Each character $c_j$ is represented using

$$x^c_j = e^c(c_j) \tag{1}$$

$e^c$ denotes a character embedding lookup table.

A bidirectional LSTM (structurally the same as Eq. 11) is applied to $x^c_1, x^c_2, \ldots, x^c_m$ to obtain $\overrightarrow{h}^c_1, \overrightarrow{h}^c_2, \ldots, \overrightarrow{h}^c_m$ and $\overleftarrow{h}^c_1, \overleftarrow{h}^c_2, \ldots, \overleftarrow{h}^c_m$ in the left-to-right and right-to-left directions, respectively, with two distinct sets of parameters. The hidden vector representation of each character is:

$$h^c_j = [\overrightarrow{h}^c_j; \overleftarrow{h}^c_j] \tag{2}$$
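Eq. (2) only concatenates the outputs of two independently parameterized LSTMs. The sketch below shows this with a plain, untrained LSTM in pure Python. The gate layout is the common textbook formulation and is an assumption here, since Eq. 11 is not part of this excerpt; the class and function names are hypothetical, and random weights stand in for learned parameters.

```python
import math
import random
from typing import Dict, List

Vector = List[float]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


class LSTM:
    """A minimal one-direction LSTM over lists of floats, with random (untrained) weights."""

    def __init__(self, input_dim: int, hidden_dim: int, seed: int) -> None:
        rng = random.Random(seed)
        self.hidden_dim = hidden_dim
        # One weight matrix and bias per gate: input (i), forget (f), output (o)
        # and candidate (g), each applied to the concatenation [x_j; h_{j-1}].
        self.W: Dict[str, List[Vector]] = {
            gate: [[rng.uniform(-0.1, 0.1) for _ in range(input_dim + hidden_dim)]
                   for _ in range(hidden_dim)]
            for gate in "ifog"
        }
        self.b: Dict[str, Vector] = {gate: [0.0] * hidden_dim for gate in "ifog"}

    def _affine(self, gate: str, v: Vector) -> Vector:
        return [sum(w * x for w, x in zip(row, v)) + bias
                for row, bias in zip(self.W[gate], self.b[gate])]

    def run(self, xs: List[Vector]) -> List[Vector]:
        h = [0.0] * self.hidden_dim
        c = [0.0] * self.hidden_dim
        outputs = []
        for x in xs:
            v = x + h  # [x_j; h_{j-1}]
            i = [sigmoid(z) for z in self._affine("i", v)]
            f = [sigmoid(z) for z in self._affine("f", v)]
            o = [sigmoid(z) for z in self._affine("o", v)]
            g = [math.tanh(z) for z in self._affine("g", v)]
            c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c, i, g)]
            h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]
            outputs.append(h)
        return outputs


def bilstm(xs: List[Vector], hidden_dim: int) -> List[Vector]:
    """Eq. (2): h_j = [forward h_j; backward h_j], one parameter set per direction."""
    forward = LSTM(len(xs[0]), hidden_dim, seed=1).run(xs)
    backward = LSTM(len(xs[0]), hidden_dim, seed=2).run(xs[::-1])[::-1]
    return [fh + bh for fh, bh in zip(forward, backward)]
```

Because the forward half of $h^c_j$ depends only on $x^c_1, \ldots, x^c_j$, changing the last input leaves the forward half of $h^c_1$ unchanged while its backward half moves.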

A standard CRF model (Eq. 17) is used on $h^c_1, h^c_2, \ldots, h^c_m$ for sequence labeling.
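Eq. 17 lies outside this excerpt, so the following is a generic linear-chain CRF decoder rather than the paper's exact formulation. Given per-character emission scores (assumed here to be computed from $h^c_j$, for example by a linear layer) and label-transition scores, Viterbi search finds the highest-scoring tag sequence. Transitions that BIOES forbids, such as B followed by O, are left out of the table and scored as $-\infty$. Entity types are dropped from the labels for brevity, training (the forward algorithm) is not shown, and all names are hypothetical.

```python
from typing import Dict, List, Tuple

Transitions = Dict[Tuple[str, str], float]


def viterbi(emissions: List[Dict[str, float]], transitions: Transitions,
            labels: List[str]) -> List[str]:
    """Return the highest-scoring label sequence under a linear-chain CRF.

    score(y) = sum_j emissions[j][y_j] + sum_{j>1} transitions[(y_{j-1}, y_j)].
    Pairs missing from `transitions` are treated as forbidden (score -inf).
    """
    neg_inf = float("-inf")
    best = [{y: emissions[0][y] for y in labels}]  # best[j][y]: best prefix score ending in y
    backpointers: List[Dict[str, str]] = []
    for j in range(1, len(emissions)):
        scores: Dict[str, float] = {}
        pointers: Dict[str, str] = {}
        for y in labels:
            prev, score = max(
                ((p, best[-1][p] + transitions.get((p, y), neg_inf)) for p in labels),
                key=lambda pair: pair[1],
            )
            scores[y] = score + emissions[j][y]
            pointers[y] = prev
        best.append(scores)
        backpointers.append(pointers)
    # Follow the back-pointers from the best final label.
    y = max(labels, key=lambda label: best[-1][label])
    path = [y]
    for pointers in reversed(backpointers):
        y = pointers[y]
        path.append(y)
    return path[::-1]


# Untyped BIOES labels and their legal successors.
LABELS = ["B", "I", "E", "S", "O"]
ALLOWED = {"B": "IE", "I": "IE", "E": "BSO", "S": "BSO", "O": "BSO"}
TRANSITIONS: Transitions = {(p, y): 0.0 for p, nxt in ALLOWED.items() for y in nxt}

if __name__ == "__main__":
    emissions = [
        {"B": 1.0, "I": 0.0, "E": 0.0, "S": 0.0, "O": 0.8},
        {"B": 0.0, "I": 0.0, "E": 0.0, "S": 0.0, "O": 1.0},
        {"B": 0.0, "I": 0.0, "E": 0.0, "S": 0.0, "O": 1.0},
    ]
    # A greedy per-position argmax would pick B, O, O, which BIOES forbids.
    print(viterbi(emissions, TRANSITIONS, LABELS))  # ['O', 'O', 'O']
```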

• Char + bichar. Character bigrams have been shown to be useful for representing characters in word segmentation (Chen et al., 2015; Yang et al., 2017a). We augment the character-based model with bigram information by concatenating bigram embeddings with character embeddings:

$$x^c_j = [e^c(c_j); e^b(c_j, c_{j+1})] \tag{3}$$

where $e^b$ denotes a character bigram lookup table.

• Char + softword. It has been shown that using segmentation as soft features for character-based NER models can lead to improved performance (Zhao and Kit, 2008; Peng and Dredze, 2016). We augment the character representation with segmentation information by concatenating segmentation label embeddings to character embeddings:

$$x^c_j = [e^c(c_j); e^s(\mathrm{seg}(c_j))] \tag{4}$$

where $e^s$ represents a segmentation label embedding lookup table. $\mathrm{seg}(c_j)$ denotes the segmentation label on the character $c_j$ given by a word segmentor. We use the BMES scheme for repre-

Figure 3: Models.¹ (a) Character-based model: 南 (South), 京 (Capital), 市 (City), 长 (Long) tagged B-LOC, I-LOC, E-LOC, B-LOC, each with an input $x$, cell $c$ and hidden state $h$. (b) Word-based model: 南京 (Nanjing), 市 (City), 长江 (Yangtze River), 大桥 (Bridge) tagged B-LOC, E-LOC, B-LOC, E-LOC, each with an input $x$, cell $c$ and hidden state $h$. (c) Lattice model: the character chain of (a) plus an additional word input $x$ and cell $c$ for the lexicon word 南京市 (Nanjing City).

¹ To keep the figure concise, we (i) do not show gate cells, which use $h_{t-1}$ for calculating $c_t$; and (ii) only show one direction.
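The char + bichar and char + softword variants differ only in what is concatenated to the character embedding of Eq. (1). The sketch below builds both from toy lookup tables filled with random vectors. The `Lookup` class and the function names are hypothetical, and pairing the last character with a `</s>` marker in Eq. (3) is an assumption, since the excerpt does not say how $c_{m+1}$ is handled. `bmes_labels` derives the BMES labels B (begin), M (middle), E (end) and S (single-character word) from a given segmentation.

```python
import random
from typing import Dict, List

Vector = List[float]


class Lookup:
    """Toy embedding table: each key receives a fixed random vector on first use."""

    def __init__(self, dim: int, seed: int) -> None:
        self.dim = dim
        self.rng = random.Random(seed)
        self.table: Dict[str, Vector] = {}

    def __call__(self, key: str) -> Vector:
        if key not in self.table:
            self.table[key] = [self.rng.uniform(-1.0, 1.0) for _ in range(self.dim)]
        return self.table[key]


def bmes_labels(words: List[str]) -> List[str]:
    """seg(c_j) for every character: B/M/E inside a multi-character word, S for a one-character word."""
    labels: List[str] = []
    for w in words:
        if len(w) == 1:
            labels.append("S")
        else:
            labels.extend(["B"] + ["M"] * (len(w) - 2) + ["E"])
    return labels


def bichar_inputs(sentence: str, e_c: Lookup, e_b: Lookup) -> List[Vector]:
    """Eq. (3): x^c_j = [e^c(c_j); e^b(c_j, c_{j+1})]."""
    inputs = []
    for j, ch in enumerate(sentence):
        # Assumption: the final character is paired with an end-of-sentence marker.
        nxt = sentence[j + 1] if j + 1 < len(sentence) else "</s>"
        inputs.append(e_c(ch) + e_b(ch + nxt))  # list + list concatenates
    return inputs


def softword_inputs(sentence: str, words: List[str], e_c: Lookup, e_s: Lookup) -> List[Vector]:
    """Eq. (4): x^c_j = [e^c(c_j); e^s(seg(c_j))], where `words` is a segmentor's output."""
    seg = bmes_labels(words)
    if len(seg) != len(sentence):
        raise ValueError("the segmentation must cover the sentence exactly")
    return [e_c(ch) + e_s(label) for ch, label in zip(sentence, seg)]
```

For the segmentation “南京市 长江大桥”, `bmes_labels` yields B, M, E, B, M, M, E, so characters with the same label share the same segmentation embedding.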
