没有合适的资源?快使用搜索试试~ 我知道了~

2019-稀疏三元压缩解决通信开销,考虑下行通信和noniid-Efficient Federated Learning fro

需积分: 0 1 下载量 6 浏览量

2022-08-04

15:09:37

上传

评论

收藏 1.11MB PDF 举报

温馨提示

试读

17页

2019-稀疏三元压缩解决通信开销,考虑下行通信和noniid-Efficient Federated Learning from Non-IID Data1

资源详情

资源评论

资源推荐

SATTLER ET AL. – ROBUST AND COMMUNICATION-EFFICIENT FEDERATED LEARNING FROM NON-IID DATA 1

Robust and Communication-Efficient Federated

Learning from Non-IID Data

Felix Sattler, Simon Wiedemann, Klaus-Robert Müller*, Member, IEEE, and Wojciech Samek*, Member, IEEE

Abstract—Federated Learning allows multiple parties to jointly

train a deep learning model on their combined data, without

any of the participants having to reveal their local data to a

centralized server. This form of privacy-preserving collaborative

learning however comes at the cost of a significant communication

overhead during training. To address this problem, several

compression methods have been proposed in the distributed

training literature that can reduce the amount of required

communication by up to three orders of magnitude. These

existing methods however are only of limited utility in the

Federated Learning setting, as they either only compress the

upstream communication from the clients to the server (leaving

the downstream communication uncompressed) or only perform

well under idealized conditions such as iid distribution of the

client data, which typically can not be found in Federated

Learning. In this work, we propose Sparse Ternary Compres-

sion (STC), a new compression framework that is specifically

designed to meet the requirements of the Federated Learning

environment. STC extends the existing compression technique of

top-k gradient sparsification with a novel mechanism to enable

downstream compression as well as ternarization and optimal

Golomb encoding of the weight updates. Our experiments on

four different learning tasks demonstrate that STC distinctively

outperforms Federated Averaging in common Federated Learning

scenarios where clients either a) hold non-iid data, b) use small

batch sizes during training, or where c) the number of clients is

large and the participation rate in every communication round is

low. We furthermore show that even if the clients hold iid data and

use medium sized batches for training, STC still behaves pareto-

superior to Federated Averaging in the sense that it achieves fixed

target accuracies on our benchmarks within both fewer training

iterations and a smaller communication budget. These results

advocate for a paradigm shift in Federated optimization towards

high-frequency low-bitwidth communication, in particular in

bandwidth-constrained learning environments.

Keywords—Deep learning, distributed learning, Federated Learn-

ing, efficient communication, privacy-preserving machine learning.

This work was supported by the Fraunhofer Society through the MPI-FhG

collaboration project “Theory & Practice for Reduced Learning Machines”.

This research was also supported by the German Ministry for Education and

Research as Berlin Big Data Center (01IS14013A) and the Berlin Center for

Machine Learning (01IS18037I). Partial funding by DFG is acknowledged

(EXC 2046/1, project-ID: 390685689). This work was also supported by the

Information & Communications Technology Planning & Evaluation (IITP)

grant funded by the Korea government (No. 2017-0-00451).

F. Sattler, S. Wiedemann and W. Samek are with Fraunhofer Heinrich Hertz

Institute, 10587 Berlin, Germany (e-mail: wojciech.samek@hhi.fraunhofer.de).

K.-R. Müller is with the Technische Universität Berlin, 10587 Berlin,

Germany, with the Max Planck Institute for Informatics, 66123 Saarbrücken,

Germany, and also with the Department of Brain and Cognitive Engineering,

Korea University, Seoul 136-713, South Korea (e-mail: klaus-robert.mueller@tu-

berlin.de).

I. INTRODUCTION

Three major developments are currently transforming the

ways how data is created and processed: First of all, with the

advent of the Internet of Things (IoT), the number of intelligent

devices in the world has rapidly grown in the last couple of

years. Many of these devices are equipped with various sensors

and increasingly potent hardware that allow them to collect

and process data at unprecedented scales [1][2][3].

In a concurrent development deep learning has revolutionized

the ways that information can be extracted from data resources

with groundbreaking successes in areas such as computer

vision, natural language processing or voice recognition among

many others [

4

][

5

][

6

][

7

][

8

][

9

]. Deep learning scales well with

growing amounts of data and it’s astounding successes in recent

times can be at least partly attributed to the availability of very

large datasets for training. Therefore there lays huge potential

in harnessing the rich data provided by IoT devices for the

training and improving of deep learning models [10].

At the same time data privacy has become a growing concern

for many users. Multiple cases of data leakage and misuse in

recent times have demonstrated that the centralized processing

of data comes at a high risk for the end users privacy. As IoT

devices usually collect data in private environments, often even

without explicit awareness of the users, these concerns hold

particularly strong. It is therefore generally not an option to

share this data with a centralized entity that could conduct

training of a deep learning model. In other situations local

processing of the data might be desirable for other reasons

such as increased autonomy of the local agent.

This leaves us facing the following dilemma: How are we

going to make use of the rich combined data of millions of

IoT devices for training deep learning models if this data can

not be stored at a centralized location?

Federated Learning resolves this issue as it allows multiple

parties to jointly train a deep learning model on their combined

data, without any of the participants having to reveal their data

to a centralized server [

10

]. This form of privacy-preserving

collaborative learning is achieved by following a simple three

step protocol illustrated in Fig. 1. In the first step, all partici-

pating clients download the latest master model

W

from the

server. Next, the clients improve the downloaded model, based

on their local training data using stochastic gradient descent

(SGD). Finally, all participating clients upload their locally

improved models

W

i

back to the server, where they are gathered

and aggregated to form a new master model (in practice, weight

updates

∆W = W

new

− W

old

can be communicated instead

of full models

W

, which is equivalent as long as all clients

remain synchronized). These steps are repeated until a certain

arXiv:1903.02891v1 [cs.LG] 7 Mar 2019

SATTLER ET AL. – ROBUST AND COMMUNICATION-EFFICIENT FEDERATED LEARNING FROM NON-IID DATA 2

Data

Data

Data

Data

Data

Data

(a) (b)

Δ

Δ

Δ

... ...

Client2 ClientnClient2 ClientnClient1 Client1

Client2 ClientnClient1

ServerServer

SGDSGDSGD

download

Data

Data

Data

Data

Data

Data

(a) (b)

Δ

Δ

Δ

... ...

ServerServer

SGDSGDSGD

download

(c)

Δ

Δ

Δ

Server

global

averaging

Data

Data

Data

...

upload

(c)

Δ

Δ

Δ

Server

global

averaging

Data

Data

Data

...

upload

Client2 ClientnClient2 ClientnClient1 Client1

Client2 ClientnClient1

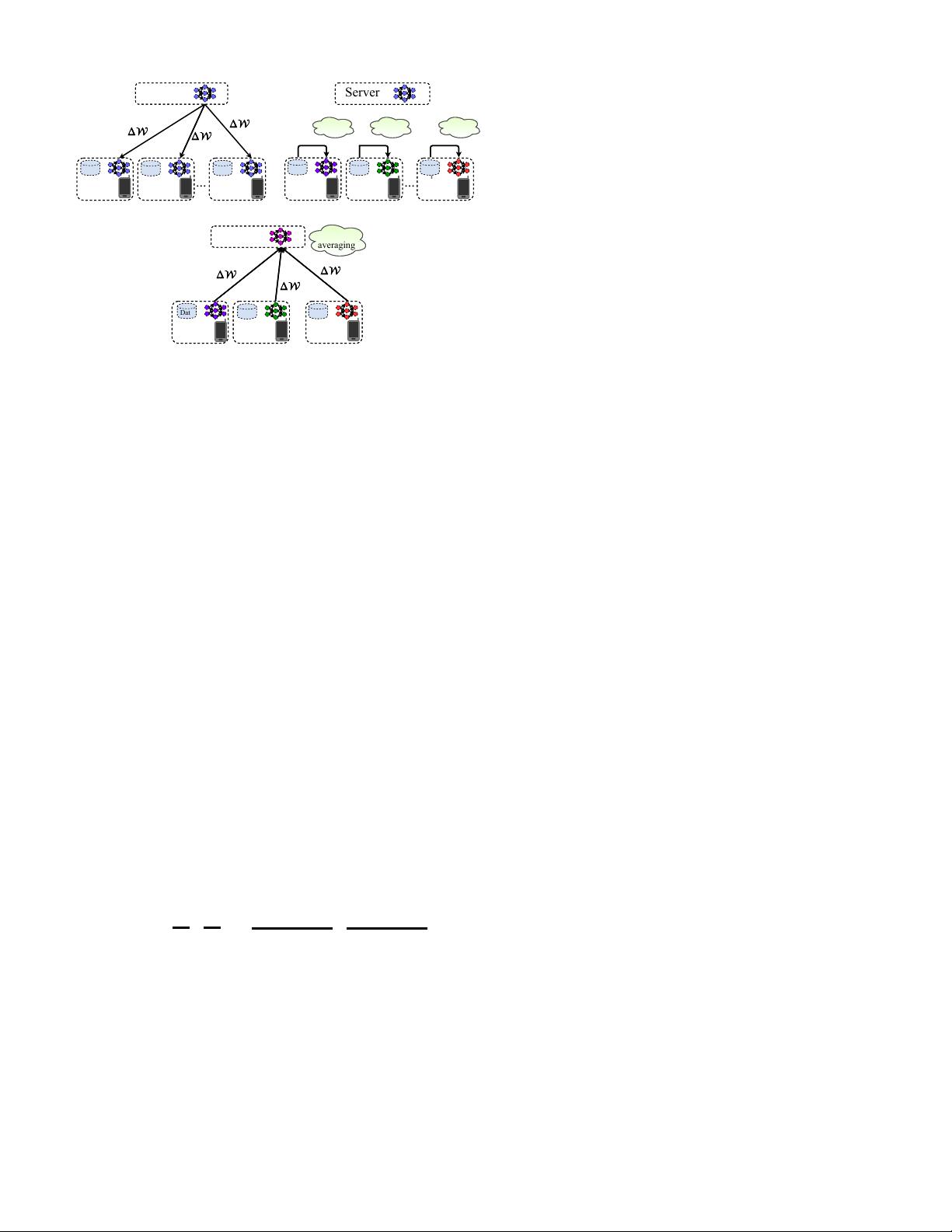

Fig. 1: Federated Learning with a parameter server. Illustrated

is one communication round of distributed SGD: a) Clients

synchronize with the server. b) Clients compute a weight update

independently based on their local data. c) Clients upload their

local weight updates to the server, where they are averaged to

produce the new master model.

convergence criterion is satisfied. Observe, that when following

this protocol, training data never leaves the local devices as

only model updates are communicated. Although it has been

shown that in adversarial settings information about the training

data can still be inferred from these updates [

11

], additional

mechanisms such as homomorphic encryption of the updates

[

12

][

13

] or differentially private training [

14

] can be applied

to fully conceal any information about the local data.

A major issue in Federated Learning is the massive commu-

nication overhead that arises from sending around the model

updates. When naively following the protocol described above,

every participating client has to communicate a full model

update during every training iteration. Every such update is of

the same size as the trained model, which can be in the range of

gigabytes for modern architectures with millions of parameters

[

15

][

16

]. Over the course of multiple hundred thousands of

training iterations on big datasets the total communication

for every client can easily grow to more than a petabyte

[

17

]. Consequently, if communication bandwidth is limited

or communication is costly (naive) Federated Learning can

become unproductive or even completely unfeasible.

The total amount of bits that have to be uploaded and

downloaded by every client during training is given by

b

up/down

∈ O(N

iter

× f

| {z }

# updates

× |W| × (H(∆W

up/down

) + η)

| {z }

update size

) (1)

where

N

iter

is the total number of training iterations (forward-

backward passes) performed by every client,

f

is the communi-

cation frequency,

|W|

is the size of the model,

H(∆W

up/down

)

is the entropy of the weight updates exchanged during upload

and download respectively, and

η

is the inefficiency of the

encoding, i.e. the difference between the true update size and

the minimal update size (which is given by the entropy). If we

assume the size of the model and number of training iterations

to be fixed (e.g. because we want to achieve a certain accuracy

on a given task), this leaves us with three options to reduce

communication: We can a) reduce the communication frequency

f

, b) reduce the entropy of the weight updates

H(∆W

up/down

)

via lossy compression schemes and/or c) use more efficient

encodings to communicate the weight updates, thus reducing

η.

II. CHALLENGES OF THE FEDERATED LEARNING

ENVIRONMENT

Before we can consider ways to reduce the amount of

communication we first have to take into account the unique

characteristics, which distinguish Federated Learning from other

distributed training settings such as Parallel Training (compare

also with [

10

]). In Federated Learning the distribution of both

training data and computational resources is a fundamental and

fixed property of the learning environment. This entails the

following challenges:

Unbalanced and non-IID data:

As the training data present

on the individual clients is collected by the clients themselves

based on their local environment and usage pattern, both the

size and the distribution of the local datasets will typically vary

heavily between different clients.

Large number of clients:

Federated Learning environments

may constitute of multiple millions of participants [

18

]. Fur-

thermore, as the quality of the collaboratively learned model

is determined by the combined available data of all clients,

collaborative learning environments will have a natural tendency

to grow.

Parameter server:

Once the number of clients grows

beyond a certain threshold, direct communication of weight

updates becomes unfeasible, because the workload for both

communication and aggregation of updates grows linearly with

the number of clients. In Federated Learning it is therefore

unavoidable to communicate via an intermediate parameter

server. This reduces the amount of communication per client

and communication rounds to one single upload of a local

weight update to and one download of the aggregated update

from the server and moves the workload of aggregation away

from the clients. Communicating via a parameter server however

introduces an additional challenge to communication-efficient

distributed training, as now both the upload to the server and

the download from the server need to be compressed in order

to reduce communication time and energy consumption.

Partial participation:

In the general Federated Learning for

IoT setting it can generally not be guaranteed that all clients

participate in every communication round. Devices might loose

their connection, run out of battery or seize to contribute to

the collaborative training for other reasons.

Limited battery and memory:

Mobile and embedded

devices often are not connected to a power grid. Instead

their capacity to run computations is limited by a finite

battery. Performing iterations of stochastic gradient descent is

notoriously expensive for deep neural networks. It is therefore

necessary to keep the number of gradient evaluations per

client as small as possible. Mobile and embedded devices

SATTLER ET AL. – ROBUST AND COMMUNICATION-EFFICIENT FEDERATED LEARNING FROM NON-IID DATA 3

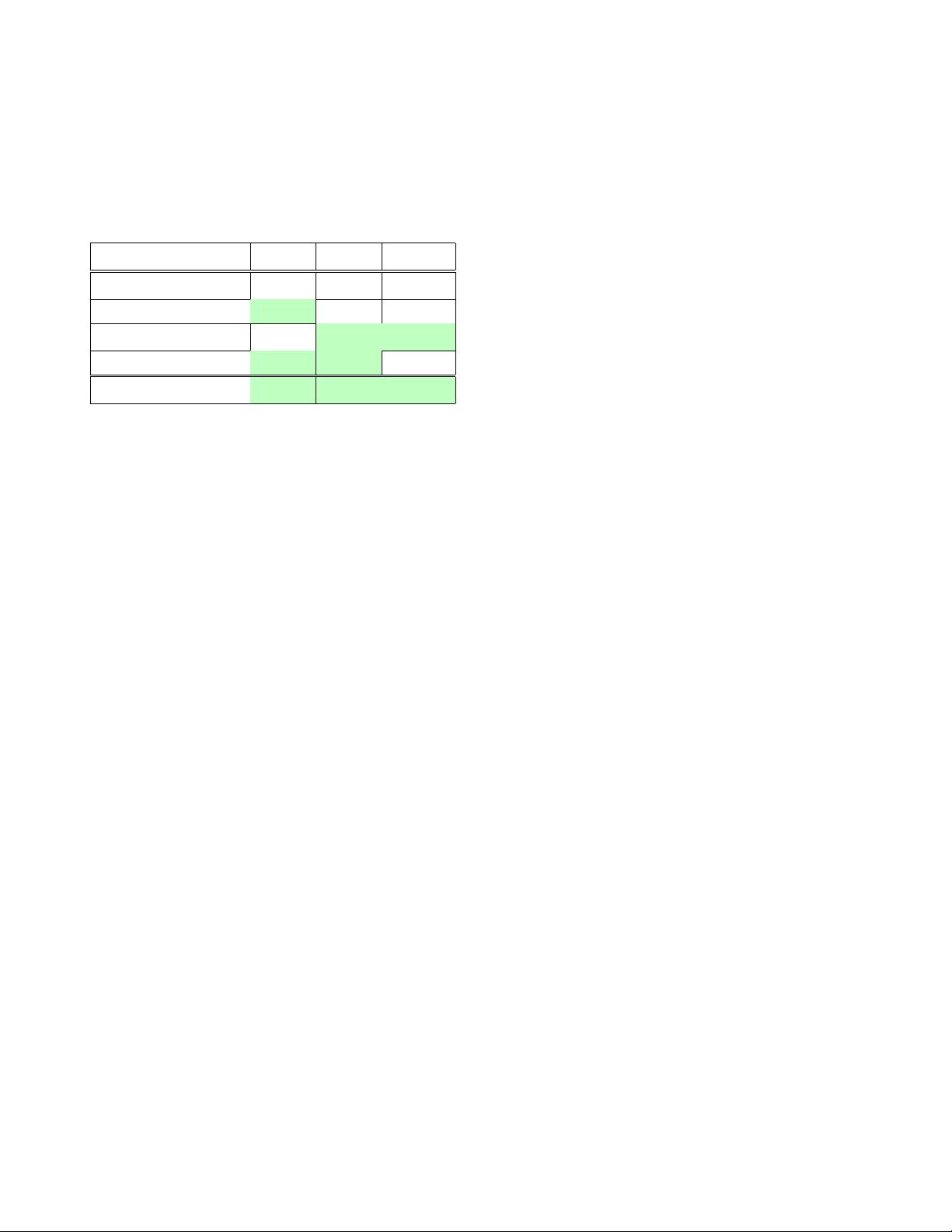

TABLE I: Different methods for communication-efficient

distributed deep learning proposed in the literature. None of

the existing methods satisfies all requirements

(R1)

-

(R3)

of

the Federated Learning environment. We call a method "robust

to non-iid data" if the federated training converges independent

of the local distribution of client data. We call compression

rates greater than

×32

"strong" and those smaller or equal to

×32 "weak".

Method

Downstream

Compression

Compression

Rate

Robust to

NON-IID Data

TernGrad [19], QSGD [20],

ATOMO [21]

NO WEAK NO

signSGD [22] YES WEAK NO

Gradient Dropping [23], DGC [24],

Variance based [25], Strom [26]

NO STRONG YES

Federated Averaging [10] YES STRONG NO

Sparse Ternary

Compression (ours)

YES STRONG YES

also typically have only very limited memory. As the memory

footprint of SGD grows linearly with the batch size, this might

force the devices to train on very small batch sizes.

Based on the above characterization of the Federated Learn-

ing environment we conclude that a communication-efficient

distributed training algorithm for Federated Learning needs to

fulfill the following requirements:

(R1)

It should compress both upstream and downstream

communication.

(R2)

It should be robust to non-iid, small batch sizes and

unbalanced data.

(R3)

It should be robust to large numbers of clients and partial

client participation.

In this work we will demonstrate that none of the existing

methods proposed for communication-efficient Federated Learn-

ing satisfies all of these requirements (cf. Table I). More

concretely we will show, that the methods which are able

to compress both upstream and downstream communication are

very sensitive to non-iid data distributions, while the methods

which are more robust to this type of data do not compress the

downstream (Section IV). We will then proceed to construct

a new communication protocol that resolves these issues and

meets all requirements

(R1)

-

(R3)

. We will provide extensive

empirical results on four different neural network architectures

and datasets that will demonstrate that our protocol is superior

to existing compression schemes in that it requires both fewer

gradient evaluations and communicated bits to converge to a

given target accuracy (Section VIII). These results also extend

to the iid regime.

III. RELATED WORK

In the broader realm of communication-efficient distributed

deep learning, a wide variety of methods has been proposed

to reduce the amount of communication during the training

process. Using equation

(1)

as a reference, we can organize the

substantial existing research body on communication-efficient

distributed deep learning into three different groups:

Communication delay

methods reduce the communication

frequency

f

. McMahan et al. [

10

] propose Federated Averaging

where instead of communicating after every iteration, every

client performs multiple iterations of SGD to compute a weight

update. The authors observe that on different convolutional and

recurrent neural network architectures communication can be

delayed for up to 100 iterations without significantly affecting

the convergence speed as long as the data is distributed among

the clients in an iid manner. The amount of communication can

be reduced even further with longer delay periods, however

this comes at the cost of an increased number of gradient

evaluations. In a follow-up work Konecny et al. [

27

] combine

this communication delay with random sparsification and

probabilistic quantization. They restrict the clients to learn

random sparse weight updates or force random sparsity on them

afterwards ("structured" vs "sketched" updates) and combine

this sparsification with probabilistic quantization. Their method

however significantly slows down convergence speed in terms

of SGD iterations. Communication delay methods automatically

reduce both upstream and downstream communication and are

proven to work with large numbers of clients and partial client

participation.

Sparsification methods reduce the entropy H(∆W) of the

updates by restricting changes to only a small subset of the

parameters. Strom [

25

] presents an approach (later modified by

[

26

]) in which only gradients with a magnitude greater than

a certain predefined threshold are sent to the server. All other

gradients are accumulated in a residual. This method is shown

to achieve upstream compression rates of up to 3 orders of

magnitude on an acoustic modeling task. In practice however,

it is hard to choose appropriate values for the threshold, as it

may vary a lot for different architectures and even different

layers. To overcome this issue Aji et al. [

23

] instead fix the

sparsity rate and only communicate the fraction

p

entries with

the biggest magnitude of each gradient, while also collecting

all other gradients in a residual. At a sparsity rate of

p = 0.001

their method only slightly degrades the convergence speed and

final accuracy of the trained model. Lin et al. [

24

] present

minor modifications to the work of Aji et al. which even close

this small performance gap. Sparsification methods have been

proposed primarily with the intention to speed up parallel

training in the data center. Their convergence properties in

the much more challenging Federated Learning environments

have not yet been investigated. Sparsification methods (in their

existing form) primarily compress the upstream communication,

as the sparsity patterns on the updates from different clients

will generally differ. If the number of participating clients is

greater than the inverse sparsity rate, which can easily be the

case in Federated Learning, the downstream update will not

even be compressed at all.

Dense quantization

methods reduce the entropy of the

weight updates by restricting all updates to a reduced set of

values. Bernstein et al. propose signSGD [

22

], a compression

method with theoretical convergence guarantees on iid data

that quantizes every gradient update to it’s binary sign, thus

SATTLER ET AL. – ROBUST AND COMMUNICATION-EFFICIENT FEDERATED LEARNING FROM NON-IID DATA 4

0 20000 40000

Iterations

0.2

0.4

0.6

0.8

Accuracy

VGG11* @ CIFAR

IID Data

FedAvg

n=100

no comp.

signSGD

sparse top-k

p=0.01

0 20000 40000

Iterations

0.2

0.4

0.6

0.8

VGG11* @ CIFAR

NON-IID Data (2)

0 20000 40000

Iterations

0.2

0.4

0.6

0.8

VGG11* @ CIFAR

NON-IID Data (1)

0 5000 10000

Iterations

0.6

0.7

0.8

0.9

Accuracy

Logistic @ MNIST

IID Data

0 5000 10000

Iterations

0.6

0.7

0.8

0.9

Logistic @ MNIST

NON-IID Data (2)

0 5000 10000

Iterations

0.6

0.7

0.8

0.9

Logistic @ MNIST

NON-IID Data (1)

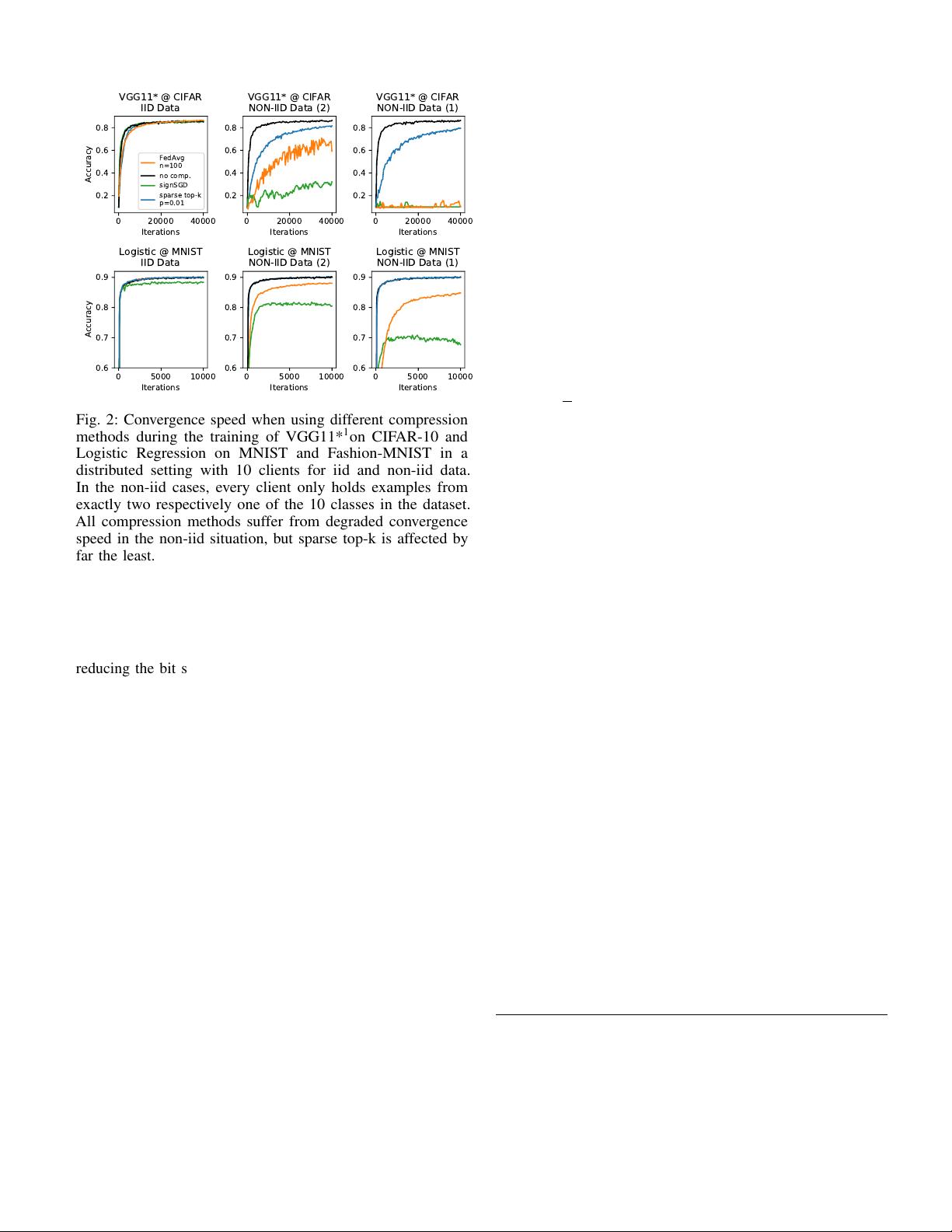

Fig. 2: Convergence speed when using different compression

methods during the training of VGG11*

1

on CIFAR-10 and

Logistic Regression on MNIST and Fashion-MNIST in a

distributed setting with 10 clients for iid and non-iid data.

In the non-iid cases, every client only holds examples from

exactly two respectively one of the 10 classes in the dataset.

All compression methods suffer from degraded convergence

speed in the non-iid situation, but sparse top-k is affected by

far the least.

reducing the bit size per update by a factor of

×32

. signSGD

also incorporates download compression by aggregating the

binary updates from all clients by means of a majority vote.

Other authors propose to stochastically quantize the gradients

during upload in an unbiased way (TernGrad [

19

], QSGD

[

20

], ATOMO [

21

]). These methods are theoretically appealing,

as they inherit the convergence properties of regular SGD

under relatively mild assumptions. However their empirical

performance and compression rates do not match those of

sparsification methods.

Out of all the above listed methods, only Federated Averaging

and signSGD compress both the upstream and downstream

communication. All other methods are of limited utility in the

Federated Learning setting defined in Section II as they leave

the communication from the server to the clients uncompressed.

Notation:

In the following calligraphic

W

will refer to the

entirety of parameters of a neural network, while regular

uppercase

W

refers to one specific tensor of parameters within

W

and lowercase

w

refers to one single scalar parameter of

the network. Arithmetic operations between neural network

parameters are to be understood element-wise.

IV. LIMITATIONS OF EXISTING COMPRESSION METHODS

The related work on efficient distributed deep learning almost

exclusively considers iid data distributions among the clients,

i.e. they assume unbiasedness of the local gradients with respect

to the full-batch gradient according to

E

x∼p

i

[∇

W

l(x, W)] = ∇

W

R(W) ∀i = 1, .., n (2)

where

p

i

is the distribution of data on the

i

-th client and

R(W)

is the empirical risk function over the combined training data.

While this assumption is reasonable for parallel training

where the distribution of data among the clients is chosen by the

practitioner, it is typically not valid in the Federated Learning

setting where we can generally only hope for unbiasedness in

the mean

1

n

n

X

i=1

E

x

i

∼p

i

[∇

W

l(x

i

, W)] = ∇

W

R(W) (3)

while the individual client’s gradients will be biased towards

the local dataset according to

E

x∼p

i

[∇

W

l(x, W)] = ∇

W

R

i

(W) 6= ∇

W

R(W) ∀i = 1, .., n.

(4)

As it violates assumption

(2)

, a non-iid distribution of

the local data renders existing convergence guarantees as

formulated in [

19

][

20

][

29

][

21

] inapplicable and has dramatic

effects on the practical performance of communication-efficient

distributed training algorithms as we will demonstrate in the

following experiments.

A. Preliminary Experiments

We run preliminary experiments with a simplified version of

the well-studied 11-layer VGG11 network [

28

], which we train

on the CIFAR-10 [

30

] dataset in a Federated Learning setup

using 10 clients. For the iid setting we split the training data

randomly into equally sized shards and assign one shard to

every one of the clients. For the "non-iid (

m

)" setting we assign

every client samples from exactly

m

classes of the dataset. The

data splits are non-overlapping and balanced such that every

client ends up with the same number of data points. The detailed

procedure that generates the split of data is described in Section

B of the appendix. We also perform experiments with a simple

logistic regression classifier, which we train on the MNIST

dataset [

31

] under the same setup of the Federated Learning

environment. Both models are trained using momentum SGD.

To make the results comparable, all compression methods use

the same learning rate and batch size.

1

We denote by VGG11* a simplified version of the original VGG11

architecture described in [

28

], where all dropout and batch normalization

layers are removed and the number of convolutional filters and size of all

fully-connected layers is reduced by a factor of 2.

剩余16页未读,继续阅读

士多霹雳酱

- 粉丝: 22

- 资源: 299

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜最新资源

- 学生成绩管理系统-C++版本

- 吉林大学离散数学2笔记.pdf

- 通道处理过程的模拟通常涉及对通道处理机制的理解与实现.txt

- Flume进阶-自定义拦截器jar包

- Dubins曲线算法讲解和在运动规划中的使用.pdf

- 上市公司-股票性质数据-工具变量(民企、国企、央企)2003-2022年.dta

- 上市公司-股票性质数据-工具变量(民企、国企、央企)2003-2022年.xlsx

- Reeds+Shepp曲线算法讲解和实现.pdf

- 毕业设计基于SpringBoot+MyBatisPlus+MySQL+Vue的外卖配送信息系统源代码+数据库

- 词向量(Word Embeddings)是自然语言处理(NLP)领域的一种重要技术.txt

资源上传下载、课程学习等过程中有任何疑问或建议,欢迎提出宝贵意见哦~我们会及时处理!

点击此处反馈

安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功

评论0