Under review as a conference paper at ICLR 2019

POOLING IS NEITHER NECESSARY NOR SUFFICIENT FOR APPROPRIATE DEFORMATION STABILITY IN CNNS

Anonymous authors

Paper under double-blind review

ABSTRACT

Many of our core assumptions about how neural networks operate remain empirically untested. One common assumption is that convolutional neural networks need to be stable to small translations and deformations to solve image recognition tasks. For many years, this stability was baked into CNN architectures by incorporating interleaved pooling layers. Recently, however, interleaved pooling has largely been abandoned. This raises a number of questions: Are our intuitions about deformation stability right at all? Is it important? Is pooling necessary for deformation invariance? If not, how is deformation invariance achieved in its absence? In this work, we rigorously test these questions, and find that deformation stability in convolutional networks is more nuanced than it first appears: (1) Deformation invariance is not a binary property; rather, different tasks require different degrees of deformation stability at different layers. (2) Deformation stability is not a fixed property of a network and is heavily adjusted over the course of training, largely through the smoothness of the convolutional filters. (3) Interleaved pooling layers are neither necessary nor sufficient for achieving the optimal form of deformation stability for natural image classification. (4) Pooling confers too much deformation stability for image classification at initialization, and during training, networks have to learn to counteract this inductive bias. Together, these findings provide new insights into the role of interleaved pooling and deformation invariance in CNNs, and demonstrate the importance of rigorous empirical testing of even our most basic assumptions about the working of neural networks.

1 INTRODUCTION

Within deep learning, a variety of intuitions have been assumed to be common knowledge without empirical verification, leading to recent active debate (Rahimi & Recht, 2017; LeCun, 2017; Sculley et al., 2015; 2018). Nevertheless, many of these core ideas have informed the structure of broad classes of models, with little attempt to rigorously test these assumptions.

In this paper, we seek to address this issue by undertaking a careful, empirical study of one of the foundational intuitions informing convolutional neural networks (CNNs) for visual object recognition: the need to make these models stable to small translations and deformations in the input images. This intuition runs as follows: much of the variability in the visual domain comes from slight changes in view, object position, rotation, size, and non-rigid deformations of (e.g.) organic objects; representations which are invariant to such transformations would (presumably) lead to better performance. This idea is arguably one of the core principles initially responsible for the architectural choices of convolutional filters and interleaved pooling (LeCun et al., 1998; 2015), as well as the deployment of parametric data augmentation strategies during training (Simard et al., 2003). Yet, despite the widespread impact of this idea, the relationship between visual object recognition and deformation stability has not been thoroughly tested, and we do not actually know how modern CNNs realize deformation stability, if they do so at all.

Moreover, for many years, the very success of CNNs on visual object recognition tasks was thought to depend on the interleaved pooling layers that purportedly rendered these models insensitive to small translations and deformations. Despite this reasoning, recent models have largely abandoned interleaved pooling layers, achieving similar or greater success without them (Springenberg et al., 2014; He et al., 2016).

These observations raise several critical questions. Is deformation stability necessary for visual object recognition? If so, how is it achieved in the absence of pooling layers? What role does interleaved pooling play when it is present?

Here, we seek to answer these questions by building a broad class of image deformations, and comparing CNNs’ responses to original and deformed images. While this class of deformations is an artificial one, it is rich and parametrically controllable, includes many commonly used image transformations (affine transforms such as translations, shears, and rotations, as well as thin-plate spline transforms, among others), and provides a useful model for probing how CNNs might respond to natural image deformations. We use these deformations to study CNNs with and without pooling layers, and how their representations change with depth and over the course of training. Our contributions are as follows:

• Networks without pooling are sensitive to deformation at initialization, but ultimately learn representations that are stable to deformation.

• The inductive bias provided by pooling is too strong at initialization, and deformation stability in these networks decreases over the course of training.

• The pattern of deformation stability across layers for trained networks with and without pooling converges to a similar structure.

• Networks both with and without pooling implement and modulate deformation stability largely through the smoothness of learned filters.

More broadly, this work demonstrates that our intuitions as to why neural networks work can often be inaccurate, no matter how reasonable they may seem, and require thorough empirical and theoretical validation.

1.1 PRIOR WORK

Invariances in non-neural models. There is a long history of non-neural computer vision models architecting invariance to deformation. For example, SIFT features are local feature descriptors constructed such that they are invariant to translation, scaling and rotation (Lowe, 1999). In addition, by using blurring, SIFT features become somewhat robust to deformations. Another example is deformable parts models, which contain a single-stage spring-like model of connections between pairs of object parts, giving robustness to translation at a particular scale (Felzenszwalb et al., 2008).

Deformation invariance and pooling. Important early work in neuroscience found that in the visual cortex of cats, there exist special complex-cells which are somewhat insensitive to the precise location of edges (Hubel & Wiesel, 1968). These findings inspired work on the neocognitron, which cascaded locally-deformation-invariant modules into a hierarchy (Fukushima & Miyake, 1982). This, in turn, inspired the use of pooling layers in CNNs (LeCun et al., 1990). Here, pooling was directly motivated as conferring invariance to translations and deformations. For example, LeCun et al. (1990) expressed this as follows: "Each feature extraction in our network is followed by an additional layer which performs a local averaging and a sub-sampling, reducing the resolution of the feature map. This layer introduces a certain level of invariance to distortions and translations." In fact, until recently, pooling was still seen as an essential ingredient in CNNs, allowing for invariance to small shifts and distortions (Simonyan & Zisserman, 2014; He et al., 2016; Krizhevsky et al., 2012; LeCun et al., 2015; Giusti et al., 2013).

Previous theoretical analyses of invariances in CNNs. A significant body of theoretical work shows formally that scattering networks, which share some architectural components with CNNs, are stable to deformations (Mallat, 2012; Sifre & Mallat, 2013; Bruna & Mallat, 2013; Mallat, 2016). However, this work does not apply to widely used CNN architectures for two reasons. First, there are significant architectural differences, including in connectivity, pooling, and non-linearities. Second, and perhaps more importantly, this line of work assumes that the filters are fixed wavelets that do not change during training.

The more recent theoretical study of Bietti & Mairal (2017) uses reproducing kernel Hilbert spaces to study the inductive biases (including deformation stability) of architectures more similar to the CNNs used in practice. However, this work assumes the use of interleaved pooling layers between the convolutional layers, and cannot explain the success of more recent architectures which lack them.

(a) (b)

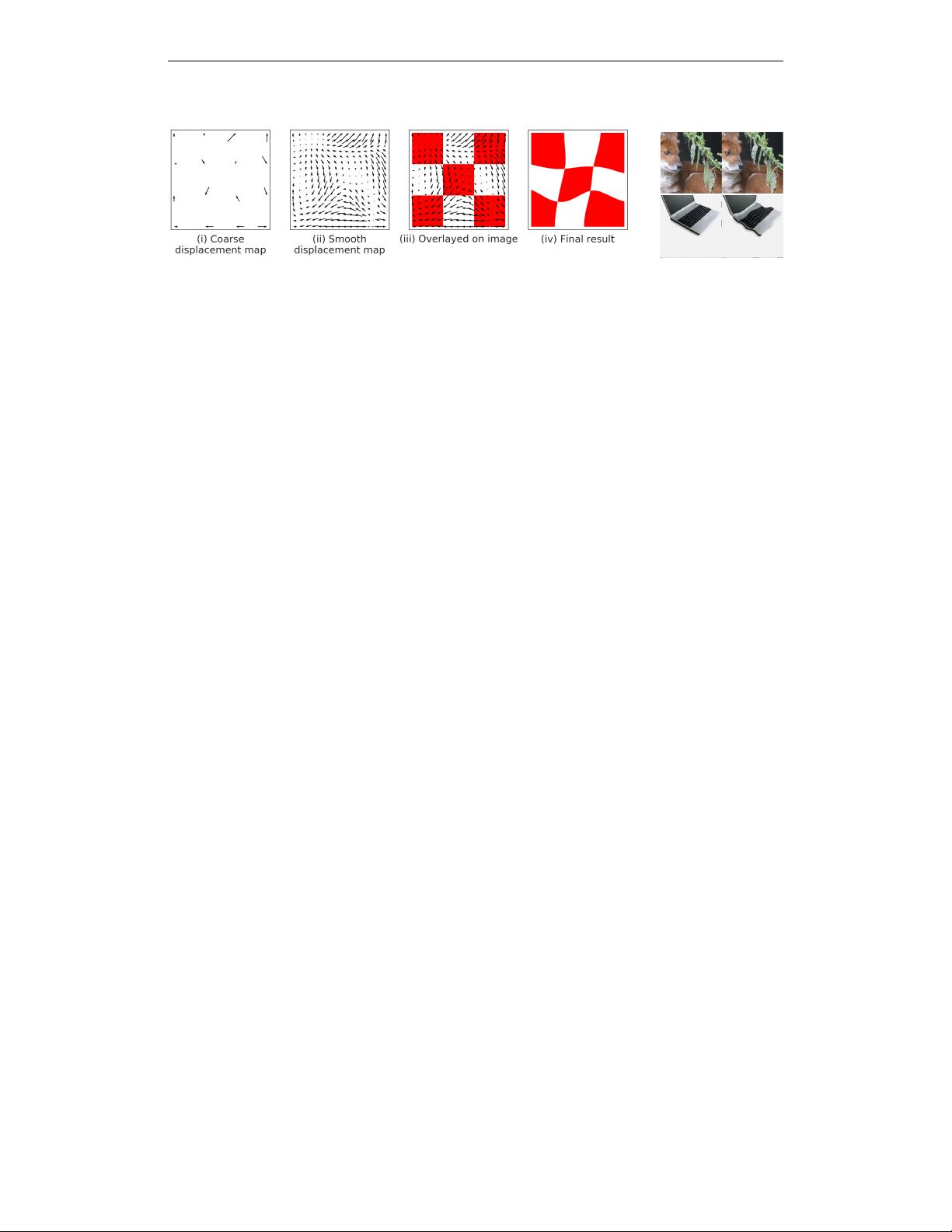

Figure 1: (a)

Generating deformed images

: To randomly deform an image we: (i) Start with a fixed

evenly spaced grid of control points (here 4x4 control points) and then choose a random source for

each control point within a neighborhood of the point; (ii) we then smooth the resulting vector field

using thin plate interpolation; (iii) vector field overlayed on original image: the value in the final

result at the tip of an arrow is computed using bilinear interpolation of values in a neighbourhood

around the tail of the arrow in the original image; (iv) the final result. (b)

Examples of deformed

ImageNet images.

left: original images, right: deformed images. While the images have changed

significantly, for example under the

L

2

metric, they would likely be given the same label by a human.
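The four steps in Figure 1(a) can be sketched in plain Python. This is a minimal sketch under stated assumptions, not the procedure used in the experiments: the function names (`random_control_offsets`, `dense_field`, `deform`), the `max_shift` parameter, the uniform offset distribution, and the border clamping are all hypothetical choices, and step (ii) substitutes bilinear interpolation of the control-point offsets for thin-plate interpolation so that the example needs no external libraries.

```python
import random


def bilinear_sample(img, y, x):
    """Bilinearly interpolate a 2D list at real-valued (y, x), clamped to the border."""
    h, w = len(img), len(img[0])
    y = min(max(y, 0.0), h - 1.0)
    x = min(max(x, 0.0), w - 1.0)
    y0, x0 = int(y), int(x)
    y1, x1 = min(y0 + 1, h - 1), min(x0 + 1, w - 1)
    fy, fx = y - y0, x - x0
    top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
    bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


def random_control_offsets(grid=4, max_shift=2.0, rng=None):
    """Step (i): draw one random (dy, dx) source offset per control point.

    Assumption: offsets are uniform in [-max_shift, max_shift] pixels.
    """
    rng = rng or random.Random()
    return [[(rng.uniform(-max_shift, max_shift),
              rng.uniform(-max_shift, max_shift))
             for _ in range(grid)] for _ in range(grid)]


def dense_field(offsets, h, w):
    """Step (ii), simplified: spread control offsets to every pixel.

    Assumption: bilinear interpolation stands in for thin-plate interpolation.
    Requires h > 1 and w > 1.
    """
    g = len(offsets)
    dy = [[o[0] for o in row] for row in offsets]
    dx = [[o[1] for o in row] for row in offsets]
    field = []
    for i in range(h):
        gy = i * (g - 1) / (h - 1)  # pixel row mapped to control-grid coordinates
        row = []
        for j in range(w):
            gx = j * (g - 1) / (w - 1)
            row.append((bilinear_sample(dy, gy, gx), bilinear_sample(dx, gy, gx)))
        field.append(row)
    return field


def deform(img, grid=4, max_shift=2.0, seed=None):
    """Steps (iii)-(iv): each output pixel (arrow tip) reads the source (arrow tail)."""
    h, w = len(img), len(img[0])
    offsets = random_control_offsets(grid, max_shift, random.Random(seed))
    field = dense_field(offsets, h, w)
    return [[bilinear_sample(img, i + field[i][j][0], j + field[i][j][1])
             for j in range(w)] for i in range(h)]


# Usage: warp a 16x16 checkerboard with a 4x4 control grid.
image = [[float((i // 4 + j // 4) % 2) for j in range(16)] for i in range(16)]
warped = deform(image, grid=4, max_shift=2.0, seed=0)
```

In this sketch, `max_shift=0.0` returns the image unchanged, and larger values give stronger deformations. Swapping `dense_field` for a thin-plate spline interpolator would bring it closer to the procedure described in the caption.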

Empirical investigations. Previous empirical investigations of these phenomena in CNNs include the work of Lenc & Vedaldi (2015), which focused on a more limited set of invariances such as global affine transformations. More recently, there has been interest in the robustness of networks to adversarial geometric transformations in the work of Fawzi & Frossard (2015) and Kanbak et al. (2017). In particular, these studies looked at worst-case sensitivity of the output to such transformations, and found that CNNs can indeed be quite sensitive to particular geometric transformations (a phenomenon that can be mitigated by augmenting the training sets). However, this line of work does not address how deformation stability is generally achieved in the first place, and how it changes over the course of training. In addition, these investigations have been restricted to a limited class of deformations, which we seek to remedy here.

2 METHODS

2.1 DEFORMATION SENSITIVITY

In order to study how CNN representations are affected by image deformations we first need a controllable source of deformation. Here, we choose a flexible class of local deformations of image coordinates, i.e., maps $\tau : \mathbb{R}^2 \to \mathbb{R}^2$ such that $\|\nabla\tau\|_\infty < C$ for some $C$, similar to Mallat (2012).

We choose this class for several reasons. First, it subsumes or approximates many of the canonical forms of image deformation we would want to be robust to, including:

• Pose: Small shifts in pose or location of subparts

• Affine transformations: translation, scaling, rotation or shear

• Thin-plate spline transforms

• Optical flow: Roth & Black (2007); Rosenbaum et al. (2013)

We show examples of several of these in Section 2 of the supplementary material.
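As a small worked illustration of the affine case (added for concreteness, not a result from the experiments), read $\tau$ as a displacement field in the convention of Mallat (2012), and take $\|\cdot\|$ to be the operator norm of the Jacobian; both readings are assumptions here. An affine displacement has a constant Jacobian, so membership in the class reduces to a single matrix-norm check:

$$
\tau(x) = Ax + b \;\Rightarrow\; \nabla\tau(x) = A \ \text{ for all } x, \qquad \|\nabla\tau\|_\infty = \sup_x \|\nabla\tau(x)\| = \|A\| .
$$

$$
\text{Translation: } A = 0 \;\Rightarrow\; \|\nabla\tau\|_\infty = 0, \qquad \text{Rotation by } \theta:\ A = R_\theta - I \;\Rightarrow\; \|\nabla\tau\|_\infty = \sqrt{(\cos\theta - 1)^2 + \sin^2\theta} = 2\,|\sin(\theta/2)| .
$$

Under these assumptions, pure translations satisfy the bound for any $C > 0$, while rotations satisfy it only when $2\,|\sin(\theta/2)| < C$, so $C$ acts as a knob on deformation strength.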

This class also allows us to modulate the strength of image deformations, which we deploy to investigate how task demands are met by CNNs. Furthermore, this class of deformations approximates most of the commonly used methods of data augmentation for object recognition (Simard et al., 2003; Wong et al., 2016; Cireşan et al., 2010).

While it is in principle possible to explore finer-grained distributions of deformation (e.g., choosing adversarial deformations to maximally shift the representation), we think our approach offers good coverage over the space, and a reasonable first-order approximation to the class of natural deformations. We leave the study of richer transformations, such as those requiring a renderer to produce or those chosen adversarially (Fawzi & Frossard, 2015; Kanbak et al., 2017), as future work.
