Computers and Electrical Engineering 108 (2023) 108695

Available online 8 April 2023

0045-7906/© 2023 Elsevier Ltd. All rights reserved.

TOD-Net: An end-to-end transformer-based object detection network

Museboyina Sirisha (a,*), S.V. Sudha (b)

(a) School of Computer Science and Engineering, VIT-AP University, Amaravathi, Andhra Pradesh 522237, India

(b) Professor and Dean, School of Computer Science and Engineering, VIT-AP University, Amaravathi, Andhra Pradesh 522237, India

ARTICLE INFO

Editor E. Cabal-Yepez

Keywords:

Object detection

Network learning

Feature representation

Transformer

Predictor module

Feature analysis

Local features

Scaling

Semantic analysis

Network layer

ABSTRACT

Object detection approaches based on learned models aim to capture semantic and multi-scale information to attain superior object saliency. This research proposes a transformer-based network framework for object detection (TOD-Net). It is composed of encoders, decoders, a transformer module, and a predictor module. The predictor module bridges the encoder and the transformer module and offers better insight into the transformer module's local measures. Feature extraction is performed to measure local features, and dense modeling is established by analyzing them. The model thus gives broader knowledge of local and global features. The experiments were implemented in Python on the MS COCO (Microsoft Common Objects in Context) dataset, where the model gives better results than existing models. In contrast to existing methods, the proposed method achieves 68.7% precision and 4% accuracy. The proposed model outperforms different prevailing approaches and establishes a better trade-off.

1. Introduction

Object detection remains one of the biggest challenges in computer vision. Object detection methods based on deep learning are widely used in different areas, such as face detection, object tracking [1], pedestrian detection, autonomous driving, and medical imaging [1–3]. The rapid recent progress has been achieved using convolutional neural networks (CNNs), starting from AlexNet [4], which brought considerable gains in detecting objects. A backbone network pre-trained on a large volume of data such as ImageNet, at the cost of significant processing time, is widely used to extract features effectively [5]. MobileNetV2 [6] uses linear bottlenecks and inverted residuals, making it suitable as a lightweight backbone network in many object detection models. Xception [7] applies depth-wise separable convolution to the Inception architecture, an approach that separates channel-wise from spatial processing in a network. In addition, ShuffleNet [8] uses point-wise group convolution and channel shuffling. ResNet [9] improved performance by using residual learning to construct a deeper neural network, while ResNeXt [10] introduced grouped convolutions on top of ResNet and enhances accuracy by increasing the cardinality. The image pyramid method [11] uses multi-scale feature maps to identify multi-scale objects from the higher layers to the lower layers, at an increased cost in memory and computational load. Studies on efficiently integrating the feature maps of the higher and lower layers have been actively conducted to solve this problem.
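To make the efficiency argument behind depth-wise separable and grouped convolutions concrete, the following minimal sketch counts the weights of each layer type. The layer sizes (3 × 3 kernel, 128 input channels, 256 output channels, 32 groups) are illustrative assumptions rather than values taken from the cited works, and biases are ignored.

```python
def standard_conv_params(k, c_in, c_out):
    """Weights in a standard k x k convolution (bias ignored)."""
    return k * k * c_in * c_out


def depthwise_separable_params(k, c_in, c_out):
    """Weights in a k x k depth-wise conv followed by a 1 x 1 point-wise conv."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 convolution that mixes channels
    return depthwise + pointwise


def grouped_conv_params(k, c_in, c_out, groups):
    """Weights in a k x k convolution split into independent channel groups."""
    if c_in % groups or c_out % groups:
        raise ValueError("channel counts must be divisible by groups")
    return k * k * (c_in // groups) * (c_out // groups) * groups


# Hypothetical layer sizes chosen only for illustration.
print(standard_conv_params(3, 128, 256))        # 294912
print(depthwise_separable_params(3, 128, 256))  # 33920
print(grouped_conv_params(3, 128, 256, 32))     # 9216
```

With these assumed sizes, the separable layer needs roughly one ninth of the weights of the standard layer, which is why such factorizations are attractive for lightweight backbones.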

* Corresponding author.

E-mail address: musesirisha@gmail.com (M. Sirisha).

Contents lists available at ScienceDirect

Computers and Electrical Engineering

journal homepage: www.elsevier.com/locate/compeleceng

https://doi.org/10.1016/j.compeleceng.2023.108695

Received 25 November 2022; Received in revised form 20 March 2023; Accepted 22 March 2023

Feature Pyramid Network (FPN) is one of the most common models for generating pyramidal feature representations for object detection [12]. The deep layers of the backbone extract higher-level features such as object parts or textures, whereas the shallow layers extract lower-level features such as curves and edges. FPN uses both the higher- and lower-level features, hierarchically stacking the feature maps through a top-down pathway with lateral connections to detect objects of different sizes. This improves performance on multi-scale objects while resolving the problems related to memory and computational cost. A stronger FPN structure is suggested in [13] by adding a bottom-up pathway. In addition, a new FPN structure found through neural architecture search is suggested in [14], and BiFPN [15] proposes a fusion method for multi-scale feature maps based on a weighted bi-directional feature pyramid network.
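The top-down pathway with lateral connections described above can be sketched as follows. This is a simplified illustration, not the implementation from [12]: feature maps are single-channel nested lists, each level is assumed to be exactly half the size of the finer one, and the 1 × 1 lateral and 3 × 3 smoothing convolutions of a real FPN are omitted. All function names are hypothetical.

```python
def upsample_nearest_2x(fmap):
    """Double height and width by repeating each value (nearest neighbor)."""
    out = []
    for row in fmap:
        wide = [v for v in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


def add_maps(a, b):
    """Element-wise sum of two maps of identical size."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def top_down_merge(laterals):
    """Merge maps ordered fine -> coarse; returns merged maps in the same order."""
    merged = [laterals[-1]]  # start from the coarsest (most semantic) level
    for lateral in reversed(laterals[:-1]):
        # Upsample the coarser merged map and add the finer lateral map.
        merged.append(add_maps(lateral, upsample_nearest_2x(merged[-1])))
    return merged[::-1]


c3 = [[1] * 4 for _ in range(4)]  # fine level, 4 x 4
c4 = [[1, 2], [3, 4]]             # middle level, 2 x 2
c5 = [[10]]                       # coarse level, 1 x 1
p3, p4, p5 = top_down_merge([c3, c4, c5])
print(p4)  # [[11, 12], [13, 14]]
```

Each merged level thus carries the semantic signal of all coarser levels while keeping the spatial resolution of its own lateral input.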

Structures based on the previous FPN use interpolation or deconvolution [16] to fuse the higher-level and lower-level feature maps. Interpolation is mainly performed using nearest neighbor interpolation or bilinear interpolation. Nearest neighbor interpolation is fast to compute because each new value is filled with the pixel value at the nearest location. Still, edges become ambiguous because of aliasing. Bilinear interpolation obtains each new value by multiplying the four neighboring pixel values by weights when the original image is resized by a factor of N, which blurs the image. Deconvolution expands the feature map size through an inverse calculation of the convolution. However, checkerboard artifacts arise from overlap that depends on the kernel size and the stride.
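The difference between the two interpolation schemes can be illustrated with a small sketch on single-channel images stored as nested lists. The corner-aligned sampling used for the bilinear case is an assumed convention; libraries differ in how they map output coordinates to input coordinates.

```python
def resize_nearest(img, out_h, out_w):
    """Nearest neighbor resize: copy the value of the nearest source pixel."""
    in_h, in_w = len(img), len(img[0])
    return [[img[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
            for r in range(out_h)]


def resize_bilinear(img, out_h, out_w):
    """Bilinear resize: weighted mean of the four neighboring source pixels."""
    in_h, in_w = len(img), len(img[0])
    out = []
    for r in range(out_h):
        y = r * (in_h - 1) / (out_h - 1) if out_h > 1 else 0.0
        y0 = int(y)
        y1 = min(y0 + 1, in_h - 1)
        wy = y - y0
        row = []
        for c in range(out_w):
            x = c * (in_w - 1) / (out_w - 1) if out_w > 1 else 0.0
            x0 = int(x)
            x1 = min(x0 + 1, in_w - 1)
            wx = x - x0
            top = img[y0][x0] * (1 - wx) + img[y0][x1] * wx
            bottom = img[y1][x0] * (1 - wx) + img[y1][x1] * wx
            row.append(top * (1 - wy) + bottom * wy)
        out.append(row)
    return out


edge = [[0, 10]]                    # a sharp horizontal edge
print(resize_nearest(edge, 1, 4))   # [[0, 0, 10, 10]]
print(resize_bilinear(edge, 1, 3))  # [[0.0, 5.0, 10.0]]
```

The nearest neighbor result keeps the edge hard but blocky, while the bilinear result introduces an intermediate value of 5.0, which is the blurring effect mentioned above.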

The pyramid pooling module (PPM), which uses various pooling sizes, is suggested to resolve the above issue [17]. In addition, atrous spatial pyramid pooling (ASPP) is suggested, which superimposes dilated convolutions with different rates in an atrous pooling layer [16]. PPM, however, has the drawback of losing information about pixel location when applying pooling at a range of sizes. ASPP, in turn, obtains a broad receptive field at the cost of local context information because it uses dilated convolution. The proposed system uses upsampling to reduce the loss of semantic information about the input images carried by the high-level feature maps in the FPN structure, and attends to both global and local context information. Therefore, modeling multi-scale information is an essential requirement in object detection. The major research contributions are summarized below:

• To propose a novel TOD-Net model with a transformer for object detection, which employs an attention module to encode the information and enhance feature learning.

• The feature extractor and the attention modules help to obtain multi-scale features, and interpolation helps in fusing the features and enhancing the prediction accuracy.

• The classifier is used for multi-scale feature mapping. The experiments were implemented in Python on the MS COCO dataset, and the average precision metric was compared with other models to establish a trade-off.

1.1. Paper organization

The article is organized as follows: Section 2 gives a wider analysis of various prevailing approaches and their merits and demerits. The proposed TOD-Net and its object detection process are elaborated in Section 3. The outcomes are presented in Section 4, with a summary in Section 5.

2. Related works

Objects are usually detected using two types of techniques: one-stage detection and two-stage detection. Because they apply region proposals sequentially, two-stage detection methods have proved to be highly accurate. Region-based Convolutional Neural Networks (R-CNN) [18] propose candidate regions and use CNNs to extract features from each region. It is the first method to use both CNNs and features extracted from each region in two stages. Multiple enhanced variants of R-CNN have been proposed, such as Faster R-CNN [19], Cascade R-CNN, Libra R-CNN [20], and Mask R-CNN [21]. On the other hand, one-stage detection methods are faster than two-stage methods but have lower accuracy. Single Shot MultiBox Detector (SSD) [22], You Only Look Once (YOLO) [23], EfficientDet, and RetinaNet [24] are some examples of one-stage detection methods. Some advanced anchor-free object detection methods, such as CenterNet [25], FCOS [26], center-based ATSS, and CornerNet [27], have been proposed in recent years. Recently, many object detection methods have used transformers to eliminate non-maximum suppression (NMS) or anchor generation, which has greatly improved detection accuracy [28].
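For readers unfamiliar with the NMS post-processing step that transformer-based detectors aim to remove, the following minimal sketch shows the common greedy variant. The box format (x1, y1, x2, y2), the 0.5 threshold, and the function names are assumptions chosen for illustration and are not taken from any of the cited detectors.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: visit boxes by descending score, drop heavy overlaps."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap any already kept box too much.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
print(nms(boxes, scores))  # [0, 2]
```

The second box overlaps the first with an IoU of 81/119 (about 0.68) and is suppressed, while the distant third box survives. Detectors that predict a fixed set of objects directly do not need this hand-tuned threshold.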

Furthermore, detecting multi-scale objects is an essential issue to be addressed in the detection task. Image pyramids were widely used in object detection to improve multi-scale detection accuracy. There is, however, a redundant computational cost associated with extracting features at each level of the image pyramid. FPN compensates for the semantic information lost during the forward pass by means of a top-down pathway and lateral connections, lowering the computational load and enhancing the detection accuracy. In recent years, more robust pyramid structures have been proposed based on FPN. PANet adds a bottom-up path augmentation that delivers lower-level information to the higher levels again to enable precise localization. A new FPN structure called NAS-FPN was designed using neural architecture search (NAS). It improved the network's performance but required more memory. In addition, the weighted bi-directional FPN (BiFPN) is suggested, which resolves the issue of computational cost and fuses multi-scale feature maps more efficiently and quickly.

Attention mechanisms have been actively studied in natural language processing as essential elements of neural networks. In recent years, attention mechanisms have been applied to various fields of computer vision to enhance performance by highlighting regions of interest in images and capturing long-range dependencies. Segmentation, image generation, and image classification are some of the computer vision applications that use them. Previous studies have shown that attention mechanisms can improve object detection performance by locating and recognizing objects in images. In addition, a MAD unit is suggested in [29] to identify neuron activations in the lower and higher streams via aggressive search. A new structure, CoupleNet, is also suggested, which combines local and global information related to objects to improve detection performance. Recent improvements have been made in the accuracy of object detection methods using transformers.

The success of attention-based NLP models has inspired recent efforts to integrate transformers into CNNs. The Vision Transformer (ViT) is a pure transformer used to classify images. To handle a 2D image, it is reshaped into a sequence of flattened patches. The transformer flattens the patches and maps them to a fixed number of dimensions with a trainable linear projection, using a constant latent vector size across all its layers. The results obtained when training on large datasets show that transformers extract image features efficiently. The Video Vision Transformer (ViViT) is proposed for video classification. In this architecture, a sequence of spatiotemporal tokens extracted from the input video is the primary input to the self-attention computation. Several methods are applied to factorize the model along the spatial and temporal dimensions to increase efficiency and scalability. TransUNet has also been suggested, which integrates a transformer encoder with U-Net. The transformer encodes tokenized image patches from a CNN feature map to extract global context. The decoder then upsamples the encoded features and combines them with the CNN feature maps for precise localization. TransUNet leverages detailed, high-resolution spatial information and global context by combining CNN features and transformers. In various medical applications, TransUNet outperforms other competing methods [29].
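The patch-flattening and linear-projection step described above for ViT can be sketched as follows. This is a minimal illustration on nested lists with hypothetical function names; the projection weights here are fixed example values, whereas in ViT they are trainable parameters, and position embeddings and the class token are omitted.

```python
def image_to_patches(image, patch):
    """Split an H x W x C image into flattened patch vectors, row-major order."""
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image size must be divisible by the patch size")
    patches = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            flat = []
            for r in range(top, top + patch):
                for c in range(left, left + patch):
                    flat.extend(image[r][c])  # append all channels of a pixel
            patches.append(flat)
    return patches


def linear_projection(patches, weight):
    """Map each flattened patch of length P to D dims; weight has shape P x D."""
    dim = len(weight[0])
    return [[sum(p[i] * weight[i][d] for i in range(len(p))) for d in range(dim)]
            for p in patches]


# A 4 x 4 single-channel image whose pixel values are 0..15.
image = [[[r * 4 + c] for c in range(4)] for r in range(4)]
patches = image_to_patches(image, patch=2)
print(len(patches), patches[0])  # 4 [0, 1, 4, 5]

# Example weights only: column 0 sums the patch, column 1 picks its first pixel.
weight = [[1, 1], [1, 0], [1, 0], [1, 0]]
print(linear_projection(patches, weight)[:2])  # [[10, 0], [18, 2]]
```

An H × W image with patch size P yields HW/P² tokens, so the 4 × 4 example with 2 × 2 patches produces four tokens, each projected to the same latent size.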

Many U-Net-based methods for video anomaly detection use successive stacked frames as input and motion constraints such as an optical flow loss to extract temporal information. Such structures are limited, and inadequate temporal information is used for prediction and reconstruction. ViViT obtains better performance than other models for video classification using different transformer variants [29]. Using transformers, the model performs better because it encodes both spatial and temporal features in videos. Furthermore, U-Net is widely used for detecting video anomalies, and TransUNet points to the potential of integrating U-Net with a transformer. This inspires us to modify the transformer encoder in the proposed system to make reliable predictions on video. The model encodes spatial data, while the suggested transformer encoder encodes the temporal data. The model performs better than a prediction-based baseline without the transformer module. However, the performance is still comparatively low, which triggers the need for a novel approach. This research intends to address the complexity of the existing approaches by proposing a transformer-based network framework for object detection (TOD-Net).

3. Methodology

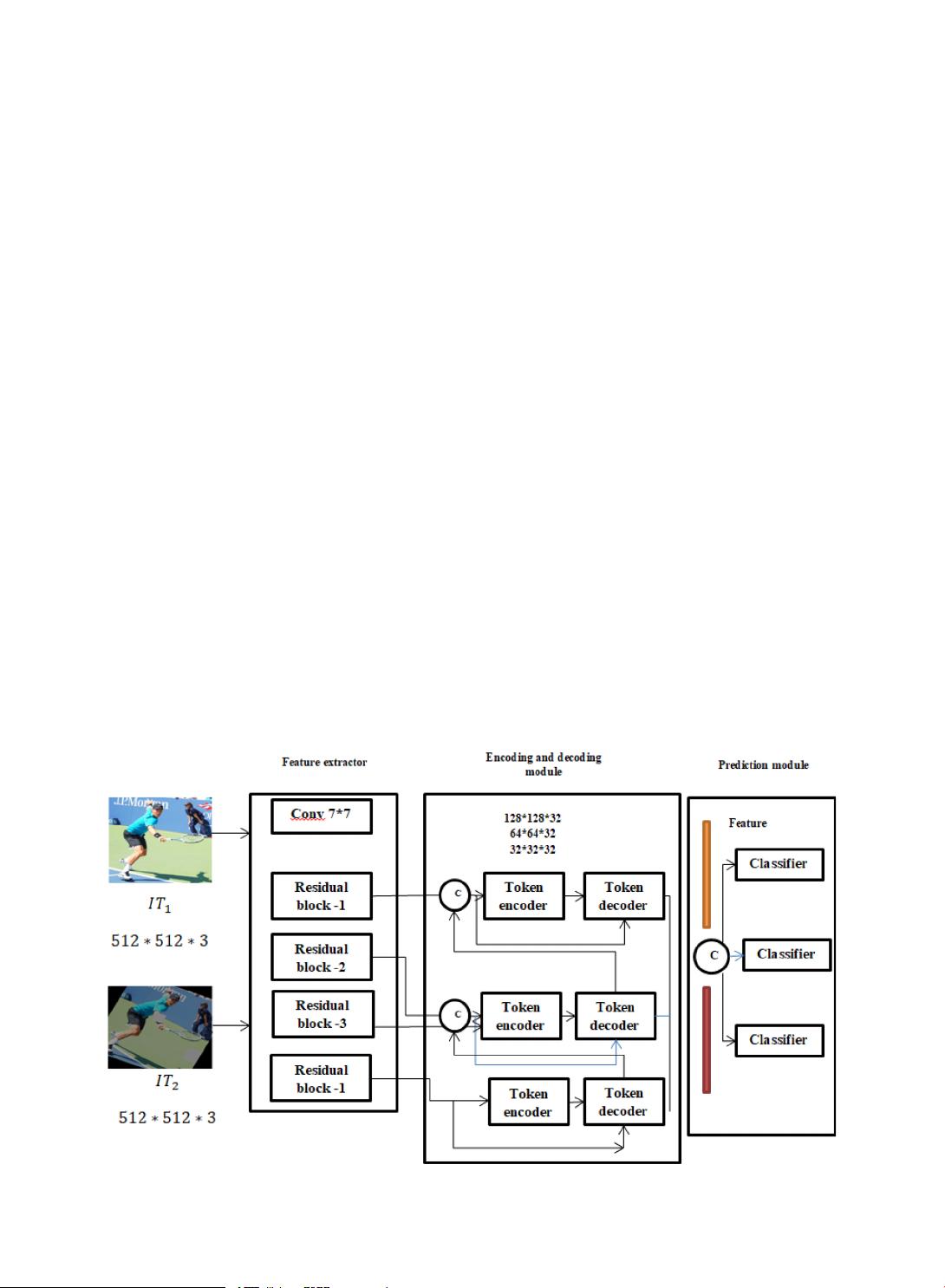

Fig. 1. Overview of TOD-Net.

The backbone of the proposed TOD-Net uses a feature extractor that is modified from the existing ResNet-18 by removing the fully connected layer. Hence, the feature extractor has a 7 × 7 convolution layer and four residual blocks
