
Texture-GS: Disentangling the Geometry and Texture for 3D Gaussian Splatting Editing

Tian-Xing Xu¹, Wenbo Hu²†, Yu-Kun Lai³, Ying Shan², and Song-Hai Zhang¹†

¹ Tsinghua University, China
xutx21@mails.tsinghua.edu.cn, shz@tsinghua.edu.cn

² Tencent AI Lab, China
wbhu@tencent.com, yingsshan@tencent.com

³ Cardiff University, United Kingdom
LaiY4@cardiff.ac.uk

Abstract. 3D Gaussian splatting, emerging as a groundbreaking approach, has drawn increasing attention for its capabilities of high-fidelity reconstruction and real-time rendering. However, it couples the appearance and geometry of the scene within the Gaussian attributes, which hinders the flexibility of editing operations, such as texture swapping. To address this issue, we propose a novel approach, namely Texture-GS, to disentangle the appearance from the geometry by representing it as a 2D texture mapped onto the 3D surface, thereby facilitating appearance editing. Technically, the disentanglement is achieved by our proposed texture mapping module, which consists of a UV mapping MLP to learn the UV coordinates for the 3D Gaussian centers, a local Taylor expansion of the MLP to efficiently approximate the UV coordinates for the ray-Gaussian intersections, and a learnable texture to capture the fine-grained appearance. Extensive experiments on the DTU dataset demonstrate that our method not only facilitates high-fidelity appearance editing but also achieves real-time rendering on consumer-level devices, e.g., a single RTX 2080 Ti GPU.

Keywords: Neural rendering · Scene editing · Novel view synthesis · Gaussian splatting · Texture mapping · Disentanglement

1 Introduction

Reconstruction, editing, and real-time rendering of photo-realistic scenes are fundamental problems in computer vision and graphics, with diverse applications such as film production, computer games, and virtual/augmented reality. Polygonal meshes have served as the standard 3D representation within traditional rendering pipelines, owing to their rendering speed and editing flexibility (with texture mapping).

Due to the laborious process of manual mesh-based scene modeling, 3D Gaus-

sian Splatting [13] (3D-GS) has gained considerable attention for its capability

†

Corresponding authors.

arXiv:2403.10050v1 [cs.CV] 15 Mar 2024

2 T.-X. et al.

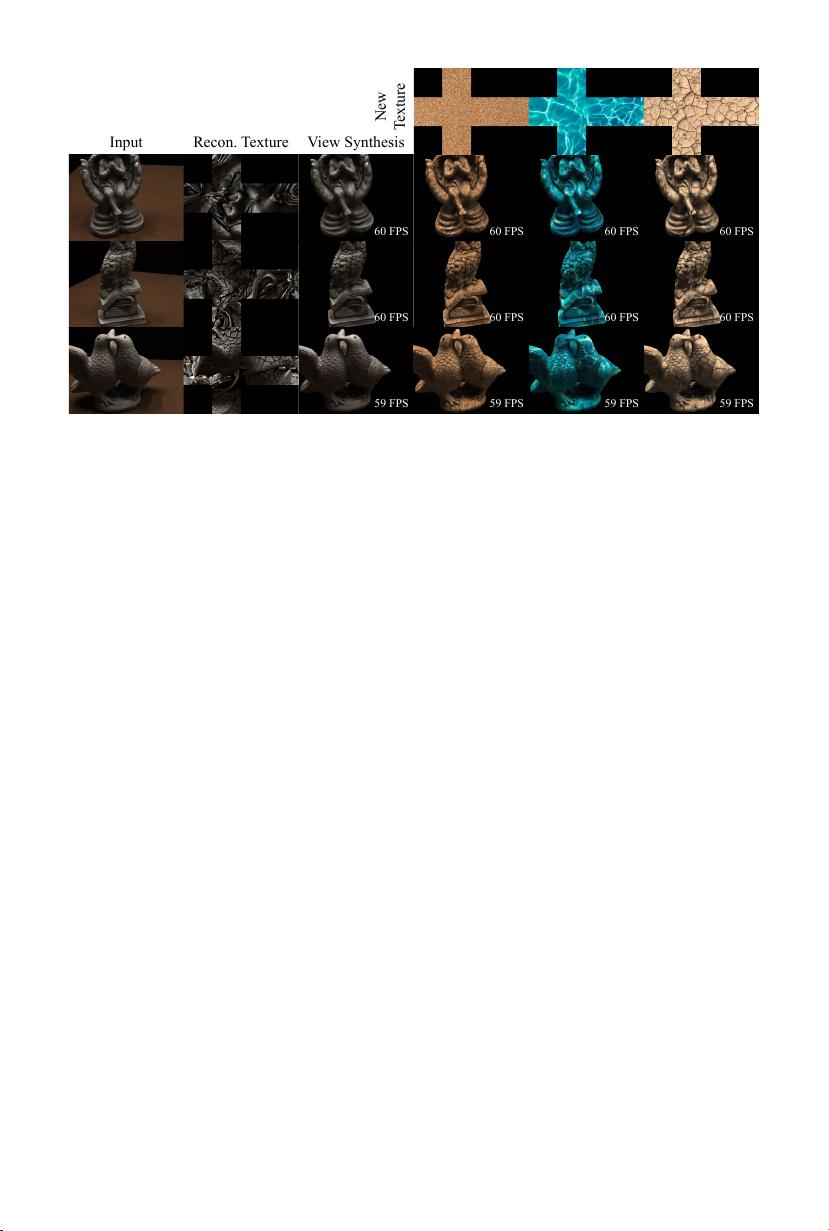

60 FPS 60 FPS 60 FPS 60 FPS

60 FPS 60 FPS 60 FPS 60 FPS

59 FPS 59 FPS 59 FPS 59 FPS

Input Recon. Texture View Synthesis

New

Texture

Fig. 1: Texture swapping with our method. We propose to disentangle the appearance

from the geometry for 3D-GS, thereby facilitating real-time appearance editing such

as texture swapping. The rendering speed is shown in each result.

of faithfully reconstructing complex scenes from multi-view images and real-

time rendering. 3D-GS represents the scene as a set of 3D anisotropic Gaussians

equipped with per-Gaussian color attributes and supports real-time rendering

by splatting these Gaussians onto the image plane. However, this representa-

tion couples the appearance and geometry of the scene within the unordered

and irregular 3D Gaussians, which hinders the flexibility of appearance editing

for 3D-GS compared to meshes, where appearance can be easily parameterized

into texture maps. Although considerable efforts have been made to edit 3D

Gaussian-based scenes [3, 7, 14, 24, 28], the manipulation of appearance remains

inconvenient since these works still follow the entangled representation of 3D-GS.

In this paper, we propose a novel method, named Texture-GS, which aims to explicitly disentangle the geometry and texture for 3D-GS, thereby significantly improving the flexibility of appearance editing for 3D scenes. Texture-GS follows 3D-GS in modeling the geometry as a set of anisotropic 3D Gaussians, but crucially, it represents the view-independent appearance as a 2D texture map. Leveraging this disentangled representation, Texture-GS retains the powerful capabilities of 3D-GS for faithful reconstruction and real-time rendering, while also facilitating various appearance editing applications, such as the texture swapping shown in Fig. 1.

The key challenge in implementing our Texture-GS lies in establishing a connection between the geometry (3D Gaussians) and appearance (2D texture map). NeuTex [23] has proposed a texture mapping MLP (Multi-Layer Perceptron) that regresses 2D UV coordinates for every 3D point to represent the radiance of NeRF (Neural Radiance Field) [16] in a 2D texture space. However, evaluating an MLP for each ray-Gaussian intersection is unsuitable for our Texture-GS, as it would be prohibitively expensive for real-time rendering, which is a key advantage of 3D-GS over NeRF-based methods. To maintain the ability of real-time rendering, one straightforward solution is to employ a texture mapping MLP to pre-compute UV coordinates for each Gaussian based on its center location and then query the per-Gaussian color attributes from the texture map before rendering. Because each 3D Gaussian often covers more than one pixel in practice, this straightforward solution would result in all pixels covered by a single Gaussian being mapped to the same UV location, leading to discontinuities in the texture space. To address this issue, we propose a novel texture mapping module. It incorporates an MLP for mapping Gaussian centers into the texture UV space before rendering, along with a Taylor expansion at the Gaussian centers, which serves as an approximation of the MLP and enables efficient mapping of the ray-Gaussian intersections to UV coordinates during rendering. Our texture mapping module not only promotes a smooth texture map, as the Taylor expansion guarantees the local continuity for the UV coordinates of pixels within a projected Gaussian, but also preserves rendering efficiency, as the inference of UV coordinates merely involves a small matrix product.
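The idea of replacing per-intersection MLP evaluations with a Taylor expansion around each Gaussian center can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the expansion order (first order here), the tiny one-hidden-layer `uv_mlp`, its random weights, and the helper names `uv_jacobian`, `precompute`, and `taylor_uv` are all assumptions made for illustration. The sketch evaluates the MLP and its Jacobian once at the center, then maps a nearby point to UV space with a single 2×3 matrix product.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical UV-mapping MLP from R^3 to R^2; weights are random placeholders.
W1 = rng.normal(scale=0.5, size=(16, 3))
b1 = rng.normal(scale=0.1, size=16)
W2 = rng.normal(scale=0.5, size=(2, 16))
b2 = rng.normal(scale=0.1, size=2)


def uv_mlp(x):
    """Map a 3D point to 2D UV coordinates (one hidden tanh layer)."""
    return W2 @ np.tanh(W1 @ x + b1) + b2


def uv_jacobian(x):
    """Analytic 2x3 Jacobian of uv_mlp at x: W2 diag(1 - h^2) W1."""
    h = np.tanh(W1 @ x + b1)
    return W2 @ ((1.0 - h**2)[:, None] * W1)


def precompute(mu):
    """Before rendering: evaluate the MLP and its Jacobian once per center."""
    return uv_mlp(mu), uv_jacobian(mu)


def taylor_uv(x, mu, uv_mu, jac_mu):
    """During rendering: first-order approximation uv(mu) + J(mu) (x - mu)."""
    return uv_mu + jac_mu @ (x - mu)


# One Gaussian center and a nearby point standing in for a ray-Gaussian intersection.
mu = np.array([0.2, -0.1, 0.5])
uv_mu, jac_mu = precompute(mu)
x = mu + np.array([1e-3, -2e-3, 1e-3])

approx = taylor_uv(x, mu, uv_mu, jac_mu)
exact = uv_mlp(x)
err = np.linalg.norm(approx - exact)
print("approx:", approx, "exact:", exact, "error:", err)
```

Because the approximation is affine in `x`, neighboring pixels covered by the same Gaussian receive smoothly varying UV coordinates rather than a single shared value, and the per-intersection cost no longer depends on the size of the MLP.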

To evaluate the effectiveness of our Texture-GS, we conduct extensive quantitative and qualitative experiments on the DTU dataset [1]. The results demonstrate that Texture-GS recovers a smooth, high-quality 2D texture map from multi-view images, while also facilitating various editing applications such as global texture swapping and fine-grained texture editing. Besides, our method achieves an average rendering speed of 58 FPS on a single RTX 2080 Ti GPU, demonstrating its real-time rendering capabilities. Our contributions are summarized below.

– To the best of our knowledge, we are the first to disentangle the geometry and texture for 3D-GS, thereby enabling various editing applications.

– We propose a novel texture mapping module to map ray-Gaussian intersections into a continuous 2D texture space while maintaining efficiency.

– Experiments validate the effectiveness of our method for novel view synthesis, global texture swapping, and local appearance editing, with real-time rendering speed on consumer-level devices.

2 Related Work

Neural UV Mapping. UV mapping plays an essential role in the traditional rendering pipeline, aiming at computing a bijective mapping between the 3D surface and a suitable parametric domain. UV mapping is usually accompanied by 3D shapes and jointly modeled by artists, necessitating considerable labor costs. In recent years, NeRF [16] has gained increasing attention for its superior view synthesis quality, inspiring many follow-up works [6, 23, 30] to reconstruct 3D geometry with a volumetric density field while concurrently learning UV mapping based on neural networks. NeuTex [23] is the first work to recover a meaningful surface-aware UV mapping function from multi-view images. Given any 3D shading point during NeRF's ray marching process, NeuTex obtains its radiance by sampling the reconstructed texture at its UV location, which is output by a texture mapping MLP. To ensure the bijective property of UV mapping, NeuTex adopts a cycle consistency loss to regularize the network. The follow-up Neural Gauge Field [30] draws inspiration from the principle of information conservation during gauge transformation [17] and proposes an information regularization term to maximize the mutual information. Nuvo [21] extends NeuTex with multiple charts and a chart assignment network for general scenes. Apart from the above methods, some approaches focus on specific object categories such as human faces [5, 15, 25], documents [6], and human bodies [4]. However, NeRF-based methods adopt ray marching for rendering, which evaluates a large texture MLP with an additional UV mapping network at hundreds of sample shading points along the ray for each pixel to compute the final color. This process is excessively slow for interactive visualization and real-time applications.

3D Gaussian Editing. 3D Gaussian Splatting [13] (3D-GS) has emerged as an alternative 3D representation to NeRF [16], achieving real-time rendering via splatting 3D Gaussians instead of ray marching. It has received increasing attention for its explicit representation and promising reconstruction quality, which make it more suitable for scene editing. Leveraging its explicit, point cloud-like formulation, Point'n Move [10], Gaussian Grouping [28], SA-GS [9], and Feature 3DGS [31] combine semantic segmentation methods such as SAM [14] with the 3D-GS representation to obtain the mask of the target and explicitly manipulate the selected object in the scene in real time. SC-GS [11] and Cogs [29] introduce novel frameworks for manipulating and editing dynamic content in 4D space. With the advancement of text-to-image models [19], some works [3, 7] achieve swift and controllable 3D scene editing in accordance with text instructions, incorporating a 2D diffusion model to fine-tune 3D-GS representations. PhysGaussian [24] explores the physical properties of 3D Gaussians, employing a custom Material Point Method for physical simulation. Despite yielding promising results, these methods capture the appearance in per-Gaussian color attributes and regard 3D Gaussians as isolated shading elements, neglecting the global appearance. The entanglement of color and density attributes hinders editing flexibility and precludes editing applications such as texture swapping.

3 Preliminaries

3D-GS [13] represents the scene as a set of 3D Gaussians $\mathcal{G} = \{G_i(x)\}_{i=1}^{N}$, where $N$ denotes the number of Gaussians. Each Gaussian is defined by its center position $\mu_i \in \mathbb{R}^{3}$ and covariance matrix $\Sigma_i \in \mathbb{R}^{3 \times 3}$, expressed as:

$$G_i(x) = \exp\left(-\frac{1}{2}(x - \mu_i)^{T}\,\Sigma_i^{-1}\,(x - \mu_i)\right). \tag{1}$$
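As a small illustration of Eq. (1), the following NumPy sketch evaluates an unnormalized 3D Gaussian at a query point. The function name `gaussian_value` and the example center and covariance are hypothetical and chosen only for this illustration; the covariance is assumed to be symmetric positive definite.

```python
import numpy as np


def gaussian_value(x, mu, cov):
    """Evaluate exp(-0.5 * (x - mu)^T cov^{-1} (x - mu)) as in Eq. (1).

    Uses a linear solve instead of forming the explicit inverse of cov.
    """
    d = x - mu
    return float(np.exp(-0.5 * d @ np.linalg.solve(cov, d)))


# Illustrative anisotropic Gaussian: variances 1, 4, and 9 along x, y, and z.
mu = np.zeros(3)
cov = np.diag([1.0, 4.0, 9.0])

peak = gaussian_value(mu, mu, cov)                         # value at the center
along_x = gaussian_value(np.array([1.0, 0.0, 0.0]), mu, cov)
along_y = gaussian_value(np.array([0.0, 2.0, 0.0]), mu, cov)
print(peak, along_x, along_y)
```

The two off-center points both lie one standard deviation from the center along their respective axes, so they receive the same value even though their Euclidean distances differ, which is what the anisotropic covariance encodes.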

The appearance of the scene is represented in the per-Gaussian attributes, i.e., an opacity value $o_i \in \mathbb{R}$ to adjust the influence weight and an RGB color $c_i \in \mathbb{R}^{3}$ given by spherical harmonic (SH) coefficients. To render the scene, 3D-GS splats 3D Gaussians onto the image plane via EWA splatting [32] to form 2D Gaussians $G'_i$, whose covariance matrix $\Sigma'_i \in \mathbb{R}^{2 \times 2}$ is defined as $\Sigma'_i = J W \Sigma_i W^{T} J^{T}$. Here
