Eigenfeature Regularization and Extraction in Face Recognition

Xudong Jiang, Senior Member, IEEE, Bappaditya Mandal, and Alex Kot, Fellow, IEEE

Abstract—This work proposes a subspace approach that regularizes and extracts eigenfeatures from the face image. Eigenspace of the

within-class scatter matrix is decomposed into three subspaces: a reliable subspace spanned mainly by the facial variation, an unstable

subspace due to noise and finite number of training samples, and a null subspace. Eigenfeatures are regularized differently in these three

subspaces based on an eigenspectrum model to alleviate problems of instability, overfitting, or poor generalization. This also enables the

discriminant evaluation performed in the whole space. Feature extraction or dimensionality reduction occurs only at the final stage after

the discriminant assessment. These efforts facilitate a discriminative and a stable low-dimensional feature representation of the face

image. Experiments comparing the proposed approach with some other popular subspace methods on the FERET, ORL, AR, and GT

databases show that our method consistently outperforms others.

Index Terms—Face recognition, linear discriminant analysis, regularization, feature extraction, subspace methods.

1 INTRODUCTION

FACE recognition has attracted many researchers in the areas of pattern recognition, machine learning, and computer vision because of its immense application potential. Numerous methods have been proposed in the last two decades [1], [2]. However, substantial challenging problems remain unsolved. One of the critical issues is how to extract discriminative and stable features for classification. Linear subspace analysis has been extensively studied and has become a popular feature extraction method since principal component analysis (PCA) [3], Bayesian maximum likelihood (BML) [4], [5], [6], and linear discriminant analysis (LDA) [7], [8] were introduced into face recognition. A theoretical analysis showed that a low-dimensional linear subspace could capture the set of images of an object produced by a variety of lighting conditions [9]. The agreeable properties of linear subspace analysis and its promising performance in face recognition have encouraged researchers to extend it to higher order statistics [10], [11], nonlinear methods [12], [13], [14], Gabor features [15], [16], and locality preserving projections [17], [18]. However, basic linear subspace analysis still faces outstanding challenges when applied to face recognition because of the high dimensionality of face images and the finite number of training samples in practice.

PCA maximizes the variances of the extracted features and, hence, minimizes the reconstruction error and removes noise residing in the discarded dimensions. The best representation of data may not perform well from the classification point of view because the total scatter matrix is contributed by both the within- and between-class variations. To differentiate face images of one person from those of the others, the discrimination of the features is the most important. LDA is an efficient way to extract the discriminative features as it handles the within- and between-class variations separately. However, this method needs the inverse of the within-class scatter matrix. This is problematic in many practical face recognition tasks because the dimensionality of the face image is usually very high compared to the number of available training samples and, hence, the within-class scatter matrix is often singular.
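The singularity described above can be checked numerically. The following is a minimal sketch on random data, not the authors' code; the function name `within_class_scatter` and the toy sizes are assumptions chosen for illustration. With p classes and l training samples in total, the within-class scatter matrix has rank at most l - p, so it cannot be inverted when the dimensionality n exceeds that.

```python
import numpy as np

def within_class_scatter(X, labels):
    """Unnormalized within-class scatter: sum of centered outer products per class."""
    n_dim = X.shape[1]
    S_w = np.zeros((n_dim, n_dim))
    for k in np.unique(labels):
        D = X[labels == k] - X[labels == k].mean(axis=0)  # center each class
        S_w += D.T @ D
    return S_w

# Hypothetical toy sizes: 5 "persons", 4 images each, 100 "pixels" per image.
rng = np.random.default_rng(0)
n_dim, n_classes, per_class = 100, 5, 4
X = rng.normal(size=(n_classes * per_class, n_dim))
labels = np.repeat(np.arange(n_classes), per_class)

S_w = within_class_scatter(X, labels)
rank = np.linalg.matrix_rank(S_w)
print("rank of S_w:", rank, "dimension:", n_dim)
```

Because the rank stays far below the dimension, a direct LDA solution that inverts this matrix is not available without some form of dimensionality reduction or regularization.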

Numerous methods have been proposed to solve this

problem in the last decade. A popular approach called

Fisherface (FLDA) [19] applies PCA first for dimensionality

reduction so as to make the within-class scatter matrix

nonsingular before the application of LDA. However,

applying PCA for dimensionality reduction may result in

the loss of discriminative information [20], [21], [22]. Direct

LDA (DLDA) method [23], [24] removes null space of the

between-class scatter matrix and extracts the eigenvectors

corresponding to the smallest eigenvalues of the within-class

scatter matrix. It is an open question of how to scale the

extracted features, as the smallest eigenvalues are very

sensitive to noise. The null space (NLDA) approaches [25],

[26], [22] assume that the null space contains the most

discriminative information. Interestingly, this appears to be

contradicting the popular FLDA that only uses the principal

space and discards the null space. A common problem of all

these approaches is that they all lose some discriminative

information, either in the principal or in the null space.

In fact, the discriminative information resides in both subspaces. To use both subspaces, a modified LDA approach [27] replaces the within-class scatter matrix by the total scatter matrix. Subsequent work [28] extracts features separately from the principal and null spaces of the within-class scatter matrix. However, the extracted features may not be properly scaled and undue emphasis is placed on the null space in these two approaches due to the replacement of the within-class scatter matrix by the total scatter matrix. The dual-space approach (DSL) [21] scales features in the complementary subspace by the average eigenvalue of the within-class scatter matrix over this subspace. As eigenvalues in this subspace are not well estimated [21], their average may not be a good scaling factor relative to those in the principal subspace. Features extracted from the two complementary subspaces are properly fused by using summed normalized distance [14]. Open questions of these approaches are how to divide the space into the principal and the complementary subspaces and how to apportion a given number of features to the two subspaces. Furthermore, as the discriminative information resides in both subspaces, it is inefficient or only suboptimal to extract features separately from the two subspaces.

IEEE TRANSACTIONS ON PATTERN ANALYSIS AND MACHINE INTELLIGENCE, VOL. 30, NO. 3, MARCH 2008 383

The authors are with the School of Electrical and Electronic Engineering, Nanyang Technological University, Nanyang Link, Singapore 639798. E-mail: {exdjiang, bapp0001, eackot}@ntu.edu.sg.

Manuscript received 27 June 2006; revised 6 Nov. 2006; accepted 15 May 2007; published online 31 May 2007.

Recommended for acceptance by T. Tan.

For information on obtaining reprints of this article, please send e-mail to: tpami@computer.org, and reference IEEECS Log Number TPAMI-0475-0606.

Digital Object Identifier no. 10.1109/TPAMI.2007.70708.

0162-8828/08/$25.00 © 2008 IEEE. Published by the IEEE Computer Society.

The above approaches focus on the problem of singularity

of the within-class scatter matrix. In fact, the instability and

noise disturbance of the small eigenvalues cause great

problems when the inverse of matrix is applied such as in

the Mahalanobis distance, in the BML estimation, and in the

whitening process of various LDA approaches. Problems of

the noise disturbance were addressed in [29], and a unified

framework of subspace methods (UFS) was proposed. Good

recognition performance of this framework shown in [29]

verifies the importance of noise suppression. However, this

approach applies three stages of subspace decompositions

sequentially on the face training data, and dimensionality

reduction occurs at the very first stage. As addressed in the

literature [20], [21], [22], applying PCA for dimensionality

reduction may result in the loss of discriminative information.

Another open question of UFS is how to choose the number of

principal dimensions for the first two stages of subspace

decompositions before selecting the final number of features

at the third stage. The experimental results in [29] show that

recognition performance is sensitive to these choices at

different stages.

In this paper, we present a new approach for facial eigenfeature regularization and extraction. Image space spanned by the eigenvectors of the within-class scatter matrix is decomposed into three subspaces. Eigenfeatures are regularized differently in these subspaces based on an eigenspectrum model. This alleviates the problem of unreliable small and zero eigenvalues caused by noise and the limited number of training samples. It also enables discriminant evaluation to be performed in the full dimension of the image data. Feature extraction or dimensionality reduction occurs only at the final stage after the discriminant assessment. In Section 2, we model the eigenspectrum, study the effect of the unreliable small eigenvalues on the feature weighting, and decompose the eigenspace into face, noise, and null subspaces. Eigenfeature regularization and extraction are presented in Section 3. Analysis of the proposed approach and comparison with other relevant methods are provided in Section 4. Experimental results are presented in Section 5 before drawing conclusions in Section 6.

2 EIGENSPECTRUM MODELING AND SUBSPACE DECOMPOSITION

Given a set of properly normalized w-by-h face images, we can form a training set of column image vectors $\{X_{ij}\}$, where $X_{ij} \in \mathbb{R}^{n}$ with $n = wh$, by lexicographic ordering of the pixel elements of image j of person i. Let the training set contain p persons and $q_i$ sample images for person i. The total number of training samples is $l = \sum_{i=1}^{p} q_i$. For face recognition, each person is a class with prior probability $c_i$. The within-class scatter matrix is defined by

$$S^w = \sum_{i=1}^{p} \frac{c_i}{q_i} \sum_{j=1}^{q_i} (X_{ij} - \bar{X}_i)(X_{ij} - \bar{X}_i)^T, \qquad (1)$$

where $\bar{X}_i = \frac{1}{q_i} \sum_{j=1}^{q_i} X_{ij}$. The between-class scatter matrix $S^b$ and the total (mixture) scatter matrix $S^t$ are defined by

$$S^b = \sum_{i=1}^{p} c_i (\bar{X}_i - \bar{X})(\bar{X}_i - \bar{X})^T, \qquad (2)$$

$$S^t = \sum_{i=1}^{p} \frac{c_i}{q_i} \sum_{j=1}^{q_i} (X_{ij} - \bar{X})(X_{ij} - \bar{X})^T, \qquad (3)$$

where $\bar{X} = \sum_{i=1}^{p} c_i \bar{X}_i$. If all classes have equal prior probability, then $c_i = 1/p$.
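Definitions (1) to (3) translate directly into a few lines of NumPy. The following is a minimal sketch assuming equal priors $c_i = 1/p$; the function name `scatter_matrices`, the row-per-image layout of `X`, and the toy data are assumptions for illustration only. It also checks the standard identity $S^t = S^w + S^b$, which follows from expanding (3) around the class means.

```python
import numpy as np

def scatter_matrices(X, labels):
    """Return (S_w, S_b, S_t) per Eqs. (1)-(3), assuming equal priors c_i = 1/p.

    X has one image vector per row; labels gives the person index of each row.
    """
    classes = np.unique(labels)
    p, n = len(classes), X.shape[1]
    c = 1.0 / p                                       # equal prior probability
    class_means = np.stack([X[labels == k].mean(axis=0) for k in classes])
    grand_mean = c * class_means.sum(axis=0)          # X_bar = sum_i c_i * X_bar_i
    S_w, S_b, S_t = (np.zeros((n, n)) for _ in range(3))
    for k, mean_k in zip(classes, class_means):
        Xk = X[labels == k]
        q = Xk.shape[0]                               # q_i samples for this person
        Dw = Xk - mean_k                              # deviations from class mean
        Dt = Xk - grand_mean                          # deviations from global mean
        db = (mean_k - grand_mean)[:, None]
        S_w += (c / q) * (Dw.T @ Dw)
        S_t += (c / q) * (Dt.T @ Dt)
        S_b += c * (db @ db.T)
    return S_w, S_b, S_t

# Hypothetical toy data: 3 persons, 4 images each, 6-dimensional vectors.
rng = np.random.default_rng(0)
X = rng.normal(size=(12, 6))
labels = np.repeat(np.arange(3), 4)
S_w, S_b, S_t = scatter_matrices(X, labels)
print(np.allclose(S_t, S_w + S_b))
```

For real face images n is typically far larger than the number of samples, so forming the full n-by-n matrices as done here is only practical for small examples.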

Let $S^g$, $g \in \{t, w, b\}$, represent one of the above scatter matrices. If we regard the elements of the image vector and the class mean vector as features, these preliminary features will be decorrelated by solving the eigenvalue problem

$$\Lambda^g = (\Phi^g)^T S^g \Phi^g, \qquad (4)$$

where $\Phi^g = [\phi_1^g, \ldots, \phi_n^g]$ is the eigenvector matrix of $S^g$, and $\Lambda^g$ is the diagonal matrix of eigenvalues $\lambda_1^g, \ldots, \lambda_n^g$ corresponding to the eigenvectors.

Suppose that the eigenvalues are sorted in descending order $\lambda_1^g \ge \cdots \ge \lambda_n^g$. The plot of eigenvalues $\lambda_k^g$ against the index k is called the eigenspectrum of the face training data. It plays a critical role in subspace methods as the eigenvalues are used to scale and extract features. We first model the eigenspectrum to show its problems in feature scaling and extraction.
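As an illustration of the eigenvalue problem (4), the sketch below computes an eigenspectrum in descending order with NumPy. The helper name `eigenspectrum` and the random test matrix are assumptions, not part of the paper; `np.linalg.eigh` is used because scatter matrices are symmetric.

```python
import numpy as np

def eigenspectrum(S):
    """Eigenvalues (descending) and matching eigenvectors of a symmetric matrix S."""
    eigvals, eigvecs = np.linalg.eigh(S)   # eigh returns eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1]      # reorder to descending
    return eigvals[order], eigvecs[:, order]

# Hypothetical symmetric positive semidefinite matrix standing in for a scatter matrix.
rng = np.random.default_rng(0)
A = rng.normal(size=(20, 8))
S = A.T @ A / 20

lam, Phi = eigenspectrum(S)
# Phi^T S Phi should be the diagonal matrix of eigenvalues, as in Eq. (4).
print(np.allclose(Phi.T @ S @ Phi, np.diag(lam)))
```

Plotting `lam` (or its square root) against the index gives the eigenspectrum discussed in the following subsection.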

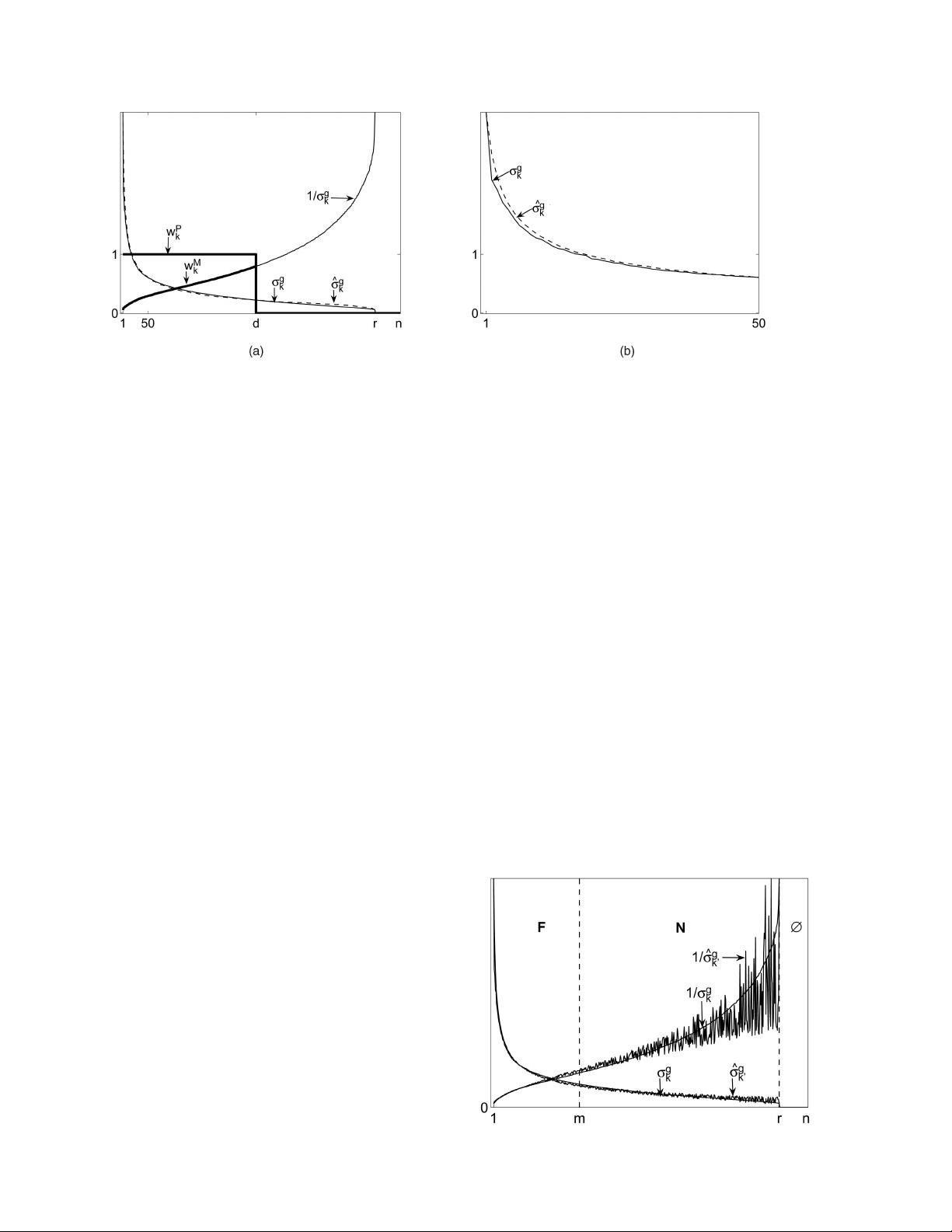

2.1 Eigenspectrum Modeling

If we regard $X_{ij}$ as samples of a random variable vector X, the eigenvalue $\lambda_k^g$ is a variance estimate of X projected on the eigenvector $\phi_k^g$ estimated from the training samples. It usually deviates from the true variance of the projected random vector X due to the finite number of training samples. Thus, we model the eigenspectrum in the range subspace $\lambda_k^g$, $1 \le k \le r$, as the sum of the true variance component $v_k^F$ and a deviation component $\delta_k$. For simplicity, we call $v_k^F$ the face component and $\delta_k$ the noise component. As the face component typically decays rapidly and stabilizes, we can model it by a function of the form 1/f that fits the decaying nature of the eigenspectrum well. The function form 1/f was used in [4] to extrapolate eigenvalues in the null space for computing the average eigenvalue over a subspace. The noise component $\delta_k$ that includes the effect of the finite number of training samples can be negative if the face component $v_k^F$ is modeled by a 1/f function that always has positive values.

We propose to model the eigenvalues first in descending order of the face component $v_k^F$ by

$$\hat{\lambda}_k^F = v_k^F + \delta_k = \frac{\alpha}{k + \beta} + \delta_k, \quad 1 \le k \le r, \qquad (5)$$

where $\alpha$ and $\beta$ are two constants that will be given in Section 3.1. The modeled eigenspectrum $\hat{\lambda}_k^g$ is then obtained by sorting $\hat{\lambda}_k^F$ in descending order. As the eigenspectrum decays very fast, we plot the square roots $\sigma_k^g = \sqrt{\lambda_k^g}$ and $\hat{\sigma}_k^g = \sqrt{\hat{\lambda}_k^g}$ for clearer illustration (we still call them eigenspectrum for simplicity). A typical real eigenspectrum $\sigma_k^g$ and its model $\hat{\sigma}_k^g$ are shown in Fig. 1, where $\delta_k$ is a random number evenly distributed in a small range of $[-\lambda_1^g/1000, \lambda_1^g/1000]$. In Fig. 1, we see that the proposed model closely approximates the real eigenspectrum.
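The model in (5) can be simulated in a few lines. This is a minimal sketch only: the values of `alpha`, `beta`, and `r` below are arbitrary placeholders (the paper defers the actual constants to Section 3.1), and the function name `modeled_eigenspectrum` is an assumption. The noise is drawn uniformly from a range equal to one thousandth of the largest face component, mirroring the small range described in the text.

```python
import numpy as np

def modeled_eigenspectrum(r, alpha, beta, noise_scale, seed=0):
    """Simulate Eq. (5): a 1/f face component plus small uniform noise.

    Returns the face component, the noisy values in face-component order,
    and the same values re-sorted in descending order.
    """
    rng = np.random.default_rng(seed)
    k = np.arange(1, r + 1)
    face = alpha / (k + beta)                          # v_k^F, strictly decreasing
    delta = rng.uniform(-noise_scale, noise_scale, r)  # delta_k, may be negative
    lam_F = face + delta                               # ordered by face component
    lam_g = np.sort(lam_F)[::-1]                       # modeled eigenspectrum
    return face, lam_F, lam_g

# Placeholder constants, chosen only so that the numbers are easy to read.
face, lam_F, lam_g = modeled_eigenspectrum(r=200, alpha=1000.0, beta=1.0,
                                           noise_scale=0.5)
# Noise is negligible next to the leading eigenvalue but not next to the last one.
print(0.5 / face[0], 0.5 / face[-1])
```

The two printed ratios show why the same absolute disturbance matters little at the head of the spectrum and much more in its tail.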

2.2 Problems of Feature Scaling and Extraction

The PCA approach with Euclidean distance selects d leading eigenvectors and discards the others. This can be seen as weighting the eigenfeatures with a step function as

$$u_k^d = \begin{cases} 1, & k \le d \\ 0, & k > d. \end{cases} \qquad (6)$$

This weighting function of PCA, $w_k^P = u_k^d$, is shown in Fig. 1.

In a practical face recognition problem, the recognition performance usually improves with the increase of d. The PCA with Mahalanobis (PCAM) distance can be seen as the PCA with Euclidean distance and a weighting function $w_k^M = u_k^d / \sigma_k^g$. This weighting function is shown in Fig. 1. Using the inverse of the square root of the eigenvalue to weight the eigenfeature makes a large difference in the face recognition performance. The recognition accuracy usually increases sharply with the increase of d and is better than the Euclidean distance for smaller d. However, the recognition accuracy also decreases sharply and is much worse than the Euclidean distance for larger d. The BML approach that weights the principal features $(1 \le k \le d)$ by the inverse of the square root of the eigenvalue also suffers from the performance decrease with the increase of d after d reaches a small value.

Noise disturbance and poor estimates of small eigenvalues due to the finite number of training samples are the culprits. The limited number of training samples results in very small eigenvalues in some dimensions that may not well represent the true variance in these dimensions. This may result in serious problems if their inverses are used to weight the eigenfeatures. The characteristics of the eigenspectrum and the generalization deterioration caused by the small eigenvalues were well addressed in [30]. To exclude the small eigenvalues in the discriminant evaluation, probabilistic reasoning models and enhanced FLDA models were proposed and compared in [30]. The modeled eigenspectrum in Fig. 1 that closely approximates the real one is re-sorted in descending order of the face component $v_k^F$ and is plotted in Fig. 2. The small noise disturbances are now visible in Fig. 2 given that the variances of the face component should always decay. These small disturbances cause large vibrations of the inverse eigenspectrum, as shown in Fig. 2. Eigenfeatures of larger index k are heavily weighted by the scaling factors that are highly sensitive to noise and training data. This causes the deterioration of the recognition performance, especially on the independent testing data.
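The sensitivity of the inverse weighting can be made concrete with a small numerical experiment. The sketch below is illustrative only: `pca_weights` and `pcam_weights` are hypothetical helper names, and the 1/f spectrum and the perturbation size are made-up values. It applies the same absolute disturbance to every eigenvalue and compares how much the PCAM weight moves at the first and last index.

```python
import numpy as np

def pca_weights(n, d):
    """Step weighting of Eq. (6): 1 for the d leading indices, 0 otherwise."""
    k = np.arange(1, n + 1)
    return (k <= d).astype(float)

def pcam_weights(eigvals, d):
    """PCAM weighting: step function divided by the square root of each eigenvalue."""
    sigma = np.sqrt(eigvals)
    keep = pca_weights(len(eigvals), d) > 0
    w = np.zeros_like(sigma)
    w[keep] = 1.0 / sigma[keep]
    return w

# Made-up 1/f spectrum and a fixed absolute disturbance applied to every eigenvalue.
k = np.arange(1, 101)
clean = 100.0 / (k + 1.0)
eps = 0.5
shift = np.abs(pcam_weights(clean - eps, d=100) - pcam_weights(clean, d=100))

# The identical disturbance moves the last weight far more than the first one.
print(shift[0], shift[-1])
```

This is the mechanism behind the accuracy drop for large d: the trailing features receive the largest weights, and those weights are the least reliable.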

2.3 Subspace Decomposition

As shown in Fig. 2, small noise disturbances that have little effect on the initial portion of the eigenspectrum cause large vibrations of the inverse eigenspectrum in the region of small eigenvalues. Therefore, we propose to decompose the eigenspace $\mathbb{R}^n$ spanned by eigenvectors $\{\phi_k^g\}_{k=1}^{n}$ into three subspaces: a reliable face variation dominating subspace (or simply face space) $F = \{\phi_k^g\}_{k=1}^{m}$, an unstable noise variation dominating subspace (or simply noise space) $N = \{\phi_k^g\}_{k=m+1}^{r}$, and a null space $\emptyset = \{\phi_k^g\}_{k=r+1}^{n}$, as illustrated in Fig. 2. The purpose of this decomposition is to modify or regularize the unreliable eigenvalues for better generalization.
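The three-way split can be sketched as a simple partition of the sorted eigenvectors. In the sketch below the boundary index `m` is passed in by hand, since this section does not say how it is chosen; the rank `r` is estimated with a relative tolerance, which is also an assumption, as is the function name `split_subspaces`.

```python
import numpy as np

def split_subspaces(eigvals, eigvecs, m, tol=1e-10):
    """Partition eigenvectors (sorted by descending eigenvalue) into face, noise, null.

    m is the assumed size of the face space; r is estimated as the number of
    eigenvalues above a relative tolerance.
    """
    r = int(np.sum(eigvals > tol * eigvals[0]))
    if not 0 < m <= r:
        raise ValueError("m must satisfy 0 < m <= r")
    face = eigvecs[:, :m]      # k = 1..m
    noise = eigvecs[:, m:r]    # k = m+1..r
    null = eigvecs[:, r:]      # k = r+1..n
    return face, noise, null

# Hypothetical rank-deficient scatter matrix: 5 samples in 12 dimensions.
rng = np.random.default_rng(0)
A = rng.normal(size=(5, 12))
S = A.T @ A
vals, vecs = np.linalg.eigh(S)
order = np.argsort(vals)[::-1]
vals, vecs = vals[order], vecs[:, order]

face, noise, null = split_subspaces(vals, vecs, m=2)
print(face.shape, noise.shape, null.shape)
```

The three blocks together still span the whole space, which is what allows discriminant evaluation to be carried out in the full dimension before any features are discarded.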


Fig. 1. A typical real eigenspectrum, (a) its model and feature weighting/extraction of PCA. The first 50 real eigenvalues and (b) the values of the model.

Fig. 2. A typical real eigenspectrum, its model sorted in descending order of the face component and their inverse; decomposition of the eigenspace into face, noise, and null-subspaces.
