
This is a thorough guide to the ways you can boost the performance of your DNN!


DNN

mission: get the best DNN

model: DNN

Ways to solve the vanishing or exploding gradients problem as values pass through the layers

Better Initialization - also speeds up training

Xavier/Glorot Initialization - when using the sigmoid function as the activation function

use a normal distribution with mean 0 and variance $\sigma^2 = \frac{1}{fan_{avg}}$

where $fan_{avg} = \frac{fan_{in} + fan_{out}}{2}$; $fan_{in}$ is the number of inputs of a layer, and similarly $fan_{out}$ is its number of outputs

or use a uniform distribution between -r and r, with $r = \sqrt{3\sigma^2}$

Other Useful Initializers

usually, use LeCun initialization with a normal distribution

Keras default: Glorot initialization with a uniform distribution
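The variance formulas above can be checked with a small NumPy sketch. The helper `variance_scaling` is hypothetical and only mirrors the idea behind Keras' `VarianceScaling`. It samples from a plain normal distribution, whereas Keras' `*_normal` initializers use a truncated normal. The He (σ² = 2/fan_in) and LeCun (σ² = 1/fan_in) settings in the docstring are the standard ones and are not spelled out in this guide.

```python
import numpy as np

def variance_scaling(fan_in, fan_out, scale=1.0, mode="fan_avg",
                     distribution="normal", rng=None):
    """Sample a (fan_in, fan_out) weight matrix whose variance is scale / fan.

    Glorot: scale=1, mode="fan_avg"; He: scale=2, mode="fan_in";
    LeCun: scale=1, mode="fan_in".
    """
    fans = {"fan_in": fan_in, "fan_out": fan_out,
            "fan_avg": (fan_in + fan_out) / 2}
    sigma2 = scale / fans[mode]            # target variance sigma^2
    rng = np.random.default_rng() if rng is None else rng
    if distribution == "normal":
        # Simplification: a plain normal; Keras' *_normal initializers truncate it.
        return rng.normal(0.0, np.sqrt(sigma2), size=(fan_in, fan_out))
    r = np.sqrt(3.0 * sigma2)              # U(-r, r) has variance r^2 / 3 = sigma^2
    return rng.uniform(-r, r, size=(fan_in, fan_out))

rng = np.random.default_rng(42)
W_glorot = variance_scaling(300, 100, distribution="uniform", rng=rng)
W_he = variance_scaling(300, 100, scale=2.0, mode="fan_in", rng=rng)
print(W_glorot.var(), 1 / 200)   # empirical vs. target Glorot variance
print(W_he.var(), 2 / 300)       # empirical vs. target He variance
```

The uniform version uses $r = \sqrt{3\sigma^2}$ so that both distributions have the same variance.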

'''from google.colab import drive

drive.mount('/content/drive')'''


Better Activation Functions - also speed up training

Though ReLU works well, it suffers from a problem known as dying ReLUs: during training, some neurons end up outputting only 0 and stop learning. To solve it, we use variants of ReLU.

Prevent Dying ReLUs

They are available as tf.keras.layers.LeakyReLU and tf.keras.layers.PReLU

Leaky ReLU - He Initialization

LeakyReLU_α(z)=max(αz,z) where α is the hyperparameter and α<1

α=0.3 by default
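Here is a minimal NumPy sketch of Leaky ReLU and its derivative. The function names are illustrative. The derivative is α rather than 0 for negative inputs, which is why a leaky neuron cannot "die".

```python
import numpy as np

def leaky_relu(z, alpha=0.3):
    """LeakyReLU_alpha(z) = max(alpha * z, z), valid for alpha < 1."""
    return np.maximum(alpha * z, z)

def leaky_relu_grad(z, alpha=0.3):
    """Derivative: 1 for z > 0, alpha for z <= 0 (never exactly 0)."""
    return np.where(z > 0, 1.0, alpha)

z = np.array([-2.0, -0.5, 0.0, 1.5])
print(leaky_relu(z).tolist())        # [-0.6, -0.15, 0.0, 1.5]
print(leaky_relu_grad(z).tolist())   # [0.3, 0.3, 0.3, 1.0]
```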


RReLU - He Initialization

randomized Leaky ReLU - α is picked randomly within a given range during training, and fixed to the average of that range at test time
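RReLU can be sketched in NumPy as follows. The sampling range [1/8, 1/3] is an assumption borrowed from PyTorch's `RReLU` defaults; this guide does not specify one.

```python
import numpy as np

def rrelu(z, lower=1/8, upper=1/3, training=False, rng=None):
    """Randomized leaky ReLU (bounds are assumed, PyTorch-style defaults).

    Training: alpha is drawn uniformly from [lower, upper) for each element.
    Testing: alpha is fixed to the mean (lower + upper) / 2.
    """
    if training:
        rng = np.random.default_rng() if rng is None else rng
        alpha = rng.uniform(lower, upper, size=np.shape(z))
    else:
        alpha = (lower + upper) / 2
    return np.where(z >= 0, z, alpha * z)

z = np.array([-4.0, 2.0])
print(rrelu(z))                                              # test mode: alpha = 11/48
print(rrelu(z, training=True, rng=np.random.default_rng(0)))  # random alpha per element
```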

PReLU (parametric Leaky ReLU) - α is learned during training; best for large image datasets

1. Tends to overfit small datasets.

2. Use He initialization.
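PReLU learns α by backpropagation. A toy NumPy sketch can show the gradient with respect to α and a few gradient-descent steps on it. The target α = 0.1, the learning rate and the squared-error loss are made up for illustration.

```python
import numpy as np

def prelu(z, alpha):
    """PReLU: like Leaky ReLU, but alpha is a learned parameter."""
    return np.where(z > 0, z, alpha * z)

def prelu_grads(z, alpha, upstream):
    """Gradients of sum(upstream * prelu(z, alpha)) w.r.t. z and alpha."""
    dz = upstream * np.where(z > 0, 1.0, alpha)
    dalpha = np.sum(upstream * np.where(z > 0, 0.0, z))
    return dz, dalpha

# Toy problem (made up): recover alpha = 0.1 from input/output pairs
rng = np.random.default_rng(0)
z = rng.standard_normal(1000)
target = prelu(z, 0.1)
alpha, lr = 0.3, 0.5
for step in range(100):
    y = prelu(z, alpha)
    upstream = 2 * (y - target) / z.size     # d(mean squared error) / dy
    _, dalpha = prelu_grads(z, alpha, upstream)
    alpha -= lr * dalpha                     # gradient descent step on alpha
print(alpha)  # converges close to 0.1
```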

```python
import tensorflow as tf
import numpy as np
import math as m

# He initialization (normal distribution, based on fan_in) for a ReLU layer
dense = tf.keras.layers.Dense(50, activation='relu', kernel_initializer='he_normal')

# you can customize the initializer with VarianceScaling;
# here: He-style scale (2.), uniform distribution, based on fan_avg instead of fan_in
he_uni_init = tf.keras.initializers.VarianceScaling(scale=2., mode='fan_avg', distribution='uniform')
dense = tf.keras.layers.Dense(50, activation='sigmoid', kernel_initializer=he_uni_init)

# Leaky ReLU (alpha=0.2) passed as the activation, with He initialization
dense = tf.keras.layers.Dense(50, activation=tf.keras.layers.LeakyReLU(alpha=0.2), kernel_initializer='he_normal')

# equivalently, when creating a model:
'''
tf.keras.layers.Dense(50, kernel_initializer='he_normal'),
tf.keras.layers.LeakyReLU(alpha=0.2),
'''
```


Smooth ReLUs

ReLU is not smooth at z = 0, and a non-smooth gradient can make the gradient descent steps bounce around the optimum. Here come the smooth variants of ReLU.

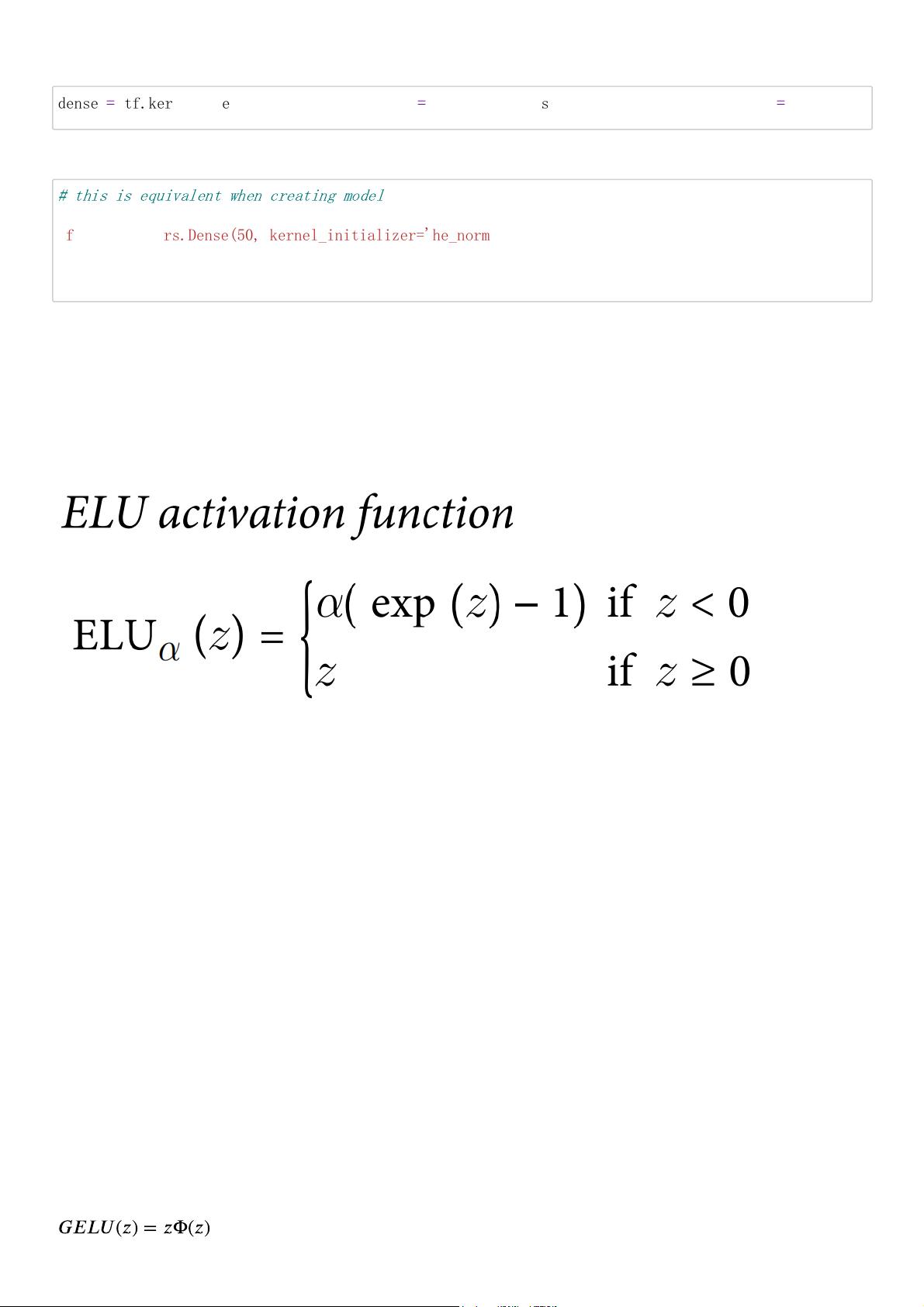

ELU

activation='elu'

alpha=1 by default

use He initialization

slower to compute than ReLU because of the exponential, so the network is a bit slower at test time
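Here is an ELU sketch in NumPy. It uses the standard definition ELU_α(z) = α(exp(z) − 1) for z < 0 and z otherwise, which this guide does not spell out. With α = 1, the derivative is 1 on both sides of 0, which is the smoothness the section refers to.

```python
import numpy as np

def elu(z, alpha=1.0):
    """ELU_alpha(z) = alpha * (exp(z) - 1) if z < 0 else z."""
    # np.minimum keeps exp from overflowing on the discarded positive branch
    return np.where(z < 0, alpha * (np.exp(np.minimum(z, 0.0)) - 1.0), z)

def elu_grad(z, alpha=1.0):
    """Derivative: alpha * exp(z) if z < 0 else 1 (continuous at 0 when alpha = 1)."""
    return np.where(z < 0, alpha * np.exp(np.minimum(z, 0.0)), 1.0)

print(elu(np.array([-3.0, -1.0, 0.0, 2.0])))   # negative side saturates toward -alpha
print(elu_grad(np.array([-1e-8, 1e-8])))        # slopes just left and right of 0
```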

SELU

activation='selu'

self-normalization conditions

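1. standardized input features (mean 0, standard deviation 1)

2. use LeCun to initialize hidden layers - kernel_initializer='lecun_normal'

3. a plain sequential MLP; self-normalization is not guaranteed in other architectures (e.g. with skip connections or recurrent layers), so use ELU there

4. no regularization (e.g. ℓ1 or ℓ2, max-norm, batch normalization, or regular dropout)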
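A rough NumPy experiment can show the self-normalizing effect. It is adapted from a common demonstration rather than from this guide. With standardized inputs, LeCun-style initialization and a plain stack of dense layers, the activations should stay with a mean roughly around 0 and a standard deviation roughly around 1, even after 100 layers. The SELU constants are the published ones, which Keras' `'selu'` also uses. The batch size, width and depth are arbitrary choices.

```python
import numpy as np

# SELU constants from the SELU paper (also used by Keras' 'selu')
ALPHA = 1.6732632423543772
SCALE = 1.0507009873554805

def selu(z):
    return SCALE * np.where(z < 0, ALPHA * (np.exp(np.minimum(z, 0.0)) - 1.0), z)

rng = np.random.default_rng(42)
Z = rng.standard_normal((500, 100))          # condition 1: standardized inputs
for layer in range(100):
    # condition 2: LeCun initialization, variance 1 / fan_in (plain normal here)
    W = rng.normal(0.0, np.sqrt(1 / 100), size=(100, 100))
    Z = selu(Z @ W)                           # condition 3: plain sequential dense stack
    if layer % 20 == 0:
        print(f"layer {layer:3d}: mean {Z.mean():+.2f}, std {Z.std():.2f}")
```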

```python
# PReLU (see the PReLU notes above), with He initialization
dense = tf.keras.layers.Dense(50, activation=tf.keras.layers.PReLU(), kernel_initializer='he_normal')

# equivalently, when creating a model:
'''
tf.keras.layers.Dense(50, kernel_initializer='he_normal'),
tf.keras.layers.PReLU(),
'''
```

Other Activation Functions that Outperform SELU

GELU

$\mathrm{GELU}(z) = z\,\Phi(z)$, where Φ(z) is the CDF of the standard Gaussian distribution

outperforms the activation functions mentioned before, but it is computationally intensive

$\mathrm{GELU}(z) \approx z\,\sigma(1.702z)$ gives similar performance in less time
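The exact GELU and its sigmoid approximation can be compared numerically. The NumPy sketch below uses `math.erf` for the Gaussian CDF and the identity σ(x) = 0.5·(1 + tanh(x/2)) for the sigmoid.

```python
import math
import numpy as np

_erf = np.vectorize(math.erf)   # elementwise error function

def gelu(z):
    """Exact GELU(z) = z * Phi(z), with Phi(z) = 0.5 * (1 + erf(z / sqrt(2)))."""
    return z * 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))

def gelu_sigmoid_approx(z):
    """Cheaper approximation z * sigma(1.702 z), written with tanh for stability."""
    return z * 0.5 * (1.0 + np.tanh(0.851 * z))   # 0.851 = 1.702 / 2

z = np.linspace(-6.0, 6.0, 1201)
max_err = np.abs(gelu(z) - gelu_sigmoid_approx(z)).max()
print(f"max |GELU - approx| on [-6, 6]: {max_err:.4f}")  # about 0.02
```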

Swish

$\mathrm{Swish}_\beta(z) = z\,\sigma(\beta z)$

β is a hyperparameter, or a trainable parameter

Remark: for β=1, set activation='swish'

Mish

$\mathrm{Mish}(z) = z\tanh(\mathrm{softplus}(z))$, where $\mathrm{softplus}(z) = \log(1+\exp(z))$
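Swish and Mish can be sketched in NumPy. The sigmoid is computed as 0.5·(1 + tanh(x/2)) and softplus as `np.logaddexp(0, z)`. Both are exact identities, chosen here only to avoid overflow for large |z|.

```python
import numpy as np

def sigmoid(x):
    # numerically stable form of 1 / (1 + exp(-x))
    return 0.5 * (1.0 + np.tanh(0.5 * x))

def swish(z, beta=1.0):
    """Swish_beta(z) = z * sigma(beta * z); beta = 1 matches Keras' 'swish'."""
    return z * sigmoid(beta * z)

def softplus(z):
    # log(1 + exp(z)), computed stably
    return np.logaddexp(0.0, z)

def mish(z):
    """Mish(z) = z * tanh(softplus(z))."""
    return z * np.tanh(softplus(z))

z = np.array([-3.0, -1.0, 0.0, 1.0, 3.0])
print(swish(z))              # beta = 1
print(swish(z, beta=10.0))   # larger beta: closer to ReLU
print(mish(z))
```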

How to choose an activation function?

1. simple tasks - ReLU

2. complex tasks, when training time is not a concern - Swish with a learnable β

3. complex tasks where running time matters - Leaky ReLU, or parametric Leaky ReLU (PReLU) with a learnable α

4. deep MLP - try SELU, making sure the self-normalization conditions are satisfied

If time permits, use cross-validation to try other activation functions.

Stabilize gradients

Batch Normalization - also speeds up training

Steps:

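1. per layer - compute the mean μ and the modified standard deviation $\sqrt{\sigma^2 + \varepsilon}$ of the inputs over each mini-batch

2. zero-center and normalize each input with these statistics, then scale and shift it - the scale γ and the shift β are learned

3. these outputs are the transformed values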
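The steps above correspond to the standard batch normalization equations. They are written here for a mini-batch B of $m_B$ instances $\mathbf{x}^{(i)}$; this notation is introduced for the formulas and is not from the guide. All operations are element-wise, per input feature.

```latex
\begin{aligned}
\boldsymbol{\mu}_B &= \frac{1}{m_B}\sum_{i=1}^{m_B}\mathbf{x}^{(i)} \\
\boldsymbol{\sigma}_B^2 &= \frac{1}{m_B}\sum_{i=1}^{m_B}\left(\mathbf{x}^{(i)}-\boldsymbol{\mu}_B\right)^2 \\
\hat{\mathbf{x}}^{(i)} &= \frac{\mathbf{x}^{(i)}-\boldsymbol{\mu}_B}{\sqrt{\boldsymbol{\sigma}_B^2+\varepsilon}} \\
\mathbf{z}^{(i)} &= \boldsymbol{\gamma}\otimes\hat{\mathbf{x}}^{(i)}+\boldsymbol{\beta}
\end{aligned}
```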

where ε is the smoothing term, a tiny number that avoids division by zero.

Add this operation just before or after the activation function of each hidden layer.
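The training-time forward pass of BN fits in a few lines of NumPy. This sketch handles only a 2-D batch of shape (batch size, features), and the function name is hypothetical. The value `eps=1e-3` matches Keras' default `epsilon`.

```python
import numpy as np

def batch_norm_train(X, gamma, beta, eps=1e-3):
    """Training-mode BN over a mini-batch X of shape (m_B, n_features)."""
    mu = X.mean(axis=0)                      # per-feature batch mean
    var = X.var(axis=0)                      # per-feature batch variance
    X_hat = (X - mu) / np.sqrt(var + eps)    # normalize with the modified std
    return gamma * X_hat + beta, mu, var     # scale and shift (gamma, beta are learned)

rng = np.random.default_rng(0)
X = rng.normal(5.0, 3.0, size=(64, 4))
Z, mu, var = batch_norm_train(X, gamma=np.full(4, 2.0), beta=np.full(4, 1.0))
print(Z.mean(axis=0), Z.std(axis=0))         # about beta = 1 and gamma = 2 per feature
```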

At test time we make predictions for individual instances rather than batches (and a batch may be too small or not i.i.d.), so the batch statistics are unreliable. We can

1. wait until training is over, then run the whole training set through the network and compute the means and standard deviations of each BN layer's inputs

2. (recommended) use moving averages of the means and standard deviations computed during training (this is what Keras does when we use the BatchNormalization class)

For robustness, we usually compute these means and standard deviations as exponential moving averages, updated batch by batch:

$\mu_{\text{running}} \leftarrow \text{momentum} \times \mu_{\text{running}} + (1 - \text{momentum}) \times \mu_{\text{batch}}$

$\sigma_{\text{running}} \leftarrow \text{momentum} \times \sigma_{\text{running}} + (1 - \text{momentum}) \times \sigma_{\text{batch}}$

where momentum is usually set close to 1, e.g., 0.9 or 0.99
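The moving averages above, and their use at test time, can be sketched in NumPy. The sketch follows the σ form used in this guide, although Keras' BatchNormalization actually tracks a moving variance rather than a moving standard deviation. The class name and the initial values (mean 0, std 1) are assumptions.

```python
import numpy as np

class RunningStats:
    """Exponential moving averages of the batch mean and std (hypothetical helper)."""

    def __init__(self, n_features, momentum=0.99):
        self.momentum = momentum
        self.mu = np.zeros(n_features)       # assumed initial mean
        self.sigma = np.ones(n_features)     # assumed initial std

    def update(self, X_batch):
        """Training time: blend in the current batch statistics."""
        m = self.momentum
        self.mu = m * self.mu + (1 - m) * X_batch.mean(axis=0)
        self.sigma = m * self.sigma + (1 - m) * X_batch.std(axis=0)

    def normalize(self, x, eps=1e-3):
        """Test time: use the running statistics instead of the batch's."""
        return (x - self.mu) / np.sqrt(self.sigma ** 2 + eps)

rng = np.random.default_rng(0)
stats = RunningStats(3, momentum=0.99)
for _ in range(1000):                        # 1000 simulated training batches
    stats.update(rng.normal(5.0, 2.0, size=(64, 3)))
print(stats.mu, stats.sigma)                 # roughly 5 and 2
```

With momentum close to 1, each batch only nudges the estimates, so a single unusual batch has little effect.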


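μ and σ are estimated by the moving averages above (batch by batch), whereas γ and β are learned by gradient descent (e.g. SGD) with backpropagation.

It considerably improved the performance of DNNs, notably on the ImageNet classification task.

BN gives more freedom in the choice of activation function (even saturating ones such as sigmoid become usable) and of weight initialization, and it greatly reduces the vanishing and exploding gradients problems.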

```python
BNlayer = tf.keras.layers.BatchNormalization()
```
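Here is a minimal sketch of BN inside a Keras model. The 28×28 input shape, the layer sizes and the choice to put BN before the activation are illustrative assumptions. When BN comes right before the activation, the preceding Dense layer's bias can be dropped (`use_bias=False`), because BN's β shift plays the same role.

```python
import numpy as np
import tensorflow as tf

model = tf.keras.Sequential([
    tf.keras.Input(shape=(28, 28)),
    tf.keras.layers.Flatten(),
    tf.keras.layers.BatchNormalization(),             # BN on the flattened inputs
    tf.keras.layers.Dense(100, kernel_initializer="he_normal", use_bias=False),
    tf.keras.layers.BatchNormalization(),             # BN before the activation
    tf.keras.layers.Activation("relu"),
    tf.keras.layers.Dense(10, activation="softmax"),
])

bn = next(layer for layer in model.layers
          if isinstance(layer, tf.keras.layers.BatchNormalization))
# gamma and beta are trained by backprop; the moving mean and variance are not
print(len(bn.trainable_weights), len(bn.non_trainable_weights))   # 2 2
```

Each BN layer holds four vectors per input feature: the trainable γ and β, plus the non-trainable moving mean and moving variance, which are updated during training and used at test time.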


Uploaded by: AI是这个时代的魔法
