Interpretable Machine Learning by Christoph Molnar .pdf

255 pages

Interpretable Machine Learning

A Guide for Making Black Box Models Explainable.

Christoph Molnar

2021-03-12

Summary

Machine learning has great potential for improving products, processes and research. But computers usually do not explain their predictions, which is a barrier to the adoption of machine learning. This book is about making machine learning models and their decisions interpretable.

After exploring the concepts of interpretability, you will learn about simple, interpretable models such as decision trees, decision rules and linear regression. Later chapters focus on general model-agnostic methods for interpreting black box models, such as feature importance and accumulated local effects, and on explaining individual predictions with Shapley values and LIME.

All interpretation methods are explained in depth and discussed critically. How do they work under the hood? What are their strengths and weaknesses? How can their outputs be interpreted? This book will enable you to select and correctly apply the interpretation method that is most suitable for your machine learning project.

The book focuses on machine learning models for tabular data (also called relational or structured data) and less on computer vision and natural language processing tasks. Reading the book is recommended for machine learning practitioners, data scientists, statisticians, and anyone else interested in making machine learning models interpretable.

You can buy the PDF and e-book version (epub, mobi) on leanpub.com.

You can buy the print version on lulu.com.

About me: My name is Christoph Molnar, I'm a statistician and a machine learner. My goal is to make machine learning interpretable.

Mail: christoph.molnar.ai@gmail.com

Website: https://christophm.github.io/

Follow me on Twitter! @ChristophMolnar

Cover by @YvonneDoinel

Creative Commons License

This book is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

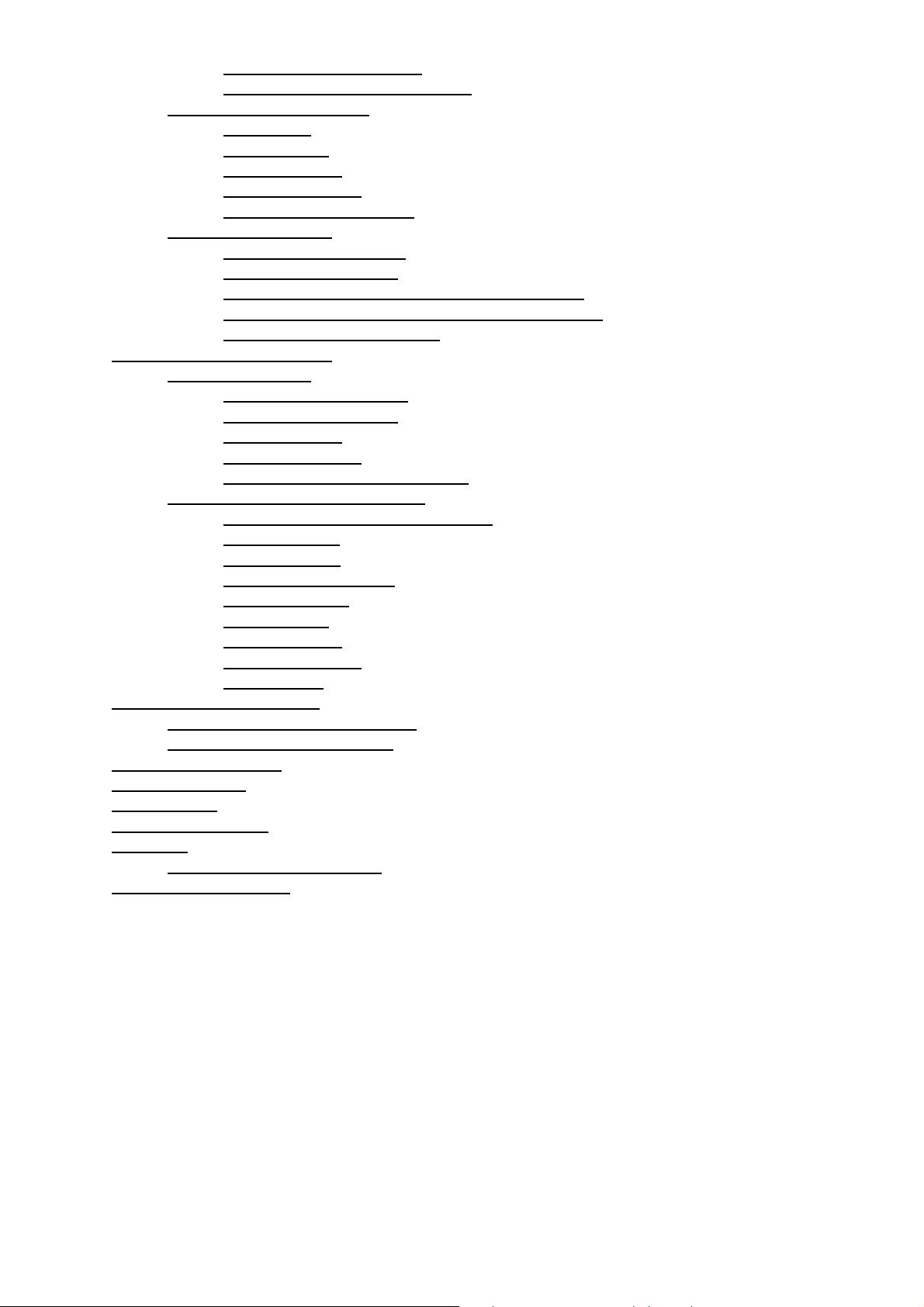

Interpretable machine learning

- Summary
- Preface by the Author
- 1 Introduction
  - 1.1 Story Time
    - Lightning Never Strikes Twice
    - Trust Fall
    - Fermi's Paperclips
  - 1.2 What Is Machine Learning?
  - 1.3 Terminology
- 2 Interpretability
  - 2.1 Importance of Interpretability
  - 2.2 Taxonomy of Interpretability Methods
  - 2.3 Scope of Interpretability
    - 2.3.1 Algorithm Transparency
    - 2.3.2 Global, Holistic Model Interpretability
    - 2.3.3 Global Model Interpretability on a Modular Level
    - 2.3.4 Local Interpretability for a Single Prediction
    - 2.3.5 Local Interpretability for a Group of Predictions
  - 2.4 Evaluation of Interpretability
  - 2.5 Properties of Explanations
  - 2.6 Human-friendly Explanations
    - 2.6.1 What Is an Explanation?
    - 2.6.2 What Is a Good Explanation?
- 3 Datasets
  - 3.1 Bike Rentals (Regression)
  - 3.2 YouTube Spam Comments (Text Classification)
  - 3.3 Risk Factors for Cervical Cancer (Classification)
- 4 Interpretable Models
  - 4.1 Linear Regression
    - 4.1.1 Interpretation
    - 4.1.2 Example
    - 4.1.3 Visual Interpretation
    - 4.1.4 Explain Individual Predictions
    - 4.1.5 Encoding of Categorical Features
    - 4.1.6 Do Linear Models Create Good Explanations?
    - 4.1.7 Sparse Linear Models
    - 4.1.8 Advantages
    - 4.1.9 Disadvantages
  - 4.2 Logistic Regression
    - 4.2.1 What is Wrong with Linear Regression for Classification?
    - 4.2.2 Theory
    - 4.2.3 Interpretation
    - 4.2.4 Example
    - 4.2.5 Advantages and Disadvantages
    - 4.2.6 Software
  - 4.3 GLM, GAM and more
    - 4.3.1 Non-Gaussian Outcomes - GLMs
    - 4.3.2 Interactions
    - 4.3.3 Nonlinear Effects - GAMs
    - 4.3.4 Advantages
    - 4.3.5 Disadvantages
    - 4.3.6 Software
    - 4.3.7 Further Extensions
  - 4.4 Decision Tree
    - 4.4.1 Interpretation
    - 4.4.2 Example
    - 4.4.3 Advantages
    - 4.4.4 Disadvantages
    - 4.4.5 Software
  - 4.5 Decision Rules
    - 4.5.1 Learn Rules from a Single Feature (OneR)
    - 4.5.2 Sequential Covering
    - 4.5.3 Bayesian Rule Lists
    - 4.5.4 Advantages
    - 4.5.5 Disadvantages
    - 4.5.6 Software and Alternatives
  - 4.6 RuleFit
    - 4.6.1 Interpretation and Example
    - 4.6.2 Theory
    - 4.6.3 Advantages
    - 4.6.4 Disadvantages
    - 4.6.5 Software and Alternative
  - 4.7 Other Interpretable Models
    - 4.7.1 Naive Bayes Classifier
    - 4.7.2 K-Nearest Neighbors
- 5 Model-Agnostic Methods
  - 5.1 Partial Dependence Plot (PDP)
    - 5.1.1 Examples
    - 5.1.2 Advantages
    - 5.1.3 Disadvantages
    - 5.1.4 Software and Alternatives
  - 5.2 Individual Conditional Expectation (ICE)
    - 5.2.1 Examples
    - 5.2.2 Advantages
    - 5.2.3 Disadvantages
    - 5.2.4 Software and Alternatives
  - 5.3 Accumulated Local Effects (ALE) Plot
    - 5.3.1 Motivation and Intuition
    - 5.3.2 Theory
    - 5.3.3 Estimation
    - 5.3.4 Examples
    - 5.3.5 Advantages
    - 5.3.6 Disadvantages
    - 5.3.7 Implementation and Alternatives
  - 5.4 Feature Interaction
    - 5.4.1 Feature Interaction?
    - 5.4.2 Theory: Friedman's H-statistic
    - 5.4.3 Examples
    - 5.4.4 Advantages
    - 5.4.5 Disadvantages
    - 5.4.6 Implementations
    - 5.4.7 Alternatives
  - 5.5 Permutation Feature Importance
    - 5.5.1 Theory
    - 5.5.2 Should I Compute Importance on Training or Test Data?
    - 5.5.3 Example and Interpretation
    - 5.5.4 Advantages
    - 5.5.5 Disadvantages
    - 5.5.6 Software and Alternatives
  - 5.6 Global Surrogate
    - 5.6.1 Theory
    - 5.6.2 Example
    - 5.6.3 Advantages
    - 5.6.4 Disadvantages
    - 5.6.5 Software
  - 5.7 Local Surrogate (LIME)
    - 5.7.1 LIME for Tabular Data
    - 5.7.2 LIME for Text
    - 5.7.3 LIME for Images
    - 5.7.4 Advantages
    - 5.7.5 Disadvantages
  - 5.8 Scoped Rules (Anchors)
    - 5.8.1 Finding Anchors
    - 5.8.2 Complexity and Runtime
    - 5.8.3 Tabular Data Example
    - 5.8.4 Advantages
    - 5.8.5 Disadvantages
    - 5.8.6 Software and Alternatives
  - 5.9 Shapley Values
    - 5.9.1 General Idea
    - 5.9.2 Examples and Interpretation
    - 5.9.3 The Shapley Value in Detail
    - 5.9.4 Advantages
    - 5.9.5 Disadvantages
    - 5.9.6 Software and Alternatives
  - 5.10 SHAP (SHapley Additive exPlanations)
    - 5.10.1 Definition
    - 5.10.2 KernelSHAP
    - 5.10.3 TreeSHAP
    - 5.10.4 Examples
    - 5.10.5 SHAP Feature Importance
    - 5.10.6 SHAP Summary Plot
    - 5.10.7 SHAP Dependence Plot
    - 5.10.8 SHAP Interaction Values
    - 5.10.9 Clustering SHAP values
    - 5.10.10 Advantages
    - 5.10.11 Disadvantages
    - 5.10.12 Software
- 6 Example-Based Explanations
  - 6.1 Counterfactual Explanations
    - 6.1.1 Generating Counterfactual Explanations
    - 6.1.2 Example
    - 6.1.3 Advantages
    - 6.1.4 Disadvantages
    - 6.1.5 Software and Alternatives
  - 6.2 Adversarial Examples
    - 6.2.1 Methods and Examples
    - 6.2.2 The Cybersecurity Perspective
  - 6.3 Prototypes and Criticisms
    - 6.3.1 Theory
    - 6.3.2 Examples
    - 6.3.3 Advantages
    - 6.3.4 Disadvantages
    - 6.3.5 Code and Alternatives
  - 6.4 Influential Instances
    - 6.4.1 Deletion Diagnostics
    - 6.4.2 Influence Functions
    - 6.4.3 Advantages of Identifying Influential Instances
    - 6.4.4 Disadvantages of Identifying Influential Instances
    - 6.4.5 Software and Alternatives
- 7 Neural Network Interpretation
  - 7.1 Learned Features
    - 7.1.1 Feature Visualization
    - 7.1.2 Network Dissection
    - 7.1.3 Advantages
    - 7.1.4 Disadvantages
    - 7.1.5 Software and Further Material
  - 7.2 Pixel Attribution (Saliency Maps)
    - 7.2.1 Vanilla Gradient (Saliency Maps)
    - 7.2.2 DeconvNet
    - 7.2.3 Grad-CAM
    - 7.2.4 Guided Grad-CAM
    - 7.2.5 SmoothGrad
    - 7.2.6 Examples
    - 7.2.7 Advantages
    - 7.2.8 Disadvantages
    - 7.2.9 Software
- 8 A Look into the Crystal Ball
  - 8.1 The Future of Machine Learning
  - 8.2 The Future of Interpretability
- 9 Contribute to the Book
- 10 Citing this Book
- 11 Translations
- 12 Acknowledgements
- References
  - R Packages Used for Examples

Published with bookdown
