Machine Learning Operations (MLOps):

Overview, Definition, and Architecture

Dominik Kreuzberger

KIT

Germany

dominik.kreuzberger@alumni.kit.edu

Niklas Kühl

KIT

Germany

kuehl@kit.edu

Sebastian Hirschl

IBM†

Germany

sebastian.hirschl@de.ibm.com

ABSTRACT

The final goal of all industrial machine learning (ML) projects is to

develop ML products and rapidly bring them into production.

However, it is highly challenging to automate and operationalize

ML products and thus many ML endeavors fail to deliver on their

expectations. The paradigm of Machine Learning Operations

(MLOps) addresses this issue. MLOps includes several aspects,

such as best practices, sets of concepts, and development culture.

However, MLOps is still a vague term and its consequences for

researchers and professionals are ambiguous. To address this gap,

we conduct mixed-method research, including a literature review,

a tool review, and expert interviews. As a result of these

investigations, we provide an aggregated overview of the necessary

principles, components, and roles, as well as the associated

architecture and workflows. Furthermore, we furnish a definition

of MLOps and highlight open challenges in the field. Finally, this

work provides guidance for ML researchers and practitioners who

want to automate and operate their ML products with a designated

set of technologies.

KEYWORDS

CI/CD, DevOps, Machine Learning, MLOps, Operations,

Workflow Orchestration

1 Introduction

Machine Learning (ML) has become an important technique to

leverage the potential of data and allows businesses to be more

innovative [1], efficient [13], and sustainable [22]. However, the

success of many productive ML applications in real-world settings

falls short of expectations [21]. A large number of ML projects

fail—with many ML proofs of concept never progressing as far as

production [30]. From a research perspective, this does not come as

a surprise as the ML community has focused extensively on the

building of ML models, but not on (a) building production-ready

ML products and (b) providing the necessary coordination of the

resulting, often complex ML system components and infrastructure,

including the roles required to automate and operate an ML system

in a real-world setting [35]. For instance, in many industrial

applications, data scientists still manage ML workflows manually

to a great extent, resulting in many issues during the operations of

the respective ML solution [26].

† This paper does not represent an official IBM statement.

To address these issues, the goal of this work is to examine how

manual ML processes can be automated and operationalized so that

more ML proofs of concept can be brought into production. In this

work, we explore the emerging ML engineering practice “Machine

Learning Operations”—MLOps for short—precisely addressing

the issue of designing and maintaining productive ML. We take a

holistic perspective to gain a common understanding of the

involved components, principles, roles, and architectures. While

existing research sheds some light on various specific aspects of

MLOps, a holistic conceptualization, generalization, and

clarification of ML systems design are still missing. Different

perspectives and conceptions of the term “MLOps” might lead to

misunderstandings and miscommunication, which, in turn, can lead

to errors in the overall setup of the entire ML system. Thus, we ask

the research question:

RQ: What is MLOps?

To answer that question, we conduct a mixed-method research

endeavor to (a) identify important principles of MLOps, (b) carve

out functional core components, (c) highlight the roles necessary to

successfully implement MLOps, and (d) derive a general

architecture for ML systems design. In combination, these insights

result in a definition of MLOps, which contributes to a common

understanding of the term and related concepts.

In so doing, we hope to positively impact academic and

practical discussions by providing professionals and researchers

alike with clear guidelines and precise responsibilities.

These insights can assist in allowing more proofs of concept to

make it into production by having fewer errors in the system’s

design and, finally, enabling more robust predictions in real-world

environments.

The remainder of this work is structured as follows. We will first

elaborate on the necessary foundations and related work in the field.

Next, we will give an overview of the utilized methodology,

consisting of a literature review, a tool review, and an interview

study. We then present the insights derived from the application of

the methodology and conceptualize these by providing a unifying

definition. We conclude the paper with a short summary,

limitations, and outlook.

2 Foundations of DevOps

In the past, different software process models and development

methodologies surfaced in the field of software engineering.

Prominent examples include waterfall [37] and the agile manifesto

[5]. Those methodologies have similar aims, namely to deliver

production-ready software products. A concept called “DevOps”

emerged in the years 2008/2009 and aims to reduce issues in

software development [9,31]. DevOps is more than a pure

methodology and rather represents a paradigm addressing social

and technical issues in organizations engaged in software

development. It has the goal of eliminating the gap between

development and operations and emphasizes collaboration,

communication, and knowledge sharing. It ensures automation

with continuous integration, continuous delivery, and continuous

deployment (CI/CD), thus allowing for fast, frequent, and reliable

releases. Moreover, it is designed to ensure continuous testing,

quality assurance, continuous monitoring, logging, and feedback

loops. Due to the commercialization of DevOps, many DevOps

tools are emerging, which can be differentiated into six groups

[23,28]: collaboration and knowledge sharing (e.g., Slack, Trello,

GitLab wiki), source code management (e.g., GitHub, GitLab),

build process (e.g., Maven), continuous integration (e.g., Jenkins,

GitLab CI), deployment automation (e.g., Kubernetes, Docker),

and monitoring and logging (e.g., Prometheus, Logstash). Cloud

environments are increasingly equipped with ready-to-use DevOps

tooling that is designed for cloud use, facilitating the efficient

generation of value [38]. With this novel shift towards DevOps,

developers need to care about what they develop, as they need to

operate it as well. As empirical results demonstrate, DevOps

ensures better software quality [34]. People in the industry, as well

as academics, have gained a wealth of experience in software

engineering using DevOps. This experience is now being used to

automate and operationalize ML.

3 Methodology

To derive insights from the academic knowledge base while

also drawing upon the expertise of practitioners from the field, we

apply a mixed-method approach, as depicted in Figure 1. As a first

step, we conduct a structured literature review [20,43] to obtain an

overview of relevant research. Furthermore, we review relevant

tooling support in the field of MLOps to gain a better understanding

of the technical components involved. Finally, we conduct semi-

structured interviews [33,39] with experts from different domains.

On that basis, we conceptualize the term “MLOps” and elaborate

on our findings by synthesizing literature and interviews in the next

chapter (“Results”).

3.1 Literature Review

To ensure that our results are based on scientific knowledge, we

conduct a systematic literature review according to the method of

Webster and Watson [43] and Kitchenham et al. [20]. After an

initial exploratory search, we define our search query as follows:

((("DevOps" OR "CICD" OR "Continuous Integration" OR

"Continuous Delivery" OR "Continuous Deployment") AND

"Machine Learning") OR "MLOps" OR "CD4ML"). We query the

scientific databases of Google Scholar, Web of Science, Science

Direct, Scopus, and the Association for Information Systems

eLibrary. It should be mentioned that the use of DevOps for ML,

MLOps, and continuous practices in combination with ML is a

relatively new field in academic literature. Thus, only a few peer-

reviewed studies are available at the time of this research.

Nevertheless, to gain experience in this area, the search included

non-peer-reviewed literature as well. The search was performed in

May 2021 and resulted in 1,864 retrieved articles. Of those, we

screened 194 papers in detail. From that group, 27 articles were

selected based on our inclusion and exclusion criteria (e.g., the term

MLOps or DevOps and CI/CD in combination with ML was

described in detail, the article was written in English, etc.). All 27

of these articles were peer-reviewed.
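The Boolean structure of the search query can be made explicit with a short sketch. The function name `matches_query` and the plain substring matching are assumptions for illustration only; each database applies its own tokenization and field rules, so this shows the logic of the query, not how any database evaluates it.

```python
# Terms from the first OR group of the search query (lower-cased for matching).
DEVOPS_TERMS = [
    "devops",
    "cicd",
    "continuous integration",
    "continuous delivery",
    "continuous deployment",
]


def matches_query(text: str) -> bool:
    """Return True if the text satisfies the Boolean search query.

    Hypothetical helper: uses simple case-insensitive substring matching.
    """
    t = text.lower()
    # (DevOps-related term) AND "Machine Learning"
    devops_and_ml = any(term in t for term in DEVOPS_TERMS) and "machine learning" in t
    # ... OR "MLOps" OR "CD4ML"
    return devops_and_ml or "mlops" in t or "cd4ml" in t
```

A title that mentions only a DevOps term without "Machine Learning" does not match, whereas any mention of "MLOps" or "CD4ML" matches on its own.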

3.2 Tool Review

After going through 27 articles and eight interviews, various

open-source tools, frameworks, and commercial cloud ML services

were identified. These tools, frameworks, and ML services were

reviewed to gain an understanding of the technical components of

which they consist. An overview of the identified tools is depicted

in Table 1 of the Appendix.

3.3 Interview Study

To answer the research question with insights from practice,

we conduct semi-structured expert interviews according to Myers

and Newman [33]. One major aspect in the research design of

expert interviews is choosing an appropriate sample size [8]. We

apply a theoretical sampling approach [12], which allows us to

choose experienced interview partners to obtain high-quality data.

Such data can provide meaningful insights with a limited number

of interviews. To get an adequate sample group and reliable

insights, we use LinkedIn—a social network for professionals—to

identify experienced ML professionals with profound MLOps

knowledge on a global level. To gain insights from various

perspectives, we choose interview partners from different

organizations and industries, different countries and nationalities,

as well as different genders. Interviews are conducted until no new

categories and concepts emerge in the analysis of the data. In total,

we conduct eight interviews with experts (α - θ), whose details are

depicted in Table 2 of the Appendix. According to Glaser and

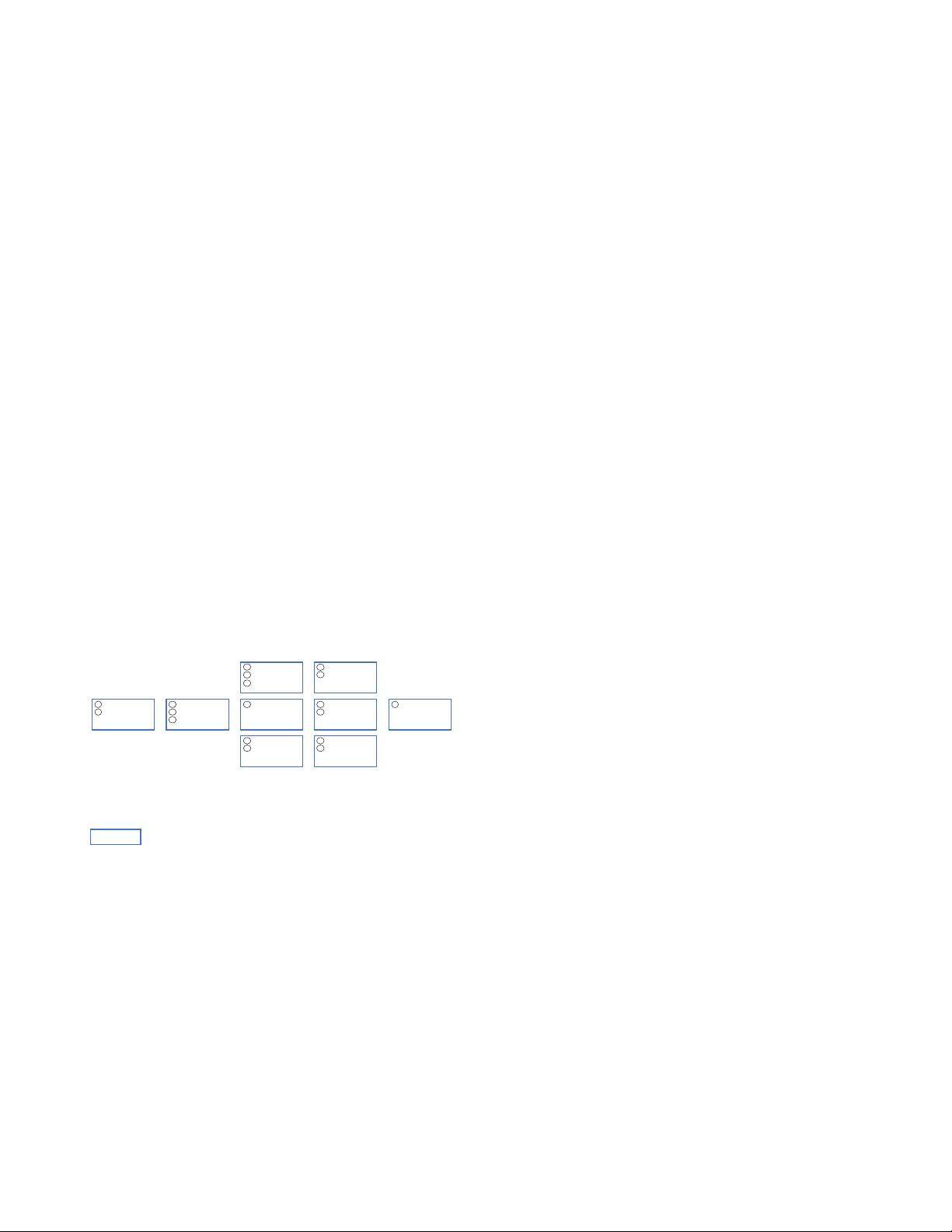

Methodology

Results

MLOps

Principles

Components

Roles

Architecture

Literature Review

(27 articles)

Tool Review

(11 tools)

Interview Study

(8 interviewees)

Figure 1. Overview of the methodology

MLOps

Kreuzberger, Kühl, and Hirschl

Strauss [5, p.61], this stage is called “theoretical saturation.” All

interviews are conducted between June and August 2021.

With regard to the interview design, we prepare a semi-

structured guide with several questions, documented as an

interview script [33]. During the interviews, “soft laddering” is

used with “how” and “why” questions to probe the interviewees’

means-end chain [39]. This methodical approach allows us to gain

additional insight into the experiences of the interviewees when

required. All interviews are recorded and then transcribed. To

evaluate the interview transcripts, we use an open coding scheme

[8].

4 Results

We apply the described methodology and structure our resulting

insights into a presentation of important principles, their resulting

instantiation as components, the description of necessary roles, as

well as a suggestion for the architecture and workflow resulting

from the combination of these aspects. Finally, we derive the

conceptualization of the term and provide a definition of MLOps.

4.1 Principles

A principle is viewed as a general or basic truth, a value, or a

guide for behavior. In the context of MLOps, a principle is a guide

to how things should be realized in MLOps and is closely related

to the term “best practices” from the professional sector. Based on

the outlined methodology, we identified nine principles required to

realize MLOps. Figure 2 provides an illustration of these principles

and links them to the components with which they are associated.

P1 CI/CD automation. CI/CD automation provides continuous

integration, continuous delivery, and continuous deployment. It

carries out the build, test, delivery, and deploy steps. It provides

fast feedback to developers regarding the success or failure of

certain steps, thus increasing the overall productivity

[15,17,26,27,35,42,46] [α, β, θ].
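The build, test, delivery, and deploy sequence with fast feedback can be sketched as a minimal step runner. This is an illustrative sketch, not the interface of any CI/CD product; `run_pipeline` and the step names are hypothetical.

```python
from typing import Callable


def run_pipeline(steps: list[tuple[str, Callable[[], bool]]]) -> tuple[bool, list[str]]:
    """Run the steps in order and stop at the first failure.

    Each step is a (name, callable) pair; the callable returns True on success.
    The returned log is the feedback a developer would see.
    """
    log = []
    for name, step in steps:
        ok = step()
        log.append(f"{name}: {'ok' if ok else 'FAILED'}")
        if not ok:
            # Fail fast: later steps (e.g., deploy) never run after a failure.
            return False, log
    return True, log
```

Stopping at the first failed step is what makes the feedback fast: a failing test is reported before any delivery or deployment work is attempted.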

P2 Workflow orchestration. Workflow orchestration

coordinates the tasks of an ML workflow pipeline according to

directed acyclic graphs (DAGs). DAGs define the task execution

order by considering relationships and dependencies

[14,17,26,32,40,41] [α, β, γ, δ, ζ, η].
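A DAG-based execution order can be illustrated with Python's standard-library `graphlib`. The task names below are assumptions chosen for illustration; the sketch only shows how dependencies determine a valid order, not how any particular orchestrator schedules work.

```python
from graphlib import TopologicalSorter

# Hypothetical ML workflow: each task maps to the set of tasks it depends on.
ML_WORKFLOW = {
    "extract_data": set(),
    "validate_data": {"extract_data"},
    "engineer_features": {"validate_data"},
    "train_model": {"engineer_features"},
    "compute_baseline": {"engineer_features"},
    "evaluate_model": {"train_model", "compute_baseline"},
}


def execution_order(dag: dict) -> list:
    """Return one valid task order in which every dependency runs first."""
    return list(TopologicalSorter(dag).static_order())
```

`train_model` and `compute_baseline` have no dependency on each other, so either may come first; an orchestrator could also run them in parallel.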

P3 Reproducibility. Reproducibility is the ability to reproduce

an ML experiment and obtain the exact same results [14,32,40,46]

[α, β, δ, ε, η].
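One common ingredient of reproducibility is controlling randomness. The following sketch assumes a toy "experiment" (`run_experiment`, a hypothetical function) whose only source of variation is a random number generator. Real ML experiments also depend on data, code, library versions, and hardware.

```python
import random


def run_experiment(seed: int, n_samples: int = 100) -> float:
    """Toy experiment: draw samples from a seeded generator and return their mean."""
    # A dedicated generator avoids interference from other code using the global RNG.
    rng = random.Random(seed)
    data = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]
    return sum(data) / n_samples
```

Calling `run_experiment` twice with the same seed returns the identical value, which is the property this principle asks for.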

P4 Versioning. Versioning tracks successive versions of data,

model, and code to enable not only reproducibility, but also

traceability (for compliance and auditing reasons) [14,32,40,46] [α,

β, δ, ε, η].
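Versioning of data, model, and code can be sketched with content hashes. This is one possible approach under stated assumptions, not the mechanism of a specific tool; `fingerprint` and `snapshot` are hypothetical names.

```python
import hashlib
import json


def fingerprint(payload: bytes) -> str:
    """Short content hash: identical bytes always yield the identical version id."""
    return hashlib.sha256(payload).hexdigest()[:12]


def snapshot(data: bytes, code: bytes, model: bytes) -> dict:
    """Version the three artifacts and derive one id for the combination."""
    versions = {
        "data": fingerprint(data),
        "code": fingerprint(code),
        "model": fingerprint(model),
    }
    # The run id changes whenever any of the three artifacts changes.
    combined = json.dumps(versions, sort_keys=True).encode()
    versions["run"] = fingerprint(combined)
    return versions
```

Recording such a snapshot per training run supports traceability: a given model version can be linked back to the exact data and code versions that produced it.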

P5 Collaboration. Collaboration makes it possible for several

people to work jointly on data, model, and code. Besides the

technical aspect, this principle emphasizes a collaborative and

communicative work culture aiming to reduce domain silos

between different roles [14,26,40] [α, δ, θ].

P6 Continuous ML training & evaluation. Continuous

training means periodic retraining of the ML model based on new

feature data. Continuous training is enabled through the support of

a monitoring component, a feedback loop, and an automated ML

workflow pipeline. Continuous training always includes an

evaluation run to assess the change in model quality [10,17,19,46]

[β, δ, η, θ].
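A retraining run that always ends with an evaluation can be sketched as follows. The function `retrain_and_evaluate`, its callable parameters, and the rule of promoting the candidate only when its score improves are assumptions for illustration; the principle itself only requires that the change in model quality is assessed.

```python
def retrain_and_evaluate(train, evaluate, new_features, current_score):
    """Retrain on new feature data, then evaluate the change in model quality.

    `train` and `evaluate` are caller-supplied callables (hypothetical interface).
    """
    candidate = train(new_features)
    candidate_score = evaluate(candidate)
    return {
        "model": candidate,
        "score": candidate_score,
        # Positive delta means the retrained model is better than the current one.
        "delta": candidate_score - current_score,
        # Assumed promotion rule: only replace the current model on improvement.
        "promote": candidate_score > current_score,
    }
```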

P7 ML metadata tracking/logging. Metadata is tracked and

logged for each orchestrated ML workflow task. Metadata tracking

and logging is required for each training job iteration (e.g., training

date and time, duration, etc.), including model-specific

metadata (e.g., the parameters used, the resulting performance

metrics, and the model lineage, i.e., the data and code used) to ensure the full

traceability of experiment runs [26,27,29,32,35] [α, β, δ, ε, ζ, η, θ].
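The kind of record described here can be sketched with a small wrapper that logs metadata for each task run. The field names, the `tracked_run` helper, and the in-memory list used as a store are assumptions for illustration, not the schema of any metadata store.

```python
import time
from dataclasses import asdict, dataclass


@dataclass
class RunMetadata:
    task: str
    started_at: float    # training date and time (epoch seconds)
    duration_s: float    # how long the task took
    parameters: dict     # parameters used
    metrics: dict        # resulting performance metrics
    data_version: str    # lineage: data used
    code_version: str    # lineage: code used


def tracked_run(task, fn, parameters, data_version, code_version, store):
    """Execute fn(**parameters) and append one metadata record to the store."""
    started_at = time.time()
    t0 = time.perf_counter()
    metrics = fn(**parameters)
    record = RunMetadata(
        task, started_at, time.perf_counter() - t0,
        parameters, metrics, data_version, code_version,
    )
    store.append(asdict(record))
    return metrics
```

Because every run appends one record, the store can later answer which parameters, data, and code led to a given set of metrics.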

P8 Continuous monitoring. Continuous monitoring implies

the periodic assessment of data, model, code, infrastructure

resources, and model serving performance (e.g., prediction

accuracy) to detect potential errors or changes that influence the

product quality [4,7,10,27,29,42,46] [α, β, γ, δ, ε, ζ, η].
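Monitoring of model serving performance can be illustrated with a sliding-window accuracy check. This sketch covers only one of the monitored aspects (prediction accuracy); the class `AccuracyMonitor`, the window size, and the threshold are assumptions for illustration.

```python
from collections import deque


class AccuracyMonitor:
    """Track prediction accuracy over the most recent `window` observations."""

    def __init__(self, window: int, threshold: float):
        self.outcomes = deque(maxlen=window)  # old outcomes drop out automatically
        self.threshold = threshold

    def record(self, prediction, actual) -> None:
        self.outcomes.append(prediction == actual)

    def accuracy(self):
        if not self.outcomes:
            return None  # nothing observed yet
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self) -> bool:
        """True when the windowed accuracy has fallen below the threshold."""
        acc = self.accuracy()
        return acc is not None and acc < self.threshold
```

In practice the true label often arrives with a delay, so `record` would be called once ground truth becomes available rather than at prediction time.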

P9 Feedback loops. Multiple feedback loops are required to

integrate insights from the quality assessment step into the

development or engineering process (e.g., a feedback loop from the

experimental model engineering stage to the previous feature

engineering stage). Another feedback loop is required from the

monitoring component (e.g., observing the model serving

performance) to the scheduler to enable the retraining

[4,6,7,17,27,46] [α, β, δ, ζ, η, θ].
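The feedback loop from the monitoring component to the scheduler can be sketched as a simple threshold rule. The `Scheduler` class, the `feedback_step` function, and the accuracy threshold are hypothetical; real systems may trigger retraining on other signals as well.

```python
class Scheduler:
    """Minimal stand-in for a scheduler that queues retraining requests."""

    def __init__(self):
        self.queue = []

    def request_retraining(self, reason: str) -> None:
        self.queue.append(reason)


def feedback_step(serving_accuracy: float, threshold: float, scheduler: Scheduler) -> bool:
    """Forward a monitoring observation to the scheduler when quality drops.

    Returns True if a retraining request was issued.
    """
    if serving_accuracy < threshold:
        scheduler.request_retraining(
            f"accuracy {serving_accuracy:.2f} below threshold {threshold:.2f}"
        )
        return True
    return False
```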

4.2 Technical Components

After identifying the principles that need to be incorporated into

MLOps, we now elaborate on the precise components and

implement them in the ML systems design. In the following, the

components are listed and described in a generic way with their

essential functionalities. The references in brackets refer to the

respective principles that the technical components are

implementing.

C1 CI/CD Component (P1, P6, P9). The CI/CD component

ensures continuous integration, continuous delivery, and

continuous deployment. It takes care of the build, test, delivery, and

deploy steps. It provides rapid feedback to developers regarding the

success or failure of certain steps, thus increasing the overall

productivity [10,15,17,26,35,46] [α, β, γ, ε, ζ, η]. Examples are

Jenkins [17,26] and GitHub Actions [η].

Figure 2. Implementation of principles within technical components. The figure links the principles P1 to P9 to the following components: Source Code Repository, CI/CD Component, Workflow Orchestration Component, Feature Stores, Model Training Infrastructure, Model Registry, ML Metadata Stores, Monitoring Component, and Model Serving Component.
