
Copyright (c) 2013 IEEE. Personal use is permitted. For any other purposes, permission must be obtained from the IEEE by emailing pubs-permissions@ieee.org.

This article has been accepted for publication in a future issue of this journal, but has not been fully edited. Content may change prior to final publication.

Motion Vector Coding in the HEVC Standard

Jian-Liang Lin, Yi-Wen Chen, Yu-Wen Huang, and Shaw-Min Lei, Fellow, IEEE

Manuscript received January 30, 2013; revised May 10, 2013.

Jian-Liang Lin, Yi-Wen Chen, Yu-Wen Huang, and Shaw-Min Lei are with MediaTek, No. 1, Dusing Rd. 1, Hsinchu, Taiwan 30078 (e-mail: {jl.lin, yiwen.chen, yuwen.huang, shawmin.lei}@mediatek.com).

Abstract— High Efficiency Video Coding (HEVC) is an emerging international video coding standard developed by the Joint Collaborative Team on Video Coding (JCT-VC). Compared to H.264/AVC, HEVC achieves a substantial improvement in compression performance. During the HEVC standardization, we proposed several motion vector coding techniques, which were cross-checked by other experts and then adopted into the standard. In this paper, an overview of the motion vector coding techniques in HEVC is first provided. Next, the proposed motion vector coding techniques are presented: a priority-based derivation algorithm for spatial motion candidates, a priority-based derivation algorithm for temporal motion candidates, a surrounding-based candidate list, and a parallel derivation of the candidate list. Based on HEVC test model 9 (HM9), experimental results show that the combination of the proposed techniques achieves an average bit-rate saving of 3.1% under the common test conditions used for HEVC development.

Index Terms— Video standard, JCT-VC, HEVC, AMVP, Merge, motion vector prediction, motion vector coding

I. INTRODUCTION

The advances of digital video coding standards have driven the success of multimedia systems such as smartphones, digital TVs, and digital cameras over the past decade. After the standardization of H.261, MPEG-1, MPEG-2, H.263, MPEG-4, and H.264/AVC [1], the demand for better video compression remained strong because of larger picture resolutions, higher frame rates, and higher video quality, so the search continued for new video coding techniques that could provide better coding efficiency than H.264/AVC. In April 2010, 27 proposals were submitted to the 1st JCT-VC meeting [2] in response to the call for proposals for the next-generation video standard, and the standardization of HEVC was formally launched. During the subsequent JCT-VC meetings, many video coding techniques proposed for HEVC were evaluated in terms of objective and/or subjective coding efficiency, computational complexity, and parallel friendliness. The first edition of the HEVC standard was finalized after the 12th JCT-VC meeting, held in January 2013.

HEVC is based on a hybrid block-based motion-compensated transform coding architecture [3][4]. The basic unit for compression is termed the coding tree unit (CTU). Each CTU may contain one coding unit (CU) or be recursively split into four smaller CUs until the predefined minimum CU size is reached. Each CU (also called a leaf CU) contains one or multiple prediction units (PUs) and a tree of transform units (TUs).

In general, a CTU consists of one luma coding tree block (CTB) and two corresponding chroma CTBs, a CU consists of one luma coding block (CB) and two corresponding chroma CBs, a PU consists of one luma prediction block (PB) and two corresponding chroma PBs, and a TU consists of one luma transform block (TB) and two corresponding chroma TBs. However, exceptions can occur for two reasons. First, the minimum TB size is 4x4 for both luma and chroma (i.e., no 2x2 chroma TB is supported for the 4:2:0 color format). Second, each intra chroma CB always has only one intra chroma PB, regardless of the number of intra luma PBs in the corresponding intra luma CB.

For an intra CU, the luma CB can be predicted by one or four luma PBs, and each of the two chroma CBs is always predicted by one chroma PB. Each luma PB has one intra luma prediction mode, and the two chroma PBs share one intra chroma prediction mode. Moreover, for an intra CU, the TB size cannot be larger than the PB size. In each PB, intra prediction is applied to predict the samples of each TB inside the PB from the neighboring reconstructed samples of that TB. For each PB, in addition to 33 directional intra prediction modes, the DC and planar modes are also supported to predict flat regions and gradually varying regions, respectively.

For each inter PU, one of three prediction modes, namely inter, skip, and merge, can be selected. Generally speaking, a motion vector competition (MVC) scheme [5] is introduced to select a motion candidate from a given candidate set that includes spatial and temporal motion candidates. Motion estimation can search multiple reference pictures, organized in two reconstructed reference picture lists (List 0 and List 1), to find the best reference. For the inter mode (unofficially termed AMVP mode, where AMVP stands for advanced motion vector prediction [6]), the inter prediction indicators (List 0, List 1, or bi-directional prediction), reference indices, motion candidate indices, motion vector differences (MVDs), and the prediction residual are transmitted. For the skip mode and the merge mode, only merge indices are transmitted, and the current PU inherits the inter prediction indicator, reference indices, and motion vectors from the neighboring PU referred to by the coded merge index. For a skip-coded CU, the residual signal is also omitted.

Quantization, entropy coding, and the deblocking filter (DF) [7] are also in the coding loop of HEVC. The basic operations of these three modules are conceptually similar to those used in H.264/AVC but differ in details.

Sample adaptive offset (SAO) [8][9] is a new in-loop filtering technique applied after DF. SAO aims to reduce sample distortion by classifying deblocked samples into different categories and then adding an offset to the deblocked samples of each category.
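The paragraph above describes SAO only in general terms: samples are classified, and each category receives an offset. The Python sketch below illustrates one possible classification, a band offset. It assumes the sample range is split into 32 equal-width bands and that four consecutive bands, starting at a signalled band position and wrapping modulo 32, receive offsets, with the result clipped to the valid sample range. The function name and arguments are hypothetical, and edge-offset classification is not shown.

```python
def sao_band_offset(samples, band_position, offsets, bit_depth=8):
    """Band-offset style SAO on a list of deblocked sample values (illustrative sketch).

    The sample range is split into 32 equal-width bands. Only the four
    consecutive bands starting at `band_position` (wrapping modulo 32)
    receive an offset; every other sample passes through unchanged.
    """
    if len(offsets) != 4:
        raise ValueError("expected exactly four band offsets")
    shift = bit_depth - 5              # 2**5 = 32 bands
    max_val = (1 << bit_depth) - 1
    out = []
    for s in samples:
        # Band index relative to the first band that carries an offset.
        k = ((s >> shift) - band_position) % 32
        if k < 4:
            s = min(max(s + offsets[k], 0), max_val)  # add offset, clip to valid range
        out.append(s)
    return out


print(sao_band_offset([100, 130, 8, 255], band_position=12, offsets=[2, -1, 0, 3]))
# [102, 130, 8, 255]
```

Only the sample 100 falls into band 12 (100 >> 3 = 12), so it is the only value that changes.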

In [10], an overview of the HEVC standard is given. In [11], HEVC is compared with H.264/AVC in terms of complexity. More detailed comparisons of coding efficiency and complexity between HEVC and other video coding standards are carried out in [12][13][14]. In [15], the high-level syntax and reference picture management of HEVC are described. In [16], the block partitioning structure of HEVC is explained. In [17] and [18], intra prediction and intra mode coding are described. In [19], [20], [7], and [8][9], merge operations, coefficient coding, DF, and SAO are introduced, respectively. To provide a thorough introduction to motion vector (MV) prediction and MV coding in HEVC, especially the derivation procedures of motion candidates for the inter mode, skip mode, and merge mode, this paper first presents a general overview of MV prediction and MV coding techniques. It then describes several MV prediction and MV coding techniques that we proposed and that were adopted into the standard: a priority-based derivation algorithm for spatial motion candidates, a priority-based derivation algorithm for temporal motion candidates, a surrounding-based candidate list, and parallel derivation of the candidate list.

The rest of this paper is organized as follows. Section II gives an overview of inter prediction in HEVC. Section III introduces the proposed techniques for improving MV coding and MV prediction. The experimental results and the conclusion are given in Section IV and Section V, respectively.

II. OVERVIEW OF THE INTER PREDICTION IN HEVC

A. Competitive Spatial-temporal Motion Candidate in Inter Prediction

There are three prediction modes for inter prediction in HEVC [3][4]: the inter mode, the skip mode, and the merge mode. For all three modes, a motion vector competition (MVC) scheme is applied to increase the coding efficiency of MV prediction and MV coding. The scheme selects one motion candidate from a given candidate list containing spatial and temporal motion candidates.

For the inter mode, an inter prediction indicator is transmitted

to denote list 0 prediction, list 1 prediction, or bi-prediction.

Next, one or two reference indices are transmitted when there

are multiple reference pictures. An index is transmitted for each

prediction direction to select one motion candidate from the

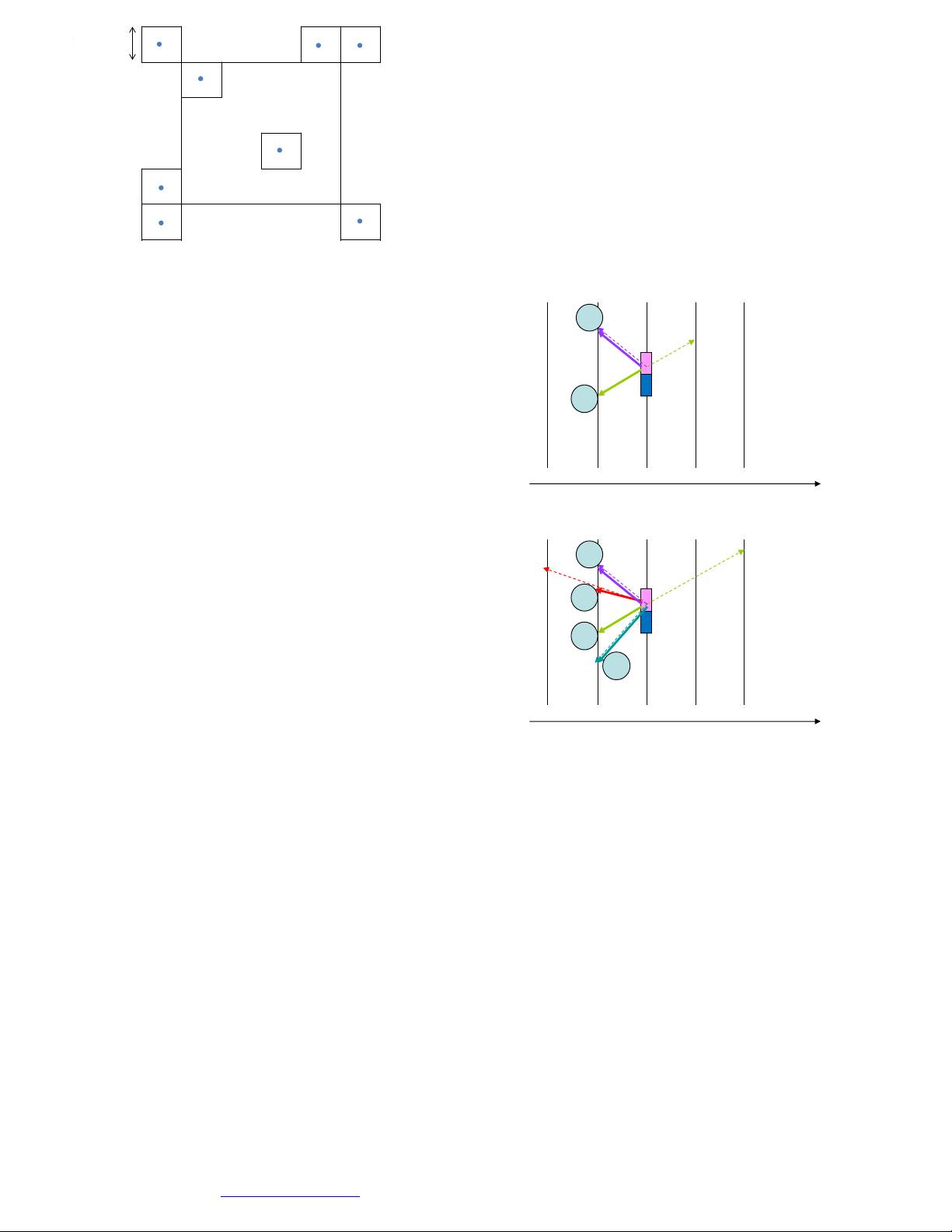

candidate list. As shown in Fig. 1, the candidate list for the inter

mode includes two spatial motion candidates and one temporal

motion candidate:

1. Left candidate (the first available from A0, A1)
2. Top candidate (the first available from B0, B1, B2)
3. Temporal candidate (the first available from T_BR and T_CT)

The left spatial motion candidate is searched from the below-left position to the left position (i.e., A0 and A1), and the first available one is selected as the left candidate. The top spatial motion candidate is searched from the above-right position to the above-left position (i.e., B0, B1, and B2), and the first available one is selected as the top candidate. A temporal motion candidate is derived from a block (T_BR or T_CT) located in a reference picture, which is termed the temporal collocated picture. The temporal collocated picture is indicated by two slice-header syntax elements: one flag that specifies the reference picture list, and one reference index that specifies which reference picture in that list is used as the collocated reference picture. After the motion candidate index is transmitted, one or two corresponding motion vector differences (MVDs) are transmitted.
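The first-available scans described above can be sketched in Python as follows. This is a minimal illustration, not the HM implementation. It assumes that each neighboring position either holds an MV that is already usable for the current reference (same list and same reference index in the original scheme) or is absent. Position labels follow Fig. 1; the dictionaries and function names are hypothetical. Pruning and zero-vector filling are left out.

```python
from typing import Mapping, Optional, Sequence, Tuple

MV = Tuple[int, int]


def first_available(positions: Sequence[str], motion: Mapping[str, MV]) -> Optional[MV]:
    """Return the MV at the first position that holds one, scanning in the given order."""
    for pos in positions:
        mv = motion.get(pos)
        if mv is not None:
            return mv
    return None


def amvp_raw_candidates(spatial: Mapping[str, MV], temporal: Mapping[str, MV]):
    """Derive the (left, top, temporal) AMVP candidates before pruning and filling."""
    left = first_available(("A0", "A1"), spatial)         # below-left, then left
    top = first_available(("B0", "B1", "B2"), spatial)    # above-right, above, above-left
    col = first_available(("T_BR", "T_CT"), temporal)     # collocated bottom-right, then center
    return left, top, col


spatial = {"A1": (4, 0), "B1": (2, 2), "B2": (1, 1)}   # A0 and B0 unavailable
temporal = {"T_CT": (5, 5)}                            # T_BR unavailable
print(amvp_raw_candidates(spatial, temporal))          # ((4, 0), (2, 2), (5, 5))
```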

For the skip mode and merge mode, a merge index is signalled to indicate which candidate in the merging candidate list is used. No inter prediction indicator, reference index, or MVD is transmitted. Each PU coded in skip or merge mode reuses the inter prediction indicator, reference index (or indices), and motion vector(s) of the selected candidate. Note that if the selected candidate is a temporal motion candidate, the reference index is always set to 0. As shown in Fig. 1, the merging candidate list for the skip mode and the merge mode includes four spatial motion candidates and one temporal motion candidate:

1. Left candidate (A1)
2. Top candidate (B1)
3. Above-right candidate (B0)
4. Below-left candidate (A0)
5. Above-left candidate (B2), used only when any of the above spatial candidates is not available
6. Temporal candidate (the first available from T_BR and T_CT)
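The merging-list order above can be sketched in Python as follows. This is an illustration under several simplifying assumptions. The `Motion` record is a hypothetical representation. Pruning compares each new spatial candidate against all previously kept ones, which may differ from the exact comparisons in the standard. A pruned candidate counts as unavailable when deciding whether B2 is needed. The temporal candidate simply has its reference index set to 0, and MV scaling is omitted.

```python
from dataclasses import dataclass
from typing import List, Mapping, Optional, Tuple

ListMotion = Optional[Tuple[Tuple[int, int], int]]  # (mv, ref_idx), or None if the list is unused


@dataclass(frozen=True)
class Motion:
    """Motion data of one PU (hypothetical representation)."""
    l0: ListMotion = None
    l1: ListMotion = None


def merge_spatial_temporal(spatial: Mapping[str, Motion],
                           temporal: Mapping[str, Motion]) -> List[Motion]:
    cands: List[Motion] = []
    for pos in ("A1", "B1", "B0", "A0"):
        m = spatial.get(pos)
        if m is not None and m not in cands:  # simplified pruning against all kept candidates
            cands.append(m)
    # B2 is a fallback, considered only when fewer than four spatial candidates were kept.
    if len(cands) < 4:
        m = spatial.get("B2")
        if m is not None and m not in cands:
            cands.append(m)
    # Temporal candidate: first available of T_BR, T_CT, with reference index forced to 0.
    for pos in ("T_BR", "T_CT"):
        m = temporal.get(pos)
        if m is not None:
            cands.append(Motion(l0=(m.l0[0], 0) if m.l0 else None,
                                l1=(m.l1[0], 0) if m.l1 else None))
            break
    return cands


m1, m2, m3 = Motion(l0=((1, 0), 0)), Motion(l0=((2, 0), 1)), Motion(l1=((0, 3), 0))
spatial = {"A1": m1, "B1": m1, "B0": m2, "B2": m3}   # B1 duplicates A1; A0 unavailable
print(merge_spatial_temporal(spatial, {"T_CT": Motion(l0=((6, 6), 2))}))
```

In this example, B1 is pruned as a duplicate of A1 and A0 is missing, so B2 is used. The collocated MV is then appended with its reference index reset to 0.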

B. Redundancy Removal and Additional Motion Candidates

For the inter mode, skip mode, and merge mode, a pruning process is performed after the spatial motion candidates are derived, to check for redundancy among the spatial candidates.

After redundant or unavailable candidates are removed, the size of the candidate list could be adjusted dynamically at both the encoder and decoder sides, so that truncated unary binarization benefits the entropy coding of the index. Although a dynamic candidate list size could bring coding gains, it also introduces a parsing problem. Because the temporal motion candidate is included in the candidate list, an MV of a previous picture that cannot be decoded correctly may cause a mismatch between the encoder-side and decoder-side candidate lists, resulting in a parsing error of the candidate index. This parsing error can propagate severely, so that the rest of the current picture cannot be parsed or decoded properly. Even worse, the error can affect subsequent inter pictures that also allow temporal motion candidates, so one small decoding error in a single MV may cause parsing failures in many subsequent pictures.

To solve this parsing problem, HEVC uses a fixed candidate list size, which decouples candidate list construction from the parsing of the index. To compensate for the coding performance loss caused by the fixed list size, additional candidates are assigned to the empty positions in the candidate list. The index is coded with truncated unary codes of a maximum length. This maximum length is transmitted in the slice header for the skip mode and merge mode, and it is fixed to 2 for the inter mode.
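The following Python sketch illustrates why a list size that depends on decoded data breaks parsing, and why a fixed size avoids the problem. It assumes truncated unary binarization in which `c_max` is the largest codable index (list size minus one), and it writes bins as a plain bit string. HEVC actually codes these bins with CABAC, so this sketch only shows the bin-level effect. The function names are hypothetical.

```python
def tu_encode(value: int, c_max: int) -> str:
    """Truncated unary: `value` ones, then a terminating zero unless value == c_max."""
    if not 0 <= value <= c_max:
        raise ValueError("value out of range")
    return "1" * value + ("0" if value < c_max else "")


def tu_decode(bits: str, c_max: int):
    """Parse one truncated unary value; return (value, remaining bits)."""
    value, pos = 0, 0
    while value < c_max:
        bit = bits[pos]
        pos += 1
        if bit == "0":   # terminating zero ends the code
            break
        value += 1
    return value, bits[pos:]


def parse_indices(bits: str, c_max: int, count: int):
    """Parse `count` consecutive indices that all use the same c_max."""
    values = []
    for _ in range(count):
        v, bits = tu_decode(bits, c_max)
        values.append(v)
    return values, bits


# Dynamic size: the encoder built 3 candidates (c_max = 2) and coded indices 2, then 0.
stream = tu_encode(2, 2) + tu_encode(0, 2)   # "110"
print(parse_indices(stream, 2, 2))           # ([2, 0], '') decoder list matches
print(parse_indices(stream, 1, 2))           # ([1, 1], '0') decoder lost a candidate
# Fixed size: c_max comes from the slice header, not from decoded MVs.
fixed = tu_encode(2, 4) + tu_encode(0, 4)    # "1100"
print(parse_indices(fixed, 4, 2))            # ([2, 0], '')
```

With a dynamic size, a decoder that derived one candidate too few reads the wrong index values and leaves a stray bin for the next syntax element. With a fixed `c_max`, the bins are parsed identically even when the candidate contents differ.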

For the inter mode, a zero vector motion candidate is added to fill the empty positions in the AMVP candidate list after the derivation and pruning of the two spatial motion candidates and the one temporal motion candidate. As for the skip mode and merge mode, after the derivation and pruning of the four spatial motion candidates and the one temporal motion candidate, if the number of available candidates is smaller than the fixed candidate list size, additional candidates are derived and added to fill the empty positions in the merging candidate list.
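Here is a minimal Python sketch of how the AMVP list could be finalized. It assumes that the fixed size of 2 mentioned earlier is the number of AMVP list entries. It prunes only the two spatial candidates against each other, as the text states, then appends the temporal candidate, truncates the list to the fixed size, and pads it with zero vectors. The function name is hypothetical.

```python
from typing import List, Optional, Tuple

MV = Tuple[int, int]
AMVP_LIST_SIZE = 2   # assumed fixed AMVP list size


def finalize_amvp(left: Optional[MV], top: Optional[MV], temporal: Optional[MV],
                  size: int = AMVP_LIST_SIZE) -> List[MV]:
    cands: List[MV] = []
    for mv in (left, top):                 # spatial candidates, pruned against each other
        if mv is not None and mv not in cands:
            cands.append(mv)
    if temporal is not None:
        cands.append(temporal)
    cands = cands[:size]                   # keep at most `size` candidates
    while len(cands) < size:
        cands.append((0, 0))               # zero vector fills the empty positions
    return cands


print(finalize_amvp((1, 2), (1, 2), None))     # [(1, 2), (0, 0)]
print(finalize_amvp((1, 0), (2, 0), (3, 0)))   # [(1, 0), (2, 0)]
```

Because the list always has exactly two entries, the decoder can parse the AMVP index without knowing which candidates were actually available.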

Two types of additional candidates are used to fill the merging candidate list: the combined bi-predictive motion candidate and the zero vector motion candidate. The combined bi-predictive motion candidates [21] are created by combining two original motion candidates according to a predefined order. After adding the combined bi-predictive motion candidates, if the merging candidate list still has empty position(s), zero vector motion candidates are added to the remaining positions.
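The Python sketch below shows how the merging list could be filled for a B slice. It makes several assumptions that should be checked against the specification before reuse. First, the pair order in `COMBINATION_ORDER` is our understanding of the HEVC specification's table, and the text above only says "a predefined order". Second, a combination is skipped when both halves would point to the same picture with the same MV. Third, zero candidates step through reference indices 0, 1, ... and then fall back to 0. The names are hypothetical, and P-slice handling is omitted.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

ListMotion = Optional[Tuple[Tuple[int, int], int]]   # (mv, ref_idx), or None


@dataclass(frozen=True)
class Motion:
    l0: ListMotion = None
    l1: ListMotion = None


# Assumed (L0 source, L1 source) candidate-index pairs, in order.
COMBINATION_ORDER = [(0, 1), (1, 0), (0, 2), (2, 0), (1, 2), (2, 1),
                     (0, 3), (3, 0), (1, 3), (3, 1), (2, 3), (3, 2)]


def fill_merge_list(cands: List[Motion], max_num: int,
                    ref_pocs_l0: Sequence[int], ref_pocs_l1: Sequence[int]) -> List[Motion]:
    out = list(cands)
    num_orig = len(out)
    # 1) Combined bi-predictive: L0 motion of candidate i plus L1 motion of candidate j.
    if 1 < num_orig < max_num:
        for i, j in COMBINATION_ORDER:
            if len(out) >= max_num:
                break
            if i >= num_orig or j >= num_orig:
                continue
            l0, l1 = out[i].l0, out[j].l1
            if l0 is None or l1 is None:
                continue
            # Skip if both halves hit the same picture with the same MV.
            if ref_pocs_l0[l0[1]] == ref_pocs_l1[l1[1]] and l0[0] == l1[0]:
                continue
            out.append(Motion(l0=l0, l1=l1))
    # 2) Zero-vector candidates for the remaining positions.
    num_ref = min(len(ref_pocs_l0), len(ref_pocs_l1))
    zero_idx = 0
    while len(out) < max_num:
        ref = zero_idx if zero_idx < num_ref else 0
        out.append(Motion(l0=((0, 0), ref), l1=((0, 0), ref)))
        zero_idx += 1
    return out


c0 = Motion(l0=((1, 1), 0))   # L0-only candidate
c1 = Motion(l1=((2, 2), 0))   # L1-only candidate
for m in fill_merge_list([c0, c1], max_num=5, ref_pocs_l0=[8, 4], ref_pocs_l1=[16, 8]):
    print(m)
```

In this example, the pair (0, 1) yields one true bi-predictive candidate. The two remaining positions receive zero vectors with reference indices 0 and 1.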

III. THE ENHANCED CODING TOOLS FOR MOTION CANDIDATE

This section describes our proposed methods for improving MV coding efficiency. Four improvements are described in turn: a priority-based derivation algorithm of spatial motion candidates, a priority-based derivation algorithm of temporal motion candidates, a surrounding-based candidate list, and parallel derivation of the candidate list.

A. Priority-based Derivation Algorithm of Spatial Motion Candidates

To improve the coding efficiency of the inter mode, a priority-based scheme is proposed to derive each spatial motion candidate according to a predefined priority. In the previous scheme for deriving spatial motion candidates, only MVs with the same reference list and the same reference index as the current block are considered available spatial motion candidates. In the proposed priority-based scheme, the spatial motion candidate can also be derived from an MV with a different list or a different reference picture, which increases the chance that the spatial motion candidate is available [22][23]. The spatial candidate is derived by the following ordered steps:

1. The MV from the same reference list and the same reference picture
2. The MV from the other reference list and the same reference picture
3. The scaled MV from the same reference list and a different reference picture
4. The scaled MV from the other reference list and a different reference picture
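The four priority steps above can be sketched in Python for a single neighboring PU. This is a sketch under stated assumptions, not the HM code. "Same reference picture" is tested by comparing picture order counts (POCs). The scaling function is assumed to mirror the fixed-point POC-distance formula of the HEVC specification, which this section does not spell out. The function and argument names are hypothetical, and the exact ordering across neighbor positions in the final standard may differ from this per-block view.

```python
from typing import Dict, Optional, Sequence, Tuple

MV = Tuple[int, int]


def clip3(lo: int, hi: int, x: int) -> int:
    return max(lo, min(hi, x))


def div_trunc(a: int, b: int) -> int:
    """Integer division rounding toward zero (C semantics)."""
    q = abs(a) // abs(b)
    return q if (a >= 0) == (b > 0) else -q


def scale_mv(mv: MV, tb: int, td: int) -> MV:
    """Scale mv by roughly tb/td in fixed point (assumed HEVC-style formula)."""
    tb, td = clip3(-128, 127, tb), clip3(-128, 127, td)
    tx = div_trunc(16384 + (abs(td) >> 1), td)
    factor = clip3(-4096, 4095, (tb * tx + 32) >> 6)

    def one(c: int) -> int:
        p = factor * c
        mag = (abs(p) + 127) >> 8
        return clip3(-32768, 32767, mag if p >= 0 else -mag)

    return one(mv[0]), one(mv[1])


def priority_spatial_candidate(neighbor: Dict[int, Tuple[MV, int]], target_list: int,
                               target_ref_poc: int, cur_poc: int,
                               ref_pocs: Sequence[Sequence[int]]) -> Optional[MV]:
    """neighbor maps list index (0 or 1) to (mv, ref_idx) for each list the neighbor uses."""
    order = (target_list, 1 - target_list)   # same list first, then the other list
    # Steps 1-2: an MV that already points to the target reference picture.
    for lst in order:
        if lst in neighbor:
            mv, ref_idx = neighbor[lst]
            if ref_pocs[lst][ref_idx] == target_ref_poc:
                return mv
    # Steps 3-4: otherwise scale an MV that points to a different picture.
    for lst in order:
        if lst in neighbor:
            mv, ref_idx = neighbor[lst]
            tb = cur_poc - target_ref_poc
            td = cur_poc - ref_pocs[lst][ref_idx]
            return scale_mv(mv, tb, td)
    return None   # neighbor is intra or unavailable


ref_pocs = [[4, 0], [4, 12]]   # hypothetical POCs of the List 0 and List 1 references
print(priority_spatial_candidate({0: ((1, 1), 1), 1: ((3, 3), 0)}, 0, 4, 8, ref_pocs))  # (3, 3)
print(priority_spatial_candidate({0: ((8, -4), 1)}, 0, 4, 8, ref_pocs))                  # (4, -2)
```

In the first call, the unscaled MV from the other list (step 2) wins over a scaled MV from the same list. In the second call, only step 3 applies, and the MV is halved because the POC distances are 4 and 8.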

[Fig. 2, panels (a) and (b): the current block and a neighboring block b with their L0 and L1 MVs, drawn over a time axis of pictures i, j, k, l, m. The MVs are labeled with derivation priorities 1, 2 and 1', 2' in (a), and 1 to 4 and 1' to 4' in (b).]

Fig. 2. Examples of deriving the spatial motion candidate, where the numbers denote the derivation priority of the spatial motion candidate.

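[Fig. 1: spatial neighboring positions A0, A1, B0, B1, B2 around the current block, and temporal positions T_TL, T_CT, T_BR in the collocated picture.]

Fig. 1. Illustration of deriving the AMVP or merging candidate list.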
