


Subword Embedding from Bytes Gains Privacy without Sacrificing Accuracy and Complexity

Mengjiao Zhang

Department of Computer Science

Stevens Institute of Technology

mzhang49@stevens.edu

Jia Xu

Department of Computer Science

Stevens Institute of Technology

jxu70@stevens.edu

Abstract

While NLP models significantly impact our lives, there are rising concerns about privacy invasion. Although federated learning enhances privacy, attackers may recover private training data by exploiting model parameters and gradients. Protecting against such embedding attacks therefore remains an open challenge. To address this, we propose Subword Embedding from Bytes (SEB), which encodes subwords into byte sequences using deep neural networks, making input text recovery harder. Importantly, our method requires less memory, with a vocabulary of only 256 bytes, while preserving efficiency by keeping the same input length. Thus, our solution outperforms conventional approaches by preserving privacy without sacrificing efficiency or accuracy. Our experiments show that SEB can effectively prevent embedding-based attacks from recovering the original sentences in federated learning. Meanwhile, we verify that SEB obtains comparable or even better results than standard subword embedding methods in machine translation, sentiment analysis, and language modeling, with even lower time and space complexity.

1 Introduction

Natural Language Processing (NLP) models, such as Large Language Models (LLMs), have improved noticeably in performance over the last decades, partly owing to the large datasets available. Since most of these data come from users, privacy plays an increasingly critical role: protecting it is essential to building user trust, encouraging the responsible use of language data, safeguarding personal information, ensuring ethical use, and avoiding potential harm to individuals.

Federated learning (FL) enables training shared models across multiple clients without transferring

the data to a central server to preserve user privacy. Although only the model updates are sent to

the central server, adversaries can still use model updates to reconstruct the original data and leak

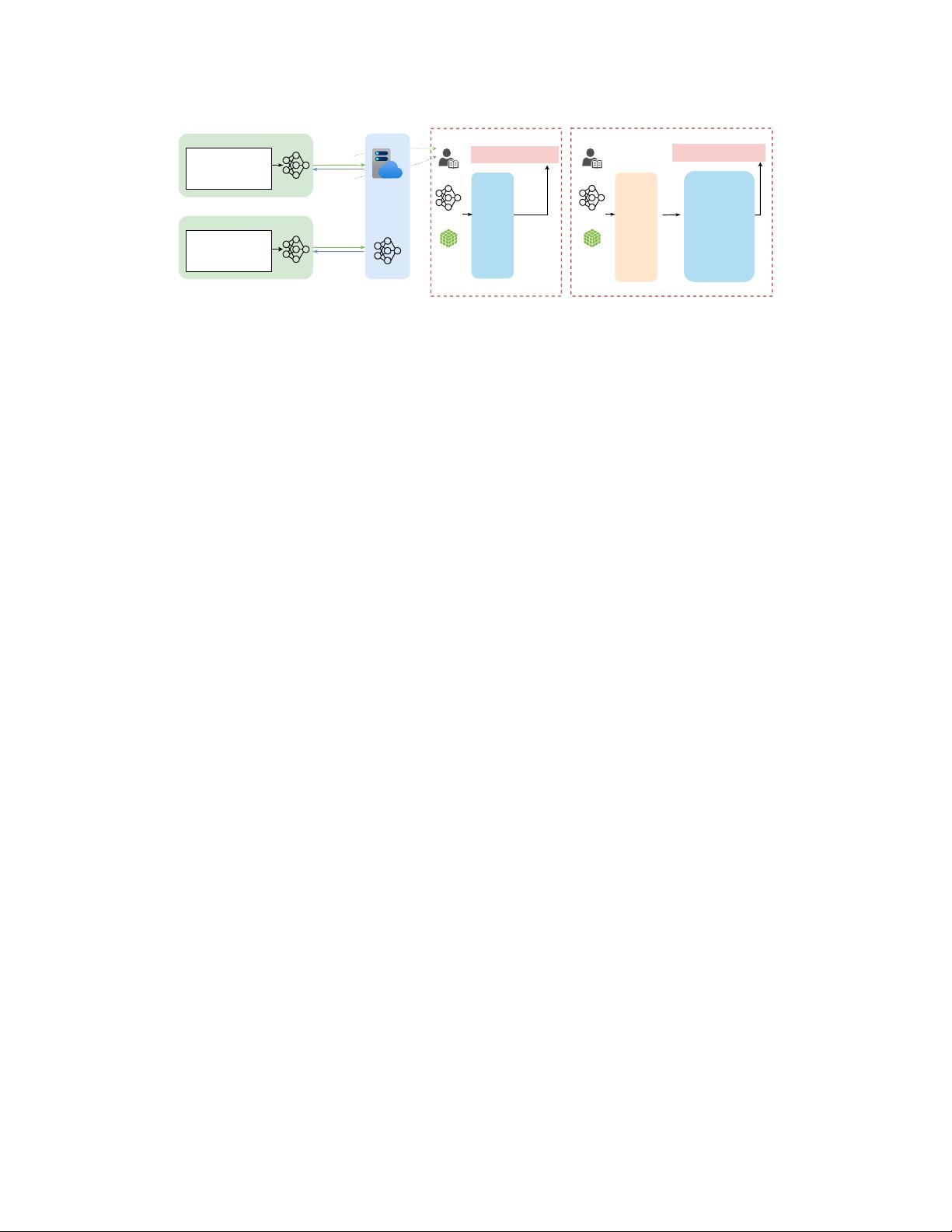

sensitive information to compromise the user’s privacy. Figure 1(a) demonstrates an FL framework,

and Figure 1(b) shows how embedding-based attacks work as in [

7

]. In the illustrated example,

the attacker extracts all candidate words in a batch of data from the embedding gradients and can

easily reconstruct the text with beam search and reordering since one can perform straightforward

lookups when a vector is updated due to the one-to-one mapping between word/subword tokens and

embedding vectors.
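To make the lookup concrete, here is a minimal sketch, assuming a plain embedding layer whose gradient is accumulated row by row; the vocabulary, batch, and upstream gradient values are hypothetical. Only rows of tokens that occur in the batch receive a non-zero gradient, so reading off the non-zero rows yields the bag of words.

```python
def embedding_gradient(token_ids, upstream, vocab_size):
    """Gradient of the loss w.r.t. an embedding matrix W (vocab_size x dim).

    Row W[v] only accumulates the upstream gradient at positions where token v
    occurs, so rows of tokens absent from the batch stay exactly zero."""
    dim = len(upstream[0])
    grad = [[0.0] * dim for _ in range(vocab_size)]
    for pos, tok in enumerate(token_ids):
        for k in range(dim):
            grad[tok][k] += upstream[pos][k]
    return grad

def bag_of_tokens(grad):
    """Attacker side: indices of embedding rows with any non-zero entry."""
    return {v for v, row in enumerate(grad) if any(x != 0.0 for x in row)}

# Hypothetical vocabulary and private batch (one sentence from Figure 1).
vocab = ["He", "is", "Tom", "lives", "in", "New", "York", ".", "old", "years"]
batch = ["Tom", "lives", "in", "New", "York", "."]
ids = [vocab.index(w) for w in batch]
# Stand-in for dL/d(embedding output) at each position (any non-zero values work).
upstream = [[0.1 * (p + 1), -0.2] for p in range(len(ids))]

leaked = sorted(vocab[v] for v in bag_of_tokens(embedding_gradient(ids, upstream, len(vocab))))
print(leaked)  # ['.', 'New', 'Tom', 'York', 'in', 'lives']
```

The attacker recovers the batch's words but not their order, which is why beam search and reordering are still needed.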

Our intuition is to use byte embeddings, because the same bytes are shared by many subwords. We aim to design a one-to-many mapping between words/subwords and embedding vectors that defeats the simple lookup, so that retrieving the input subwords from the updated byte embeddings becomes harder; this makes byte embeddings in NLP models a potential defense. For example, with subword embeddings, if the embedding of the word “good” is updated, the attacker retrieves exactly this word from the embedding updates. However, if we tokenize “good” into four bytes, such as “50, 10, 128, 32”, every subword containing at least one of these bytes is retrieved, resulting in a larger search space and more possible reconstructions of the original sentence. As shown in Figure 1(c), although the attacker extracts a set of bytes, the number of candidate subwords is much greater than with subword embeddings.

[Figure 1. (a) Clients holding private batch data (e.g., “Tom lives in New York. He is 20 years old. …”, “This patient has heart disease. …”) exchange gradients and model parameters with the server. (b) Bag-of-words extraction from the subword embedding gradient (“New”, “is”, “He”, “years”, “old”, “20”, “lives”, “in”, “York”, “Tom”, “.”), followed by beam search and reordering, reconstructs “Tom lives in New York.” (c) Bag-of-bytes extraction (byte IDs 46, 48, 50, …, 121) followed by bag-of-words extraction yields many candidates (“Liberty”, “Lab”, “Outside”, “Lucius”, “Tokyo”, “Canada”, “##cian”, “##ture”, …); the reconstruction is “Lucius lives in Tokyo now.”]

Figure 1: An attack example of recovering text in FL. (a) An FL framework. (b) and (c) Recovering text using embedding gradients of subwords and bytes, respectively.

Preprint. Under review.

arXiv:2410.16410v1 [cs.AI] 21 Oct 2024
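The growth of the candidate set can be illustrated with a small sketch. The subword-to-byte mapping below is hypothetical (it only reuses the byte codes of “good” from the example in the text), and the `require_all` option models a stricter attacker who keeps only subwords whose bytes were all updated; neither is taken from the paper.

```python
def candidates_from_bytes(extracted, mapping, require_all=False):
    """Subwords an attacker could propose from a leaked set of byte ids.

    By default a subword is kept if any of its bytes was updated (the rule in the
    text); require_all=True keeps only subwords whose bytes were all updated."""
    keep = []
    for subword, code in mapping.items():
        hits = [b in extracted for b in code]
        if (all(hits) if require_all else any(hits)):
            keep.append(subword)
    return sorted(keep)

# Hypothetical subword-to-byte mapping shared by all clients.
mapping = {
    "good": (50, 10, 128, 32),
    "food": (50, 7, 128, 99),
    "day": (7, 99, 3, 11),
    "mood": (3, 10, 4, 5),
    "bad": (1, 2, 3, 4),
}
batch = ["good", "day"]
# Bag of bytes whose embeddings receive gradients for this batch.
extracted = {b for sw in batch for b in mapping[sw]}

print(candidates_from_bytes(extracted, mapping))                    # ['bad', 'day', 'food', 'good', 'mood']
print(candidates_from_bytes(extracted, mapping, require_all=True))  # ['day', 'food', 'good']
```

Even the stricter attacker retrieves “food”, which never appeared in the batch, whereas a subword-level lookup would return exactly {“good”, “day”}.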

There are two major challenges in directly applying existing byte encodings [27, 21, 31] to enhance privacy. First, the finer textual granularity does not convey the semantic meaning of each word, leading to a less interpretable and analyzable model. Second, byte-based models are more computationally expensive, because input sequences become much longer after byte tokenization.
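A quick calculation shows how much longer byte sequences get. The subword segmentation below is a hypothetical one chosen for illustration (a real BPE vocabulary may split differently), and the quadratic term assumes a Transformer-style self-attention layer that compares every pair of positions.

```python
text = "Tom lives in New York."
# Hypothetical subword segmentation (whitespace markers omitted for simplicity).
subwords = ["Tom", "lives", "in", "New", "York", "."]
# Byte tokenization: one token per UTF-8 byte.
byte_ids = list(text.encode("utf-8"))

n_sub, n_byte = len(subwords), len(byte_ids)
# Self-attention scores every pair of positions, so its cost grows with n ** 2.
print(n_sub, n_byte, n_sub ** 2, n_byte ** 2)  # 6 22 36 484
```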

To address these challenges in byte-based models, we propose to encode subwords with bytes and

aggregate the byte embeddings to obtain a single subword embedding. The procedure consists of three

steps: (1) Construct a mapping between subwords and bytes. (2) Convert the input text into a byte

sequence. (3) Retrieve the corresponding byte embeddings and aggregate them back into subword

embeddings using a feed-forward network while maintaining the subword boundaries. By adopting

this approach, we can leverage the privacy protection provided by bytes with a small vocabulary size

of 256 while keeping the same input sequence length as the subword sequence.
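The three steps can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the random fixed-length mapping (`BYTES_PER_SUBWORD = 4`), the dimensions, and the choice of concatenating byte embeddings before a single ReLU layer are all hypothetical.

```python
import random

NUM_BYTES = 256          # byte vocabulary size
BYTES_PER_SUBWORD = 4    # hypothetical: every subword gets the same number of bytes
EMB_DIM = 8              # illustrative byte-embedding size
OUT_DIM = 8              # illustrative subword-embedding size

def build_mapping(subwords, seed=0):
    """Step (1): give each subword a distinct byte tuple (hypothetical random scheme)."""
    rng = random.Random(seed)
    mapping, used = {}, set()
    for sw in subwords:
        code = tuple(rng.randrange(NUM_BYTES) for _ in range(BYTES_PER_SUBWORD))
        while code in used:  # redraw on collision so the mapping stays invertible
            code = tuple(rng.randrange(NUM_BYTES) for _ in range(BYTES_PER_SUBWORD))
        used.add(code)
        mapping[sw] = code
    return mapping

def to_byte_groups(tokens, mapping):
    """Step (2): replace each subword token by its byte tuple, one group per subword."""
    return [mapping[t] for t in tokens]

def init_params(seed=1):
    """A 256 x EMB_DIM byte-embedding table plus one dense layer (weights, bias)."""
    rng = random.Random(seed)
    byte_emb = [[rng.gauss(0.0, 0.1) for _ in range(EMB_DIM)] for _ in range(NUM_BYTES)]
    in_dim = BYTES_PER_SUBWORD * EMB_DIM
    weights = [[rng.gauss(0.0, 0.1) for _ in range(in_dim)] for _ in range(OUT_DIM)]
    bias = [0.0] * OUT_DIM
    return byte_emb, weights, bias

def seb_embed(groups, byte_emb, weights, bias):
    """Step (3): look up byte embeddings, concatenate them per subword, and apply a
    ReLU feed-forward layer, giving exactly one vector per subword (boundaries kept)."""
    out = []
    for group in groups:
        x = [v for b in group for v in byte_emb[b]]
        out.append([max(0.0, sum(w * xi for w, xi in zip(row, x)) + b0)
                    for row, b0 in zip(weights, bias)])
    return out

tokens = ["Tom", "lives", "in", "New", "York", "."]
mapping = build_mapping(sorted(set(tokens)))
groups = to_byte_groups(tokens, mapping)
byte_emb, weights, bias = init_params()
embeddings = seb_embed(groups, byte_emb, weights, bias)
print(len(tokens), len(embeddings), len(embeddings[0]))  # 6 6 8
```

The only vocabulary-sized table is the 256-row byte embedding, and the output sequence has one vector per subword, so the model input length matches the subword sequence.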

Our main contributions are:

• We introduce SEB, a novel text representation method whose learned model has a vocabulary of only 256 bytes, without increasing the input sequence length.

• We verify that SEB can protect NLP models against data-leakage attacks based on embedding gradients. To the best of our knowledge, our work is the first to study privacy preservation with byte representations in FL.

• We demonstrate that SEB improves privacy while achieving comparable or better accuracy with higher time and space efficiency, avoiding the privacy-performance/efficiency trade-off of conventional approaches.

2 Related Work

Attacks and defenses in language models. Some recent works treat reconstruction as an optimization task [32, 5, 2]: the attacker updates its dummy inputs and labels to minimize the distance between the gradients uploaded by the victim and the gradients the attacker computes from its dummy inputs and labels. [7] shows that attackers can reconstruct a set of words from the embedding gradients and then apply beam search and reordering with a pretrained language model to recover the input. One defense described in [32, 5, 2] is to encrypt the gradients or otherwise make them not directly invertible. However, encryption requires special setups and can be costly to implement. Moreover, it does not provide effective protection against server-side privacy leakage [1, 8, 6]. Differential privacy is another defense strategy, but it can hurt model accuracy [32, 26, 29, 10]. While [30] proposed a secure federated learning framework that can prevent privacy leakage based on gradient reconstruction, it does not effectively address the retrieval of a bag of words from the embedding matrix gradients, as proposed in [7].
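The accuracy cost of differential privacy comes from perturbing the uploaded update. The sketch below shows the Gaussian mechanism with norm clipping, in the style of DP-SGD, applied to one client's update before upload; the clip norm and noise multiplier are illustrative, it is not the specific method of any cited work, and it does not compute a privacy budget.

```python
import math
import random

def privatize_update(grad, clip_norm, noise_multiplier, rng):
    """Clip the update to L2 norm <= clip_norm, then add Gaussian noise with
    standard deviation noise_multiplier * clip_norm to every coordinate."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, clip_norm / norm) if norm > 0.0 else 1.0
    clipped = [g * scale for g in grad]
    sigma = noise_multiplier * clip_norm
    return [g + rng.gauss(0.0, sigma) for g in clipped]

rng = random.Random(0)
# Without noise, only clipping applies: [3, 4] (norm 5) is rescaled to norm 1.
print(privatize_update([3.0, 4.0], clip_norm=1.0, noise_multiplier=0.0, rng=rng))
# With noise, the server receives a perturbed update, which is where accuracy suffers.
print(privatize_update([3.0, 4.0], clip_norm=1.0, noise_multiplier=1.0, rng=rng))
```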


Subword-level and byte-level language models. Despite its wide application, subword tokenization such as BPE [19] has some limitations. It cannot handle out-of-vocabulary subwords and requires language-specific tokenizers. Another challenge is the high space complexity of the embedding matrix when the vocabulary is large. Byte tokenization addresses these issues [20, 31, 27].

UTF-8 can encode almost all languages; therefore, byte-level models have no out-of-vocabulary words and need no language-specific tokenizer. In addition, since a byte takes only 256 distinct values, the embedding matrix for a byte vocabulary is much smaller than for most subword vocabularies, which reduces the number of parameters in the embedding layer and saves memory.
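Both points can be checked directly. In the sketch below, any string, in any script, maps to byte IDs in `range(256)`; the embedding dimension of 512 and the 32,000-entry subword vocabulary are illustrative values, not figures from this paper.

```python
def utf8_byte_ids(text):
    """Byte-level tokenization: every string maps to ids in range(256)."""
    return list(text.encode("utf-8"))

def embedding_params(vocab_size, dim):
    """Parameters in an embedding matrix with one dim-sized row per vocabulary entry."""
    return vocab_size * dim

for s in ["privacy", "隐私", "Datenschutz", "конфиденциальность"]:
    ids = utf8_byte_ids(s)
    assert all(0 <= b < 256 for b in ids)  # no out-of-vocabulary ids are possible
    print(s, len(s), len(ids))

print(embedding_params(256, 512))    # 131072
print(embedding_params(32000, 512))  # 16384000
```

Non-Latin scripts use several bytes per character (each CJK character above takes three), which is the sequence-length cost that byte-level models pay for their small vocabulary.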

Subword-level models with character- or byte-level fusion. Character- and byte-based models often produce longer input sequences and have higher time complexity than subword-based models. To improve efficiency, recent works have explored character/byte-level fusion. For example, [24] proposes CHARFORMER, which uses a soft gradient-based subword tokenization module to obtain “subword tokens”. It generates and scores multiple subword blocks, aggregates them into subword representations, and then downsamples to reduce the sequence length. Although CHARFORMER is faster than vanilla byte/character-based models, it does not maintain subword boundaries, which limits the model's interpretability. [23] proposes Local Bytes Fusion (LOBEF) to aggregate local semantic information while maintaining word boundaries. However, it does not reduce the sequence length, making training and inference time-consuming.
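The block-scoring and downsampling idea behind CHARFORMER can be sketched as below. This is a heavily simplified illustration, not the reference implementation: it uses a single hypothetical linear scoring vector `score_w`, mean-pooled blocks of sizes 1 to `max_block`, and fixed-rate mean-pool downsampling, and it omits other components of the original module.

```python
import math

def mean_pool(vectors):
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def block_candidates(x, block_size):
    """Mean-pool non-overlapping blocks, then repeat each pooled vector over its
    block so the result stays aligned with the input positions."""
    out = []
    for start in range(0, len(x), block_size):
        block = x[start:start + block_size]
        out.extend([mean_pool(block)] * len(block))
    return out

def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def simplified_gbst(x, max_block, score_w, rate):
    """x: per-byte embeddings (length n, dim d); score_w: hypothetical scoring vector."""
    cands = [block_candidates(x, b) for b in range(1, max_block + 1)]
    mixed = []
    for i in range(len(x)):
        # Score each candidate block size at position i and mix them softly.
        p = softmax([sum(w * v for w, v in zip(score_w, c[i])) for c in cands])
        mixed.append([sum(p[j] * cands[j][i][k] for j in range(len(cands)))
                      for k in range(len(x[0]))])
    # Fixed-rate downsampling: output positions need not align with subword boundaries.
    return [mean_pool(mixed[s:s + rate]) for s in range(0, len(mixed), rate)]

x = [[float(i), 1.0] for i in range(10)]
y = simplified_gbst(x, max_block=3, score_w=[0.5, -0.5], rate=2)
print(len(x), len(y))  # 10 5
```

Because the downsampling rate is fixed rather than tied to token boundaries, each output vector may straddle parts of different subwords, which is the interpretability limitation noted above.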

3 Preliminaries

3.1 Federated Learning

In federated learning (FL), multiple clients jointly train a model using their private data. Assume an FL system with $N$ clients, $C = \{c_1, c_2, \dots, c_N\}$, and a server $s$. The jointly trained model is $f$ with parameters $\theta$. The clients' private data are $D_1, D_2, \dots, D_N$, and the objective function is $\mathcal{L}$. For ease of illustration, we assume that all clients participate in each communication round and that FedSGD [13] is used to update the model parameters. In each communication round $t$, server $s$ first sends the model parameters $\theta_t$ to all clients. Each client $c_i$ then computes $\nabla_{\theta_t} \mathcal{L}_{\theta_t}(B_i)$, the gradients of the current model $f_{\theta_t}$ on a randomly sampled data batch $B_i \subset D_i$. After local computation, the clients send the gradients $\Delta_1^t, \Delta_2^t, \dots, \Delta_N^t$ to the server, where $\Delta_i^t = \nabla_{\theta_t} \mathcal{L}_{\theta_t}(B_i)$, and server $s$ aggregates all the gradients and updates the model:

$$
\theta_{t+1} = \theta_t - \eta \sum_{i=1}^{N} \nabla_{\theta_t} \mathcal{L}_{\theta_t}(B_i). \qquad (1)
$$

Here, Equation (1) is a gradient descent step, and $\eta$ is the learning rate.
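One round of Equation (1) can be simulated in a few lines. This sketch assumes a hypothetical linear model $f_\theta(x) = \theta \cdot x$ with squared-error loss and two toy clients; it shows what each client uploads and how the server sums the gradients.

```python
import random

def local_gradient(theta, batch):
    """Gradient of L(B) = 0.5 * sum((theta . x - y) ** 2) for f_theta(x) = theta . x."""
    grad = [0.0] * len(theta)
    for x, y in batch:
        err = sum(t * xi for t, xi in zip(theta, x)) - y
        for k, xi in enumerate(x):
            grad[k] += err * xi
    return grad

def fedsgd_round(theta, client_data, lr, batch_size, rng):
    """Eq. (1): every client computes gradients on a sampled batch B_i subset of D_i,
    and the server subtracts lr times their sum."""
    uploads = []
    for data in client_data:
        batch = rng.sample(data, min(batch_size, len(data)))
        uploads.append(local_gradient(theta, batch))  # the update an attacker could observe
    total = [sum(g[k] for g in uploads) for k in range(len(theta))]
    return [t - lr * s for t, s in zip(theta, total)]

# Two hypothetical clients, each holding one private (x, y) example.
clients = [[([1.0, 0.0], 1.0)], [([0.0, 1.0], 2.0)]]
rng = random.Random(0)
theta = [0.0, 0.0]
for _ in range(200):
    theta = fedsgd_round(theta, clients, lr=0.1, batch_size=1, rng=rng)
print(theta)  # close to [1.0, 2.0]
```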

3.2 Threat Model

Adversary's capabilities and objective. We follow the attack settings in [7]. The optimized model is a language model $\mathcal{L}$, parameterized by $\theta$. This scenario gives the attacker white-box access to the gradients $\nabla_{\theta_t} \mathcal{L}_{\theta_t}(B_i)$ sent by the victim client $c_i$, where $\theta_t$ is the model parameter that the server sends to the clients at any communication round $t$. From the parameters $\theta_t$ and the gradients $\nabla_{\theta_t} \mathcal{L}_{\theta_t}(B_i)$, the attacker can obtain the vocabulary $V$ and the embedding matrix $W$ and determine which tokens are updated. The attacker's goal is to recover at least one sentence from $B_i$ based on $\nabla_{\theta_t} \mathcal{L}_{\theta_t}(B_i)$ and $\theta_t$.

Attack model. This paper does not address the gradient leakage attack, which aims to obtain private data by minimizing the difference between the gradients derived from a dummy input and the actual gradients of the victim's data, because several methods have been proposed to mitigate this particular attack [32, 5, 26]. Instead, we focus on a specific attack model, FILM, introduced in [7], for which effective defenses have yet to be explored. In this model, the attacker attempts to reconstruct sentences from the victim's training batches by (1) extracting candidate tokens from the gradients, (2) applying beam search with a pretrained language model, such as GPT-2, to reconstruct the input sentence, and (3) reordering the subword tokens to achieve the best reconstruction.
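Step (2) can be illustrated with a toy beam search over the bag of words from Figure 1(b). This is a minimal sketch, not FILM's implementation: a hypothetical bigram log-probability table stands in for GPT-2, each leaked token is used at most once, and the reordering step (3) is omitted.

```python
def beam_search(candidates, bigram_logp, max_len, beam_width=3, unk_logp=-10.0):
    """Order leaked candidate tokens into a sentence with beam search.

    bigram_logp maps (previous_token, token) to a log-probability and is a toy
    stand-in for a pretrained LM; unseen pairs receive unk_logp."""
    tokens = sorted(candidates)  # fixed order makes tie-breaking deterministic
    beams = [([], 0.0)]
    for _ in range(max_len):
        expanded = []
        for seq, score in beams:
            prev = seq[-1] if seq else "<s>"
            for tok in tokens:
                if tok in seq:  # toy simplification: each leaked token is used at most once
                    continue
                expanded.append((seq + [tok], score + bigram_logp.get((prev, tok), unk_logp)))
        if not expanded:
            break
        expanded.sort(key=lambda pair: pair[1], reverse=True)
        beams = expanded[:beam_width]
    return beams[0][0]

# Bag of words leaked in Figure 1(b) and a hypothetical toy LM.
bag = {"New", "is", "He", "years", "old", "20", "lives", "in", "York", "Tom", "."}
toy_lm = {("<s>", "Tom"): -0.1, ("Tom", "lives"): -0.1, ("lives", "in"): -0.1,
          ("in", "New"): -0.1, ("New", "York"): -0.1, ("York", "."): -0.1}
print(" ".join(beam_search(bag, toy_lm, max_len=6)))  # Tom lives in New York .
```

With byte embeddings the bag passed to this step is far larger and noisier, as in Figure 1(c), so the language model has many more plausible sentences to choose among.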

