Instruction Tuning for Large Language Models: A Survey

Shengyu Zhang♠, Linfeng Dong♠, Xiaoya Li♣, Sen Zhang♠, Xiaofei Sun♠, Shuhe Wang♣, Jiwei Li♠, Runyi Hu♠, Tianwei Zhang▲, Fei Wu♠ and Guoyin Wang♦

Abstract

This paper surveys research works in the quickly advancing field of instruction tuning (IT), a crucial technique to enhance the capabilities and controllability of large language models (LLMs). Instruction tuning refers to the process of further training LLMs on a dataset consisting of (INSTRUCTION, OUTPUT) pairs in a supervised fashion, which bridges the gap between the next-word prediction objective of LLMs and the users’ objective of having LLMs adhere to human instructions. In this work, we make a systematic review of the literature, including the general methodology of IT, the construction of IT datasets, the training of IT models, and applications to different modalities and domains, along with an analysis of aspects that influence the outcome of IT (e.g., generation of instruction outputs, size of the instruction dataset, etc.). We also review the potential pitfalls of IT and the criticism against it, along with efforts pointing out current deficiencies of existing strategies, and suggest some avenues for fruitful research.

1 Introduction

The field of large language models (LLMs) has witnessed remarkable progress in recent years. LLMs such as GPT-3 (Brown et al., 2020b), PaLM (Chowdhery et al., 2022), and LLaMA (Touvron et al., 2023a) have demonstrated impressive capabilities across a wide range of natural language tasks (Zhao et al., 2021; Wang et al., 2022b, 2023a; Wan et al., 2023; Sun et al., 2023c; Wei et al., 2023; Li et al., 2023a; Gao et al., 2023a; Yao et al., 2023; Yang et al., 2022a; Qian et al., 2022; Lee et al., 2022; Yang et al., 2022b; Gao et al., 2023b; Ning et al., 2023; Liu et al., 2021b; Wiegreffe et al., 2021; Sun et al., 2023b,a; Adlakha et al., 2023; Chen et al., 2023). One of the major issues with LLMs is the mismatch between the training objective and users’ objective: LLMs are typically trained to minimize the contextual word prediction error on large corpora, while users want the model to "follow their instructions helpfully and safely" (Radford et al., 2019; Brown et al., 2020a; Fedus et al., 2021; Rae et al., 2021; Thoppilan et al., 2022).

♠ Zhejiang University, ♣ Shannon.AI, ▲ Nanyang Technological University, ♦ Amazon. Email: sy_zhang@zju.edu.cn. Project link: https://github.com/xiaoya-li/Instruction-Tuning-Survey

To address this mismatch, instruction tuning

(IT) is proposed, serving as an effective technique

to enhance the capabilities and controllability

of large language models. It involves further

training LLMs using (INSTRUCTION, OUTPUT)

pairs, where INSTRUCTION denotes the human

instruction for the model, and OUTPUT denotes the

desired output that follows the INSTRUCTION. The

benefits of IT are threefold: (1) Finetuning an LLM

on the instruction dataset bridges the gap between

the next-word prediction objective of LLMs and

the users’ objective of instruction following; (2)

IT allows for a more controllable and predictable

model behavior compared to standard LLMs. The

instructions serve to constrain the model’s outputs

to align with the desired response characteristics

or domain knowledge, providing a channel for

humans to intervene with the model’s behaviors;

and (3) IT is computationally efficient and can help

LLMs rapidly adapt to a specific domain without

extensive retraining or architectural changes.

Despite its effectiveness, IT also poses challenges: (1) Crafting high-quality instructions that properly cover the desired target behaviors is non-trivial: existing instruction datasets are usually limited in quantity, diversity, and creativity; (2) there has been increasing concern that IT only improves on tasks that are heavily supported in the IT training dataset (Gudibande et al., 2023); and (3) there has been intense criticism that IT only captures surface-level patterns and styles (e.g., the output format) rather than comprehending and learning the task (Kung and Peng, 2023). Improving instruction adherence and handling unanticipated model responses remain open research problems. These challenges highlight the importance of further investigation, analysis, and summarization in this field, to optimize the fine-tuning process and better understand the behavior of instruction fine-tuned LLMs.

arXiv:2308.10792v4 [cs.CL] 9 Oct 2023

In the literature, there has been increasing research interest in the analysis and discussion of LLMs, including pre-training methods (Zhao et al., 2023), reasoning abilities (Huang and Chang, 2022), and downstream applications (Yang et al., 2023; Sun et al., 2023b), but rarely on the topic of LLM instruction finetuning. This survey attempts to fill this gap, organizing the most up-to-date state of knowledge on this quickly advancing field. Specifically,

• Section 2 presents the general methodology employed in instruction fine-tuning.

• Section 3 outlines the construction process of commonly used, representative IT datasets.

• Section 4 presents representative instruction-finetuned models.

• Section 5 reviews multi-modality techniques and datasets for instruction tuning, including images, speech, and video.

• Section 6 reviews efforts to adapt LLMs to different domains and applications using the IT strategy.

• Section 7 reviews explorations to make instruction fine-tuning more efficient, reducing the computational and time costs associated with adapting large models.

• Section 8 presents the evaluation of IT models and analysis of them, along with criticism against them.

2 Methodology

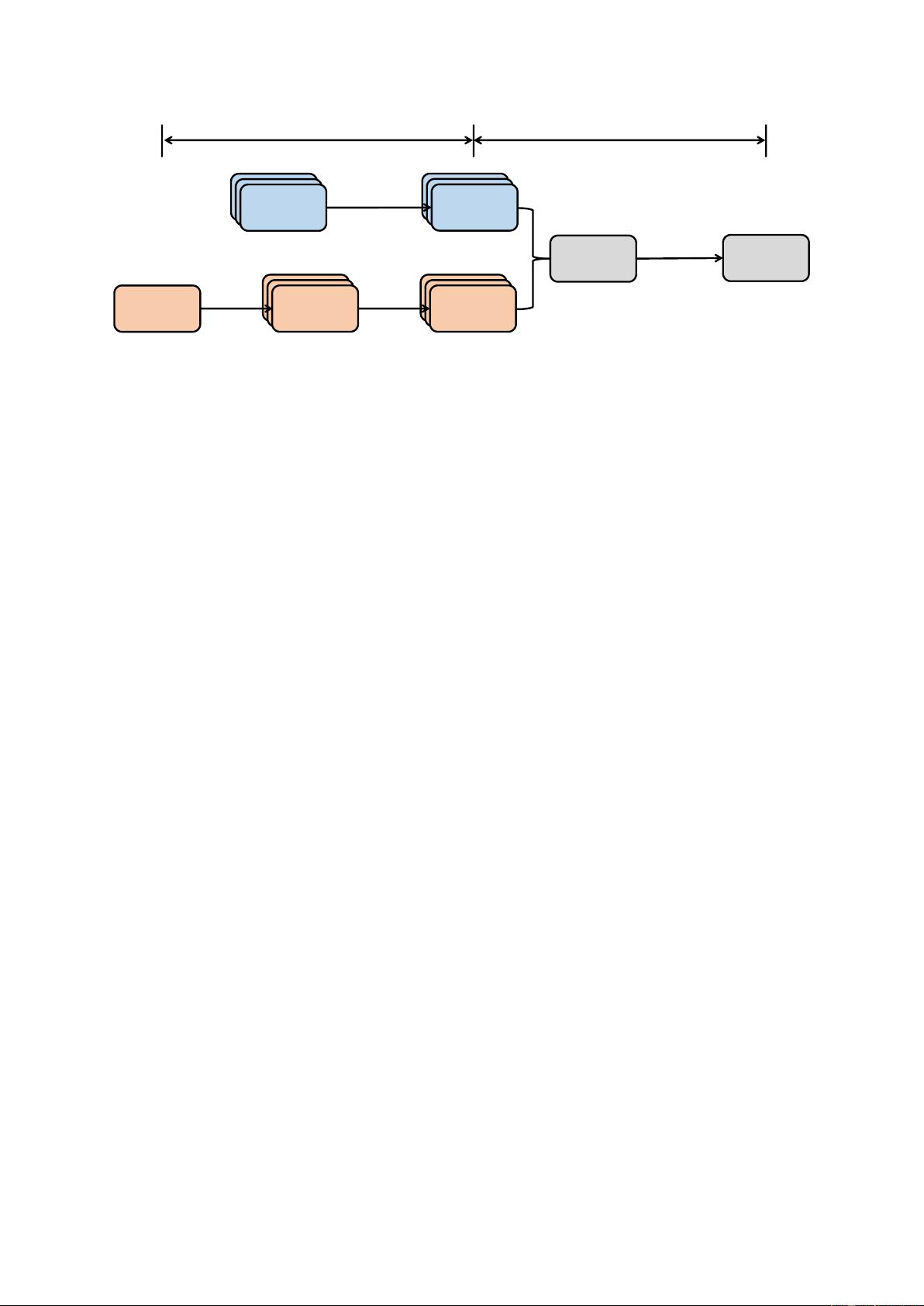

In this section, we describe the general pipeline

employed in instruction tuning.

2.1 Instruction Dataset Construction

Each instance in an instruction dataset consists of

three elements: an instruction, which is a natural

language text sequence to specify the task (e.g.,

write a thank-you letter to XX for XX, write a blog

on the topic of XX, etc.); an optional input which

provides supplementary information for context;

and an anticipated output based on the instruction

and the input.
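As a minimal sketch of the three-element instance described above, the structure can be written as a small record type. The field names follow the text; the class name, the helper `render_prompt`, and the "Instruction:/Input:/Output:" layout are illustrative assumptions rather than a format prescribed by any particular dataset.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class InstructionInstance:
    instruction: str              # natural-language task specification
    output: str                   # anticipated output for the instruction (and input)
    input: Optional[str] = None   # optional supplementary context


def render_prompt(instance: InstructionInstance) -> str:
    """Join the instruction and the optional input into one prompt string.

    The layout used here is a hypothetical convention, not a standard.
    """
    parts = [f"Instruction: {instance.instruction}"]
    if instance.input:
        parts.append(f"Input: {instance.input}")
    parts.append("Output:")
    return "\n".join(parts)


example = InstructionInstance(
    instruction="Write a thank-you letter to XX for XX.",
    output="Dear XX, thank you for ...",
)
print(render_prompt(example))
```

Because the input is optional, the rendered prompt simply omits the "Input:" line when no supplementary context is given.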

There are generally two methods for

constructing instruction datasets:

• Data integration from annotated natural language datasets. In this approach, (instruction, output) pairs are collected from existing annotated natural language datasets by using templates to transform text-label pairs to (instruction, output) pairs. Datasets such as Flan (Longpre et al., 2023) and P3 (Sanh et al., 2021) are constructed based on the data integration strategy.

• Generating outputs using LLMs. An alternate way to quickly gather the desired outputs to given instructions is to employ LLMs such as GPT-3.5-Turbo or GPT-4 instead of manually collecting the outputs. Instructions can come from two sources: (1) manually collected; or (2) expanded from a small set of handwritten seed instructions using LLMs. Next, the collected instructions are fed to LLMs to obtain outputs. Datasets such as InstructWild (Xue et al., 2023) and Self-Instruct (Wang et al., 2022c) are generated following this approach.
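The data integration strategy can be sketched as follows, assuming a binary sentiment dataset stored as (text, label) rows. The template string, the label verbalizer, and the function name are hypothetical and serve only to illustrate how a template turns text-label pairs into (instruction, output) pairs.

```python
from typing import Dict, List, Tuple

# Hypothetical instruction template and label verbalizer for a sentiment task.
TEMPLATE = "Determine whether the sentiment of the sentence '{text}' is positive or negative."
VERBALIZER: Dict[int, str] = {0: "negative", 1: "positive"}


def to_instruction_pairs(rows: List[Tuple[str, int]]) -> List[Dict[str, str]]:
    """Turn (text, label) rows into (instruction, output) records."""
    return [
        {"instruction": TEMPLATE.format(text=text), "output": VERBALIZER[label]}
        for text, label in rows
    ]


pairs = to_instruction_pairs([("He likes the cat.", 1), ("The plot was dull.", 0)])
for pair in pairs:
    print(pair)
```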

For multi-turn conversational IT datasets, we

can have large language models self-play different

roles (user and AI assistant) to generate messages

in a conversational format (Xu et al., 2023b).

2.2 Instruction Tuning

Based on the collected IT dataset, a pretrained model can be directly fine-tuned in a fully supervised manner, where given the instruction and the input, the model is trained by predicting each token in the output sequentially.
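One common way to realize this objective, shown here as a sketch under stated assumptions rather than as the procedure of any specific model, is to concatenate prompt and output tokens and exclude the prompt positions from the loss. The token ids below are made up, and the value -100 is used because it is the default `ignore_index` of PyTorch's `CrossEntropyLoss`; the function names are hypothetical.

```python
from typing import List, Tuple

IGNORE_INDEX = -100  # label value skipped by the loss (PyTorch CrossEntropyLoss default)


def build_training_example(
    prompt_ids: List[int], output_ids: List[int]
) -> Tuple[List[int], List[int]]:
    """Concatenate prompt and output; only output tokens keep a real label."""
    input_ids = prompt_ids + output_ids
    labels = [IGNORE_INDEX] * len(prompt_ids) + list(output_ids)
    return input_ids, labels


def shift_for_next_token(
    input_ids: List[int], labels: List[int]
) -> Tuple[List[int], List[int]]:
    """Align the input at position t with the label at position t + 1."""
    return input_ids[:-1], labels[1:]


# Toy ids: three prompt tokens (instruction + input) and two output tokens.
input_ids, labels = build_training_example([11, 12, 13], [21, 22])
model_inputs, targets = shift_for_next_token(input_ids, labels)
print(model_inputs)
print(targets)
```

After the shift, the last prompt token is paired with the first output token, so every output token is predicted from the instruction, the input, and the preceding output tokens.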

3 Datasets

In this section, we detail widely-used instruction

tuning datasets in the community. Table 1 gives an

overview of the datasets.

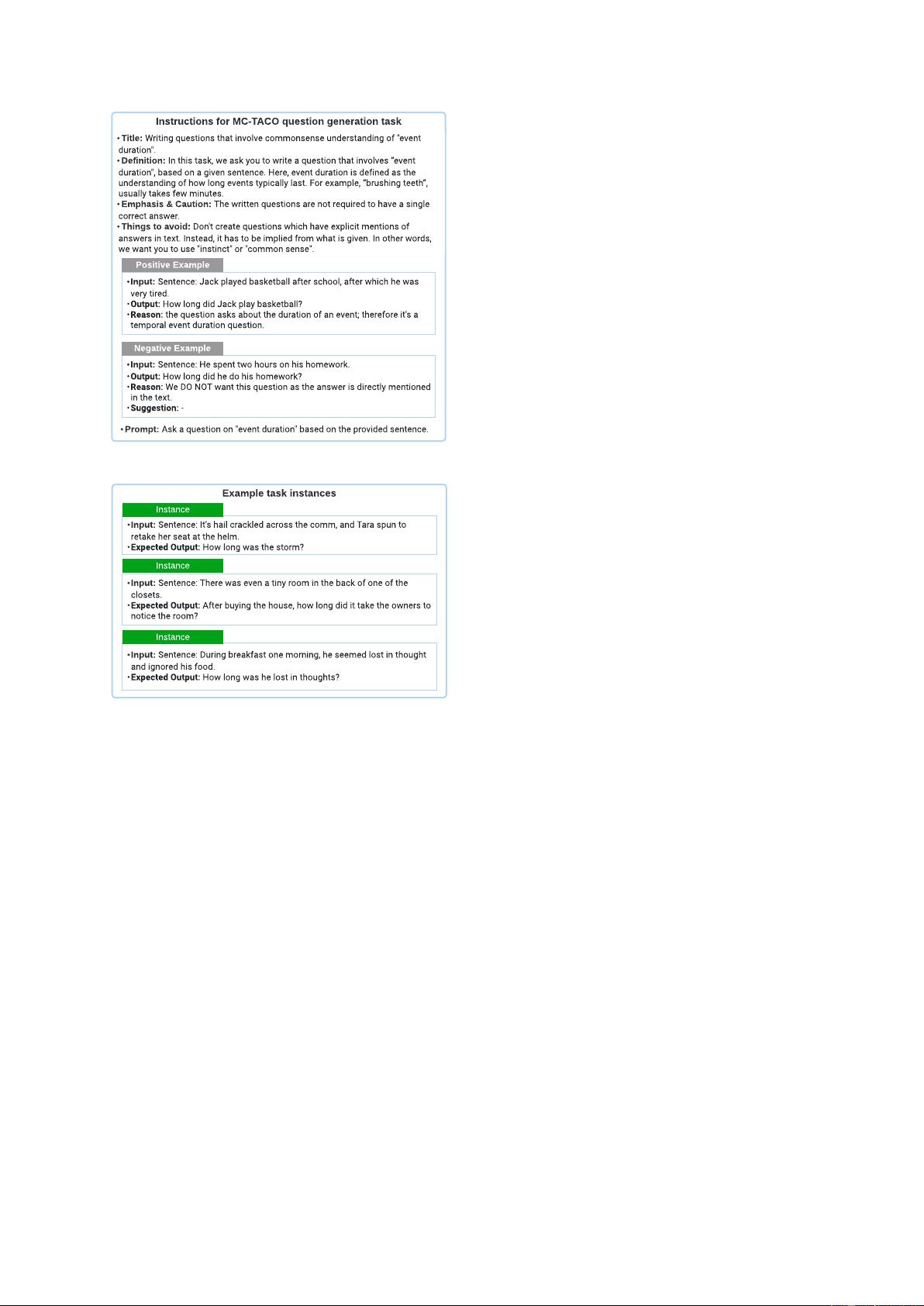

3.1 Natural Instructions

Natural Instructions (Mishra et al., 2021) is a human-crafted English instruction dataset consisting of 193K instances, coming from 61 distinct NLP tasks. The dataset comprises "instructions" and "instances". Each instance in the "instructions" is a task description consisting of 7 components: title, definition, things to avoid, emphasis/caution, prompt, positive example, and negative example. Subfigure (a) in Figure 2 gives an example of the "instructions". "Instances" consist of ("input", "output") pairs, which are the input data and the textual result that follows the given instruction correctly. Subfigure (b) in Figure 2 gives an example of the instances.

[Figure 1: General pipeline of instruction tuning. Step 1, Instruction Dataset Construction: text-label pairs are converted to instruction-output pairs via templates, or seed instructions are expanded into more instructions and outputs with ChatGPT & GPT4. Step 2, Instruction Tuning: supervised finetuning of the LLM.]

The data comes from existing NLP datasets covering 61 tasks. The authors collected the "instructions" by referring to the annotation instruction file of each dataset. Next, the authors constructed the "instances" by unifying data instances across all NLP datasets into ("input", "output") pairs.

3.2 P3

P3 (Public Pool of Prompts) (Sanh et al., 2021) is

an instruction fine-tuning dataset constructed by

integrating 170 English NLP datasets and 2,052

English prompts. Prompts, which are sometimes

named task templates, are functions that map a data

instance in a conventional NLP task (e.g., question

answering, text classification) to a natural language

input-output pair.

Each instance in P3 has three components: "inputs", "answer_choices", and "targets". "Inputs" is a sequence of text that describes the task in natural language (e.g., "If he like Mary is true, is it also true that he like Mary’s cat?"). "Answer choices" is a list of text strings that are applicable responses to the given task (e.g., ["yes", "no", "undetermined"]). "Targets" is a text string that is the correct response to the given "inputs" (e.g., "yes"). The authors built PromptSource, a tool for creating high-quality prompts collaboratively and an archive for open-sourcing high-quality prompts. The P3 dataset was built by randomly sampling a prompt from the multiple prompts in PromptSource and mapping each instance into an ("inputs", "answer choices", "targets") triplet.

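The construction of a P3-style triplet can be sketched as below: a prompt is sampled at random from several candidates and applied to one raw example. The two prompt wordings, the dictionary keys of the raw example, and the function name are hypothetical; this is not the PromptSource API, only an illustration of the mapping described above.

```python
import random
from typing import Any, Callable, Dict, List

# Each prompt maps a raw example to an "inputs" string (hypothetical wordings).
PROMPTS: List[Callable[[Dict[str, Any]], str]] = [
    lambda ex: f"If {ex['premise']} is true, is it also true that {ex['hypothesis']}?",
    lambda ex: f"Suppose that {ex['premise']}. Can we infer that {ex['hypothesis']}?",
]
ANSWER_CHOICES = ["yes", "no", "undetermined"]


def to_p3_instance(example: Dict[str, Any], rng: random.Random) -> Dict[str, Any]:
    """Sample one prompt at random and build the three-component instance."""
    prompt = rng.choice(PROMPTS)
    return {
        "inputs": prompt(example),
        "answer_choices": ANSWER_CHOICES,
        # Assumption: the raw label is an index into ANSWER_CHOICES.
        "targets": ANSWER_CHOICES[example["label"]],
    }


raw = {"premise": "he likes Mary", "hypothesis": "he likes Mary's cat", "label": 2}
instance = to_p3_instance(raw, random.Random(0))
print(instance)
```

Sampling among several prompts for the same underlying example is what gives the resulting dataset variation in how a task is phrased.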
3.3 xP3

xP3 (Crosslingual Public Pool of

Prompts) (Muennighoff et al., 2022) is a

multilingual instruction dataset consisting of 16

diverse natural language tasks in 46 languages.

Each instance in the dataset has two components:

"inputs" and "targets". "Inputs" is a task description

in natural language. "Targets" is the textual result

that follows the "inputs" instruction correctly.

The original data in xP3 comes from three sources: the English instruction dataset P3, 4 English unseen tasks in P3 (e.g., translation, program synthesis), and 30 multilingual NLP datasets. The authors built the xP3 dataset by sampling human-written task templates from PromptSource and then filling the templates to transform diverse NLP tasks into a unified formalization. For example, a task template for the natural language inference task is "If Premise is true, is it also true that Hypothesis?", with the answers "yes", "maybe", and "no" corresponding to the original task labels "entailment (0)", "neutral (1)" and "contradiction (2)".

3.4 Flan 2021

Flan 2021 (Longpre et al., 2023) is an English instruction dataset constructed by transforming 62 widely-used NLP benchmarks (e.g., SST-2, SNLI, AG News, MultiRC) into language input-output pairs. Each instance in Flan 2021 has "input" and "target" components. "Input" is a sequence of text that describes a task via a natural language instruction (e.g., "determine whether the sentiment of the sentence ’He likes the cat.’ is positive or negative"). "Target" is a textual result that executes the "input" instruction correctly (e.g., "positive"). The authors transformed conventional NLP datasets into input-target pairs by: Step 1: manually composing instruction and target templates; Step 2: filling templates with data instances from the dataset.

Instructions for MC-TACO question generation task

- Title: Writing questions that involve commonsense understanding of "event duration".
- Definition: In this task, we ask you to write a question that involves "event duration", based on a given sentence. Here, event duration is defined as the understanding of how long events typically last. For example, "brushing teeth", usually takes few minutes.
- Emphasis & Caution: The written questions are not required to have a single correct answer.
- Things to avoid: Don't create questions which have explicit mentions of answers in text. Instead, it has to be implied from what is given. In other words, we want you to use "instinct" or "common sense".
- Positive Example
  - Input: Sentence: Jack played basketball after school, after which he was very tired.
  - Output: How long did Jack play basketball?
  - Reason: the question asks about the duration of an event; therefore it's a temporal event duration question.
- Negative Example
  - Input: Sentence: He spent two hours on his homework.
  - Output: How long did he do his homework?
  - Reason: We DO NOT want this question as the answer is directly mentioned in the text.
  - Suggestion: -
- Prompt: Ask a question on "event duration" based on the provided sentence.

(a) An example of INSTRUCTIONS in the Natural Instructions dataset.

Example task instances

- Instance
  - Input: Sentence: It's hail crackled across the comm, and Tara spun to retake her seat at the helm.
  - Expected Output: How long was the storm?
- Instance
  - Input: Sentence: There was even a tiny room in the back of one of the closets.
  - Expected Output: After buying the house, how long did it take the owners to notice the room?
- Instance
  - Input: Sentence: During breakfast one morning, he seemed lost in thought and ignored his food.
  - Expected Output: How long was he lost in thoughts?

(b) An example of INSTANCES in the Natural Instructions dataset.

Figure 2: The figure is adapted from Mishra et al. (2021).

3.5 Unnatural Instructions

Unnatural Instructions (Honovich et al., 2022) is an instruction dataset with approximately 240,000 instances, constructed using InstructGPT (text-davinci-002) (Ouyang et al., 2022). Each instance in the dataset has four components: INSTRUCTION, INPUT, CONSTRAINTS, and OUTPUT. "Instruction" is a description of the instructing task in natural language. "Input" is an argument in natural language that instantiates the instruction task. "Constraints" are restrictions of the output space of the task. "Output" is a sequence of text that correctly executes the instruction given the input argument and the constraints.

The authors first sampled the seed instructions from the Super-Natural Instructions dataset (Wang et al., 2022d), which is manually constructed. Then they prompted InstructGPT to elicit a new (instruction, input, constraints) triplet with three seed instructions as demonstrations. Next, the authors expanded the dataset by randomly rephrasing the instruction or the input. The concatenation of instruction, input and constraints was fed to InstructGPT to obtain the output.
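The two prompting stages described above can be sketched as plain string construction. The "Example N / Instruction / Input / Constraints" layout and all function names are illustrative assumptions; the exact prompt format used by the authors is not given in this text, and the model call itself is left out.

```python
from typing import Dict, List


def format_example(ex: Dict[str, str]) -> str:
    """Concatenate instruction, input and constraints (hypothetical layout)."""
    return (
        f"Instruction: {ex['instruction']}\n"
        f"Input: {ex['input']}\n"
        f"Constraints: {ex['constraints']}"
    )


def build_elicitation_prompt(demos: List[Dict[str, str]]) -> str:
    """Show three seed demonstrations and leave a fourth slot for the model."""
    if len(demos) != 3:
        raise ValueError("expected exactly three seed demonstrations")
    blocks = [f"Example {i}\n{format_example(d)}" for i, d in enumerate(demos, 1)]
    blocks.append("Example 4\nInstruction:")
    return "\n\n".join(blocks)


def build_output_prompt(ex: Dict[str, str]) -> str:
    """Prompt used to obtain the output for one generated example."""
    return format_example(ex) + "\nOutput:"


seed = {
    "instruction": "Classify the sentiment of the review.",
    "input": "The food was great.",
    "constraints": "Answer with 'positive' or 'negative'.",
}
print(build_elicitation_prompt([seed, seed, seed]))
print(build_output_prompt(seed))
```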

3.6 Self-Instruct

Self-Instruct (Wang et al., 2022c) is an English instruction dataset with 52K training instructions and 252 evaluation instructions, constructed using InstructGPT (Ouyang et al., 2022). Each data instance consists of "instruction", "input" and "output". "Instruction" is a task definition in natural language (e.g., "Please answer the following question."). "Input" is optional and is used as supplementary content for the instruction (e.g., "Which country’s capital is Beijing?"), and "output" is the textual result that follows the instruction correctly (e.g., "China"). The full dataset is generated based on the following steps:

Step 1. The authors randomly sampled 8 natural language instructions from the 175 seed tasks as examples and prompted InstructGPT to generate more task instructions.

Step 2. The authors determined whether each instruction generated in Step 1 is a classification task. If yes, they used the output-first strategy: they asked InstructGPT to generate all possible options for the output based on the given instruction and randomly selected a particular output category to prompt InstructGPT to generate the corresponding "input" content. For instructions that do not belong to a classification task, the set of possible "output" options is open-ended. For these, the authors proposed to use the input-first strategy, where InstructGPT was prompted to generate the "input" based on the given "instruction" first and then generate the "output" according to the "instruction" and the generated "input".

Step 3. Based on the results of Step 2, the authors used InstructGPT to generate the "input" and "output" for the corresponding instruction tasks using the output-first or input-first strategy.

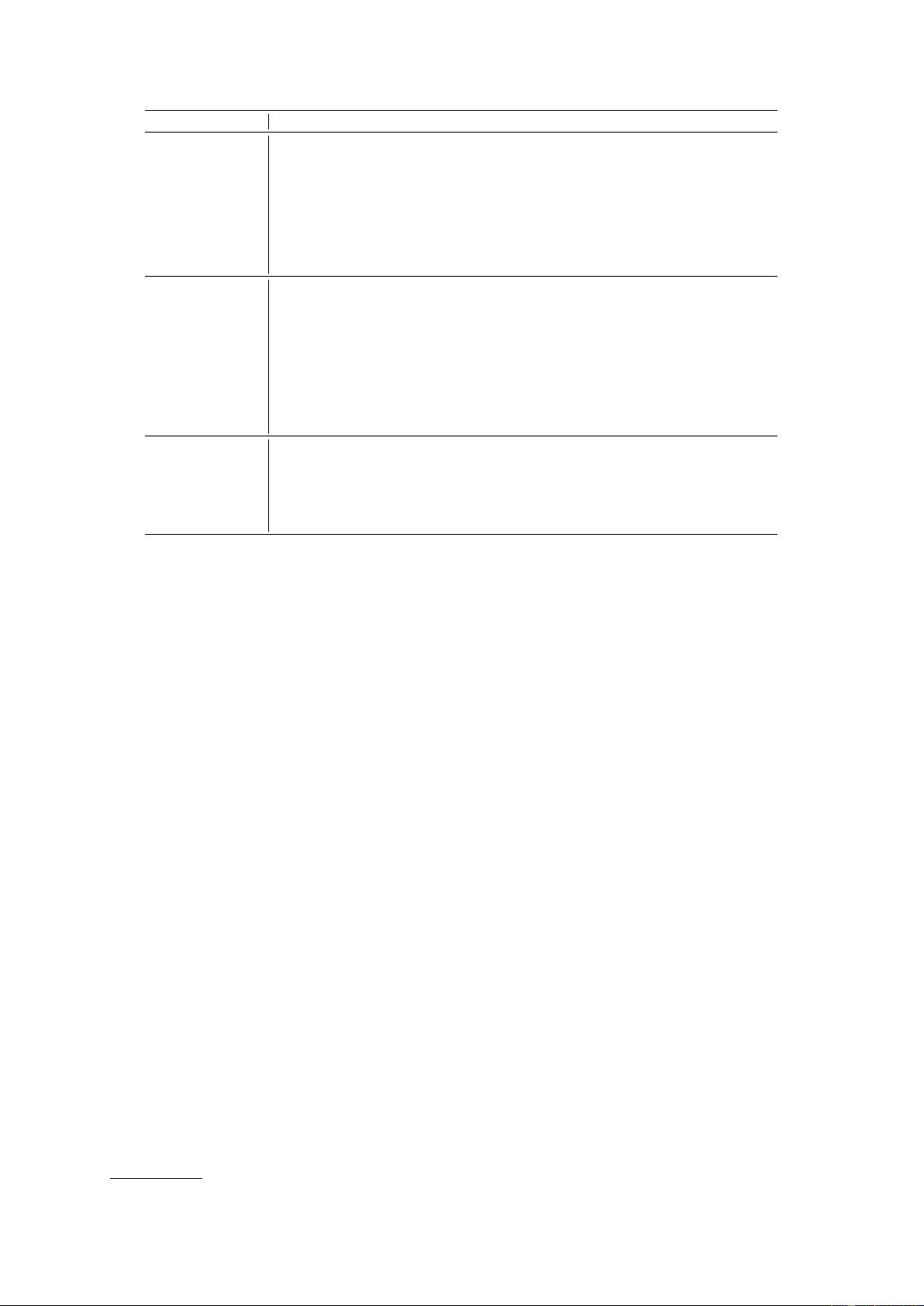

| Type | Dataset Name | # of Instances | # of Tasks | # of Lang | Construction | Open-source |
|---|---|---|---|---|---|---|
| Generalize to unseen tasks | UnifiedQA (Khashabi et al., 2020) [1] | 750K | 46 | En | human-crafted | Yes |
| | OIG (LAION.ai, 2023) [2] | 43M | 30 | En | human-model-mixed | Yes |
| | UnifiedSKG (Xie et al., 2022) [3] | 0.8M | - | En | human-crafted | Yes |
| | Natural Instructions (Mishra et al., 2021) [4] | 193K | 61 | En | human-crafted | Yes |
| | Super-Natural Instructions (Wang et al., 2022d) [5] | 5M | 76 | 55 Lang | human-crafted | Yes |
| | P3 (Sanh et al., 2021) [6] | 12M | 62 | En | human-crafted | Yes |
| | xP3 (Muennighoff et al., 2022) [7] | 81M | 53 | 46 Lang | human-crafted | Yes |
| | Flan 2021 (Longpre et al., 2023) [8] | 4.4M | 62 | En | human-crafted | Yes |
| | COIG (Zhang et al., 2023a) [9] | - | - | - | - | Yes |
| Follow users’ instructions in a single turn | InstructGPT (Ouyang et al., 2022) | 13K | - | Multi | human-crafted | No |
| | Unnatural Instructions (Honovich et al., 2022) [10] | 240K | - | En | InstructGPT-generated | Yes |
| | Self-Instruct (Wang et al., 2022c) [11] | 52K | - | En | InstructGPT-generated | Yes |
| | InstructWild (Xue et al., 2023) [12] | 104K | 429 | - | model-generated | Yes |
| | Evol-Instruct (Xu et al., 2023a) [13] | 52K | - | En | ChatGPT-generated | Yes |
| | Alpaca (Taori et al., 2023) [14] | 52K | - | En | InstructGPT-generated | Yes |
| | LogiCoT (Liu et al., 2023a) [15] | - | 2 | En | GPT-4-generated | Yes |
| | Dolly (Conover et al., 2023) [16] | 15K | 7 | En | human-crafted | Yes |
| | GPT-4-LLM (Peng et al., 2023) [17] | 52K | - | En&Zh | GPT-4-generated | Yes |
| | LIMA (Zhou et al., 2023) [18] | 1K | - | En | human-crafted | Yes |
| Offer assistance like humans across multiple turns | ChatGPT (OpenAI, 2022) | - | - | Multi | human-crafted | No |
| | Vicuna (Chiang et al., 2023) | 70K | - | En | user-shared | No |
| | Guanaco (JosephusCheung, 2021) [19] | 534,530 | - | Multi | model-generated | Yes |
| | OpenAssistant (Köpf et al., 2023) [20] | 161,443 | - | Multi | human-crafted | Yes |
| | Baize v1 (?) [21] | 111.5K | - | En | ChatGPT-generated | Yes |
| | UltraChat (Ding et al., 2023a) [22] | 675K | - | En&Zh | model-generated | Yes |

[1] https://github.com/allenai/unifiedqa
[2] https://github.com/LAION-AI/Open-Instruction-Generalist
[3] https://github.com/hkunlp/unifiedskg
[4] https://github.com/allenai/natural-instructions-v1
[5] https://github.com/allenai/natural-instructions
[6] https://huggingface.co/datasets/bigscience/P3
[7] https://github.com/bigscience-workshop/xmtf
[8] https://github.com/google-research/FLAN
[9] https://github.com/BAAI-Zlab/COIG
[10] https://github.com/orhonovich/unnatural-instructions
[11] https://github.com/yizhongw/self-instruct
[12] https://github.com/XueFuzhao/InstructionWild
[13] https://github.com/nlpxucan/evol-instruct
[14] https://github.com/tatsu-lab/stanford_alpaca
[15] https://github.com/csitfun/LogiCoT
[16] https://huggingface.co/datasets/databricks/databricks-dolly-15k
[17] https://github.com/Instruction-Tuning-with-GPT-4/GPT-4-LLM
[18] https://huggingface.co/datasets/GAIR/lima
[19] https://huggingface.co/datasets/JosephusCheung/GuanacoDataset
[20] https://github.com/LAION-AI/Open-Assistant
[21] https://github.com/project-baize/baize-chatbot
[22] https://github.com/thunlp/UltraChat#data

Table 1: An overview of instruction tuning datasets.

Step 4. The authors post-processed the generated instruction tasks (e.g., filtering out similar instructions and removing duplicate data for input and output) and obtained a final set of 52K English instructions.
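The branching between the output-first and input-first strategies, followed by duplicate removal, can be sketched as below. This is a simplified illustration under stated assumptions: `generate` is a hypothetical stand-in for a call to InstructGPT, the prompt wordings are invented, and exact-match deduplication after normalization stands in for the similarity filtering mentioned in Step 4.

```python
import random
from typing import Callable, Dict, List

Generate = Callable[[str], str]  # hypothetical stand-in for an LLM call


def make_instance(
    instruction: str, is_classification: bool, generate: Generate, rng: random.Random
) -> Dict[str, str]:
    """Create one (instruction, input, output) instance."""
    if is_classification:
        # Output-first: list the labels, pick one, then write a matching input.
        raw = generate(f"List the possible output labels for: {instruction}")
        labels = [s.strip() for s in raw.split(",") if s.strip()]
        output = rng.choice(labels)
        input_text = generate(
            f"Write an input for: {instruction}\nThe label must be: {output}"
        )
    else:
        # Input-first: write an input, then the output for instruction + input.
        input_text = generate(f"Write an input for: {instruction}")
        output = generate(
            f"Instruction: {instruction}\nInput: {input_text}\nOutput:"
        )
    return {"instruction": instruction, "input": input_text, "output": output}


def remove_duplicates(instances: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Drop instances that repeat an earlier one, ignoring case and spacing."""
    seen = set()
    kept = []
    for inst in instances:
        key = tuple(
            " ".join(inst[field].lower().split())
            for field in ("instruction", "input", "output")
        )
        if key not in seen:
            seen.add(key)
            kept.append(inst)
    return kept
```

In use, `generate` would wrap a real model call, and the classification decision would itself come from the model as described in Step 2.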

3.7 Evol-Instruct

Evol-Instruct (Xu et al., 2023a) is an English instruction dataset consisting of a training set with 52K instructions and an evaluation set with 218 instructions. The authors prompted ChatGPT (OpenAI, 2022), available at https://chat.openai.com/chat, to rewrite instructions using the in-depth and in-breadth evolving strategies. The in-depth evolving strategy contains five types of operations, e.g., adding constraints, increasing reasoning steps, and complicating input. The in-breadth evolving strategy upgrades the simple instruction to a more complex one or directly generates a new instruction to increase diversity. The authors first used 52K (instruction, response) pairs as the initial set. Then they randomly sampled an evolving strategy and asked ChatGPT to rewrite the initial instruction based on the chosen evolving strategy. The authors employed ChatGPT and rules to filter out non-evolved instruction pairs and updated the dataset with newly generated evolved instruction pairs. After repeating the above process 4 times, the authors collected 250K instruction pairs. Besides the training set, the authors collected 218 human-generated instructions from real scenarios (e.g., open-source projects, platforms, and forums), called the Evol-Instruct test set.
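The iterative evolution loop can be sketched as follows. This is a minimal illustration, not the authors' implementation: `rewrite` is a hypothetical stand-in for the ChatGPT call, only the operations named in the text are listed as strategies, the filter rule in `is_evolved` is invented, and keeping a non-evolved instruction for the next round is an assumption.

```python
import random
from typing import Callable, List

# Operations named in the text; the remaining in-depth operations are not listed there.
STRATEGIES = [
    "in-depth: add constraints",
    "in-depth: increase reasoning steps",
    "in-depth: complicate input",
    "in-breadth: generate a new instruction",
]

Rewrite = Callable[[str, str], str]  # (instruction, strategy) -> rewritten instruction


def is_evolved(original: str, candidate: str) -> bool:
    """Hypothetical rule-based filter: reject empty or unchanged rewrites."""
    cleaned = candidate.strip()
    return bool(cleaned) and cleaned.lower() != original.strip().lower()


def evolve(
    seed: List[str], rewrite: Rewrite, rng: random.Random, rounds: int = 4
) -> List[str]:
    """Evolve instructions for a number of rounds and collect every kept rewrite."""
    collected = list(seed)
    current = list(seed)
    for _ in range(rounds):
        next_round = []
        for instruction in current:
            strategy = rng.choice(STRATEGIES)  # randomly sampled evolving strategy
            candidate = rewrite(instruction, strategy)
            if is_evolved(instruction, candidate):
                collected.append(candidate)
                next_round.append(candidate)
            else:
                # Assumption: a failed rewrite leaves the instruction unchanged.
                next_round.append(instruction)
        current = next_round
    return collected
```

With the default of four rounds, each seed instruction can contribute up to four evolved variants in addition to itself, which is how the collected set grows to several times the size of the initial one.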
