
CDNs Content Outsourcing

via Generalized Communities

Dimitrios Katsaros, George Pallis, Konstantinos Stamos, Athena Vakali,

Antonis Sidiropoulos, and Yannis Manolopoulos

Abstract—Content distribution networks (CDNs) balance costs and quality in services related to content delivery. Devising an efficient

content outsourcing policy is crucial since, based on such policies, CDN providers can provide client-tailored content, improve

performance, and result in significant economical gains. Earlier content outsourcing approaches may often prove ineffective since they

drive prefetching decisions by assuming knowledge of content popularity statistics, which are not always available and are extremely

volatile. This work addresses this issue, by proposing a novel self-adaptive technique under a CDN framework on which outsourced

content is identified with no a priori knowledge of (earlier) request statistics. This is employed by using a structure-based approach

identifying coherent clusters of “correlated” Web server content objects, the so-called Web page communities. These communities are

the core outsourcing unit, and in this paper, a detailed simulation experimentation has shown that the proposed technique is robust and

effective in reducing user-perceived latency as compared with competing approaches, i.e., two communities-based approaches, Web

caching, and non-CDN.

Index Terms—Caching, replication, Web communities, content distribution networks, social network analysis.


1 INTRODUCTION

DISTRIBUTING information to Web users in an efficient and

cost-effective manner is a challenging problem, espe-

cially, under the increasing requirements emerging from a

variety of modern applications, e.g., voice-over-IP and

streaming media. Eager audiences embracing the “digital

lifestyle” are requesting greater and greater volumes of

content on a daily basis. For instance, the Internet video site

YouTube hits more than 100 million videos per day.

1

Estimations of YouTube’s bandwidth go from 25 TB/day

to 200 TB/day. At the same time, more and more

applications (such as e-commerce and e-learning) are relying

on the Web but with high sensitivity to delays. A delay even

of a few milliseconds in a Web server content may be

intolerable. At first, solutions such as Web caching and

replication were considered as the key to satisfy such

growing demands and expectations. However, such solu-

tions (e.g., Web caching) have become obsolete due to their

inability to keep up with the growing demands and the

unexpected Web-related phenomena such as the flash-crowd

events [17] occurring when numerous users access a

Web server content simultaneously (now often occurring on

the Web due to its globalization and wide adoption).

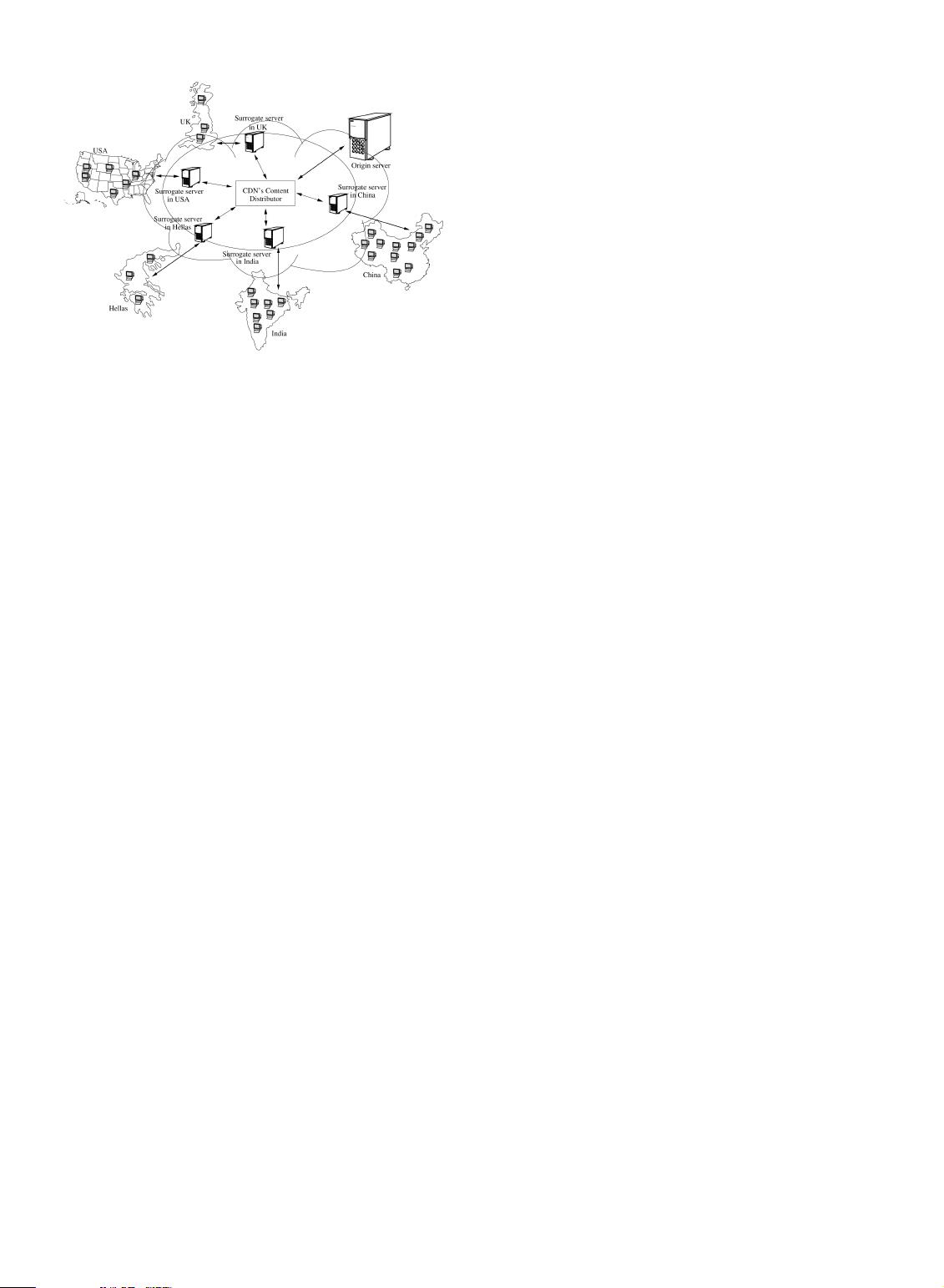

Content distribution networks (CDNs) have been pro-

posed to meet such challenges by providing a scalable and

cost-effective mechanism for accelerating the delivery of the

Web content [7], [27]. A CDN

2

is an overlay network across

Internet (Fig. 1), which consists of a set of surrogate servers

(distributed around the world), routers, and network

elements. Surrogate servers are the key elements in a

CDN, acting as proxy caches that serve directly cached

content to clients. They store copies of identical content,

such that clients’ requests are satisfied by the most

appropriate site. Once a client requests content on an

origin server (managed by a CDN), his request is directed to

the appropriate CDN’s surrogate server. This results in an

improvement to both the response time (the requested

content is nearest to the client) and the system throughput

(the workload is distributed to several servers).
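The redirection step described above can be illustrated with a minimal sketch. It assumes a client-side view in which each surrogate server has a known latency and a known set of cached objects; the `Surrogate` type, the server names, and the latency figures are hypothetical and are not taken from the paper. Real CDNs use more elaborate request-routing mechanisms.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Surrogate:
    name: str
    latency_ms: float      # hypothetical latency from the client to this server
    cached: frozenset      # identifiers of the objects replicated on this server


def redirect(requested_object, surrogates, origin_latency_ms):
    """Pick the lowest-latency surrogate that holds the object.

    Falls back to the origin server when no surrogate has a copy.
    """
    holders = [s for s in surrogates if requested_object in s.cached]
    if not holders:
        return ("origin", origin_latency_ms)
    best = min(holders, key=lambda s: s.latency_ms)
    return (best.name, best.latency_ms)


# Illustrative data only.
surrogates = [
    Surrogate("edge-eu", 20.0, frozenset({"/index.html", "/news.html"})),
    Surrogate("edge-us", 85.0, frozenset({"/index.html"})),
]

print(redirect("/index.html", surrogates, origin_latency_ms=200.0))
print(redirect("/video.mp4", surrogates, origin_latency_ms=200.0))
```

The sketch only captures the "nearest copy" idea; it ignores server load, which the text also names as a benefit of distributing requests over several servers.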

As emphasized in [4] and [34], CDNs significantly

reduce the bandwidth requirements for Web service

providers, since the requested content is closer to user

and there is no need to traverse all of the congested pipes

and peering points. So, reducing bandwidth reduces cost

for the Web service providers. CDNs also provide scalable

Web application hosting techniques (such as edge comput-

ing [10]) in order to accelerate the dynamic generation of

Web pages; instead of replicating the dynamic pages

generated by a Web server, they replicate the means of

generating pages over multiple surrogate servers [34].

CDNs are expected to play a key role in the future of the

Internet infrastructure since their high user performance

IEEE TRANSACTIONS ON KNOWLEDGE AND DATA ENGINEERING, VOL. 21, NO. 1, JANUARY 2009 1

. D. Katsaros is with the Department of Computer and Communication

Engineering, University of Thessaly, Volos, Greece.

E-mail: dkatsar@inf.uth.gr.

. K. Stamos, A. Vakali, A. Sidiropoulos, and Y. Manolopoulos are with the

Department of Informatics, Aristotle University of Thessaloniki, 54124,

Thessaloniki, Greece.

E-mail: {kstamos, avakali, asidirop, manolopo}@csd.auth.gr.

. G. Pallis is with the Department of Computer Science, University of

Cyprus, 20537, Nicosia, Cyprus. E-mail: gpallis@cs.ucy.ac.cy.

Manuscript received 10 July 2007; revised 9 Feb. 2008; accepted 24 Apr. 2008;

published online 6 May 2008.

For information on obtaining reprints of this article, please send e-mail to:

tkde@computer.org, and reference IEEECS Log Number

TKDE-2007-07-0347.

Digital Object Identifier no. 10.1109/TKDE.2008.92.

1. http://www.youtube.com/.

2. A survey on the status and trends of CDNs is given in [36]. Detailed

information about the CDNs’ mechanisms is presented in [30].

1041-4347/09/$25.00 © 2009 IEEE Published by the IEEE Computer Society

and cost savings have urged many Web entrepreneurs to

make contracts with CDNs.

3

1.1 Motivation and Paper’s Contributions

Currently, CDNs invest in large-scale infrastructure (surro-

gate servers, network resources, etc.) to provide high data

quality for their clients. To recoup their investment, CDNs

charge their customers (i.e., Web server owners) based on

two criteria: the amount of content (which has been

outsourced) and their traffic records (measured by the

content delivery from surrogate servers to clients). Accord-

ing to a CDN market report,

3

the average cost per GB of

streaming video transferred in 2004 through a CDN was

$1.75, while the average price to deliver a GB of Internet

radio was $1. Given that the bandwidth usage of Web

servers content may be huge (i.e., the bandwidth usage of

YouTube is about 6 petabytes per month), it is evident that

this cost may be extremely high.
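To give a sense of scale, the figures quoted above can be combined in a short back-of-the-envelope calculation. This is only an illustration: it assumes decimal units (1 PB = 10^6 GB) and that the entire 6 PB per month would be billed at the 2004 streaming-video rate of $1.75 per GB, which the text does not claim.

```python
GB_PER_PB = 10**6  # assumption: decimal units, 1 PB = 1,000,000 GB


def monthly_delivery_cost(petabytes_per_month, price_per_gb):
    """Monthly cost in dollars if all traffic is billed at a flat per-GB price."""
    return petabytes_per_month * GB_PER_PB * price_per_gb


# Figures from the text: about 6 PB per month, $1.75 per GB of streaming video.
cost = monthly_delivery_cost(6, 1.75)
print(f"${cost:,.0f} per month")  # $10,500,000 per month
```

Under these assumptions the bill would be on the order of ten million dollars per month, which is the kind of magnitude the text refers to.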

Therefore, the proposal of a content outsourcing policy,

which will reduce both the Internet traffic and the replicas

maintenance costs, is a challenging research task due to the

huge-scale, the heterogeneity, the multilevels in the structure, the

hyperlinks and interconnections, and the dynamic and evolving

nature of Web content.

To the best of the authors’ knowledge, earlier approaches

for content outsourcing on CDNs assume knowledge of

content popularity statistics to drive the prefetching

decisions [9], giving an indication of the popularity of

Web resources (a detailed review of relevant work will be

presented in Section 2). Such information though is not

always available, or it is extremely volatile, turning such

methods problematic. The use of popularity statistics has

several drawbacks. First, it requires quite a long time to

collect reliable request statistics for each object. Such a long

interval though may not be available, when a new site is

published to the Internet and should be protected from

“flash crowds” [17]. Moreover, the popularity of each object

varies considerably [4], [9]. In addition, the use of

administratively tuned parameters to select the hot objects

causes additional headaches, since there is no a priori

knowledge about how to set these parameters. Realizing the

limitations of such solutions, Rabinovich and Spatscheck

[30] implied the need for self-tuning content outsourcing

policies. In [32], we initiated the study of this problem by

outsourcing clusters of Web pages. The outsourced clusters

are identified by naively exploring the structure of the Web

site. Results showed that such an approach improves the

CDN’s performance in terms of user-perceived latency and

data redundancy.

The present work continues and improves upon the

authors’ preliminary efforts in [32], focusing on devising a

high-performance outsourcing policy under a CDN frame-

work. In this context, we point out that the following

challenges are involved:

. outsource objects that should be popular for long

time periods,

. refrain from using (locally estimated or server-supplied)

tunable parameters (e.g., number of

clusters) and keywords, which do not adapt well

to changing access distributions, and

. refrain from using popularity statistics which do not

represent effectively the dynamic users’ navigation

behavior. As observed in [9], only 40 percent of the

“popular” objects for one day remain “popular” on

the next day.
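The volatility of popularity mentioned in the last challenge can be made concrete with a small sketch that measures which fraction of one day's top-k objects is still in the top k on the following day. The request counts below are invented purely for illustration (chosen so that the result is 40 percent); they are not data from [9], and the function names are hypothetical.

```python
def popular_set(request_counts, k):
    """Return the k most requested objects (ties broken by object name)."""
    ranked = sorted(request_counts, key=lambda obj: (-request_counts[obj], obj))
    return set(ranked[:k])


def persistence(day1_counts, day2_counts, k):
    """Fraction of day 1's top-k objects that are also in day 2's top k."""
    return len(popular_set(day1_counts, k) & popular_set(day2_counts, k)) / k


# Made-up request counts for eight objects on two consecutive days.
day1 = {"a": 90, "b": 80, "c": 70, "d": 60, "e": 50, "f": 5, "g": 4, "h": 3}
day2 = {"a": 95, "b": 85, "c": 2, "d": 1, "e": 3, "f": 70, "g": 60, "h": 50}

print(persistence(day1, day2, k=5))  # 0.4
```

A policy that replicates day 1's popular set would, in this toy case, hold only two of the five objects that are popular on day 2.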

In accordance with the above challenges, we propose a

novel self-adaptive technique under a CDN framework on

which outsourced content is identified by exploring Web

server content structure and with no a priori knowledge of

(earlier) request statistics. This paper’s contribution is

summarized as follows:

. Identifying content clusters, called Web page commu-

nities, based on the adopted Web graph structure

(where Web pages are nodes and hyperlinks are

edges), such that these communities serve as the core

outsourcing unit for replication. Typically, it can be

considered as a dense subgraph where the number

of edges within a community is larger than the

number of edges between communities. Such struc-

tures exist on the Web [1], [13], [20]—Web servers

content designers (humans or applications) tend to

organize sites into collections of Web pages related

to a common interest—and affect users’ navigation

behavior; a dense linkage implies a higher prob-

ability of selecting a link. Here, we exploit a

quantitative definition for Web page communities

introduced in [32], which is considered to be suitable

for CDNs content outsourcing problem. Our defini-

tion is flexible, allowing overlaps among commu-

nities (a Web page may belong to more than one

community), since a Web page usually covers a wide

range of topics (e.g., a news Web server content) and

cannot be classified by a single community. The

resulting communities are replicated in their entirety

by the CDN’s surrogate servers.

. Defining a parameter-free outsourcing policy, since our

structure-based approach (unlike k-median, dense

k-subgraphs, min-sum, or min-max clustering) does

not require the number of communities as a pre-

determined parameter, but instead, the optimal

number of communities is any value between 1 and

the number of nodes of the Web site graph, depend-

ing on the node connectivity (captured by the Web


3. “Content Delivery Networks, Market Strategies and Forecasts (2001-

2006),” AccuStream iMedia Research: http://www.marketresearch.com/.

Fig. 1. A typical CDN.

server content structure). The proposed policy called

Communities identification with Betweenness Cen-

trality (CiBC) identifies overlapped Web page com-

munities using the concept of Betweenness Centrality

(BC) [5]. Specifically, Newman and Girvan [22] have

used the concept of edge betweenness to select edges

to be removed from the graph so as to devise a

hierarchical agglomerative clustering procedure,

which though is not capable of providing the final

communities but requires intervention by administrators.

Contrary to this work [22], the BC is used, in

this paper, to measure how central each node of the

Web site graph is within a community.

. Experimenting on a detailed simulation testbed, since the

experimentation carried out involves numerous

experiments to evaluate the proposed scheme under

regular traffic and under flash crowd events. Current

usage of Web technologies and Web server content

performance characteristics during a flash crowd

event are highlighted, and from our experimentation,

the proposed approach is shown to be robust and

effective in minimizing both the average response

time of users’ requests and the costs of CDNs’

providers.
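Two notions used in the contributions above, node betweenness centrality and the comparison of edges inside a community with edges leaving it, can be illustrated on a toy graph. The sketch below is not the CiBC procedure, which this part of the paper does not yet specify. It computes node betweenness with Brandes' algorithm (a standard method, chosen here as an assumption) on an unweighted, undirected graph, and counts intra- and inter-community edges for a candidate node set. The graph and all function names are hypothetical.

```python
from collections import deque


def betweenness_centrality(adj):
    """Unnormalized node betweenness (Brandes' algorithm), undirected graph."""
    bc = {v: 0.0 for v in adj}
    for s in adj:
        # Breadth-first search from s, counting shortest paths (sigma).
        order = []
        preds = {v: [] for v in adj}
        sigma = {v: 0 for v in adj}
        dist = {v: -1 for v in adj}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate dependencies from the farthest nodes back towards s.
        delta = {v: 0.0 for v in adj}
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Each unordered pair was counted from both endpoints.
    return {v: b / 2 for v, b in bc.items()}


def edge_counts(adj, community):
    """Return (edges inside the community, edges leaving it)."""
    inside = outside = 0
    for u in community:
        for v in adj[u]:
            if v in community:
                inside += 1
            else:
                outside += 1
    return inside // 2, outside  # inside edges were seen from both ends


# Toy site graph: two triangles of pages joined by the single link c-d.
adj = {
    "a": ["b", "c"], "b": ["a", "c"], "c": ["a", "b", "d"],
    "d": ["c", "e", "f"], "e": ["d", "f"], "f": ["d", "e"],
}

print(betweenness_centrality(adj))
print(edge_counts(adj, {"a", "b", "c"}))
```

In this toy graph, pages c and d carry all shortest paths between the two triangles and therefore have the highest betweenness, while {a, b, c} has three internal links and only one outgoing link, matching the informal "dense subgraph" description of a community.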

1.2 Road Map

The rest of this paper is structured as follows: Section 2

discusses the related work. In Section 3, we formally define

the problem addressed in this paper. Section 4 presents the

proposed policy. Sections 5 and 6 present the simulation

testbed, examined policies, and performance measures.

Section 7 evaluates the proposed approach, and finally,

Section 8 concludes this paper.

2 RELEVANT WORK

2.1 Content Outsourcing Policies

As identified by earlier research efforts [9], [15], the choice of

the outsourced content has a crucial impact in terms of

CDN’s pricing [15] and CDN’s performance [9], and it is

quite complex and challenging, if we consider the dynamic

nature of the Web. A naive solution to this problem is to

outsource all the objects of the Web server content (full

mirroring) to all the surrogate servers. The latter may seem

feasible, since the technological advances in storage media

and networking support have greatly improved. However,

the respective demand from the market greatly surpasses

these advantages. For instance, after the recent agreement

between Limelight Networks

4

and YouTube, under which

the first company is adopted as the content delivery platform

by YouTube, we can deduce, since this is proprietary

information, the huge storage requirements of the surrogate

servers. Moreover, the evolution toward completely perso-

nalized TV (e.g., the stage6)

5

reveals that the full content of

the origin servers cannot be completely outsourced as a

whole. Finally, the problem of updating such a huge

collection of Web objects is unmanageable. Thus, we have

to resort to a more “selective” outsourcing policy.

A few such content outsourcing policies have been

proposed in order to identify which objects to outsource for

replicating to CDNs’ surrogate servers. These can be

categorized as follows:

. Empirical-based outsourcing. The Web server con-

tent administrators decide empirically about which

content will be outsourced [3].

. Popularity-based outsourcing. The most popular

objects are replicated to surrogate servers [37].

. Object-based outsourcing. The content is replicated

to surrogate servers in units of objects. Each object is

replicated to the surrogate server (under the storage

constraints) which gives the most performance gain

(greedy approach) [9], [37].

. Cluster-based outsourcing. The content is replicated

to surrogate servers in units of clusters [9], [14]. A

cluster is defined as a group of Web pages which

have some common characteristics with respect to

their content, the time of references, the number of

references, etc.
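The greedy idea behind object-based outsourcing can be sketched as follows. This is a simplified illustration and not the algorithm of [9] or [37]: it assumes that the performance gain of placing each object on each surrogate server is given up front and does not change as replicas are placed, whereas a real policy would typically re-estimate gains after every placement. The dictionaries and names are hypothetical.

```python
def greedy_placement(gains, sizes, capacity):
    """Place (object, server) replicas in order of decreasing gain.

    gains:    {(object, server): estimated performance gain}  (assumed static)
    sizes:    {object: storage size}
    capacity: {server: available storage}
    """
    free = dict(capacity)
    placement = {server: [] for server in capacity}
    # Visit candidates from the largest gain down; skip those that do not fit.
    candidates = sorted(gains.items(), key=lambda kv: (-kv[1], kv[0]))
    for (obj, server), gain in candidates:
        if gain > 0 and sizes[obj] <= free[server]:
            placement[server].append(obj)
            free[server] -= sizes[obj]
    return placement


# Illustrative inputs only.
sizes = {"x": 4, "y": 3, "z": 2}
capacity = {"s1": 5, "s2": 4}
gains = {
    ("x", "s1"): 10, ("y", "s1"): 8, ("z", "s1"): 3,
    ("x", "s2"): 6, ("y", "s2"): 7, ("z", "s2"): 1,
}

print(greedy_placement(gains, sizes, capacity))
```

Even in this reduced form, the candidate list has one entry per object and server pair, which hints at why per-object decisions become costly when the number of objects is very large.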

From the above content outsourcing policies, the object-

based one achieves high performance [9], [37]. However, as

pointed out by the authors of these policies, the huge

number of objects prevents these policies from being implemented in a

real application. On the other hand, the popularity-based

outsourcing policies do not select the most suitable objects

for outsourcing, since the most popular objects remain

popular for a short time period [9]. Moreover, they require

quite a long time to collect reliable request statistics for each

object. Such a long interval though may not be available,

when a new Web server content is published to the Internet

and should be protected from flash crowd events.

Thus, we resort to the exploitation of cluster-based outsourcing

policies. The cluster-based approach has also gained the

most attention in the research community [9]. In such an

approach, the clusters may be identified by using conven-

tional data clustering algorithms. However, due to the lack

of a uniform schema for Web documents and dynamics of

Web data, the efficiency of these approaches is unsatisfac-

tory. Furthermore, most of them require administratively

tuned parameters (maximum cluster diameter, maximum

number of clusters) to decide the number of clusters, which

causes additional problems, since there is no a priori

knowledge about how many clusters of objects exist and of

what shape these clusters are.

In contrast to the above approaches, we exploit

the Web server content structure and consider each cluster

as a Web page community, where its characteristics are

that it reflects the dynamic and heterogeneous nature of the

Web. Specifically, it considers each page as a whole object,

rather than breaking down the Web page into information

pieces and reveals mutual relationships among the

concerned Web data.

2.2 Identifying Web Page Communities

In the literature there are several proposals for identifying

Web page communities [13], [16]. One of the key distin-

guishing properties of the algorithms that is usually

considered has to do with the degree of locality which is

used for assessing whether or not a page should be assigned

in a community. Regarding this feature, the methods for

identifying the communities can be summarized as follows:


4. http://www.limelightnetworks.com.

5. http://stage6.divx.com.
